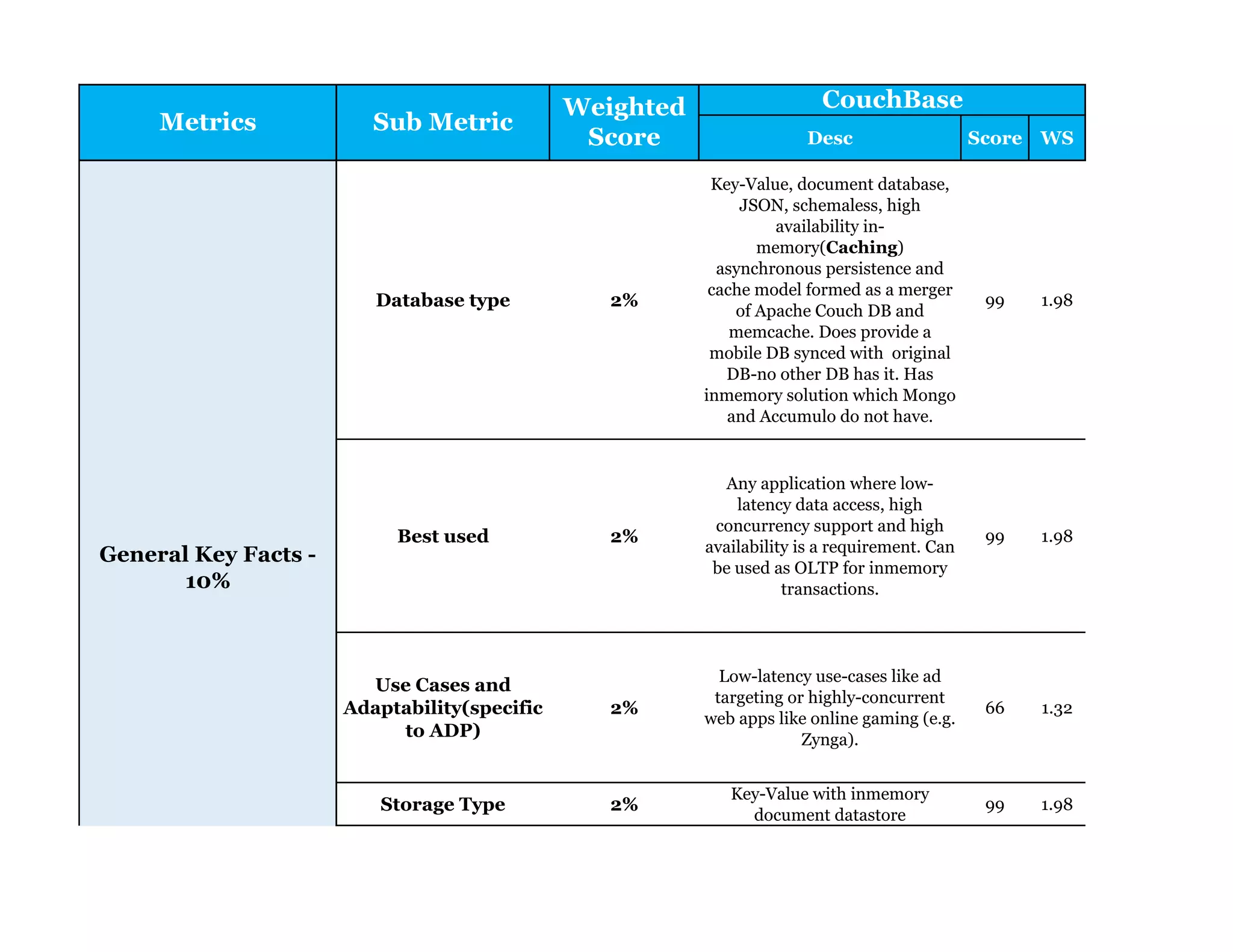

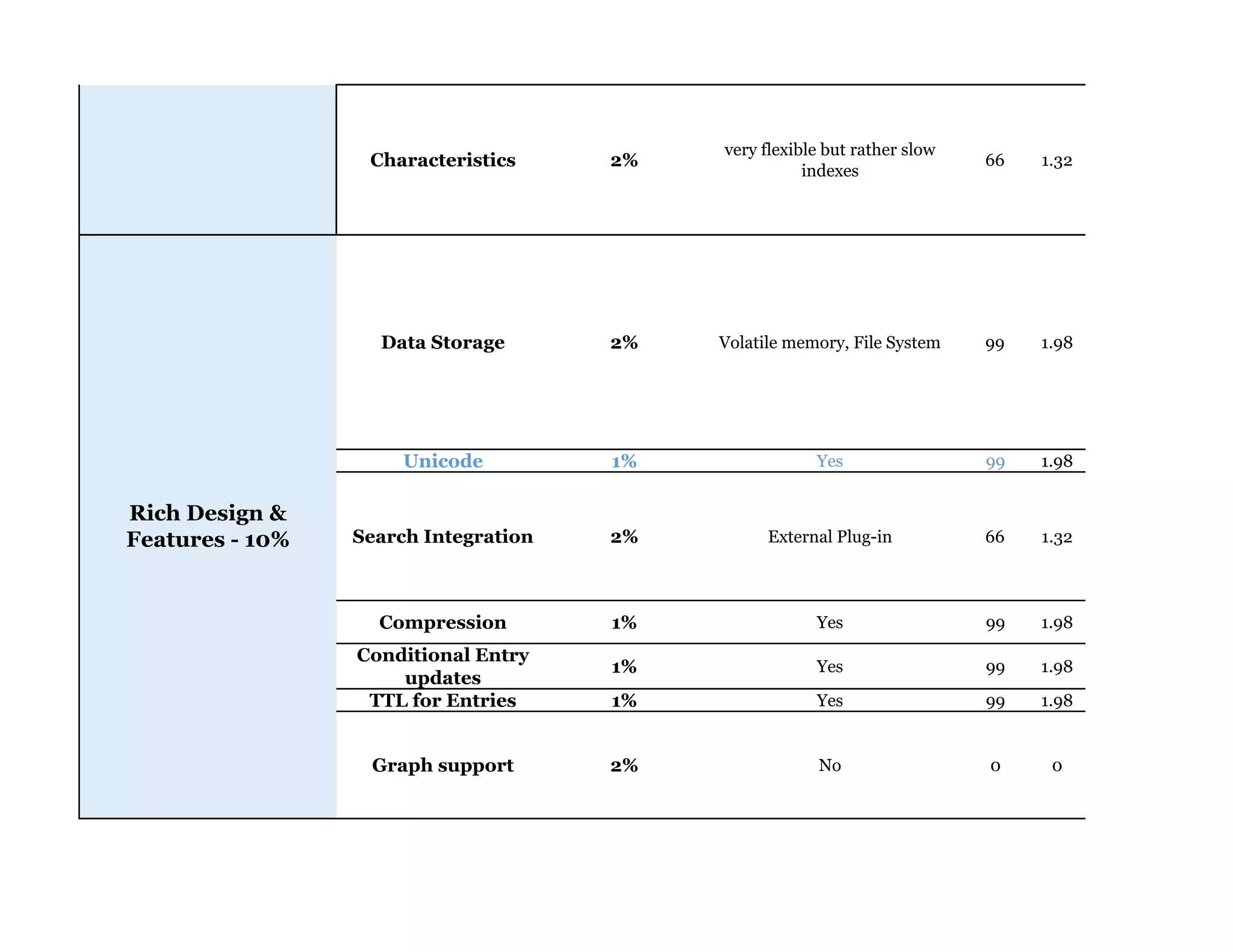

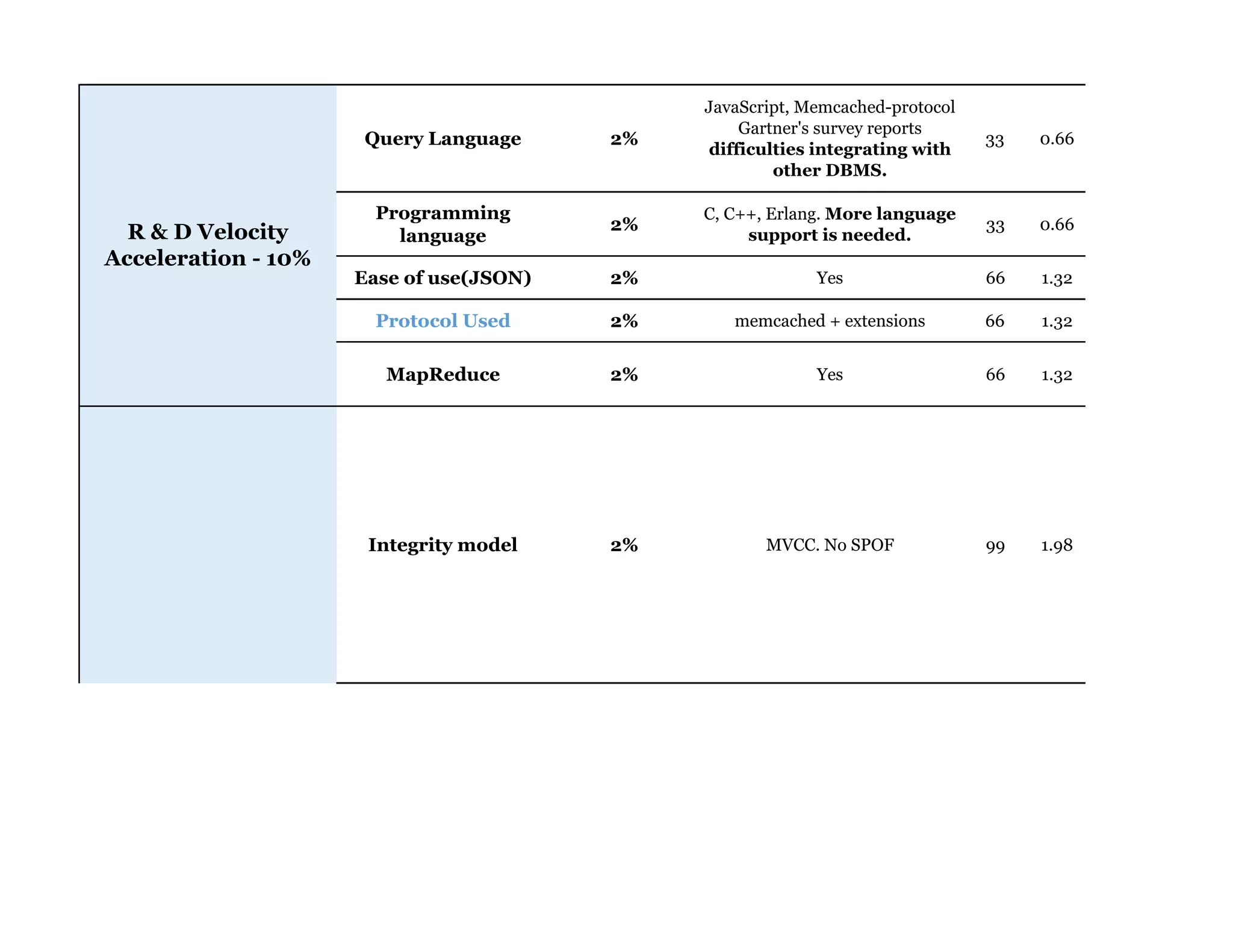

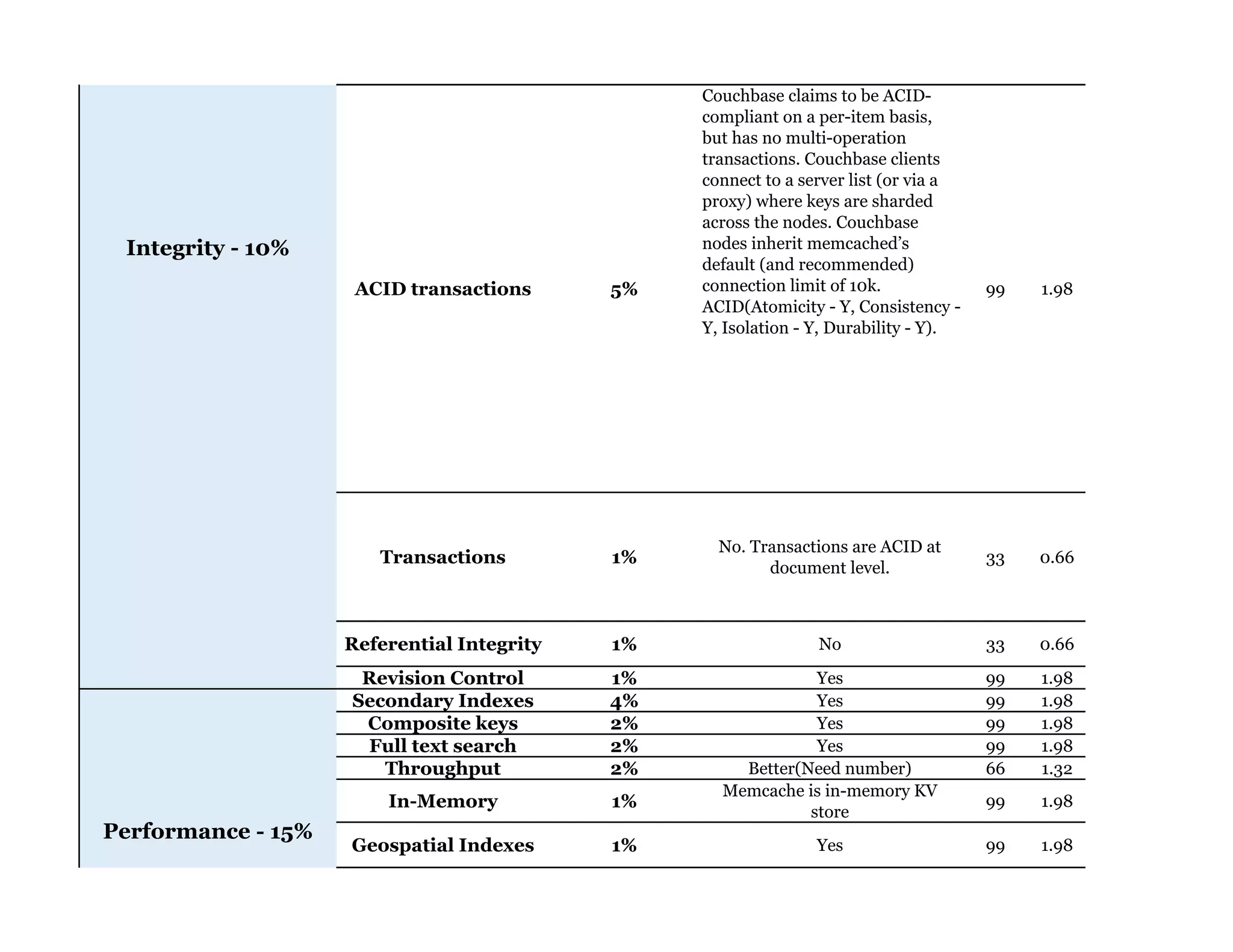

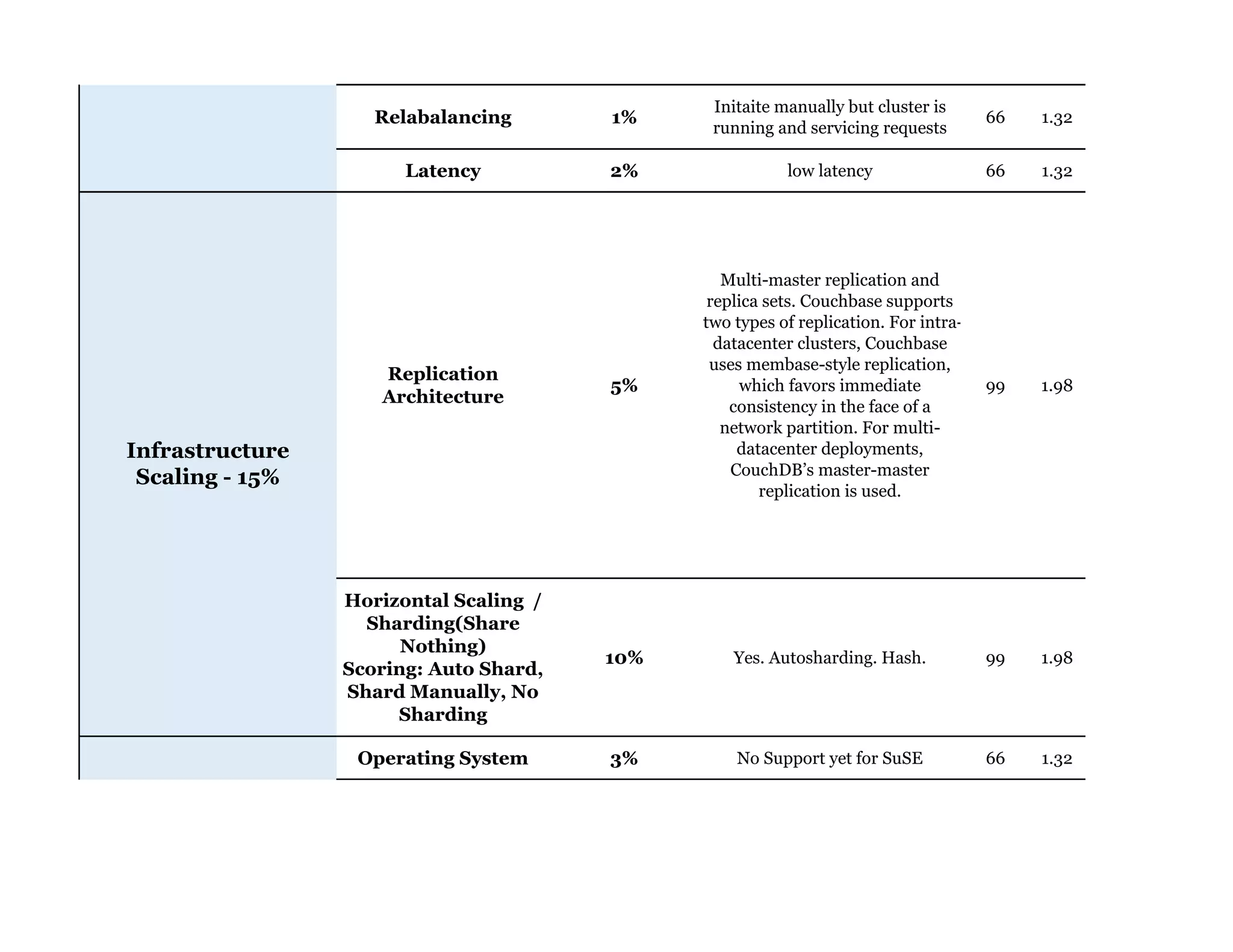

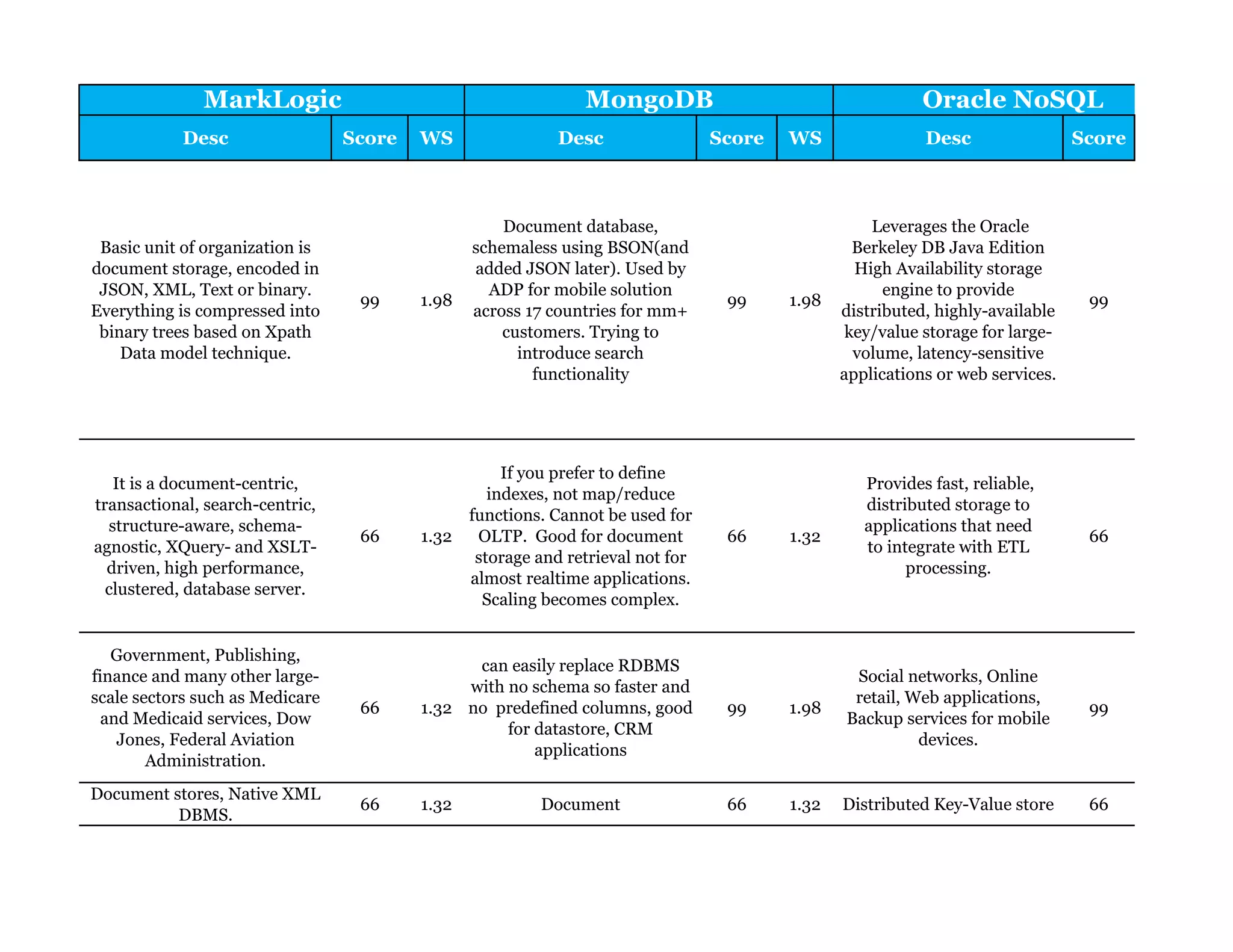

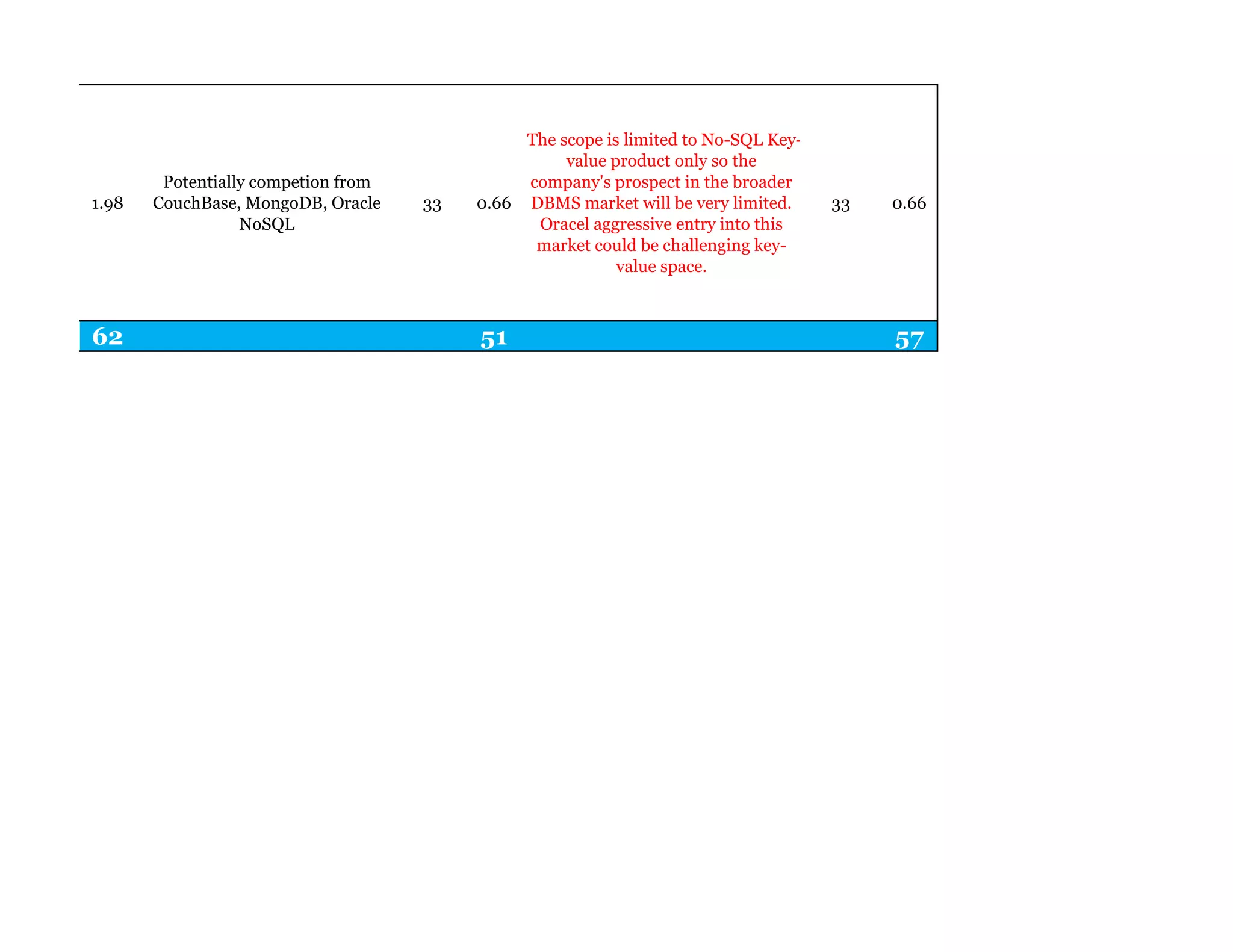

Couchbase is a distributed, document-oriented NoSQL database built on a key-value store with an integrated in-memory cache. It stores JSON documents without requiring a fixed schema and provides built-in replication for high availability. Couchbase supports low-latency applications by serving most reads and writes from memory, and its key-value engine is derived from memcached. It scales horizontally by automatically partitioning (sharding) data across the nodes of a cluster.
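The partitioning and replication described above can be modeled in a few lines. This is a simplified sketch, not Couchbase's implementation. Couchbase groups documents into vBuckets (typically 1,024 per bucket) and clients locate a document by hashing its key with a CRC32-based function, but this sketch uses a plain `zlib.crc32(key) % num_vbuckets` rather than the client's exact formula. The round-robin placement in the hypothetical `build_vbucket_map` helper is likewise an illustrative stand-in for the cluster manager's real vBucket map.

```python
import zlib

# Assumed default: Couchbase typically uses 1,024 vBuckets per bucket.
NUM_VBUCKETS = 1024


def vbucket_for_key(key: str, num_vbuckets: int = NUM_VBUCKETS) -> int:
    """Map a document key to a vBucket id.

    Simplification: plain CRC32 modulo the vBucket count; the real
    Couchbase client hash is CRC32-based but not identical to this.
    """
    return zlib.crc32(key.encode("utf-8")) % num_vbuckets


def build_vbucket_map(nodes, num_vbuckets=NUM_VBUCKETS, replicas=1):
    """Hypothetical placement: each vBucket gets a chain of nodes.

    The first node holds the active copy; the following nodes hold
    replicas. Active copies are spread round-robin, and every replica
    lands on a different node from the active copy.
    """
    if replicas >= len(nodes):
        raise ValueError("need more nodes than replicas")
    vb_map = []
    for vb in range(num_vbuckets):
        active = vb % len(nodes)
        chain = [nodes[(active + i) % len(nodes)] for i in range(replicas + 1)]
        vb_map.append(chain)
    return vb_map


def locate(key, vb_map):
    """Return (vbucket_id, active_node, replica_nodes) for a key."""
    vb = vbucket_for_key(key, len(vb_map))
    chain = vb_map[vb]
    return vb, chain[0], chain[1:]


if __name__ == "__main__":
    nodes = ["node-a", "node-b", "node-c"]
    vb_map = build_vbucket_map(nodes, replicas=1)
    print(locate("user::1001", vb_map))
```

Because every client computes the same key-to-vBucket mapping, a client can send a request straight to the node holding the active copy. Adding nodes only changes which node owns each vBucket, not which vBucket a key hashes to, which is what lets data be rebalanced as a cluster grows.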