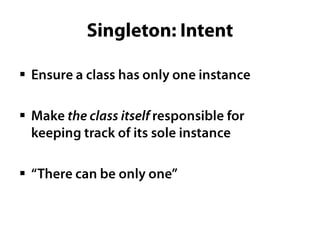

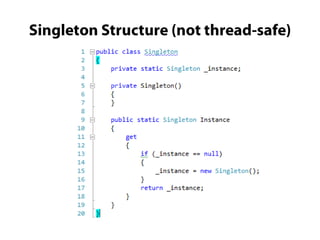

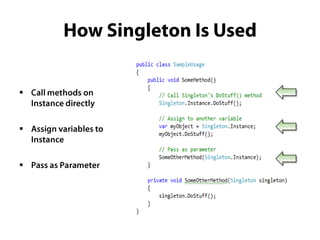

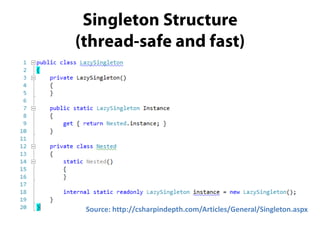

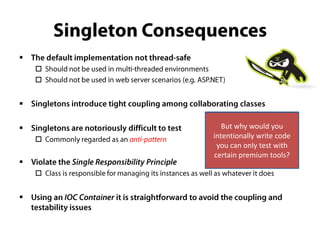

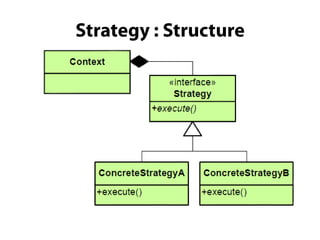

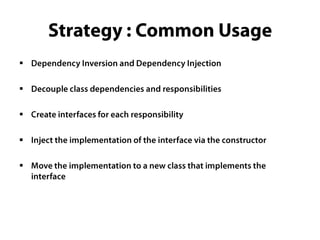

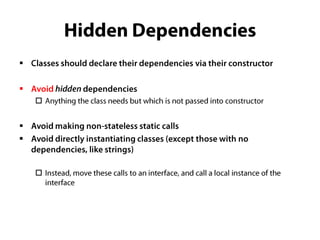

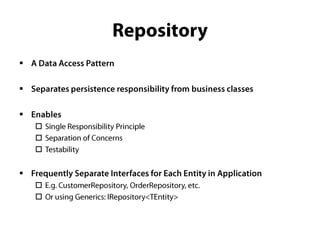

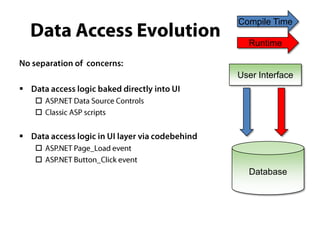

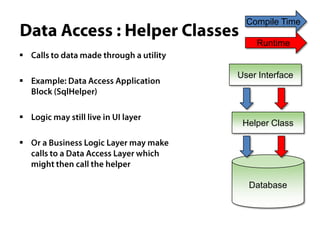

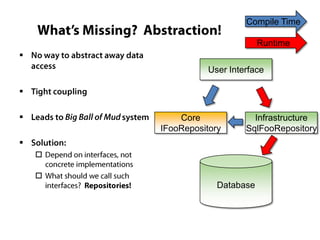

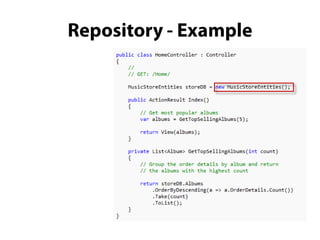

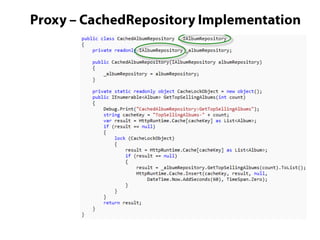

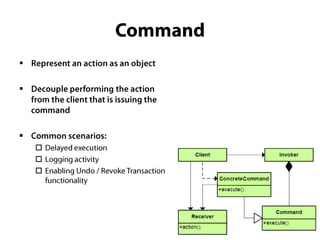

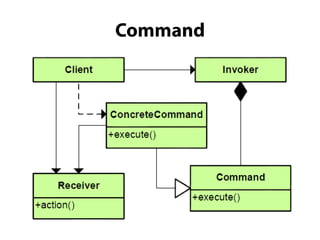

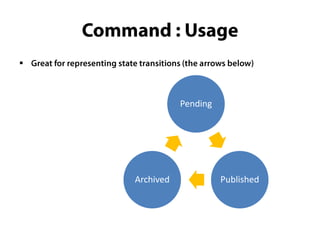

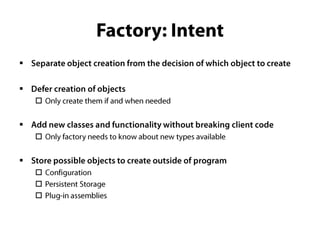

The document surveys common design patterns used in software development, explains why they are useful, and links to resources for further exploration. It notes that calling `new` inside a class couples that class tightly to a concrete implementation, and it suggests using mocking tools to test certain singleton patterns. Overall, it aims to highlight coding best practices through the use of design patterns.
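The point about `new` and tight coupling can be illustrated with a minimal sketch. Every name here (`Clock`, `SystemClock`, `TightlyCoupledStamper`, `InjectedStamper`, `CouplingDemo`) is hypothetical and chosen only for illustration; none comes from the original slides. The first class creates its own dependency with `new`, so a test cannot replace it. The second receives the dependency through its constructor, so a test can pass in a stand-in. A hand-written lambda stub is used here in place of a mocking tool to keep the example self-contained.

```java
// Hypothetical abstraction over "what time is it"; not from the source slides.
interface Clock {
    long now();
}

// Production implementation backed by the system clock.
final class SystemClock implements Clock {
    @Override
    public long now() {
        return System.currentTimeMillis();
    }
}

// Tightly coupled: the class instantiates its own dependency with `new`,
// so callers and tests cannot substitute a different Clock.
final class TightlyCoupledStamper {
    private final SystemClock clock = new SystemClock();

    String stamp(String message) {
        return clock.now() + ": " + message;
    }
}

// Loosely coupled: the dependency is supplied from outside (constructor injection),
// so a test can provide a stub or a mock that returns a fixed value.
final class InjectedStamper {
    private final Clock clock;

    InjectedStamper(Clock clock) {
        this.clock = clock;
    }

    String stamp(String message) {
        return clock.now() + ": " + message;
    }
}

final class CouplingDemo {
    public static void main(String[] args) {
        // Clock has a single abstract method, so a lambda can act as a fixed-time stub.
        InjectedStamper stamper = new InjectedStamper(() -> 42L);
        System.out.println(stamper.stamp("hello")); // prints "42: hello"
    }
}
```

The output of `TightlyCoupledStamper` changes on every run because it always reads the real clock, while `InjectedStamper` becomes deterministic once a fixed `Clock` is supplied. A mocking tool would play the same role as the lambda stub, which is likely why the source recommends such tools when a dependency (for example a singleton) cannot easily be swapped out by hand.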