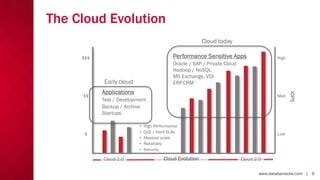

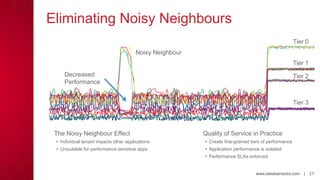

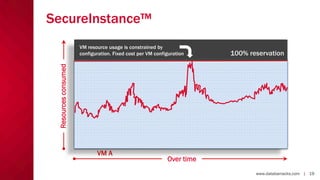

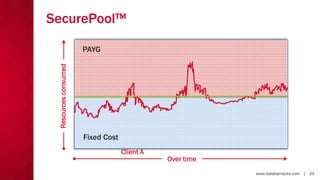

The document outlines the challenges of cloud storage performance and the solutions to them, highlighting the need for consistent, scalable storage in enterprise IT. It introduces the approaches taken by Databarracks and SolidFire, including guaranteed quality of service (QoS) and in-line data reduction, to address inconsistent performance and to scale effectively. The authors, Tim Pitcher and Mark Thomas, emphasize the importance of performance-sensitive applications in the cloud and the advantages of their all-flash storage systems.