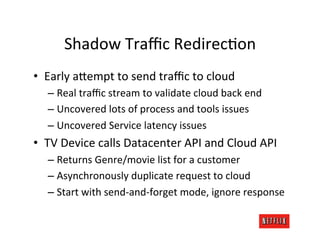

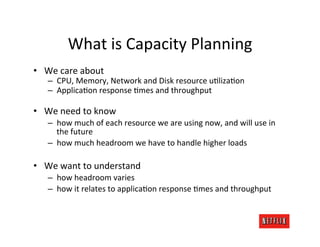

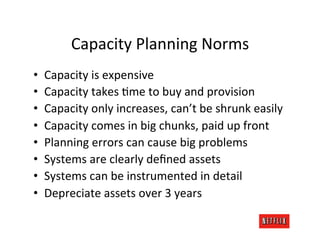

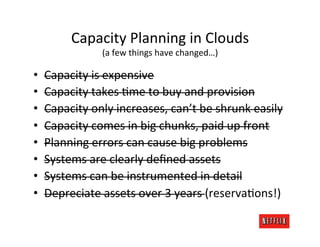

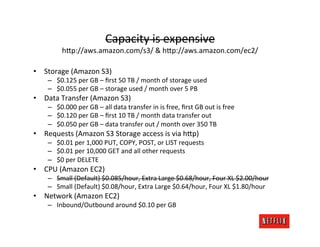

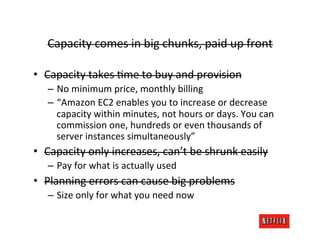

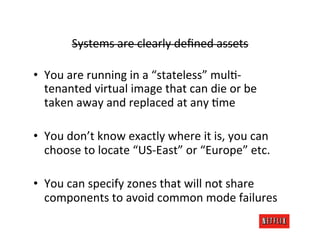

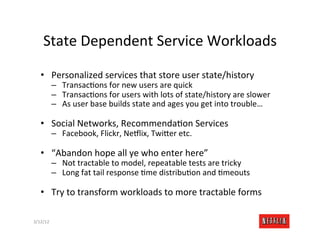

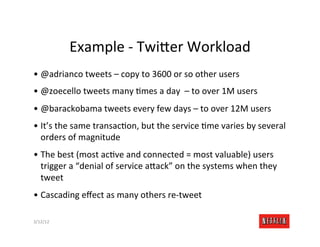

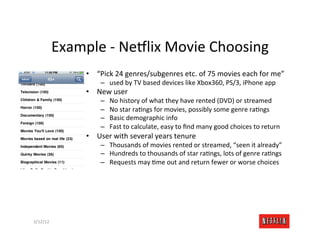

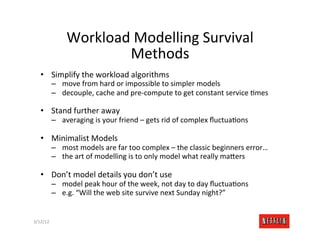

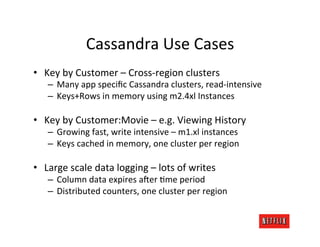

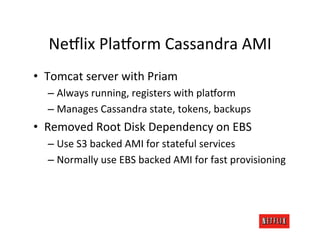

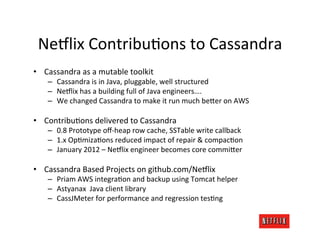

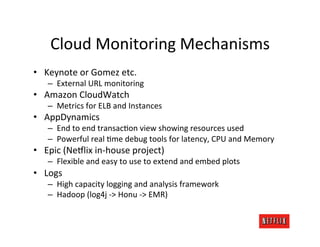

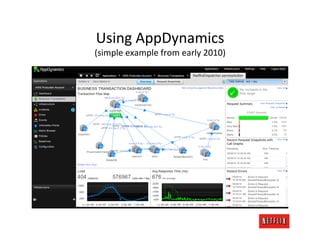

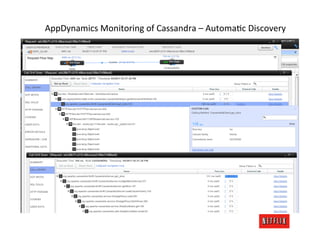

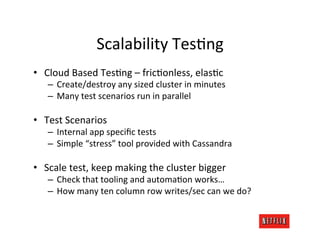

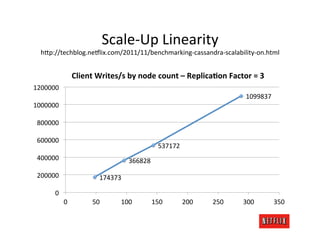

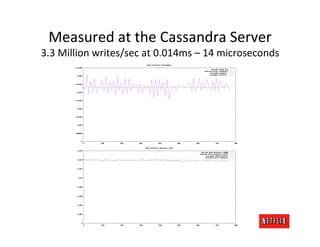

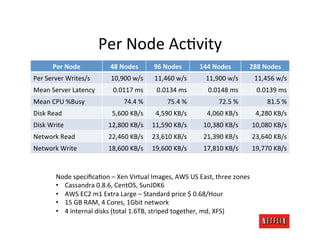

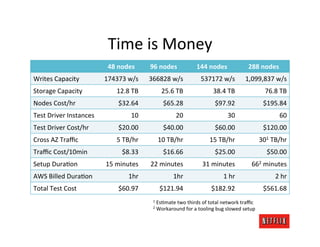

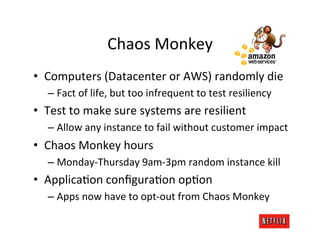

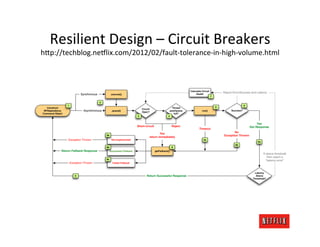

The document discusses the cloud architecture and deployment strategies Netflix uses, covering capacity planning, cloud testing, and workload management. It emphasizes monitoring scalability, availability, and resilience while moving services from on-premises infrastructure to cloud platforms. It also highlights the complexity of managing variable workloads and the optimizations Netflix made in its use of Cassandra for data management.