Machine Vision for Automated Wire Bonding

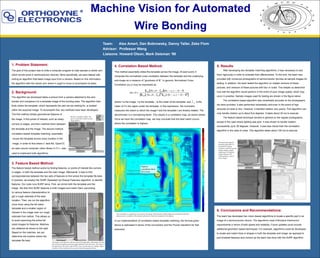

Team: Alex Amert, Dan Bobrowsky, Danny Taller, Zeke Flom

Advisor: Professor Wang

Liaisons: Howard Olson, Mark Delsman '98

1. Problem Statement: The goal of this project was to write a computer program to help operate a robotic arm that bonds wires in semiconductor devices. More specifically, we were tasked with writing an algorithm that takes image input from a camera. Based on this information, the algorithm tells the robotic arm where it ought to move to accomplish its tasks.

2. Background: The algorithm we developed takes a picture from a camera attached to the wire bonder and compares it to a template image of the bonding area. The algorithm then finds where the template, which represents the part we are looking for, is located within the acquired image. To accomplish this, two methods have been developed. The first method uses geometrical features of the image. It finds points of interest, such as sharp corners or edges, and then matches them between the template and the image. The second method, correlation-based template matching, essentially moves the template across every location in the image in order to find where it fits best. OpenCV, an open source computer vision library in C++, was used to implement both algorithms.

Figure: We have a template (right). The goal is to find it within the main image (below).

3. Feature Based Method: The feature based method works by finding features, or points of interest like corners or edges, in both the template and the main image. Afterwards, it looks for correspondences between the two sets of features to find where the template fits best. In practice, we employ the SURF (Speeded Up Robust Features) algorithm to identify features. Our code runs SURF twice. First, we shrink both the template and the image. We then find SURF features in both images and match them (according to various feature characteristics) to get a rough estimate of the best location. Then, we run the algorithm once more using the full sized template and a smaller region of interest in the image near our rough estimate from before. This allows us to avoid searching the entire full sized images for features. Matches are obtained as shown to the right. Based on the matches, we can determine the location where the template fits best.

Figure: The image on the left shows the first step of the algorithm, with matches between the downsized template and main image indicated with white lines. The image on the right shows the results when SURF is run a second time on a smaller region of interest. These matches tell us exactly where the template fits best.

4. Correlation Based Method: This method essentially slides the template across the image. At each point, it computes the normalized cross correlation between the template and the underlying sub-image as a measure of "goodness of fit". In general, the normalized cross correlation γ(u,v) may be expressed as

$$\gamma(u,v) = \frac{\sum_{x,y}\left[f(x,y)-\bar{f}_{u,v}\right]\left[t(x-u,y-v)-\bar{t}\right]}{\sqrt{\sum_{x,y}\left[f(x,y)-\bar{f}_{u,v}\right]^{2}\,\sum_{x,y}\left[t(x-u,y-v)-\bar{t}\right]^{2}}}$$

where f is the image, t is the template, $\bar{t}$ is the mean of the template, and $\bar{f}_{u,v}$ is the mean of f in the region under the template. In this expression, the numerator measures the extent to which the image f and the template t are linearly related. The denominator is a normalizing factor. This results in a correlation map, as shown below. Once we have the correlation map, we may conclude that the best match occurs where the correlation is highest. In our implementation of correlation-based template matching, the formula given above is rephrased in terms of the convolution and the Fourier transform for fast execution.

Figure: The correlation is computed at every point in the image. Red indicates a higher degree of correlation while blue indicates poor correlation. The location of best fit is where the correlation is the highest. Annotation: highest correlation (best fit) is here.

5. Results: After developing two template matching algorithms, it was necessary to test them rigorously in order to evaluate their effectiveness. To this end, the team was provided with numerous photographs of semiconductor devices as sample images for testing. In addition, the team tested the algorithms on rotated versions of these pictures, and on versions with added blur or noise. This helped us determine how well the algorithms would perform in the event of poor image quality, which may occur in practice. Sample images used for testing are shown in the figure below.

The correlation-based algorithm was highly accurate on the photographs we were provided. It also performed well even with high amounts of noise or blur. However, it handled rotation very poorly: it can only handle rotation up to about five degrees. It takes about 30 ms to execute.

The feature based technique generally worked on the regular photographs, except where lighting was poor. It was shown to handle rotation successfully up to 30 degrees. However, it was less robust to noise than the correlation algorithm. This algorithm takes about 100 ms to execute.

Figure: We tested both template matching algorithms we developed on (going clockwise, from the top left) regular images, images rotated up to 30 degrees, images blurred with Gaussian filters, and noisy images.

6. Conclusions and Recommendations: The team has developed two vision-based algorithms to locate a specific part in an image of a semiconductor device. The algorithms meet Orthodyne Electronics' requirements in terms of both speed and reliability. Future updates could include additional geometry-based techniques. For example, algorithms could be developed to locate and match lines or shapes in both the template and image, as opposed to just the localized features and corners that the team has matched with the SURF algorithm.
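
To make the correlation-based method of Section 4 concrete, here is a minimal sketch in plain Python. It is not the team's code: the poster states that their implementation uses OpenCV in C++ and an FFT-based reformulation for speed, whereas this sketch evaluates the normalized cross correlation directly at every offset, which is far slower but easier to follow. The function names `ncc_at` and `best_match`, the list-of-rows image representation, and the choice to score a constant window as 0 are all assumptions made for illustration.

```python
import math


def ncc_at(image, template, u, v):
    """Normalized cross correlation between the template and the image
    window whose top-left corner is at column u, row v."""
    th, tw = len(template), len(template[0])
    window = [image[v + y][u + x] for y in range(th) for x in range(tw)]
    tmpl = [template[y][x] for y in range(th) for x in range(tw)]

    f_mean = sum(window) / len(window)  # mean of the image under the template
    t_mean = sum(tmpl) / len(tmpl)      # mean of the template

    # Numerator: how strongly the window and the template vary together.
    num = sum((f - f_mean) * (t - t_mean) for f, t in zip(window, tmpl))
    # Denominator: normalizing factor, so the score lies in [-1, 1].
    den = math.sqrt(sum((f - f_mean) ** 2 for f in window)
                    * sum((t - t_mean) ** 2 for t in tmpl))

    # Assumption: a constant window (or template) carries no information,
    # so it is scored 0 instead of dividing by zero.
    return num / den if den > 0 else 0.0


def best_match(image, template):
    """Slide the template over every valid offset and return
    ((u, v), score) for the offset with the highest correlation."""
    ih, iw = len(image), len(image[0])
    th, tw = len(template), len(template[0])
    best_score, best_loc = -2.0, (0, 0)
    for v in range(ih - th + 1):
        for u in range(iw - tw + 1):
            score = ncc_at(image, template, u, v)
            if score > best_score:
                best_score, best_loc = score, (u, v)
    return best_loc, best_score
```

Calling `best_match(image, template)` on two lists of pixel rows returns the column and row of the best-fitting window together with its score. Because the means are subtracted and the result is normalized, a uniform change in brightness or contrast of the image leaves the score unchanged, which is consistent with the method's reported tolerance of imperfect images. Rotation is not compensated for, which matches the limitation noted in the Results.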