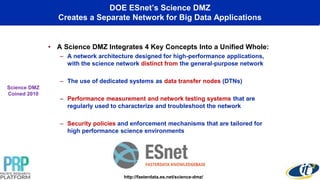

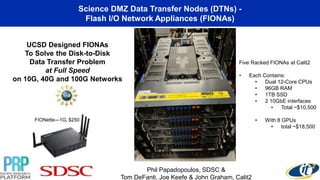

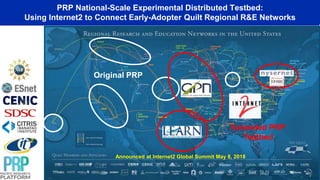

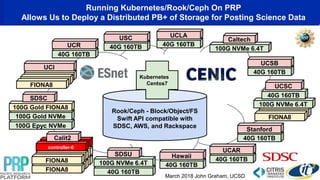

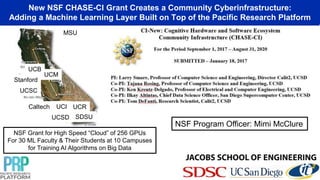

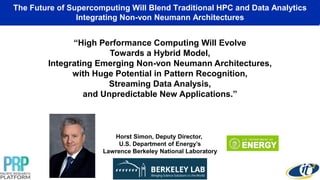

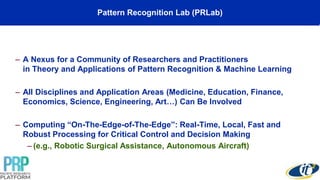

This document summarizes a talk by Professor Ken Kreutz-Delgado on distributed machine learning platforms and brain-inspired computing. It describes the Pacific Research Platform (PRP), which connects multiple universities and research institutions and uses FIONA appliances and Kubernetes to distribute storage and processing. A new NSF grant will add GPUs across 10 campuses for training AI algorithms on big data. The talk envisions connecting the PRP to clouds of GPUs and to non-von Neumann processors such as IBM's TrueNorth chip. Calit2's Pattern Recognition Lab uses a range of processors, including TrueNorth, to explore machine learning algorithms.