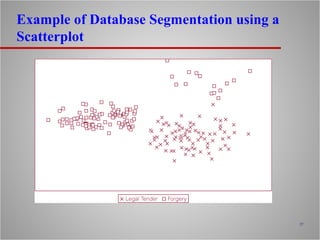

The document discusses data mining, including its definition, main operations, techniques, applications, and relationship to data warehousing. The four main data mining operations are predictive modeling, database segmentation, link analysis, and deviation detection. Each operation uses specific techniques like classification, clustering, association rule mining, and visualization. Data mining is applied in domains like retail, banking, insurance, and medicine. It works best with large, clean datasets typically stored in data warehouses.