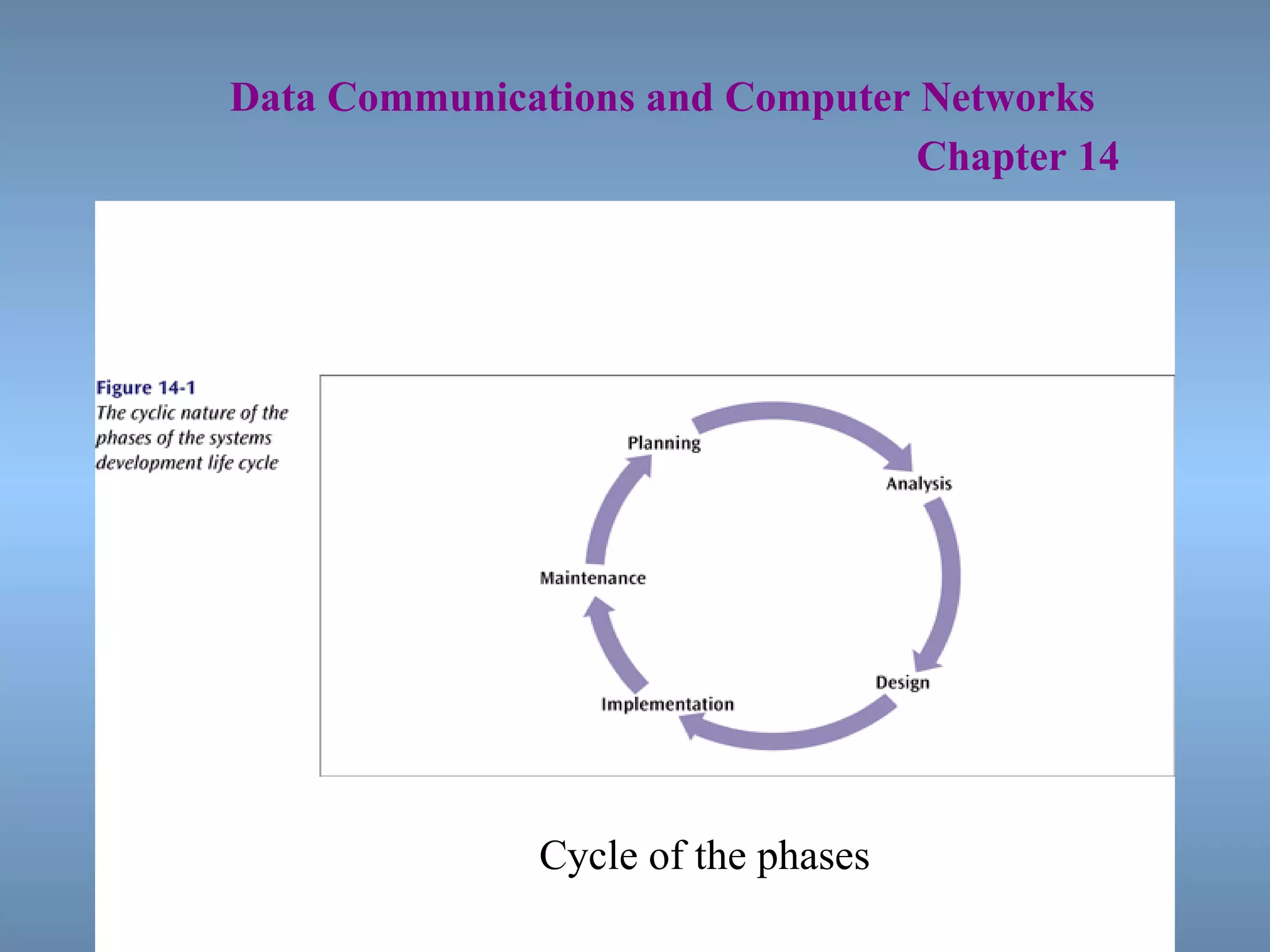

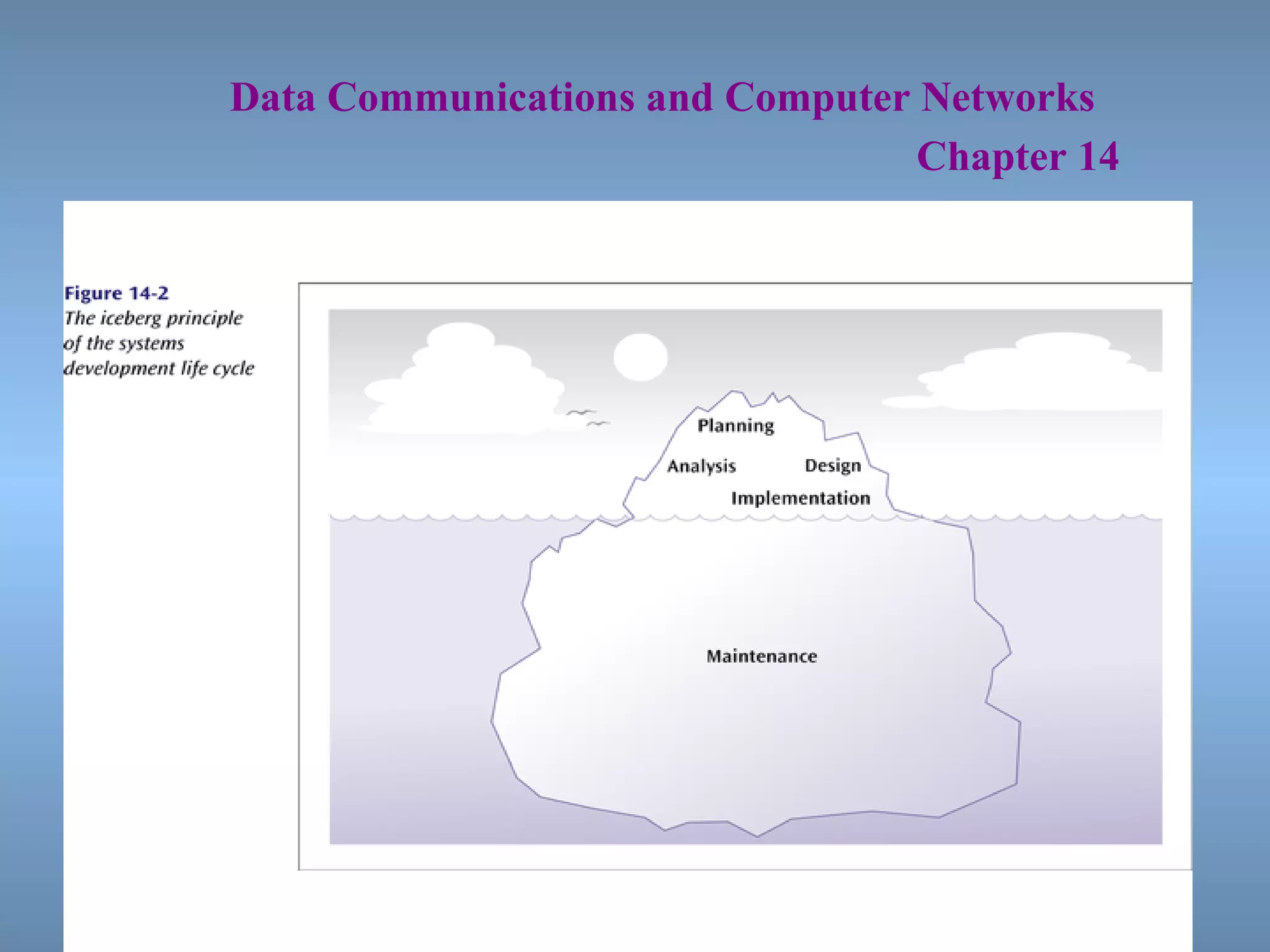

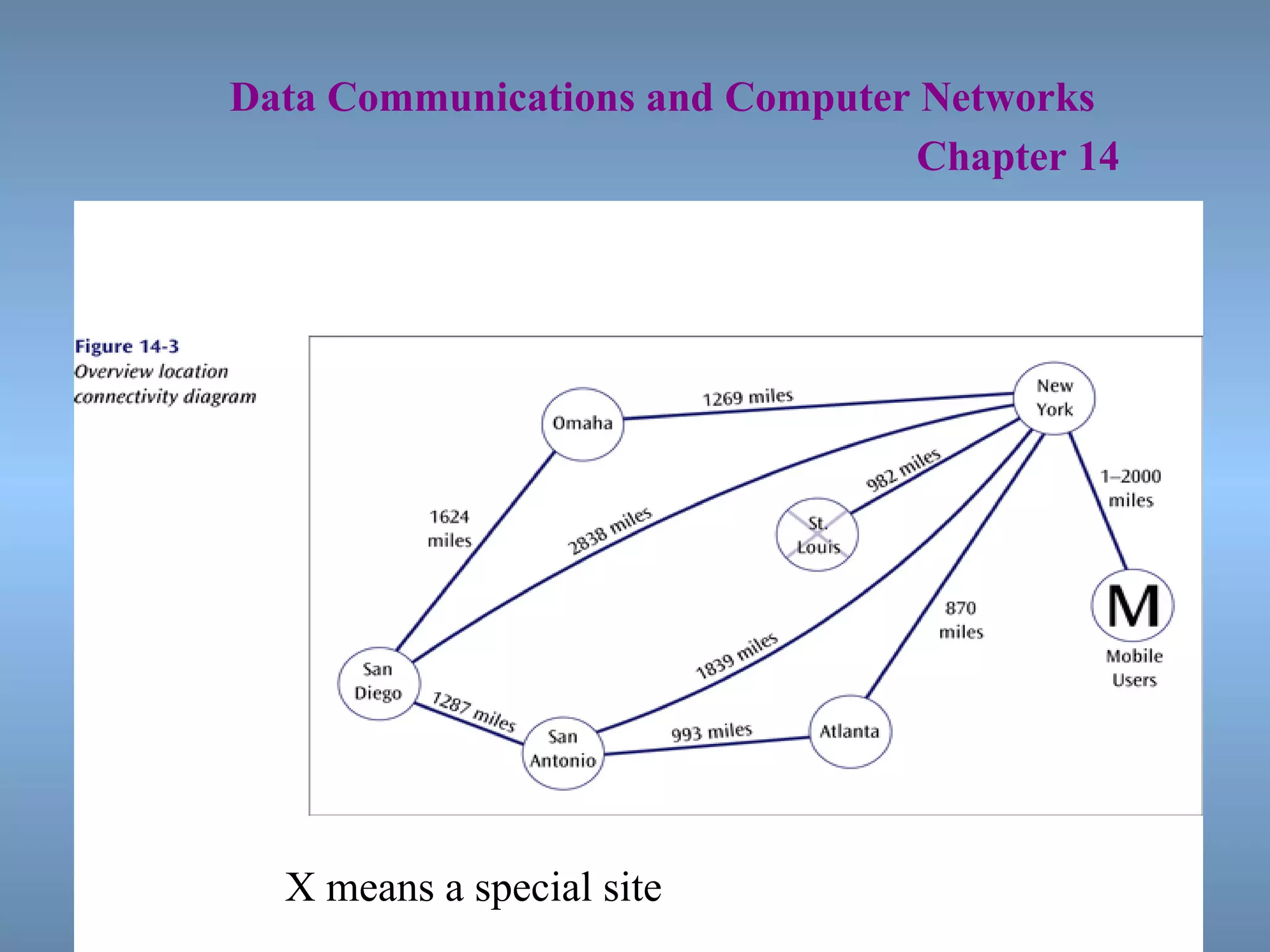

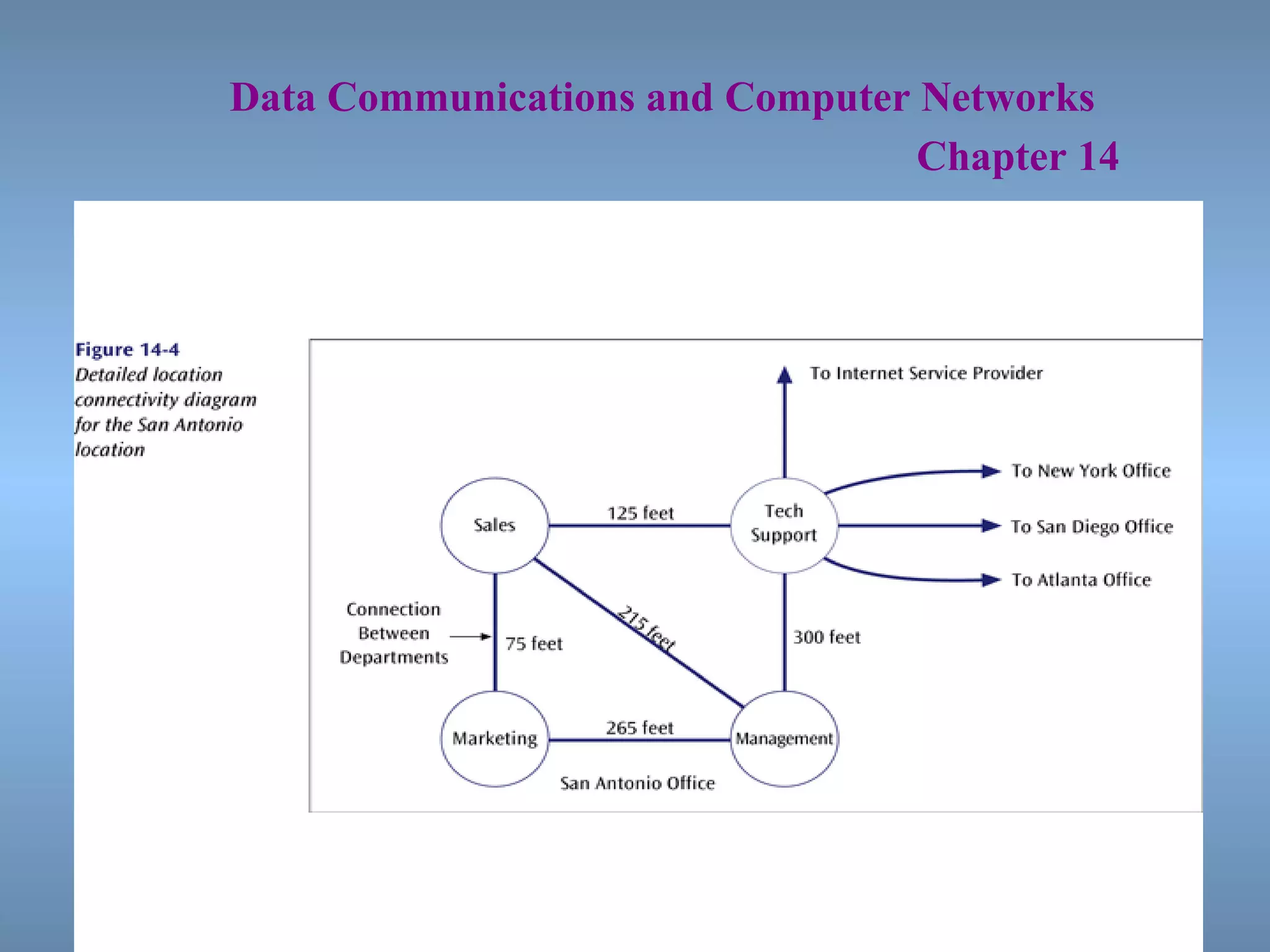

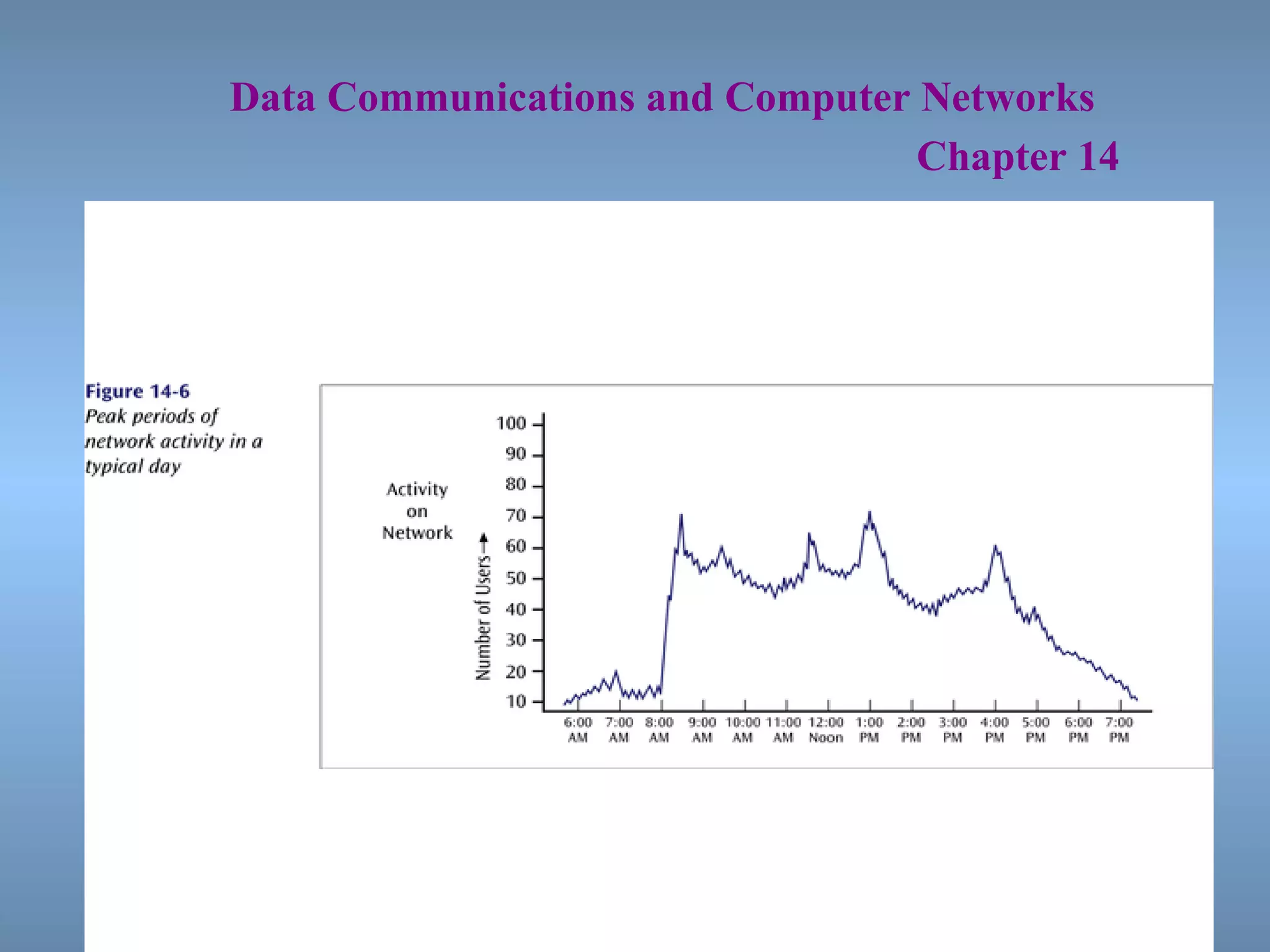

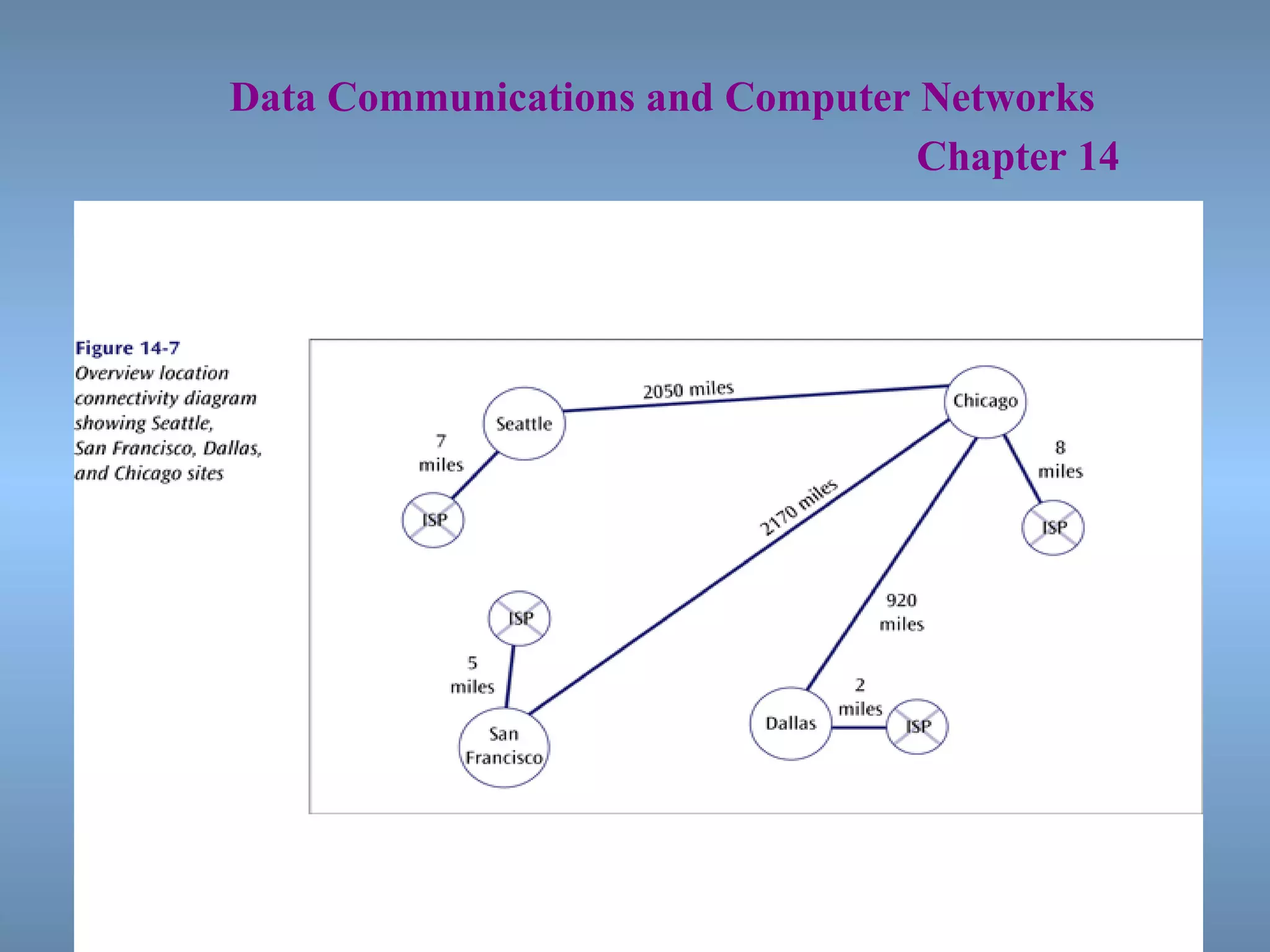

Properly designing and managing a computer network is a difficult task that requires planning, analysis, and the skills to keep up with changing technology. Network design follows a systematic process, the Systems Development Life Cycle (SDLC), which comprises planning, analysis, design, implementation, and maintenance phases. Network models and diagrams document both the current and the planned network configuration. Capacity planning determines the bandwidth the network needs by analyzing current usage and projecting future demand. Baseline studies measure current network performance and supply the reference point from which future capacity requirements are estimated.
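
To make the link between a baseline study and capacity planning concrete, here is a minimal sketch of one way the projection could be computed. The summary above does not specify a method, so everything in it is an assumption for illustration: the compound annual growth model, the target utilization used as headroom, the function names, and the sample figures.

```python
from typing import Sequence


def baseline_peak_mbps(samples_mbps: Sequence[float]) -> float:
    """Return the peak observed load from a baseline study.

    Assumption: the baseline is summarized by its highest sample.
    A real study might use a percentile or a busy-hour average instead.
    """
    if not samples_mbps:
        raise ValueError("baseline study needs at least one sample")
    return max(samples_mbps)


def projected_demand_mbps(baseline_mbps: float, annual_growth: float, years: int) -> float:
    """Project future demand with a simple compound growth model (assumed)."""
    return baseline_mbps * (1 + annual_growth) ** years


def required_capacity_mbps(demand_mbps: float, target_utilization: float = 0.75) -> float:
    """Size the link so projected demand stays at or below the target utilization."""
    if not 0 < target_utilization <= 1:
        raise ValueError("target_utilization must be in (0, 1]")
    return demand_mbps / target_utilization


if __name__ == "__main__":
    # Hypothetical baseline measurements in Mbps, not taken from the source.
    samples = [62.0, 80.0, 100.0, 91.0]
    peak = baseline_peak_mbps(samples)
    demand = projected_demand_mbps(peak, annual_growth=0.5, years=2)
    capacity = required_capacity_mbps(demand, target_utilization=0.75)
    print(f"baseline peak: {peak} Mbps")
    print(f"projected demand in 2 years: {demand} Mbps")
    print(f"capacity to provision: {capacity} Mbps")
```

The growth rate and utilization target are the inputs a planner would most need to justify from their own measurements. The sketch only shows how a measured baseline feeds into a forward-looking capacity figure.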