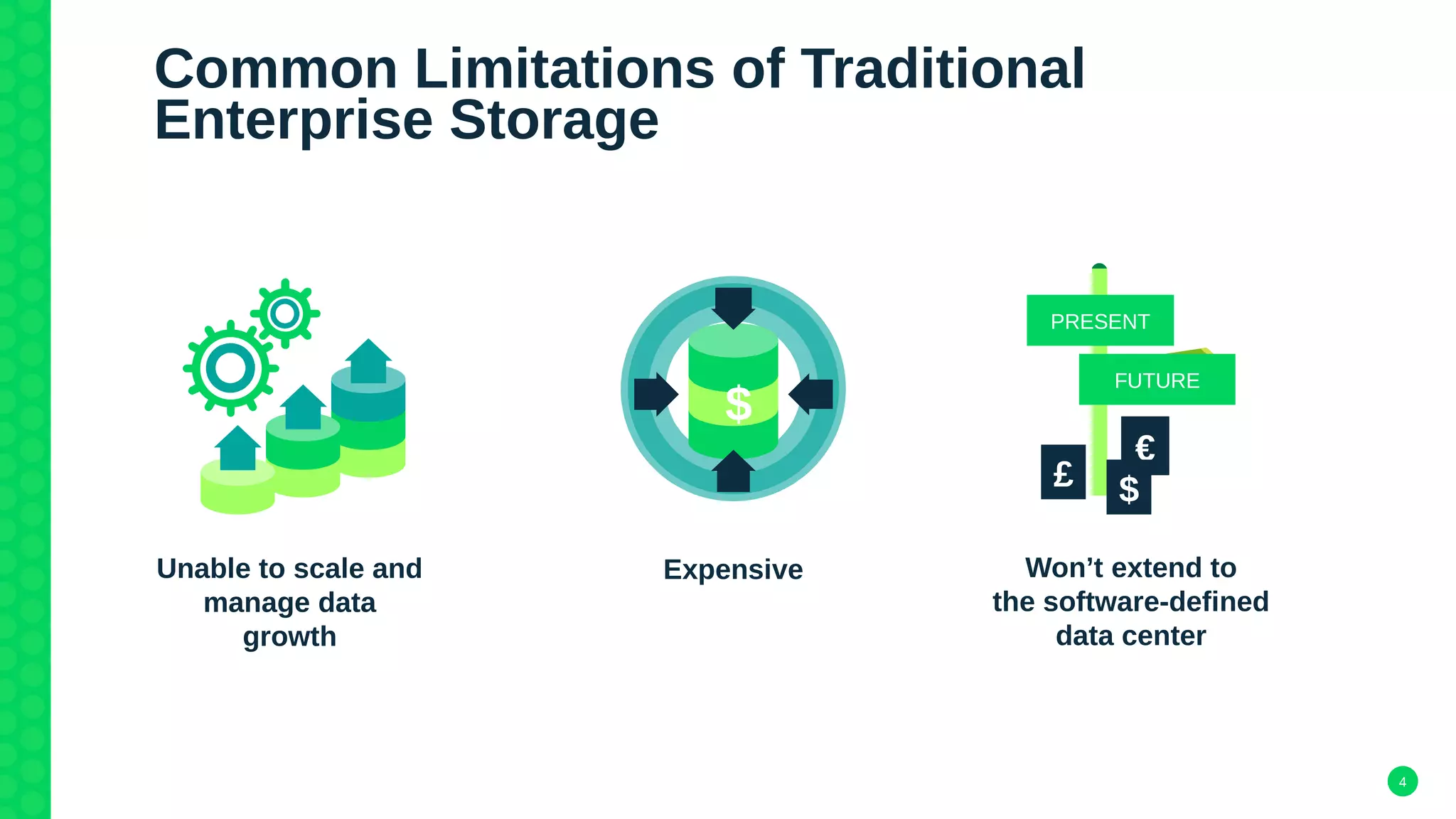

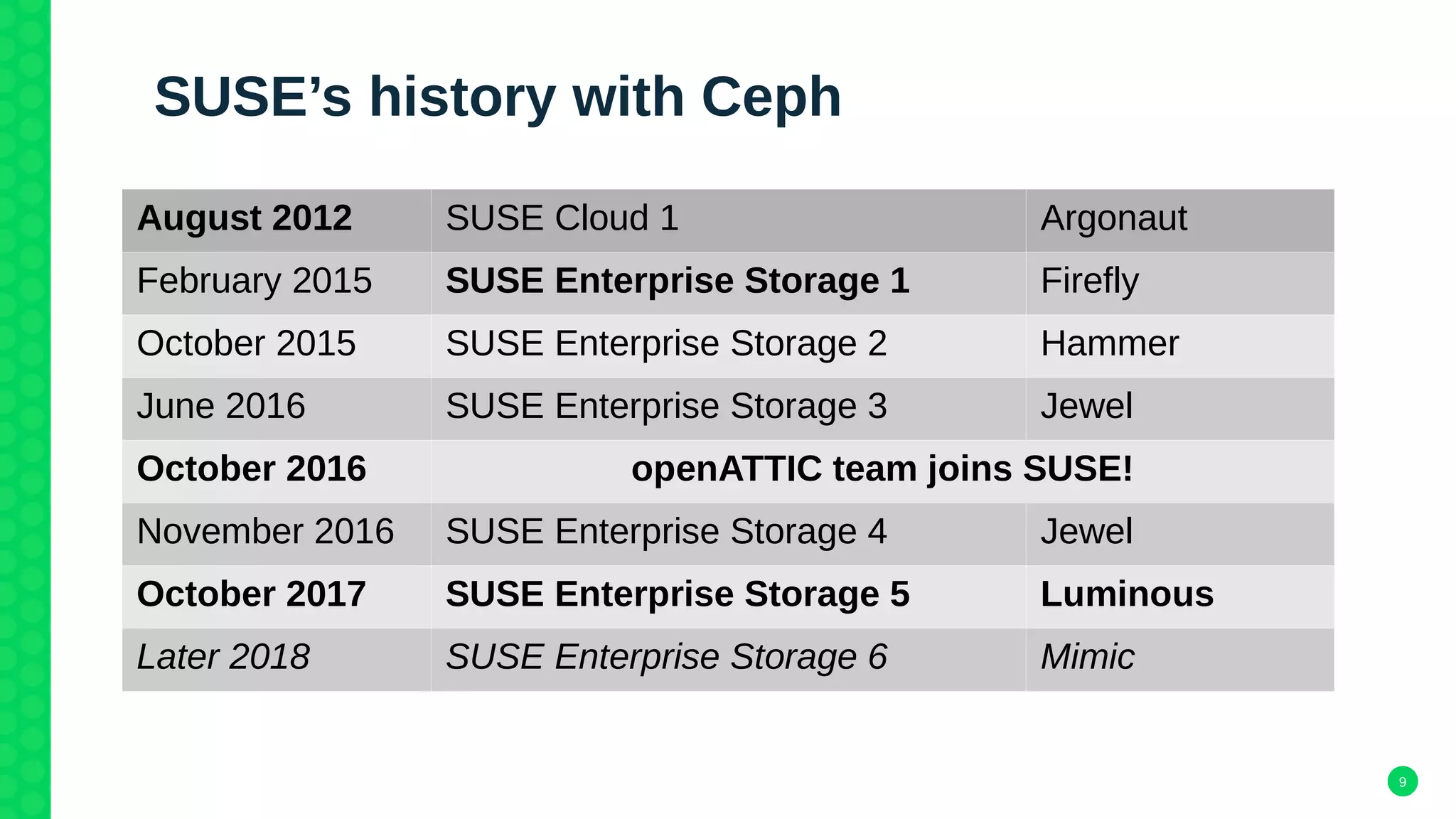

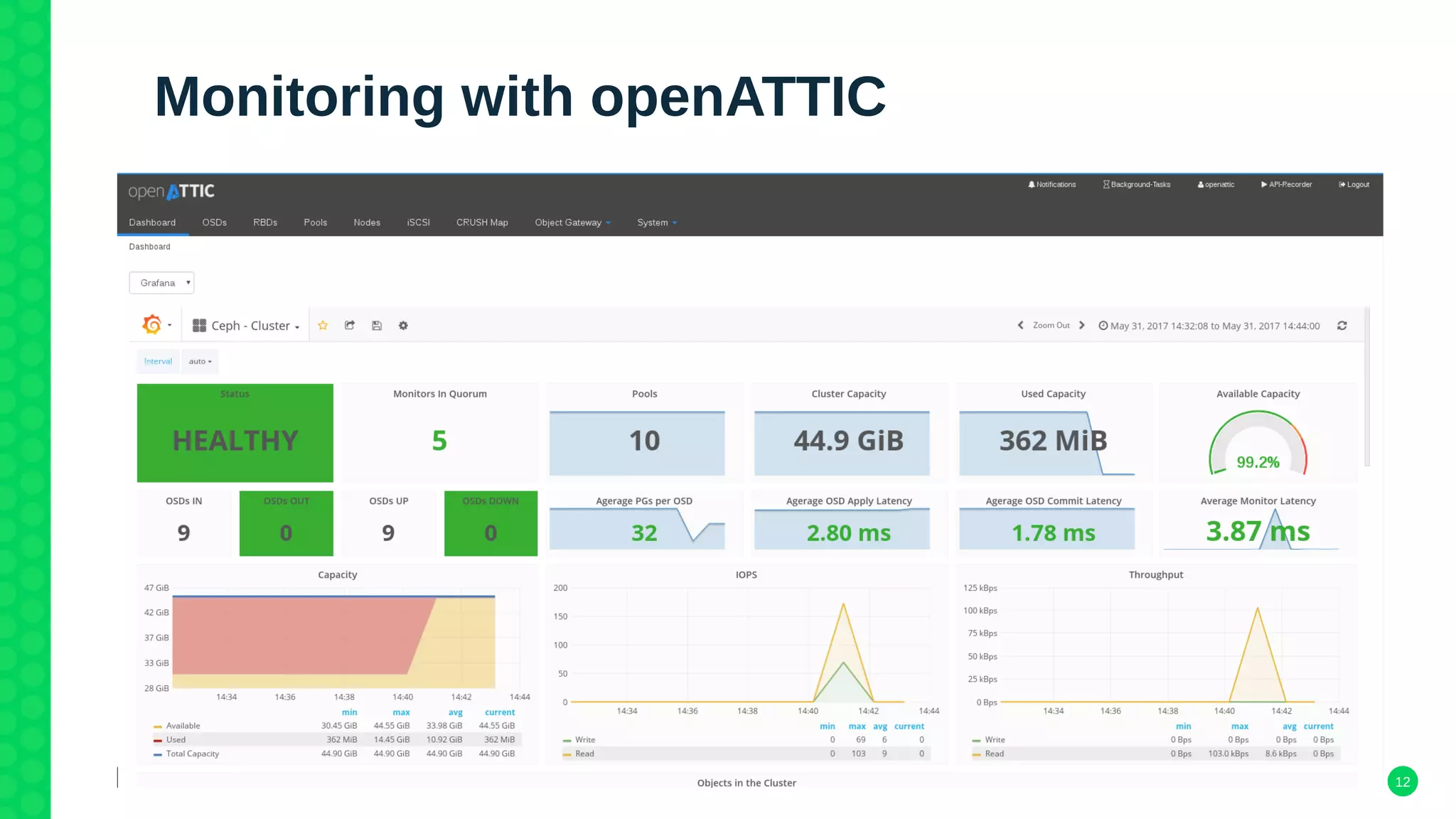

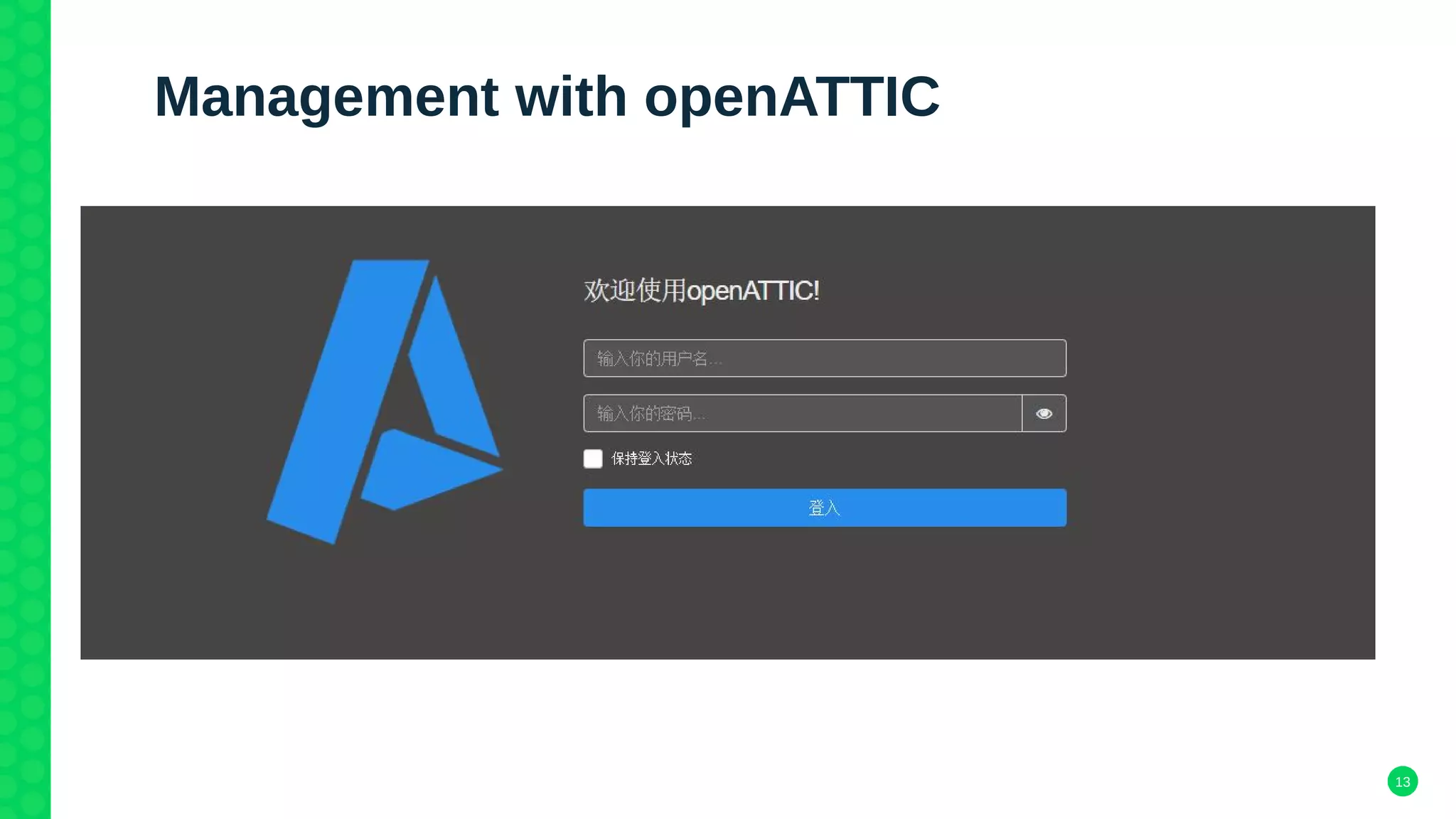

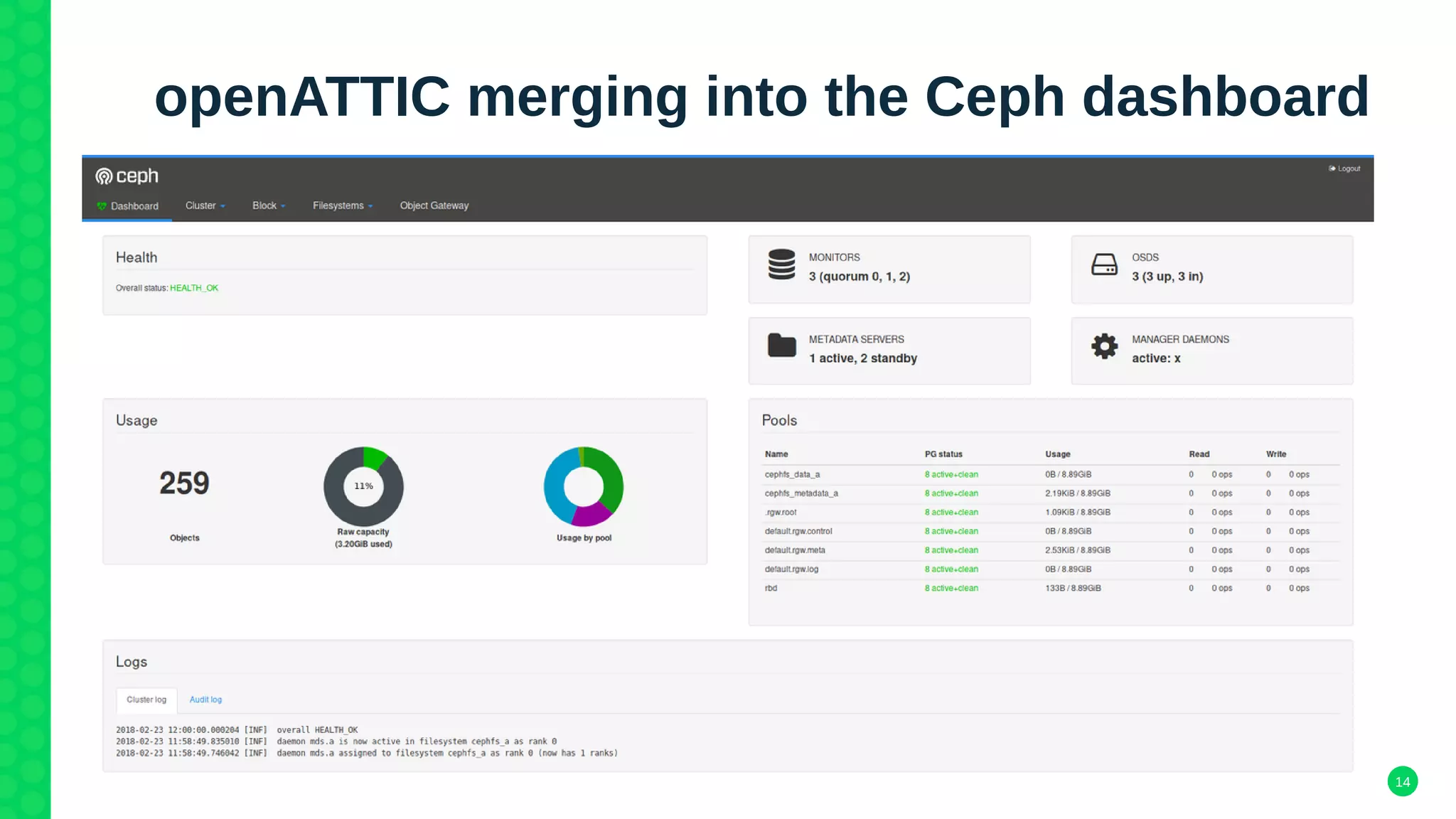

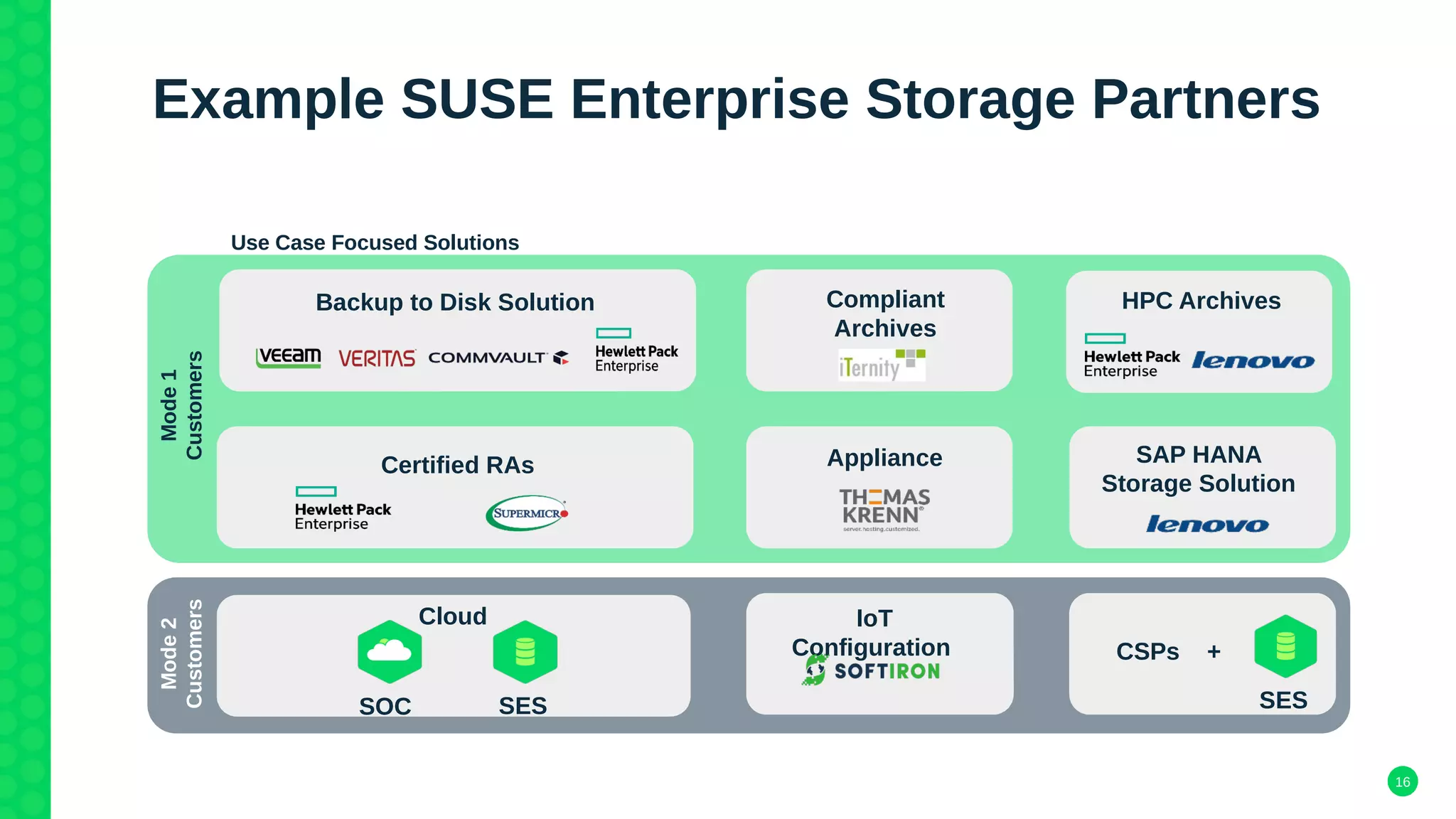

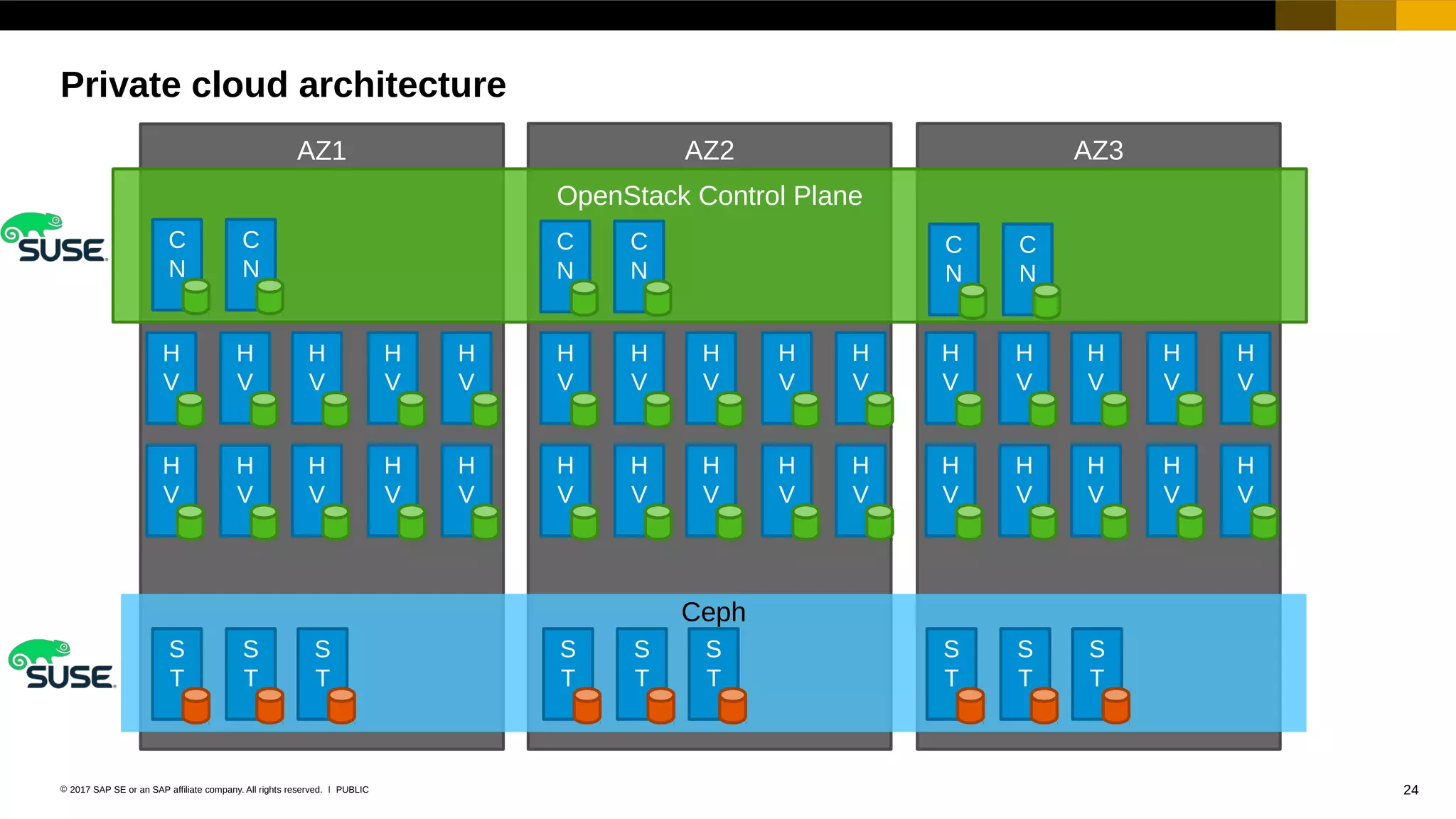

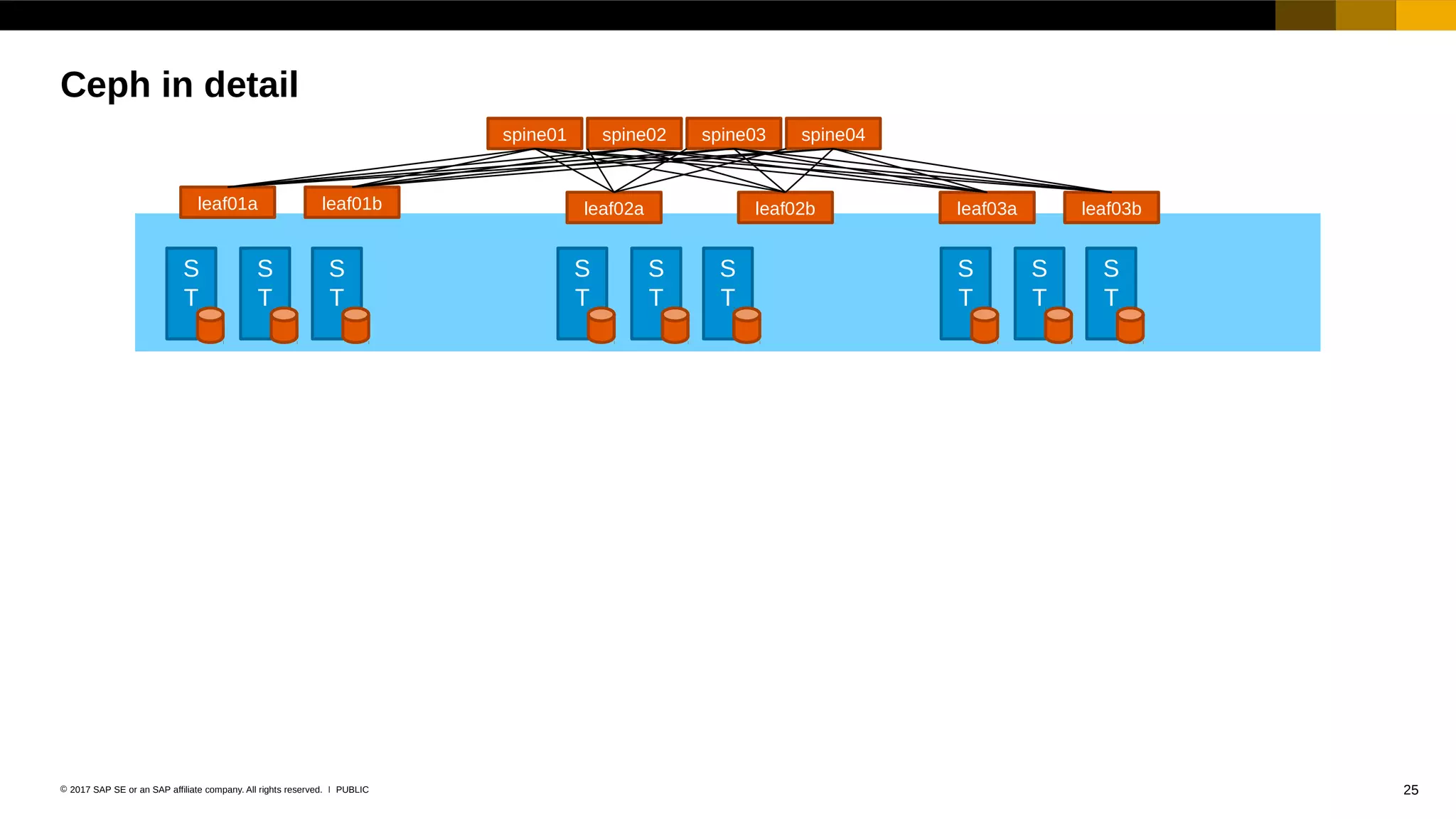

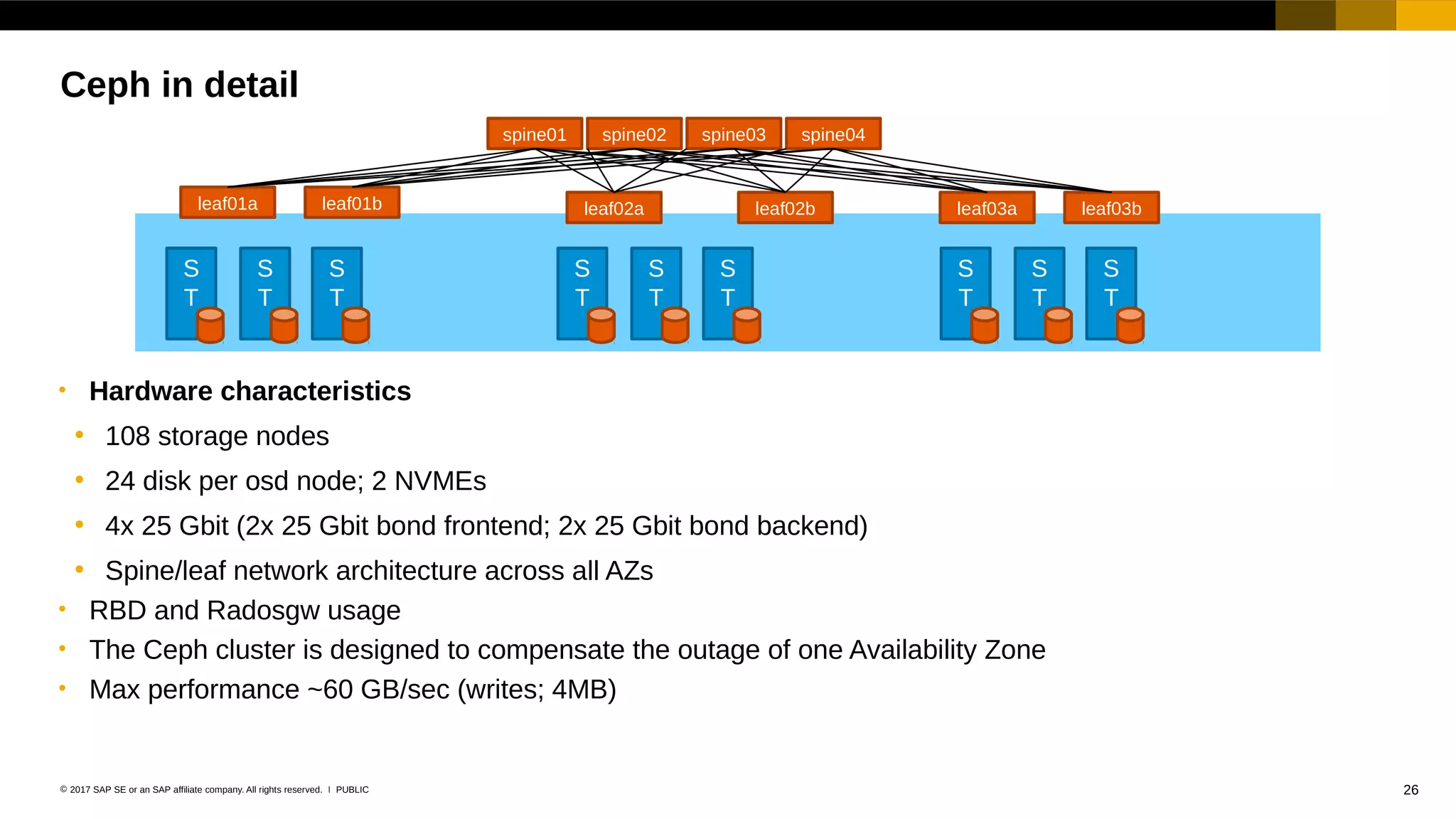

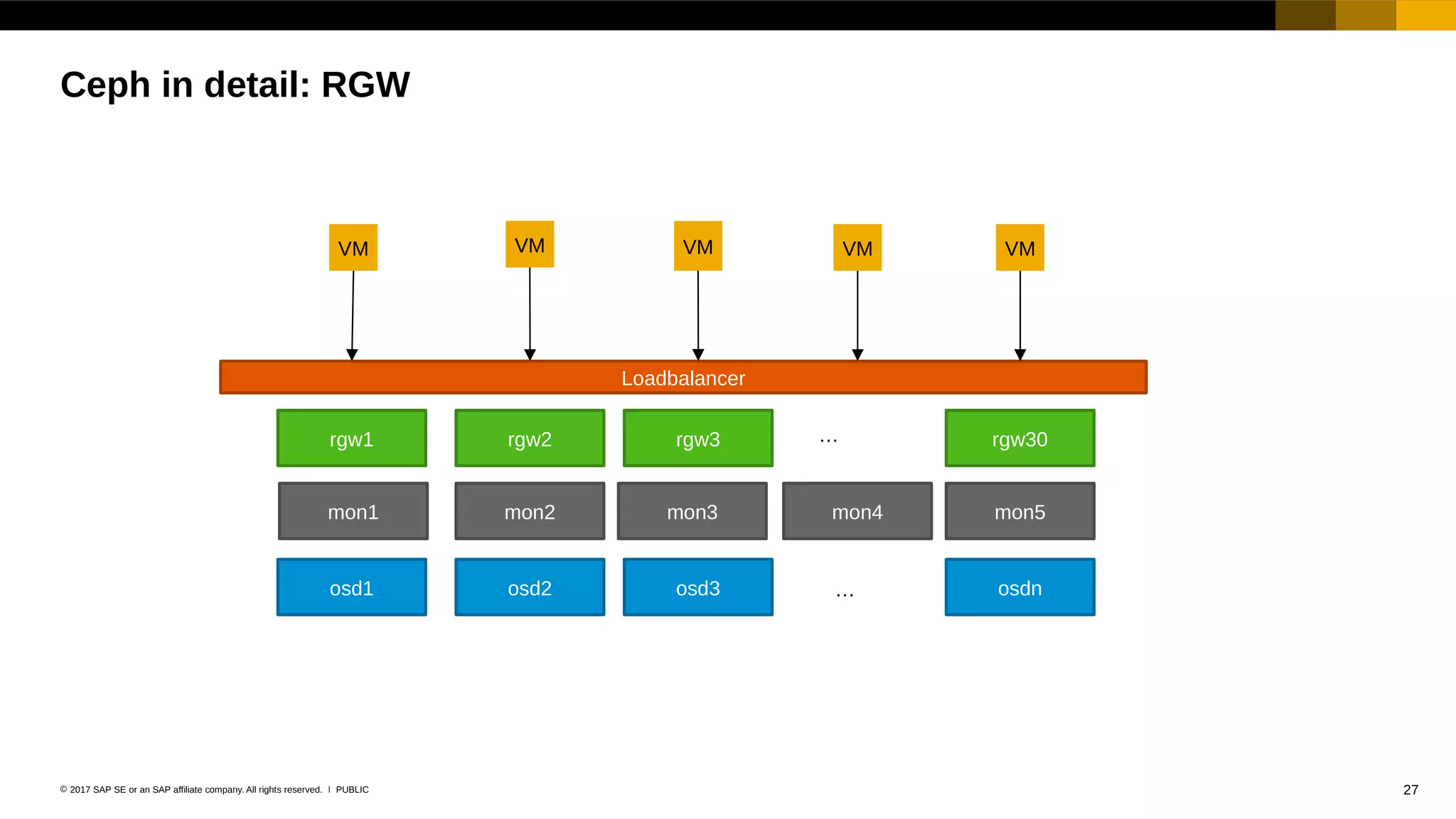

The document discusses the challenges of enterprise data storage, emphasizing how traditional storage solutions struggle to scale and to keep up with data growth. It highlights SUSE's contributions to the Ceph open-source community and its work on software-defined storage over the years. It also outlines future directions for SUSE's enterprise storage solutions, including an improved user experience and better interoperability.