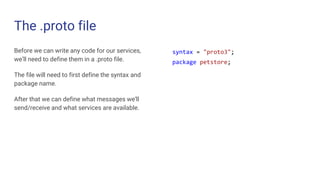

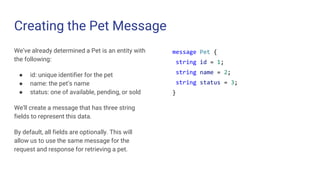

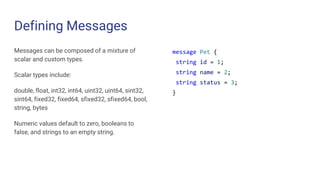

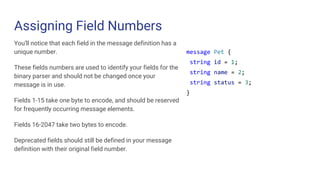

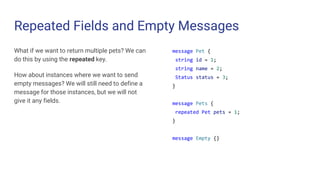

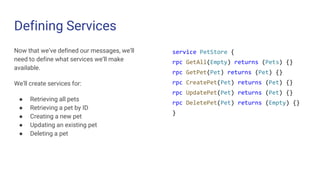

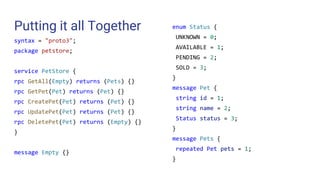

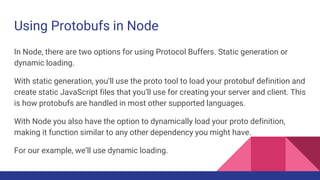

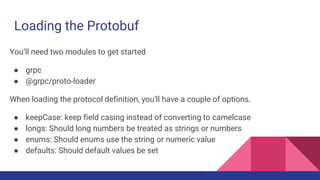

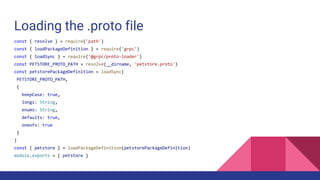

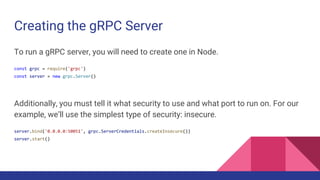

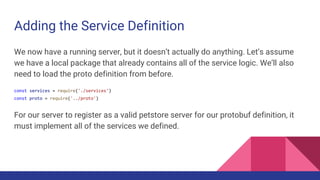

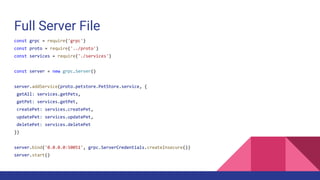

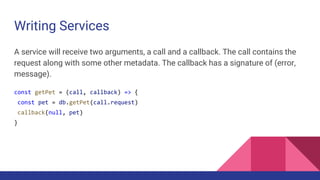

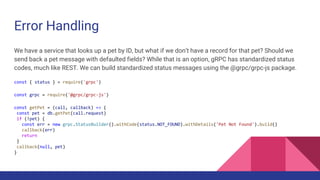

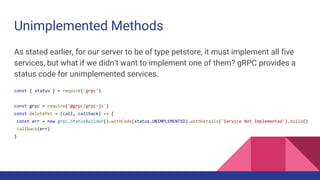

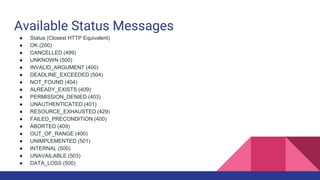

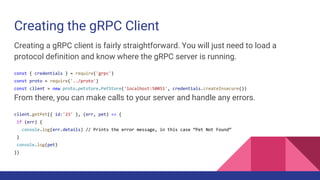

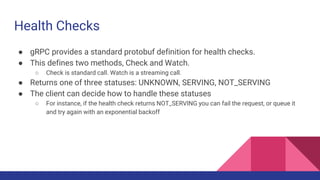

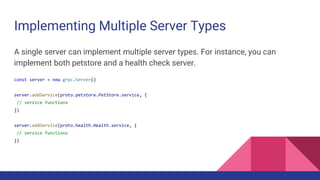

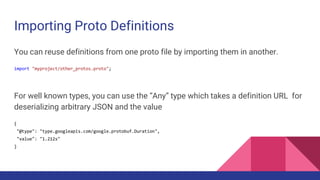

The document discusses building a gRPC service and highlights its advantages, such as low latency, full-duplex (bidirectional) streaming, and support for multiple data formats. It explains how Protocol Buffers are used to define services and messages, walks step by step through declaring the service and its messages in a .proto file, and shows how to implement the service in Node.js. It also covers error handling, service registration, and advanced topics such as health checks and security options.
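
To make the implementation and error-handling steps concrete, here is a minimal sketch of a unary handler written in the `(call, callback)` shape that Node.js gRPC libraries such as `@grpc/grpc-js` pass to service implementations. The `getUser` method, the `users` store, and the request field `id` are hypothetical names chosen for illustration and do not come from the slides. The sketch deliberately avoids importing any gRPC package so it can run on its own; only the numeric status codes are taken from the gRPC specification.

```javascript
// Subset of gRPC status codes. The numeric values are fixed by the gRPC spec.
const Status = { OK: 0, INVALID_ARGUMENT: 3, NOT_FOUND: 5 };

// Hypothetical in-memory store standing in for a real backend.
const users = new Map([[1, { id: 1, name: 'Ada' }]]);

// Unary handler: `call.request` carries the decoded request message, and
// `callback(error, response)` completes the RPC. Errors are reported as an
// object with a status `code` and a human-readable `message`.
function getUser(call, callback) {
  const id = call.request.id;

  // Reject malformed input before touching the store.
  if (!Number.isInteger(id) || id <= 0) {
    return callback({
      code: Status.INVALID_ARGUMENT,
      message: 'id must be a positive integer',
    });
  }

  const user = users.get(id);
  if (!user) {
    return callback({
      code: Status.NOT_FOUND,
      message: `user ${id} not found`,
    });
  }

  // Success: first argument is null, second is the response message.
  callback(null, user);
}

// Assumption: with @grpc/grpc-js, a handler like this would typically be
// registered via server.addService(serviceDefinition, { getUser }), where
// serviceDefinition is loaded from the .proto file.
```

Because the handler only depends on the shape of `call` and `callback`, it can be exercised without a running server, for example `getUser({ request: { id: 1 } }, (err, res) => console.log(err, res))`. Keeping validation and lookup errors mapped to distinct status codes lets clients tell a bad request apart from a missing record.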