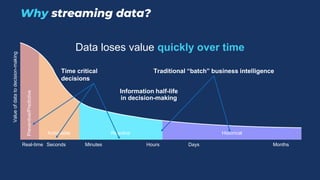

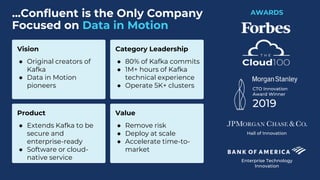

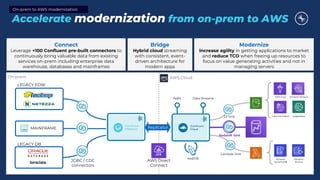

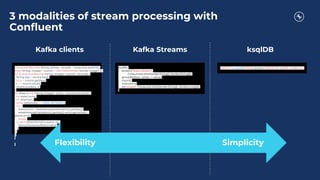

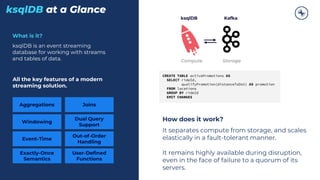

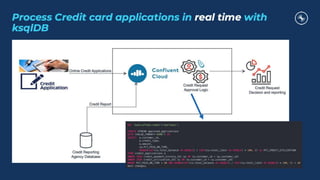

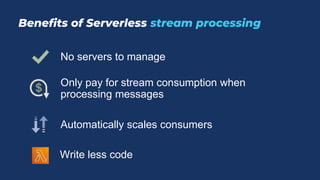

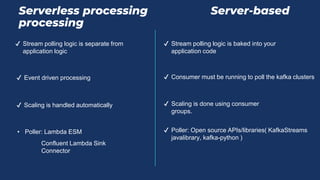

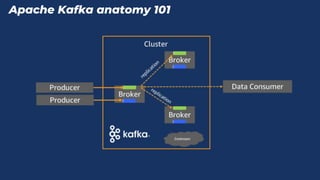

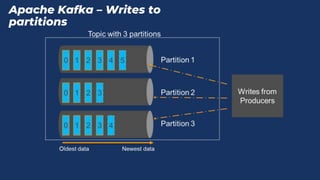

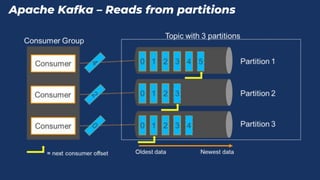

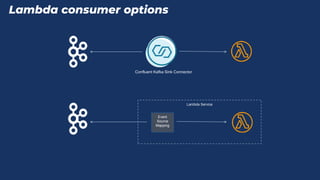

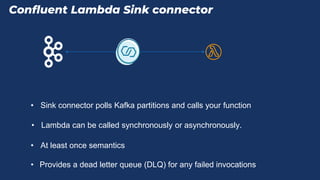

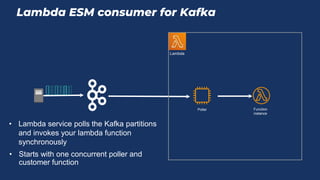

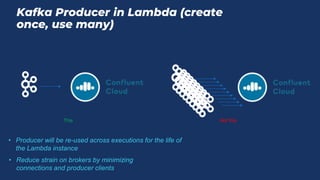

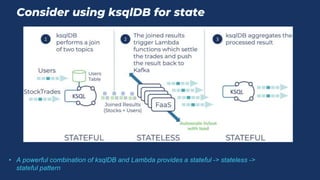

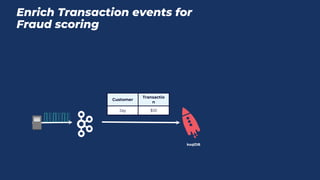

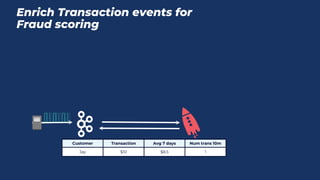

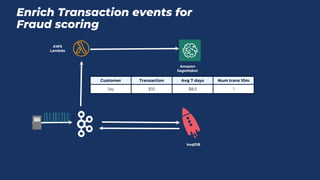

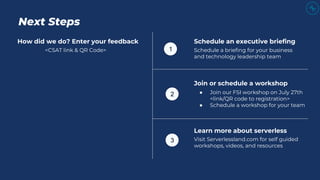

This document covers serverless stream processing for financial services applications. It introduces Confluent and Apache Kafka as the foundation for building event streaming applications, then describes how ksqlDB and AWS Lambda can be used for serverless stream processing. It offers best practices for using AWS Lambda as a stateless stream processor and illustrates the approach with financial use cases such as fraud detection, payments, and risk analytics. It also highlights two benefits of the serverless model: scaling automatically and paying only for what is consumed.
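
To make the idea of AWS Lambda as a stateless stream processor more concrete, here is a minimal sketch in Python. It is not taken from the slides. It assumes the function is triggered by a Kafka event source mapping, which delivers batches under a `records` key with base64-encoded values. The payment fields (`payment_id`, `amount`), the `SUSPICIOUS_AMOUNT` threshold, and the `is_suspicious` rule are hypothetical and stand in for a real fraud-detection check.

```python
import base64
import json

# Hypothetical threshold, chosen only for illustration.
SUSPICIOUS_AMOUNT = 10_000


def is_suspicious(payment: dict) -> bool:
    """Toy rule: flag a payment purely from its own fields (no stored state)."""
    return payment.get("amount", 0) >= SUSPICIOUS_AMOUNT


def handler(event, context):
    """Process one batch of Kafka records delivered to Lambda.

    The batch arrives as {"records": {"<topic>-<partition>": [record, ...]}},
    where each record's "value" is base64-encoded. Nothing is kept between
    invocations, so any number of copies can run in parallel.
    """
    flagged = []
    for records in event.get("records", {}).values():
        for record in records:
            # Assumes each message value is a JSON-encoded payment.
            payment = json.loads(base64.b64decode(record["value"]))
            if is_suspicious(payment):
                flagged.append(payment["payment_id"])
    return {"flagged": flagged}
```

Because each decision depends only on the record being processed, the function can scale out with the incoming batch volume. Logic that needs history across events, such as windowed aggregates or joins, would need state held elsewhere, for example in ksqlDB.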