A Guide to Building Highly Scalable Data Services for Large Enterprises

- 2. A guide to a highly scalable approach to the design and build of data services for large enterprises, one that can withstand the dynamic changes of the next 10 years of evolution. It gives full insight into the detailed design of a framework solution that supports evolving needs and delivers to business teams the product solutions they seek within a short span, to beat the market competition. Actual code and solution samples are also accessible from the author on a need basis. Framework code is available online.

  Copyright © 2019 by Digendra Vir Singh. All rights reserved.

  Limit of Liability/Disclaimer of Warranty: While the publisher and author have used their best efforts in preparing this book, they make no representations or warranties with respect to the accuracy or completeness of the contents of this book and specifically disclaim any implied warranties of merchantability or fitness for a particular purpose. No warranty may be created or extended by sales representatives or written sales materials. The advice and strategies contained herein may not be suitable for your situation. You should consult with a professional where appropriate. Neither the publisher nor author shall be liable for any loss of profit or any other commercial damages, including but not limited to special, incidental, consequential, or other damages.

  For general information on our other products and services, or technical support, please contact our Customer Care Department at +31-20-8895710 or cp@aninfosys.com. The author also publishes his books in a variety of electronic formats. Some content that appears in print may not be available in electronic books. For more information about DV products/services, visit his web site at www.meetdv.com.

- 3. About the Author

  Digendra Vir Singh (mostly remembered as DV)

  DV is an authority in Data Quality, Master Data Management and Data Governance. He graduated in computer engineering in 1997 and has deep hands-on expertise in a wide range of tools, operating systems, databases and programming languages. His versatile technical skills and his expertise in business needs analysis led to the invention of the FREE open source SOA Blockchain Framework solution and of a generic Multi Hub DQ Solution, both of which have been successfully deployed for large clients. In 1999, DV designed, hacked and coded solutions for the world's #1 mobile based payment.

  His dynamic personality speaks for itself, with 20+ years of cross industry consulting for Fortune 200 clients like Roche, Maersk, Axa, Deutsche Bank, T-Systems, TetraPak and ABN Amro. He has delivered many large DQ, SAP Integration, Data Migration and MDM projects, and is greatly appreciated for his multiple talents across advisory, business needs, project estimation and planning, solution design, product selection, delivery and development, and deployment.

  Apart from public speaking at various IT and non-IT events, he is also a business investor with a large real estate portfolio. Having realized that his solution offers savings of over 1.5 million € and a time saving of 1 year in project delivery and solution maturity, he decided to write this book to share his wisdom.

- 4. Special Thanks To You

  Thank you for taking the step forward to evaluate this book in terms of its promises and to look into the solution it offers to add value for you.

  The book's journey dates back to 2002, when I started to work on SAP Migration projects. There was a need for over 300 data services for one site, and with 6 sites running simultaneously that meant almost 2000 data services were needed every 8 months. Each site would deliver about 300 files on a daily basis for data checks and reconciliation, prior to the final load into SAP. The approach of SOA based brokers and data services limits the number of components to develop. With one generic file broker and 300 configurable data services, we can roll out 6 sites every 8 months for many years. THAT'S COOL.

  With each new client and more project challenges, I refined this solution to make it generic and to add more features. Within a normal infrastructure, it takes about 3-4 days to install and configure the framework and get the sample services to run. Its landmark success was proven at a client where more than 50 financial transaction posting data services run to feed a new SAP GL system with multiple millions of IDOCs each day without error, offering a single view of all service statuses, SOX compliance and audit approvals.

- 5. Acknowledgments

  Special thanks to the persons below, who have directly or indirectly contributed to the evolution of this solution.
  - Alain Hassler
  - Walter Sporleder
  - Roxanne Elia
  - Jatinder Sondhi
  - Khalid Lhalouani
  - Jared Daum
  - Christian Souche
  - Bruno Eiwen

  And to my Life Coach, Mr. Vinod Kumar Chauhan, who gave me so many of the life teachings that resulted in my drive to give to the world in the form of this book, as well as so many thoughts on how to be a happy and successful person. I love the question he asks me at each meeting: “Is your character intact?” It clearly says that great character is the key to a successful life.

  Additionally, thanks to the lovely persons below from my work and life journey. They contributed to my current success.
  - Eckhard Ortwein
  - Mathias Entenmann
  - Andreas Schroeder
  - Aman Teja
  - Seshu Nuthalapati
  - Prachi Singh
  - Udai Vir Singh – Father
  - Premlata Singh – Mother
  - JT Foxx – my business coach

- 6. CONTENTS

  PART I : Enterprise Challenges
  - SOA Definition
  - Crazy CIO/CDO demands
  - Services Definition
  - ROI is unpredictable & Vague

  PART 2 : Future of Services
  - Cloud of Information
  - SOA Solutions are expensive
  - MDM/Big Data Project challenges
  - Enterprise Service Bus
  - Blackhole Service Reports

  PART 3 : Step into SOA Blockchain Aspects
  - SOA Redefined
  - Platform insight for high ROI
  - Core Framework Features for Long Term Survival
  - DB Model
  - Services and Brokers coexist at Enterprise level
  - Service and Broker Independence
  - Modular Constituents of a Service
  - Depth of Service control
  - Service SOX Structure
  - Difference between Inbound and Outbound Services

  PART 4 : Walk over SOA Blockchain Implementation
  - Introduction
  - Data Object Management
  - Framework Architecture
  - Service Execution Steps - Detail Design

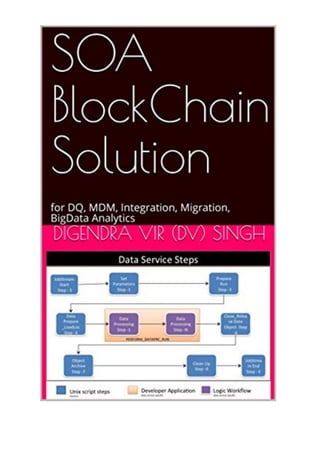

- 7.
    - JOBSTREAM_START
    - SET PARAMETERS
    - CHECK_LOCK_DATA_OBJECT
    - PREPARE_RUN
    - PARFILE_PREPARE_LOADLOC
    - DATA_PREPARE_LOADLOC
    - PERFORM_DATAPRC_RUN
    - CLOSE_RELEASE_DATA_OBJECT
    - OBJECT_ARCHIVE
    - OUTPUT_DATA_BROKER
    - CLEANUP
    - JOBSTREAM_END
  - Service Execution Flow for Process, Logging
  - DB APIs for Process, Logging
  - DB Logical Model / Staging Information
  - Service/Process Audit trail & Modification Logs

  PART 5 : SOA DB Model Deep Dive
  - Introduction
  - Deep Dive into DB Model
  - ER Diagram and Table Description
    - Interface Definition
    - Object Definition
    - Site Definition
    - Object Properties
    - Object Registry
    - Object X Reference
    - Process Statistics
    - Process Type
    - Period
    - Interface Stream/Chain Process Log
    - Step Process Log
    - Task Process Log

- 8.
    - Process Detail Log
    - Data Audit Log
    - Log Level
    - Message Domain
    - Message Class
    - Application Error Code Decoder
    - Status Values
    - Interface Functionality
    - Tolerance Level
    - Parameter Configuration
    - Message Archive / Message Store
  - Insight into – DB Components
  - DB nonAPI Components For Tables
  - DB API Components For Tables

  PART 6 : Object Brokers
  - Broker word explanation
  - Types of Brokers
  - Commercial Brokers
  - Usage of Brokers
  - Features of Broker
  - WebService Broker – Inbound
  - WebService Broker – Outbound
  - Message Queue Broker – Outbound
  - DB Broker – Inbound
  - File Broker – Inbound
  - Message Queue Broker – Inbound
  - File Broker – Outbound
  - DB Broker – Outbound

  PART 7 : Client Cases
  - Big International Bank
  - World's Largest Shipping

- 9.
  - World's Biggest Insurance
  - World's Top Pharma

  PART 8 : Coal Mine of Challenges
  - Cloud IT landscape
  - Underestimated Deployment efforts
  - Technology difficulty
  - Multi environment project setup & RM nightmare
  - Lack of tools for Deployment
  - Unified Deployment Robot
  - SAP is Black Box
  - SAP real time integration is a big challenge
  - Solutions to SAP real time feedback doesn't exist
  - SAP IDOC Real time Error capture solution

  PART 9 : 10 min QA with Guru
  - Client References
  - Applications needed to implement
  - DB needed to implement
  - Time frame to implement it
  - Complexity in enhancing
  - Maintenance issues
  - Change Deployments issues
  - Cost of this solution
  - Paid or Open source
  - Source code access
  - Deploying Brokers

  APPENDIX
  - Glossary of Terms

- 11. SOA Definition

  SOA became popular through its use around web services. “By formally embracing an SOA approach, solutions are better positioned to stress the importance of well-defined, highly interoperable interfaces.”

  Crazy CIO/CDO demands

  “The CIO demands SOA based delivery!” This message lands on your table and throws up many questions in the team, such as:
  - What is the definition of SOA for an MDM project?
  - What is the practical difference between the way I was doing things before and the way to be adopted?
  - Will there be a difference in the way of managing deliverables?
  - What is the better way to handle deployments for SOA?

  Services Definitions

  What kind of services are we talking about for each subject area? Each subject area, such as Material Master, Customer or Vendor, may have a project of a different nature, such as MDM or DQ. Below is the nature of the services in those contexts.

  MDM / RD Project
  - Inbound – Periodic/RealTime Data Load Services
  - Inbound – Initial Data Load Services
  - Inbound – GUI Data Capture Services
  - Inbound – Mass Data Update Services
  - Outbound – Periodic/RealTime Data OffLoad Services
  - Outbound – Reports (Services & Data Change)

  Data Quality Project

- 12.
  - Inbound – Periodic Data Load Services
  - Inbound – GUI Data Management Services
  - Outbound – Periodic Data Offload Services
  - Outbound – Dimensional Reporting Data Offload Services (Optional)
  - Outbound – Reports (Services & Data Issues)

  Integration / BigData
  - Inbound – Periodic/RealTime Data Load Services
  - Outbound – Periodic/RealTime Data OffLoad Services
  - Outbound – Reports (Services)

  ROI is unpredictable & Vague

  Is there any framework with a clear ROI? This is an extremely sensitive question to answer truthfully for a commercial product. We go a step further to nail it down.

- 14. Cloud of Information

  In the future, enterprises will work with data spread across the world in the cloud, especially given the amount of data we would like to use to work and make proper decisions. With the growing need to use larger data sets to make correct decisions, would it be possible for an enterprise to host all data on its own premises? The amount of data will drive the need for larger and faster processors, and this will drive the cost too. The offerings of needed information will grow with time, and they will be available in the cloud through services. An enterprise should build its information system on the SOA concept, so that it is ready to adapt to future needs without a complete rebuild.

  SOA Solutions are expensive

  What is needed is a proven methodology and a mature technological architecture that can be used to build your services and roll them out, without worrying about designs and solutions to fulfill the tons of functional and non-functional requirements that are mandatory.

  What features should you expect in a mature platform? A mature platform must be able to accommodate the dynamic changes in an enterprise over the next few years. Certain must-have features are listed below.
  - This architecture component / framework is based around the generic Service DB model, to roll out multiple services in an assembly line fashion.
  - This framework delivers all generic components that need to be used in each inbound / outbound service to adhere to the many service level requirements, such as service control, process control and process stats.
  - All project services can be configured to run in realtime or non-realtime mode. In either mode, each Job Stream is meant to consist of some standard Job Steps.

- 15.
  - Each service is free to choose which Job Steps to use, based on need.
  - Job Steps are implemented as reusable programs/ETL components. Each step nevertheless allows specific code to be added for a particular service.
  - The implementation of the same Job Step can differ between realtime and non-realtime.
  - All the service steps are meant to be part of a Service Run Executor framework. The implementation of the executor framework will vary depending on whether it is applied to a realtime or non-realtime service, and on the type of realtime service.
  - This executor is configurable and can execute the various job steps that are necessary to accomplish the interface execution, based on meta data configuration.

  Enterprise Service Bus

  What does Enterprise Service Bus / Communication Layer refer to? Basic ones include:
  - Message Queue
  - File System
  - Web Service Hub
  - DB Container

  Blackhole Service Reports

  Is it possible to get insights into all the black hole services running around enterprises? The framework model allows for various kinds of reports at data and service level, in automated or on-demand mode.

  Service Reports
  - Daily reports for all status
  - Service specific

  Data Object Reports

- 16.
  - Inbound Objects status
  - Outbound Objects status
  - Object status based report (selection on status, TimeSpan): same as above, with an extra filter on status
  - Objects in Error

  Data Reports
  - Service report with summary
  - Service report with data details

  Below are some sample reports.

- 19. SOA Redefined

  All project services can be configured to run in realtime or non-realtime mode. In either mode, each Job Stream is meant to consist of some standard Job Steps. Each service is free to choose which Job Steps to use, based on need. Job Steps are implemented as reusable programs/Informatica components. Each step nevertheless allows specific code to be added for a particular service. The implementation of the same Job Step can differ between realtime and non-realtime. All the service steps are meant to be part of a Service Run Executor framework. The implementation of the executor framework will vary depending on whether it is applied to a realtime or non-realtime service, and on the type of realtime service.

  Platform insight for high ROI

  What is needed is a proven methodology and a mature technological architecture that can be used to build your services and roll them out, without worrying about designs and solutions to fulfill the tons of functional and non-functional requirements that are mandatory.

  Use our framework to fulfill all project needs and speed up delivery:
  - We will present you an SOA based architectural model that will meet your immediate needs and offer great flexibility for future extensions at no additional redesign cost
  - An architecture that has a proven track record of being one of the best. It has been built over a few years of continuous inputs and acts as a great reference
  - Ease of incorporating new services or removing services in a plug & play approach
  - High level of audit trail, extensive error logging and end to end data lineage
  - Full length of data reconciliation
  - Real time data feed, thus offering real time reporting capabilities
  - Elimination of the trial and error approach by inexperienced resources

- 20. Drawbacks of the trial and error approach:
    - This approach can be costly in terms of timelines and resources
    - It often turns into continuous, ongoing patchwork on the existing solution
    - It often becomes complicated and unmanageable due to the series of patches
  - Delivery of a proven platform will deliver greater value and provide cost savings on the business front too
  - This platform gives auditors and data owners a high level of transparency in terms of seeing their data and its audit trail, for regulatory compliance as well as data integrity
  - Save multiple millions in costs over the next 1-2 years in terms of learning curve, avoiding fixes to existing work, eliminating the time dependent users waste to get the right reports, etc.
    - Shorten delivery of the right platform
    - Delivery capability to manage / roll out more sites at the same time within fixed deadlines
    - Shorten each phase thanks to a better platform and quick turnaround time for checks and appropriate reports

  Core Framework Features for Long Term Survival
  - Framework execution: detailed in section 2.2 of the next chapter.
  - The framework highlights: detailed in section 2.2 of the next chapter.
  - The step definitions: detailed in section 2.3 of the next chapter.

  DB Model

  A plug & play design is available to build tons of services for MDM, real time integration and Big Data in a SOX compliant manner. See how it helps you save over 1 million € and 1 year of expensive pitfalls. This is covered in detail in a later chapter. The ER diagram presented may be outdated, as some attributes have evolved.

- 21. Services and Brokers coexist at Enterprise level

  Here we show how various enterprise pieces such as schedulers, data brokers and individual services/interfaces co-exist and support each other with SOA. It is detailed in section 2.2.1 of the next chapter.

  Service and Broker Independence

  The diagram below shows the overall setup of a service within the context of Broker Services.

  Modular Constituents of a Service

  Is it possible to break a service down into well defined modules? When we talk about mature platforms, the diagram below shows the various generic steps within any kind of service. It is detailed in section 2.3 of the next chapter.

  SERVICES – Brokers & Data Services (asynchronous mode)
  - Data Broker (FILE/DB/MSG): the data broker runs in realtime mode, ensures that all events and associated information are registered within the framework, and relays each event to the subscribing services.
  - Service (XYZBN): the service runs in realtime / scheduled mode and consumes / distributes the data.

- 22. Within the context of a DQ project, the orange box can expand into the steps below, consisting of multiple steps of checks and other activities such as task distribution.

  Data Service - Components: Generic Framework Steps
  - Step S: JobStream Start
  - Step 1: Set Parameters
  - Step 2: Check_Lock Data Object
  - Step 3: Prepare Run
  - Step 4: Data Prepare_LoadLoc
  - PERFORM_DATAPRC_RUN: Data Processing Step 1 to Data Processing Step N
  - Step 6: Close_Release Data Object
  - Step 7: Object Archive
  - Step 8: Output Data Broker
  - Step 9: Clean Up
  - Step E: JobStream End

  It shows the various generic steps within any kind of service.

- 23. Within an MDM project, this orange box can contain code for an initial load, a mass data update, a data output service, etc. Within the context of SAP or any other data migration project, the orange box can look like below.

- 24. Depth of Service control

  To what level of granularity can I control and monitor my service process? This is a frequently overlooked design aspect. We offer prebuilt support for three depth levels of control. It is detailed in section 2.4 of the next chapter.
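As an illustration only, the sketch below models three nested log levels with an in-memory SQLite database. It assumes that the three depth levels correspond to the Job Chain, Job Step and Job Task layers and the log tables named under Service SOX Structure. The column names, the status values and the helper functions are hypothetical and much simpler than the framework's real Oracle model.

```python
import sqlite3

# Hypothetical, simplified columns: one table per depth level, each row
# pointing to its parent on the level above.
SCHEMA = """
CREATE TABLE jobchain_process_log (
    run_id      INTEGER PRIMARY KEY,
    service_id  TEXT NOT NULL,
    status      TEXT NOT NULL
);
CREATE TABLE step_process_log (
    step_log_id INTEGER PRIMARY KEY,
    run_id      INTEGER NOT NULL REFERENCES jobchain_process_log(run_id),
    step_name   TEXT NOT NULL,
    status      TEXT NOT NULL
);
CREATE TABLE task_process_log (
    task_log_id INTEGER PRIMARY KEY,
    step_log_id INTEGER NOT NULL REFERENCES step_process_log(step_log_id),
    task_name   TEXT NOT NULL,
    status      TEXT NOT NULL
);
"""


def open_log_db():
    """Create an in-memory database holding the three log tables."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(SCHEMA)
    return conn


def log_chain(conn, service_id, status="STARTED"):
    """Level 1: one row per job chain run; returns the new run id."""
    cur = conn.execute(
        "INSERT INTO jobchain_process_log (service_id, status) VALUES (?, ?)",
        (service_id, status),
    )
    return cur.lastrowid


def log_step(conn, run_id, step_name, status="STARTED"):
    """Level 2: one row per job step within a run."""
    cur = conn.execute(
        "INSERT INTO step_process_log (run_id, step_name, status) VALUES (?, ?, ?)",
        (run_id, step_name, status),
    )
    return cur.lastrowid


def log_task(conn, step_log_id, task_name, status="STARTED"):
    """Level 3: one row per task within a step."""
    cur = conn.execute(
        "INSERT INTO task_process_log (step_log_id, task_name, status) VALUES (?, ?, ?)",
        (step_log_id, task_name, status),
    )
    return cur.lastrowid


def run_tree(conn, run_id):
    """Return (step_name, task_name, task_status) rows for one run, in insert order."""
    return conn.execute(
        """SELECT s.step_name, t.task_name, t.status
           FROM step_process_log s
           JOIN task_process_log t ON t.step_log_id = s.step_log_id
           WHERE s.run_id = ?
           ORDER BY s.step_log_id, t.task_log_id""",
        (run_id,),
    ).fetchall()
```

Because every task row points to a step and every step row points to a run, a monitoring query can drill down from a service run to a single task, which is the kind of granularity this section asks about.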

- 25. Service SOX Structure

  If a service uses files for inbound or outbound purposes, it needs to use its own folder structure for this. Below is the expected service folder structure under the infa_shared / product file system folder:
  - smds/
  - smds/{serviceid}
  - smds/{serviceid}/in
  - smds/{serviceid}/out
  - smds/{serviceid}/run/yyyymmdd_{runid}
  - SrcFiles/{serviceid}
  - TargetFiles/{serviceid}

  Difference between Inbound and Outbound Services

  A well defined file structure and a small, detailed, DB based log capture model enable this service model to be SOX compliant.

  Process Layers
  - Job Chain
  - Job Step
  - Job Tasks

  Log tables
  - JOBCHAIN_PROCESS_LOG
  - STEP_PROCESS_LOG
  - TASK_PROCESS_LOG
  - GENERIC_DETAIL_LOG
  - DATA_AUDIT_LOG
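The service folder structure listed above can be derived from a service id and a run id. The following is a minimal sketch, assuming all folders live under one shared root. The function names, the root argument and the sample ids are hypothetical and are not part of the framework.

```python
from datetime import date
from pathlib import Path


def service_folders(root, service_id, run_id, run_date):
    """Build the expected folder paths for one service run (nothing is created)."""
    root = Path(root)
    run_name = f"{run_date:%Y%m%d}_{run_id}"  # yyyymmdd_{runid}
    return [
        root / "smds" / service_id / "in",
        root / "smds" / service_id / "out",
        root / "smds" / service_id / "run" / run_name,
        root / "SrcFiles" / service_id,
        root / "TargetFiles" / service_id,
    ]


def create_service_folders(root, service_id, run_id, run_date):
    """Create the folders, including missing parents; existing ones are left alone."""
    folders = service_folders(root, service_id, run_id, run_date)
    for folder in folders:
        folder.mkdir(parents=True, exist_ok=True)
    return folders
```

For example, `create_service_folders("/infa_shared", "S21", 7, date(2019, 3, 5))` would create a run folder named `20190305_7` under `smds/S21/run`. Giving each run its own folder keeps the files of different runs apart, which helps when a run has to be audited later.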

- 26. CONSUMING PROCESS FLOW STEPS:

  Below is a listing of the Job Steps applicable to the consuming process flow, each with a brief description. They are detailed further later.
  - JOBSTREAM_START: Initialize the start of the Service JobChain/Stream process.
  - SET PARAMETERS: Fetch all necessary config values and assign them to parameters at Chain level.
  - CHECK_LOCK_DATA_OBJECT: Check for the existence of Data Objects/files for processing under <interfacename> within the meta data. It also updates the processing status in the Object_X_Prc_status table to STARTED for the ones identified for processing.
  - PREPARE_RUN: Do the necessary preparation steps. Tasks here primarily involve activities on the file system.
  - PARFILE_PREPARE_LOADLOC: Prepare the appropriate parameter files for the actual data jobs and copy them to the appropriate load job locations.
  - DATA_PREPARE_LOADLOC: Fetch the data objects/files for the data processes and ensure their availability for the data jobs. For file objects, it FTPs them to the ETL server.
  - PERFORM_DATAPRC_RUN: Initiate the ETL/data processing steps to perform the technical and functional control checks and the data processing. It may consist of one or more steps.
  - CLOSE_RELEASE_DATA_OBJECT: Update the processing status in the Object_X_Prc_status table to COMPLETED or NULL for the ones identified for processing, depending on process success or failure.
  - OBJECT_ARCHIVE: Archive the processing logs, error files etc. For file object outputs, it copies them from the Informatica server to the file server.
  - OUTPUT_DATA_BROKER: Look into the object config and put the appropriate output data objects/files on the out path, to be taken care of by brokers. (This functionality is not very critical for consuming services.) It offers the same functionality as the inbound object broker, but only for output data.
  - CLEANUP: Clean up the temporary files, log files, data etc., where necessary.
  - JOBSTREAM_END: Mark the end of the Job Chain/Stream with the appropriate return_code.

- 27. OUTBOUND PROCESS FLOW STEPS:

  Below is a listing of the Job Steps applicable to the provider process flow, each with a brief description. They are detailed above.
  - JOBSTREAM_START: Same as Consuming
  - SET PARAMETERS: Same as Consuming
  - CHECK_LOCK_DATA_OBJECT: Same as Consuming, with a small difference in implementation
  - PREPARE_RUN: Same as Consuming
  - PARFILE_PREPARE_LOADLOC: Same as Consuming
  - DATA_PREPARE_LOADLOC: Same as Consuming
  - PERFORM_DATAPRC_RUN: Same as Consuming
  - CLOSE_RELEASE_DATA_OBJECT: Same as Consuming
  - OBJECT_ARCHIVE: Same as Consuming
  - OUTPUT_DATA_BROKER: Same as Consuming
  - CLEANUP: Same as Consuming
  - JOBSTREAM_END: Same as Consuming
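To make the step flow concrete, here is a small sketch of a configurable executor that runs the steps listed above in their standard order. It is not the framework's executor. The `RunContext` class, the status strings, the choice of closing steps that still run after a failure, and the 0/1 return code are all assumptions made for this illustration.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Set, Tuple

# Step names in the order given in the listing above.
STANDARD_STEPS = [
    "JOBSTREAM_START", "SET PARAMETERS", "CHECK_LOCK_DATA_OBJECT", "PREPARE_RUN",
    "PARFILE_PREPARE_LOADLOC", "DATA_PREPARE_LOADLOC", "PERFORM_DATAPRC_RUN",
    "CLOSE_RELEASE_DATA_OBJECT", "OBJECT_ARCHIVE", "OUTPUT_DATA_BROKER",
    "CLEANUP", "JOBSTREAM_END",
]

# Assumption: these closing steps run even after a failure, so that locks are
# released, temporary files are removed and the stream gets a return code.
ALWAYS_RUN = {"CLOSE_RELEASE_DATA_OBJECT", "CLEANUP", "JOBSTREAM_END"}


@dataclass
class RunContext:
    """Hypothetical per-run state shared by all steps."""
    service_id: str
    params: Dict[str, str] = field(default_factory=dict)
    step_log: List[Tuple[str, str]] = field(default_factory=list)


StepFn = Callable[[RunContext], None]


def _noop(ctx: RunContext) -> None:
    """Default implementation for steps without service specific code."""


def run_job_stream(ctx: RunContext, enabled_steps: Set[str],
                   implementations: Dict[str, StepFn]) -> int:
    """Run the enabled steps in standard order; return 0 on success, 1 on failure."""
    return_code = 0
    for step in STANDARD_STEPS:
        if step not in enabled_steps:
            continue  # each service chooses which steps it uses
        if return_code != 0 and step not in ALWAYS_RUN:
            ctx.step_log.append((step, "SKIPPED"))
            continue
        try:
            implementations.get(step, _noop)(ctx)
            ctx.step_log.append((step, "COMPLETED"))
        except Exception:
            ctx.step_log.append((step, "FAILED"))
            return_code = 1
    return return_code
```

A service would pass its own set of enabled steps and a dictionary that maps step names to its specific code, for example only `PERFORM_DATAPRC_RUN`, while every other enabled step falls back to the generic implementation. In a real setup the enabled steps would come from meta data configuration instead of a Python set.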

- 31. Broker word explanation

  As the word says, brokers are meant to move things around and keep account of them.

  Types of Brokers

  Brokers can exist for any kind of data container. The most common data containers we work with are flat files, database tables, messages within queues / topics, and web service calls with data.

  Commercial Brokers

  In large corporations, we will often notice these two:
  - IBM Message Broker
  - Oracle Web Service Broker

  Using IBM Message Broker in combination with our Message Broker is a good fit, as we have built a meta data capture mechanism and also a sophisticated mechanism for data services to consume those messages.

  Usage of Brokers

  A broker becomes a critical component of a project wherever we need to register data objects on their way in and out, for reasons such as audit and compliance. The other important case is when one data object is to be used by more than one data service. In those cases it is wise to register it once, distribute it to the different services and let them consume it in their own ways. In a special case, one service may even reject the contents of the file while the other service consumes it successfully.

  Features of Broker

  The needs can be endless; we tend to support the most important ones from the audit aspect. Ideally, a broker should be able to:
  - scan some sources, including virtual sources
  - register the data object and its various configurable properties

- 32.
  - support registration of valid data object patterns
  - register data objects that do not match a valid pattern and mark them as BAD
  - identify the services that have subscribed to a particular data object and distribute the data object to each of them in such a way that the consuming data service can be restarted without losing the data object

  WebService Broker – Inbound

  I have not developed a solution of my own for this so far. I have instead used an enterprise tool for it.

  WebService Broker – Outbound

  I have not developed a solution of my own for this so far. I have instead used an enterprise tool for it.

  Message Queue Broker – Outbound

  This broker is very similar to the “Message Queue Broker – Inbound”. It will be documented in the next release. An ideal case was one where we composed messages to send to a message queue linked to SAP PI, and used our solution to register the messages and store the message contents too.

  DB Broker – Inbound

  This broker is very similar to the “DB Broker – Outbound”. It will be documented in the next release.
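The broker features listed under Features of Broker (pattern based registration, marking unknown objects as BAD, and distribution to subscribing services) can be sketched as follows. This is an in-memory illustration only. The `FileBroker` class, the subscription dictionary, the status strings and the sample file names are hypothetical, and a real broker would persist its registry in the framework database.

```python
import fnmatch
from dataclasses import dataclass, field
from typing import Dict, List, Tuple


@dataclass
class FileBroker:
    # Valid data object pattern -> ids of the services subscribed to it.
    subscriptions: Dict[str, List[str]]
    # Audit registry of every object seen: (object_name, status).
    registry: List[Tuple[str, str]] = field(default_factory=list)
    # One inbox per service, so that each subscriber keeps its own reference.
    inboxes: Dict[str, List[str]] = field(default_factory=dict)

    def register(self, object_name: str) -> str:
        """Register one data object and hand it to every subscribing service."""
        subscribers: List[str] = []
        for pattern, services in self.subscriptions.items():
            if fnmatch.fnmatchcase(object_name, pattern):
                subscribers.extend(s for s in services if s not in subscribers)
        # Objects that match no valid pattern are still registered, but as BAD.
        status = "REGISTERED" if subscribers else "BAD"
        self.registry.append((object_name, status))
        for service_id in subscribers:
            self.inboxes.setdefault(service_id, []).append(object_name)
        return status
```

With subscriptions such as `{"MATERIAL_*.csv": ["S21", "S22"]}`, a file named `MATERIAL_20190305.csv` is registered once and lands in the inbox of both services, while a file that matches no pattern is only recorded as BAD. Keeping a separate inbox per service is one simple way to let a consuming service restart and still find the data object waiting for it.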

- 33. Part 6.1: <<File Broker – Inbound>>

- 34. Part 6.2: <<Message Queue Broker – Inbound>>

- 35. Part 6.3: <<File Broker – Outbound>>

- 36. Part 6.4: <<DB Broker – Outbound>>

- 38. Big International Bank

  The framework was used to design and build the risk data processing platform for different kinds of risk, such as credit risk, non credit risk, operational risk, market risk and business risk. Here the risk department, operated from the global headquarters, received data from countries worldwide in the form of data files, which needed to be consumed while fulfilling SOX compliance. Data object registration was very critical, as we sometimes got multiple deliveries within the same day and needed to identify the right data to pump into the risk models for processing. Initially the platform supported economical data processing, and later the same platform could support Basel II data processing, which had many more data services. Here we used only the Data File Inbound Broker along with the SOA Framework.

  World's Largest Shipping

  The need was to go live with a DQ project and also an MDM project. The number of subject areas was estimated at about 40, each with a large number of data objects. This clearly showed the need to develop a large number of data services to feed into the DQ project, and also a large number of services for the MDM domain to support initial loads, mass updates etc. Here we used the SOA Framework to build a large number of data services. We also deployed the Message Broker to capture and manage all MDM Hub events, and used the DB Outbound Broker to capture new data arrival events within database tables.

- 39. World's Biggest Insurance

  This project demanded a large number of data services to feed into the new SAP GL in real time, and also to provide real time feedback on errors to the data provider. The challenge in the project was the high financial risk associated with the data posted into SAP. This demanded high performance from the data services and a high level of data traceability, both for posting into SAP and for later error reconciliation. Here we used only the Data File Inbound Broker along with the SOA Framework.

  World's Top Pharma

  Here the ambitions were high: a global rollout of a DQ project for the Material Master subject area, involving multiple countries to begin with. This clearly meant over 40 data services in the long run for each country. In addition, we had to implement an MDM project for managing MM data. This also involved almost real time data push to and data extraction from multiple SAP systems. We had to use the Data File Inbound Broker, the Data File Outbound Broker and the Message Inbound Broker along with the SOA Framework to make this happen.

- 41. Cloud IT landscape

  The big challenge here is how to manage and supervise the various combinations of services split across the in-house and cloud landscape. Using this framework executor, we can manage and supervise even the cloud services, as we would call the cloud service components as part of the execution steps. This allows centralized monitoring of all services and also a seamless transition of in-house services to the cloud.

  Underestimated Deployment efforts

  Deployment of development work is the most costly aspect of the budget, and it is usually the least clearly defined one. To minimize these big budget surprises, we need to bring in an assembly line approach that uses well defined components to build a deliverable. Our SOA Blockchain framework is meant for exactly that.

  Multi environment project setup & RM nightmare

  Release management of build components across multiple environments (e.g. Dev, Dev2, Test, Prod) becomes a time consuming nightmare, especially in an Agile based project. Forget the packaging: the deployment alone will take 20% of the project budget. It is wise to use Release Management tools in those cases.

  Lack of tools for Deployment

  In the real world, there is a big lack of Change Management and Change Deployment tools. This is closely related to the complex server setups, which vary widely across companies. It is wise to use a tool that can be extended to fit your own enterprise needs.

- 42. Unified Deployment Robot / Change Deployment Robot

  This is a Release Management, change packaging and deployment tool developed by our partners, and we recommend its usage. Using it would by default yield a 60% productivity gain on deployment aspects. Since it also allows scheduled deployment, the team is more productive, as deployments can be planned to happen at night. This implies an easy 10% budget saving within agile projects.

  SAP real time integration is a big challenge

  Such projects, especially those involving financial data, are a big challenge and carry high risk, because of the consequences of things going wrong with millions of transactions within a file, and because of scenarios where we need to identify the last state of the data when things went wrong. This demands a robust audit trail framework solution that offers a full audit of data and data service state.

  Solutions to SAP real time feedback doesn't exist

  In addition to the above data risks, the issue is that SAP by default does not offer log file feedback to the data services interacting with it. This leaves the burden of data reconciliation on the data service itself. Browsing through millions of IDOCs and analysing the errors in them is a nightmare and an extremely slow, time consuming process. To act in time, it is ideal to build a solution that can capture all posted IDOCs and do an automatic reconciliation at line item level.

  SAP IDOC Real time Error capture solution

  The pain of the business team's dependency on the support and IT teams is resolved by having an automated data service that delivers reconciliation reports on the data posted into the SAP system.

- 43. Below are the details of such a design and solution.

  Error Framework - SAP

  Are you able to handle integration transactions into SAP in real time, to avoid collateral damage? Almost no company has this solution: I have personally worked for many large clients and have seen none with such a solution in place. Conceptually it is simple, and with a framework solution it becomes easy to build it for the various kinds of data services that integrate with SAP. It is a two step process.

  1) A data service, e.g. Service 21, writes transactions to SAP, using an input file as below:

- 45. 2) Generic Error Framework Steps

  The diagram shows the various generic steps for SAP real time error handling.
  - Service G20: It reads the IDOC tables on a continuous, scheduled or on-demand basis and updates the DB Error table.
  - Service S31: An error handling service that reads the DB Error table and generates output files for each inbound service (e.g. Service 21).
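The two services described above can be sketched as two small functions: one that collects failed IDOC rows into an error table, and one that groups those errors per originating inbound service. This is a simplified in-memory illustration. The row fields (`idoc_id`, `service_id`, `line_item`, `status`, `message`) and the status value `ERROR` are hypothetical placeholders and not real SAP table columns or IDOC status codes.

```python
from collections import defaultdict


def collect_errors(idoc_rows, error_table):
    """G20-like step: copy failed IDOC rows into the error table, once per IDOC."""
    known = {row["idoc_id"] for row in error_table}
    for row in idoc_rows:
        if row["status"] == "ERROR" and row["idoc_id"] not in known:
            error_table.append(dict(row))
            known.add(row["idoc_id"])
    return error_table


def errors_by_service(error_table):
    """S31-like step: group the errors per inbound service, at line item level."""
    report = defaultdict(list)
    for row in error_table:
        report[row["service_id"]].append((row["line_item"], row["message"]))
    return dict(report)
```

Because `collect_errors` skips IDOCs it has already recorded, it can be run continuously or on a schedule without duplicating entries. Each entry of the grouped report could then be written to the output file of the corresponding inbound service.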

- 48. Applications needed to implement

  The tools needed to implement this framework are very basic.
  - SOA Blockchain Framework: Unix, DB (e.g. Oracle)
  - File Broker: Unix, DB (e.g. Oracle)
  - DB Broker: Unix, DB (e.g. Oracle)
  - Message Broker: ETL tool with queue connectivity / programming language, DB (e.g. Oracle)

  DB needed to implement

  The currently available DB APIs are coded for Oracle. If you want to use another DB, those APIs need to be migrated.

  Time frame to implement it

  1 week at most to test the framework and get the sample code running. It can be quicker if the organisation's IT is fast and approachable.

  Complexity in enhancing

  It is very easy to extend the steps, or to introduce new steps if needed.

- 49. Similarly, the brokers can be enhanced easily, as most of the time it is only the logic that needs enhancement.

  Maintenance issues

  Since development is pattern based, it is easy to maintain, and the same person can easily handle a large number of data services, an entire project or even multiple projects. The actual logic is centred around one step, which is normally well tested and therefore sees few operational issues.

  Change Deployments issues

  This is a big issue in each project. Since the solution is pattern based, packaging is easier, as the locations of the deliverable code components are well defined. Since it is service oriented, the data services are 99% independent, so packaging and deployment carry low risk and are quicker.

  Cost of this solution

  Building such a solution with so many features indeed takes a long time, and it is definitely worth more than 2 million €.

  Paid or Open source

  We give it away for FREE, so you can classify it as Open Source too.

  Source code access

  Please contact us and we shall arrange the code base for you. We can even tune it to the needs of your environment.

  Deploying Brokers

  The framework needs to be installed first. Deploying and using the brokers is then easy.

- 50. Appendix

- 51. Glossary of Terms

  Acronym: Description
  - UI: User interface application.
  - GUI: Graphical user interface. Same as UI.
  - SDNL: Special designated National list
  - GAD: General Architecture Design document
  - ER: Entity Relationship Diagram (it shows tables and their linkages)
  - DB: Database
  - API: Application Programming Interface

- 52. WOOPS!! NO BULLSHIT

  Is this what you are thinking? Your thoughts, comments and ideas about this book would be most appreciated. Write to me at digendra@yahoo.com with the email subject “Book: SOA”, or post comments at www.meetdv.com.

  Thinking of engaging? For my consulting expertise or for speaking at your company / event, please drop an email or call us.