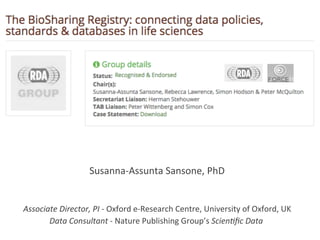

BioSharing WG - ELIXIR IG - RDA Plenary 7, Tokyo, March 2016

Update on the BioSharing WG activities at the joint ELIXIR IG and BioSharing WG breakout: https://rd-alliance.org/joint-meeting-ig-elixir-bridging-force-wg-biosharing-registry.html

Recommended

Engaging the Citizen Scientist in Content Enhancement for BHL

This presentation will discuss two current crowdsourcing activities initiated with BHL content: Science Gossip (implemented by ConSciCom on top of the Zooniverse platform) and two online games (Beanstalk and Smorball, developed by Tiltfactor @ Dartmouth).

Efficient Data Reviews and Quality in Clinical Trials - Kelci Miclaus

Kelci Miclaus from SAS JMP: 'Efficient Data Reviews and Quality in Clinical Trials' - presented at Clinical Data Live 2013.

CLINICAL STUDY REPORT - IN-TEXT TABLES, TABLES FIGURES AND GRAPHS, PATIENT AN...

This document discusses technical requirements and solutions for producing statistical outputs for clinical study reports according to ICH E3 guidelines. It provides an overview of key points in ICH E3 related to in-text tables, post-text tables and figures, narratives, and patient data listings. It also discusses considerations for formatting outputs, including paper size and style guidelines. Potential solutions for automating output generation using SAS are presented.

Clinical Data Review Best Practices - E. Herbel

The document summarizes best practices for clinical data review. Historically, clinical research associates reviewed 100% of paper case report forms and data was reviewed in exhaustive detail. However, this approach is now seen as inefficient. The current trend is for risk-based data review, where highest risk issues are prioritized. This allows reviewers to focus on important safety data and unexpected trends rather than getting bogged down in minor details. Effective data review examines data from aggregate summaries to identify trends, then allows drilling down to patient-level details to investigate further. Graphical aggregates like scatter plots and risk assessments are also useful review tools to detect outliers.

Clinical trial protocol writing

This document provides guidance on writing clinical trial protocols and investigator's brochures. It explains that a protocol is a complete description of a clinical trial, including its objectives, design, methodology, and other key elements. It emphasizes writing clear and unambiguous eligibility criteria. It also reviews important sections of a protocol, including study design, safety reporting, statistics, and informed consent. An investigator's brochure is a comprehensive document summarizing safety information about an investigational product from preclinical and clinical trials to guide its use in humans.

FAIR data and NPG Scientific Data: RIKEN Yokohama, 25 June, 2014

The document summarizes a presentation about making scientific data FAIR (Findable, Accessible, Interoperable, Reusable). It discusses the concept of FAIR data and several of the presenter's related projects. Examples are provided of using standards like ISA-Tab to structure metadata and make datasets interoperable. The presentation outlines the presenter's roles in data capture, publication, and standards development efforts to promote FAIR data principles. Scientific Data, a new journal for peer-reviewed data descriptions, is introduced as a way to make datasets more discoverable and reusable.

Oxford DTP - Sansone curation tools - Dec 2014

The document discusses making experimental data and methods more reproducible and accessible by providing structured metadata alongside narrative descriptions. It recommends using community standards and ontologies to semantically tag key information, and machine-readable formats to structure descriptions in a consistent way. Tools are proposed to help authors report structured information and curate it according to these standards to make data fully FAIR (findable, accessible, interoperable, reusable). The goal is to move from experiments that are difficult to reproduce to those that are "born reproducible".

BioSharing - RDA Plenary 6 - Metadata Standards Catalog WG and BioSharing WG ...

An introduction to the metadata landscape in the life sciences. Covering metadata standards, the databases that implement them and the policies that endorse/recommend both standards and databases in the life sciences.

NPG Scientific Data; SSP, Boston, May 2014: http://www.sspnet.org/events/annu...

Susanna-Assunta Sansone

This document outlines the services provided by Scientific Data, a publication from Nature that helps authors publish, discover, and reuse research data. It provides structured metadata and a narrative component for Data Descriptors, which describe datasets in detail without new scientific findings. The publication works with over 50 repositories and provides submission assistance and semantic annotation to help authors find appropriate data archiving locations.

BioSharing update and next steps - ELIXIR ALL Hands - March, 2015

This document describes a web-based registry that aims to provide guidance for researchers, developers, and curators on selecting content standards and databases. There are almost 600 content standards in the life sciences. The registry will allow users to search for, filter, submit, and view information on standards and databases. It will link standards to databases that implement them and provide visualizations of standard formats and terminologies. The goal is to help stakeholders make informed decisions by providing a curated, searchable registry of standards and database information. An advisory board and working group will oversee the registry's development and operations.

How to share useful data

A 45-minute presentation given at the 'Getting published in Nature's Scientific Data journal' event, hosted by the University of Cambridge Research Data Management team (www.data.cam.ac.uk), on Monday 11th January 2016.

Overview to: BBSRC Oxford Doctoral Training Partnership - Dr Sansone - July 2014

Susanna-Assunta Sansone

What to know when planning your data management strategy and preparing a data management statement for a research proposal: an overview for BBSRC DTP first-year students.

2014 mmg-talk

This document summarizes research on non-model ascidian species Molgula occulta and Molgula oculata. An international collaboration generated transcriptome data, sequenced the genomes of three Molgula species, and examined gene expression patterns related to tail development. Analysis revealed heterochronic shifts in developmental timing between tailed and tailless species. The data resources enabled further study of evolutionary shifts in gene regulatory networks underlying conserved developmental processes. The document emphasizes the importance of methods development for large-scale data analysis to enable new biological insights.

ISA - a short overview - Dec 2013

Reproducibility (and the R*) of Science: motivations, challenges and trends

IRCDL Pisa 31 Jan – 1 Feb 2019

https://ircdl2019.isti.cnr.it/

IRCDL 2019: the 15th Italian Research Conference on Digital Libraries.

High quality data publications: drives and needs - Sansone, BDebate, 12 Nov 2014

Susanna-Assunta Sansone

http://www.bdebate.org/sites/default/files/archivos/debate/bdebate_bib_big_data_opensimposium_program_121114_web_1.pdf

Big Data Standards - Workshop, ExpBio, Boston, 2015

1) Big data standards are needed to make data understandable, reusable, and shareable across different databases and domains.

2) Effective standards require reporting sufficient experimental details and context in both human-readable and machine-readable formats.

3) Developing standards is a collaborative process involving different stakeholder groups to define requirements, vocabularies, and data models through both formal standards bodies and grassroots organizations.

GARNet workshop on Integrating Large Data into Plant Science

Workshop on Integrating Large Data into Plant Science organised by GARNet and egenis, at Dartington Hall, Devon, 21st April 2016.

EMBL Australia Bioinformatics Resource BioInfoSummer 2016

EMBL-ABR is a distributed national research infrastructure in Australia that provides bioinformatics support to life science researchers. It aims to increase Australia's capacity to analyze large heterogeneous datasets, contribute to developing best practices in data management and tools, and enable engagement in international bioinformatics programs. EMBL-ABR has nodes across Australian institutions and works to showcase Australian research internationally and coordinate training in bioinformatics.

COPO kick-off meeting

An overview of the ISA tools (http://isa-tools.org) presented at the COPO kick-off meeting in Norwich, UK, September 2014.

Claudia Bauzer Medeiros Digital preservation – caring for our data to foster...

This document discusses the importance of digital preservation. It notes that digital data is costly to produce and can contribute to scientific progress if preserved and shared. Preserving data ensures it can be found in the future as technologies and standards change over time. The document outlines reasons to preserve data, what types of data and associated materials should be preserved, methods for ensuring long-term access and retrieval such as metadata and standards, and challenges around curation and maintenance of preserved data collections.

Protein Database

Lecture delivered by T. Ashok Kumar, Head, Department of Bioinformatics, Noorul Islam College of Arts and Science, Kumaracoil, Thuckalay, India, at the UGC-sponsored National Workshop on Bioinformatics and Genome Analysis for College Teachers, August 11 & 12, 2014, organized by the Centre for Bioinformatics, Department of Zoology, NMCC.

Scientific Data overview of Data Descriptors - WT Data-Literature integration...

Susanna-Assunta Sansone

This document introduces Scientific Data, a new peer-reviewed journal for publishing data descriptors from Nature Publishing Group. It will provide structured metadata and narrative articles to describe datasets for reuse. The journal is now open for submissions and will launch in May 2014, featuring an advisory panel and sections for standardized data descriptor articles and experimental metadata. It aims to give proper credit for data sharing and promote open access, reuse and peer review of curated scientific datasets.

An Oz Mammals Bioinformatics and Data Resource

The document proposes an Oz Mammals Bioinformatics and Data Resource to store, share, and analyze genomic and other data from Australian mammal studies. It would:

1) Capture existing Oz mammal data and resources, provide long-term storage, and integrate new genomic data from the OMG Project.

2) Enable data sharing within the OMG project and provide access to Oz mammal data worldwide.

3) Give access to data processing, analysis, and visualization tools, and integrate with external resources like the Atlas of Living Australia.

AB3ACBS 2016: EMBL Australia Bioinformatics Resource

The EMBL Australia Bioinformatics Resource (EMBL-ABR) is a distributed national research infrastructure that provides bioinformatics support to life science researchers in Australia. It has a hub-and-nodes structure, with the hub hosted at the Victorian Life Science Computation Initiative at the University of Melbourne and 10 nodes located across Australian institutions. EMBL-ABR aims to increase Australia's capacity for bioinformatics research and data science, provide training in bioinformatics, and enable participation in international collaborations.

Apostila ISAK (ISAK handout)

International Standards for Anthropometric Assessment

International Society for the Advancement of Kinanthropometry

Big data, small data, data papers - short statement for "BDebate on Biomedici...

Susanna-Assunta Sansone

My short statement on the (close) debate on Big Data: http://www.bdebate.org/en/forum/big-data-biomedicine-challenges-and-opportunities

Managing Big Data - Berlin, July 9-10, 201.

Susanna-Assunta Sansone is a data consultant and honorary academic editor who works on several projects related to making data FAIR (Findable, Accessible, Interoperable, Reusable). She is the associate director of Scientific Data, a peer-reviewed journal focused on publishing data descriptors to describe and provide access to scientifically valuable datasets. The goal of Scientific Data is to help promote open science and data reuse by publishing structured metadata and narratives about datasets alongside traditional research articles.

FAIR, FAIRsharing, FAIR Cookbook and ELIXIR - Sansone SA - Boston 2024

FAIR metrics task force https://doi.org/10.5281/zenodo.7463421

FAIRsharing https://fairsharing.org

FAIR Cookbook https://faircookbook.elixir-europe.org

ELIXIR https://elixir-europe.org/

FAIRsharing-Standards-4-GSC-Aug23.pdf

This document summarizes a presentation given by Susanna Sansone at the GSC 23rd meeting education day in Bangkok, Thailand on August 7, 2023. The presentation discussed standards across life sciences, including definitions of different types of standards and over 1,600 identified standards. It covered standard organizations and grassroots groups, as well as the FAIRsharing database which catalogs over 2,885 standards and databases and aims to promote their use and value across research.

More from Susanna-Assunta Sansone

FAIR-4-GSC-Sansone-Aug23.pdf

FAIR principle, FAIR Cookbook and FAIRsharing: https://genomicsstandardsconsortium.github.io/GSC23-Bangkok

FAIRsharing & FAIRcookbook at RDA 2023

The document on the FAIRsharing journey in RDA discusses:

1) FAIRsharing's growth and involvement with RDA since 2011, including its Working Group established in 2015 to curate standards, databases, and policies to promote FAIR data.

2) FAIRsharing's current activities and impact, such as its registry of over 4,000 records from many disciplines and usage in various tools and services.

3) Opportunities for further engagement with RDA, such as leveraging their expertise for contributions to the FAIR Cookbook, an open resource providing technical recipes for applying FAIR principles to life science data.

NFDI Physical Sciences Colloquium - FAIR

Overview of FAIR, status and next steps, also highlights of FAIRsharing, FAIR Cookbook in the ELIXIR context

Metadata Standards

Overview of metadata standards, and of how FAIRsharing and the FAIR Cookbook help with selecting and using them. Presentation to the EHP Workshop "What is metadata? Common standards and properties", November 9, 2022: https://ephconference.eu/pre-conference-programme-441

FAIRcookbook: GSRS22-Singapore

Pharmaceutical companies and academia are joining forces to make data FAIR (Findable, Accessible, Interoperable, and Reusable) through the development of the FAIR Cookbook. The FAIR Cookbook provides a growing collection of over 70 recipes that give step-by-step guidance on improving the FAIRness of different data types through the use of tools, technologies, and best practices. It aims to provide practical examples and guidelines to support researchers, data managers, and others in managing data according to FAIR principles. The FAIR Cookbook is an open, community-developed resource overseen by an editorial board, with contributions from nearly 100 life sciences professionals.

FAIR Cookbook

Presentation of the FAIR Cookbook to the USA FDA/NCTR MAQC Society (https://themaqc.org) event: https://www.fda.gov/media/161555/download

FAIR, community standards and data FAIRification: components and recipes

Overview of FAIR, FAIRsharing and the FAIR Cookbook at the ATI event on Knowledge Graphs: https://github.com/turing-knowledge-graphs/meet-ups/blob/main/symposium-2022.md

FAIRsharing and the FAIR Cookbook

Presentation to the Edinburgh Open Research Conference, May 27, 2022: https://edopenresearch.com/edinburghopenresearchconference/

FAIRsharing for EOSC

Overview of FAIRsharing and its role and onboarding on the EOSC Portal, at the EOSC Provider Days: https://eoscfuture.eu/eventsfuture/provider-days

FAIR: standards and services

Overview of standards and FAIR, FAIRsharing and the FAIR Cookbook, presented to the Predictive Epigenetics PEP-NET training network, 1 April 2022.

FAIRification is a Team Sport: FAIRsharing and the FAIR Cookbook

Overview of ELIXIR and focus on FAIRsharing and the FAIR Cookbook for the USA AR-BIC 2022 8th Annual Conference.

FAIRsharing: what we do for policies

Presentation to the EOSC workshop on policies (https://eoscfuture.eu/eventsfuture/monitoring-eosc-readiness-fair-data-policies) on what FAIRsharing does for policies, including providing registration, discovery, flexible and clearer descriptions, relationships, machine readability and comparability.

FAIRsharing: how we assist with FAIRness

The document summarizes how FAIRsharing assists others with promoting FAIR data principles without directly assessing FAIRness compliance. It does this by (1) providing a lookup service for standards and repositories via its API, (2) serving as a registry for FAIRness tests and indicators to make them discoverable, and (3) enabling communities to create profiles declaring which standards and repositories they use. The document also outlines FAIRsharing's operations, advisory boards, and future plans to further support assessment and tracking of FAIRness improvements over time.
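The lookup and community-profile ideas described above can be illustrated with a small in-memory sketch. It does not model the real FAIRsharing API or schema: `Record`, `CommunityProfile`, `lookup`, `declared_by_kind` and the record identifiers are all hypothetical, and the example only shows how a profile that declares standards and repositories can be resolved against a registry.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass(frozen=True)
class Record:
    """Toy stand-in for a registry record (not the real FAIRsharing schema)."""
    record_id: str
    name: str
    kind: str  # e.g. "standard" or "repository"


@dataclass
class CommunityProfile:
    """A community's declaration of the records (standards, repositories) it uses."""
    community: str
    declared: set[str] = field(default_factory=set)  # hypothetical record IDs


def lookup(registry: dict[str, Record], record_id: str) -> Record | None:
    """Return the registered record for an identifier, or None if it is unknown."""
    return registry.get(record_id)


def declared_by_kind(profile: CommunityProfile,
                     registry: dict[str, Record], kind: str) -> list[str]:
    """Sorted names of declared records of one kind; unregistered IDs are skipped."""
    names = []
    for record_id in profile.declared:
        record = lookup(registry, record_id)
        if record is not None and record.kind == kind:
            names.append(record.name)
    return sorted(names)


# Illustrative data only; the identifiers are made up.
registry = {
    "std-1": Record("std-1", "MIAME", "standard"),
    "std-2": Record("std-2", "ISA-Tab", "standard"),
    "repo-1": Record("repo-1", "ArrayExpress", "repository"),
}
profile = CommunityProfile("example-community", {"std-1", "repo-1", "std-unknown"})
print(declared_by_kind(profile, registry, "standard"))
```

Skipping unregistered identifiers, rather than failing, is one possible design choice: it lets a profile be checked against the registry and flagged for curation instead of being rejected.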

ELIXIR FAIR Activities - Exemplars

ELIXIR is a European infrastructure that brings together life science resources from across Europe. It offers databases, tools, computing capabilities, and training opportunities. ELIXIR nodes provide these services and connect national data infrastructures. ELIXIR communities connect infrastructure experts to drive service developments. ELIXIR is funded through a mixed model including public sources. It works to sustain important biological data resources and make data FAIR through recommended standards and interoperability resources. ELIXIR also aims to develop a sustainable tools ecosystem and provides training through its portal.

FAIRsharing - focus on standards and new features

Presentation to the EC Workshop on Maximizing investments in health research: FAIR data for a coordinated COVID-19 response. Workshop III, November 8, 2021.

FAIR data and standards for a coordinated COVID-19 response

Presentation to the EC Workshop on Maximizing investments in health research: FAIR data for a coordinated COVID-19 response. Workshop I, October 11, 2021.

FAIRsharing poster

The FAIRsharing poster, as presented at the ELIXIR-UK Node and the UK Conference of Bioinformatics and Computational Biology 2021: https://www.earlham.ac.uk/uk-conference-bioinformatics-and-computational-biology-21

The FAIR Cookbook poster

The FAIR Cookbook poster, as presented at the UK Conference of Bioinformatics and Computational Biology 2021: https://www.earlham.ac.uk/uk-conference-bioinformatics-and-computational-biology-21

BioSharing WG - ELIXIR IG - RDA Plenary 7, Tokyo, March 2016

- 5. 193, 85, 346. In the life sciences there are >600 content standards, e.g. reporting guidelines (MIAME, MIAPA, MIRIAM, MIQAS, MIX, MIGEN, ARRIVE, MIAPE, MIASE, MIQE, MISFISHIE, REMARK, CONSORT), models/formats (MAGE-Tab, GCDML, SRAxml, SOFT, FASTA, DICOM, mzML, SBRML, SEDML, GelML, ISA-Tab, CML, MITAB) and terminology artifacts (AAO, ChEBI, OBI, PATO, ENVO, MOD, BTO, IDO, TEDDY, PRO, XAO, DO, VO). Databases and tools implementing standards; also training material on and around standards.

- 6. Community-developed content standards: de jure and de facto, from grass-roots groups to standard organizations (e.g. the Nanotechnology Working Group). • To structure, enrich and report the description of the datasets and the experimental context under which they were produced.

- 9. Mapping the landscape of ‘standards’ in the life sciences: 1,379 records and growing.

- 10. Mapping the landscape of ‘standards’ in the life sciences: a web-based, curated and searchable registry ensuring that standards and databases are registered, informative and discoverable; monitoring the development and evolution of standards, their use in databases, and the adoption of both in data policies. 1,379 records and growing.
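As a rough sketch of the registry functions listed on this slide (registration, discoverability, and monitoring use in databases and adoption in policies), the toy class below keeps standards alongside the databases and policies linked to them. `StandardsRegistry` and its methods are hypothetical and do not reflect the actual BioSharing data model; the policy name in the example is made up.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class StandardsRegistry:
    """Toy registry: standards plus the databases and policies linked to them."""
    descriptions: dict[str, str] = field(default_factory=dict)
    implemented_by: dict[str, set[str]] = field(default_factory=dict)
    recommended_by: dict[str, set[str]] = field(default_factory=dict)

    def register(self, name: str, description: str) -> None:
        self.descriptions[name] = description
        self.implemented_by.setdefault(name, set())
        self.recommended_by.setdefault(name, set())

    def link_database(self, standard: str, database: str) -> None:
        # Only registered standards can be linked, so every link points at a described record.
        if standard not in self.descriptions:
            raise KeyError(f"unregistered standard: {standard}")
        self.implemented_by[standard].add(database)

    def link_policy(self, standard: str, policy: str) -> None:
        if standard not in self.descriptions:
            raise KeyError(f"unregistered standard: {standard}")
        self.recommended_by[standard].add(policy)

    def search(self, term: str) -> list[str]:
        """Case-insensitive match on name or description, for discoverability."""
        t = term.lower()
        return sorted(n for n, d in self.descriptions.items()
                      if t in n.lower() or t in d.lower())

    def adoption(self, standard: str) -> tuple[int, int]:
        """(number of implementing databases, number of recommending policies)."""
        return (len(self.implemented_by[standard]), len(self.recommended_by[standard]))


reg = StandardsRegistry()
reg.register("MIAME", "Minimum information about a microarray experiment")
reg.register("ISA-Tab", "Tabular format for investigation, study and assay metadata")
reg.link_database("MIAME", "ArrayExpress")
reg.link_policy("MIAME", "Example journal policy")  # hypothetical policy
print(reg.search("microarray"), reg.adoption("MIAME"))
```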

- 11. The International Conference on Systems Biology (ICSB), 22-28 August 2008; Susanna-Assunta Sansone; www.ebi.ac.uk/net-project. Tracking evolution, e.g. deprecations and substitutions.
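Tracking deprecations and substitutions amounts to keeping a "replaced by" link on each deprecated record and following those links to the current one. The sketch below assumes a simple mapping from a deprecated record to its substitute; the record names are placeholders, not real standards, and the cycle check guards against inconsistent curation.

```python
def resolve_current(name: str, replaced_by: dict[str, str]) -> str:
    """Follow deprecation/substitution links until reaching a non-deprecated record.

    `replaced_by` maps a deprecated record to its substitute.
    Raises ValueError if the links form a cycle.
    """
    seen = {name}
    current = name
    while current in replaced_by:
        current = replaced_by[current]
        if current in seen:
            raise ValueError(f"substitution cycle involving {current!r}")
        seen.add(current)
    return current


# Hypothetical records: names are placeholders, not real standards.
replaced_by = {"format-a-v1": "format-a-v2", "format-a-v2": "format-b"}
print(resolve_current("format-a-v1", replaced_by))
```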

- 12. Cross-linking standards to standards and databases: a model/format formalizing a reporting guideline (-->), and a reporting guideline used by a model/format (<--). We link (descriptions of) standards to related standards and to the databases implementing them.
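The two-way arrows on this slide suggest storing every link together with its inverse, so a reporting guideline can list the formats that formalize it and a format can list the databases implementing it. This is a minimal sketch with hypothetical relation names; the MAGE-Tab/MIAME/ArrayExpress links are only an illustration of the pattern.

```python
from collections import defaultdict

# Hypothetical relation names, each paired with its inverse so that every link
# is navigable from both ends.
INVERSE = {
    "formalizes": "used_by",          # model/format -> reporting guideline
    "used_by": "formalizes",          # reporting guideline -> model/format
    "implements": "implemented_by",   # database -> standard
    "implemented_by": "implements",   # standard -> database
}


class LinkGraph:
    def __init__(self) -> None:
        # Maps (node, relation) to the set of related nodes.
        self._edges = defaultdict(set)

    def link(self, source: str, relation: str, target: str) -> None:
        """Record a link and its inverse in one step."""
        if relation not in INVERSE:
            raise ValueError(f"unknown relation: {relation}")
        self._edges[(source, relation)].add(target)
        self._edges[(target, INVERSE[relation])].add(source)

    def related(self, node: str, relation: str) -> list[str]:
        """Sorted nodes reachable from `node` via `relation` (empty if none)."""
        return sorted(self._edges.get((node, relation), set()))


g = LinkGraph()
g.link("MAGE-Tab", "formalizes", "MIAME")
g.link("ArrayExpress", "implements", "MAGE-Tab")
print(g.related("MIAME", "used_by"), g.related("MAGE-Tab", "implemented_by"))
```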

- 13. We conducted a 10-question survey to assess users' needs: the 532 replies will inform the activities of the BioSharing WG and the further development of the registry. Activities in collaboration with … and …. The replies to the other questions tell us about the users': • level of familiarity with standards • type of standards they want to see in the registry • descriptors about standards to help them make decisions • desired functionality of the registry • preferred indicator of maturity, adoption and use of standards

- 14. From simple and advanced search interfaces to… the recommender system, powered by curated descriptions of each standard and database record, and their relations.
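The slide does not say how the recommender ranks results, so the following is only a plausible sketch under stated assumptions: each curated record carries descriptive tags and the set of databases implementing it, candidates are ranked by how many query tags they match, and ties are broken by database adoption. `StandardRecord`, `recommend` and the example records are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class StandardRecord:
    name: str
    tags: frozenset[str]       # curated descriptors, e.g. domain or data type
    databases: frozenset[str]  # databases implementing the standard


def recommend(records: list[StandardRecord], query_tags: set[str],
              top_n: int = 3) -> list[str]:
    """Rank records by tag overlap, then by number of implementing databases."""
    query = {t.lower() for t in query_tags}
    scored = []
    for rec in records:
        overlap = len(query & {t.lower() for t in rec.tags})
        if overlap:
            scored.append((overlap, len(rec.databases), rec.name))
    # Highest overlap first, then most-adopted, then alphabetical for stability.
    scored.sort(key=lambda s: (-s[0], -s[1], s[2]))
    return [name for _, _, name in scored[:top_n]]


records = [
    StandardRecord("Guideline X", frozenset({"transcriptomics", "microarray"}),
                   frozenset({"DB1", "DB2"})),
    StandardRecord("Format Y", frozenset({"transcriptomics"}),
                   frozenset({"DB1", "DB2", "DB3"})),
    StandardRecord("Ontology Z", frozenset({"anatomy"}), frozenset()),
]
print(recommend(records, {"Transcriptomics", "microarray"}))
```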

- 15. To inform the creation of templates that describe datasets according to community standards, while rendering the standards invisible to the researchers: create standards-derived metadata elements. High-level information about the standards, their relations and use by databases; RDF or JSON representations of the standards-derived elements; elements and their provenance served to
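One way to picture a JSON representation of standards-derived elements with their provenance is sketched below. The field names, the `example-standard` source and the record identifier are all hypothetical; the point is that provenance travels with each element while a researcher-facing template shows only the labels, keeping the standards invisible.

```python
from __future__ import annotations

import json
from dataclasses import dataclass


@dataclass
class MetadataElement:
    """A standards-derived metadata element; field names are hypothetical."""
    label: str             # what the researcher sees in a template
    definition: str
    required: bool
    source_standard: str   # provenance: the standard the element comes from
    source_record: str     # provenance: a made-up registry record identifier


def to_json(elements: list[MetadataElement]) -> str:
    """Serialize elements with provenance nested under its own key."""
    payload = [
        {
            "label": e.label,
            "definition": e.definition,
            "required": e.required,
            "provenance": {"standard": e.source_standard, "record": e.source_record},
        }
        for e in elements
    ]
    return json.dumps(payload, indent=2, sort_keys=True)


def template_fields(elements: list[MetadataElement]) -> list[str]:
    """What a researcher-facing template shows: labels only, standards hidden."""
    return [e.label for e in elements]


elements = [
    MetadataElement("organism", "Organism from which the sample was taken",
                    True, "example-standard", "example-record-1"),
]
print(to_json(elements))
print(template_fields(elements))
```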