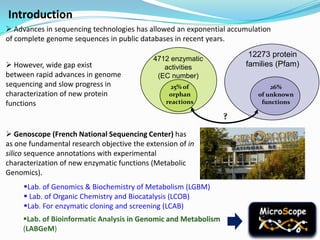

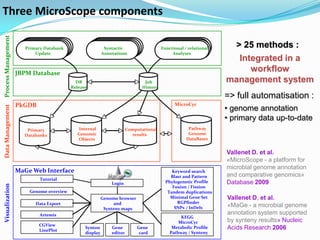

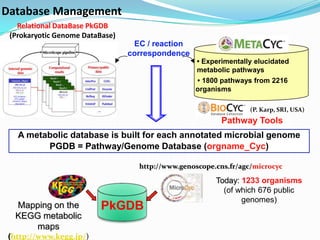

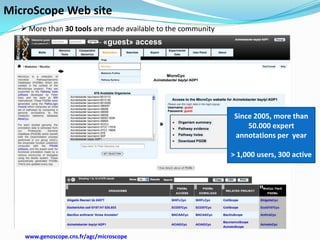

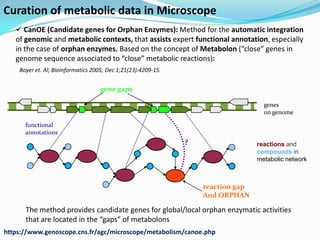

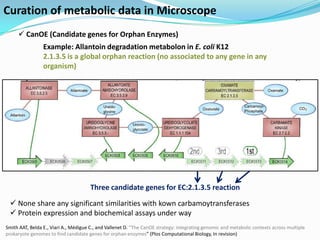

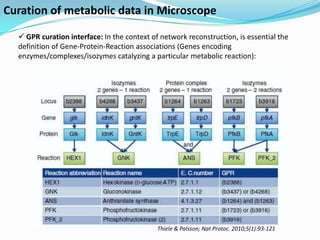

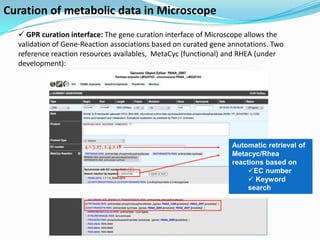

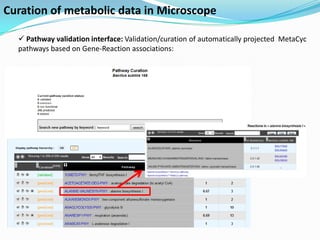

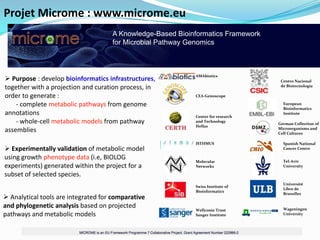

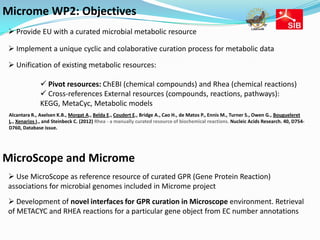

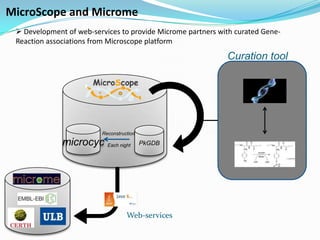

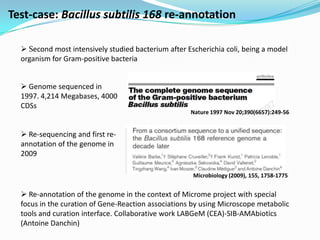

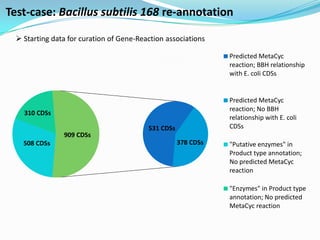

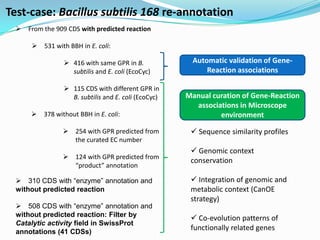

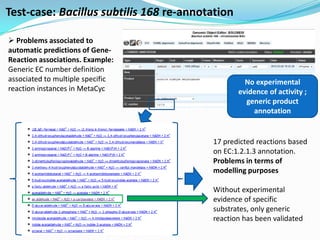

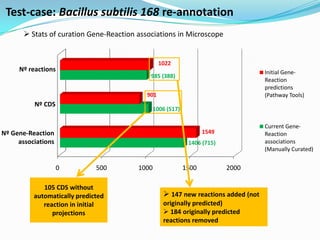

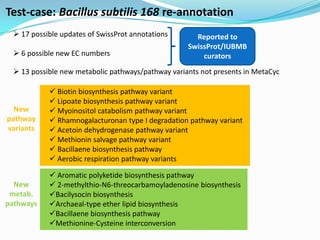

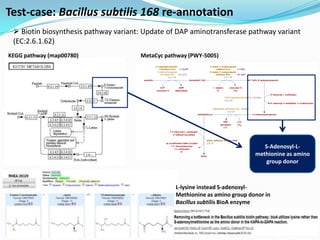

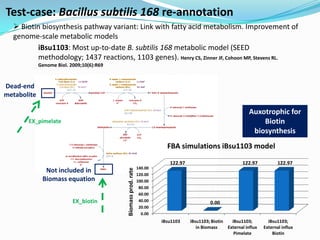

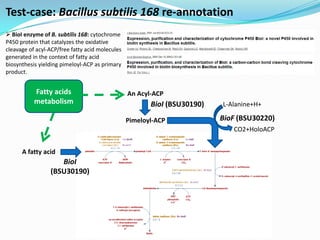

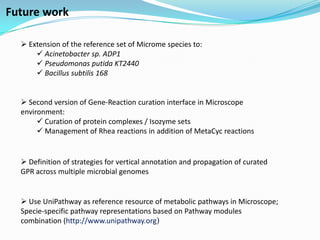

The document outlines the efforts of the LabGeM team at Genoscope to improve genomic and metabolic annotation using bioinformatics tools and methods. It highlights gaps in the characterization of orphan proteins and enzymes, the role of several laboratories in extending metabolic genomics, and the development of systems that integrate data to support the discovery of enzyme functions. The CanOE strategy and the curation of metabolic data, such as gene-reaction associations, are presented as central to mapping and validating metabolic pathways across many microbial genomes.