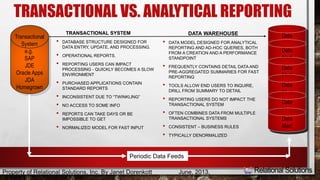

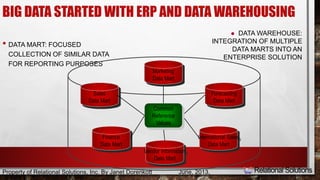

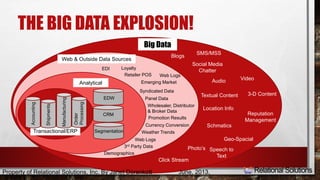

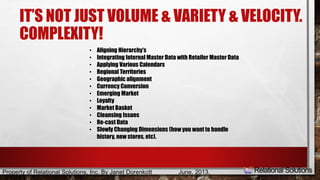

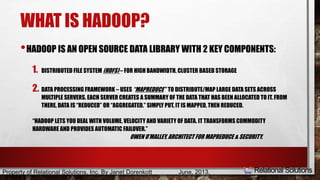

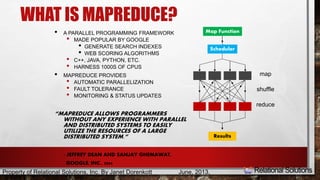

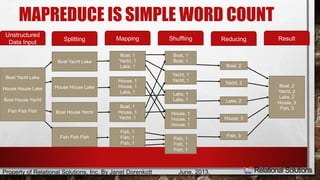

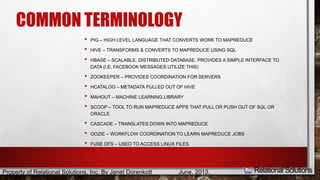

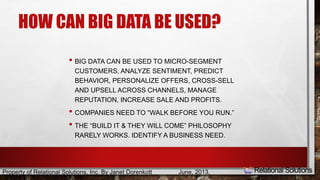

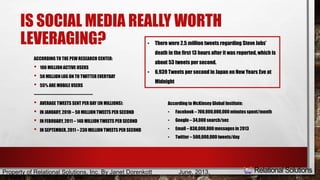

This presentation by Janet Dorenkott outlines the role of big data in improving business operations, particularly in data warehousing, integration, and business intelligence solutions. It covers the complexities of data handling, including the distinction between transactional and analytical reporting, and the significance of leveraging social media for customer engagement. It concludes with practical applications of big data and emphasizes drawing on various data sources to drive insights and decision-making.