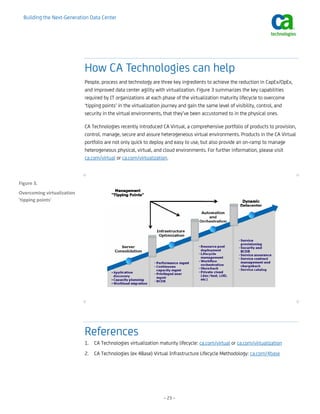

This white paper discusses how to build the next-generation data center by advancing virtualization maturity. It outlines a four-stage model of virtualization maturity: server consolidation, infrastructure optimization, automation and orchestration, and the dynamic data center. For each stage, it discusses the challenges and provides a sample implementation plan. The target audience is IT directors and infrastructure leaders who want to advance their organizations along the virtualization maturity lifecycle. The paper offers guidance on overcoming roadblocks at each stage through the right combination of people, processes, and technologies.