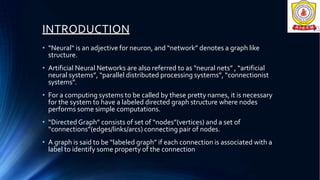

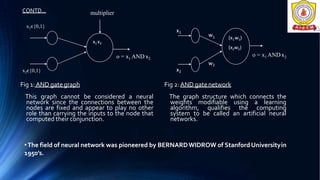

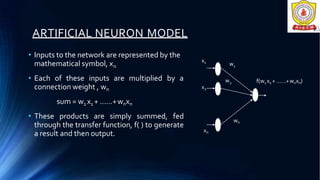

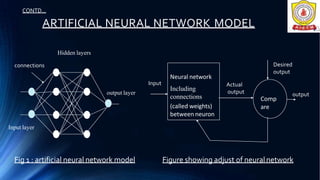

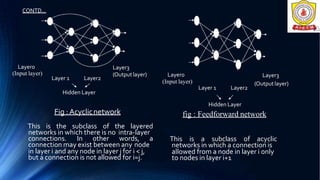

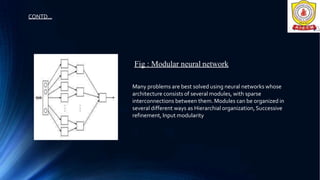

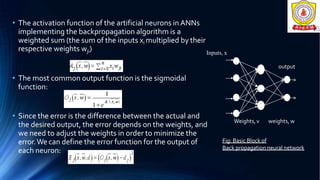

This document introduces artificial neural networks. It covers biological neurons and how artificial neurons are modeled on them, then describes the key components of a neural network: the network architecture, the learning approaches, and the backpropagation algorithm used for supervised learning. Applications and advantages of neural networks are also mentioned. Neural networks are modeled after the human brain and learn from examples by adjusting the connection weights between nodes.
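
Learning by adjusting connection weights from examples can be illustrated with a single artificial neuron. The sketch below is not taken from the slides: the sigmoid activation, the squared-error loss, the learning rate, the epoch count, and the names `train_neuron` and `predict` are all assumptions chosen for illustration. It trains one neuron on the logical OR function with gradient descent, which is the one-layer special case of the weight update that backpropagation applies across a whole network.

```python
import math


def sigmoid(z):
    """Squash a weighted sum into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def predict(weights, bias, inputs):
    """Output of one artificial neuron: sigmoid of the weighted input sum."""
    z = sum(w * x for w, x in zip(weights, inputs)) + bias
    return sigmoid(z)


def train_neuron(examples, epochs=5000, lr=0.5):
    """Fit a single neuron by gradient descent on squared error.

    `epochs` and `lr` are illustrative values, not tuned settings.
    """
    weights = [0.0, 0.0]
    bias = 0.0
    for _ in range(epochs):
        for inputs, target in examples:
            out = predict(weights, bias, inputs)
            # Gradient of 0.5 * (out - target)**2 with respect to the
            # weighted sum z, using sigmoid'(z) = out * (1 - out).
            delta = (out - target) * out * (1.0 - out)
            # Move each weight against its gradient (delta * input).
            weights = [w - lr * delta * x for w, x in zip(weights, inputs)]
            bias -= lr * delta
    return weights, bias


# Training examples for logical OR: (inputs, expected output).
OR_EXAMPLES = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 1)]

weights, bias = train_neuron(OR_EXAMPLES)
for inputs, target in OR_EXAMPLES:
    print(inputs, target, round(predict(weights, bias, inputs), 3))
```

A single neuron can only separate classes with a straight line, so it handles OR but not XOR. Multi-layer networks overcome this, and backpropagation is what carries the error signal `delta` backward through the hidden layers.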