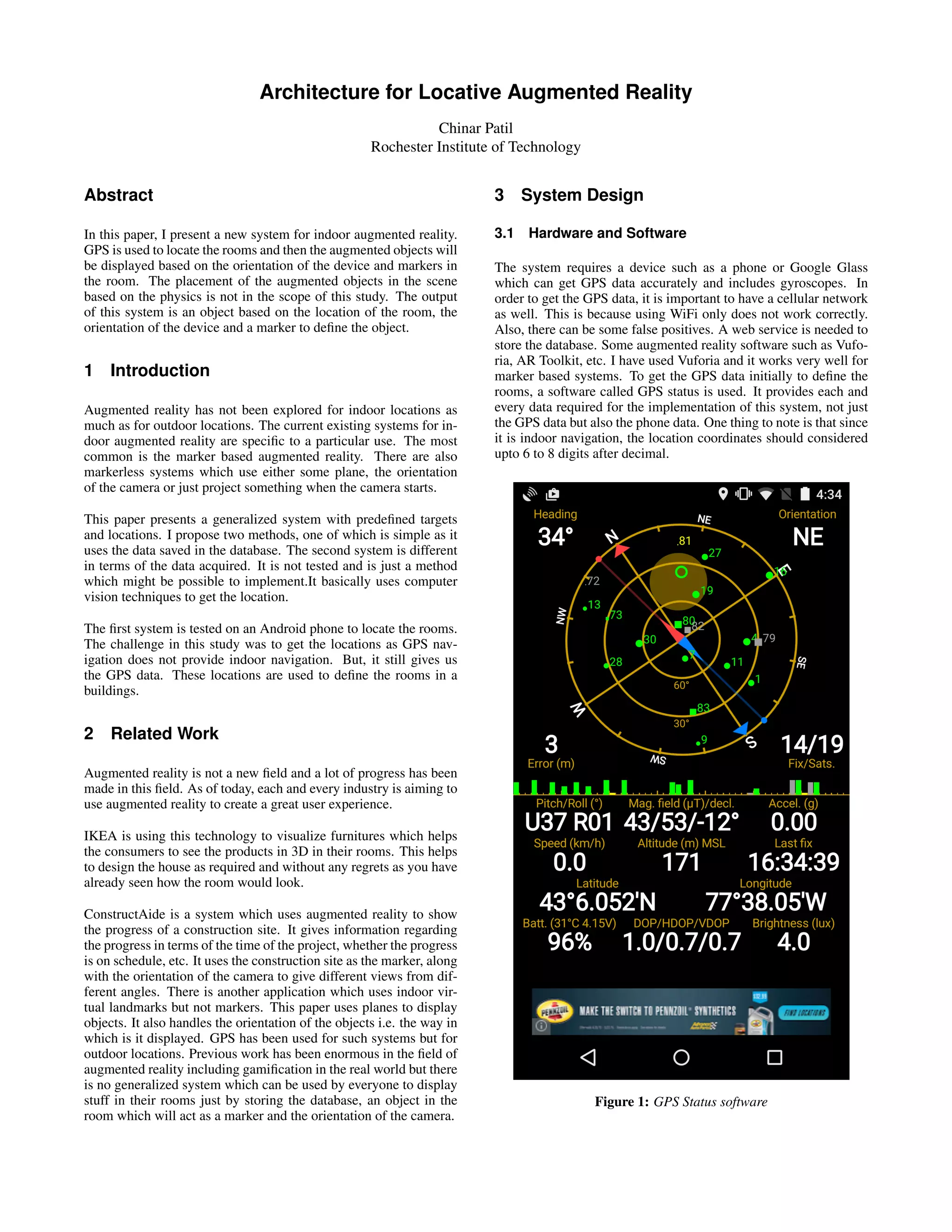

This document summarizes a system for indoor augmented reality built on locative technologies. The system uses GPS to identify which room of a building the device is in, then displays augmented objects according to the device's orientation and the markers placed in that room. Two methods are proposed: the first identifies rooms from previously stored GPS location data, and the second uses computer vision techniques to generate an indoor map and determine locations from it. The system is intended to let anyone augment the rooms of a building by storing the object and location data for each room.
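The first method (room lookup from stored GPS data, followed by orientation-based display) can be sketched as below. This is a minimal illustration under stated assumptions, not the system's actual implementation. It assumes each room is stored as a single latitude/longitude point, that each augmented object is stored as a compass bearing from that point, and that a 15 m match radius and a 60° camera field of view are reasonable defaults; both values are arbitrary. `Room`, `AugmentedObject`, `locate_room` and `visible_objects` are hypothetical names, and marker detection is not modelled.

```python
from __future__ import annotations

import math
from dataclasses import dataclass, field

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius in metres


@dataclass
class AugmentedObject:
    # Hypothetical record: an object anchored at a compass bearing
    # (degrees clockwise from north) as seen from the room's stored point.
    name: str
    bearing_deg: float


@dataclass
class Room:
    # Hypothetical record: one stored GPS fix per room plus its objects.
    name: str
    lat: float
    lon: float
    objects: list[AugmentedObject] = field(default_factory=list)


def haversine_m(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance in metres between two latitude/longitude points."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def locate_room(rooms: list[Room], lat: float, lon: float,
                max_dist_m: float = 15.0) -> Room | None:
    """Return the stored room nearest to the GPS fix, or None if none is within max_dist_m."""
    best = min(rooms, key=lambda r: haversine_m(lat, lon, r.lat, r.lon), default=None)
    if best is None or haversine_m(lat, lon, best.lat, best.lon) > max_dist_m:
        return None
    return best


def angle_diff_deg(a: float, b: float) -> float:
    """Smallest signed difference a - b in degrees, wrapped to [-180, 180)."""
    return (a - b + 180.0) % 360.0 - 180.0


def visible_objects(room: Room, heading_deg: float,
                    fov_deg: float = 60.0) -> list[AugmentedObject]:
    """Objects whose bearing lies inside the camera's horizontal field of view."""
    half = fov_deg / 2
    return [o for o in room.objects
            if abs(angle_diff_deg(o.bearing_deg, heading_deg)) <= half]


if __name__ == "__main__":
    # Made-up coordinates and objects, for illustration only.
    rooms = [
        Room("Lab 1", 54.0104, -2.7877,
             [AugmentedObject("poster", 90.0), AugmentedObject("model", 270.0)]),
        Room("Lab 2", 54.0110, -2.7877),
    ]
    room = locate_room(rooms, 54.01041, -2.78771)
    print(room.name)                                        # Lab 1
    print([o.name for o in visible_objects(room, 80.0)])    # ['poster']
```

Matching on the nearest stored fix with a distance cut-off, rather than the nearest fix alone, means a device outside every stored room receives no augmentation instead of the contents of a distant room. Because GPS accuracy often degrades indoors, a real deployment would likely need a larger radius or the marker and computer-vision cues the text describes.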

![References](https://image.slidesharecdn.com/9ae6edfd-dd1b-48bb-82a4-d464a567407e-150701213757-lva1-app6892/85/Architecture-for-Locative-Augmented-Reality-4-320.jpg)