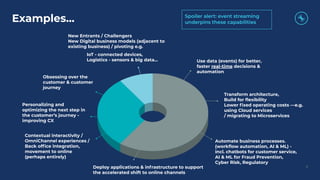

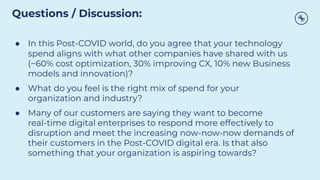

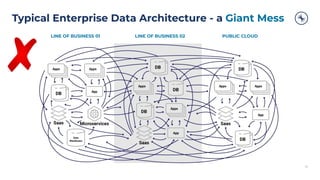

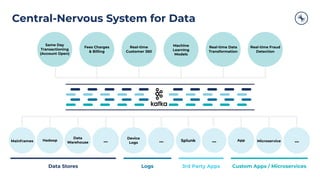

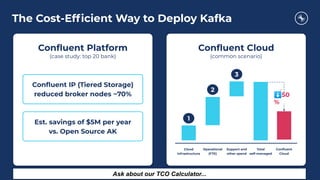

The document outlines the trends and challenges businesses faced in 2020, emphasizing the urgency of digital transformation and cost optimization amid the COVID-19 crisis. It discusses the adoption of event streaming as a core architecture for organizations aiming to become real-time digital enterprises, highlighting case studies from Walmart and Capital One. The conclusion encourages companies to consider their current technology investments and to modernize their data architecture in order to improve operational efficiency and customer experience.