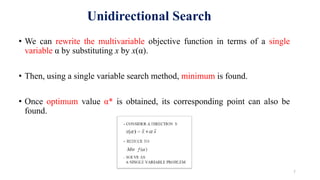

This document gives an overview of multi-variable optimization algorithms, which fall into two broad categories: direct search methods and gradient-based methods. Among the gradient-based methods, it describes Cauchy's steepest descent method, in which the search direction at each iteration is the negative of the gradient, the direction in which the objective function decreases most rapidly at the current point. Among the direct search methods, it introduces the simplex search method, which maintains a set of points and iteratively replaces them with better ones, using only function values and no gradients.
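To make the steepest descent idea concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not the document's own implementation: the test function `f`, its gradient `grad_f`, the starting point, the tolerances, and the backtracking (Armijo) step-size rule are all choices made for this example. Cauchy's method is usually described with a one-dimensional line search along the negative gradient, and backtracking stands in for that search here.

```python
import math


def f(x):
    # Hypothetical test function with its minimum at (1, -2).
    return (x[0] - 1.0) ** 2 + 2.0 * (x[1] + 2.0) ** 2


def grad_f(x):
    # Analytic gradient of f.
    return [2.0 * (x[0] - 1.0), 4.0 * (x[1] + 2.0)]


def steepest_descent(func, grad, x0, tol=1e-8, max_iter=10_000):
    """Minimize func by stepping along the negative gradient.

    The step size is chosen by backtracking: start at 1 and halve it
    until the sufficient-decrease (Armijo) condition holds.
    """
    x = list(x0)
    for _ in range(max_iter):
        g = grad(x)
        g_norm_sq = sum(gi * gi for gi in g)
        if math.sqrt(g_norm_sq) < tol:
            break  # gradient is (nearly) zero: stationary point reached

        fx = func(x)
        alpha = 1.0
        while alpha > 1e-16:
            # Search direction is -g, the direction of steepest descent.
            x_new = [xi - alpha * gi for xi, gi in zip(x, g)]
            if func(x_new) <= fx - 1e-4 * alpha * g_norm_sq:
                break  # sufficient decrease achieved
            alpha *= 0.5
        else:
            break  # no acceptable step found; stop at the current point
        x = x_new
    return x


x_min = steepest_descent(f, grad_f, [0.0, 0.0])
print(x_min, f(x_min))
```

On the direct-search side, SciPy's `scipy.optimize.minimize` with `method="Nelder-Mead"` provides a simplex-based routine that likewise needs only function values, which may be a convenient way to compare the two families on the same test function.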