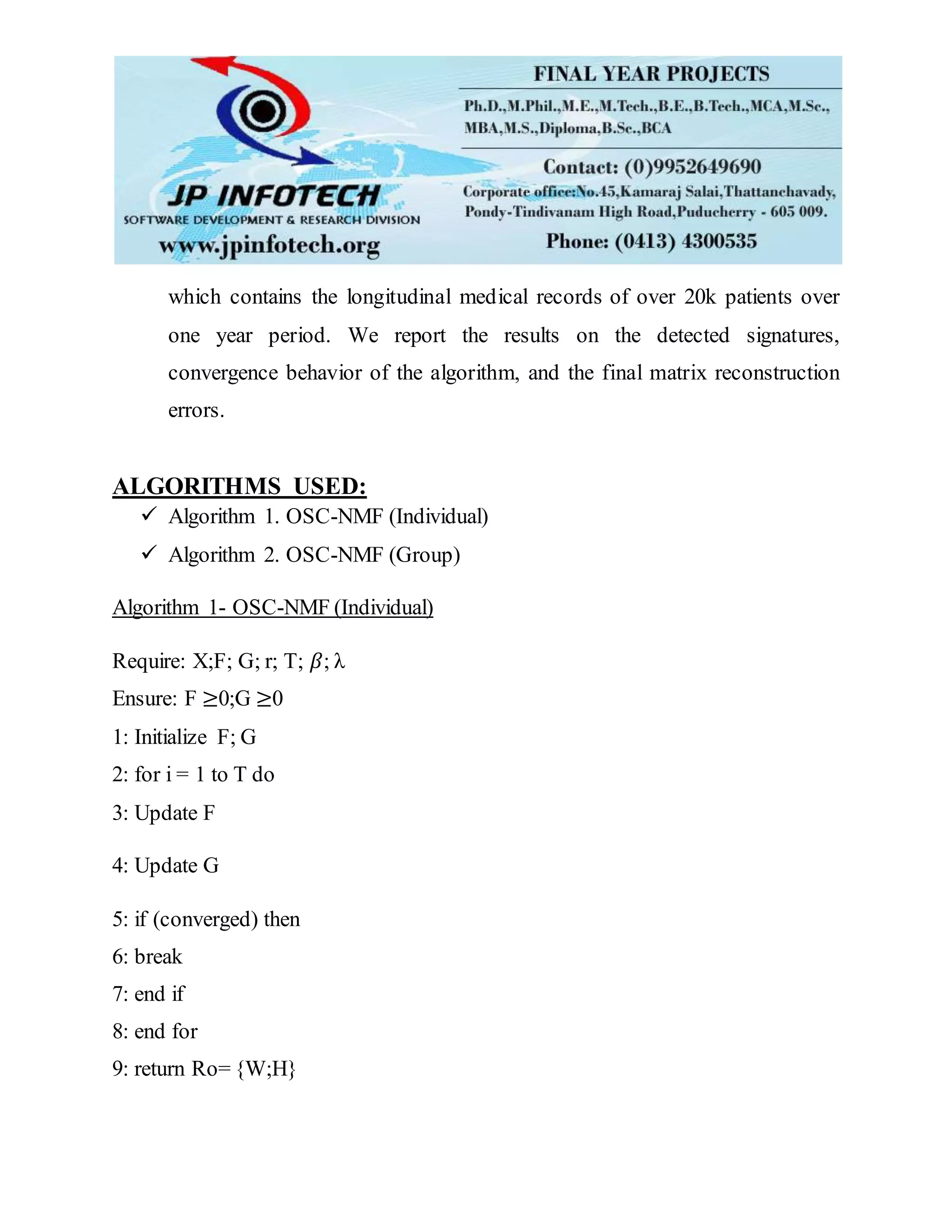

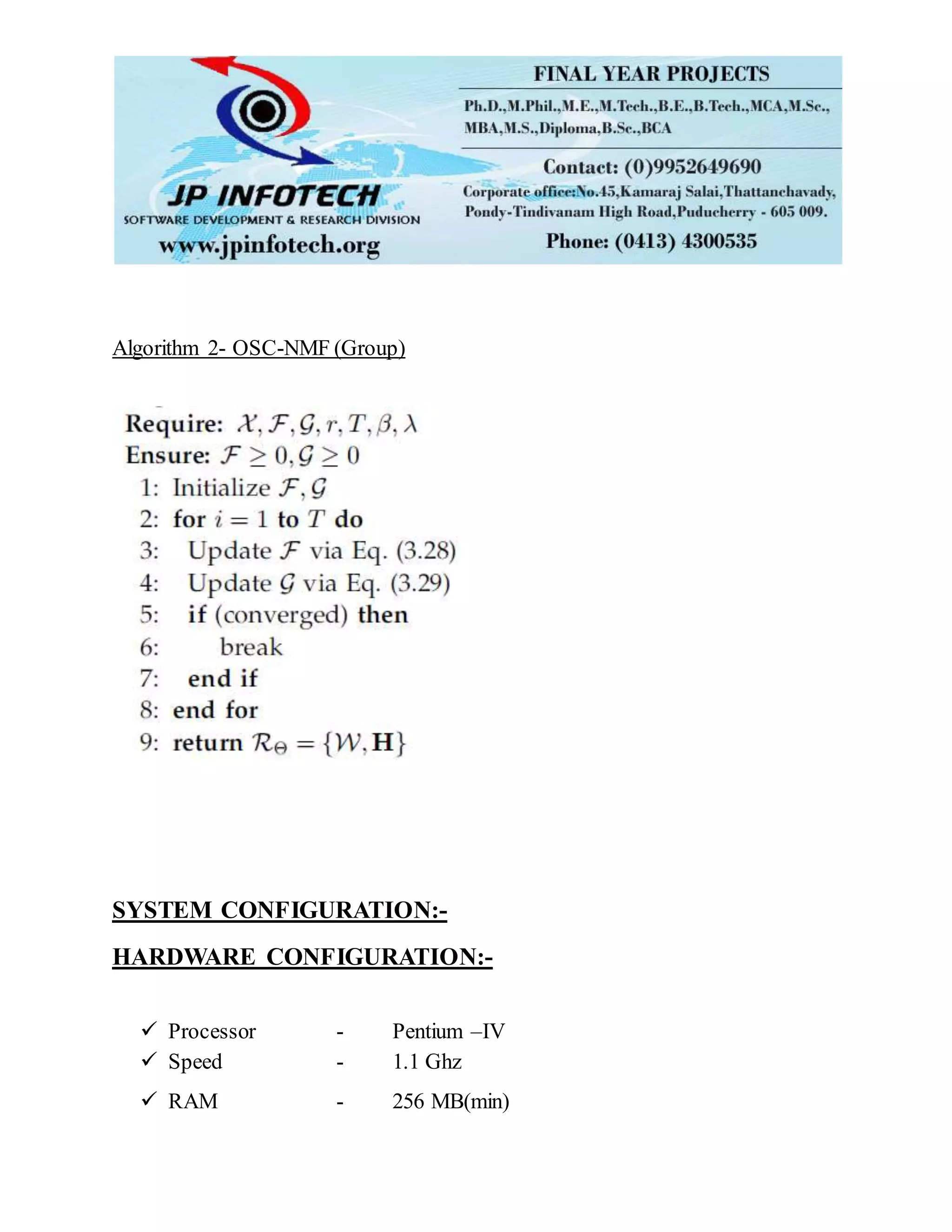

This paper proposes a novel temporal event matrix representation (TEMR) framework for mining temporal signatures from longitudinal event data. TEMR represents event data as a spatial-temporal matrix in which one dimension is the event type and the other is time. Latent signatures in the data are detected with a doubly sparse convolutional matrix approximation. The approach is validated on both synthetic data and electronic health record data, where it scales to large datasets and detects interpretable signatures.
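To make the representation concrete, the following is a minimal sketch, not the paper's implementation. It assumes events arrive as `(type_index, time_index)` pairs and that a signature is a small event-type-by-time-offset patch that recurs wherever an activation row is nonzero. The function names `build_event_matrix` and `reconstruct`, the array shapes, and the toy data are all hypothetical; the sparsity constraints and the fitting procedure are omitted entirely.

```python
import numpy as np

def build_event_matrix(events, n_types, n_times):
    """Build an event-type x time count matrix from (type_index, time_index) pairs."""
    X = np.zeros((n_types, n_times))
    for etype, t in events:
        X[etype, t] += 1.0
    return X

def reconstruct(signatures, activations, n_times):
    """Approximate the event matrix as a sum of signatures convolved along time.

    signatures:  array of shape (R, n_types, width), one patch per signature
    activations: array of shape (R, n_times), onset strengths per signature
    """
    n_sigs, n_types, _ = signatures.shape
    X_hat = np.zeros((n_types, n_times))
    for r in range(n_sigs):
        for e in range(n_types):
            # Full 1-D convolution has length n_times + width - 1;
            # keep only the part that falls inside the observation window.
            full = np.convolve(activations[r], signatures[r, e])
            X_hat[e] += full[:n_times]
    return X_hat

# Toy data (hypothetical): event type 0 is followed one step later by event type 1.
events = [(0, 1), (1, 2), (0, 4), (1, 5)]
X = build_event_matrix(events, n_types=2, n_times=6)

# One signature of width 2 encoding that pattern, active at times 1 and 4.
signatures = np.array([[[1.0, 0.0],
                        [0.0, 1.0]]])
activations = np.zeros((1, 6))
activations[0, [1, 4]] = 1.0

X_hat = reconstruct(signatures, activations, n_times=6)
residual = np.abs(X - X_hat).sum()
```

In this toy case the single signature and its two activations reproduce the event matrix, so `residual` is zero. A fitting procedure would search for signatures and activations that keep this residual small on real data while remaining sparse.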