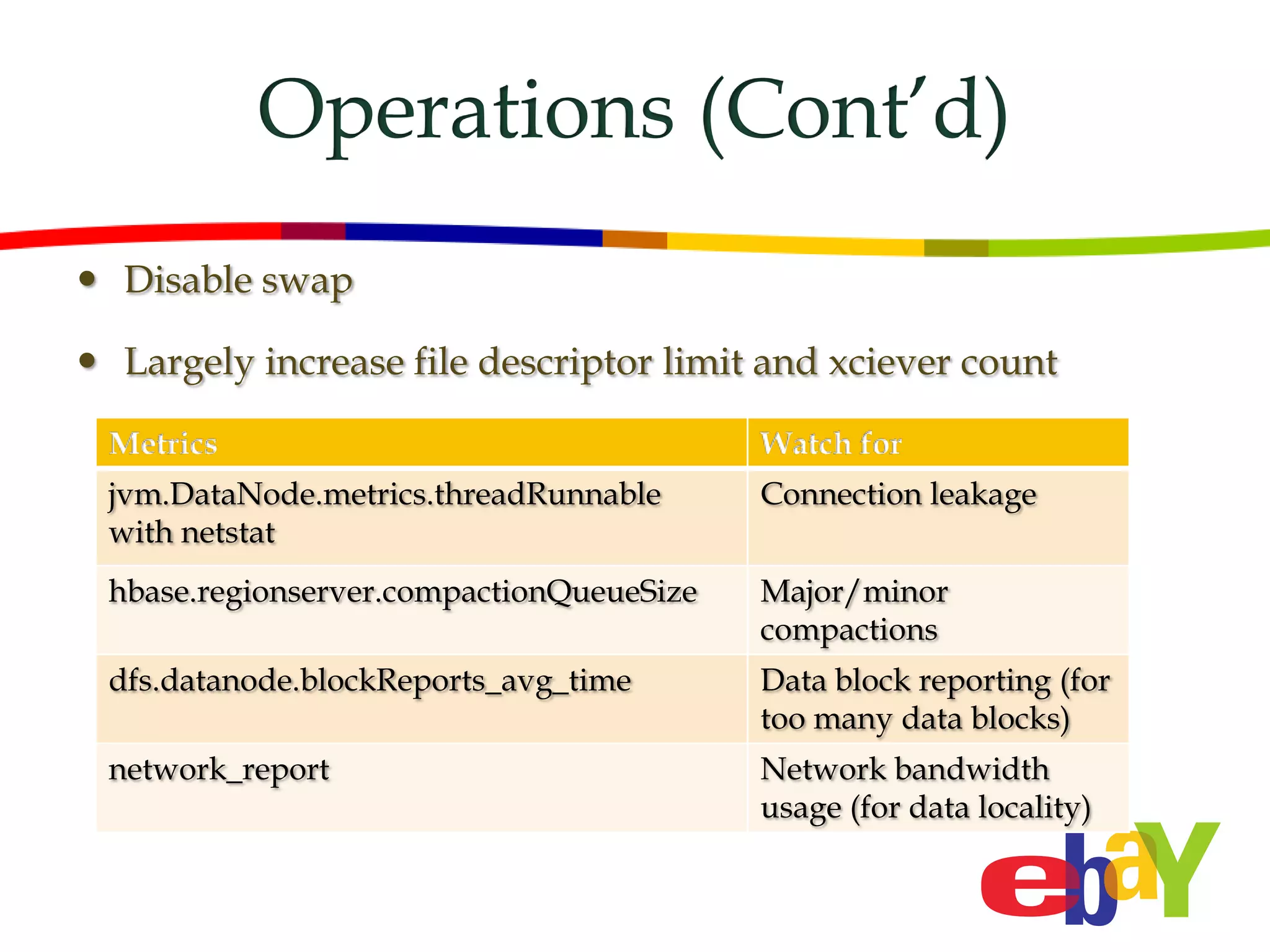

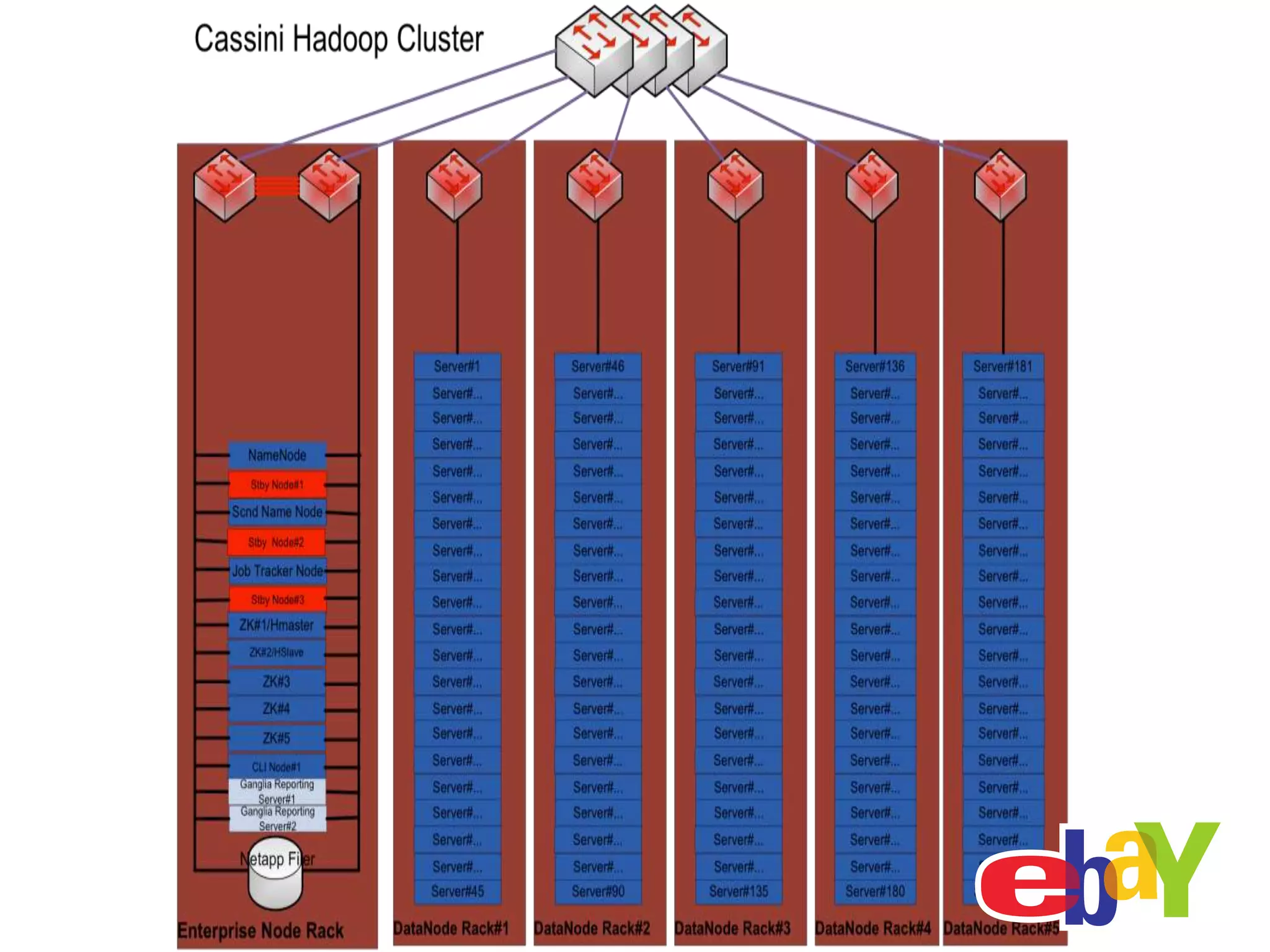

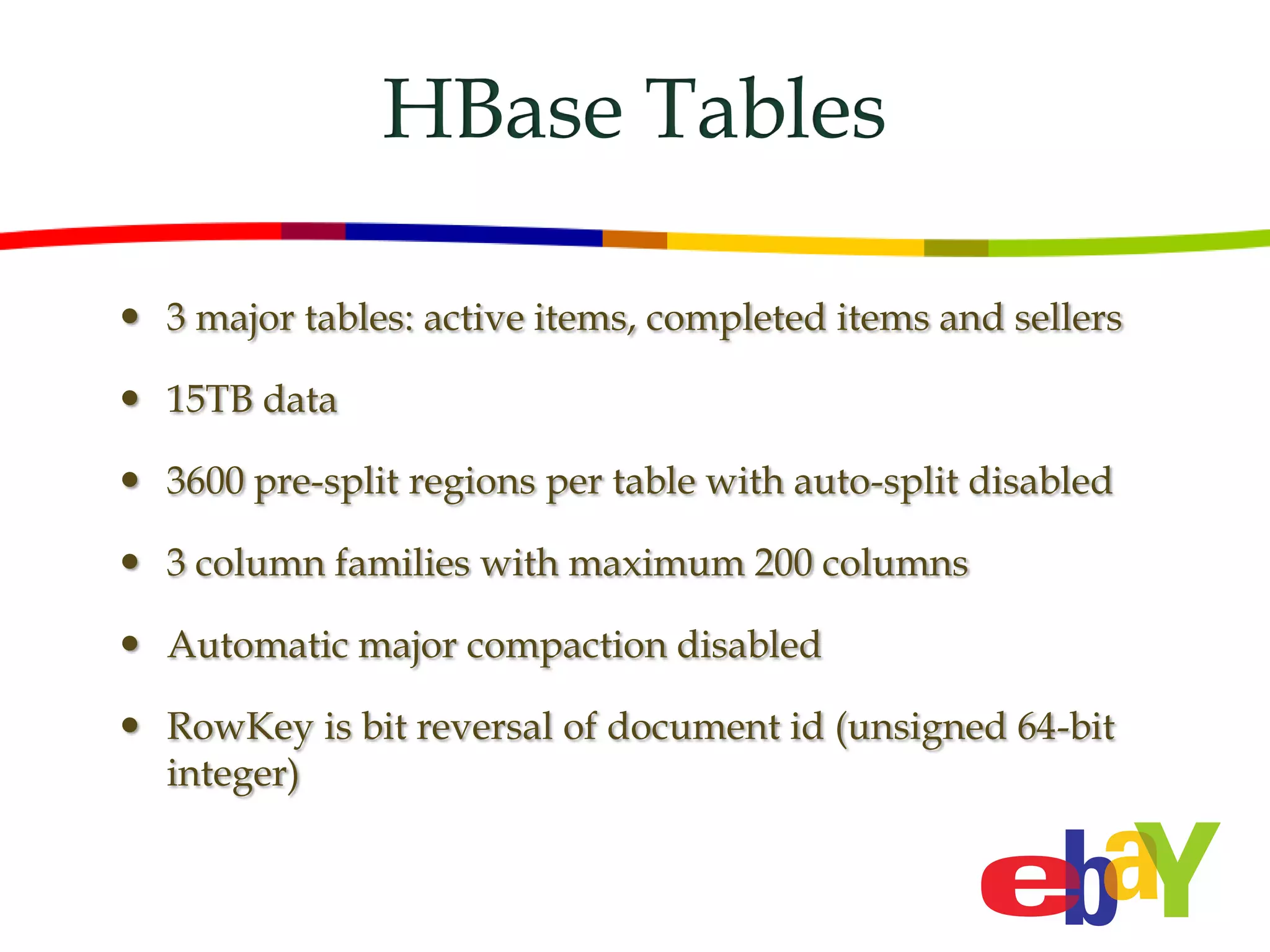

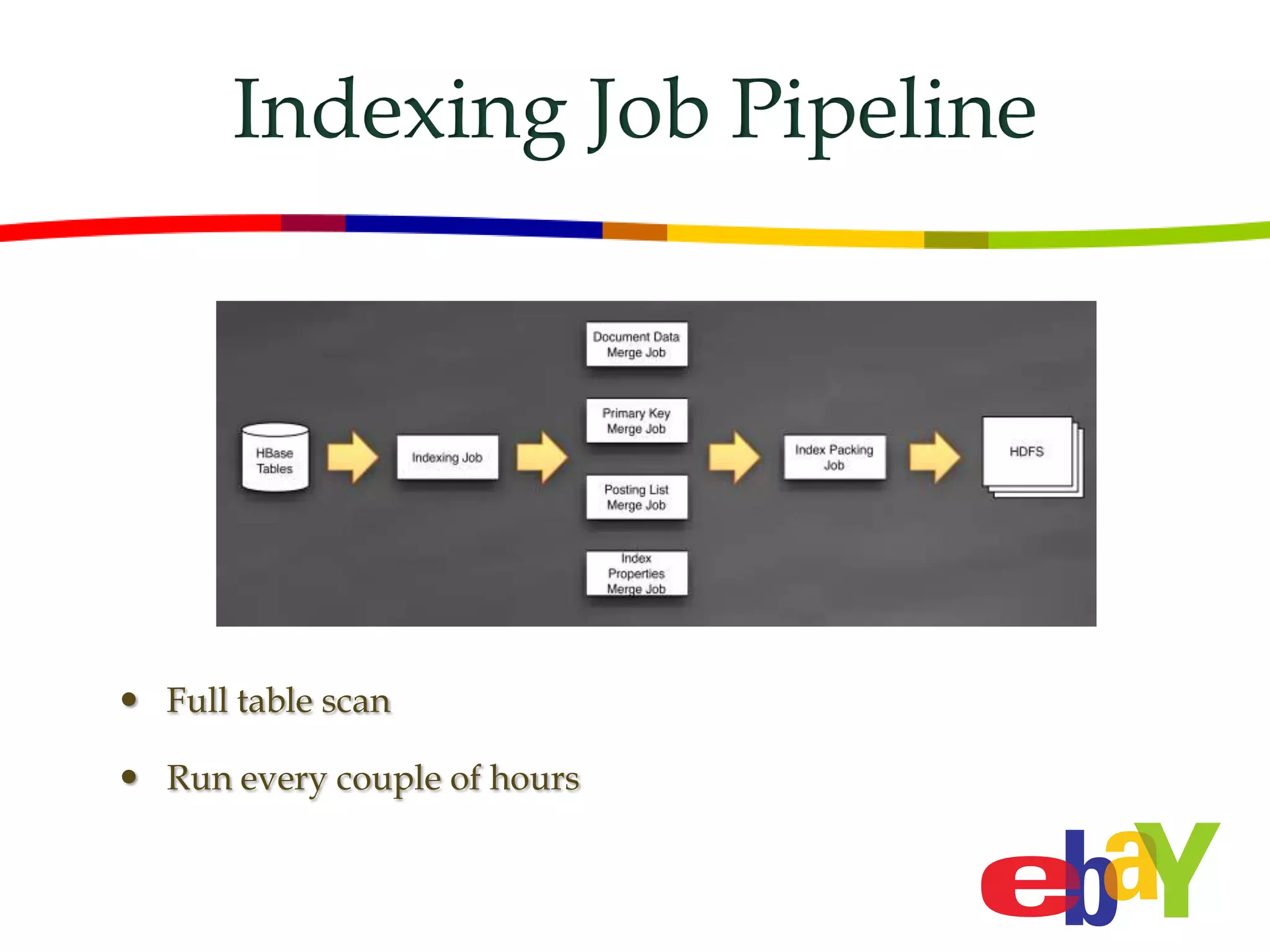

The document discusses the use of HBase in eBay's new search engine, Cassini, which manages massive data volumes and supports a high query load. It details the architecture, indexing processes, and data management practices, including the use of bulk loading and real-time updates for HBase tables. Additionally, it highlights operational metrics and monitoring strategies for the system, involving tools like Ganglia and Nagios.

![Operations

Monitoring

Ganglia

Nagios

OpenTSDB

Testing

Unit test and regression test

HBaseTestingUtility for unit test

Standalone Hbase for regression test (mvn verify)

Cluster level

Fault Injection Tests [HBASE-4925]

Region balancer

Manual major compaction](https://image.slidesharecdn.com/8hbasetheusecaseinebaycassini-120530150936-phpapp01/75/HBaseCon-2012-HBase-the-Use-Case-in-eBay-Cassini-11-2048.jpg)