This paper reviews various cooling technologies for computer products ranging from desktops to large servers, emphasizing the importance of effective heat removal for reliability and performance. It discusses internal and external cooling methods, including air-cooled heat sinks, liquid-cooled systems, and immersion cooling techniques, highlighting advancements and challenges in thermal management. The paper concludes with future challenges in improving cooling technologies to accommodate increasing power densities in computing systems.

![heat

sink, immersion cooling, impingement cooling, liquid cooling,

pool

boiling, refrigeration cooling, system cooling, thermal, thermal

management, water cooling.

I. INTRODUCTION

ELECTRONIC devices and equipment now permeate virtually

every aspect of our daily life. Among the most

ubiquitous of these is the electronic computer varying in size

from the handheld personal digital assistant to large-scale

mainframes or servers. In many instances a computer is imbedded

within some other device controlling its function and is not

even recognizable as such. The applications of computers vary

from games for entertainment to highly complex systems sup-

porting vital health, economic, scientific, and military

activities.

In a growing number of applications computer failure results

in a major disruption of vital services and can even have

life-threatening consequences. As a result, efforts to improve

the reliability of electronic computers are as important as

efforts to improve their speed and storage capacity.

Since the development of the first electronic digital computers

in the 1940s, the effective removal of heat has played a key role

in ensuring the reliable operation of successive generations of

computers. The Electronic Numerical Integrator and Computer

(ENIAC), dedicated in 1946, has been described as a “30 ton,

boxcar-sized machine requiring an array of industrial cooling

Manuscript received August 30, 2004.

The authors are with the IBM Corporation, Poughkeepsie, NY

12601 USA

(e-mail: [email protected]).](https://image.slidesharecdn.com/568ieeetransactionsondeviceandmaterialsreliabilityvol-221224072049-5bbc9e32/75/568-IEEE-TRANSACTIONS-ON-DEVICE-AND-MATERIALS-RELIABILITY-VOL-docx-2-2048.jpg)

![Digital Object Identifier 10.1109/TDMR.2004.840855

fans to remove the 140 kW dissipated from its 18 000 vacuum

tubes” [1]. Following ENIAC, most early digital computers used

vacuum-tube electronics and were cooled with forced air.

The invention of the transistor by Bardeen, Brattain, and

Shockley at Bell Laboratories in 1947 [2] foreshadowed the

development of generations of computers yet to come. As a

replacement for vacuum tubes, the miniature transistor gener-

ated less heat, was much more reliable, and promised lower

production costs. For a while it was thought that the use of

transistors would greatly reduce if not totally eliminate cooling

concerns. This thought was short-lived as packaging engineers

worked to improve computer speed and storage capacity by

packaging more and more transistors on printed circuit boards,

and then on ceramic substrates.

The trend toward higher packaging densities dramatically

gained momentum with the invention of the integrated cir-

cuit separately by Kilby at Texas Instruments and Noyce at

Fairchild Semiconductor in 1959 [2]. During the 1960s, small

scale and then medium scale integration (SSI and MSI) led

from one device per chip to hundreds of devices per chip. The

trend continued through the 1970s with the development of

large scale integration (LSI) technologies offering hundreds

to thousands of devices per chip, and then through the 1980s

with the development of very large scale integration (VLSI) technologies

offering thousands to tens of thousands of devices per chip.

This

trend continued with the introduction of the microprocessor

and continues to this day with chip makers projecting that a

microprocessor chip with a billion or more transistors will be a

reality before 2010.

In many instances the trend toward higher circuit packaging](https://image.slidesharecdn.com/568ieeetransactionsondeviceandmaterialsreliabilityvol-221224072049-5bbc9e32/75/568-IEEE-TRANSACTIONS-ON-DEVICE-AND-MATERIALS-RELIABILITY-VOL-docx-3-2048.jpg)

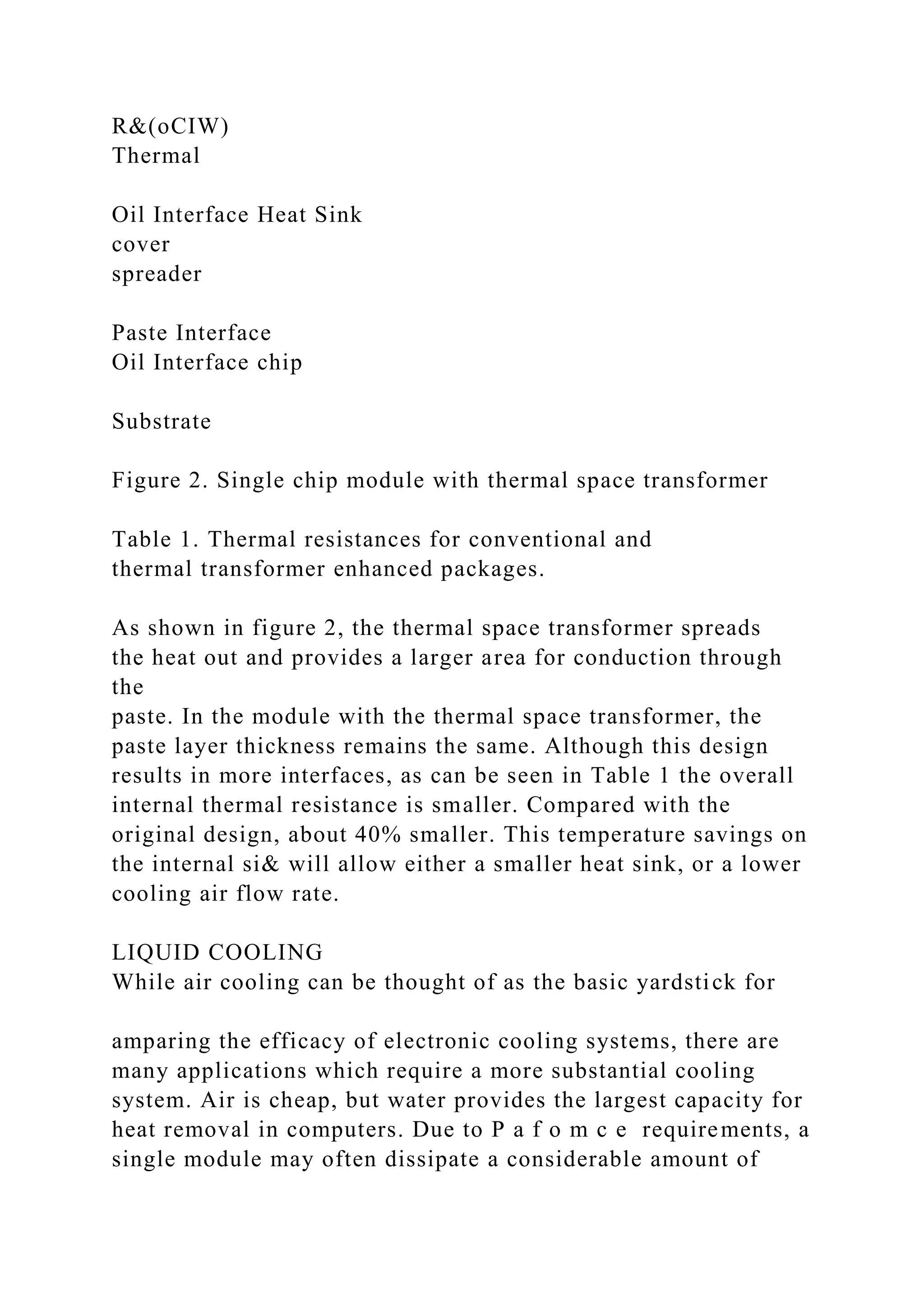

![Processor module cooling is typically characterized in two

ways: cooling internal and external to the module package and

applies to both single and multichip modules. Fig. 2 illustrates

the distinction between the two cooling regimes in the context

of a single-chip module.

A. Internal Module Cooling

The primary mode of heat transfer internal to the module is by

conduction. The internal thermal resistance is therefore dictated

by the module’s physical construction and material properties.

The objective is to effectively transfer the heat from the elec-

tronics circuits to an outer surface of the module where the heat

will be removed by external means which will be discussed in

the following section.

In the case of large multichip modules (MCMs) where

variation in the location and height of chips had to be

considered,

an approach (Figs. 3 and 4) was adopted that employed a

spring-loaded mechanical cylindrical piston touching each chip

with point contact and minute physical gaps between the chip

and piston and between the piston and module housing [3].

Fig. 2. Cross-section of a typical module denoting internal

cooling region and

external cooling region.

Fig. 3. Isometric cutaway view of an IBM TCM module with a

water-cooled

cold plate.

Fig. 4. Cross-sectional view of an IBM TCM module on an

individual chip

site basis.](https://image.slidesharecdn.com/568ieeetransactionsondeviceandmaterialsreliabilityvol-221224072049-5bbc9e32/75/568-IEEE-TRANSACTIONS-ON-DEVICE-AND-MATERIALS-RELIABILITY-VOL-docx-5-2048.jpg)

![The volume within the module was filled with helium gas to

minimize the thermal resistance across the gaps and achieve

an acceptable internal thermal resistance. The total module

cooling assembly was patented as a gas-encapsulated module

[4] and later named a thermal conduction module (TCM). TCM

cooling technology evolved through three generations of IBM

mainframes: System 3081, ES/3090, and ES/9000, with about

a threefold increase in cooling capability from 19 to 64 W/cm²

at the chip level and 3.7 to 11.8 W/cm² at the module level

570 IEEE TRANSACTIONS ON DEVICE AND MATERIALS

RELIABILITY, VOL. 4, NO. 4, DECEMBER 2004

Fig. 5. Cross-sectional view of a Hitachi M-880 module on an

individual chip

site basis.

[5]. The last generation TCM incorporated a copper piston (the

original piston was aluminum) with a cylindrical center section

and a slight taper on each end to minimize the gap between

piston and cap while retaining intimate contact between the

piston face and the chip [6]. Additionally, the volume inside the

module was filled with a PAO (polyalphaolefin) oil instead of

helium to reduce the piston-to-cap and chip-to-piston thermal

resistances. Hitachi packaged a similar conduction scheme in

their M-880 [7] and MP5800 [8] processors. Instead of a

cylindrical piston Hitachi utilized an interdigitated microfin

structure (Fig. 5).

In the 1990s when IBM made the switch from bipolar to

CMOS circuit technology [10] the conduction cooling approach

was simplified and reduced in cost by adopting a “flat plate”

conduction approach as shown in Fig. 6. The thermal path from

chip to cap is provided by a controlled thickness (e.g., 0.10 mm](https://image.slidesharecdn.com/568ieeetransactionsondeviceandmaterialsreliabilityvol-221224072049-5bbc9e32/75/568-IEEE-TRANSACTIONS-ON-DEVICE-AND-MATERIALS-RELIABILITY-VOL-docx-6-2048.jpg)

![to 0.18 mm) of a thermally conductive paste. This was possible

largely due to improved planarity of the substrate, better control

of dimensional tolerances and enhanced thermal conductivity of

the paste.

As time went on, chip power levels continued to increase. In

addition, hot spots emerged: concentrated areas with heat fluxes

2 to 3 times the average chip heat flux. To meet

internal thermal resistance requirements, in 2001 IBM chose to

attach a high-grade silicon carbide (SiC) spreader to the chip

with an adhesive thermal interface (ATI) and then use a more

conventional thermal paste between the spreader and the cap

[10]. This configuration is shown in Fig. 7.

The adhesive thermal interface (ATI), while not as thermally

conductive as the thermal paste, could be applied much thinner

resulting in a lower thermal resistance. SiC was chosen for the

spreader material for its unique combination of high thermal

conductivity and low coefficient of thermal expansion (CTE).

The CTE of the SiC closely matches that of the silicon chip thus

avoiding stress fracturing the interface when the module heats

up during use. The thermal resistance of this package arrange-

ment is lower than just using thermal paste between chip and

cap because of the use of the lower thermal resistance ATI on

the smaller chip area. The thermal paste thermal resistance is

mitigated by applying it over a much larger area.
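The trade-off between layer thickness, conductivity, and contact area can be illustrated with the one-dimensional conduction relation R = t/(kA). The following is a minimal sketch, not the authors' analysis: every conductivity, thickness, and area in it is an illustrative assumption (only the paste thickness falls inside the 0.10 mm to 0.18 mm range quoted earlier), and the spreader's own bulk and spreading resistance is ignored.

```python
def conduction_resistance(thickness_m, conductivity_w_per_mk, area_m2):
    """Return the 1-D conduction resistance R = t / (k * A) in K/W."""
    return thickness_m / (conductivity_w_per_mk * area_m2)


# Hypothetical geometry and properties, chosen only for illustration.
CHIP_AREA_M2 = 15e-3 * 15e-3        # assumed 15 mm x 15 mm chip
SPREADER_AREA_M2 = 30e-3 * 30e-3    # assumed 30 mm x 30 mm spreader
PASTE_K = 3.0                       # assumed paste conductivity, W/m-K
ATI_K = 1.0                         # assumed adhesive conductivity, W/m-K
PASTE_T = 0.15e-3                   # assumed paste thickness, m
ATI_T = 0.02e-3                     # assumed adhesive thickness, m

# Option 1: paste directly between chip and cap.
r_paste_only = conduction_resistance(PASTE_T, PASTE_K, CHIP_AREA_M2)

# Option 2: thin adhesive on the chip, then paste over the larger spreader.
r_ati = conduction_resistance(ATI_T, ATI_K, CHIP_AREA_M2)
r_paste_on_spreader = conduction_resistance(PASTE_T, PASTE_K, SPREADER_AREA_M2)
r_with_spreader = r_ati + r_paste_on_spreader

print(f"paste only:    {r_paste_only:.3f} K/W")
print(f"with spreader: {r_with_spreader:.3f} K/W")
```

With these assumed numbers the less conductive adhesive still gives the lower chip-level resistance because it is much thinner, and the paste contributes less once it is spread over four times the area.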

B. External Module Cooling

Cooling external to the module serves as the primary means

to effectively transfer the heat generated within the module to

Fig. 6. Cross-sectional view of central processor module

package with thermal

paste path to module cap [9].](https://image.slidesharecdn.com/568ieeetransactionsondeviceandmaterialsreliabilityvol-221224072049-5bbc9e32/75/568-IEEE-TRANSACTIONS-ON-DEVICE-AND-MATERIALS-RELIABILITY-VOL-docx-7-2048.jpg)

![Fig. 7. MCM cross-section showing heat spreader adhesively

attached to chip

(adapted from [10]).

the system environment. This is accomplished primarily by at-

taching a heat sink to the module. Traditionally, and prefer-

ably, the system environment of choice has been air because

of its ease of implementation, low cost, and transparency to

the end user or customer. This section, therefore, will focus

on air-cooled heat sinks. Liquid-cooled heat sinks, typically

referred to as cold plates, will also be discussed.

1) Air-Cooled Heat Sinks: A typical air-cooled heat sink is

shown in Fig. 8. The heat sink is constructed of a base region

that is in contact with the module to be cooled. Fins protruding

from the base serve to extend surface area for heat transfer to

the air. Heat is conducted through the base, up into the fins and

then transferred to the air flowing in the spaces between the fins

by convection. The spaces between fins can run continuously

in one direction, as in a straight fin heat sink, or in two

directions, as in a pin fin heat sink (Fig. 9).

Air flow can either be through the heat sink laterally (in cross

flow) or can impinge from the top as seen in Fig. 10.

The thermal performance of the heat sink is a function of

many variables. Geometric variables include the thickness and

plan area of the base plus the fin thickness, height, and spacing.

The principal material variable is thermal conductivity. Also

factored in is volumetric air flow and pressure drop. Many opti-

mization studies have been conducted to minimize the external

thermal resistance for a particular set of application conditions

[11]–[13]. However, over time, as greater and greater thermal

performance has been required, fin heights and fin number have

increased while fin spacing has been decreased. Additionally,

heat sinks have migrated in construction from all aluminum](https://image.slidesharecdn.com/568ieeetransactionsondeviceandmaterialsreliabilityvol-221224072049-5bbc9e32/75/568-IEEE-TRANSACTIONS-ON-DEVICE-AND-MATERIALS-RELIABILITY-VOL-docx-8-2048.jpg)

![CHU et al.: REVIEW OF COOLING TECHNOLOGIES FOR

COMPUTER PRODUCTS 571

Fig. 8. Typical air-cooled heat sink.

Fig. 9. Typical (a) straight fin heat sink and (b) pin fin heat

sink.

Fig. 10. Air flow path through a heat sink: (a) cross flow or (b)

impingement.

(with thermal conductivity of 150 to 200 W/m·K) to

aluminum fins on copper bases (copper having a thermal conductivity

of 350 to 390 W/m·K) to all copper. In certain cases

heat pipes have been embedded into heat sinks to more effec-

tively spread the heat [14]–[16].

Heat sink attachment to the module also plays a role in the ex-

ternal thermal performance of a module. The method of attach-

ment and the material at the interface must be considered. The

material at the interface is important because when two surfaces

are brought together seemingly in contact with one another, sur-

face irregularities such as surface flatness and surface

roughness

result in just a fraction of the surfaces actually contacting one

another. The majority of the heat is therefore transferred

through

the material that fills the voids or gaps that exist between the

two surfaces [17]. One method of heat sink attachment is by

mechanical means using screws or a clamping mechanism. Air

has traditionally existed at the interface but more recently oils

or even phase change materials (PCMs) have been used [18] to](https://image.slidesharecdn.com/568ieeetransactionsondeviceandmaterialsreliabilityvol-221224072049-5bbc9e32/75/568-IEEE-TRANSACTIONS-ON-DEVICE-AND-MATERIALS-RELIABILITY-VOL-docx-9-2048.jpg)

![reduce the thermal resistance at the interface. Another method

of attachment is adhesive bonding with an elastomer or epoxy.

This method has worked well on smaller single-chip modules

where heat sinks do not have to be removed from the module.

2) Water-Cooled Cold Plates: For situations where air

cooling could not meet requirements, such as was the case in

IBM’s 3081, ES/3090, and ES/9000 systems in the 1980s and

early 1990s, and the case in Hitachi’s M-880 and MP5800 in the

1990s, heat was removed from the modules via water-cooled

cold plates. Compared to air, water cooling can provide al-

most an order of magnitude reduction in thermal resistance

principally due to the higher thermal conductivity of water.

In addition, because of the higher density and specific heat of

water, its ability to absorb heat in terms of the temperature

rise across the coolant stream is approximately 3500 times that

of air. Cold plates function very similarly to air-cooled heat

sinks. For example, the ES/9000 cold plate is an internal finned

structure made of tellurium copper [19]. As with the air-cooled

heat sinks, changes in material properties and geometry were

made to improve performance. A higher thermal conductivity

tellurium copper was chosen over beryllium copper used in

previous generation cold plates. Additionally, fin heights were

increased and channel widths (analogous to fin spacings) were

decreased. The ES/9000 module also marked the first time IBM

used a PAO oil at the interface between the module cap and

cold plate to reduce the thermal interface resistance.
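The factor of roughly 3500 quoted above can be checked from the volumetric heat capacity (density times specific heat) of the two coolants. The sketch below uses approximate room-temperature textbook property values, which are assumptions and not figures from the paper; the helper `coolant_temperature_rise` and its arguments are hypothetical names introduced for illustration.

```python
# Approximate properties near 20 C (assumed textbook values).
RHO_WATER = 998.0   # kg/m^3
CP_WATER = 4182.0   # J/kg-K
RHO_AIR = 1.2       # kg/m^3
CP_AIR = 1005.0     # J/kg-K


def coolant_temperature_rise(power_w, flow_m3_per_s, rho, cp):
    """Bulk coolant temperature rise from an energy balance: P / (rho * cp * V)."""
    return power_w / (rho * cp * flow_m3_per_s)


# Ratio of heat absorbed per unit volume per kelvin, water relative to air.
capacity_ratio = (RHO_WATER * CP_WATER) / (RHO_AIR * CP_AIR)
print(f"water/air volumetric heat capacity ratio: {capacity_ratio:.0f}")

# Same heat load and volumetric flow rate for both coolants (assumed values).
rise_air = coolant_temperature_rise(1000.0, 0.01, RHO_AIR, CP_AIR)
rise_water = coolant_temperature_rise(1000.0, 0.01, RHO_WATER, CP_WATER)
print(f"air rise: {rise_air:.1f} K, water rise: {rise_water:.3f} K")
```

For equal volumetric flow and heat load, the water stream warms by a factor of about 3500 less than the air stream, which is the comparison the text makes.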

In an effort to significantly extend the cooling capability

of liquid-cooled cold plates, researchers continue to work on

microchannel cooling structures. The concept was originally

demonstrated over 20 years ago by Tuckerman and Pease [20].

They chemically etched 50-μm-wide by 300-μm-deep channels

into a 1 cm × 1 cm silicon chip. By directing water through

these microchannels they were able to remove 790 W with a

temperature difference of 71 °C. More recently, aluminum](https://image.slidesharecdn.com/568ieeetransactionsondeviceandmaterialsreliabilityvol-221224072049-5bbc9e32/75/568-IEEE-TRANSACTIONS-ON-DEVICE-AND-MATERIALS-RELIABILITY-VOL-docx-10-2048.jpg)

![nitride heat sinks fabricated using laser machining and adhesively

attached to the die have been used to cool a high-powered

MCM and achieve a junction-to-ambient unit thermal resistance

below 0.6 K·cm²/W [21]. The challenge continues to be to

provide a practical chip or module cooling structure and flow

interconnections in a manner which is both manufacturable

(i.e., cost effective) and reliable.

C. Immersion Cooling

Immersion cooling has been of interest as a possible method

to cool high heat flux components for many years. Unlike the

water-cooled cold plate approaches which utilize physical walls

to separate the coolant from the chips, immersion cooling brings

the coolant in direct physical contact with the chips. As a result,

most of the contributors to internal thermal resistance are elim-

inated, except for the thermal conduction resistance from the

device junctions to the surface of the chip in contact with the

liquid.

Direct liquid immersion cooling offers a high heat transfer co-

efficient which reduces the temperature rise of the heated chip

surface above the liquid coolant temperature. The magnitude

of the heat transfer coefficient depends upon the thermophys-

ical properties of the coolant and the mode of convective heat

transfer employed. The modes of heat transfer associated with

liquid immersion cooling are generally classified as natural con-

vection, forced convection, and boiling. Forced convection in-

cludes liquid jet impingement in the single phase regime and

boiling (including pool boiling, flow boiling, and spray cooling)

in the two-phase regime. An example of the broad range of heat](https://image.slidesharecdn.com/568ieeetransactionsondeviceandmaterialsreliabilityvol-221224072049-5bbc9e32/75/568-IEEE-TRANSACTIONS-ON-DEVICE-AND-MATERIALS-RELIABILITY-VOL-docx-11-2048.jpg)

![flux that can be accommodated with the different modes and

forms of direct liquid immersion cooling is shown in Fig. 11

[22].

Selection of a liquid for direct immersion cooling cannot

be made on the basis of heat transfer characteristics alone.

Chemical compatibility of the coolant with the chips and

other packaging materials exposed to the liquid is an essential

consideration. There may be several coolants that can provide

adequate cooling, but only a few will be chemically compatible.

Water is an example of a liquid which has very desirable

heat transfer properties, but which is generally undesirable for

direct immersion cooling because of its chemical and electrical

characteristics. Alternatively, fluorocarbon liquids (e.g., FC-72,

FC-86, and FC-77) are generally considered to be the most

suitable liquids for direct immersion cooling, in spite of their

poorer thermophysical properties [22], [23].

1) Natural and Forced Liquid Convection: As in the case of

air cooling, liquid natural convection is a heat transfer process

in which mixing and fluid motion are induced by differences in

coolant density caused by heat transferred to the coolant. As

shown in Fig. 11, this mode of heat transfer offers the lowest

heat flux or cooling capability for a given wall superheat or

surface-to-liquid temperature difference. Nonetheless, the heat

transfer rates attainable with liquid natural convection can ex-

ceed those attainable with forced convection of air.

Higher heat transfer rates may be attained by utilizing a pump

to provide forced circulation of the liquid coolant over the chip

or module surfaces. This process is termed forced convection

and the allowable heat flux for a given surface-to-liquid temper-

ature difference can be increased by increasing the velocity of

the liquid over the heated surface. The price to be paid for the

increased cooling performance will be a higher pressure drop.

This can mean a larger pump and higher system operating pres-](https://image.slidesharecdn.com/568ieeetransactionsondeviceandmaterialsreliabilityvol-221224072049-5bbc9e32/75/568-IEEE-TRANSACTIONS-ON-DEVICE-AND-MATERIALS-RELIABILITY-VOL-docx-12-2048.jpg)

![sures. Although forced convection requires the use of a pump

and the associated piping, it offers the opportunity to remove

heat from high power chips and modules in a confined space.

The liquid coolant may then be used to transport the heat to a

remote heat exchanger to reject the heat to air or water.

Fig. 11. Heat flux ranges for direct liquid immersion cooling of

microelectronic chips [22].

Fig. 12. Forced convection thermal resistance results for

simulated 12.7 mm × 12.7 mm microelectronic chips (adapted from [24]).

Experimental studies were conducted by Incropera and

Ramadhyani [24] to study liquid forced convection heat

transfer from simulated microelectronic chips. Tests were

performed with water and dielectric liquids (FC-77 and FC-72)

flowing over bare heat sources and heat sources with pin-fin

and finned pin extended surface enhancement. It can be seen in

Fig. 12 that, depending upon surface and flow conditions (i.e.,

Reynolds number), thermal resistance values obtained for the

fluorocarbon liquids ranged from 0.4 to 20 °C/W. It may be

noted that a thermal resistance on the order of 0.5 °C/W could

support chip powers of 100 W while maintaining chip junction

temperatures of 85 °C or less.
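The arithmetic behind this statement is a simple thermal resistance budget: the junction temperature equals the coolant temperature plus resistance times power. The sketch below is illustrative only; it assumes the 0.5 °C/W figure applies from junction to liquid, and the function names are hypothetical.

```python
def junction_temperature(coolant_c, resistance_c_per_w, power_w):
    """Junction temperature for a single lumped thermal resistance."""
    return coolant_c + resistance_c_per_w * power_w


def max_coolant_temperature(junction_limit_c, resistance_c_per_w, power_w):
    """Warmest coolant that still keeps the junction at or below its limit."""
    return junction_limit_c - resistance_c_per_w * power_w


# Values taken from the text: 0.5 C/W, 100 W, 85 C junction limit.
rise_c = 0.5 * 100.0
coolant_limit_c = max_coolant_temperature(85.0, 0.5, 100.0)
print(f"temperature rise: {rise_c:.0f} C, coolant must be <= {coolant_limit_c:.0f} C")
```

Under that assumption a 100 W chip rises 50 °C above the liquid, so the coolant would need to be supplied at 35 °C or below.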

The Cray-2 supercomputer introduced in the mid-1980s pro-

vides an example of the application of forced convection liquid

cooling to computer electronics [25]. As shown in Fig. 13, the

module assembly used in the Cray-2 was three-dimensional](https://image.slidesharecdn.com/568ieeetransactionsondeviceandmaterialsreliabilityvol-221224072049-5bbc9e32/75/568-IEEE-TRANSACTIONS-ON-DEVICE-AND-MATERIALS-RELIABILITY-VOL-docx-13-2048.jpg)

![in structure consisting of eight interconnected printed circuit

boards on which were mounted arrays of single-chip carriers.

Module power dissipation was reported to be 600 to 700 W.

Cooling was provided by FC-77 liquid distributed vertically

between stacks of modules and flowing horizontally between

the printed circuit cards.

Even higher heat transfer rates may be obtained in the forced

convection mode by directing the liquid flow normal to the

heated surface in the form of a liquid jet. A number of studies

[26]–[28] have been conducted to demonstrate the cooling

efficacy of liquid jet impingement flows. An example of the

chip heat flux that can be accommodated using a single FC-72

liquid jet is shown in Fig. 14. Liquid jet impingement was the

basic cooling scheme employed in the aborted SSI SS-1 super-

computer. The cooling design provided for a maximum chip

power of 40 W, corresponding to a chip heat flux of 95 W/cm².

2) Pool and Flow Boiling: Boiling is a complex convec-

tive heat transfer process depending upon liquid-to-vapor phase

change with the formation of vapor bubbles at the heated sur-

face. It may be characterized as either pool boiling (occurring in

an essentially stagnant liquid) or flow boiling. The pool boiling

heat flux, $q$, usually follows a relationship of the form

$$q = C_{sf}\,A\,(T_{wall} - T_{sat})^{n}$$

where $C_{sf}$ is a constant depending upon each fluid-surface

combination, $A$ is the heat transfer surface area, $T_{wall}$ is the

temperature of the heated surface, and $T_{sat}$ is the saturation

temperature (i.e., boiling point) of the liquid. The value of

the exponent $n$ is typically about 3. This means that as the

heat flux is increased at the chip surface, the heat transfer

coefficient or cooling effectiveness increases. For example if

$n = 3$ and the power dissipation is doubled, the temperature

rise will increase by only about 26% in the boiling mode

compared to 100% in the forced convection mode.](https://image.slidesharecdn.com/568ieeetransactionsondeviceandmaterialsreliabilityvol-221224072049-5bbc9e32/75/568-IEEE-TRANSACTIONS-ON-DEVICE-AND-MATERIALS-RELIABILITY-VOL-docx-14-2048.jpg)
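The 26% figure follows directly from the power-law form of the boiling relationship: if the heat dissipated scales with the surface-to-saturation temperature difference raised to an exponent of about 3, then the temperature difference scales with power raised to one third. A minimal sketch of that arithmetic, with a hypothetical helper name, is:

```python
def temperature_rise_ratio(power_ratio, exponent):
    """Factor by which the temperature rise grows when power grows by power_ratio,
    assuming power is proportional to (temperature rise) ** exponent."""
    return power_ratio ** (1.0 / exponent)


# Boiling: exponent of about 3, as stated in the text.
boiling_ratio = temperature_rise_ratio(2.0, 3.0)
# Single-phase forced convection: rise is proportional to power (exponent 1).
convection_ratio = temperature_rise_ratio(2.0, 1.0)

print(f"boiling:    +{(boiling_ratio - 1.0) * 100:.0f}%")
print(f"convection: +{(convection_ratio - 1.0) * 100:.0f}%")
```

Doubling the power gives a factor of 2^(1/3), or about 1.26, in the boiling mode against a factor of 2 when the heat transfer coefficient is constant.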

![A problem that has been associated with pool boiling of fluo-

rocarbon liquids is that of temperature overshoot. This behavior

is characterized by a delay in the inception of boiling on the

heated surface. The heated surface continues to be cooled in the

natural convection mode, with increased surface temperatures

until a sufficient degree of superheat is reached for boiling to

occur. This behavior is a result of the good wetting character-

istics of fluorocarbon liquids and the smooth nature of silicon

chips. Although much work [29] has been done in this area, it is

still a potential problem in pool boiling applications using fluo-

rocarbon liquids to cool untreated silicon chips.

The maximum chip heat flux that can be accommodated in

pool boiling is determined by the critical heat flux. As power is

increased more and more vapor bubbles are generated. Even-

tually so many bubbles are generated that they form a vapor

blanket over the surface preventing fresh liquid from reaching

the surface and resulting in film boiling and high surface tem-

peratures. Typical critical heat fluxes encountered in saturated

Fig. 13. Forced convection liquid-cooled Cray-2 electronic

module assembly.

Fig. 14. Typical direct liquid jet impingement cooling

performance for a

6.5 mm × 6.5 mm integrated circuit chip (adapted from [28]).

(i.e., liquid temperature = saturation temperature) pool boiling

of fluorocarbon liquids range from 10 to 15 W/cm², depending

upon the nature of the surface (i.e., material, finish, geometry).

The allowable critical heat flux may be extended by subcooling

the liquid below its saturation temperature. For example, experiments

have shown that it is possible to increase the critical heat flux

in pool boiling to as much as 25 W/cm² by subcooling the liquid

to 25 °C.](https://image.slidesharecdn.com/568ieeetransactionsondeviceandmaterialsreliabilityvol-221224072049-5bbc9e32/75/568-IEEE-TRANSACTIONS-ON-DEVICE-AND-MATERIALS-RELIABILITY-VOL-docx-15-2048.jpg)

![Higher critical heat fluxes may be achieved using flow

boiling. For example, heat fluxes from 25 to 30 W/cm² have

been reported for liquid velocities of 0.5 to 2.5 m/s over the

heated surface [30]. In addition, it may also be noted that

temperature overshoot has not been observed to be a problem

with flow boiling.

As in the case of air cooling or single phase liquid cooling,

the heat flux that may be supported at the component level (i.e.,

chip or module) may be increased by attaching a heat sink to

the surface. As part of an early investigation of pool boiling

with fluorocarbon liquids a small 3-mm-tall molybdenum stud

with a narrow slot (0.76 mm) down the middle was attached to

a 2.16 mm × 2.16 mm silicon chip. A heat flux at the chip level

in excess of 100 W/cm² was achieved [31].

An example of a computer electronics package utilizing pool

boiling to cool integrated circuit chips is provided by the IBM

Liquid Encapsulated Module (LEM) developed in the 1970s

[32]. As shown in Fig. 15, a substrate with 100 integrated

circuit chips was mounted within a sealed module-cooling

assembly containing a fluorocarbon coolant (FC-72). Boiling

at the exposed chip surfaces provided a high heat transfer

coefficient (1700 to 5700 W/m²·K) with which to meet chip

cooling requirements. Either an air-cooled or water-cooled

cold plate could be used to handle the module heat load. With

this approach it was possible to cool 4.6 mm × 4.6 mm chips

dissipating 4 W and module powers up to 300 W.](https://image.slidesharecdn.com/568ieeetransactionsondeviceandmaterialsreliabilityvol-221224072049-5bbc9e32/75/568-IEEE-TRANSACTIONS-ON-DEVICE-AND-MATERIALS-RELIABILITY-VOL-docx-16-2048.jpg)
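To relate the LEM figures to the heat fluxes quoted elsewhere in this section, the chip power and footprint can be converted to a heat flux, and Newton's law of cooling then gives the implied surface-to-liquid temperature difference. This is a rough sketch, not a reconstruction of the LEM design calculation: it assumes the full chip face is wetted and that the upper end of the quoted coefficient range applies at full chip power. The function names are hypothetical.

```python
def heat_flux_w_per_cm2(power_w, side_mm):
    """Heat flux over a square chip face, in W/cm^2."""
    side_cm = side_mm / 10.0
    return power_w / (side_cm ** 2)


def surface_superheat_k(flux_w_per_cm2, h_w_per_m2k):
    """Surface-to-liquid temperature difference from q'' = h * dT."""
    flux_w_per_m2 = flux_w_per_cm2 * 1.0e4   # 1 cm^2 = 1e-4 m^2
    return flux_w_per_m2 / h_w_per_m2k


# Chip values from the text: 4 W on a 4.6 mm x 4.6 mm chip.
lem_flux = heat_flux_w_per_cm2(4.0, 4.6)
# Assumption: the upper quoted coefficient (5700 W/m^2-K) applies at this flux.
lem_superheat = surface_superheat_k(lem_flux, 5700.0)

print(f"chip heat flux: {lem_flux:.1f} W/cm^2")
print(f"implied superheat at h = 5700 W/m^2-K: {lem_superheat:.0f} K")
```

The chip heat flux works out to roughly 19 W/cm², which under the stated assumption corresponds to a surface temperature a few tens of kelvin above the liquid.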

![3) Spray Cooling: In recent years spray cooling has re-

ceived increasing attention as a means of supporting higher

heat flux in electronic cooling applications. Spray cooling is a

process in which very fine droplets of liquid are sprayed on the

heated surface. Cooling of the surface is then achieved through

a combination of thermal conduction through the liquid in

contact with the surface and evaporation at the liquid–vapor

interface.

One of the early investigations of spray cooling was con-

ducted by Yao et al. [33] with both real and ideal sprays of

FC-72 on a heated horizontal copper surface 3.65 cm in diameter.

A peak heat flux of 32 W/cm², or about 2 to 3 times

the critical heat flux achievable with saturated pool boiling was

reported.

Pautsch and Bar-Cohen [34] describe two methods of spray

cooling suitable for electronic cooling. One method is termed

“low density spray cooling” and is defined as occurring when

the liquid contacts and wets the surface and then boils before

interacting with the next impinging droplet. Although a very ef-

ficient method of heat transfer, it does not support very high

heat fluxes. The other method is termed “high density evapo-

rative cooling” and requires spraying the liquid on the surface

at a rate that maintains a continuously wetted surface. In the

paper, experiments are described demonstrating the capability

to accommodate heat fluxes in excess of 50 W/cm² while maintaining

chip junction temperatures below 85 °C with spray evaporative

cooling. Spray evaporative cooling is used to maintain

junction temperatures of ASICs on MCMs in the CRAY SV2

system between 70 °C and 85 °C for heat fluxes from 15 W/cm²

to 55 W/cm² [35]. In addition to the CRAY cooling application,

spray cooling has gained a foothold in the military sector pro-

viding for improved thermal management, dense system pack-

aging, and reduced weight [36].](https://image.slidesharecdn.com/568ieeetransactionsondeviceandmaterialsreliabilityvol-221224072049-5bbc9e32/75/568-IEEE-TRANSACTIONS-ON-DEVICE-AND-MATERIALS-RELIABILITY-VOL-docx-17-2048.jpg)

![Researchers have also investigated spray cooling heat transfer

using other liquids. Lin and Ponnappan determined that critical

heat fluxes can reach up to 90 W/cm² with fluorocarbon liquids,

490 W/cm² with methanol, and higher than 500 W/cm² with

water [37].

III. SYSTEM-LEVEL COOLING

Cooling systems for computers may be categorized

as air-cooled, hybrid-cooled, liquid-cooled, or refrigera-

tion-cooled. An air-cooled system is one in which air, usually

in the forced convection mode, is used to directly cool and carry

heat away from arrays of electronic modules and packages.

Fig. 15. IBM Liquid Encapsulated Module (LEM) cooling

concept.

In some systems air-cooling alone may not be adequate due

to heating of the cooling air as it passes through the machine.

In such cases a hybrid-cooling design may be employed, with

air used to cool the electronic packages and water-cooled

heat exchangers used to cool the air. For even higher power

packages it may be necessary to employ indirect liquid cooling.

This is usually done utilizing water-cooled cold plates on

which heat dissipating components are mounted, or which may

be mounted to modules containing integrated circuit chips.

Ultimately, direct liquid immersion cooling may be employed

to accommodate high heat fluxes and a high system heat load.

A. Air-Cooled Systems

Forced air-cooled systems may be further subdivided into serial and parallel flow systems. In a serial flow system the same air stream passes over successive rows of modules or boards, so that each row is cooled by air that has been preheated by the previous row. Depending on the power dissipated and the air](https://image.slidesharecdn.com/568ieeetransactionsondeviceandmaterialsreliabilityvol-221224072049-5bbc9e32/75/568-IEEE-TRANSACTIONS-ON-DEVICE-AND-MATERIALS-RELIABILITY-VOL-docx-18-2048.jpg)

![flow rate, serial air flow can result in a substantial air temperature rise across the machine. The rise in cooling air temperature is directly reflected in increased circuit operating temperatures. This effect may be reduced by increasing the air flow rate. Of course, doing this requires larger blowers to provide the higher flow rate and overcome the increase in air flow pressure drop.

Parallel air flow systems have been used to reduce the temperature rise in the cooling air [38], [39]. In systems of this type, the printed circuit boards or modules are all supplied air in parallel as shown in Fig. 16. Since each board or module is delivered its own fresh supply of cooling air, systems of this type typically require a higher total volumetric flow rate of air.
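
The trade-off between serial and parallel air flow follows from a simple energy balance on the air stream, in which the temperature rise equals the heat load divided by the product of density, volumetric flow rate, and specific heat. The Python sketch below is illustrative only: the air properties, row powers, and flow rate are assumed values rather than data from the systems described here, and the function names are hypothetical.

```python
# Illustrative energy-balance sketch; all numbers are assumptions, not source data.
RHO_AIR = 1.16    # air density in kg/m^3 (assumed, near room temperature)
CP_AIR = 1007.0   # air specific heat in J/(kg*K) (assumed)


def air_temp_rise(power_w, flow_m3_s):
    """Bulk air temperature rise in K for a given heat load and volumetric flow."""
    return power_w / (RHO_AIR * flow_m3_s * CP_AIR)


def serial_inlet_temps(row_powers_w, flow_m3_s, t_in_c):
    """Inlet temperature seen by each row when one air stream crosses all rows."""
    temps = []
    t = t_in_c
    for power in row_powers_w:
        temps.append(t)                        # this row sees preheated air
        t += air_temp_rise(power, flow_m3_s)   # air leaves the row warmer
    return temps


def parallel_total_flow(row_powers_w, max_rise_k):
    """Total flow in m^3/s when every row gets fresh air limited to max_rise_k."""
    return sum(p / (RHO_AIR * CP_AIR * max_rise_k) for p in row_powers_w)


if __name__ == "__main__":
    rows = [500.0, 500.0, 500.0, 500.0]        # hypothetical row powers in W
    flow = 0.1                                 # hypothetical serial flow in m^3/s
    print(serial_inlet_temps(rows, flow, 25.0))
    # Parallel flow needed to hold each row to the single-row serial rise:
    print(parallel_total_flow(rows, air_temp_rise(500.0, flow)))
```

With these assumed numbers the last serial row sees inlet air roughly 13 K warmer than the first, while the parallel arrangement needs four times the total flow to give every row fresh air at the same per-row rise.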

B. Hybrid Air–Water Cooling

An air-to-liquid hybrid cooling system offers a method to manage cooling air temperature in a system without resorting to a parallel configuration and higher air flow rates. In a system of this type, a water-cooled heat exchanger is placed in the heated air stream to extract heat and reduce the air temperature.


Fig. 16. Example of a parallel air-flow cooling scheme [40].

Fig. 17. Typical processor gate configuration with air-to-water heat exchanger between boards.

An example of the early use of this method was in the IBM System/360 Model 91 (c. 1964) [40]. As shown in Fig. 17, the](https://image.slidesharecdn.com/568ieeetransactionsondeviceandmaterialsreliabilityvol-221224072049-5bbc9e32/75/568-IEEE-TRANSACTIONS-ON-DEVICE-AND-MATERIALS-RELIABILITY-VOL-docx-19-2048.jpg)

![cooling system incorporated an air-to-water finned tube heat exchanger between each successive row of circuit boards. The modules on the boards were still cooled by forced convection with air; however, the heated air exiting a board passed through an air-to-water heat exchanger before passing over the next board. Approximately 50% of the heat transferred to air in the board columns was transferred to the cooling water. A comparison of cooling air temperatures in the board columns with and without hybrid air-to-water cooling is shown in Fig. 18. The reduction in air temperatures with air-to-water hybrid cooling resulted in a one-to-one reduction in chip junction operating temperatures. Ultimately, air-to-liquid hybrid cooling offers the potential for a sealed, recirculating, closed-cycle air-cooling system with total heat rejection of the heat load absorbed by the air to chilled water [39]. Sealing the system offers additional advantages. It allows the use of more powerful blowers to deliver higher air flow rates with little or no impact on acoustics. In addition, the potential for electromagnetic emissions from air inlet/outlet openings in the computer frame is eliminated.

Fig. 18. Typical air temperature profiles across five high board columns with and without air-to-water heat exchangers between boards.

Fig. 19. Closed-loop liquid-to-air hybrid cooling system.
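
The effect of interposed heat exchangers on the air temperature profile can be sketched with a running energy balance along a board column. The Python example below is a simplified illustration: the per-board temperature rise and inlet temperature are assumed values, the function name is hypothetical, and only the roughly 50% share of heat taken up by the water comes from the text.

```python
def column_inlet_temps(board_rises_k, t_in_c, hx_fraction=0.0):
    """Inlet air temperature at each board in a serial column.

    board_rises_k: air temperature rise caused by each board, in K.
    hx_fraction:   share of each board's heat that the heat exchanger
                   after that board removes from the air (0 = no exchanger).
    """
    temps = []
    t = t_in_c
    for rise in board_rises_k:
        temps.append(t)
        t += rise * (1.0 - hx_fraction)   # only the unremoved heat stays in the air
    return temps


if __name__ == "__main__":
    rises = [8.0] * 5                             # hypothetical 8 K rise per board
    print(column_inlet_temps(rises, 24.0))        # air cooling only
    print(column_inlet_temps(rises, 24.0, 0.5))   # about half the heat goes to water
```

In this assumed case the fifth board receives air at 40 °C with the heat exchangers in place instead of 56 °C without them, and that reduction carries through to the chip junction temperatures.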

Another variant of the hybrid cooling system is the liquid-to-air cooling system shown schematically in Fig. 19. In this system liquid is circulated in a sealed loop through a cold plate attached to an electronic module dissipating heat. The heat is then transported via the liquid stream to an air-cooled heat exchanger where it is rejected to ambient air. This scheme provides the performance advantages of indirect liquid cooling](https://image.slidesharecdn.com/568ieeetransactionsondeviceandmaterialsreliabilityvol-221224072049-5bbc9e32/75/568-IEEE-TRANSACTIONS-ON-DEVICE-AND-MATERIALS-RELIABILITY-VOL-docx-20-2048.jpg)

![at the module level while retaining the advantages of air cooling at the system or box level. Most recently, a liquid-to-air cooling system is being used to cool the two processor modules in the Apple Power Mac G5 personal computer shipped earlier this year [42].


Fig. 20. Large scale computer configuration of the 1980s with coolant distribution unit (CDU).

C. Liquid-Cooling Systems

Both the air-to-water heat exchangers in a hybrid air–water-cooled system and the water-cooled cold plates in a conduction-cooled system rely upon a source of water that is controlled in terms of pressure, flow rate, temperature, and chemistry. In order to ensure the physical integrity, performance, and long-term reliability of the cooling system, customer water is usually not run directly through the water-carrying components in electronic frames. This is because of the great variability that can exist in the quality of water available at computer installations throughout the world. Instead, a pumping and heat exchange unit, sometimes called a coolant distribution unit (CDU), is used to control and distribute system cooling water to computer electronics frames as shown in Fig. 20. The primary closed loop (i.e., system) is used to circulate cooling water to and from the electronics frames. The system heat load is transferred to the secondary loop (i.e., customer water) via a water-to-water heat exchanger in the CDU. Within an electronics frame a combination of parallel-series flow networks is used to distribute water flow to individual cold plates and heat](https://image.slidesharecdn.com/568ieeetransactionsondeviceandmaterialsreliabilityvol-221224072049-5bbc9e32/75/568-IEEE-TRANSACTIONS-ON-DEVICE-AND-MATERIALS-RELIABILITY-VOL-docx-21-2048.jpg)

![Fig. 23. Cray-2 liquid immersion cooling system.

The potential for enhancement of computer performance by operating at lower temperatures was recognized as long ago as the late 1960s and mid-1970s. Some of the earliest studies focused on Josephson devices operating at liquid helium temperatures (4 K). The focus then shifted to CMOS devices operating near liquid nitrogen temperatures (77 K). A number of researchers have identified the electrical advantages of operating electronics all the way down to liquid nitrogen (LN₂) temperatures (77 K) [43]–[45]. In summary, the advantages are:

• increased average carrier drift velocities (even at high fields);

• steeper sub-threshold slope, plus reduced sub-threshold currents (channel leakages) which provide higher noise margins;

• higher transconductance;

• well-defined threshold voltage behavior;

• no degradation of geometry effects;

• enhanced electrical line conductivity;

• allowable current density limits increase dramatically (i.e., electromigration concerns diminish).

To illustrate how much improvement is realized with decreasing](https://image.slidesharecdn.com/568ieeetransactionsondeviceandmaterialsreliabilityvol-221224072049-5bbc9e32/75/568-IEEE-TRANSACTIONS-ON-DEVICE-AND-MATERIALS-RELIABILITY-VOL-docx-23-2048.jpg)

![temperature, Fig. 24 shows the performance of a 0.1-μm CMOS circuit (relative to the performance of a 0.1-μm circuit designed to operate at 100 °C) as a function of temperature [43]. The performance behavior is shown for three different assumptions about the threshold voltage. Only a slight performance gain is realized if the circuit, unchanged from its design to operate at 100 °C, is taken down in temperature (same hardware). This is due to a rise in threshold voltage that partially offsets the gain due to higher mobilities. Tuning threshold voltages down until eventually the same off-current as the 100 °C circuit is achieved yields the greatest performance gain, to almost 2× at 123 K. In addition, electrical conductivity improves with decreasing temperature for the two metals used today to interconnect circuits on a chip [46]. A conductivity improvement of approximately 1.5×, 2×, and 10× is realized at about 200 K, 123 K, and 77 K, respectively. The reduction in resistance-capacitance (RC) delays can therefore approach 2× at the lower (77 K) temperatures.

Fig. 24. Relative performance factors (with respect to a 100 °C value) of 1.5-V CMOS circuits as a function of temperature. Threshold voltages are adjusted differently with temperature in each of the three scenarios shown (adapted from [43]).

One of the earliest systems to incorporate refrigeration was the Cray-1 supercomputer announced in 1979 [47]. Its cooling system was designed to limit the IC die temperature to a maximum of 65 °C. The heat generated by the ICs was conducted through the IC package, into the PC board to which the IC packages were attached, and then into a 2-mm-thick copper plate. The copper](https://image.slidesharecdn.com/568ieeetransactionsondeviceandmaterialsreliabilityvol-221224072049-5bbc9e32/75/568-IEEE-TRANSACTIONS-ON-DEVICE-AND-MATERIALS-RELIABILITY-VOL-docx-24-2048.jpg)

![plate conducted heat to its edges, which were in contact with cast aluminum cold bars. A refrigerant, Freon 22, flowed through stainless steel tubes embedded in the aluminum cold bars. The refrigerant, which was maintained at 18.5 °C, absorbed the heat that was conducted into the aluminum cold bars. The refrigeration system ultimately rejected the heat to a cold water supply flowing at 40 gpm. The maximum heat load of the system was approximately 170 kW.
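
As a rough plausibility check on the quoted figures, the water temperature rise needed to carry away a given heat load can be estimated from an energy balance. The Python sketch below assumes US gallons and room-temperature water properties, uses a hypothetical function name, and ignores the compressor work that a refrigeration system adds to the rejected heat, so it gives a lower bound rather than a measured value.

```python
GALLON_L = 3.785411784   # liters per US gallon (US gallons assumed)
RHO_WATER = 998.0        # water density in kg/m^3 (assumed)
CP_WATER = 4182.0        # water specific heat in J/(kg*K) (assumed)


def water_temp_rise(heat_w, flow_gpm):
    """Water temperature rise in K needed to carry away heat_w at flow_gpm."""
    flow_m3_s = flow_gpm * GALLON_L / 1000.0 / 60.0   # gpm -> m^3/s
    mass_flow = RHO_WATER * flow_m3_s                 # kg/s
    return heat_w / (mass_flow * CP_WATER)


if __name__ == "__main__":
    # 170 kW and 40 gpm are the figures quoted in the text.
    print(round(water_temp_rise(170e3, 40.0), 1))
```

Under these assumptions the cooling water warms by about 16 K while absorbing the 170 kW electronics heat load alone.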

In the latter part of the 1980s, ETA Systems Inc. developed a commercial supercomputer system using CMOS logic chips operating in liquid nitrogen [48]. The processor modules were immersed in a pool of liquid nitrogen maintained in a vacuum-jacketed cryostat vessel within the CPU cabinet (Fig. 25). Processor circuits were maintained below 90 K. At this temperature, circuit speed was reported to be almost double that obtained at above-ambient temperatures. Heat transfer experiments were conducted to validate peak nucleate boiling heat flux limits of approximately 12 W/cm². A closed-loop Stirling refrigeration system (cryogenerator) was developed to recondense the gaseous nitrogen produced by the boiling process.

Fig. 25. ETA-10 cryogenic system configuration [48].

In 1991, IBM initiated an effort to demonstrate the feasibility of packaging and cooling a CMOS processor in a form suitable for product use [49]. A major part of the effort was devoted to the development of a refrigeration system that would meet](https://image.slidesharecdn.com/568ieeetransactionsondeviceandmaterialsreliabilityvol-221224072049-5bbc9e32/75/568-IEEE-TRANSACTIONS-ON-DEVICE-AND-MATERIALS-RELIABILITY-VOL-docx-25-2048.jpg)

![IBM’s reliability and life expectancy specifications and handle a cooling load of 250 W at 77 K. A Stirling cycle type refrigerator was chosen as the only practical refrigeration method for obtaining liquid nitrogen temperatures. Prototype models were built with cooling capacities of 500 and 250 W at 77 K. In addition, a packaging scheme had to be developed that would withstand cycling from room temperature down to 77 K and provide thermal insulation to reduce the parasitic heat losses. A low-temperature conduction module (LTCM) was built to package the chip and module. The LTCM, or cryostat, consisted of a stainless steel housing with a vacuum to minimize heat losses. This hardware was used to measure chip performance at 77 K. As a result of this effort, prototype Stirling cycle cryocoolers in a form factor compatible with overall system packaging constraints were built and successfully tested, and key elements of the packaging concept were demonstrated.
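
To give a sense of why a 250 W load at 77 K is demanding, the ideal (Carnot) coefficient of performance sets a lower bound on the refrigerator input power. The Python sketch below is a thermodynamic bound only: the 300 K heat rejection temperature is an assumption, the function names are hypothetical, and real cryocoolers require considerably more power than this limit.

```python
def carnot_cop(t_cold_k, t_hot_k):
    """Ideal (Carnot) coefficient of performance of a refrigerator."""
    if t_hot_k <= t_cold_k:
        raise ValueError("t_hot_k must exceed t_cold_k")
    return t_cold_k / (t_hot_k - t_cold_k)


def min_input_power(load_w, t_cold_k, t_hot_k):
    """Lower bound on input power in W to lift load_w from t_cold_k to t_hot_k."""
    return load_w / carnot_cop(t_cold_k, t_hot_k)


if __name__ == "__main__":
    # 250 W at 77 K is from the text; 300 K heat rejection is an assumption.
    print(round(min_input_power(250.0, 77.0, 300.0)))
```

Even in this ideal limit, removing 250 W at 77 K takes roughly 0.72 kW of input power, nearly three times the cooling load.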

IBM’s most recent interest in refrigeration cooling focused on the application of conventional vapor compression refrigeration technology to operate below room temperature conditions, but well above cryogenic temperatures. In 1997, IBM developed, built, and shipped its first refrigeration-cooled server (the S/390 G4 system) [50], [51]. This cooling scheme provided an average processor temperature of 40 °C, which represented a temperature decrease of 35 °C below that of a comparable air-cooled system. The system packaging layout is shown in Fig. 26. Below the bulk power compartment is the central electronic complex (CEC) where the MCM housing 12 processors is located. Two modular refrigeration units (MRUs) located near the middle of the frame provide cooling via the evaporator attached to the back of the processor module. Only one MRU is operated at a time during normal operation. The evaporator mounted on the processor module is fully redundant with two independent](https://image.slidesharecdn.com/568ieeetransactionsondeviceandmaterialsreliabilityvol-221224072049-5bbc9e32/75/568-IEEE-TRANSACTIONS-ON-DEVICE-AND-MATERIALS-RELIABILITY-VOL-docx-26-2048.jpg)

![refrigerated passages. Refrigerant passing through one passage is adequate to cool the MCM, which dissipates a maximum power of 1050 W. Following the success of this machine IBM has continued to exploit the advantages of sub-ambient cooling at the high end of its zSeries product line.

In 1999, Fujitsu released its Global Server GS8900, which utilized a refrigeration unit to chill a secondary coolant and then supply the coolant to the liquid-cooled central processor unit (CPU) MCMs [52]. A schematic of the liquid-cooled system is shown in Fig. 27. The refrigeration unit, which is called the chilled coolant supply unit (CCSU), contains three air-cooled refrigeration modules and two liquid circulating pumps. The refrigeration modules chill the coolant to near 0 °C. The system board assembly housing the CPU modules is accommodated in a closed box in which the dew point is controlled in order to prevent condensation from forming on the electrical equipment. In comparison to an air-cooled version of this system, circuit junction temperatures are reduced by more than 50 °C.


Fig. 26. IBM S/390 G4 server with refrigeration-cooled processor module and redundant modular refrigeration units (MRUs).

Fig. 27. Configuration of Fujitsu’s GS8900 low-temperature liquid cooling system (adapted from [52]).

IV. DATA CENTER THERMAL MANAGEMENT

Due to technology compaction, the information technology](https://image.slidesharecdn.com/568ieeetransactionsondeviceandmaterialsreliabilityvol-221224072049-5bbc9e32/75/568-IEEE-TRANSACTIONS-ON-DEVICE-AND-MATERIALS-RELIABILITY-VOL-docx-27-2048.jpg)

![(IT) industry has seen a large decrease in the floor space required to achieve a constant quantity of computing and storage capability. However, the energy efficiency of the equipment has not improved at the same rate. This has resulted in a significant increase in power density and heat dissipation within the footprint of computer and telecommunications hardware. The heat dissipated in these systems is exhausted to the room, and the room has to be maintained at acceptable temperatures for reliable operation of the equipment. Cooling computer and telecommunications equipment rooms is becoming a major challenge.

The increasing heat load of datacom equipment has been documented by a thermal management consortium of 17 companies and published in collaboration with the Uptime Institute [53] as shown in Fig. 28. Also shown in this figure are measured heat fluxes (based on product footprint) of some recent product announcements. The most recent shows a rack dissipating 28 500 W, resulting in a heat flux based on the footprint of the rack of 20 900 W/m². With these heat loads the focus for customers of such equipment is on providing adequate air flow at a temperature that meets the manufacturer’s requirements. Of course, this is a very complex problem considering the dynamics of a data center and one that is only starting to be addressed [54]–[61]. There are many opportunities for improving the thermal environment of data centers and the](https://image.slidesharecdn.com/568ieeetransactionsondeviceandmaterialsreliabilityvol-221224072049-5bbc9e32/75/568-IEEE-TRANSACTIONS-ON-DEVICE-AND-MATERIALS-RELIABILITY-VOL-docx-28-2048.jpg)

![efficiency of the cooling techniques applied to those data centers [61]–[63].

Fig. 28. Equipment power trends [53].

Fig. 29. Cluster of server racks.
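
The rack figures quoted above can be turned into two quantities a facility designer would want: the implied footprint and the air flow needed to carry the heat away. The Python sketch below is a rough estimate under stated assumptions: the air properties and the 15 K air temperature rise through the rack are assumed values, the function names are hypothetical, and only the 28 500 W and 20 900 W/m² figures come from the text.

```python
RHO_AIR = 1.16    # air density in kg/m^3 (assumed)
CP_AIR = 1007.0   # air specific heat in J/(kg*K) (assumed)


def rack_footprint_m2(power_w, heat_flux_w_m2):
    """Footprint area implied by a rack power and a footprint-based heat flux."""
    return power_w / heat_flux_w_m2


def required_airflow_m3_s(power_w, air_rise_k):
    """Volumetric air flow needed to remove power_w with a given air temperature rise."""
    return power_w / (RHO_AIR * CP_AIR * air_rise_k)


if __name__ == "__main__":
    print(round(rack_footprint_m2(28500.0, 20900.0), 2))    # figures from the text
    print(round(required_airflow_m3_s(28500.0, 15.0), 2))   # 15 K rise is an assumption
```

For these inputs the footprint works out to about 1.4 m², and the rack would need on the order of 1.6 m³/s of chilled air at the assumed temperature rise.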

Air-flow direction in the room has a major effect on the cooling of computer rooms. A major requirement is the uniformity of air temperature at the computer inlets. A number of papers have focused on whether the air should be delivered overhead or from underneath a raised floor [65]–[67], ceiling height requirements to eliminate “heat traps” or hot air stratification [64], [65], raised floor heights [64], and proper distribution of the computer equipment in the data center [66], [68] to eliminate the potential for hot spots or high temperatures. Computer room cooling concepts can be classified according to the two main types of room construction: 1) nonraised floor (or standard room) and 2) raised floor. Some of the papers discuss and compare these concepts in general terms [67], [69]–[71].

Data centers are typically arranged into hot and cold aisles as shown in Fig. 29. This arrangement accommodates most rack designs, which typically employ front-to-back cooling, and somewhat separates the cold air exiting the perforated tiles (for raised floor designs) and overhead chilled air flow (for nonraised floor designs) from the hot air exhausting from the back of the racks. The racks are positioned so that their fronts face the cold aisle. Similarly, the backs of the racks face each other and provide a hot-air exhaust region. This layout allows the chilled air to wash the front of the data processing (DP) equipment while the hot air from the racks exits into the hot aisle as it returns to the inlet of the air conditioning (A/C) units.

With the arrangement of computer server racks in rows within a data center there may be zones where all the equipment within](https://image.slidesharecdn.com/568ieeetransactionsondeviceandmaterialsreliabilityvol-221224072049-5bbc9e32/75/568-IEEE-TRANSACTIONS-ON-DEVICE-AND-MATERIALS-RELIABILITY-VOL-docx-29-2048.jpg)

![In January 2002, the American Society of Heating, Refrigerating and Air Conditioning Engineers (ASHRAE) was approached with a proposal to create an independent committee to specifically address high-density electronic heat loads. The proposal was accepted by ASHRAE and eventually a technical committee, TC9.9 Mission Critical Facilities, Technology Spaces, and Electronic Equipment, was formed. The first priority of TC9.9 was to create a Thermal Guidelines document that would help to align the designs of equipment manufacturers and help data center facility designers to create efficient and fault tolerant operation within the data center. The resulting document, Thermal Guidelines for Data Processing Environments, was published in January 2004 [73]. Some of the key issues of that document will now be described.

For data centers, the primary thermal management focus is on assuring that the housed equipment’s temperature and humidity requirements are met. Each manufacturer has its own environmental specification, and a customer of many types of electronic equipment is faced with a wide variety of environmental specifications. In an effort to standardize, the ASHRAE TC9.9 committee first surveyed the environmental specifications of a number of data processing equipment manufacturers. From this survey, four classes were identified that would encompass most of the specifications. Also included within the guidelines was a comparison to the NEBS (Network Equipment Building Systems) specifications for the telecommunications industry, both to show the differences and to aid in possible convergence of the specifications in the future. The four data processing classes cover the entire environmental range, from the air-conditioned server and storage environments of classes 1 and 2 to the lesser controlled environments like class 3 for workstations, PCs, and portables or class 4 for point-of-sale equipment with virtually no environmental control.](https://image.slidesharecdn.com/568ieeetransactionsondeviceandmaterialsreliabilityvol-221224072049-5bbc9e32/75/568-IEEE-TRANSACTIONS-ON-DEVICE-AND-MATERIALS-RELIABILITY-VOL-docx-34-2048.jpg)

![Other publications will follow on data center thermal management, with one planned for January 2005 that will update the initial trend chart and will discuss air cooling and water cooling in the context of the data center. However, to aid in the advancement of data center thermal management it is of utmost importance to understand the current situation in high density data centers in order to build on this understanding to further enhance the thermal environment in data centers. In this effort Schmidt [74] published the first paper of its kind to completely thermally profile a high density data center. The motivation for the paper was twofold. First, the paper provided some basic information on the thermal/flow data collected from a high density data center. Second, it provided a methodology which others can follow in collecting thermal and air flow data from data centers so that data can be assimilated to make comparisons. This database can then provide the basis for future data center air cooling design and aid in the understanding of deployment of racks of higher heat loads in the future. This data needs to be further expanded so that data center design and optimization from an air-cooled viewpoint can occur.


Data centers do have limitations and each data center is unique such that some data centers have much lower power density limitations than others. To resolve these environmental issues in some data centers today, manufacturers of HVAC equipment have begun to offer liquid cooling solutions to aid in data center thermal management. The objective of these new approaches is to move the liquid cooling closer to the source of the problem, which is the electronic equipment that](https://image.slidesharecdn.com/568ieeetransactionsondeviceandmaterialsreliabilityvol-221224072049-5bbc9e32/75/568-IEEE-TRANSACTIONS-ON-DEVICE-AND-MATERIALS-RELIABILITY-VOL-docx-36-2048.jpg)

![must be commensurate with the overall manufacturing cost of the computer and indeed be a relatively small fraction of the total cost!

Although air cooling may be expected to continue to be the most pervasive method of cooling, in many instances the chips and packages that require cooling are at or will soon exceed the limits of air cooling. As this happens it will be necessary to once again introduce water or some other form of liquid cooling. This represents a real challenge, as it does not mean simply resurrecting the water-cooled designs of the past. Machines today are packaged much more densely than in the past, making the job of introducing water or any other form of liquid cooling much more challenging. In addition, today many machines must operate virtually continuously without interruption. This means that the cooling design must incorporate redundancy to allow for a blower or pump failure while continuing to provide the required cooling function. It also means that provisions must be incorporated in the cooling design to allow replacement of the failed unit while the machine continues to operate. All of these considerations clearly represent an increased level of challenge for thermal engineers. It also means that thermal engineers must be an integral part of the design process from the very beginning and work very closely with electrical and packaging engineers to achieve a truly holistic design.

In addition, as identified in the thermal management section of the 2002 National Electronics Management Technology Roadmap [75], there are several major cooling areas requiring further development and innovation. In order to diffuse high heat flux from chip heat sources and reduce thermal resistance at the chip-to-sink interface, there is a need to develop low-cost, higher thermal conductivity packaging materials such as adhesives, thermal pastes, and thermal spreaders. Advanced cooling](https://image.slidesharecdn.com/568ieeetransactionsondeviceandmaterialsreliabilityvol-221224072049-5bbc9e32/75/568-IEEE-TRANSACTIONS-ON-DEVICE-AND-MATERIALS-RELIABILITY-VOL-docx-38-2048.jpg)

![technology in the form of heat pipes and vapor chambers is already widely used. Further advances in these technologies as well as thermoelectric cooling technology, direct liquid cooling technology, high-performance air-cooled heat sinks, and air movers are also needed. As discussed earlier in the paper, cooling at the data center level is also becoming a very challenging problem. High performance cooling systems that will minimize the impact to the environment within the customer’s facility are needed to answer this challenge. Finally, to achieve the holistic design referred to above, it will be necessary to develop advanced modeling tools to integrate the electrical, thermal, and mechanical aspects of package and product function, while providing enhanced usability and minimizing interface incompatibilities.

It is clear that thermal management for high-performance computers will continue to be an area offering engineers many challenges and opportunities for meaningful contributions and innovations.

REFERENCES

[1] A. E. Bergles, “The evolution of cooling technology for electrical, electronic, and microelectronic equipment,” ASME HTD, vol. 57, pp. 1–9, 1986.

[2] D. Hanson, The New Alchemists. New York: Avon Books, 1982.

[3] R. C. Chu, U. P. Hwang, and R. E. Simons, “Conduction cooling for an LSI package: A one-dimensional approach,” IBM J. Res. Develop., vol. 26, no. 1, pp. 45–54, Jan. 1982.](https://image.slidesharecdn.com/568ieeetransactionsondeviceandmaterialsreliabilityvol-221224072049-5bbc9e32/75/568-IEEE-TRANSACTIONS-ON-DEVICE-AND-MATERIALS-RELIABILITY-VOL-docx-39-2048.jpg)

![[4] R. C. Chu, O. R. Gupta, U. P. Hwang, and R. E. Simons, “Gas encapsulated cooling module,” U.S. Patent 3,741,292, 1976.

[5] R. C. Chu and R. E. Simons, “Cooling technology for high performance computers: Design applications,” in Cooling of Electronic Systems, S. Kakac, H. Yuncu, and K. Hijikata, Eds. Boston, MA: Kluwer, 1994, pp. 97–122.

[6] G. F. Goth, M. L. Zumbrunnen, and K. P. Moran, “Dual-Tapered piston (DTP) module cooling for IBM enterprise system/9000 systems,” IBM J. Res. Develop., vol. 36, no. 4, pp. 805–816, Jul. 1992.

[7] F. Kobayashi, Y. Watanabe, M. Yamamoto, A. Anzai, A. Takahashi, T. Daikoku, and T. Fujita, “Hardware technology for HITACHI M-880 processor group,” in Proc. 41st Electronics Components and Technology Conf., Atlanta, GA, May 1991, pp. 693–703.

[8] F. Kobayashi, Y. Watanabe, K. Kasai, K. Koide, K. Nakanishi, and R. Sato, “Hardware technology for the Hitachi MP5800 series (HDS Skyline Series),” IEEE Trans. Adv. Packag., vol. 23, no. 3, pp. 504–514, Aug. 2000.

[9] P. Singh, D. Becker, V. Cozzolino, M. Ellsworth, R.](https://image.slidesharecdn.com/568ieeetransactionsondeviceandmaterialsreliabilityvol-221224072049-5bbc9e32/75/568-IEEE-TRANSACTIONS-ON-DEVICE-AND-MATERIALS-RELIABILITY-VOL-docx-40-2048.jpg)

![Schmidt, and E. Seminaro, “System packaging for a CMOS mainframe,” Advancing Microelectron., vol. 25, no. 7, pp. 12–17, 1998.


[10] J. U. Knickerbocker, “An advanced multichip module (MCM) for high-performance unix servers,” IBM J. Res. Develop., vol. 46, no. 6, pp. 779–804, Nov. 2002.

[11] D. J. De Kock and J. A. Visser, “Optimal heat sink design using mathematical optimization,” Adv. Electron. Packag., vol. 1, pp. 337–347, 2001.

[12] J. R. Culham and Y. S. Muzychka, “Optimization of plate fin heat sinks using entropy generation minimization,” IEEE Trans. Compon. Packag. Technol., vol. 24, no. 2, pp. 159–165, Jun. 2001.

[13] M. F. Holahan, “Fins, fans, and form: Volumetric limits to air-side heat sink performance,” in Proc. 9th Intersociety Conf. Thermal and Thermomechanical Phenomena in Electronic Systems, Las Vegas, NV, Jun. 2004, pp. 564–570.

[14] F. Roknaldin and R. A. Sahan, “Cooling solution for next](https://image.slidesharecdn.com/568ieeetransactionsondeviceandmaterialsreliabilityvol-221224072049-5bbc9e32/75/568-IEEE-TRANSACTIONS-ON-DEVICE-AND-MATERIALS-RELIABILITY-VOL-docx-41-2048.jpg)

![generation high-power processor boards in 1U computer servers,” Adv. Electron. Packag., vol. 2, pp. 629–634, 2003.

[15] M. Gao and Y. Cao, “Flat and U-shaped heat spreaders for high-power electronics,” Heat Transfer Eng., vol. 24, no. 3, pp. 57–65, May/Jun. 2003.

[16] Z. Z. Yu and T. Harvey, “Precision-Engineered heat pipe for cooling Pentium II in compact PCI design,” in Proc. 7th Intersociety Conf. Thermal and Thermomechanical Phenomena in Electronic Systems, Las Vegas, NV, May 2000, pp. 102–105.

[17] V. W. Antonetti, S. Oktay, and R. E. Simons, “Heat transfer in electronic packages,” in Microelectronics Packaging Handbook, R. R. Tummala and E. J. Rymaszewski, Eds. New York: Van Nostrand Reinhold, 1989, pp. 189–190.

[18] R. S. Prasher, C. Simmons, and G. Solbrekken, “Thermal contact resistance of phase change and grease type polymeric materials,” Amer. Soc. Mechanical Engineers, Manufacturing Engineering Division (MED), vol. 11, pp. 461–466, 2000.

[19] D. J. Delia, T. C. Gilgert, N. H. Graham, U. P. Hwang, P.](https://image.slidesharecdn.com/568ieeetransactionsondeviceandmaterialsreliabilityvol-221224072049-5bbc9e32/75/568-IEEE-TRANSACTIONS-ON-DEVICE-AND-MATERIALS-RELIABILITY-VOL-docx-42-2048.jpg)

![W. Ing, J. C. Kan, R. G. Kemink, G. C. Maling, R. F. Martin, K. P. Moran, J. R. Reyes, R. R. Schmidt, and R. A. Steinbrecher, “System cooling design for the water-cooled IBM enterprise system/9000 processors,” IBM J. Res. Develop., vol. 36, no. 4, pp. 791–803, Jul. 1992.

[20] D. B. Tuckerman and R. F. Pease, “High performance heat sinking for VLSI,” IEEE Electron. Device Lett., vol. EDL-2, no. 5, pp. 126–129, May 1981.

[21] R. Hahn, A. Kamp, A. Ginolas, M. Schmidt, J. Wolf, V. Glaw, M. Topper, O. Ehrmann, and H. Reichl, “High power multichip modules employing the planar embedding technique and microchannel water heat sinks,” IEEE Trans. Compon., Packag., Manufact. Technol.–Part A, vol. 20, no. 4, pp. 432–441, Dec. 1997.

[22] A. E. Bergles and A. Bar-Cohen, “Direct liquid cooling of microelectronic components,” in Advances in Thermal Modeling of Electronic Components and Systems, A. Bar-Cohen and A. D. Kraus, Eds. New York: ASME Press, 1990, vol. 2, pp. 233–342.

[23] R. E. Simons, “Direct liquid immersion cooling for high power density microelectronics,” Electron. Cooling, vol. 2, no. 2, 1996.](https://image.slidesharecdn.com/568ieeetransactionsondeviceandmaterialsreliabilityvol-221224072049-5bbc9e32/75/568-IEEE-TRANSACTIONS-ON-DEVICE-AND-MATERIALS-RELIABILITY-VOL-docx-43-2048.jpg)

![[24] F. P. Incropera, “Liquid immersion cooling of electronic

components,”

in Heat Transfer in Electronic and Microelectronic Equipment,

A. E.

Bergles, Ed. New York: Hemisphere, 1990.

[25] R. D. Danielson, N. Krajewski, and J. Brost, “Cooling a

superfast com-

puter,” Electron. Packag. Produc., pp. 44–45, Jul. 1986.

[26] L. Jiji and Z. Dagan, “Experimental investigation of single

phase multi

jet impingement cooling of an array of microelectronic heat

sources,” in

Modern Developments in Cooling Technology for Electronic

Equipment,

W. Aung, Ed. New York: Hemisphere, 1988, pp. 265–283.

[27] P. F. Sullivan, S. Ramadhyani, and F. P. Incropera,

“Extended surfaces to

enhance impingement cooling with single circular jets,” Adv.

Electron.

Packag., vol. ASME EEP-1, pp. 207–215, Apr. 1992.

[28] G. M. Chrysler, R. C. Chu, and R. E. Simons, “Jet

impingement boiling

of a dielectric coolant in narrow gaps,” IEEE Trans. CPMT-A,

vol. 18,

no. 3, pp. 527–533, 1995.

[29] A. E. Bergles and A. Bar-Cohen, “Immersion cooling of

digital computers,” in Cooling of Electronic Systems, S. Kakac, H. Yuncu,

and K.

Hijikata, Eds. Boston, MA: Kluwer, 1994, pp. 539–621.](https://image.slidesharecdn.com/568ieeetransactionsondeviceandmaterialsreliabilityvol-221224072049-5bbc9e32/75/568-IEEE-TRANSACTIONS-ON-DEVICE-AND-MATERIALS-RELIABILITY-VOL-docx-44-2048.jpg)

![[30] I. Mudawar and D. E. Maddox, “Critical heat flux in

subcooled flow

boiling of fluorocarbon liquid on a simulated chip in a vertical

rectangular channel,” Int. J. Heat Mass Transfer, vol. 32, 1989.

[31] R. C. Chu and R. E. Simons, “Review of boiling heat

transfer for cooling

of high-power density integrated circuit chips,” in Process,

Enhanced,

and Multiphase Heat Transfer, A. E. Bergles, R. M. Manglik,

and A. D.

Kraus, Eds. New York: Begell House, 1996.

[32] R. E. Simons, “The evolution of IBM high performance

cooling technology,” IEEE Trans. CPMT-Part A, vol. 18, no. 4, pp. 805–811, 1995.

[33] S. C. Yao, S. Deb, and N. Hammouda, “Impacting spray

boiling for

thermal control of electronic systems,” Heat Transfer Electron.,

vol.

ASME HTD-111, pp. 129–134, 1989.

[34] G. Pautsch and A. Bar-Cohen, “Thermal management of

multichip modules with evaporative spray cooling,” Adv. Electron. Packag.,

vol. ASME

EEP-26-2, pp. 1453–1461, 1999.

[35] G. Pautsch, “An overview on the system packaging of the

Cray SV2

supercomputer,” presented at the IPACK 2001 Conf., Kauai, HI,

2001.](https://image.slidesharecdn.com/568ieeetransactionsondeviceandmaterialsreliabilityvol-221224072049-5bbc9e32/75/568-IEEE-TRANSACTIONS-ON-DEVICE-AND-MATERIALS-RELIABILITY-VOL-docx-45-2048.jpg)

![[36] T. Cader and D. Tilton, “Implementing spray cooling

thermal management in high heat flux applications,” in Proc. 2004 Intersociety

Conf.

Thermal Performance, 2004, pp. 699–701.

[37] G. Lin and R. Ponnappan, “Heat transfer characteristics of

spray cooling

in a closed loop,” Int. J. Heat Mass Transfer, vol. 46, pp. 3737–3746, 2003.

[38] C. Hilbert, S. Sommerfeldt, O. Gupta, and D. J. Herrell,

“High performance air cooled heat sinks for integrated circuits,” IEEE

Trans. CHMT,

vol. 13, no. 4, pp. 1022–1031, 1990.

[39] R. C. Chu, R. E. Simons, and K. P. Moran, “System

cooling design considerations for large mainframe computers,” in Cooling

Techniques for

Computers, W. Aung, Ed. New York: Hemisphere, 1991.

[40] V. W. Antonetti, R. C. Chu, and J. H. Seely, “Thermal

design for IBM

System/360 Model 91,” presented at the 8th Int. Electronic

Circuit Packaging Symp., San Francisco, CA, 1967.

[41] R. C. Chu, M. J. Ellsworth, E. Furey, R. R. Schmidt, and R.

E. Simons,

“Method and apparatus for combined air and liquid cooling of

stacked

electronic components,” U.S. Patent 6,775,137 B2, Aug. 10,](https://image.slidesharecdn.com/568ieeetransactionsondeviceandmaterialsreliabilityvol-221224072049-5bbc9e32/75/568-IEEE-TRANSACTIONS-ON-DEVICE-AND-MATERIALS-RELIABILITY-VOL-docx-46-2048.jpg)

![2004.

[42] H. Bray, “Computer Makers Sweat Over Cooling,” The

Boston Globe,

2004.

[43] Y. Taur and J. Nowak, “CMOS devices below 0.1 µm: How

high will

performance go?,” in Int. Electron Devices Meeting Tech. Dig.,

1997,

pp. 215–218.

[44] K. Rose, R. Mangaser, C. Mark, and E. Sayre,

“Cryogenically cooled

CMOS,” Critical Rev. Solid State Materials Sci., vol. 4, no. 1,

pp. 63–99,

1999.

[45] W. F. Clark, E. Badih, and R. G. Pires, “Low temperature

CMOS—A

brief review,” IEEE Trans. Compon., Hybrids, Manufact.

Technol., vol.

15, no. 3, pp. 397–404, Jun. 1992.

[46] R. F. Barron, Cryogenic Systems, 2nd ed. New York:

Oxford Univ.

Press, 1985.

[47] J. S. Kolodzey, “Cray-1 computer technology,” IEEE

Trans. Compon.,

Hybrids, Manufact. Technol., vol. CHMT-4, no. 2, pp. 181–186,

Jun.

1981.

[48] D. M. Carlson, D. C. Sullivan, R. E. Bach, and D. R.

Resnick, “The](https://image.slidesharecdn.com/568ieeetransactionsondeviceandmaterialsreliabilityvol-221224072049-5bbc9e32/75/568-IEEE-TRANSACTIONS-ON-DEVICE-AND-MATERIALS-RELIABILITY-VOL-docx-47-2048.jpg)

![ETA-10 liquid-nitrogen-cooled supercomputer system,” IEEE

Trans.

Electron. Devices, vol. 36, no. 8, pp. 1404–1413, Aug. 1989.

[49] R. E. Schwall and W. S. Harris, “Packaging and cooling of

low temperature electronics,” in Advances in Cryogenic Engineering. New

York:

Plenum Press, 1991, pp. 587–596.

[50] R. R. Schmidt, “Low temperature electronics cooling,”

Electronics

Cooling, vol. 6, no. 3, Sep. 2000.

[51] R. R. Schmidt and B. Notohardjono, “High-end server low

temperature

cooling,” IBM J. Res. Develop., vol. 46, no. 2, pp. 739–751,

2002.

[52] A. Fujisaki, M. Suzuki, and H. Yamamoto, “Packaging

technology for

high performance CMOS server Fujitsu GS8900,” IEEE Trans.

Adv.

Packag., vol. 24, pp. 464–469, Nov. 2001.

[53] Heat Density Trends in Data Processing, Computer

Systems and

Telecommunication Equipment. Santa Fe, NM: Uptime Institute,

2000.

[54] R. Schmidt, “Effect of data center characteristics on data

processing

equipment inlet temperatures,” in Proc. IPACK ’01, Advances

in

Electronic Packaging 2001, vol. 2, Kauai, HI, Jul. 2001, pp.

1097–1106.](https://image.slidesharecdn.com/568ieeetransactionsondeviceandmaterialsreliabilityvol-221224072049-5bbc9e32/75/568-IEEE-TRANSACTIONS-ON-DEVICE-AND-MATERIALS-RELIABILITY-VOL-docx-48-2048.jpg)

![[55] R. Schmidt and E. Cruz, “Raised floor computer data

center: Effect on

rack inlet temperatures of chilled air exiting both the hot and

cold aisles,”

in Proc. ITHERM, San Diego, CA, Jun. 2002, pp. 580–594.

[56] R. Schmidt and E. Cruz, “Raised floor computer data center: Effect on rack inlet

temperatures when rack flow rates are reduced,” presented at the Int.

Electronic

Packaging Conf. and Exhibition, Maui, HI, Jul. 2003.

[57] R. Schmidt and E. Cruz, “Raised floor computer data center: Effect on rack inlet

temperatures when adjacent racks are removed,” presented at the Int.

Electronic

Packaging Conf. and Exhibition, Maui, HI, Jul. 2003.

[58] R. Schmidt and E. Cruz, “Raised floor computer data center: Effect on rack inlet

temperatures when high powered racks are situated amongst lower

powered

racks,” presented at the ASME IMECE Conf., New Orleans, LA,

Nov.

2002.

[59] R. Schmidt and E. Cruz, “Clusters of high powered racks within a raised floor

computer

data center: Effect of perforated tile flow distribution on rack

inlet air](https://image.slidesharecdn.com/568ieeetransactionsondeviceandmaterialsreliabilityvol-221224072049-5bbc9e32/75/568-IEEE-TRANSACTIONS-ON-DEVICE-AND-MATERIALS-RELIABILITY-VOL-docx-49-2048.jpg)

![temperatures,” presented at the ASME IMECE Conf.,

Washington, DC,

Nov. 2003.

[60] C. Patel, C. Bash, C. Belady, L. Stahl, and D. Sullivan,

“Computational

fluid dynamics modeling of high compute density data centers

to assure

system inlet air specifications,” in Proc. IPACK ’01, Advances

in Electronic Packaging 2001, vol. 2, Kauai, HI, Jul. 2001, pp. 821–829.

[61] C. Patel, R. Sharma, C. Bash, and A. Beitelmal, “Thermal

considerations

in cooling large scale compute density data centers,” in Proc.

ITHERM,

San Diego, CA, Jun. 2002, pp. 767–776.

[62] C. Patel, C. Bash, R. Sharma, M. Beitelmal, and R.

Friedrich, “Smart

cooling of data centers,” in Proc. IPACK ’03, Advances in

Electronic

Packaging 2003, Maui, HI, Jul. 2003, pp. 129–137.

[63] C. Bash, C. Patel, and R. Sharma, “Efficient thermal

management of

data centers—Immediate and long term research needs,”

HVAC&R Res.

J., vol. 9, no. 2, pp. 137–152, Apr. 2003.

[64] H. Obler, “Energy efficient computer cooling,”

Heating/Piping/Air Conditioning, vol. 54, no. 1, pp. 107–111, Jan. 1982.

[65] J. M. Ayres, “Air conditioning needs of computers pose](https://image.slidesharecdn.com/568ieeetransactionsondeviceandmaterialsreliabilityvol-221224072049-5bbc9e32/75/568-IEEE-TRANSACTIONS-ON-DEVICE-AND-MATERIALS-RELIABILITY-VOL-docx-50-2048.jpg)

![problems for

new office building,” Heating, Piping and Air Conditioning,

vol. 34, no.

8, pp. 107–112, Aug. 1962.

[66] H. F. Levy, “Computer room air conditioning: How to

prevent a catastrophe,” Building Syst. Des., vol. 69, no. 11, pp. 18–22, Nov.

1972.

[67] R. W. Goes, “Design electronic data processing

installations for reliability,” Heating, Piping Air Cond., vol. 31, no. 9, pp. 118–120,

Sept.

1959.

[68] W. A. Di Giacomo, “Computer room environmental

systems,” Heating,

Piping Air Cond., vol. 45, no. 11, pp. 76–80, Oct. 1973.

[69] F. J. Grande, “Application of a new concept in computer

room air conditioning,” Western Electric Eng., vol. 4, no. 1, pp. 32–34, Jan.

1960.

[70] F. Green, “Computer room air distribution,” ASHRAE J.,

vol. 9, no. 2,

pp. 63–64, Feb. 1967.

[71] M. N. Birken, “Cooling computers,” Heating, Piping Air

Cond., vol. 39,

no. 6, pp. 125–128, Jun. 1967.

[72] H. F. Levy, “Air distribution through computer room

floors,” Building

Syst. Des., vol. 70, no. 7, pp. 16–16, Oct./Nov. 1973.](https://image.slidesharecdn.com/568ieeetransactionsondeviceandmaterialsreliabilityvol-221224072049-5bbc9e32/75/568-IEEE-TRANSACTIONS-ON-DEVICE-AND-MATERIALS-RELIABILITY-VOL-docx-51-2048.jpg)

![[73] Thermal Guidelines for Data Processing Environments.

Atlanta, GA:

ASHRAE, 2004.

[74] R. Schmidt, “Thermal profile of a high density data center-

methodology