The document discusses pipeline architecture and describes:

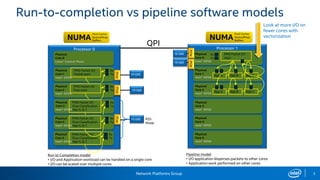

1. The difference between run-to-completion and pipeline software models, where pipeline models disperse packets to other cores for processing.

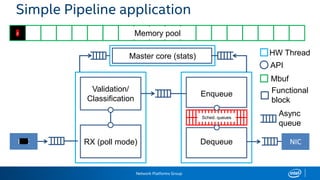

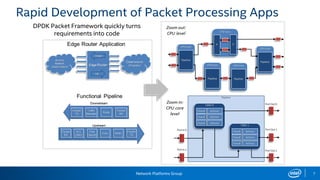

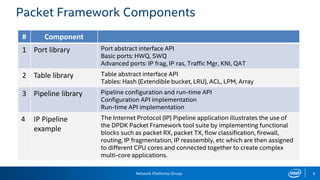

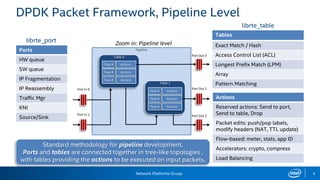

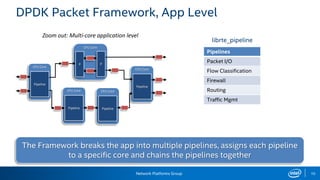

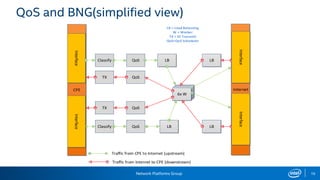

2. How the Intel DPDK Packet Framework can be used to rapidly develop packet processing applications using standard pipeline blocks like ports, tables, and a pipeline configuration API.

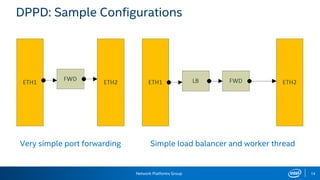

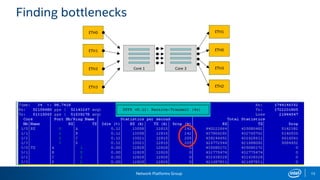

3. How the DPDK Packet Demonstrators (DPPD) provide sample applications and configurations to analyze performance and find bottlenecks in multi-core packet processing applications.

![Network Platforms Group

Legal Disclaimer

General Disclaimer:

© Copyright 2015 Intel Corporation. All rights reserved. Intel, the Intel logo, Intel Inside, the Intel Inside logo, Intel.

Experience What’s Inside are trademarks of Intel. Corporation in the U.S. and/or other countries. *Other names and

brands may be claimed as the property of others.

FTC Disclaimer:

Intel technologies’ features and benefits depend on system configuration and may require enabled hardware, software

or service activation. Performance varies depending on system configuration. No computer system can be absolutely

secure. Check with your system manufacturer or retailer or learn more at [intel.com].

Software and workloads used in performance tests may have been optimized for performance only on Intel

microprocessors. Performance tests, such as SYSmark and MobileMark, are measured using specific computer systems,

components, software, operations and functions. Any change to any of those factors may cause the results to vary. You

should consult other information and performance tests to assist you in fully evaluating your contemplated purchases,

including the performance of that product when combined with other products. For more complete information visit

http://www.intel.com/performance.](https://image.slidesharecdn.com/5pipelinearchrationale-150616084002-lva1-app6891/85/5-pipeline-arch_rationale-2-320.jpg)