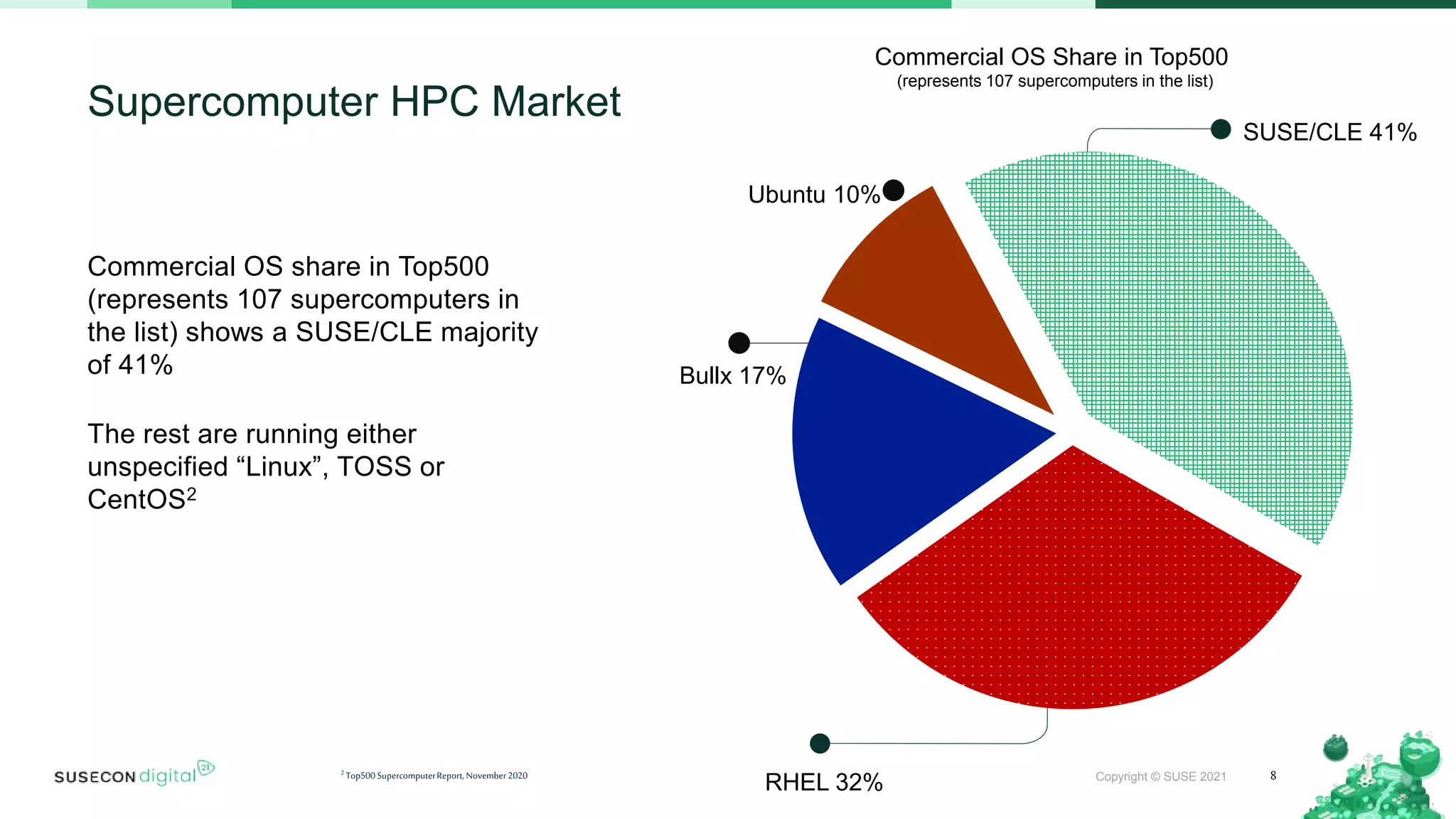

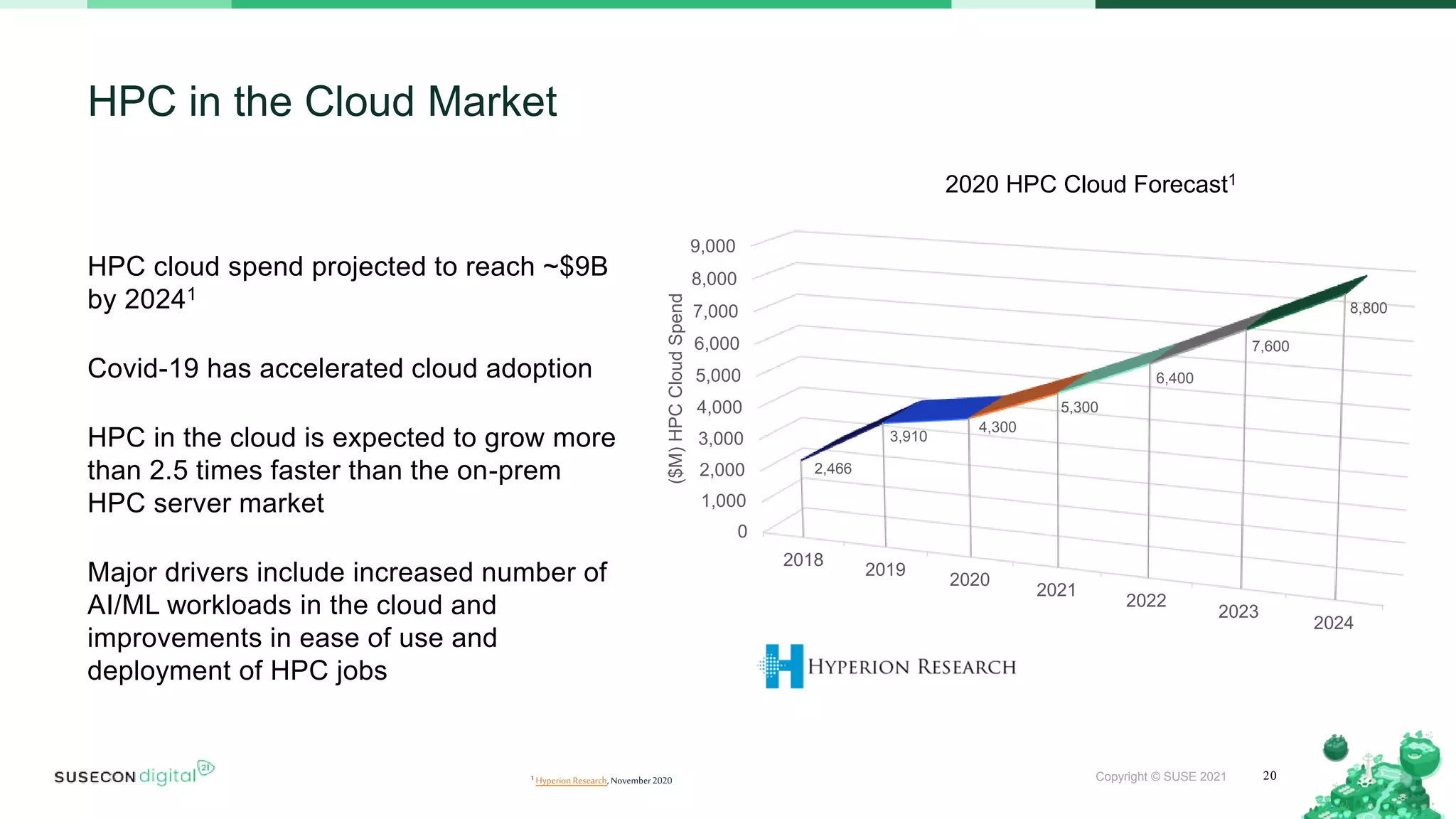

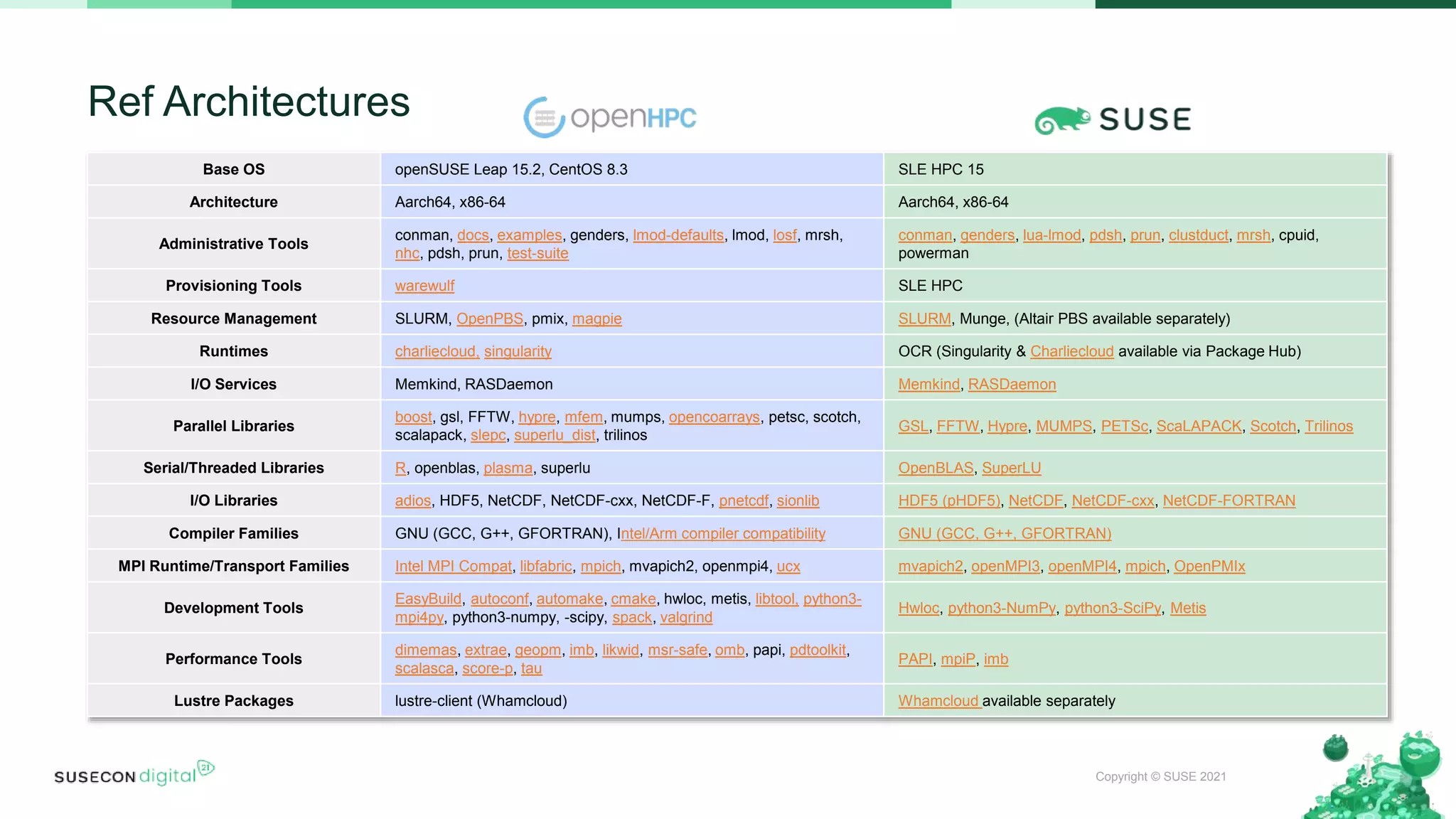

The document discusses five paths to high-performance computing (HPC): the Edge Path, Containers Path, Cloud Path, Enterprise Path, and Supercomputing Path. It gives examples of organizations using HPC in industries such as manufacturing, life sciences, energy, and automotive. It also summarizes SUSE Linux Enterprise High Performance Computing, which bundles popular HPC tools and libraries into a single solution.