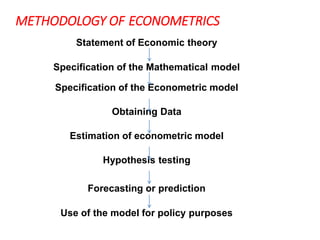

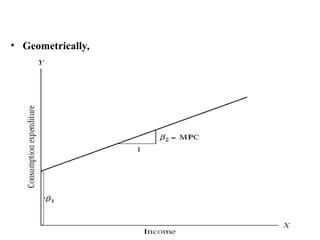

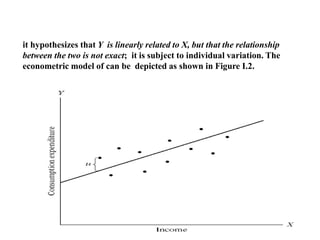

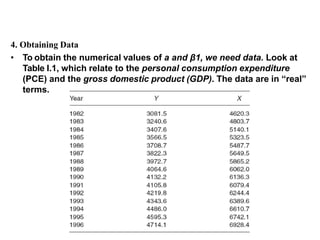

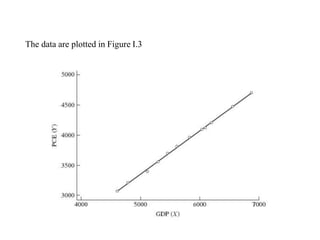

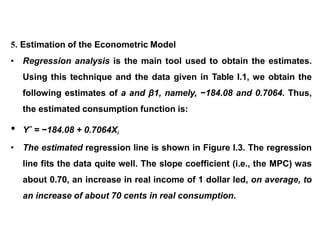

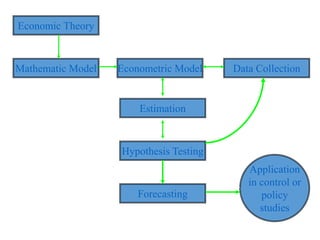

The document introduces the methodology of econometrics. It outlines the typical steps: 1) stating an economic theory or hypothesis, 2) specifying a mathematical model of the theory, 3) specifying an econometric model, 4) collecting data, 5) estimating the model's parameters, 6) testing hypotheses, 7) forecasting, and 8) using the model for policy purposes. It then applies these steps to Keynes's theory of consumption, which relates consumption to income.
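
To make the estimation and forecasting steps concrete, here is a minimal sketch in Python. It assumes the consumption function takes the linear form consumption = b1 + b2 * income + u, where b2 is the marginal propensity to consume and u is a random error term. The data are synthetic and generated only for illustration (they are not from the document), and the function name `fit_consumption_function`, the "true" values 200 and 0.7, and the income level 6000 are all hypothetical.

```python
import numpy as np


def fit_consumption_function(income, consumption):
    """Ordinary least squares fit of consumption = b1 + b2 * income + u.

    Returns the estimated intercept b1 and slope b2.
    """
    # Design matrix: a column of ones for the intercept, then income.
    X = np.column_stack([np.ones_like(income, dtype=float), income])
    coef, *_ = np.linalg.lstsq(X, consumption, rcond=None)
    return float(coef[0]), float(coef[1])


# Step 4 (data): synthetic observations, NOT real data.
# The assumed "true" relationship is consumption = 200 + 0.7 * income + noise.
rng = np.random.default_rng(0)
income = np.linspace(1000.0, 5000.0, 50)
consumption = 200.0 + 0.7 * income + rng.normal(0.0, 50.0, size=income.size)

# Step 5 (estimation): recover the intercept and slope from the data.
b1, b2 = fit_consumption_function(income, consumption)

# Step 6 (informal check only, not a formal test): Keynes's theory implies
# a marginal propensity to consume strictly between 0 and 1.
mpc_is_plausible = 0.0 < b2 < 1.0

# Step 7 (forecasting): predicted consumption at a hypothetical income level.
forecast = b1 + b2 * 6000.0

print(f"intercept = {b1:.1f}, slope (MPC) = {b2:.3f}")
print(f"MPC between 0 and 1: {mpc_is_plausible}")
print(f"forecast consumption at income 6000 = {forecast:.1f}")
```

A formal hypothesis test (step 6) would also need the standard error of the slope estimate, which this sketch leaves out to stay short.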