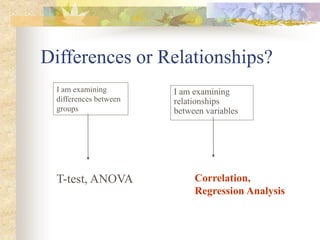

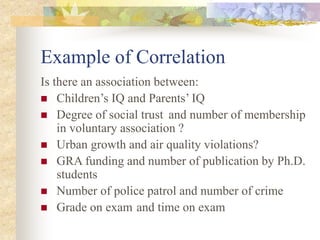

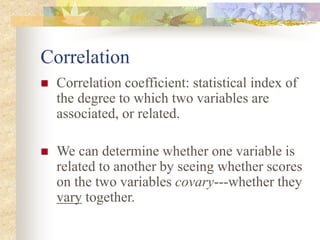

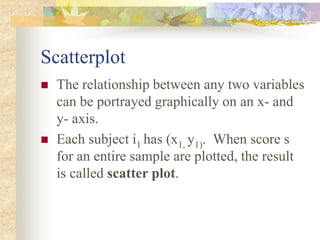

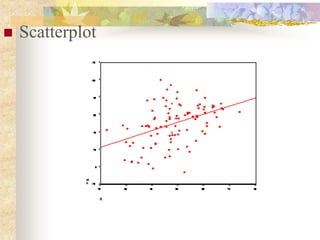

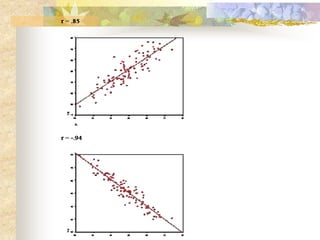

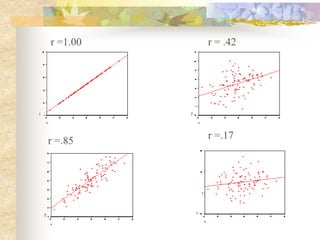

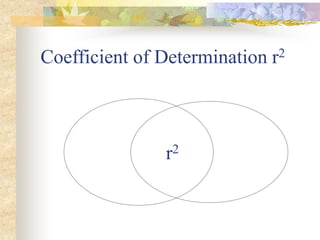

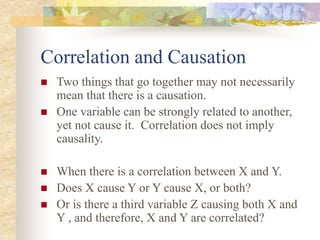

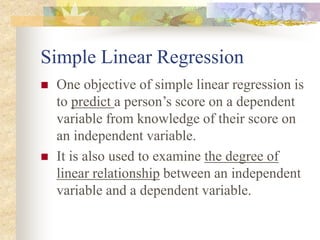

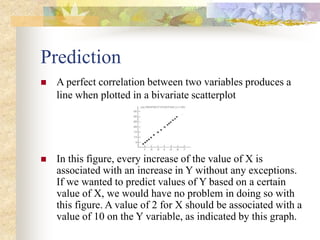

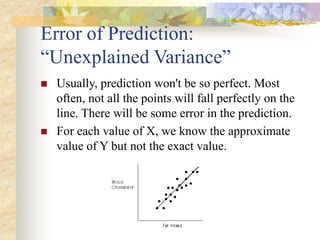

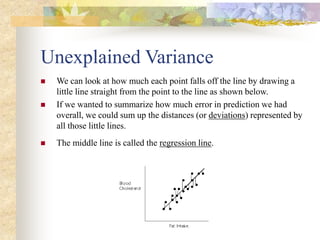

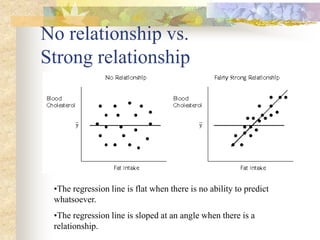

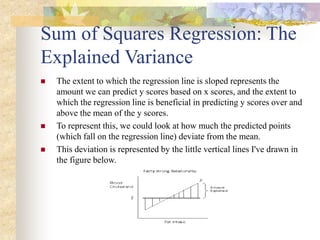

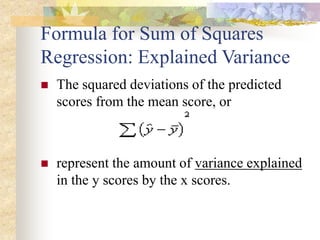

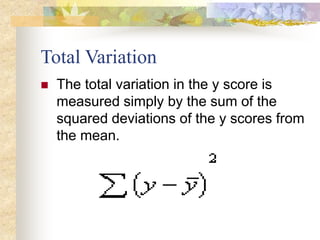

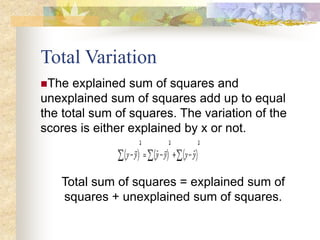

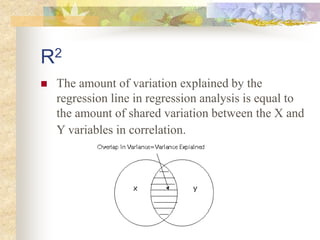

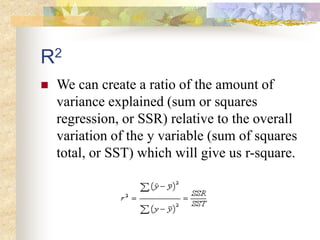

This document provides an overview of correlation and linear regression analysis. It defines correlation as a statistical measure of the strength and direction of the relationship between two variables. Pearson's correlation coefficient (r) ranges from -1 to 1, with values farther from 0 indicating a stronger linear relationship. Positive values indicate an increasing relationship, while negative values indicate a decreasing one. The coefficient of determination (r²) represents the proportion of variance shared by the two variables. Correlation indicates linear association but does not imply causation. Multiple regression predicts a continuous dependent variable from two or more independent variables.
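As a rough illustration of the quantities summarized above, the following minimal sketch computes Pearson's r, squares it to get the coefficient of determination, and fits a multiple regression by ordinary least squares. The function names (`pearson_r`, `solve`, `fit_multiple_regression`) and all data values are hypothetical and made up for this example; they do not come from the slides. Solving the normal equations directly is only one way to fit the model, chosen here to keep the sketch free of third-party libraries.

```python
import math


def pearson_r(x, y):
    """Pearson's r: co-variation of x and y scaled by the spread of each."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def solve(A, b):
    """Solve the square system A * coef = b by Gaussian elimination."""
    n = len(b)
    M = [row[:] + [rhs] for row, rhs in zip(A, b)]  # augmented matrix
    for col in range(n):
        # Partial pivoting: move the largest remaining entry onto the diagonal.
        pivot = max(range(col, n), key=lambda i: abs(M[i][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for row in range(col + 1, n):
            factor = M[row][col] / M[col][col]
            for c in range(col, n + 1):
                M[row][c] -= factor * M[col][c]
    coef = [0.0] * n
    for i in reversed(range(n)):  # back substitution
        known = sum(M[i][j] * coef[j] for j in range(i + 1, n))
        coef[i] = (M[i][n] - known) / M[i][i]
    return coef


def fit_multiple_regression(rows, y):
    """Least-squares fit of y = b0 + b1*x1 + b2*x2 + ... (normal equations).

    rows holds one tuple of predictor values per observation.
    Returns [b0, b1, b2, ...] with the intercept first.
    """
    X = [[1.0] + list(r) for r in rows]  # leading 1.0 models the intercept
    k = len(X[0])
    XtX = [[sum(r[i] * r[j] for r in X) for j in range(k)] for i in range(k)]
    Xty = [sum(r[i] * t for r, t in zip(X, y)) for i in range(k)]
    return solve(XtX, Xty)


# Made-up data: hours studied vs. exam score.
hours = [1, 2, 3, 4, 5]
score = [52, 55, 61, 64, 70]
r = pearson_r(hours, score)
r_squared = r ** 2  # proportion of variance the two variables share
print(f"r = {r:.3f}, r^2 = {r_squared:.3f}")

# Made-up data: two predictors per observation, one continuous outcome.
predictors = [(1, 2), (2, 1), (3, 4), (4, 3), (5, 6)]
outcome = [9, 8, 19, 18, 29]
b0, b1, b2 = fit_multiple_regression(predictors, outcome)
print(f"y = {b0:.2f} + {b1:.2f}*x1 + {b2:.2f}*x2")
```

The sign of `r` gives the direction of the relationship and its distance from 0 gives the strength. A high `r` here says nothing about whether studying causes higher scores.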