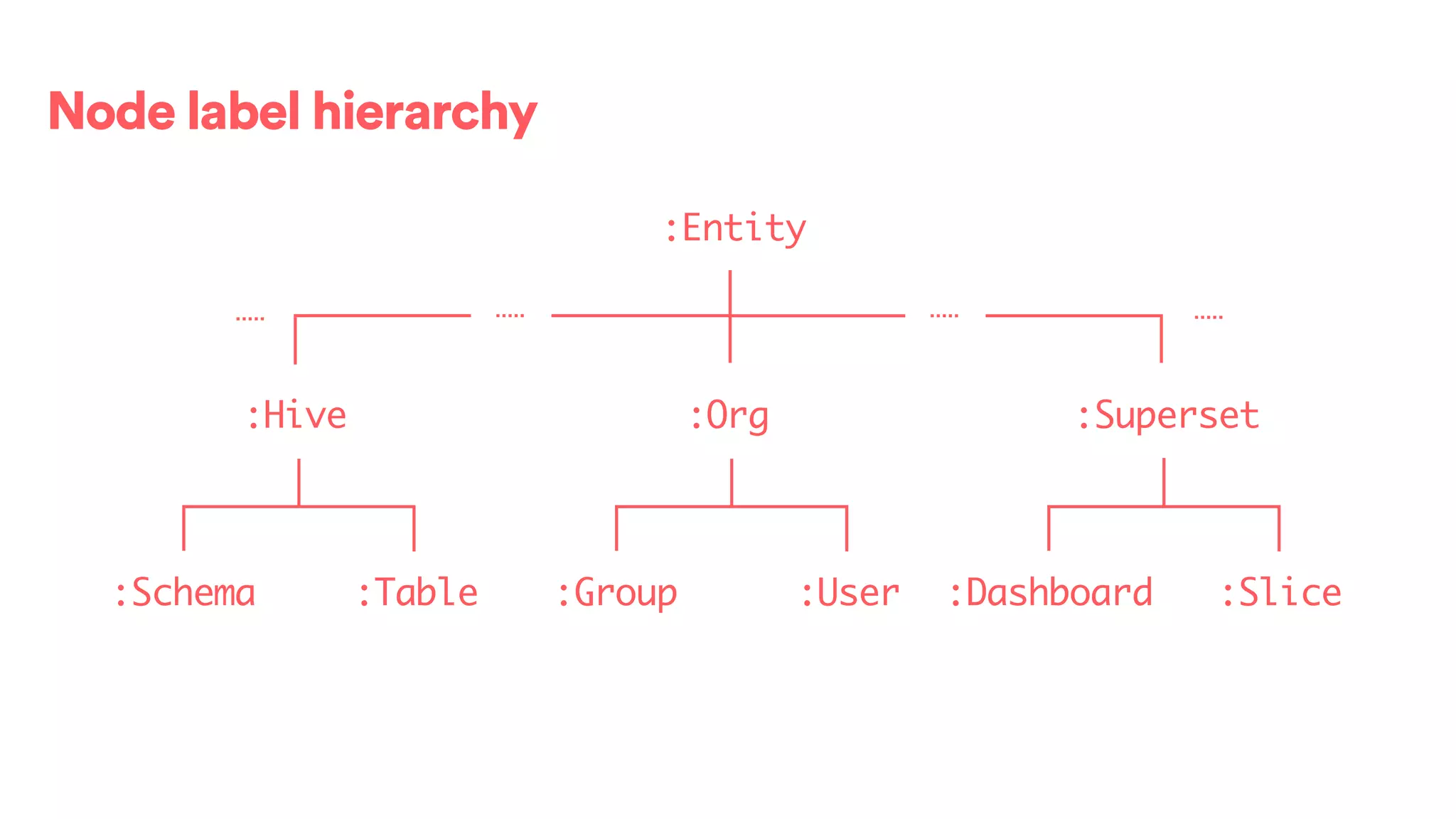

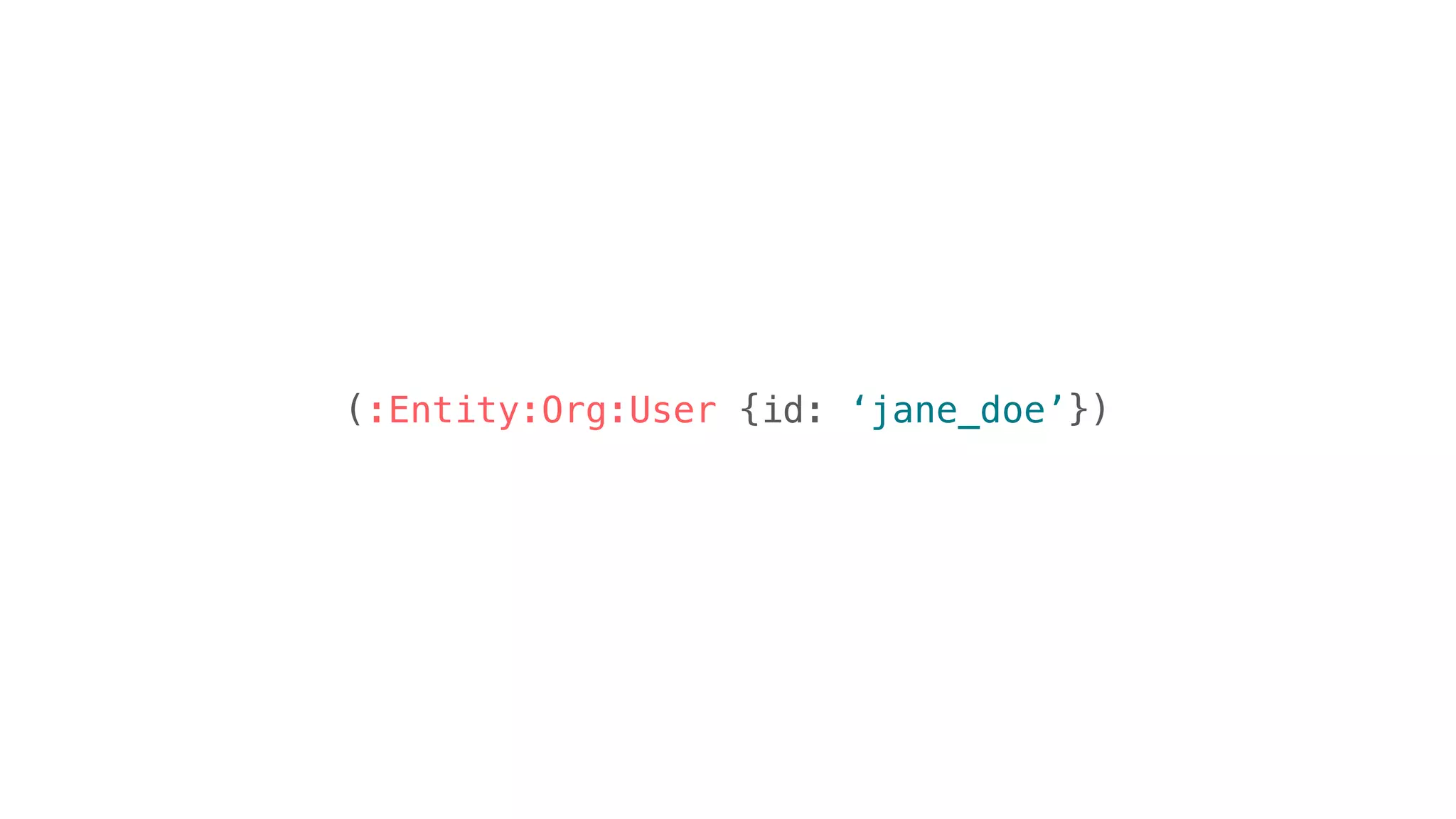

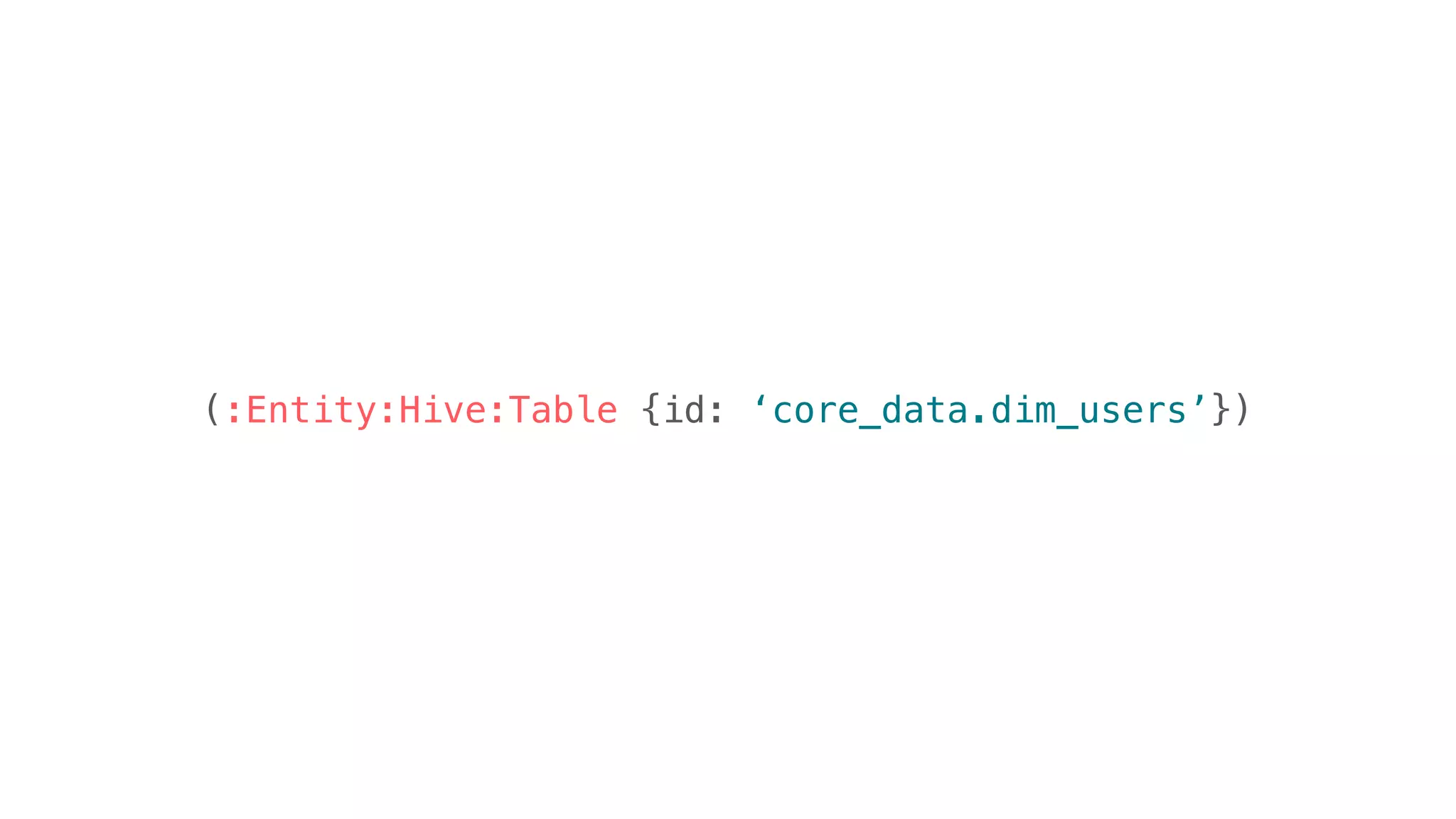

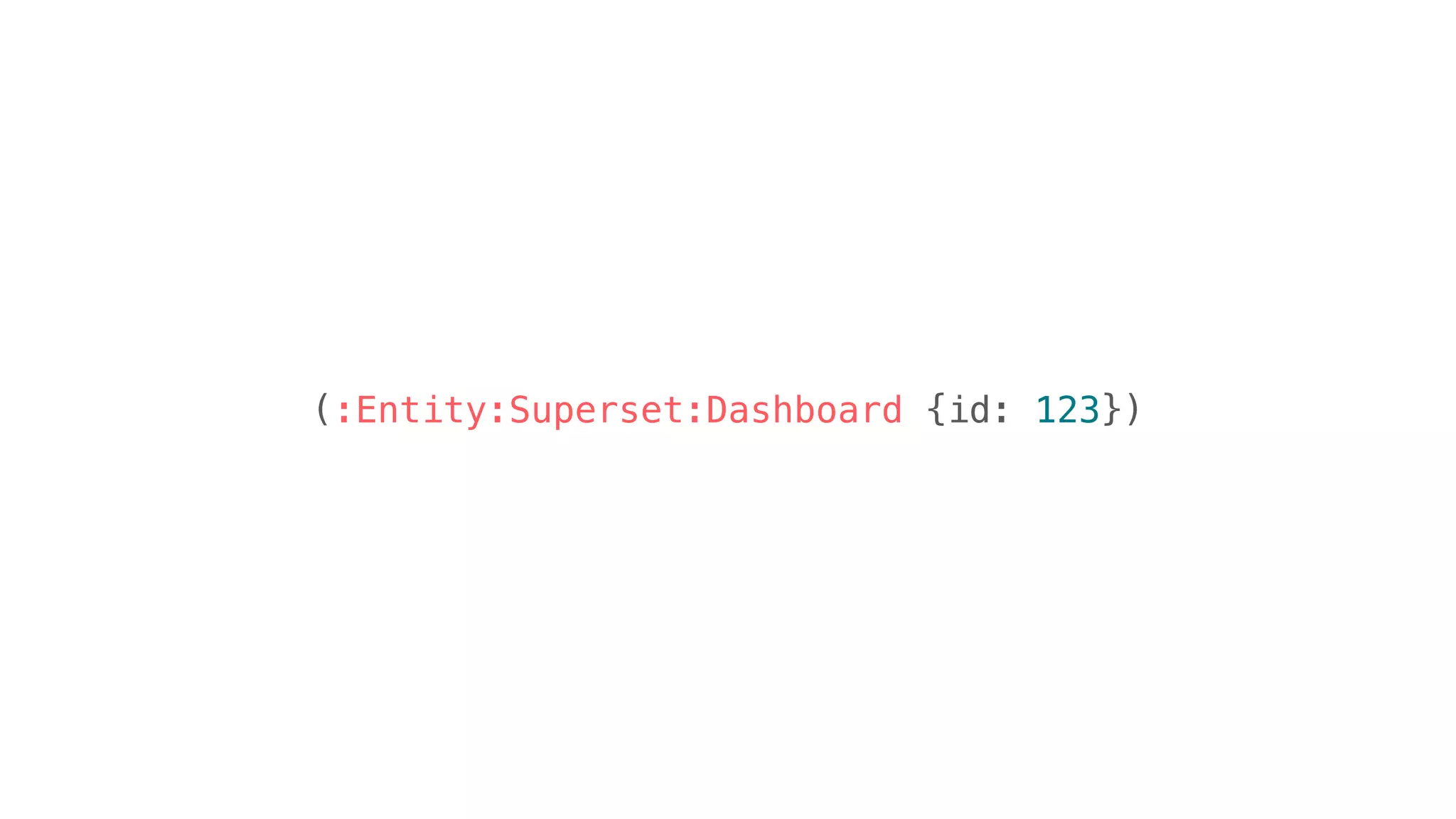

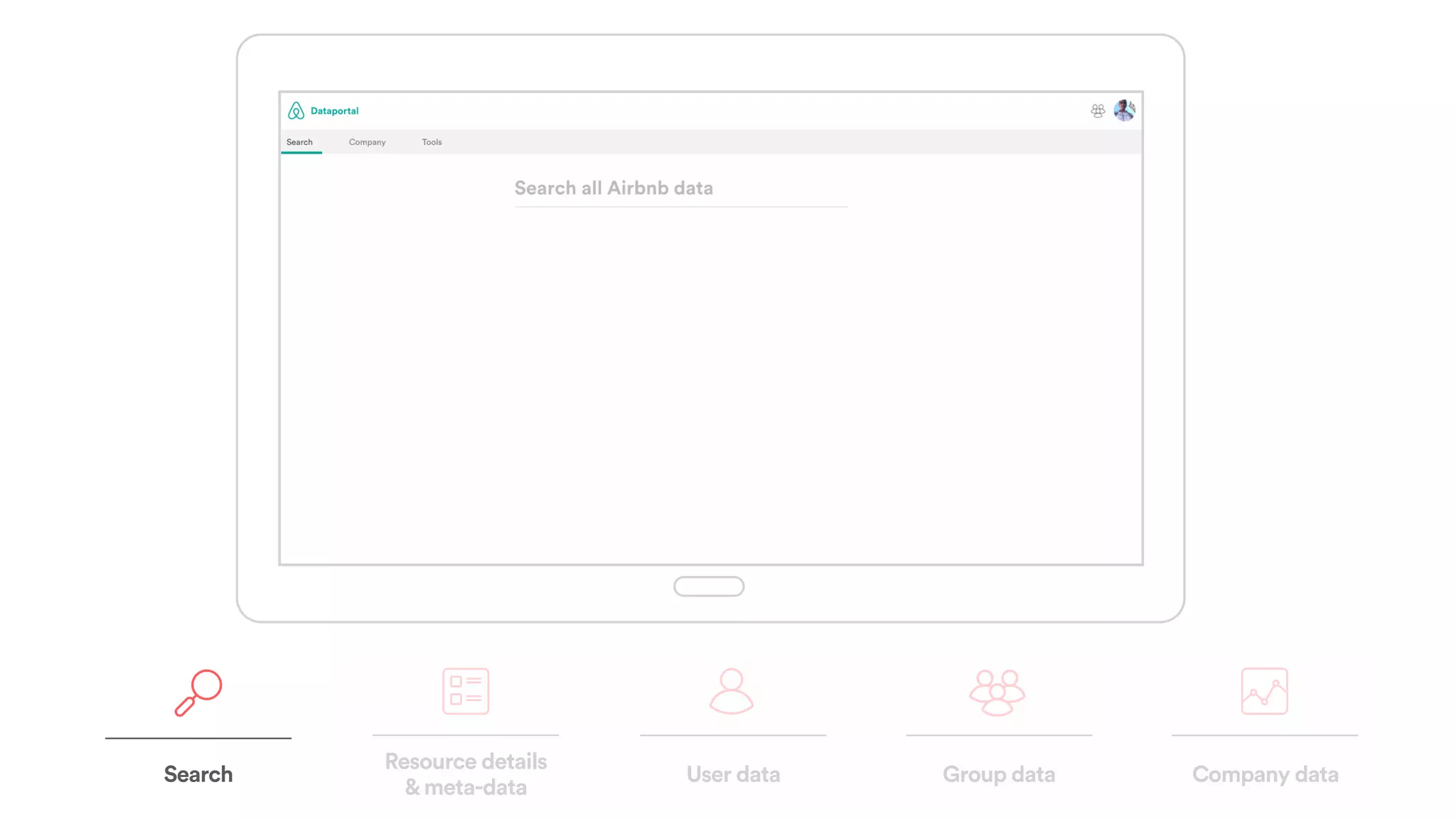

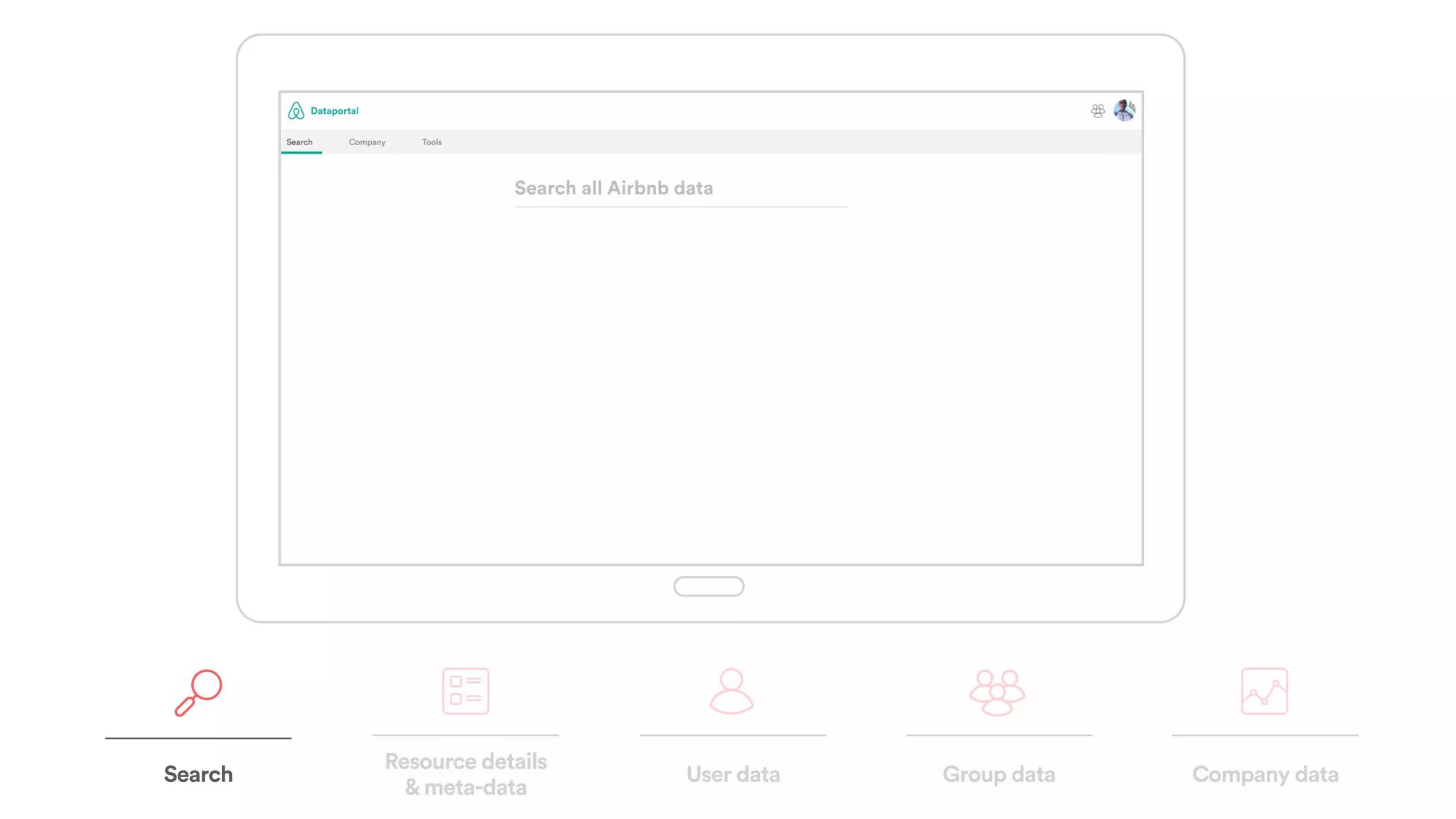

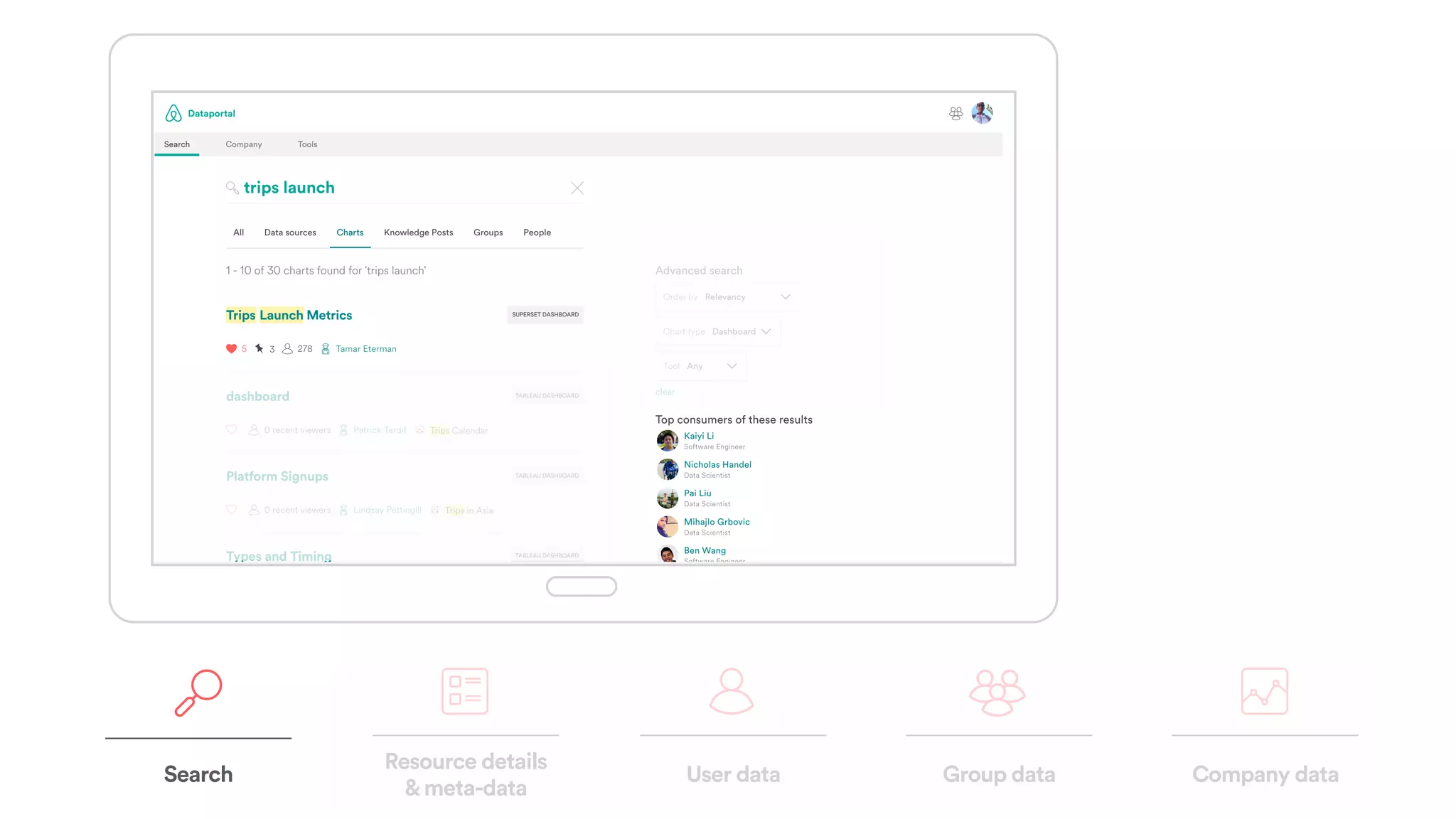

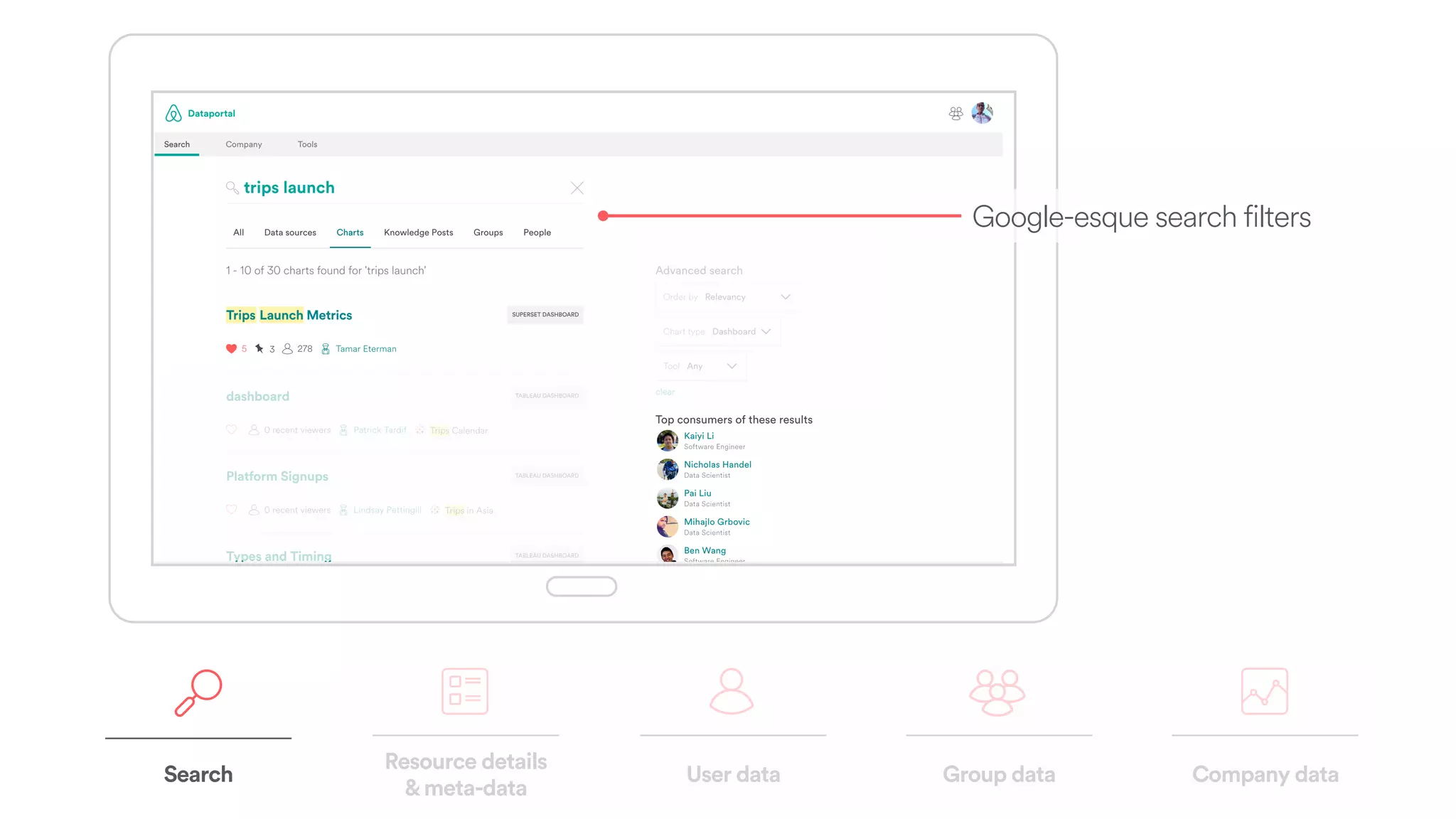

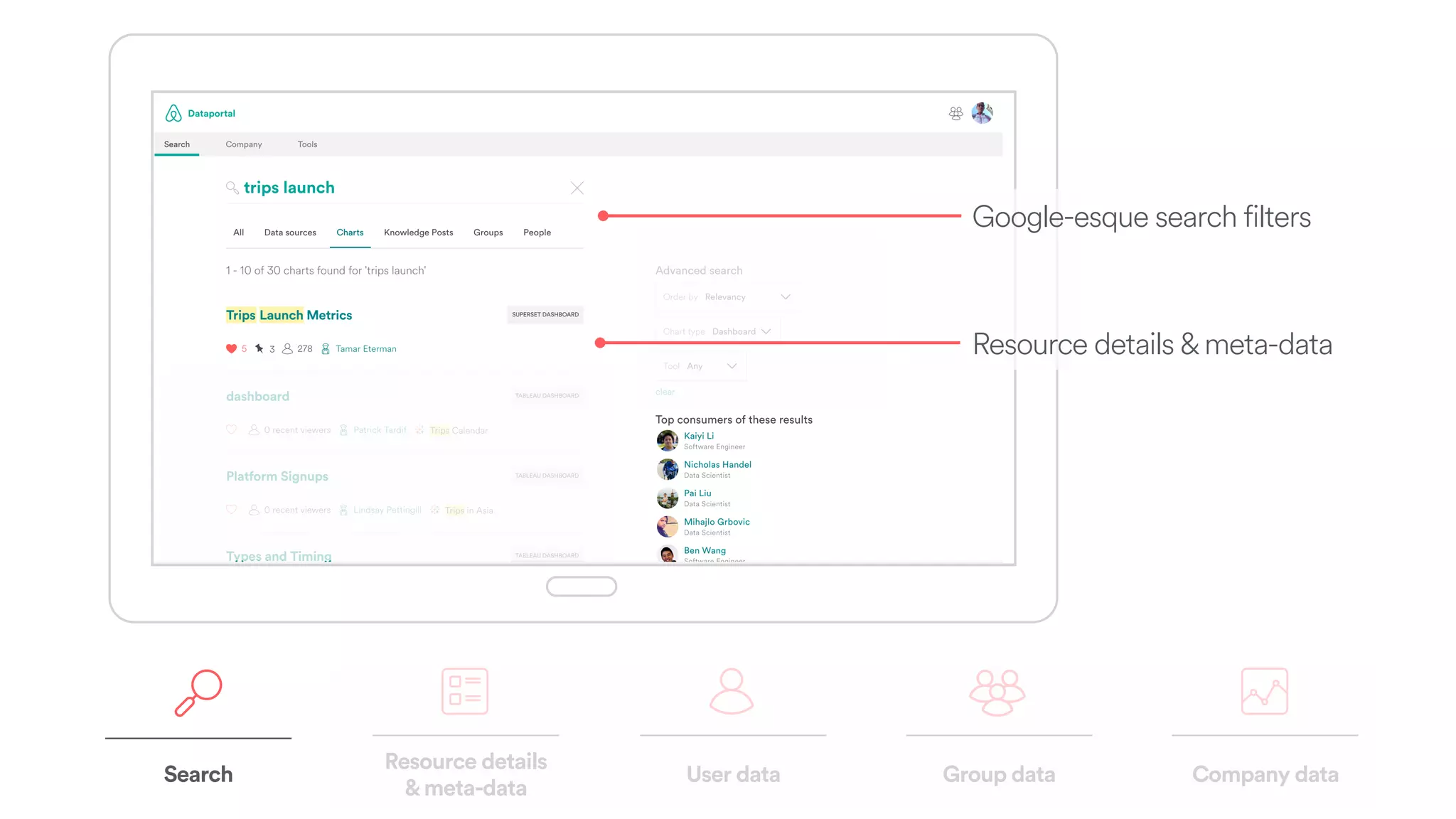

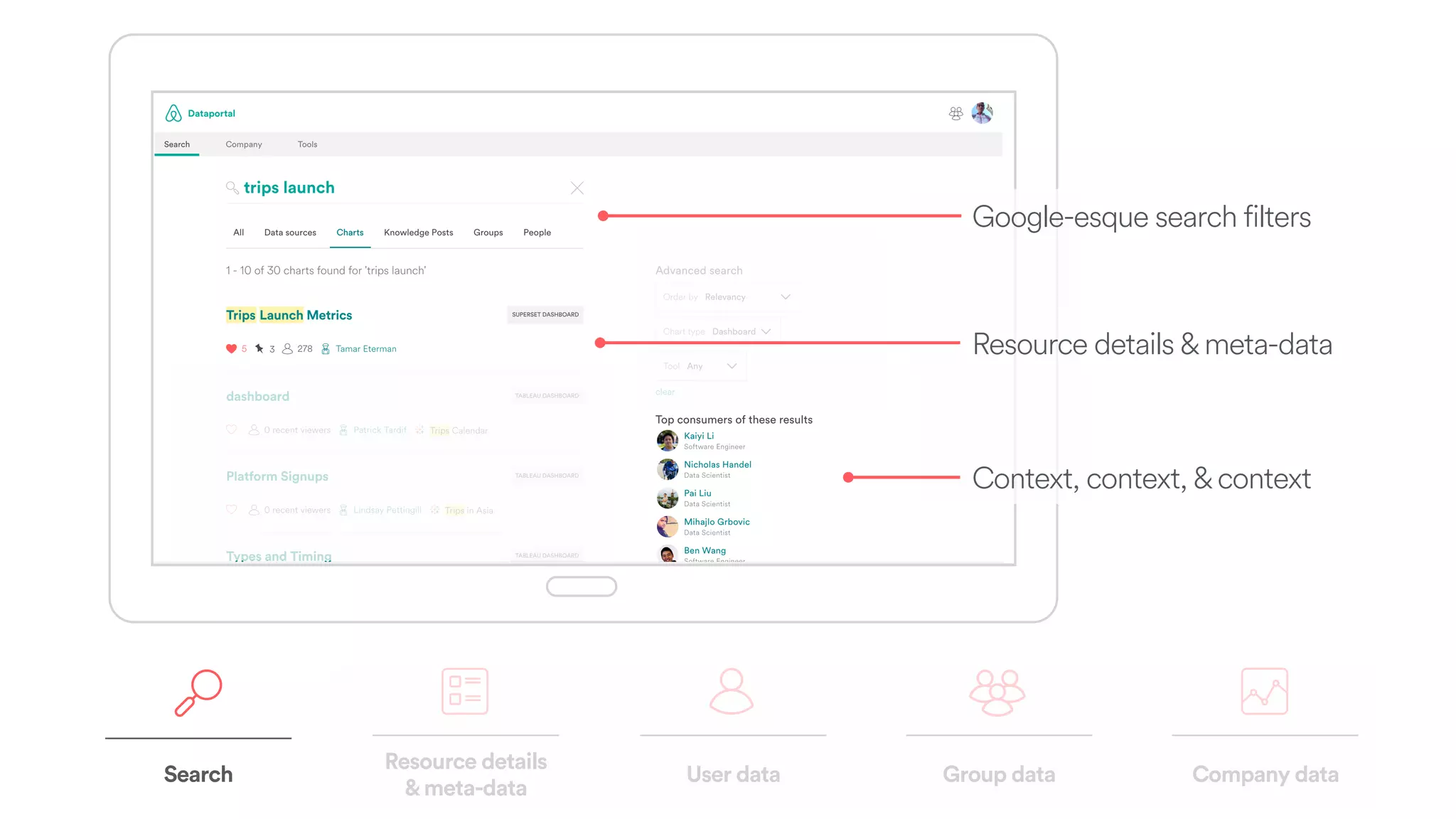

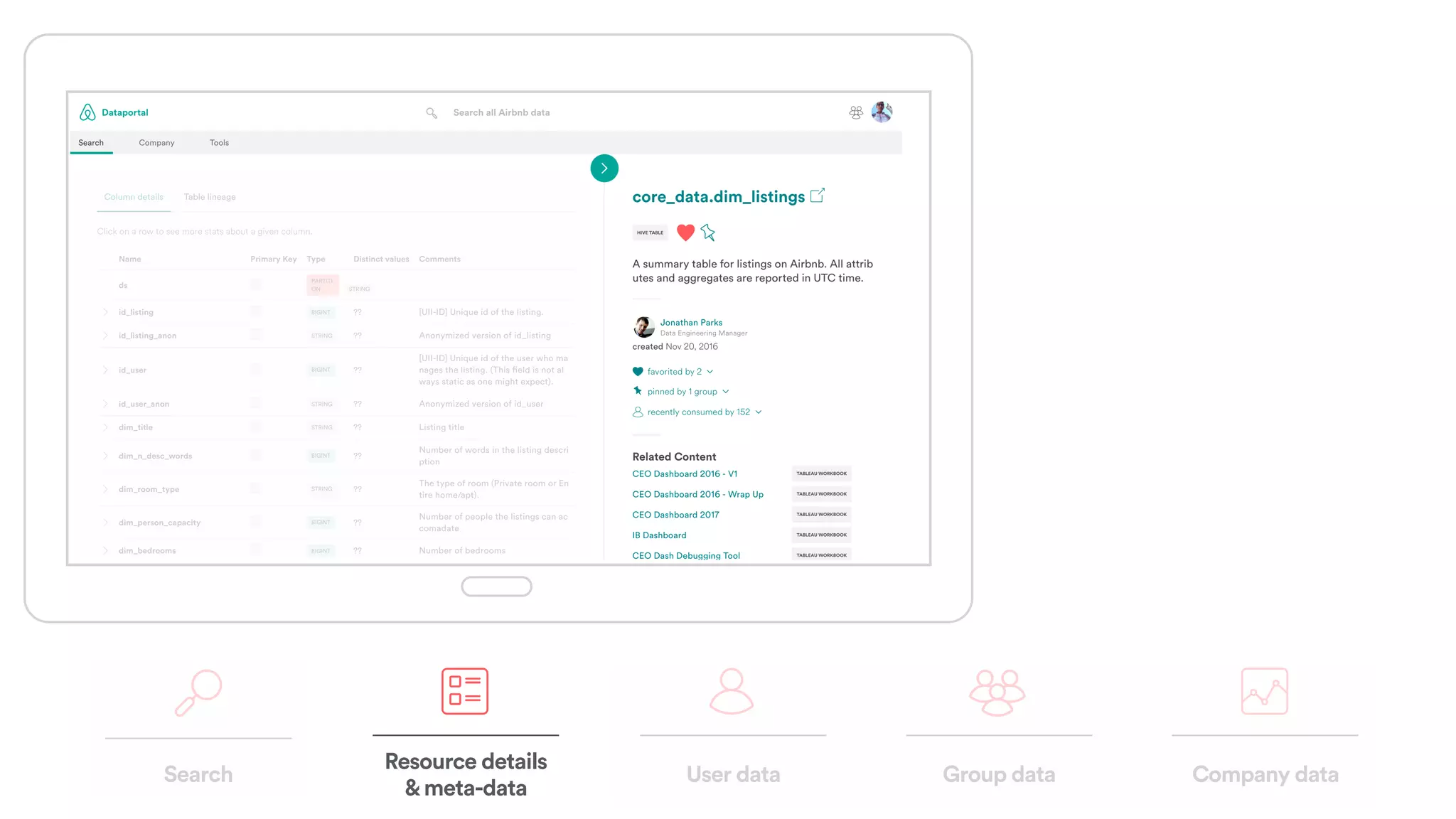

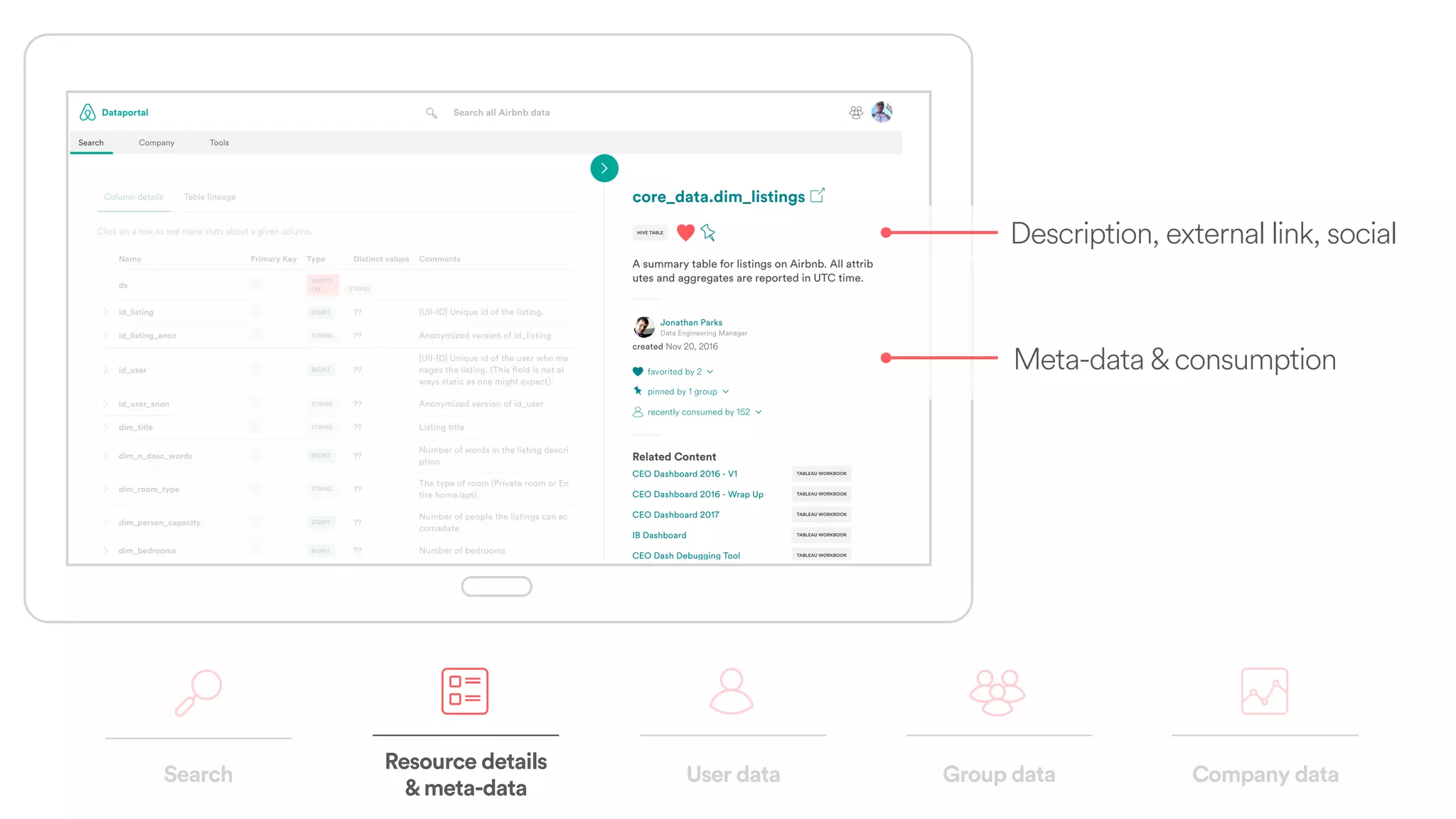

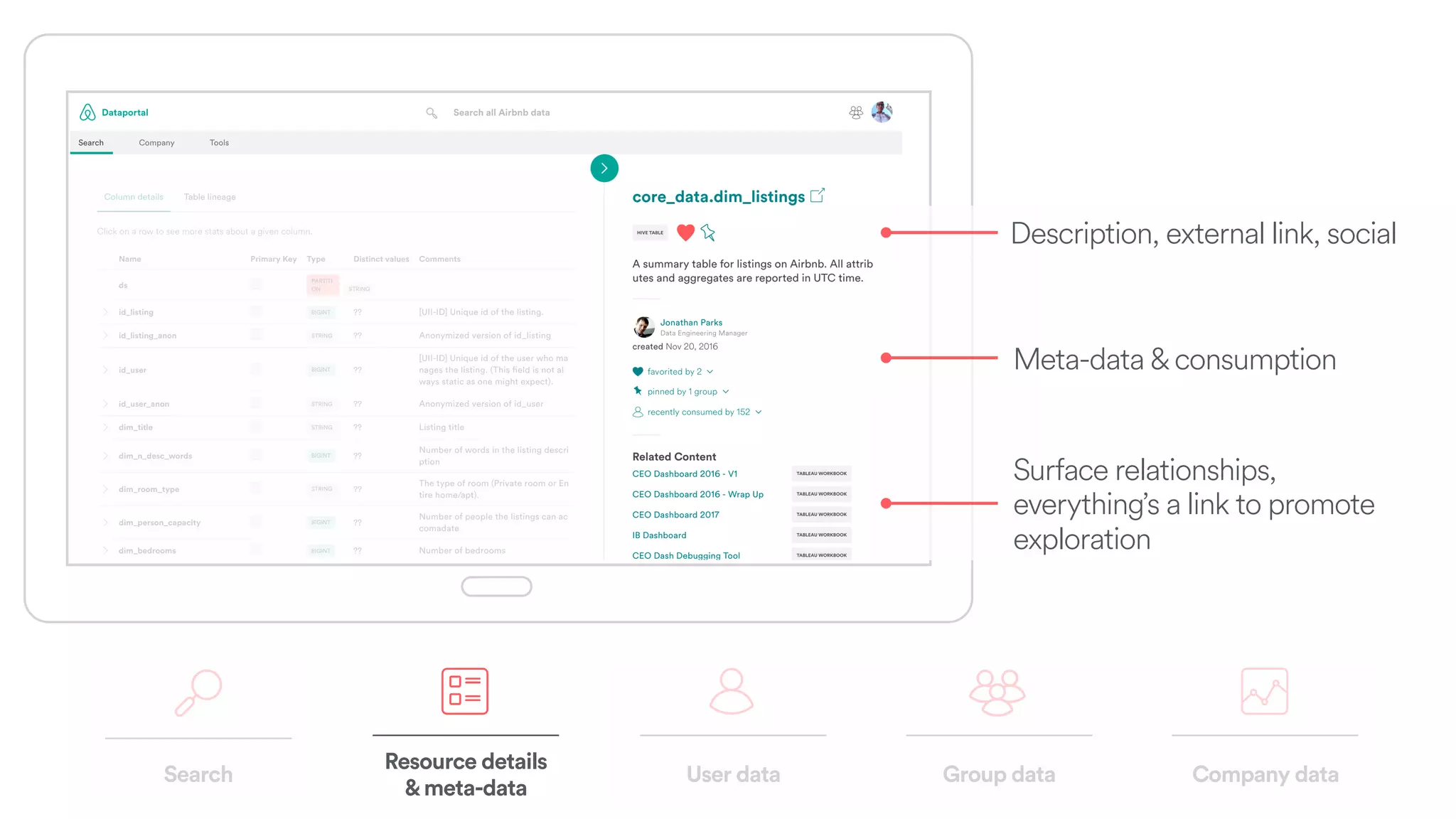

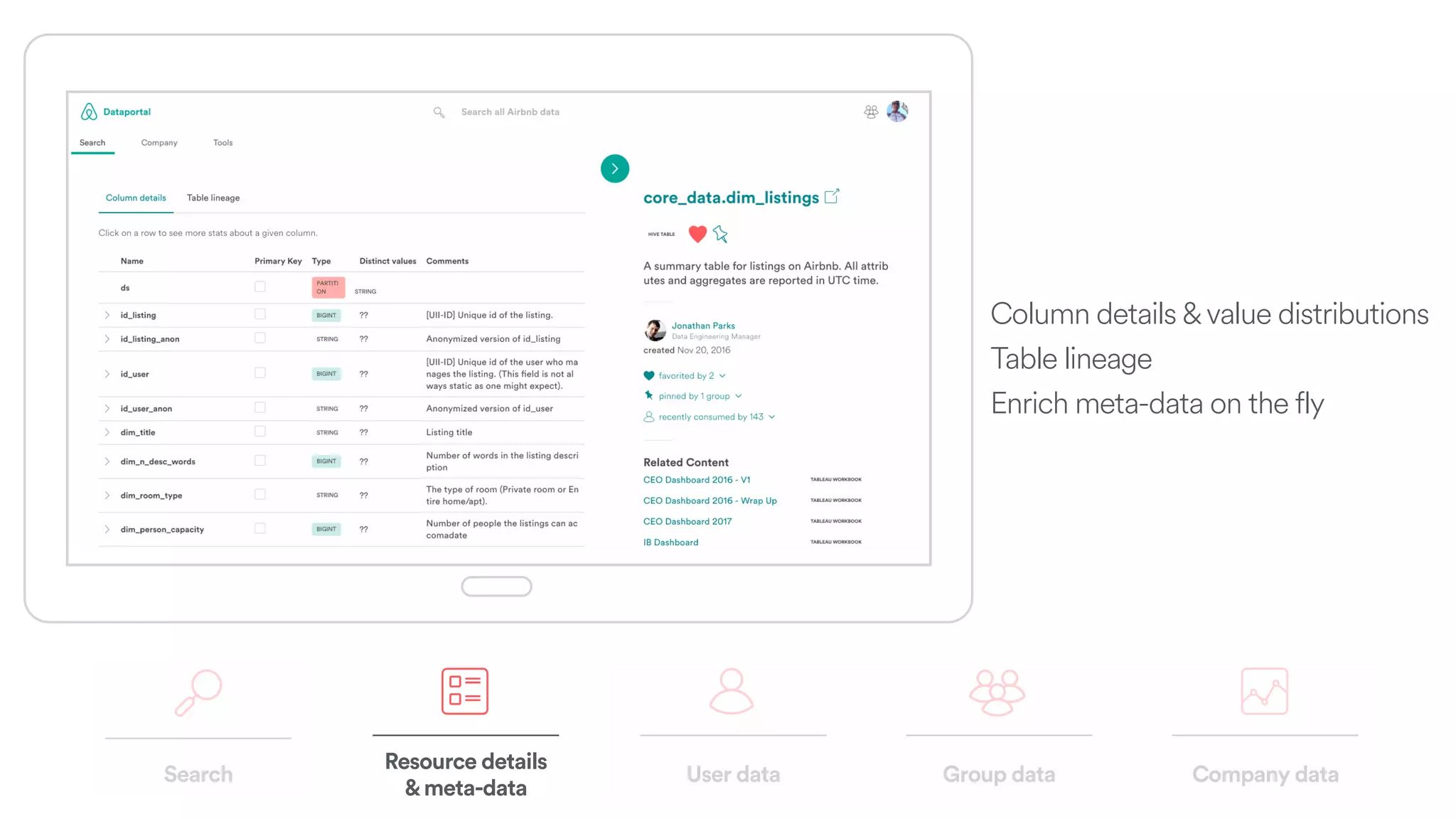

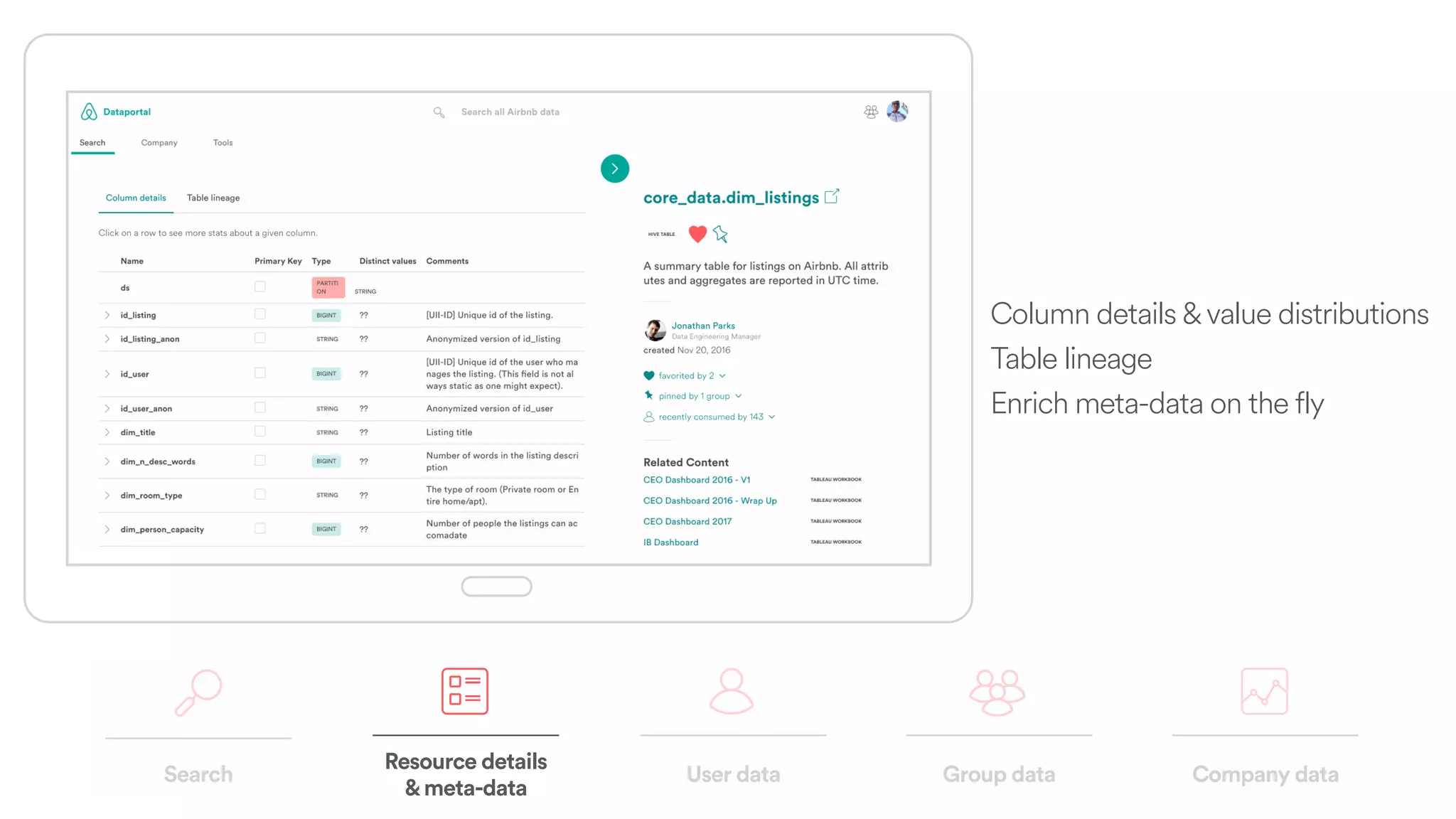

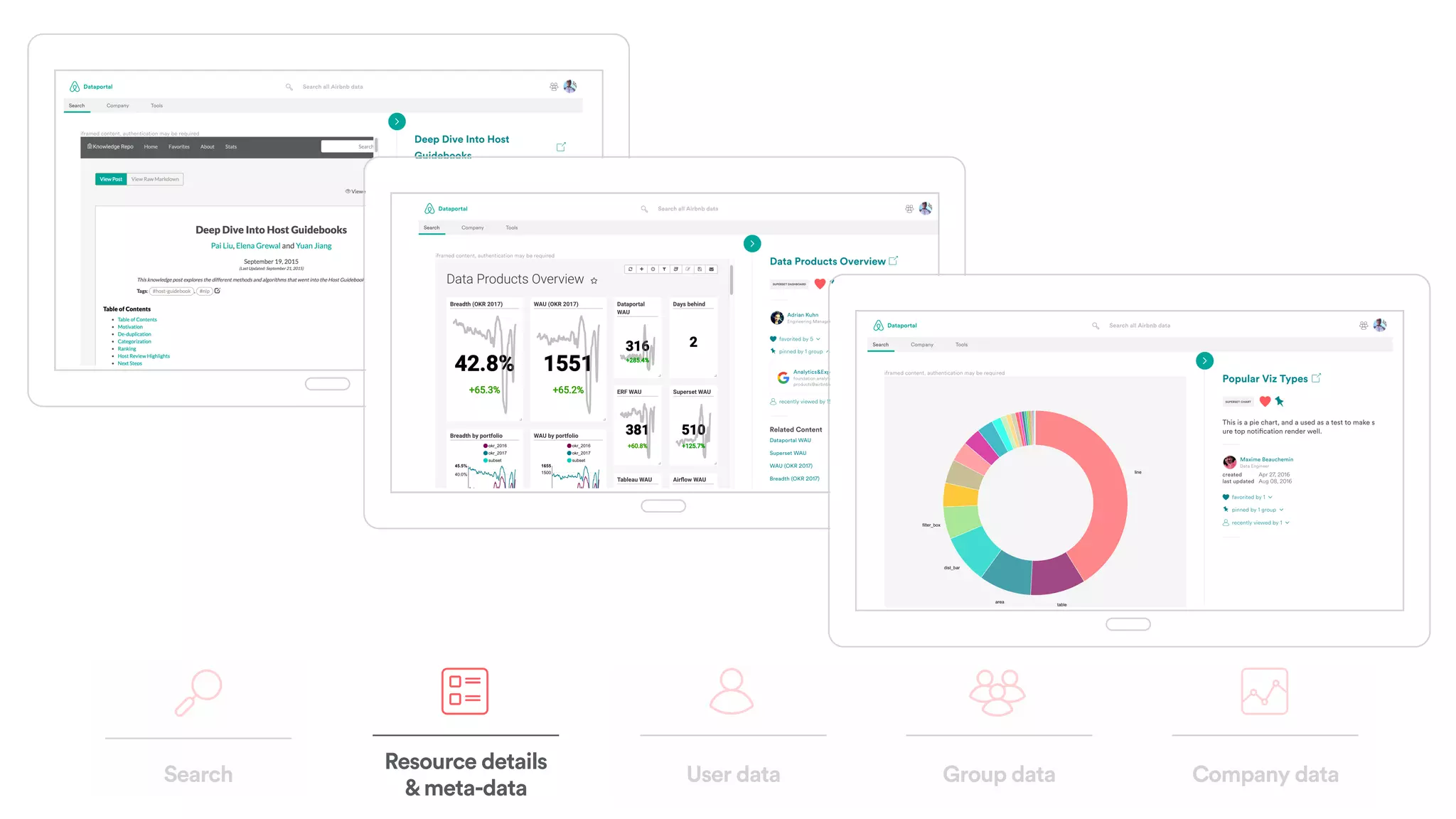

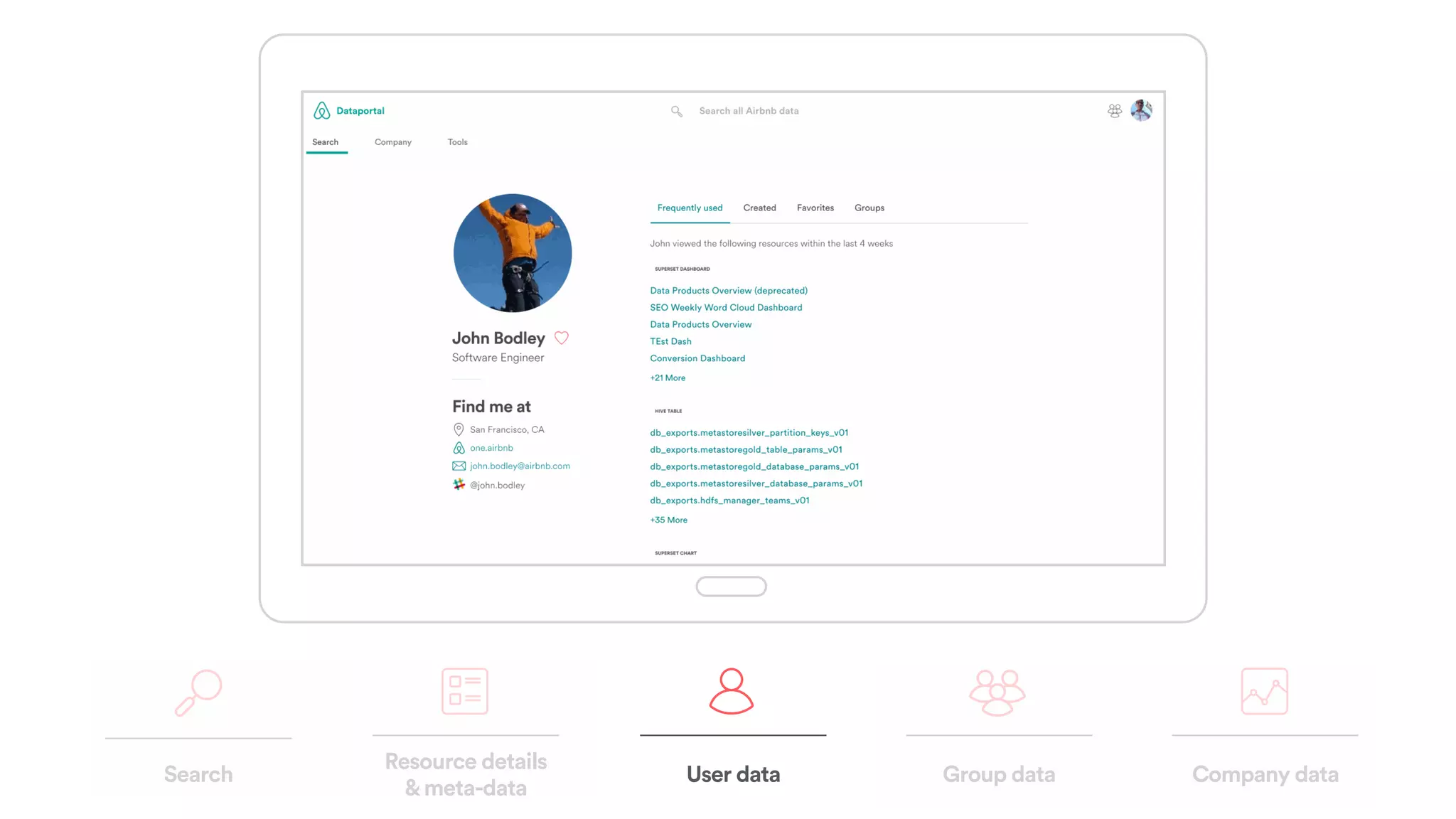

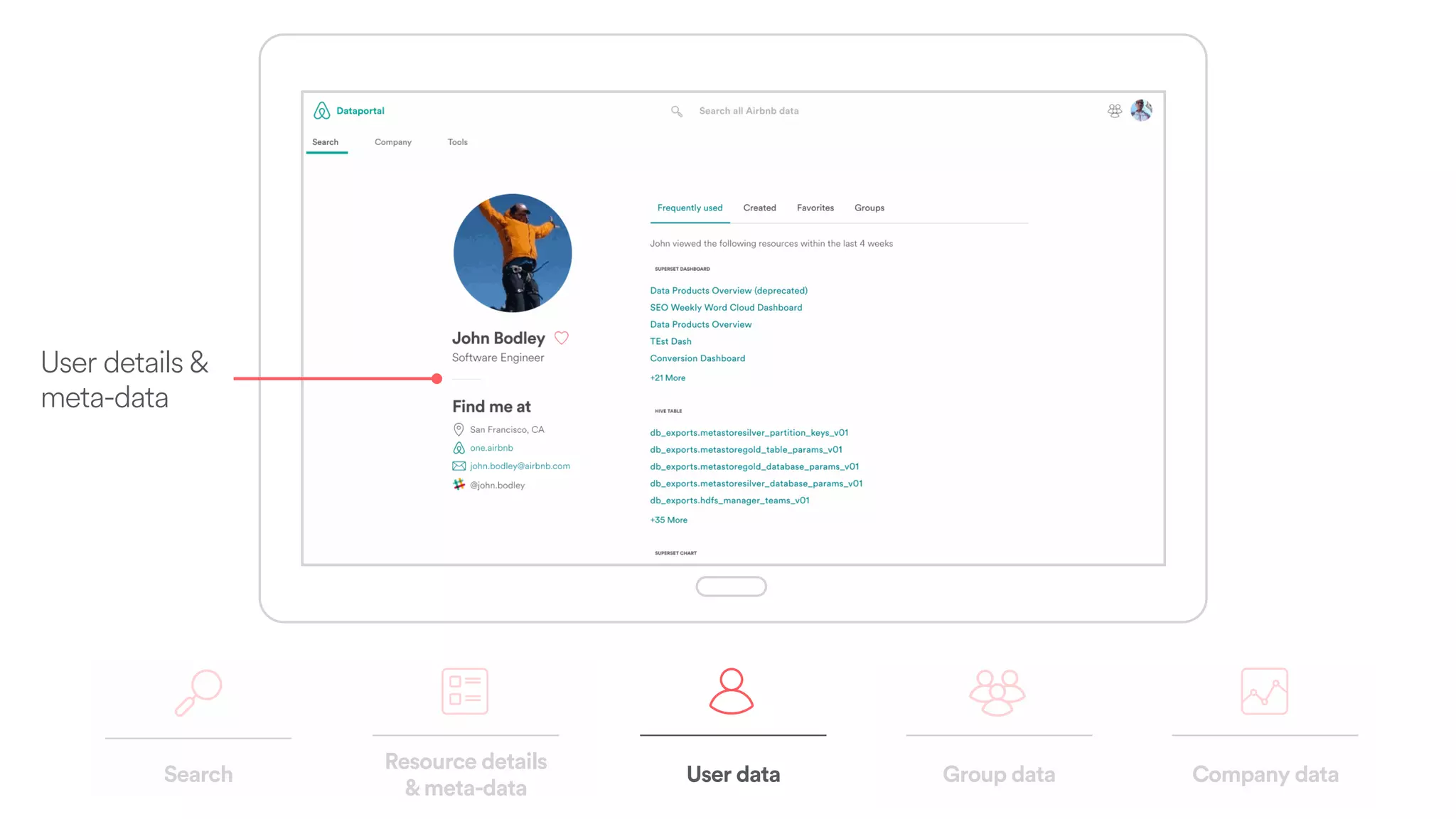

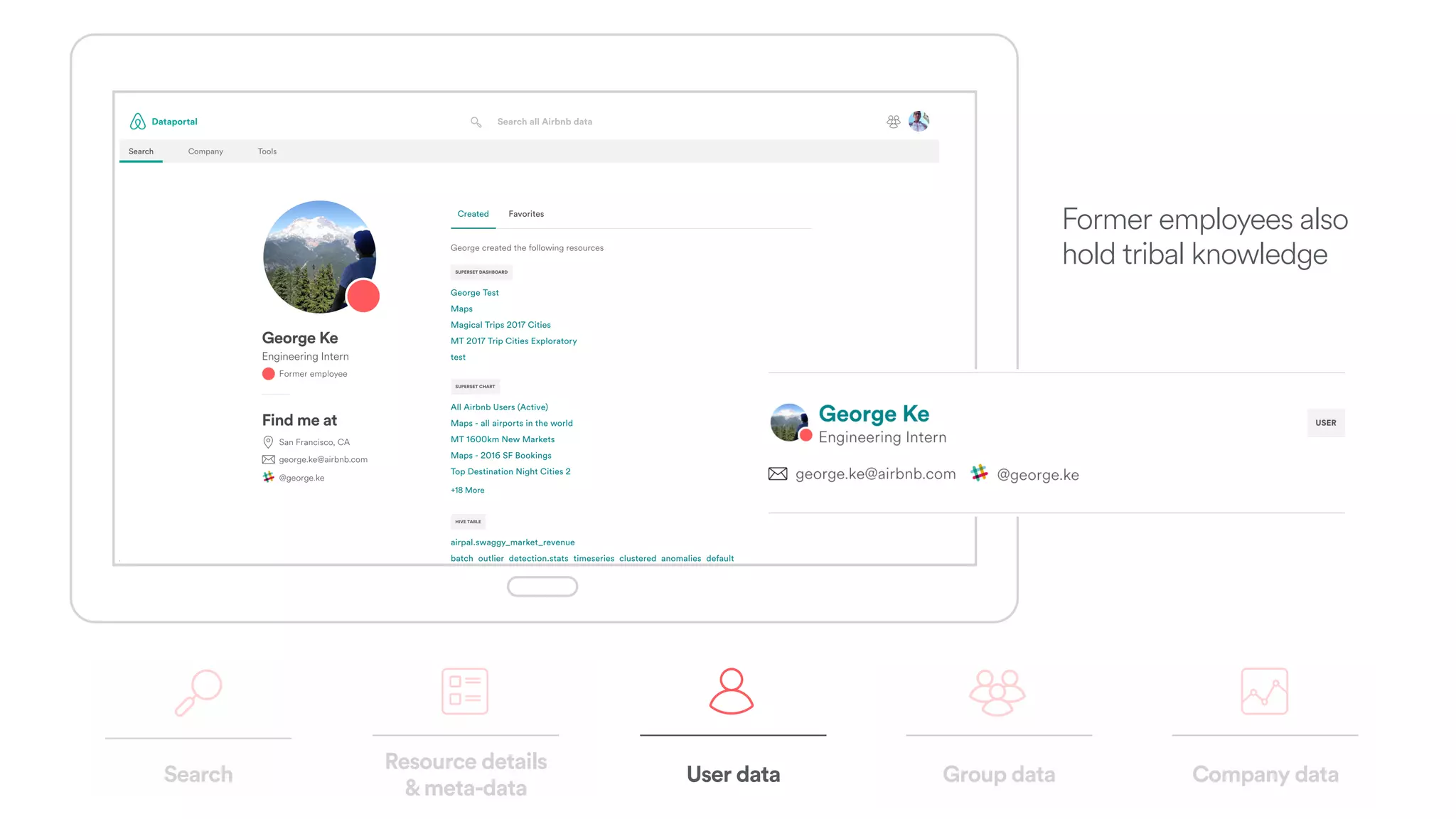

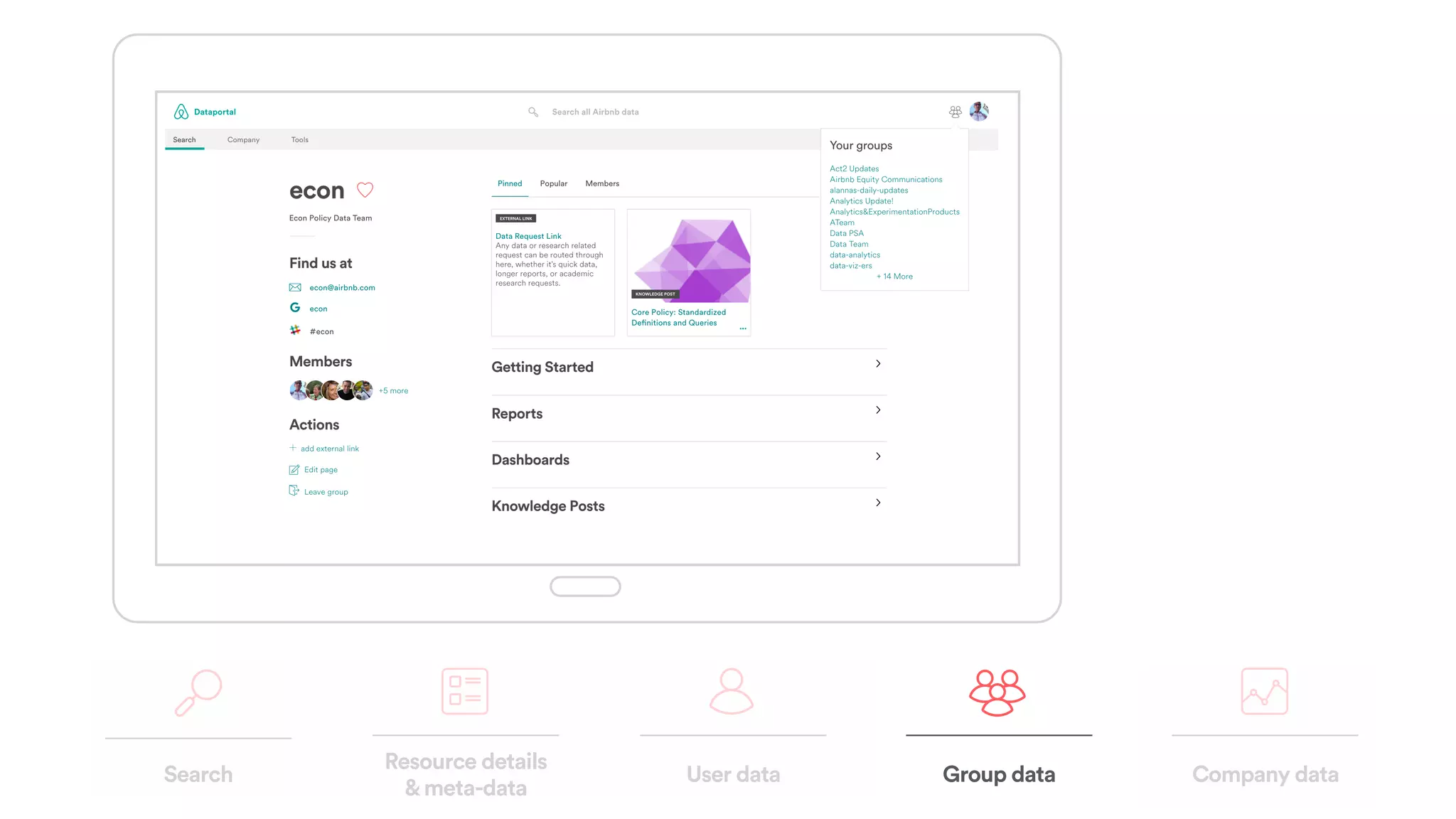

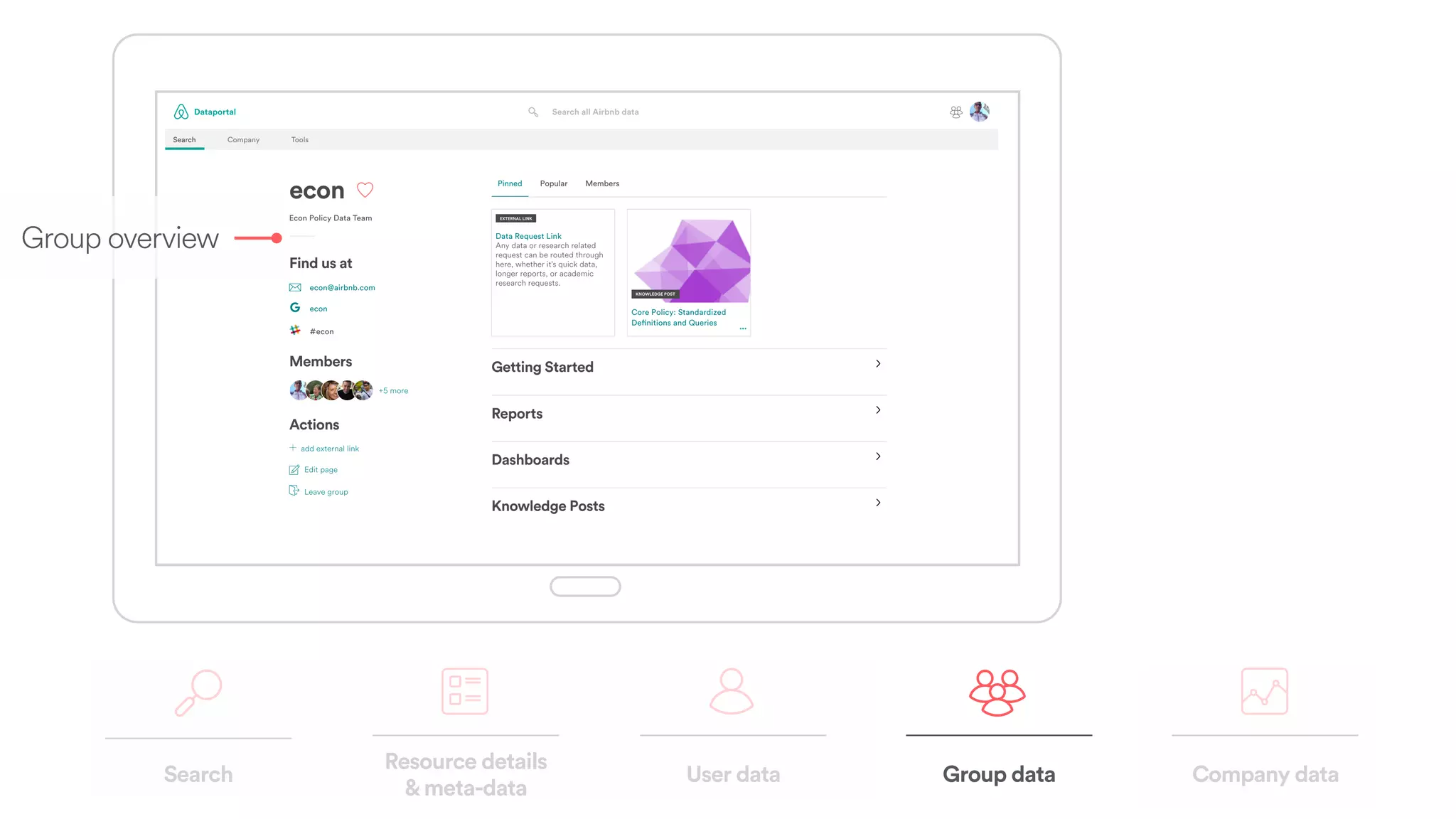

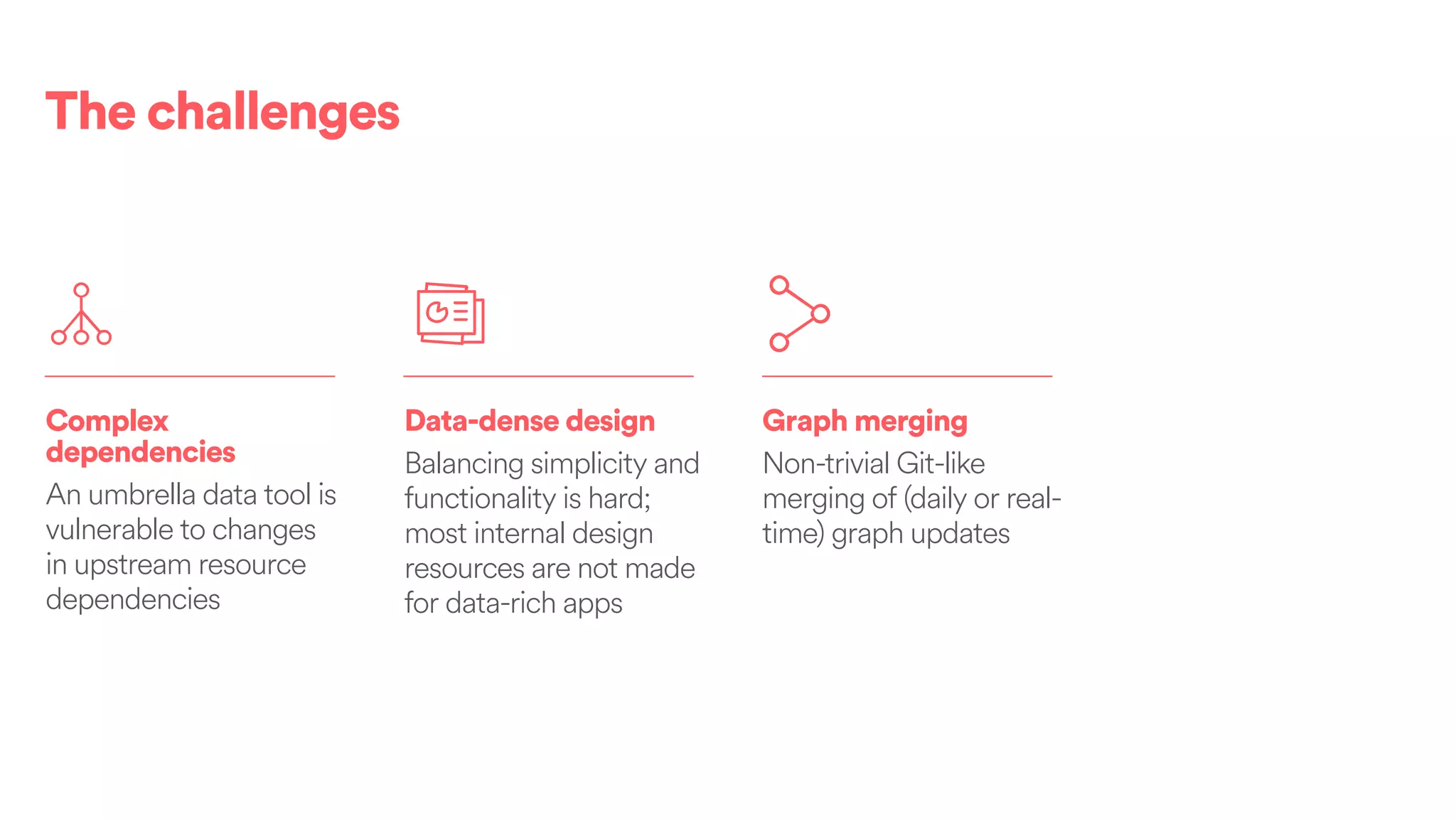

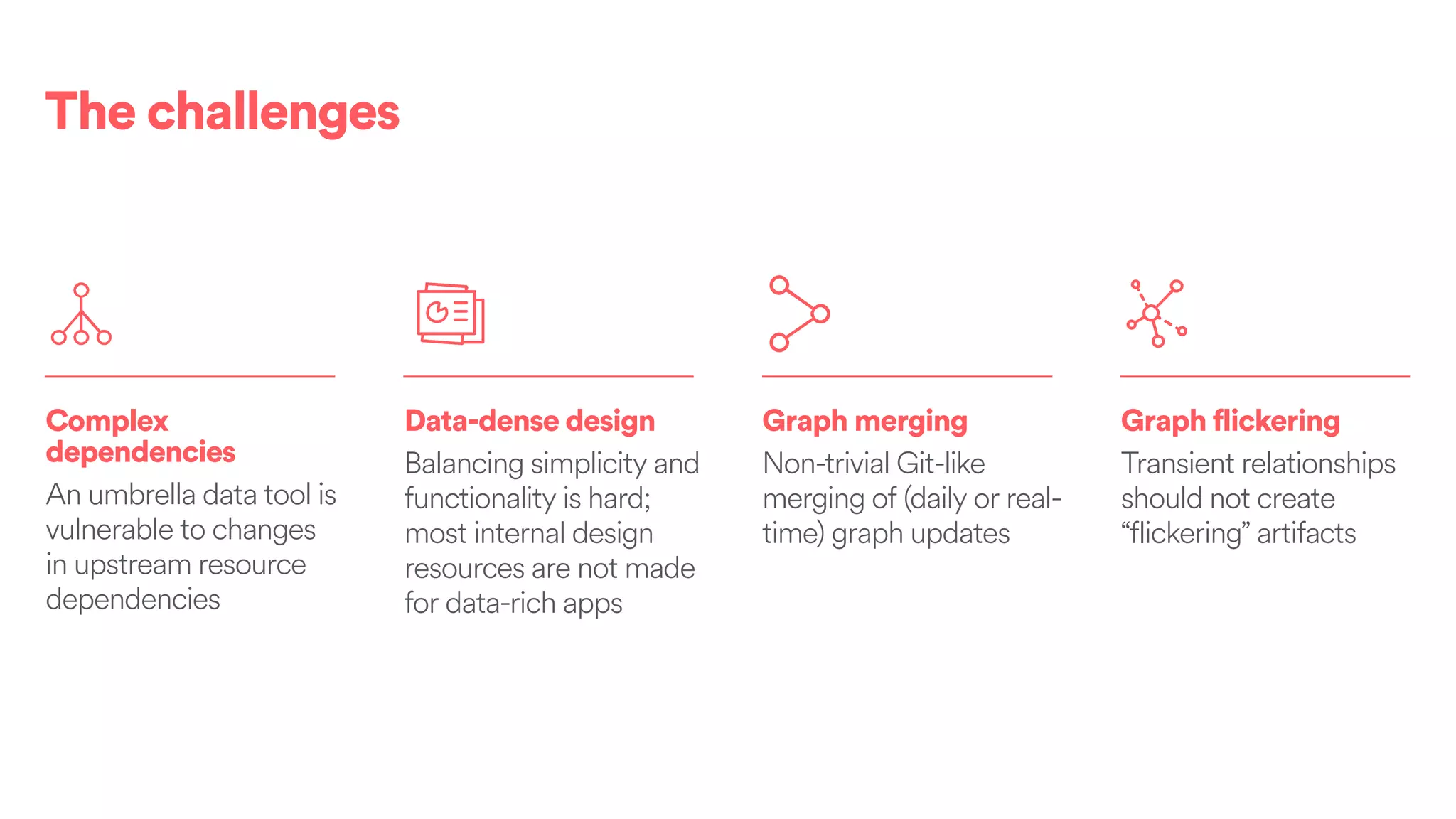

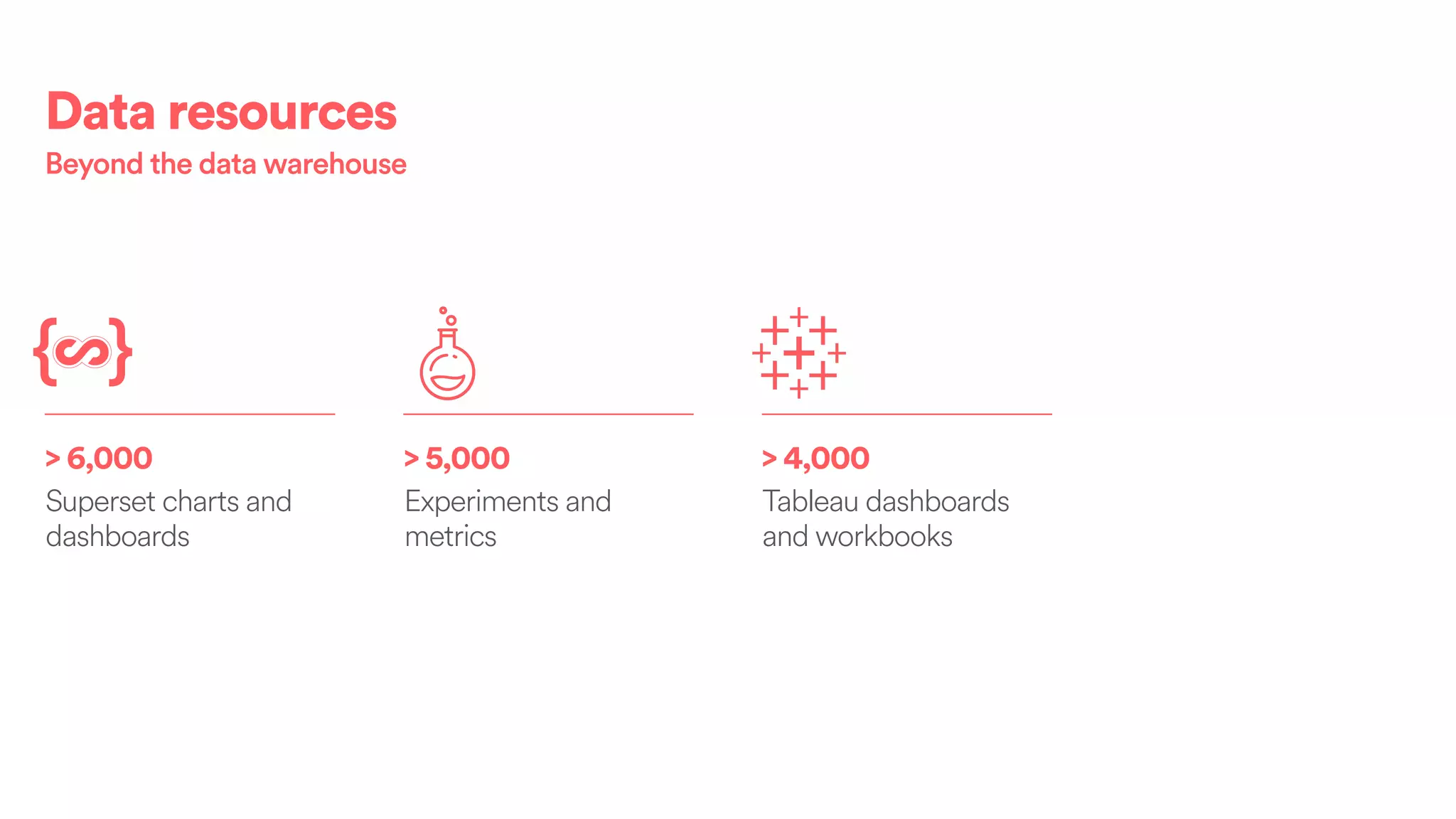

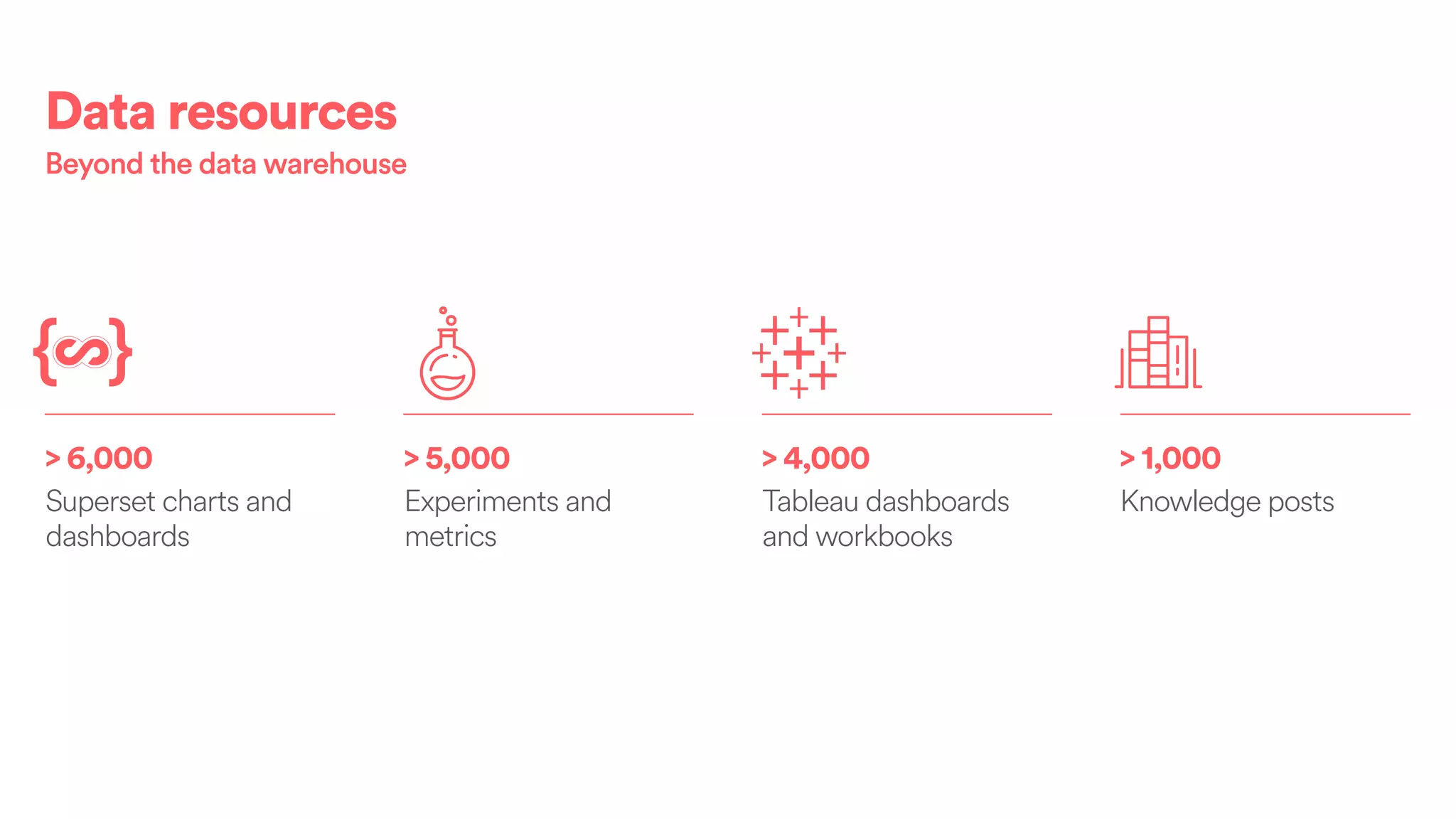

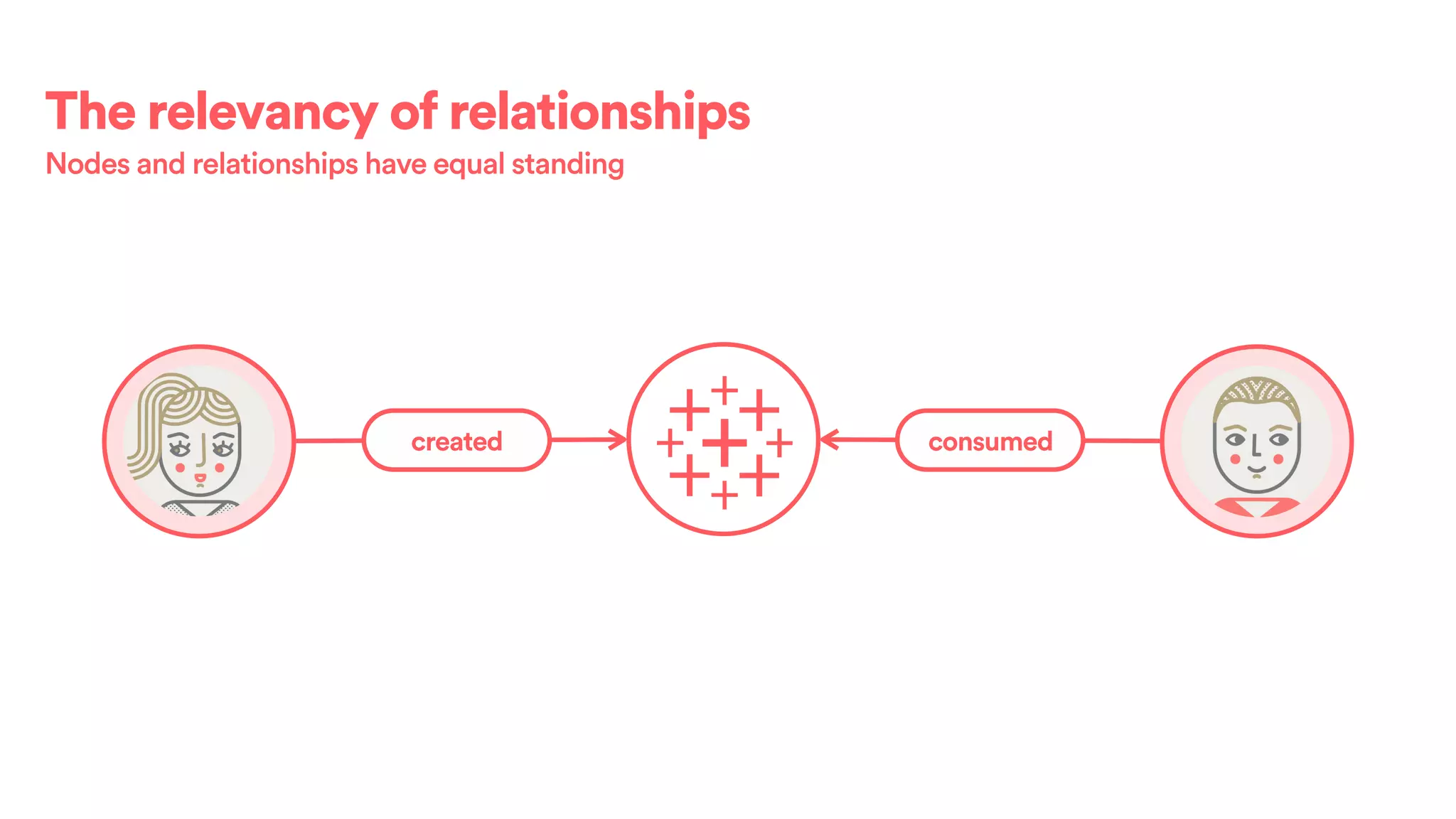

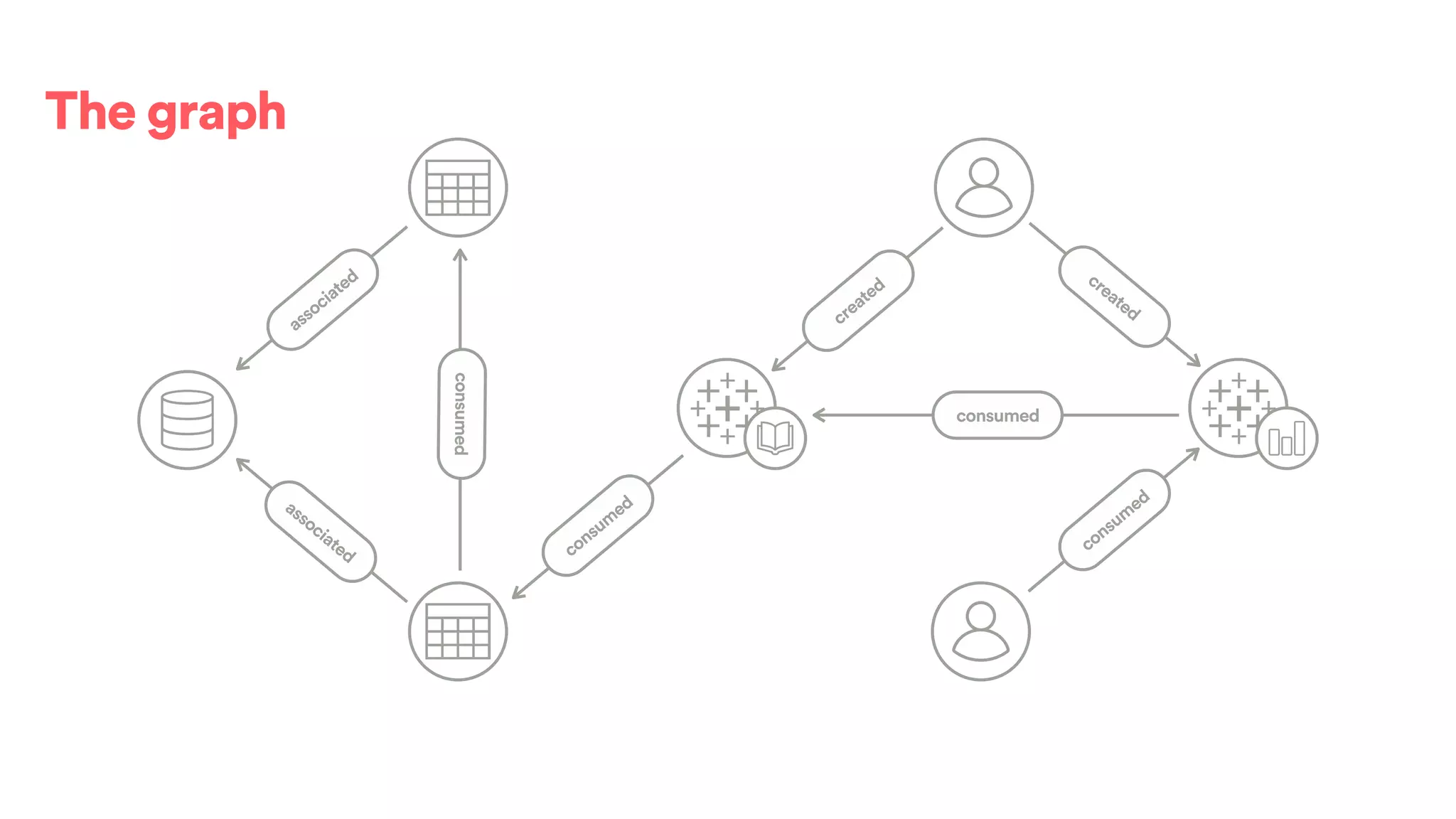

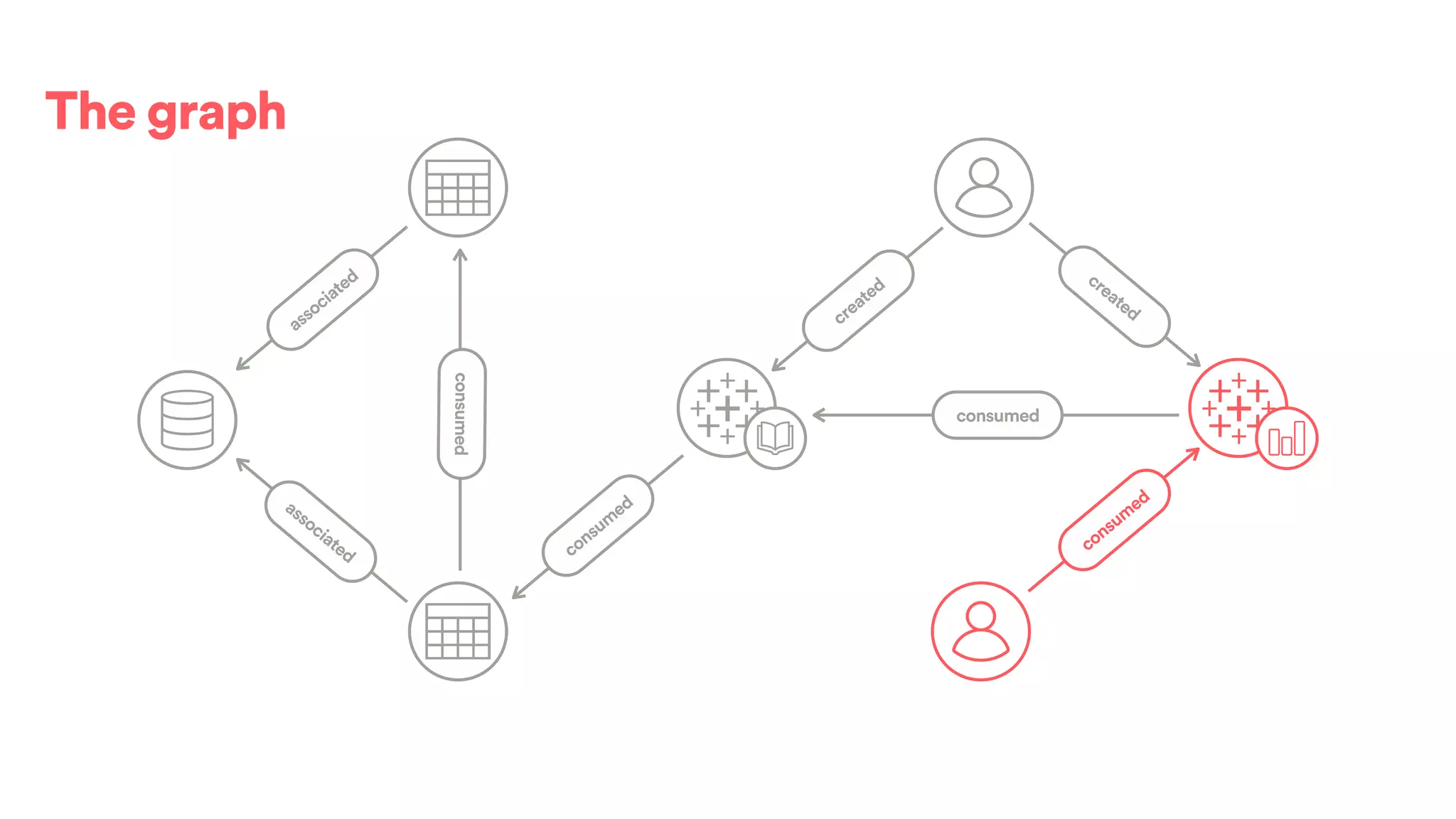

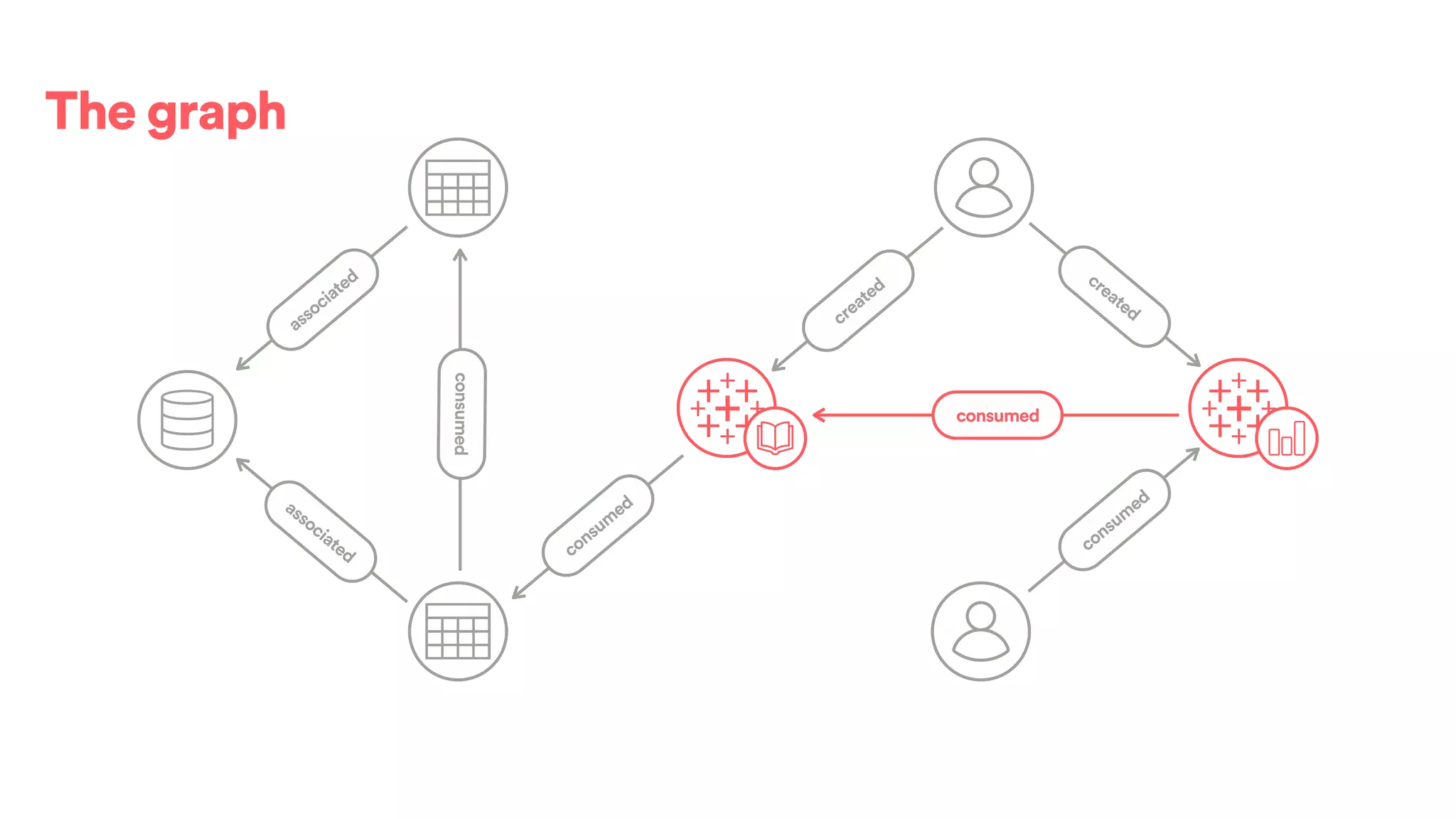

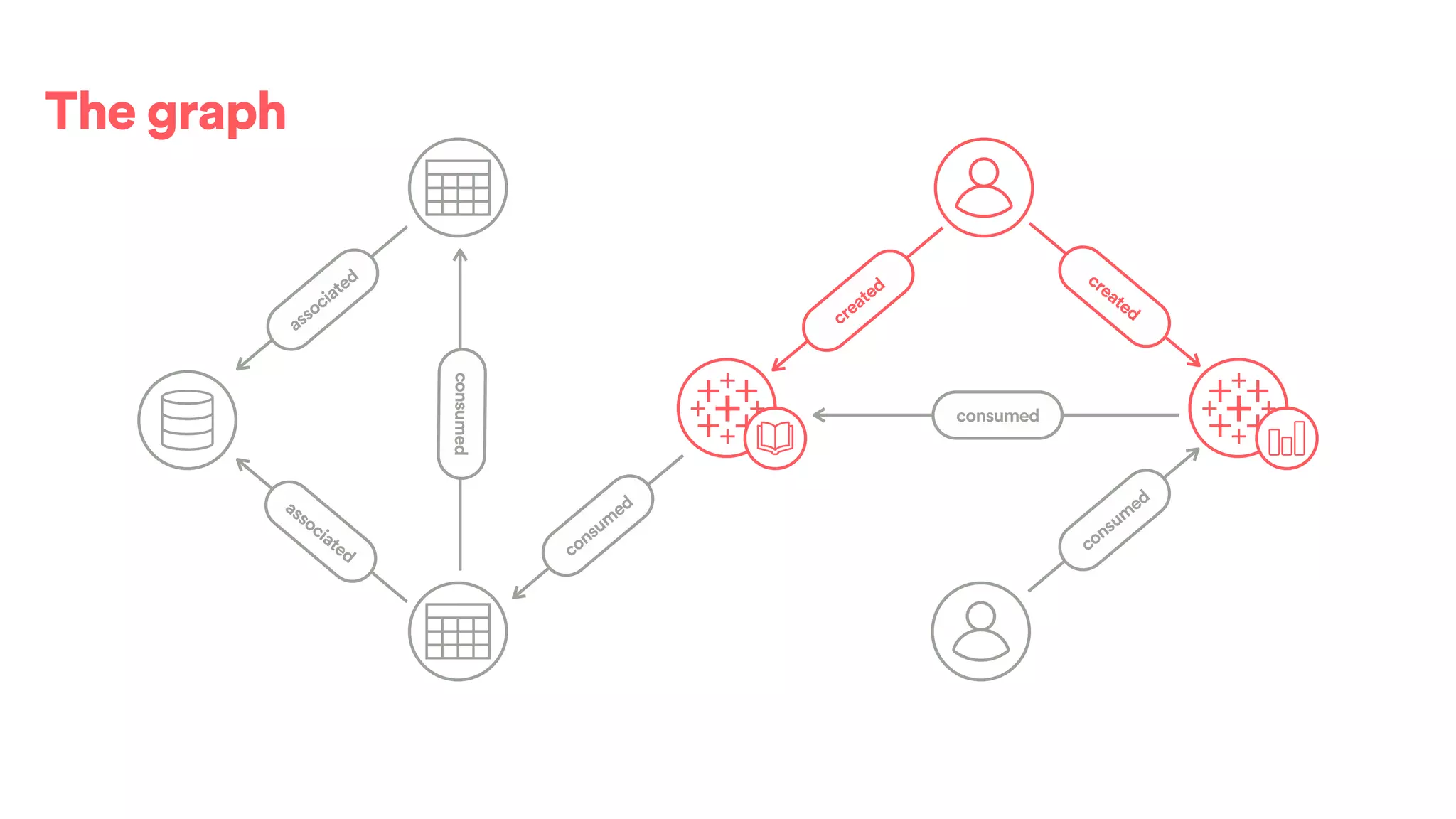

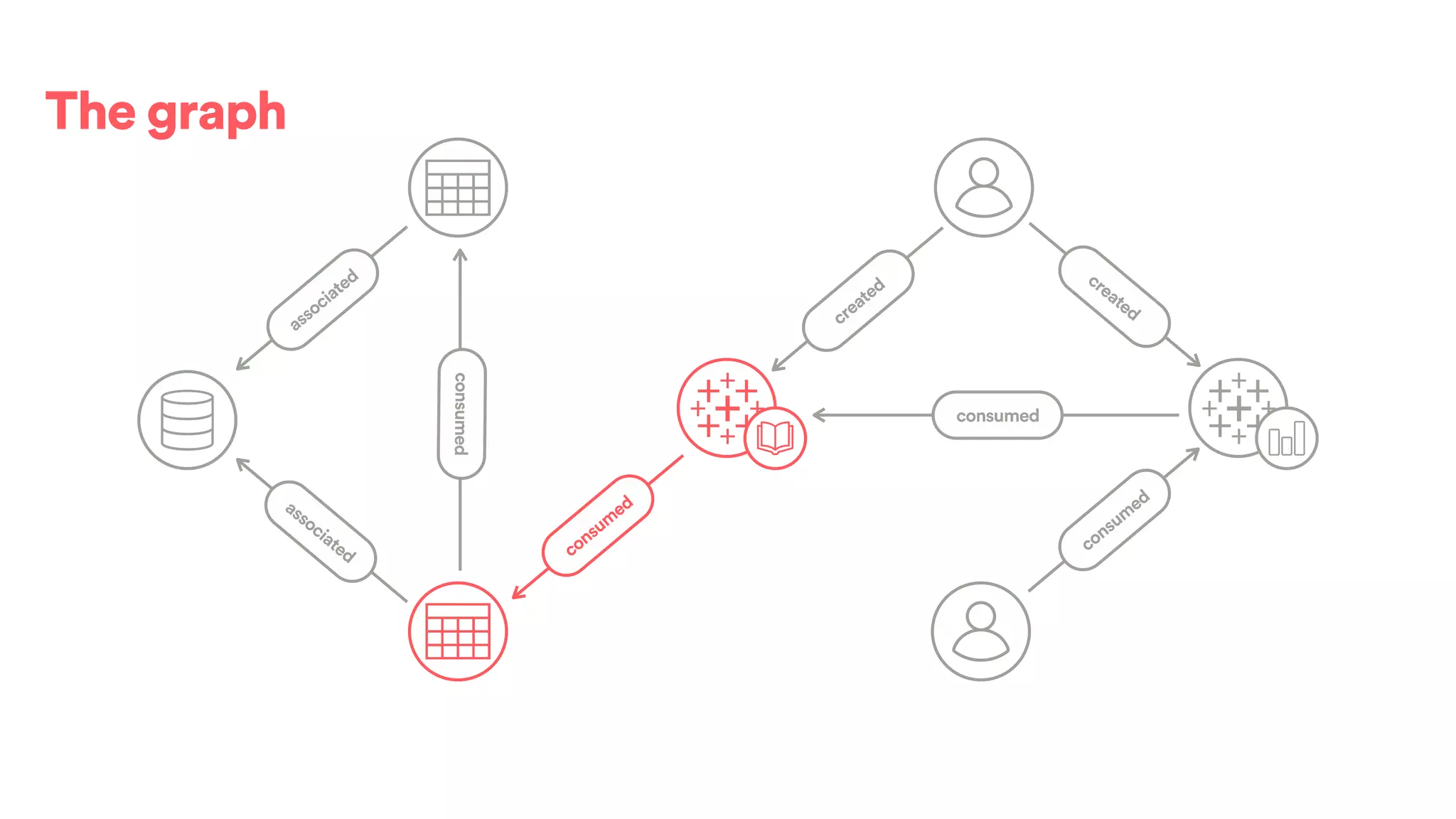

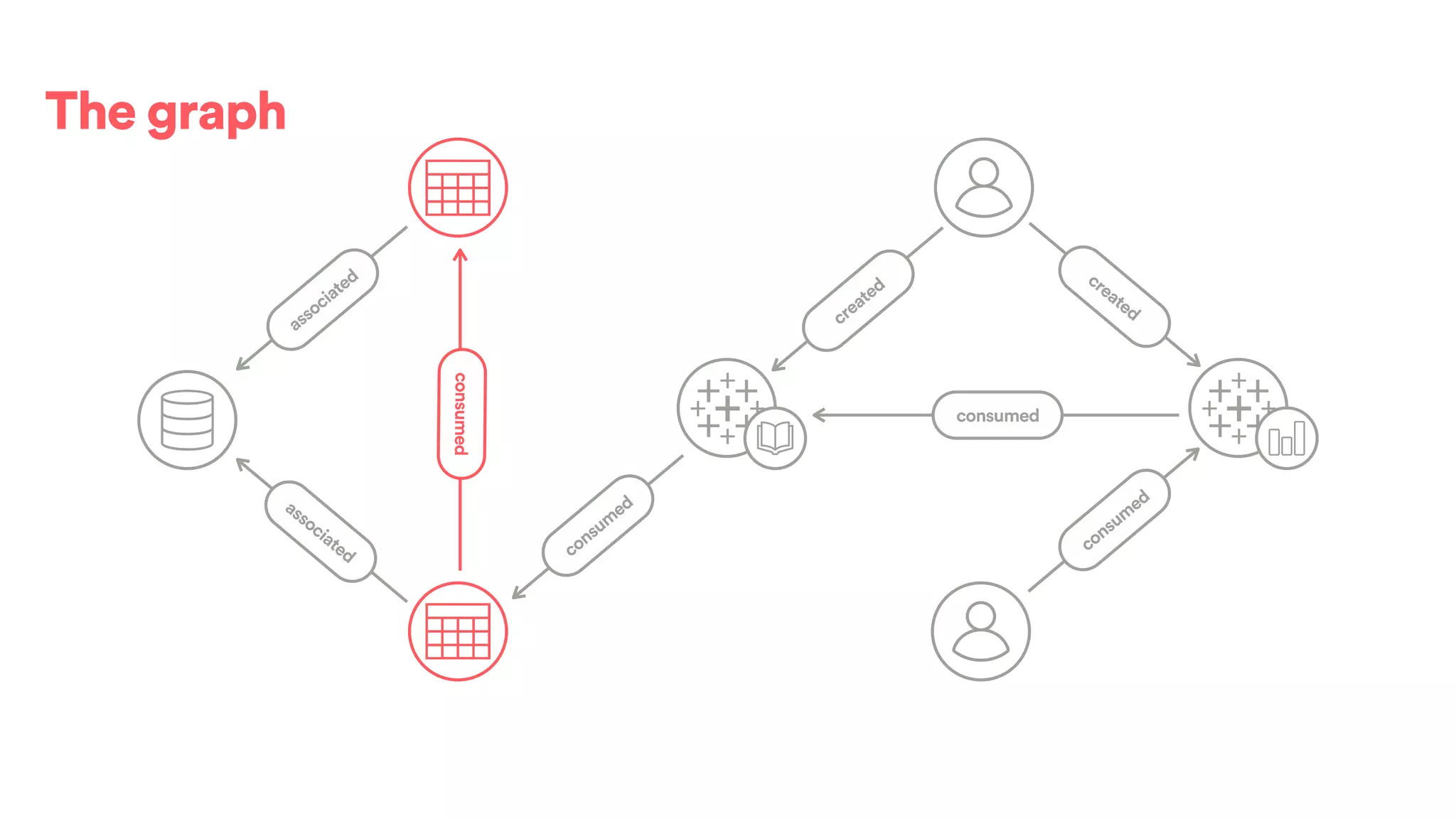

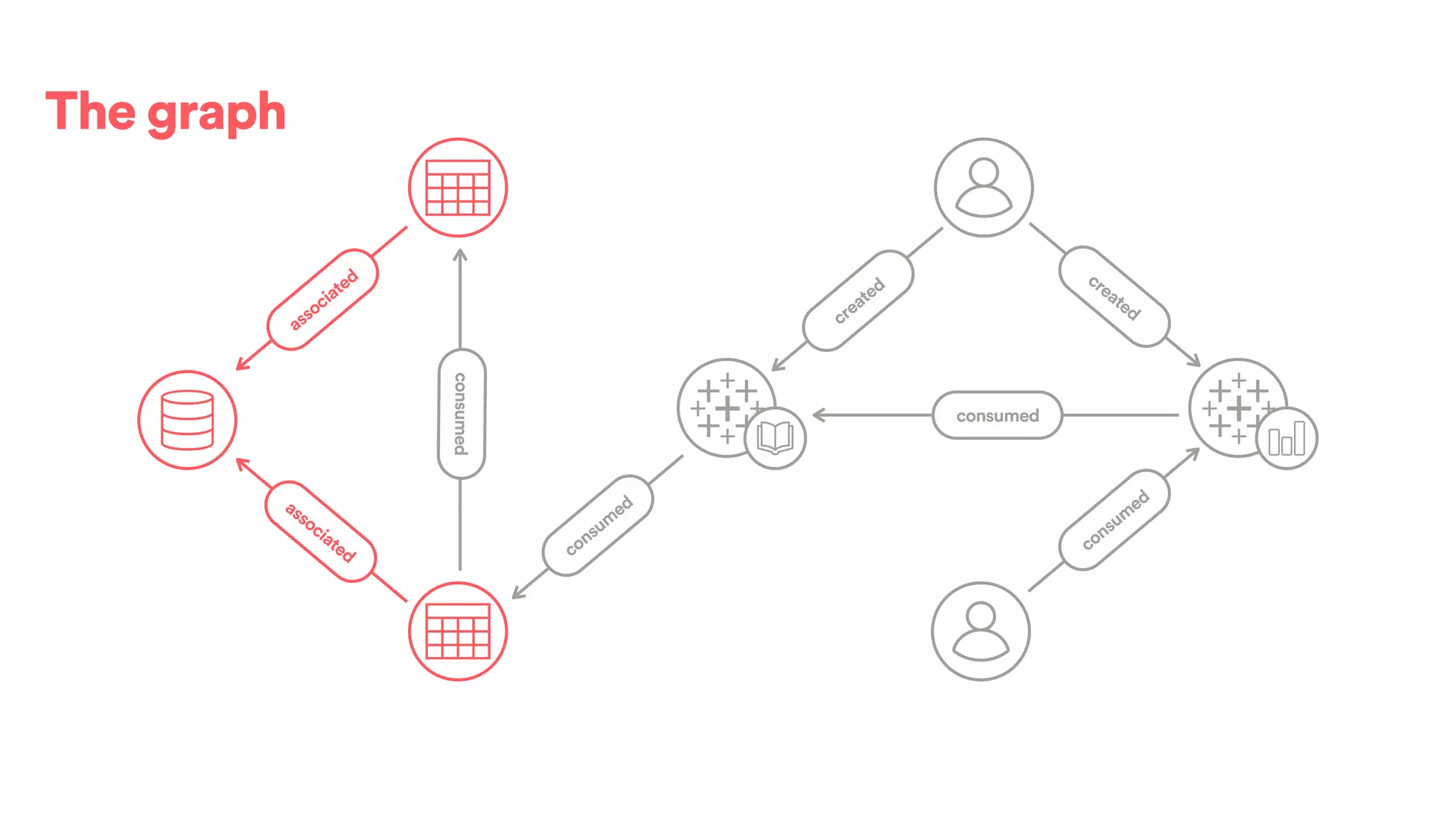

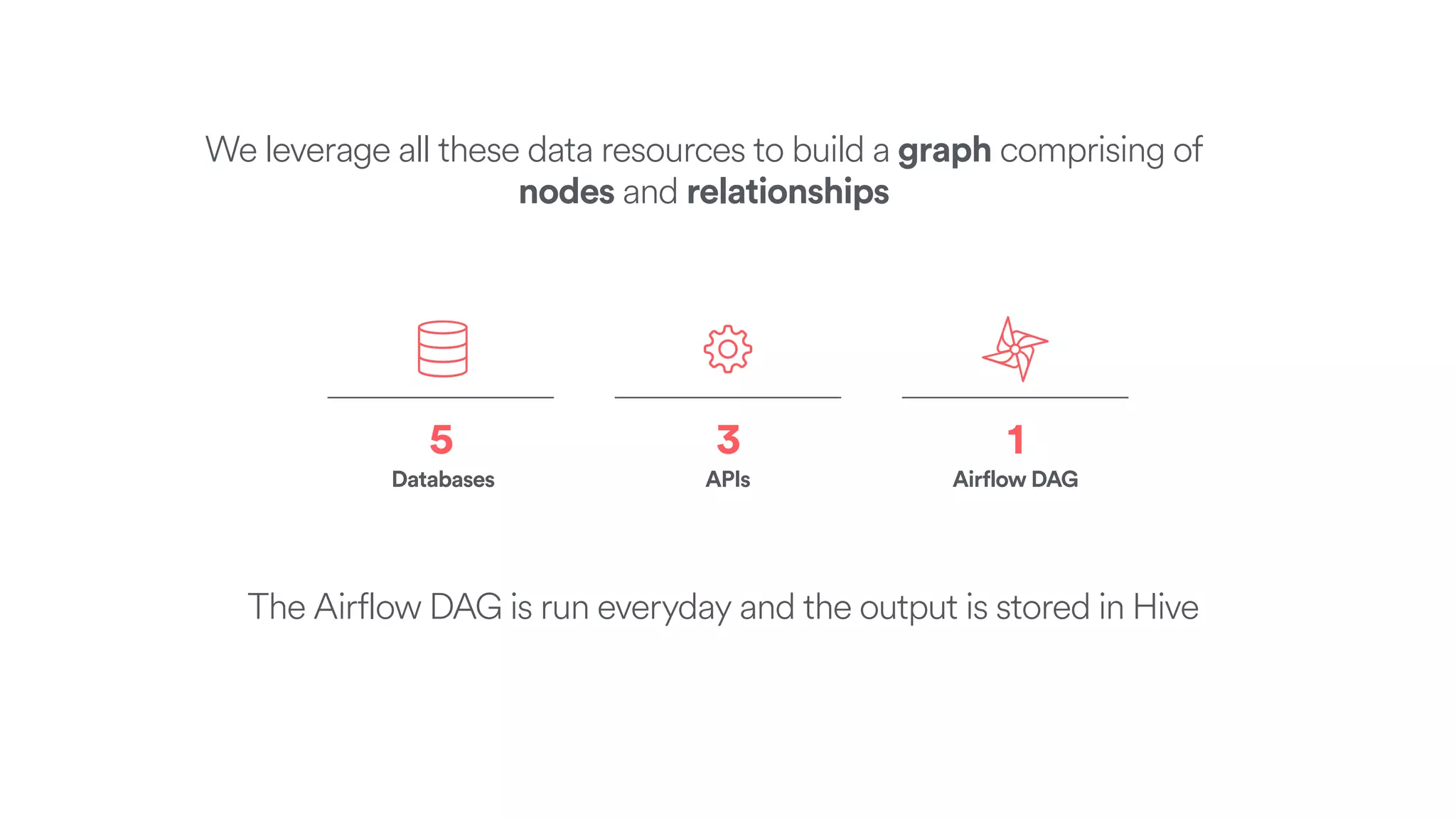

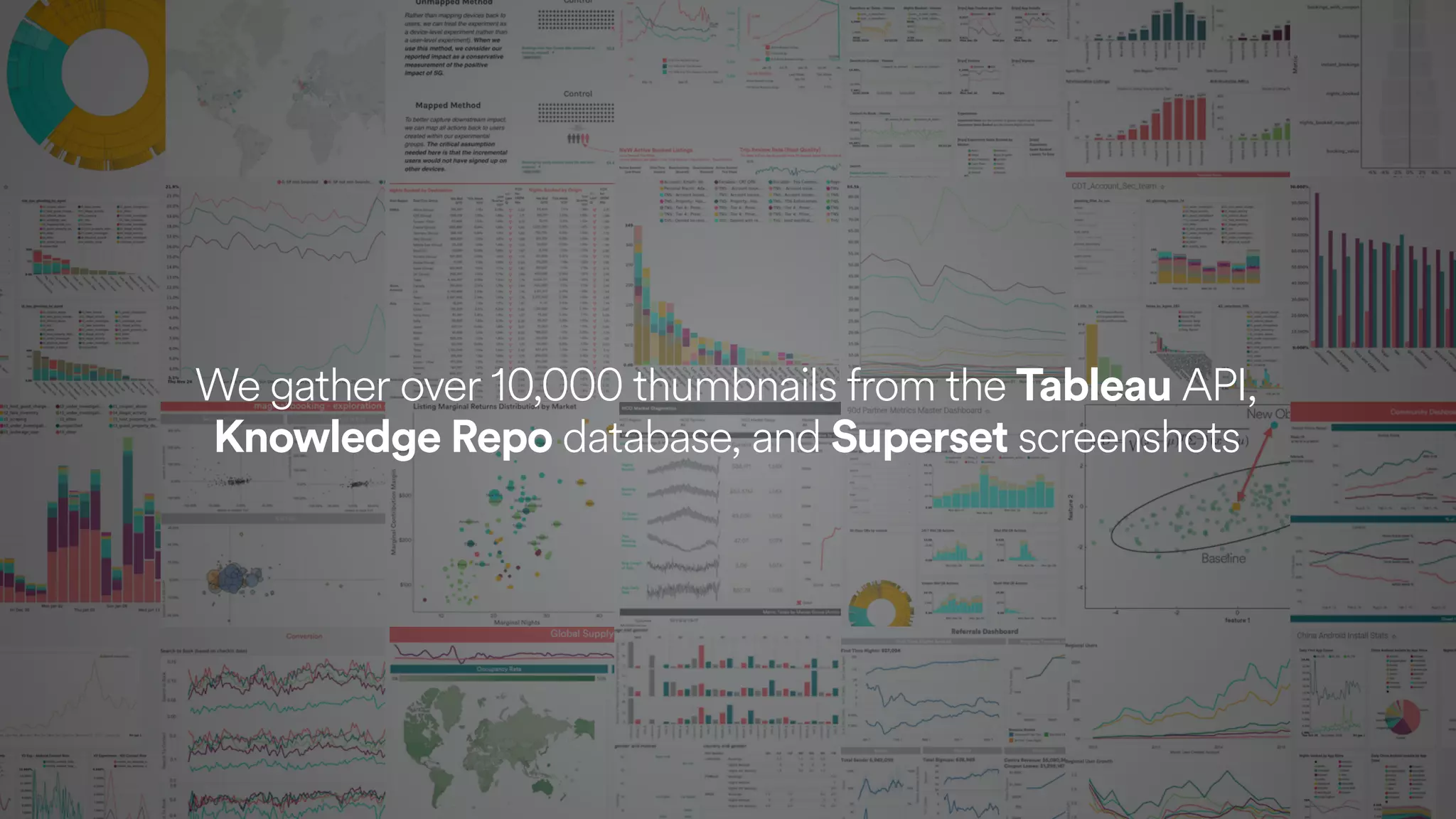

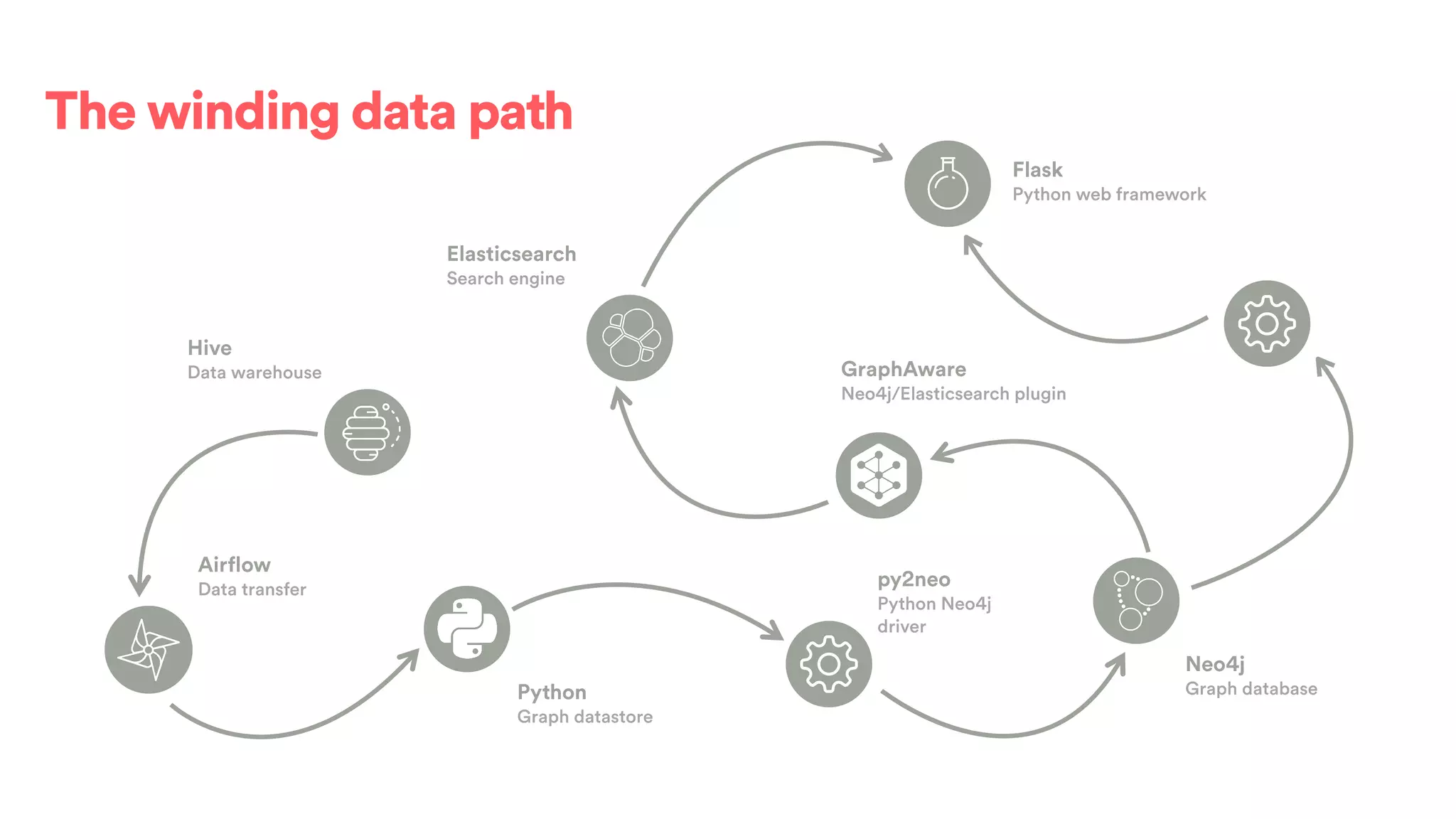

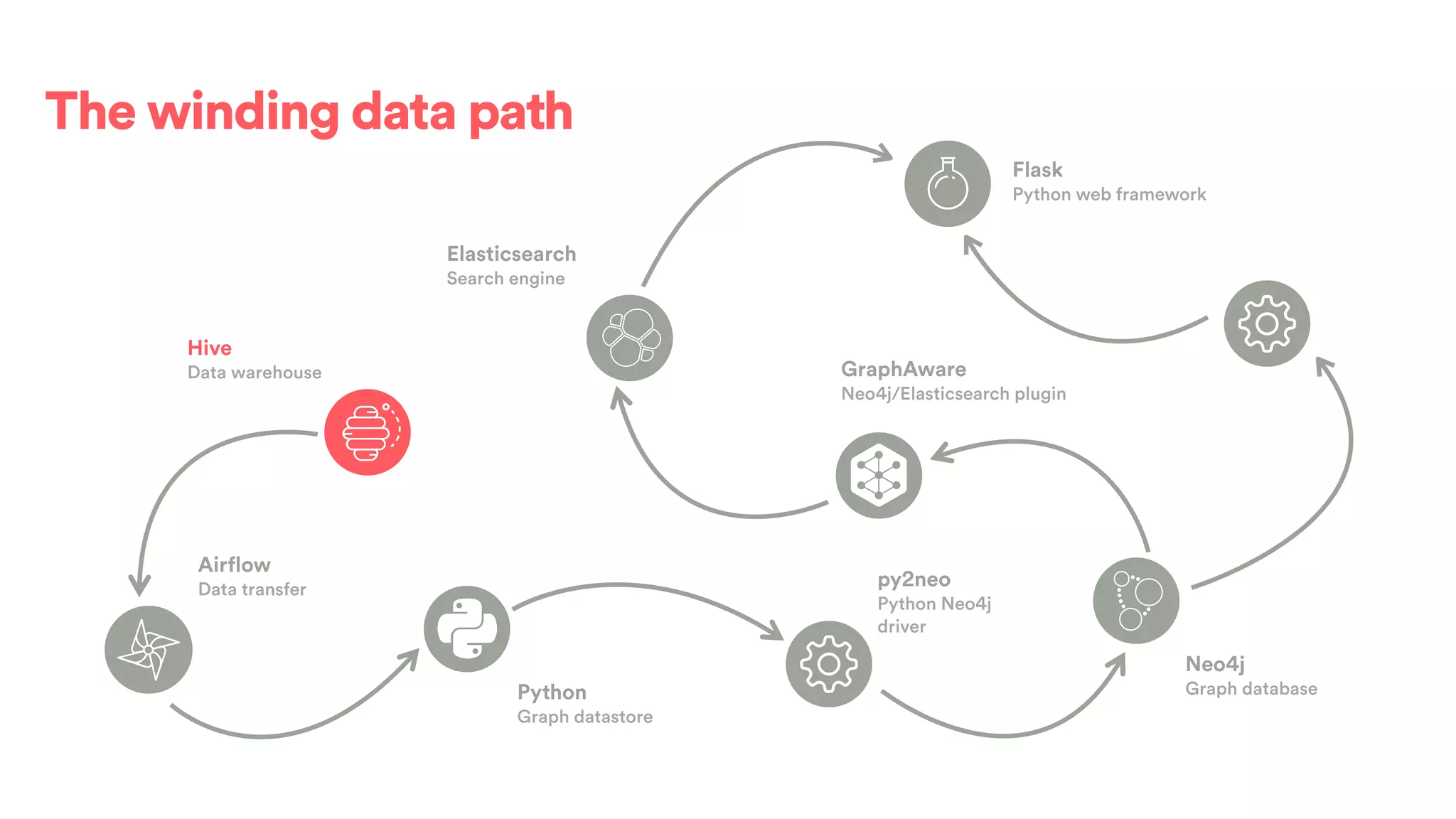

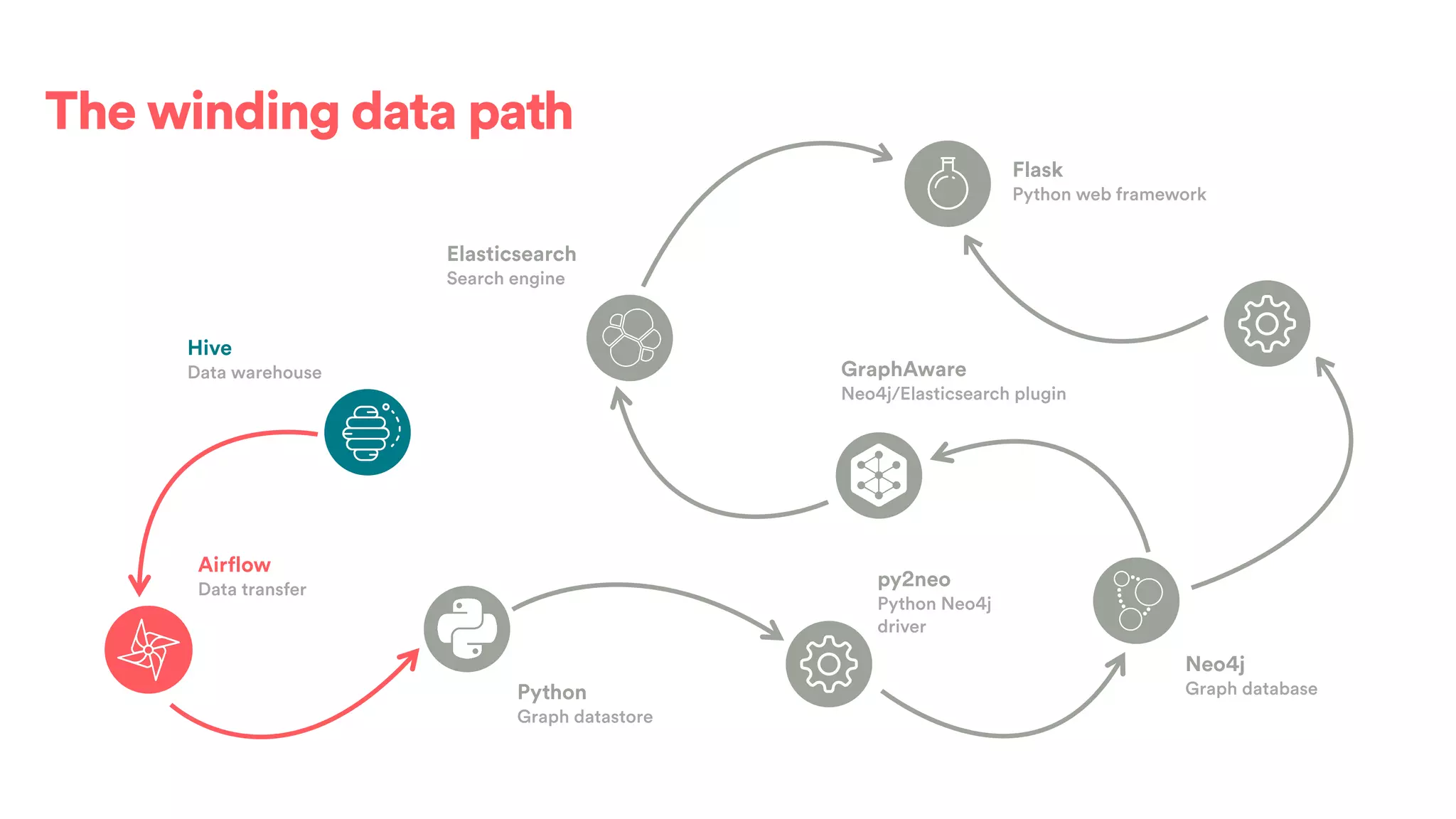

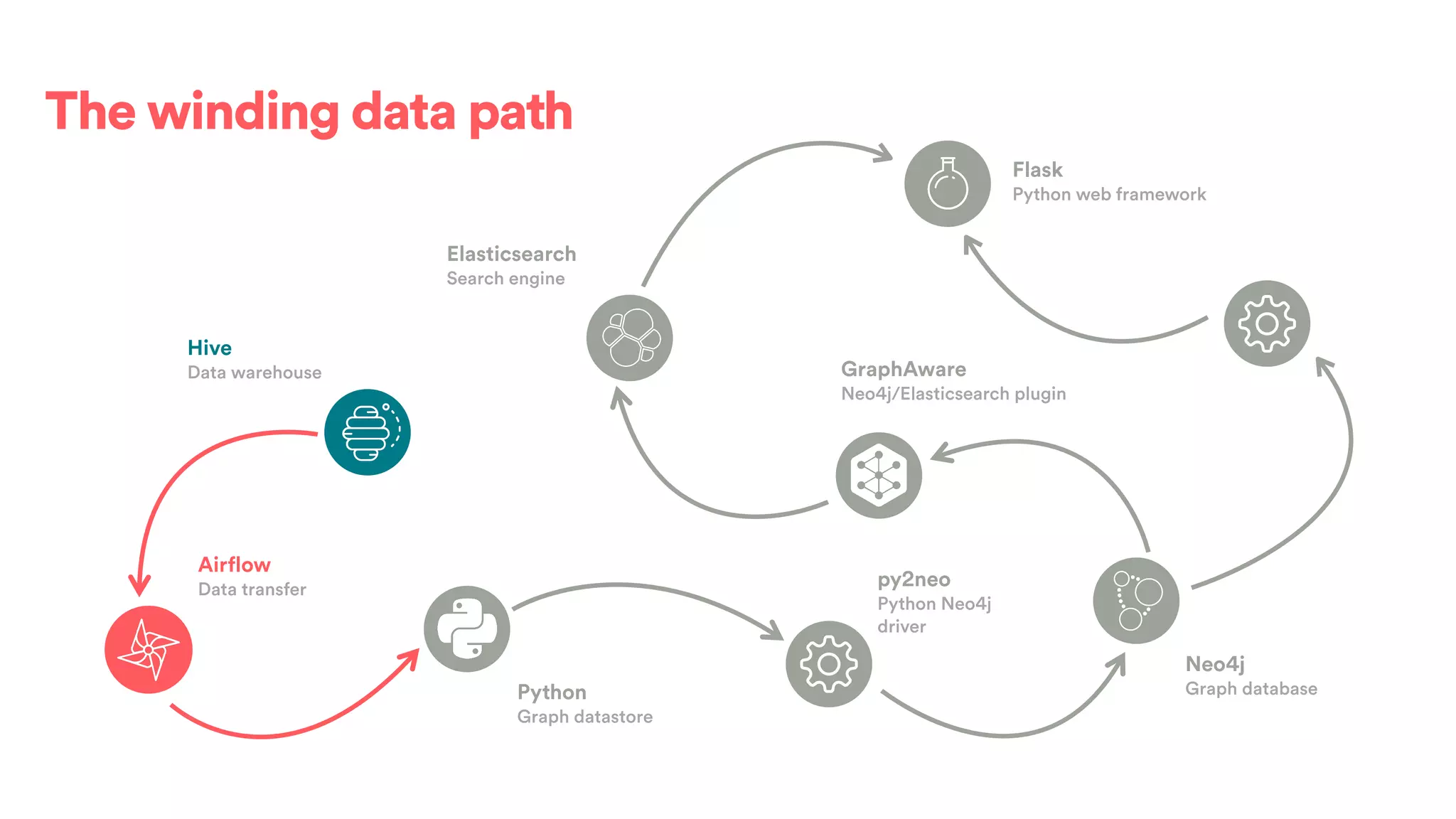

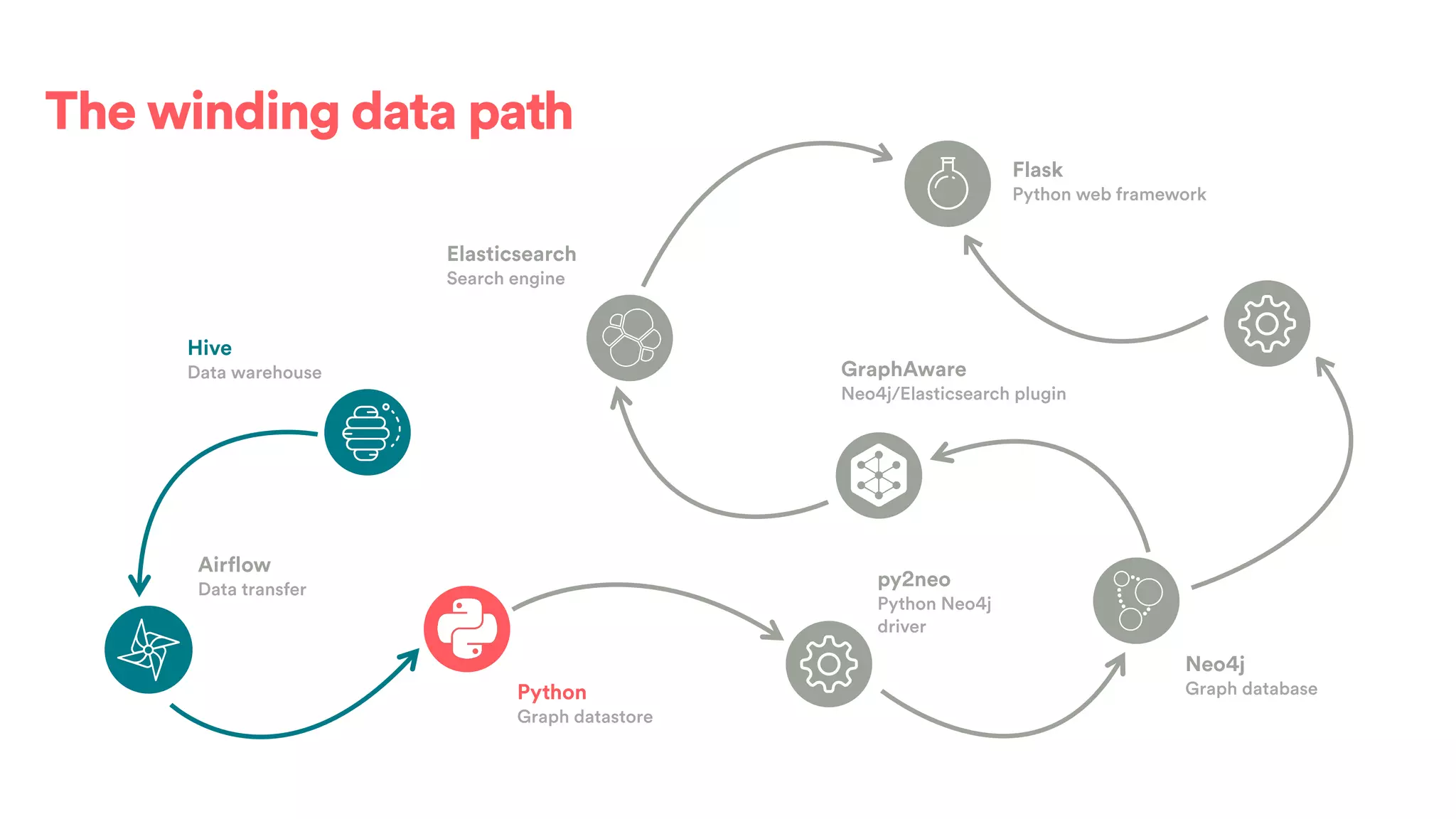

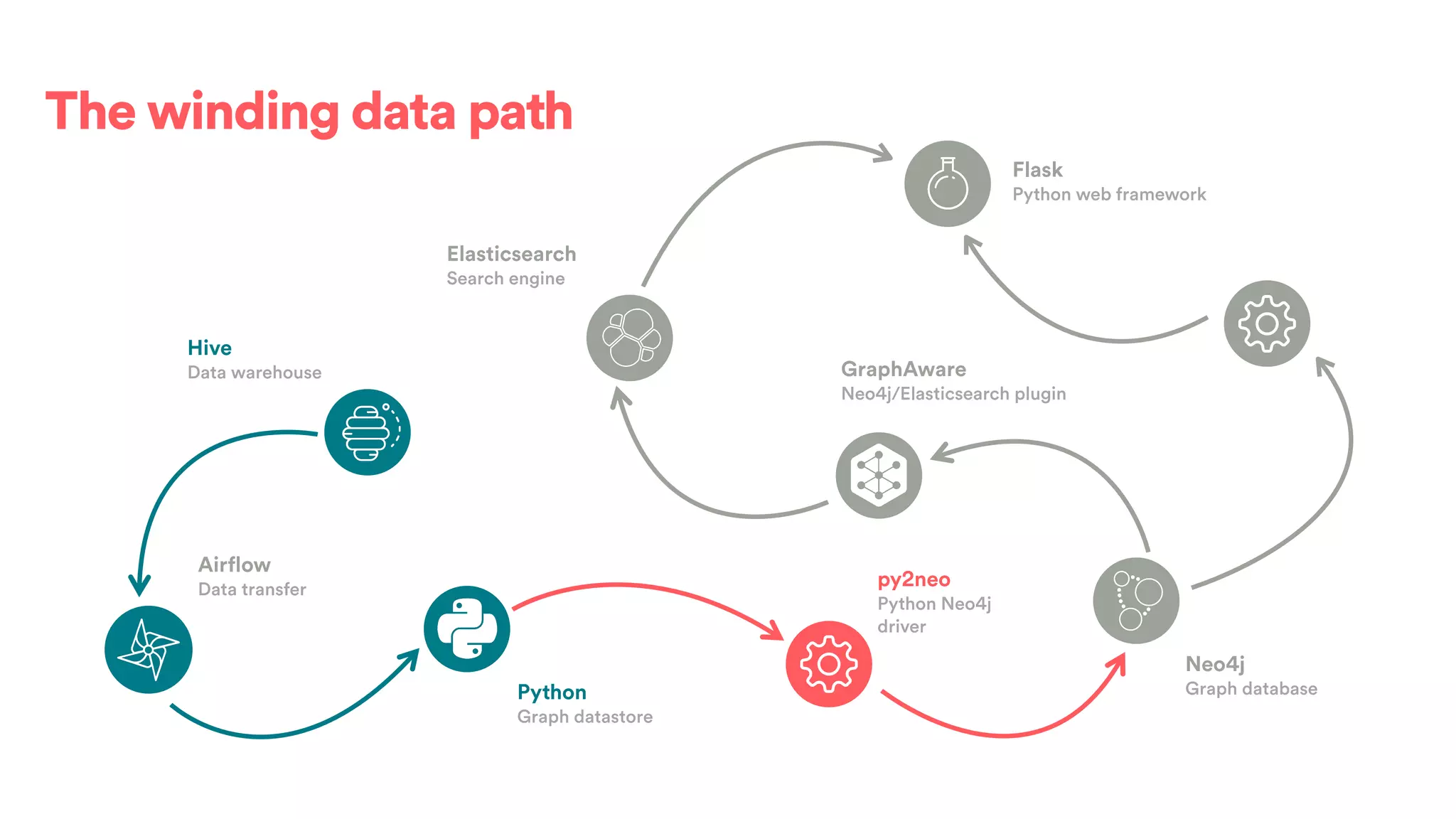

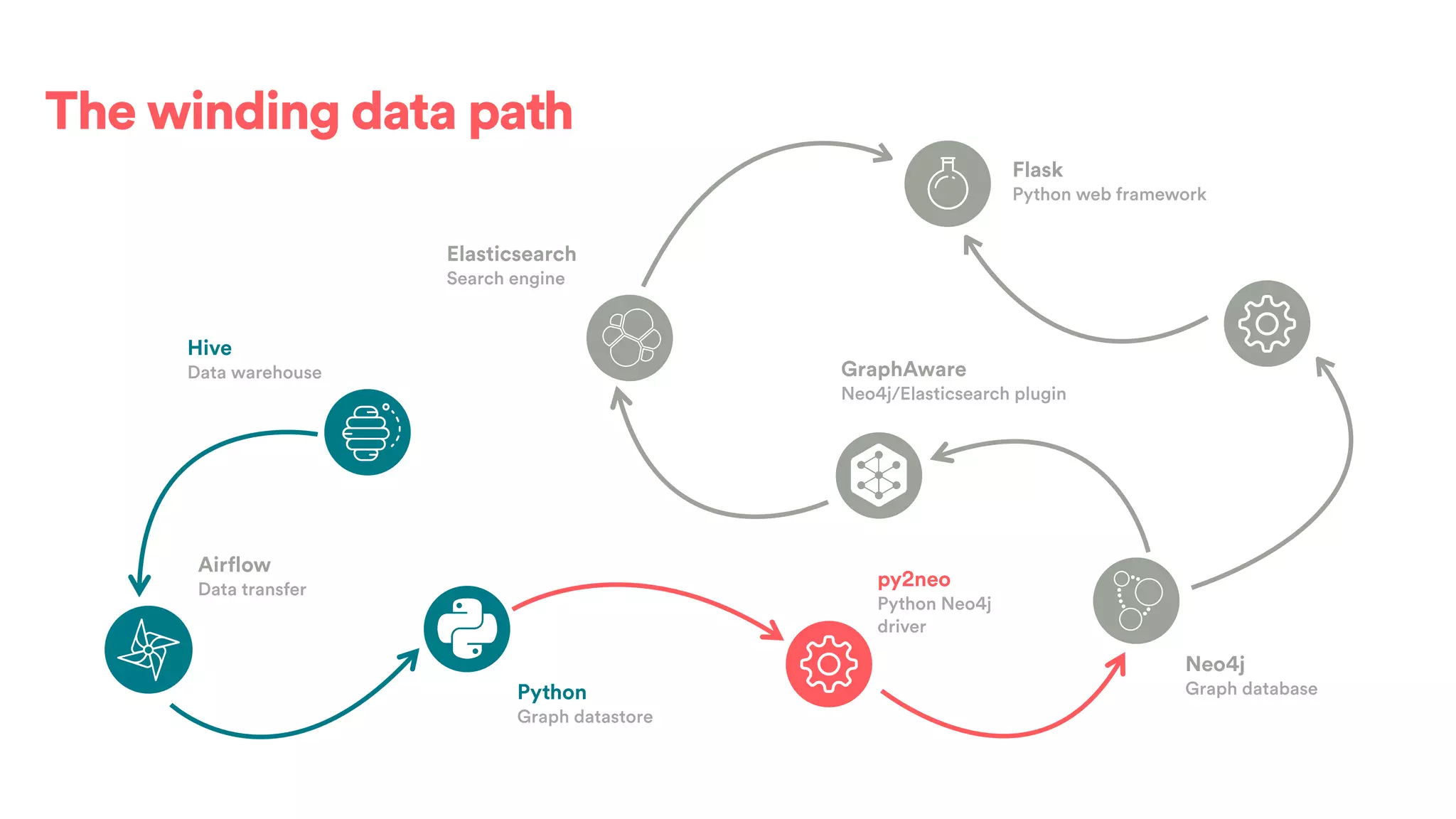

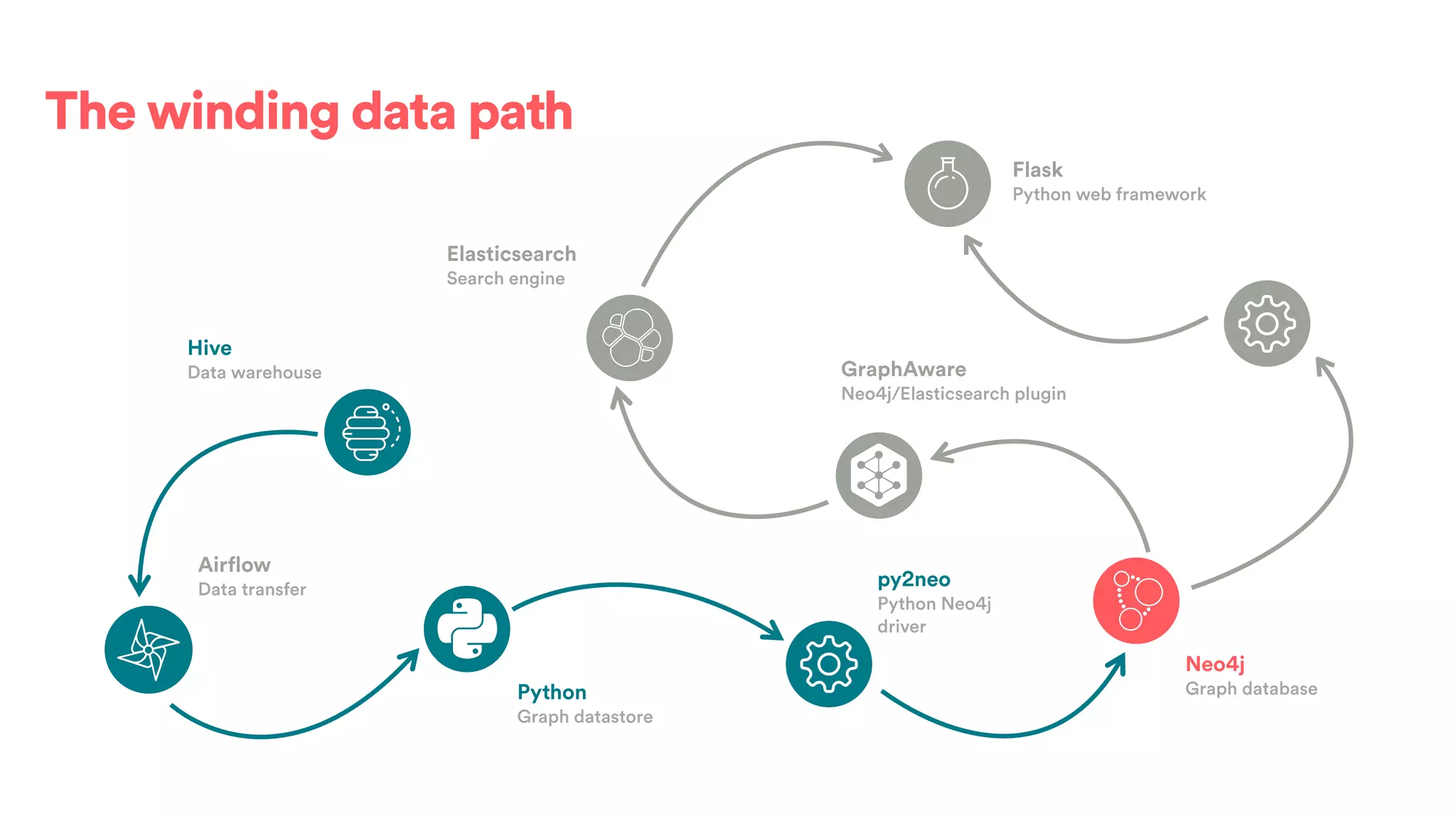

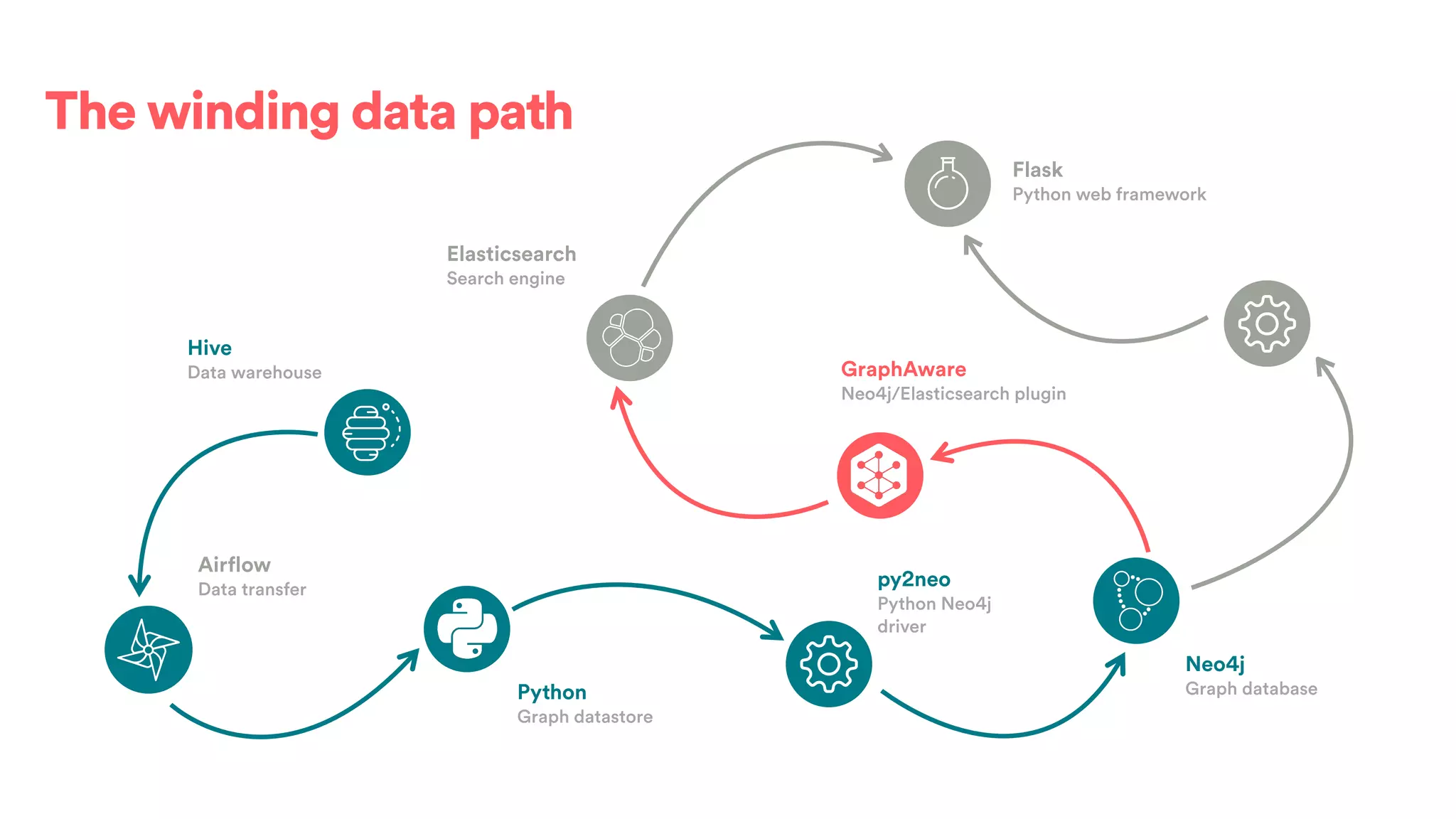

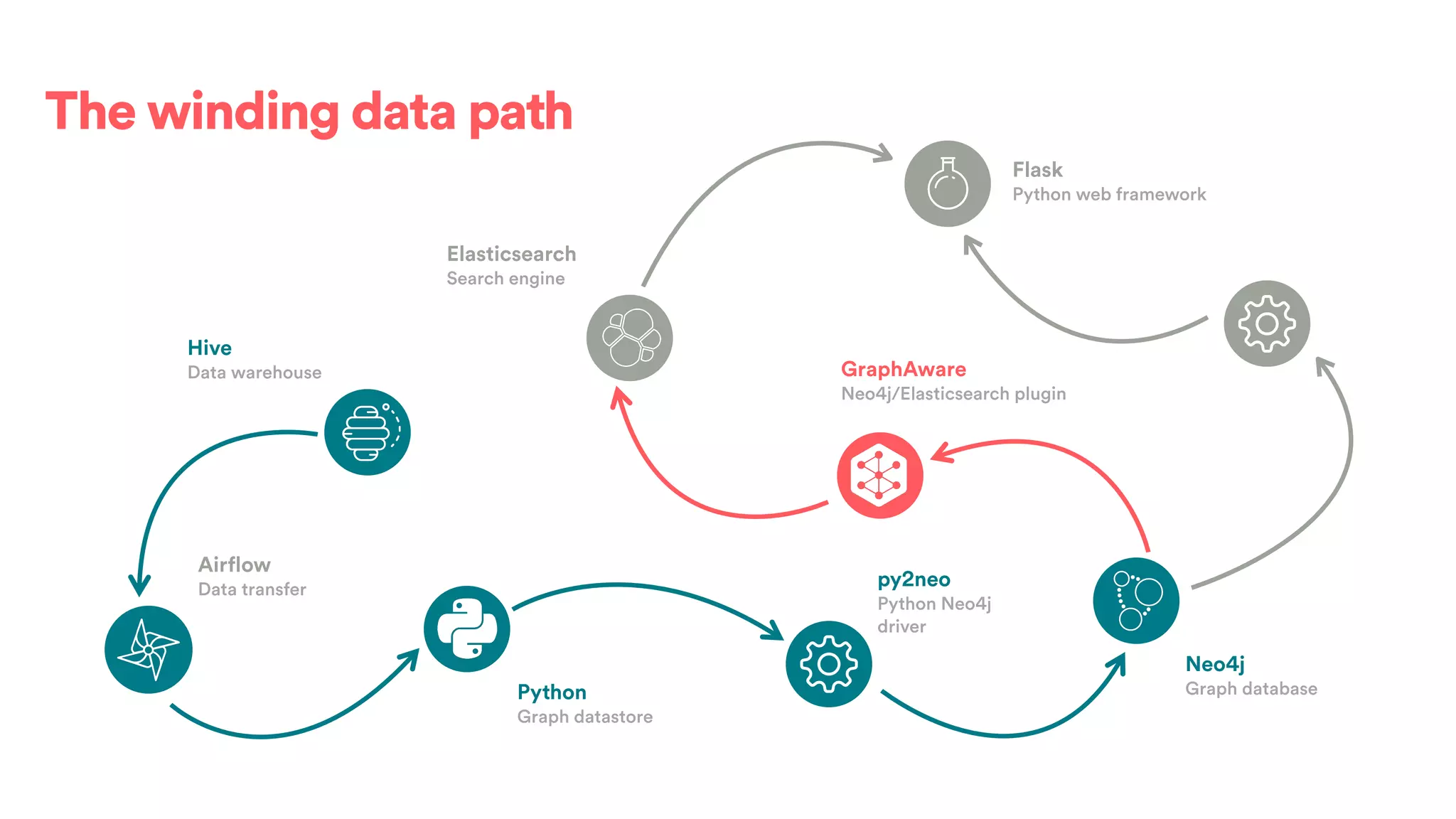

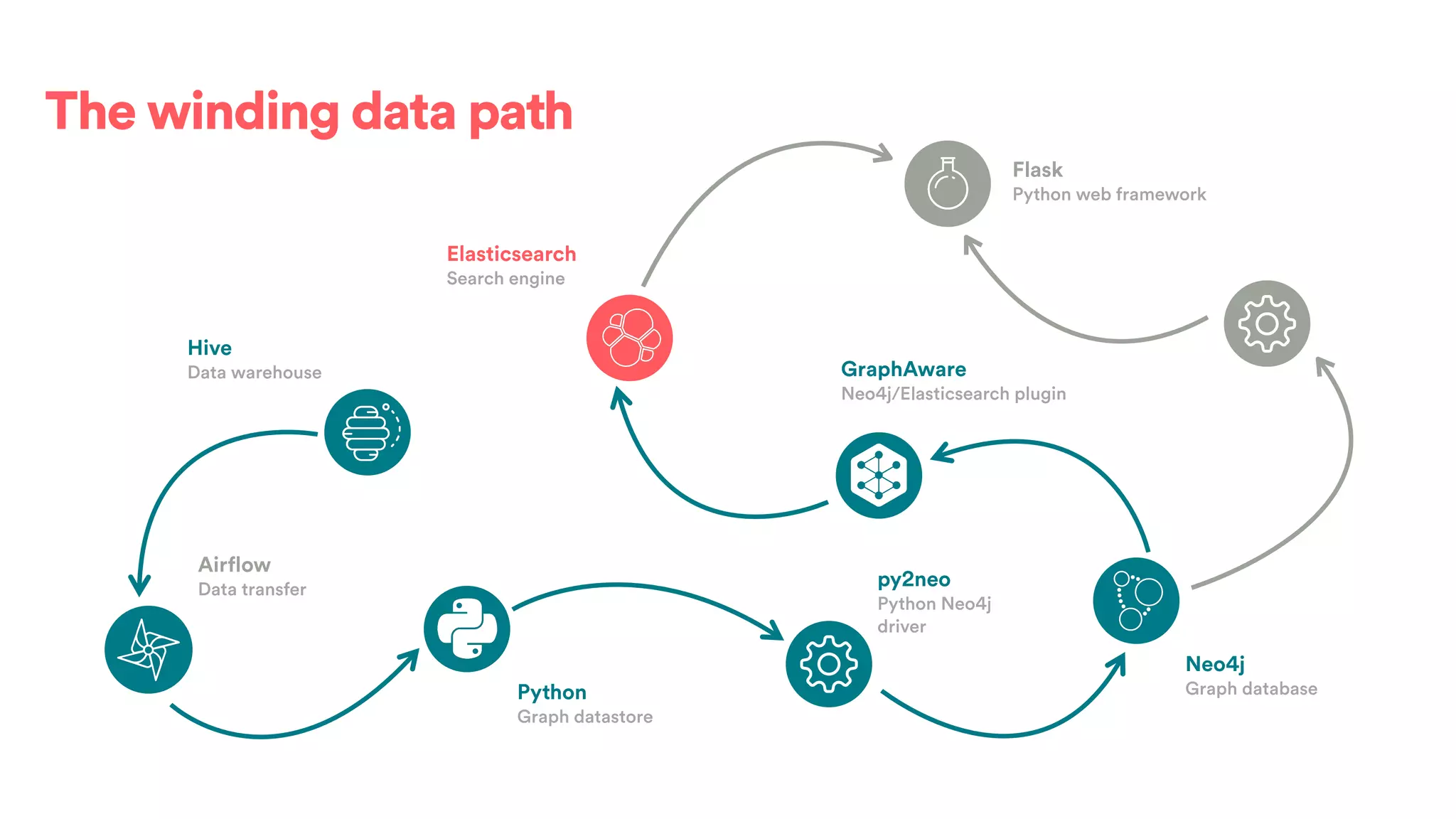

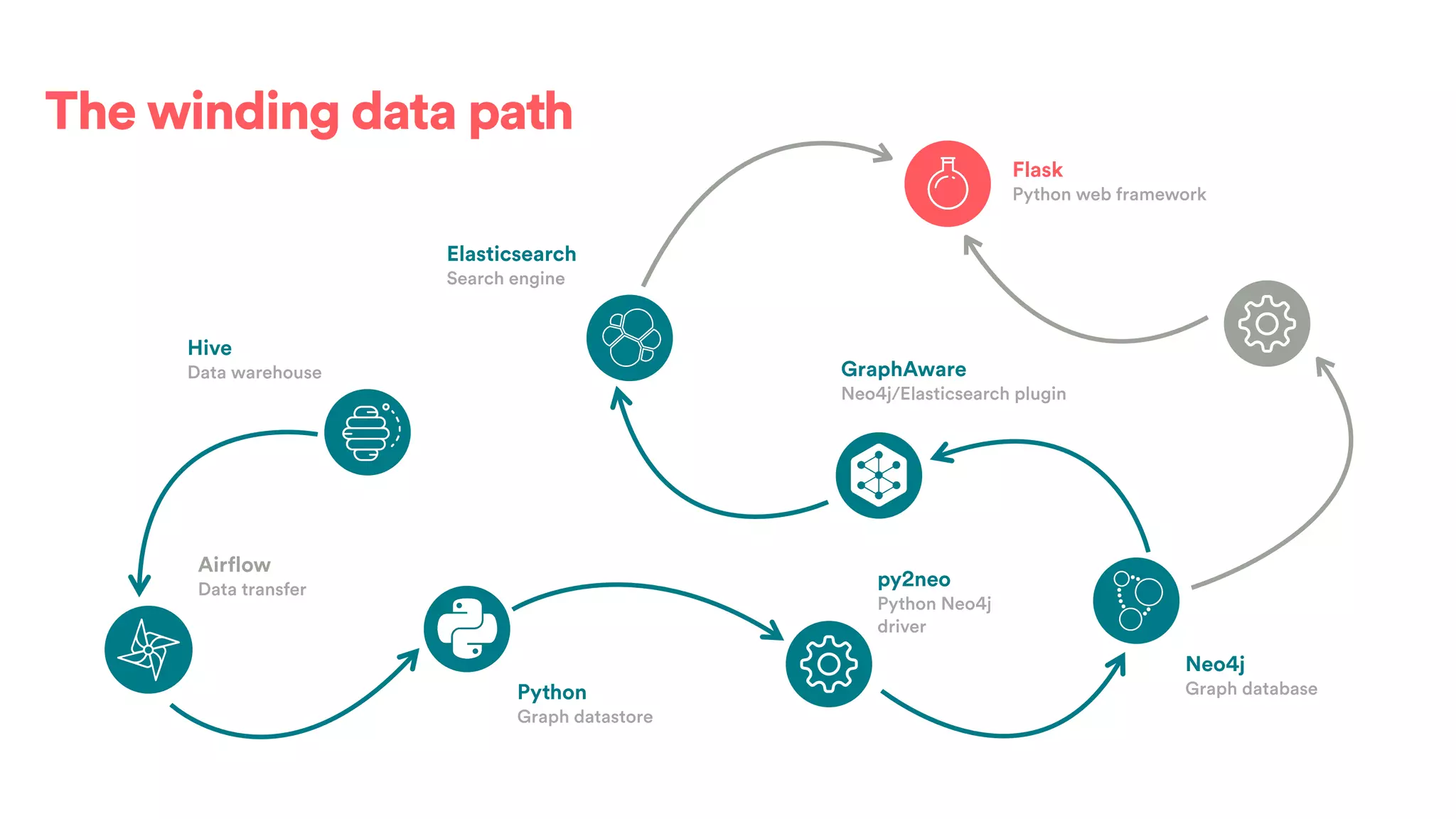

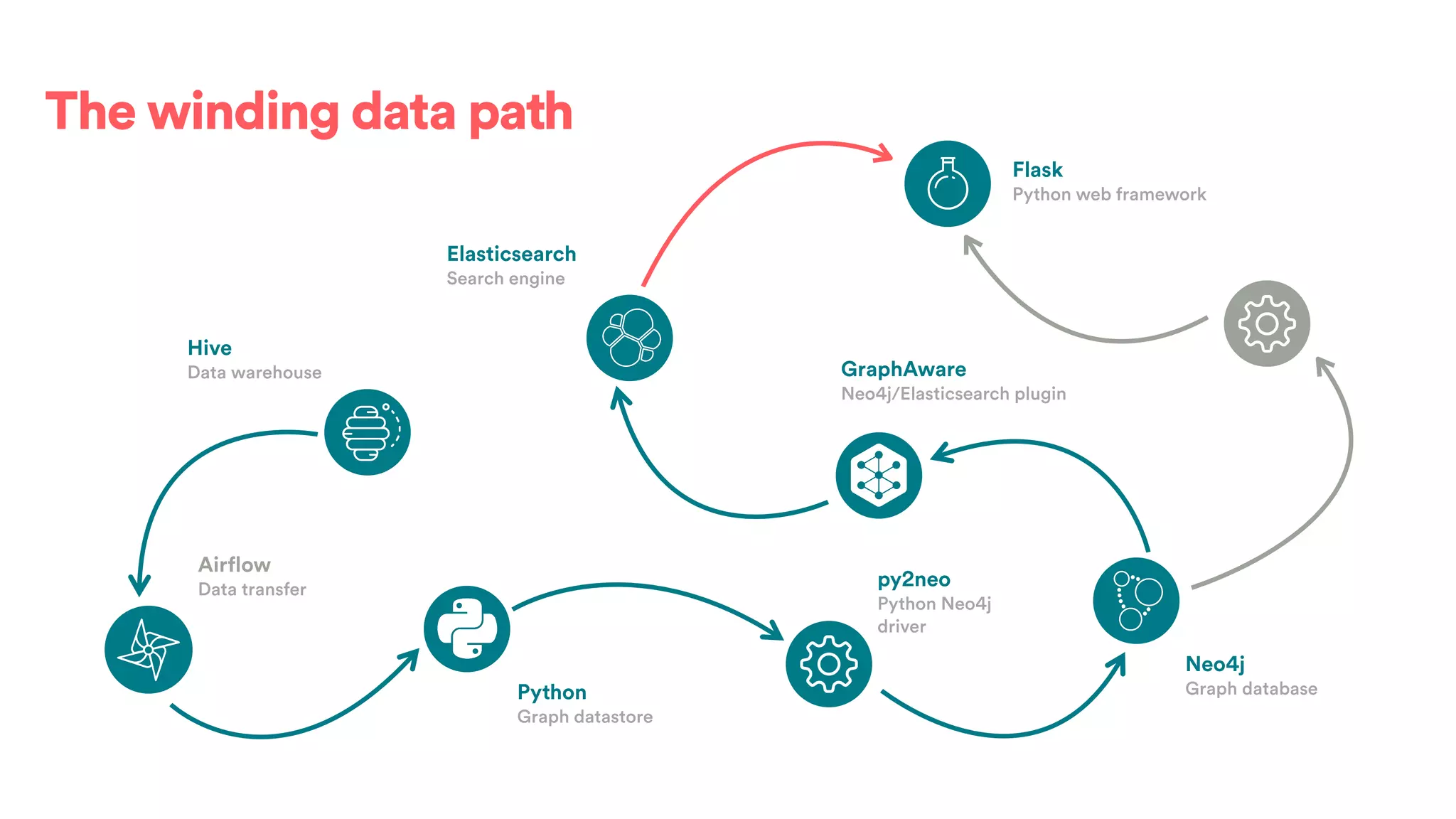

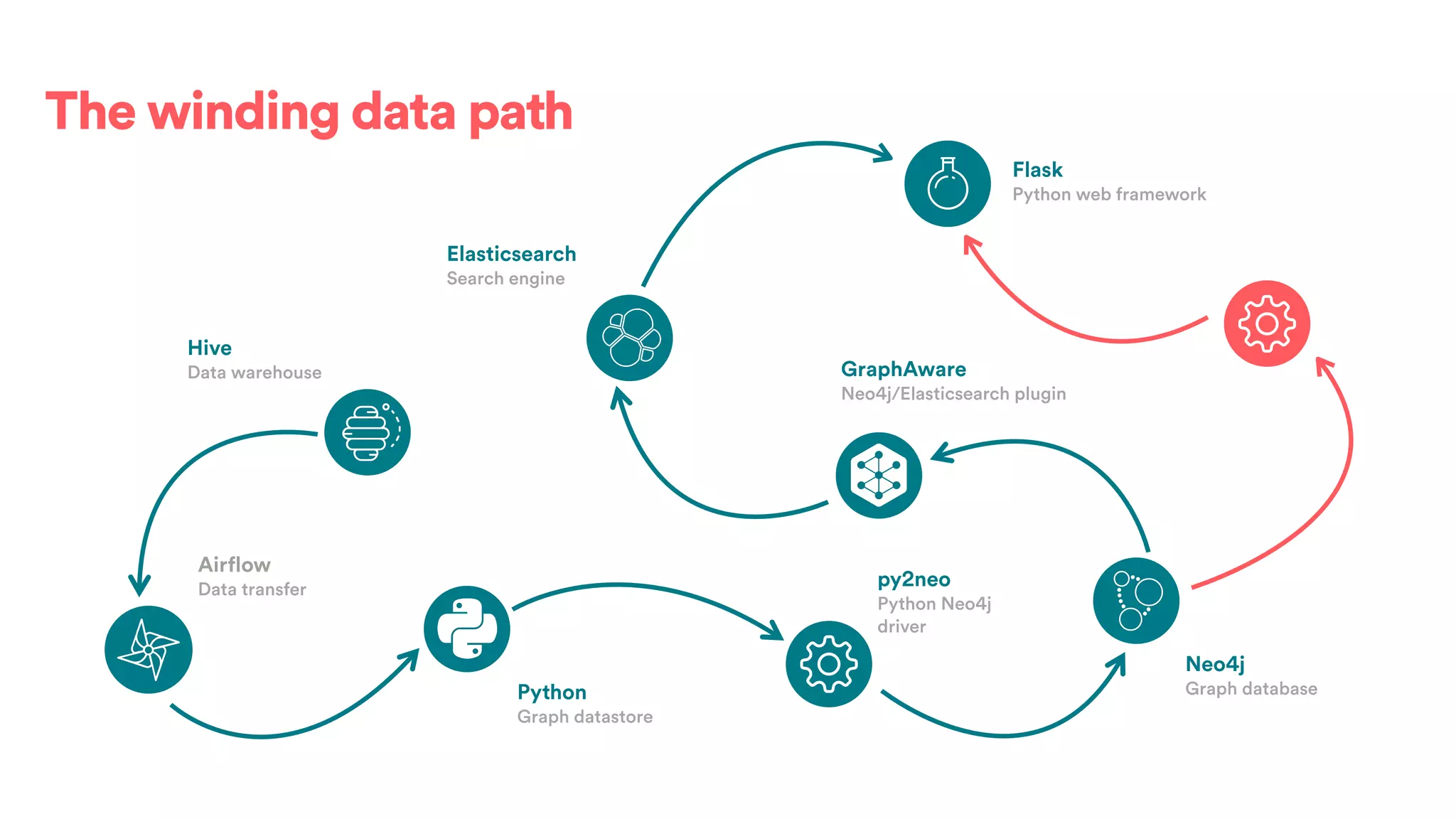

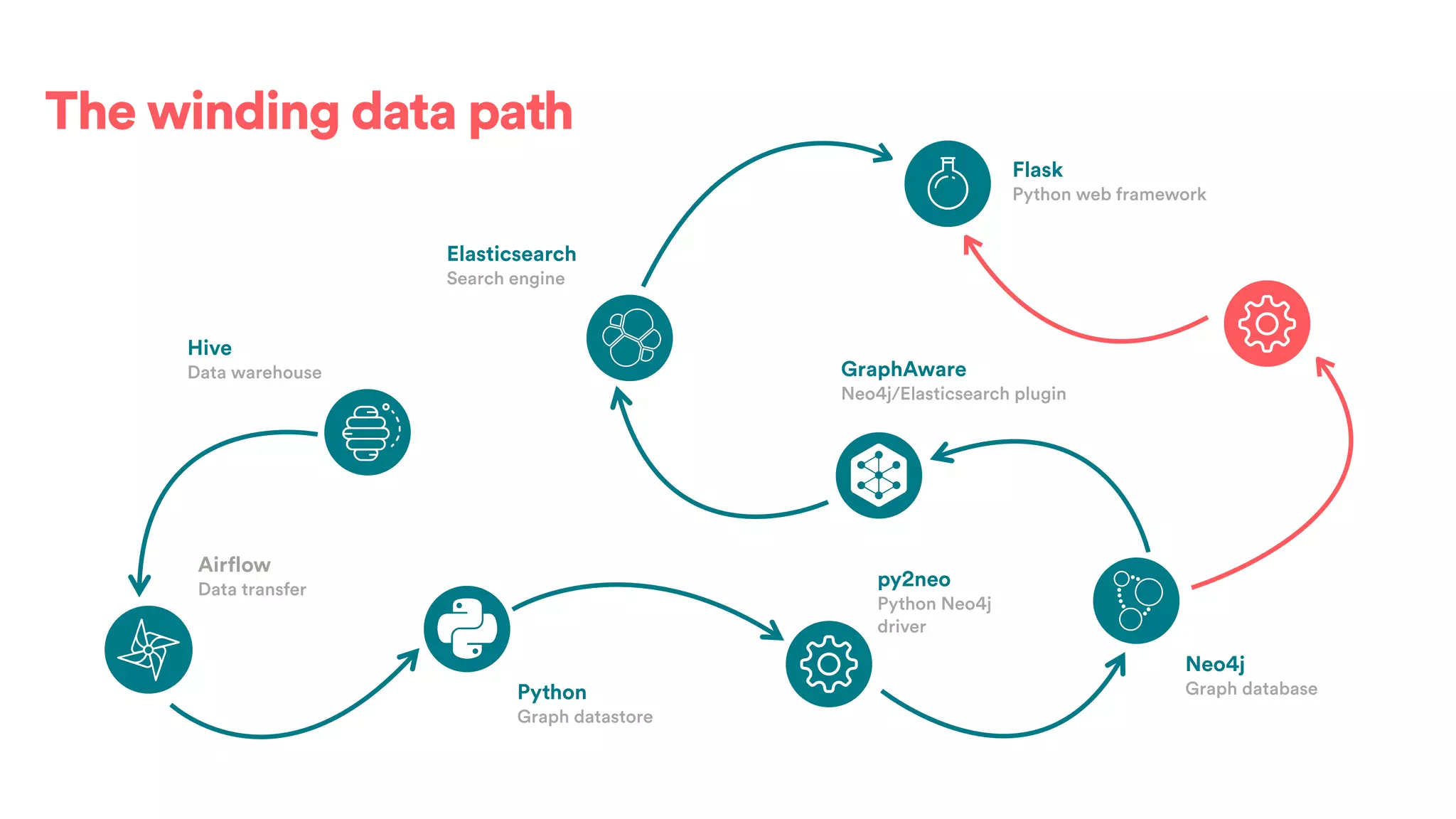

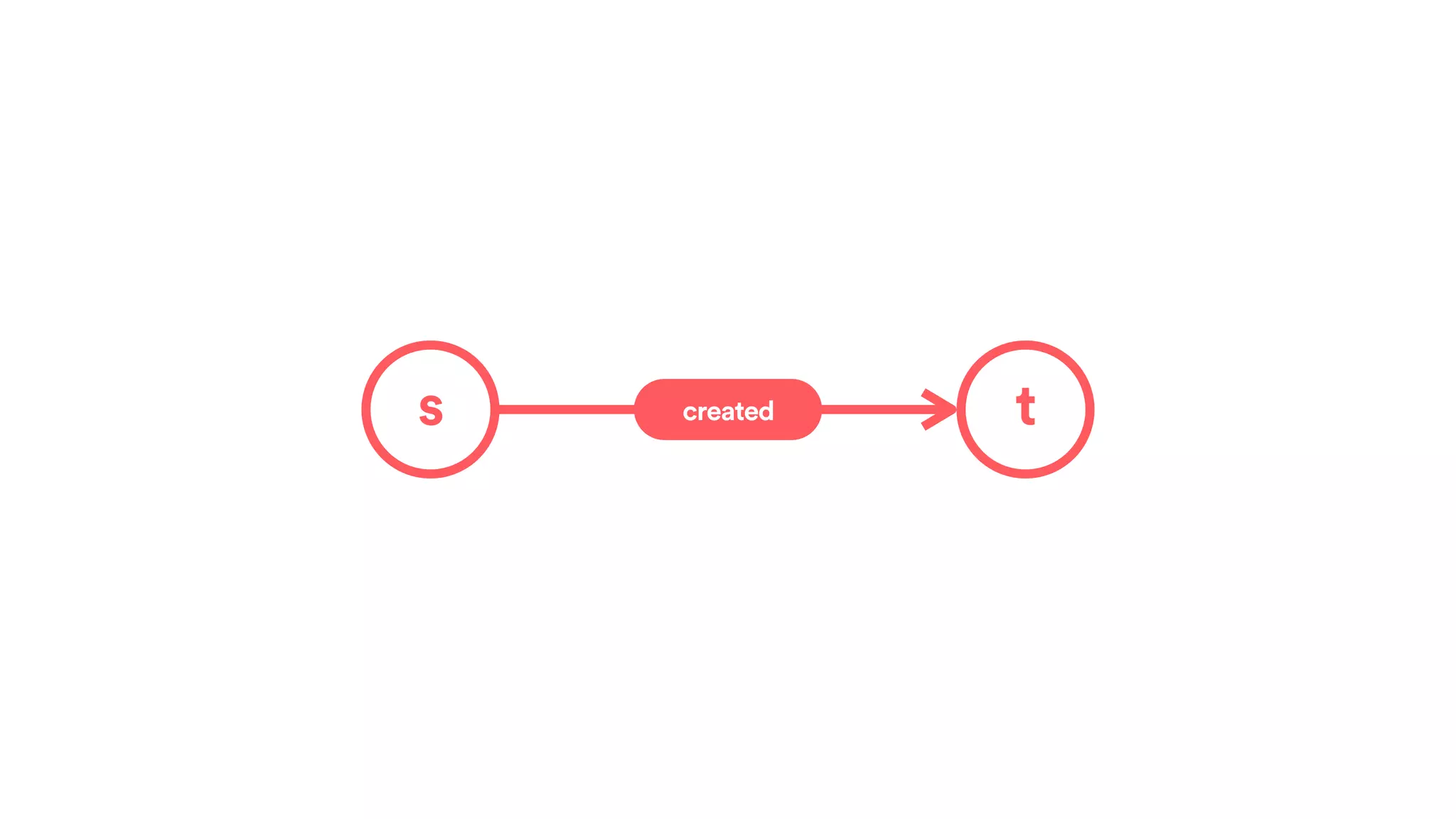

This presentation describes how Airbnb scales tribal knowledge by building a graph database of the company's data resources. Using Airflow, metadata on more than 6,000 charts, dashboards, experiments, and other data assets is collected from various source systems and loaded into a Neo4j graph database. The graph is then indexed in Elasticsearch for fast search, so employees can explore how data resources relate to one another and find the ones relevant to them.
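The pipeline summarized above has two parts: resources become nodes in a graph linked by typed relationships, and the same metadata goes into a search index. The Python below is a hypothetical in-memory miniature of that idea, not Airbnb's implementation and not the Neo4j or Elasticsearch API. The `Graph` class stands in for the graph database, `build_index`/`search` stand in for the search engine, and the sample nodes and the `USES` relationship type are invented for illustration. Only `CREATED` appears in the slides.

```python
import re
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class Node:
    """A data resource (user, chart, table, ...) with searchable metadata."""
    node_id: str
    kind: str
    name: str
    description: str = ""


@dataclass
class Graph:
    """Toy property graph (a stand-in for Neo4j): nodes by id plus typed, directed edges."""
    nodes: dict = field(default_factory=dict)
    edges: list = field(default_factory=list)  # (source_id, rel_type, target_id)

    def add_node(self, node: Node) -> None:
        self.nodes[node.node_id] = node

    def add_edge(self, source_id: str, rel_type: str, target_id: str) -> None:
        self.edges.append((source_id, rel_type, target_id))

    def neighbors(self, node_id: str):
        """Yield (rel_type, other_id) for edges touching node_id in either direction."""
        for s, r, t in self.edges:
            if s == node_id:
                yield r, t
            elif t == node_id:
                yield r, s


def tokenize(text: str) -> list:
    """Lowercase word tokens; underscores stay inside tokens (e.g. table names)."""
    return re.findall(r"\w+", text.lower())


def build_index(graph: Graph) -> dict:
    """Inverted index token -> node ids (a stand-in for Elasticsearch)."""
    index = defaultdict(set)
    for node in graph.nodes.values():
        for tok in tokenize(node.name + " " + node.description):
            index[tok].add(node.node_id)
    return index


def search(index: dict, query: str) -> set:
    """Ids of nodes matching every query token (AND semantics)."""
    results = None
    for tok in tokenize(query):
        hits = set(index.get(tok, set()))  # copy so callers cannot mutate the index
        results = hits if results is None else results & hits
    return results or set()


# Hypothetical sample data.
g = Graph()
g.add_node(Node("u1", "user", "alice"))
g.add_node(Node("c1", "chart", "Weekly bookings", "bookings by market"))
g.add_node(Node("t1", "table", "fct_bookings", "fact table of bookings"))
g.add_edge("u1", "CREATED", "c1")
g.add_edge("c1", "USES", "t1")  # hypothetical relationship type
idx = build_index(g)
```

With this data, `search(idx, "bookings")` finds both the chart and the table, `search(idx, "weekly bookings")` narrows to the chart, and `g.neighbors("c1")` walks from the chart to its creator and the table it uses. This is the "search, then explore relationships" flow the summary describes.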

![(s:Entity)-[r:CREATED]->(t:Entity)](https://image.slidesharecdn.com/2017-01-08-scalingtribalknowledge-170209020857/75/2017-01-08-scaling-tribalknowledge-72-2048.jpg)
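The slide's Cypher pattern `(s:Entity)-[r:CREATED]->(t:Entity)` matches every directed `CREATED` relationship from one `Entity` node to another. In Neo4j it would appear in a query such as `MATCH (s:Entity)-[r:CREATED]->(t:Entity) RETURN s, r, t`. As a rough sketch of what that match means, the Python below applies the same filter to a plain in-memory node and edge list. The node ids, the extra labels, and the `VIEWED` relationship type are hypothetical sample data, not taken from the slides.

```python
from typing import Dict, List, Set, Tuple

# Hypothetical sample data: node id -> set of labels.
labels: Dict[str, Set[str]] = {
    "alice": {"Entity", "User"},
    "bob": {"Entity", "User"},
    "chart_1": {"Entity", "Chart"},
    "dash_1": {"Entity", "Dashboard"},
    "tmp": {"Scratch"},  # not an Entity, so edges to it must not match
}

# Directed, typed relationships: (source, type, target).
edges: List[Tuple[str, str, str]] = [
    ("alice", "CREATED", "chart_1"),
    ("alice", "CREATED", "tmp"),
    ("alice", "VIEWED", "dash_1"),  # hypothetical relationship type
    ("bob", "CREATED", "dash_1"),
]


def match_pattern(src_label: str, rel_type: str, dst_label: str) -> List[Tuple[str, str, str]]:
    """Triples equivalent to (s:src_label)-[r:rel_type]->(t:dst_label)."""
    return [
        (s, r, t)
        for s, r, t in edges
        if r == rel_type
        and src_label in labels.get(s, set())
        and dst_label in labels.get(t, set())
    ]


created = match_pattern("Entity", "CREATED", "Entity")
```

The direction matters: only edges whose source carries the left-hand label and whose target carries the right-hand label qualify, which mirrors the `->` arrow in the Cypher pattern.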