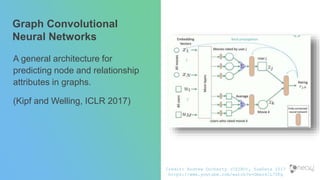

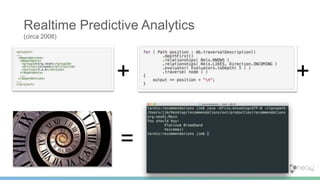

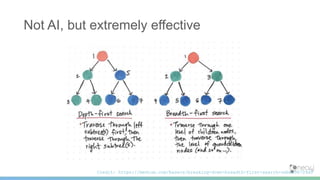

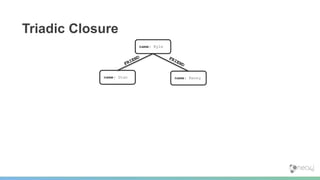

This presentation gives an overview of graphs for artificial intelligence and machine learning. It opens with definitions of machine learning and AI and with common techniques such as predictive analytics, transfer learning, and human-like AI, then shows how graph databases and graph algorithms apply to domains like social networks, knowledge graphs, and recommender systems. It examines specific graph concepts (triadic closure, structural balance, and graph partitioning) and explores emerging areas such as graph neural networks, graph convolutional networks, and graph structures for causal models. Its central argument is that representing data as a graph and applying graph algorithms to it can produce intelligent behavior without general human-level artificial intelligence.

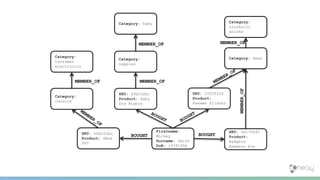

![(d)-[:BOUGHT]->()-[:MEMBER_OF]->(n)
(d)-[:BOUGHT]->()-[:MEMBER_OF]->(b)
(d)-[:BOUGHT]->()-[:MEMBER_OF]->(c)
Flatten the graph](https://image.slidesharecdn.com/jimkeynote-190215154344/85/Graphs-for-AI-ML-Jim-Webber-Neo4j-20-320.jpg)

![(d:Person)-[:BOUGHT]->()-[:MEMBER_OF]->(n:Category)
(d:Person)-[:BOUGHT]->()-[:MEMBER_OF]->(b:Category)
(d:Person)-[:BOUGHT]->()-[:MEMBER_OF]->(c:Category)
Include any labels](https://image.slidesharecdn.com/jimkeynote-190215154344/85/Graphs-for-AI-ML-Jim-Webber-Neo4j-21-320.jpg)

![MATCH (d:Person)-[:BOUGHT]->()-[:MEMBER_OF]->(n:Category),
      (d:Person)-[:BOUGHT]->()-[:MEMBER_OF]->(b:Category)
Add a MATCH clause](https://image.slidesharecdn.com/jimkeynote-190215154344/85/Graphs-for-AI-ML-Jim-Webber-Neo4j-22-320.jpg)

![MATCH (d:Person)-[:BOUGHT]->()-[:MEMBER_OF]->(n:Category),
      (d:Person)-[:BOUGHT]->()-[:MEMBER_OF]->(b:Category),
      (c:Category)
WHERE NOT((d)-[:BOUGHT]->()-[:MEMBER_OF]->(c))
Constrain the Pattern](https://image.slidesharecdn.com/jimkeynote-190215154344/85/Graphs-for-AI-ML-Jim-Webber-Neo4j-23-320.jpg)

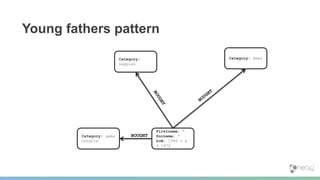

![MATCH (d:Person)-[:BOUGHT]->()-[:MEMBER_OF]->(n:Category),
      (d:Person)-[:BOUGHT]->()-[:MEMBER_OF]->(b:Category),
      (c:Category)
WHERE n.category = "nappies" AND
      b.category = "beer" AND
      c.category = "console" AND
      NOT((d)-[:BOUGHT]->()-[:MEMBER_OF]->(c))
Add property constraints](https://image.slidesharecdn.com/jimkeynote-190215154344/85/Graphs-for-AI-ML-Jim-Webber-Neo4j-24-320.jpg)

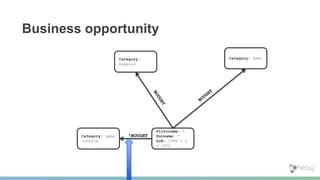

![MATCH (d:Person)-[:BOUGHT]->()-[:MEMBER_OF]->(n:Category),
      (d:Person)-[:BOUGHT]->()-[:MEMBER_OF]->(b:Category),
      (c:Category)
WHERE n.category = "nappies" AND
      b.category = "beer" AND
      c.category = "console" AND
      NOT((d)-[:BOUGHT]->()-[:MEMBER_OF]->(c))
RETURN DISTINCT d AS daddy
Profit!](https://image.slidesharecdn.com/jimkeynote-190215154344/85/Graphs-for-AI-ML-Jim-Webber-Neo4j-25-320.jpg)

![==> +---------------------------------------------+
==> | daddy                                       |
==> +---------------------------------------------+
==> | Node[15]{name:"Rory Williams",dob:19880121} |
==> +---------------------------------------------+
==> 1 row
==> 0 ms
==>
neo4j-sh (0)$
Results](https://image.slidesharecdn.com/jimkeynote-190215154344/85/Graphs-for-AI-ML-Jim-Webber-Neo4j-26-320.jpg)
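The pattern assembled over the preceding slides (a person who bought something in the "nappies" category and something in the "beer" category, but nothing in the "console" category) can also be traced by hand on a tiny in-memory graph. The following is only a minimal Python sketch of that matching logic, not how Neo4j evaluates the query. The purchase data, product names, and helper functions are hypothetical; only the category names and the matching person's name are taken from the slides.

```python
# Hypothetical toy data standing in for the graph on the slides.
# BOUGHT maps a person to the products they bought;
# MEMBER_OF maps a product to its category.
BOUGHT = {
    "Rory Williams": {"nappy pack", "lager"},
    "Person B": {"nappy pack", "lager", "games console"},
    "Person C": {"lager"},
}
MEMBER_OF = {
    "nappy pack": "nappies",
    "lager": "beer",
    "games console": "console",
}


def categories_bought(person):
    """Follow (person)-[:BOUGHT]->()-[:MEMBER_OF]->(category)."""
    return {MEMBER_OF[product] for product in BOUGHT[person]}


def match(required, excluded):
    """People with a purchase in every required category and none in an excluded one."""
    return sorted(
        person
        for person in BOUGHT
        if required <= categories_bought(person)
        and not (excluded & categories_bought(person))
    )


result = match(required={"nappies", "beer"}, excluded={"console"})
print(result)
```

The `required` set plays the role of the two positive patterns in the `MATCH` clause, and `excluded` plays the role of the `NOT(...)` predicate in the `WHERE` clause.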

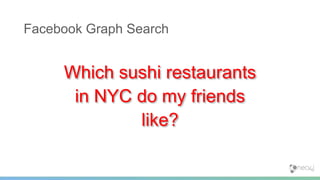

![Simple Query, Intelligent Results
MATCH (:Person {name: 'Jim'})
      -[:IS_FRIEND_OF]->(:Person)
      -[:LIKES]->(restaurant:Restaurant)
      -[:LOCATED_IN]->(:Place {location: 'New York'}),
      (restaurant)-[:SERVES]->(:Cuisine {cuisine: 'Sushi'})
RETURN restaurant](https://image.slidesharecdn.com/jimkeynote-190215154344/85/Graphs-for-AI-ML-Jim-Webber-Neo4j-30-320.jpg)
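To see why such a short query yields a useful recommendation, it can help to walk the same path by hand: from Jim to his friends, to the restaurants they like, keeping only those located in New York that serve sushi. The Python sketch below does this over a small edge list. The friends, restaurants, and helper names are hypothetical; only the relationship types and the values 'Jim', 'New York', and 'Sushi' come from the slide.

```python
# Hypothetical toy graph as (source, relationship type, target) triples.
EDGES = [
    ("Jim", "IS_FRIEND_OF", "Friend 1"),
    ("Jim", "IS_FRIEND_OF", "Friend 2"),
    ("Friend 1", "LIKES", "Restaurant X"),
    ("Friend 2", "LIKES", "Restaurant Y"),
    ("Friend 2", "LIKES", "Restaurant Z"),
    ("Restaurant X", "LOCATED_IN", "New York"),
    ("Restaurant Y", "LOCATED_IN", "London"),
    ("Restaurant Z", "LOCATED_IN", "New York"),
    ("Restaurant X", "SERVES", "Sushi"),
    ("Restaurant Y", "SERVES", "Sushi"),
    ("Restaurant Z", "SERVES", "Italian"),
]


def out(node, rel_type):
    """Targets reachable from node over one outgoing relationship of rel_type."""
    return {dst for src, rel, dst in EDGES if src == node and rel == rel_type}


def recommend(person, location, cuisine):
    """Restaurants liked by a friend of person, in location, serving cuisine."""
    found = set()
    for friend in out(person, "IS_FRIEND_OF"):
        for restaurant in out(friend, "LIKES"):
            in_place = location in out(restaurant, "LOCATED_IN")
            serves = cuisine in out(restaurant, "SERVES")
            if in_place and serves:
                found.add(restaurant)
    # Unlike the Cypher query, which returns one row per matching path,
    # this sketch de-duplicates the restaurants.
    return sorted(found)


recommendations = recommend("Jim", "New York", "Sushi")
print(recommendations)
```

Restaurant Y fails the location test and Restaurant Z fails the cuisine test, so only Restaurant X survives both constraints.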

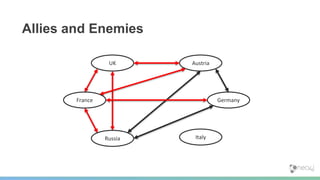

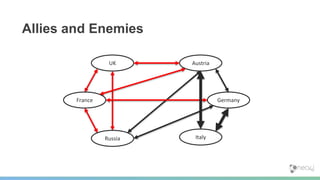

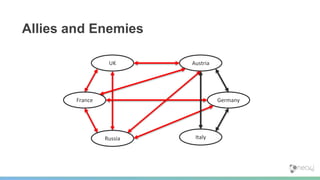

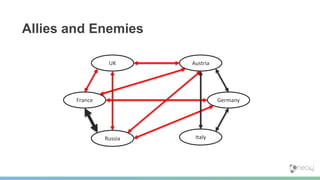

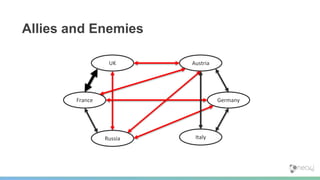

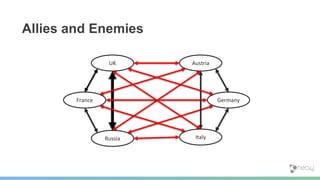

![Predicting WWI
[Easley and Kleinberg]](https://image.slidesharecdn.com/jimkeynote-190215154344/85/Graphs-for-AI-ML-Jim-Webber-Neo4j-47-320.jpg)
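The summary above lists structural balance among the ideas covered, and Easley and Kleinberg discuss it in the context of pre-war alliances. As a rough illustration of the underlying check, the Python sketch below treats every pair of nodes as either friendly (+1) or hostile (-1) and calls a triangle balanced when the product of its three signs is positive, that is, when it has zero or two hostile edges. The node names, sign values, and function names are hypothetical and do not represent historical data.

```python
from itertools import combinations


def is_balanced_triangle(signs):
    """True if the three edge signs (+1 friendly, -1 hostile) multiply to +1."""
    product = 1
    for s in signs:
        product *= s
    return product > 0


def unbalanced_triangles(nodes, sign):
    """List the unbalanced triangles of a complete signed graph.

    sign maps frozenset({u, v}) to +1 or -1 for every pair of nodes.
    """
    bad = []
    for a, b, c in combinations(sorted(nodes), 3):
        signs = [sign[frozenset(pair)] for pair in ((a, b), (b, c), (a, c))]
        if not is_balanced_triangle(signs):
            bad.append((a, b, c))
    return bad


# Two hypothetical camps, {A, B} and {C, D}: friendly inside, hostile across.
nodes = {"A", "B", "C", "D"}
sign = {
    frozenset(("A", "B")): +1,
    frozenset(("A", "C")): -1,
    frozenset(("A", "D")): -1,
    frozenset(("B", "C")): -1,
    frozenset(("B", "D")): -1,
    frozenset(("C", "D")): +1,
}
two_camps = unbalanced_triangles(nodes, sign)

# If C and D fall out, every triangle containing both becomes all-hostile.
after_split = unbalanced_triangles(nodes, {**sign, frozenset(("C", "D")): -1})
print(two_camps, after_split)
```

A network split into two mutually hostile camps has no unbalanced triangles, which is the stable configuration the balance argument predicts.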

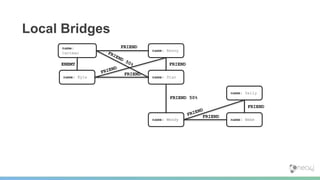

![“If a node A in a network satisfies the Strong Triadic Closure Property and is involved in at least two strong relationships, then any local bridge it is involved in must be a weak relationship.”
[Easley and Kleinberg]
Local Bridge Property](https://image.slidesharecdn.com/jimkeynote-190215154344/85/Graphs-for-AI-ML-Jim-Webber-Neo4j-52-320.jpg)
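The quoted property can be checked mechanically on a small network. The Python sketch below assumes the usual definitions: a node satisfies Strong Triadic Closure if every pair of its strong neighbours is connected by some relationship (strong or weak), and a relationship is a local bridge if its two endpoints have no neighbour in common. The network, the tie strengths, and the function names are hypothetical.

```python
from itertools import combinations

# Hypothetical undirected network: each relationship is "strong" or "weak".
TIES = {
    frozenset(("A", "B")): "weak",
    frozenset(("A", "C")): "strong",
    frozenset(("A", "D")): "strong",
    frozenset(("C", "D")): "weak",
    frozenset(("B", "E")): "strong",
}


def neighbours(node):
    """All nodes sharing a relationship with node."""
    return {other for tie in TIES if node in tie for other in tie if other != node}


def strong_neighbours(node):
    """Neighbours connected to node by a strong relationship."""
    return {n for n in neighbours(node) if TIES[frozenset((node, n))] == "strong"}


def satisfies_stc(node):
    """Strong Triadic Closure: every pair of strong neighbours is connected."""
    pairs = combinations(sorted(strong_neighbours(node)), 2)
    return all(frozenset(pair) in TIES for pair in pairs)


def local_bridges(node):
    """Neighbours reached over a local bridge (no common neighbour with node)."""
    return {n for n in neighbours(node) if not (neighbours(node) & neighbours(n))}


def property_holds(node):
    """Check the quoted claim for one node; trivially true if its premise fails."""
    if not satisfies_stc(node) or len(strong_neighbours(node)) < 2:
        return True
    return all(TIES[frozenset((node, n))] == "weak" for n in local_bridges(node))


print(satisfies_stc("A"), local_bridges("A"), property_holds("A"))
```

In this toy network A has strong relationships with C and D, and its only local bridge, to B, is weak. If the A-B relationship were strong instead, A would have strong neighbours B and C with no relationship between them, so A would no longer satisfy Strong Triadic Closure; that contradiction is the reasoning behind the claim.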