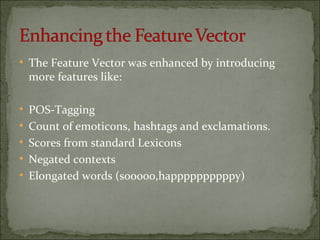

This project classifies tweets as positive, negative, or neutral using a linear SVM classifier. A total of 9684 tweets were analyzed; after preprocessing, this yielded 7875 training tweets and 8011 test tweets. The final model achieved 64% accuracy on the test set, with an F-measure of 0.6163.
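To make the two reported metrics concrete, here is a minimal sketch of how accuracy and an F-measure could be computed for a three-class tweet classifier. The summary does not say how the F-measure was averaged across classes, so macro-averaging is an assumption here, and the function names and the toy labels are hypothetical, not taken from the project.

```python
from collections import Counter

# Class names taken from the summary; everything else is illustrative.
LABELS = ("positive", "negative", "neutral")


def accuracy(gold, pred):
    """Fraction of tweets whose predicted label equals the gold label."""
    if len(gold) != len(pred) or not gold:
        raise ValueError("gold and pred must be non-empty and equally long")
    return sum(g == p for g, p in zip(gold, pred)) / len(gold)


def macro_f_measure(gold, pred, labels=LABELS):
    """Unweighted mean of per-class F1 scores (assumed averaging scheme)."""
    tp, fp, fn = Counter(), Counter(), Counter()
    for g, p in zip(gold, pred):
        if g == p:
            tp[g] += 1
        else:
            fp[p] += 1  # predicted class p, but it was wrong
            fn[g] += 1  # gold class g was missed
    scores = []
    for label in labels:
        predicted = tp[label] + fp[label]
        actual = tp[label] + fn[label]
        precision = tp[label] / predicted if predicted else 0.0
        recall = tp[label] / actual if actual else 0.0
        total = precision + recall
        scores.append(2 * precision * recall / total if total else 0.0)
    return sum(scores) / len(scores)


if __name__ == "__main__":
    # Toy labels for illustration only, not data from the project.
    gold = ["positive", "negative", "neutral", "neutral", "positive"]
    pred = ["positive", "neutral", "neutral", "neutral", "negative"]
    print(f"accuracy: {accuracy(gold, pred):.4f}")
    print(f"macro F-measure: {macro_f_measure(gold, pred):.4f}")
```

Macro-averaging weights each class equally, so a class the model rarely predicts correctly pulls the score down even when overall accuracy looks reasonable. If the project used a weighted or micro average instead, the figure would be computed differently.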