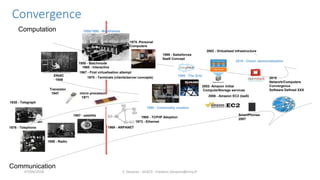

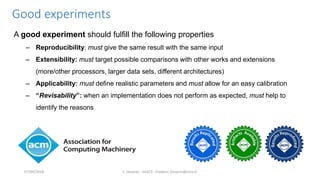

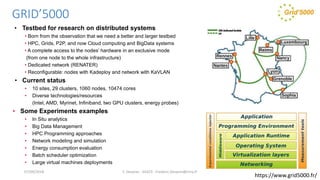

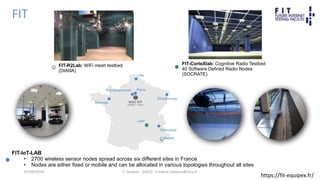

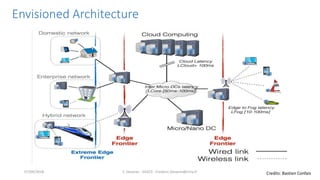

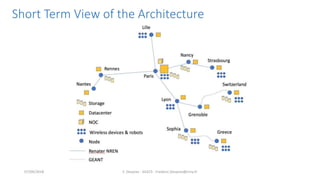

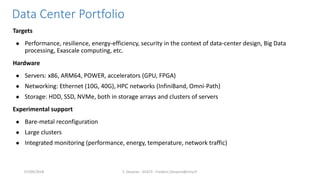

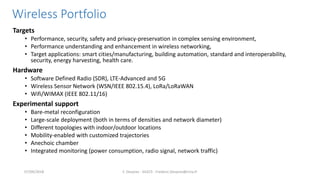

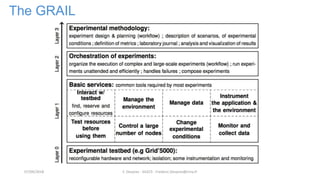

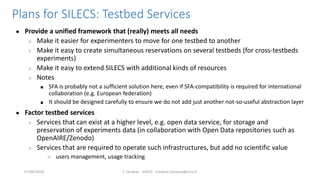

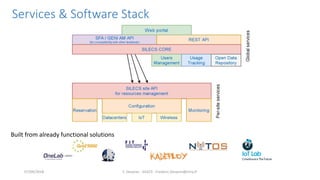

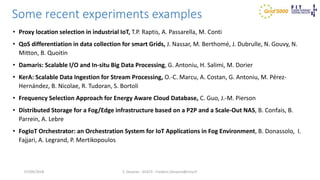

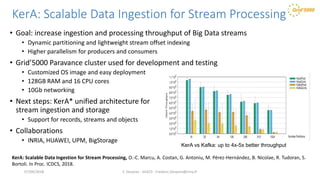

The document outlines the SILECS project, which aims to build a super infrastructure for large-scale experimental computer science, giving researchers a way to run experiments across diverse technologies and resources. It covers the FIT and Grid'5000 infrastructures and their capabilities, and argues that better testbeds are needed for distributed systems, IoT solutions, and big data applications. It also emphasizes experimental principles such as reproducibility and extensibility, and describes recent experiments and developments in several areas of computer science.