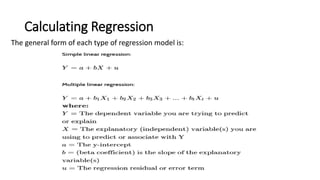

Regression analysis is a statistical technique for estimating the relationship between variables. It quantifies the strength and character of the association between a dependent variable and one or more independent variables. Regression models are used across disciplines such as finance, economics, and investing to help explain phenomena and predict outcomes.
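
To make the idea of "quantifying the strength and character" of an association concrete, here is a minimal sketch of the simplest case: one independent variable fitted by ordinary least squares. The function name `fit_simple_regression` and the data points are made up for illustration and do not come from any real dataset. The slope describes the character of the relationship (direction and size), and R-squared is one common measure of its strength.

```python
def fit_simple_regression(x, y):
    """Fit y ~ intercept + slope * x by ordinary least squares.

    Returns (slope, intercept, r_squared). Hypothetical helper for
    illustration only; assumes x and y are equal-length sequences of
    numbers with at least two distinct x values.
    """
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n

    # Sums of squares and cross-products around the means.
    s_xx = sum((xi - mean_x) ** 2 for xi in x)
    s_yy = sum((yi - mean_y) ** 2 for yi in y)
    s_xy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))

    slope = s_xy / s_xx                   # change in y per unit change in x
    intercept = mean_y - slope * mean_x   # predicted y when x is 0
    r_squared = (s_xy ** 2) / (s_xx * s_yy)  # share of variance in y explained
    return slope, intercept, r_squared


# Invented example data: x is the independent variable, y the dependent one.
x = [1.0, 2.0, 3.0, 4.0, 5.0]
y = [2.1, 3.9, 6.2, 7.8, 10.1]

slope, intercept, r_squared = fit_simple_regression(x, y)
print(f"slope={slope:.2f}, intercept={intercept:.2f}, R^2={r_squared:.4f}")

# Use the fitted line to predict an outcome for a new x value.
predicted = intercept + slope * 6.0
print(f"predicted y at x=6: {predicted:.2f}")
```

In this toy data the slope is close to 2, meaning y rises by about two units for each unit increase in x, and R-squared is close to 1, meaning a straight line accounts for almost all of the variation in y. Real analyses typically rely on a statistics library and also check the model's assumptions before trusting such numbers.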