This startup

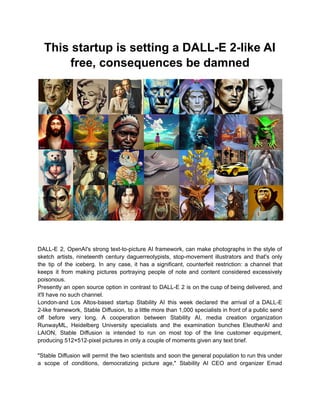

This startup is setting a DALL-E 2-like AI free, consequences be damned

DALL-E 2, OpenAI's powerful text-to-image AI system, can create images in the style of cartoonists, 19th-century daguerreotypists, stop-motion animators and more. But it has an important, artificial limitation: a filter that prevents it from creating images depicting public figures and content deemed too toxic.

Now an open source alternative to DALL-E 2 is on the cusp of being released, and it will have no such filter.

London- and Los Altos-based startup Stability AI this week announced the release of a DALL-E 2-like system, Stable Diffusion, to just over 1,000 researchers ahead of a public launch in the coming weeks. A collaboration between Stability AI, media creation company RunwayML, Heidelberg University researchers and the research groups EleutherAI and LAION, Stable Diffusion is designed to run on most high-end consumer hardware, generating 512×512-pixel images in just a few seconds given any text prompt.

"Stable Diffusion will allow both researchers and soon the public to run this under a range of conditions, democratizing image generation," Stability AI CEO and founder Emad Mostaque wrote in a blog post. "We look forward to the open ecosystem that will emerge around this and further models to truly explore the boundaries of latent space."

But Stable Diffusion's lack of safeguards compared with systems like DALL-E 2 poses tricky ethical questions for the AI community. Even if the results aren't perfectly convincing yet, making fake images of public figures opens a large can of worms. And making the raw components of the system freely available leaves the door open to bad actors who could train them on subjectively inappropriate content, like pornography and graphic violence.

Creating Stable Diffusion

Stable Diffusion is the brainchild of Mostaque. Having graduated from Oxford with a master's in mathematics and computer science, Mostaque served as an analyst at various hedge funds before shifting gears to more public-facing works. In 2019, he co-founded Symmitree, a project that aimed to reduce the cost of smartphones and internet access for people living in impoverished communities. And in 2020, Mostaque was the chief architect of Collective and Augmented Intelligence Against COVID-19, an alliance to help policymakers make decisions in the face of the pandemic by leveraging software.

He co-founded Stability AI in 2020, motivated both by a personal fascination with AI and by what he characterized as a lack of organization within the open source AI community.

"Nobody has any voting rights except our 75 employees: no billionaires, big funds, governments or anyone else with control of the company or the communities we support. We're completely independent," Mostaque told TechCrunch in an email. "We plan to use our compute to accelerate open source, foundational AI."
Mostaque says that Stability AI funded the creation of LAION 5B, an open source, 250-terabyte dataset containing 5.6 billion images scraped from the internet. ("LAION" stands for Large-scale Artificial Intelligence Open Network, a nonprofit organization with the goal of making AI, datasets and code available to the public.) The company also worked with the LAION group to create a subset of LAION 5B called LAION-Aesthetics, which contains AI-filtered images ranked as particularly "beautiful" by testers of Stable Diffusion.

Stable Diffusion builds on research incubated at OpenAI as well as Runway and Google Brain, one of Google's AI R&D divisions. The system was trained on text-image pairs from LAION-Aesthetics to learn the associations between written concepts and images, like how the word "bird" can refer not only to bluebirds but also to parakeets and bald eagles, as well as to more abstract notions.

At runtime, Stable Diffusion, like DALL-E 2, breaks the image generation process down into a process of "diffusion." It starts with pure noise and refines an image over time, making it incrementally closer to a given text description until there's no noise left at all.

Stability AI used a cluster of 4,000 Nvidia A100 GPUs running in AWS to train Stable Diffusion over the course of a month. CompVis, the machine vision and learning research group at Ludwig Maximilian University of Munich, oversaw the training, while Stability AI donated the compute power.

Stable Diffusion can run on graphics cards with around 5GB of VRAM. That's roughly the capacity of mid-range cards like Nvidia's GTX 1660, priced around $230. Work is underway on bringing compatibility to AMD's MI200 data center cards and even MacBooks with Apple's M1 chip (although in the case of the latter, without GPU acceleration, image generation will take as long as a few minutes).

"We have optimized the model, compressing the knowledge of over 100 terabytes of images," Mostaque said. "Variants of this model will be on smaller datasets, particularly as reinforcement learning with human feedback and other techniques are used to take these general digital brains and make them even smaller and focused."

For the past few weeks, Stability AI has allowed a limited number of users to query the Stable Diffusion model through its Discord server, slowly increasing the maximum number of queries to stress-test the system. Stability AI says that more than 15,000 testers have used Stable Diffusion to create 2 million images a day.

"We will give more details of our sustainable business model soon with our official launch, but it is basically the commercial open source software playbook: services and scale infrastructure," Mostaque said. "We think AI will go the way of servers and databases, with open beating proprietary systems, particularly given the passion of our communities."

With the hosted version of Stable Diffusion (the one available through Stability AI's Discord server), Stability AI doesn't permit every kind of image generation.
The startup's terms of service ban some lewd or sexual material (although not scantily clad figures), hateful or violent imagery (such as antisemitic iconography, racist caricatures, misogynistic and misandrist propaganda), prompts containing copyrighted or trademarked material, and personal information like phone numbers and Social Security numbers. But while Stability AI has implemented a keyword filter in the server similar to OpenAI's, which prevents the model from even attempting to generate an image that might violate the usage policy, it appears to be more permissive than most.

Stability AI also doesn't have a policy against images with public figures. That presumably makes deepfakes fair game (and Renaissance-style paintings of famous rappers), though the model struggles with faces at times, introducing odd artifacts that a skilled Photoshop artist rarely would.

"Our benchmark models that we release are based on general web crawls and are designed to represent the collective imagery of humanity compressed into files a few gigabytes big," Mostaque said. "Aside from illegal content, there is minimal filtering, and it is on the user to use it as they will."
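
For readers curious what a prompt-level keyword filter of the kind mentioned above might look like, here is a minimal Python sketch. It is an illustration only: the article does not describe how Stability AI's or OpenAI's filters are actually built, and the blocklist, `is_prompt_allowed` and `submit_prompt` are hypothetical names invented for this example.

```python
import re
from typing import FrozenSet

# Hypothetical blocklist. The real terms used by any hosted service
# are not given in the article; these are placeholders.
BLOCKED_TERMS: FrozenSet[str] = frozenset({"gore", "ssn"})


def is_prompt_allowed(prompt: str, blocked: FrozenSet[str] = BLOCKED_TERMS) -> bool:
    """Return False if any blocked term appears as a whole word in the prompt."""
    # Lowercase the prompt and split it into simple word tokens.
    words = set(re.findall(r"[a-z0-9']+", prompt.lower()))
    return words.isdisjoint(blocked)


def submit_prompt(prompt: str) -> str:
    """Reject a prompt before it ever reaches the image model (assumed flow)."""
    if not is_prompt_allowed(prompt):
        return "rejected"
    return "queued"
```

A filter this simple checks only the words in the prompt, not the image that comes out, so a rephrased prompt can slip past it. That limitation is one plausible reason a keyword filter can end up more permissive than intended.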

Potentially more problematic are the soon-to-be-released tools for creating custom and fine-tuned Stable Diffusion models. An "AI furry porn generator" profiled by Vice offers a preview of what might come; an art student going by the name of CuteBlack trained an image generator to churn out illustrations of anthropomorphic animal genitalia by scraping artwork from furry fandom sites. The possibilities don't stop at pornography. In theory, a malicious actor could fine-tune Stable Diffusion on images of riots and gore, for instance, or propaganda.

Already, testers in Stability AI's Discord server are using Stable Diffusion to generate a range of content disallowed by other image generation services, including images of the war in Ukraine, nude women, an imagined Chinese invasion of Taiwan and controversial depictions of religious figures like the Prophet Muhammad. Granted, some of these images are against Stability AI's own terms, but the company is currently relying on the community to flag violations. Many bear the telltale signs of an algorithmic creation, like disproportionate limbs and an incongruous mix of art styles. But others are passable at first glance. And the tech will continue to improve, presumably.

Mostaque acknowledged that the tools could be used by bad actors to create "really nasty stuff," and CompVis says that the public release of the benchmark Stable Diffusion model will "incorporate ethical considerations." But Mostaque argues that making the tools freely available allows the community to develop countermeasures.

"We hope to be the catalyst to coordinate global open source AI, both independent and academic, to build vital infrastructure, models and tools to maximize our collective potential," Mostaque said.
"This is amazing technology that can transform humanity for the better and should be open infrastructure for all."

Not everyone agrees, as evidenced by the controversy over "GPT-4chan," an AI model trained on one of 4chan's infamously toxic discussion boards. AI researcher Yannic Kilcher made GPT-4chan, which learned to output racist, antisemitic and misogynist hate speech, available earlier this year on Hugging Face, a hub for sharing trained AI models. Following discussions on social media and Hugging Face's comment section, the Hugging Face team first "gated" access to the model before removing it altogether, but not before it had been downloaded many times.

Meta's recent chatbot fiasco illustrates the challenge of keeping even ostensibly safe models from going off the rails. Just days after making its most advanced AI chatbot to date, BlenderBot 3, available on the web, Meta was forced to confront media reports that the bot made frequent antisemitic comments and repeated false