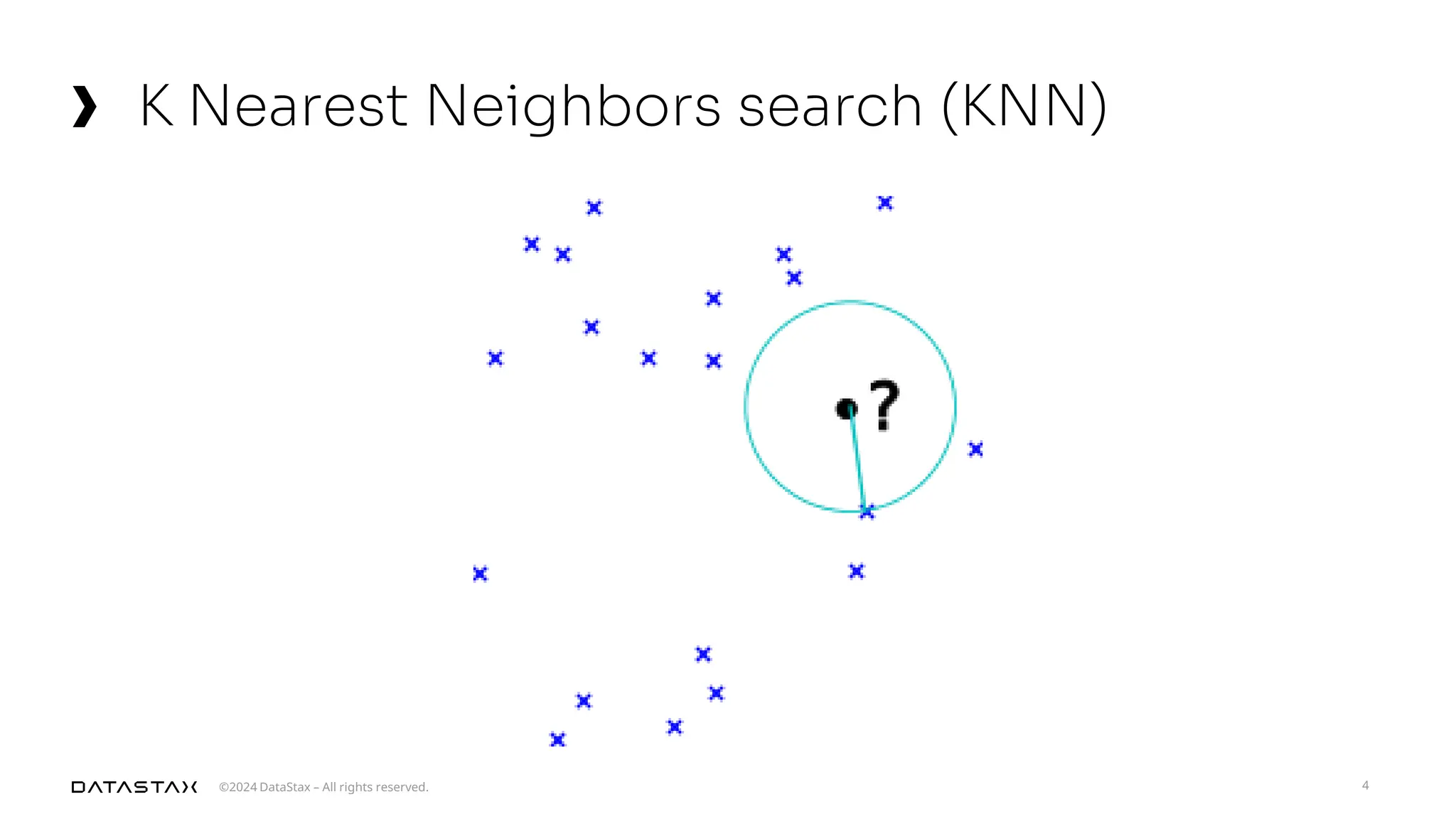

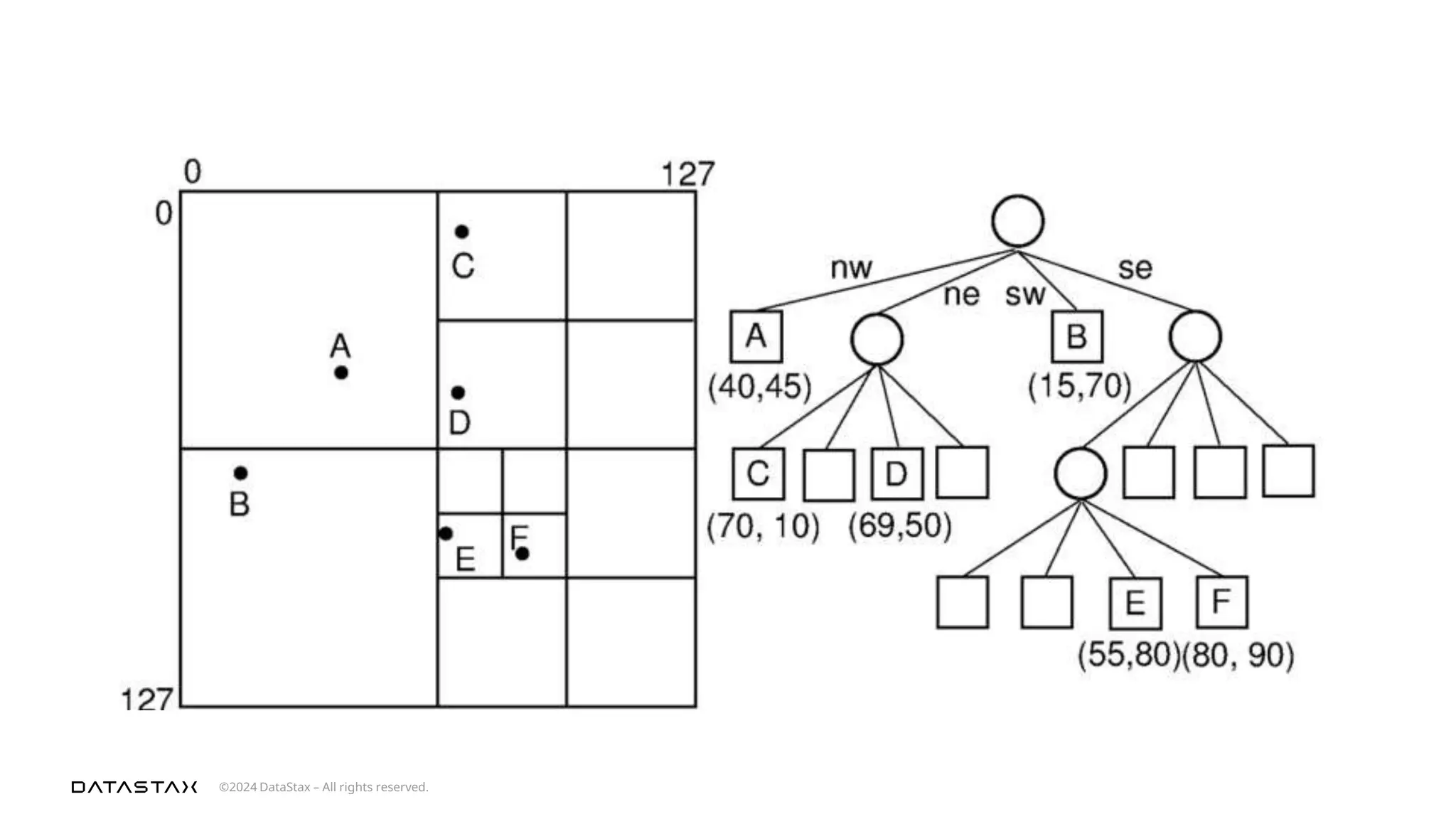

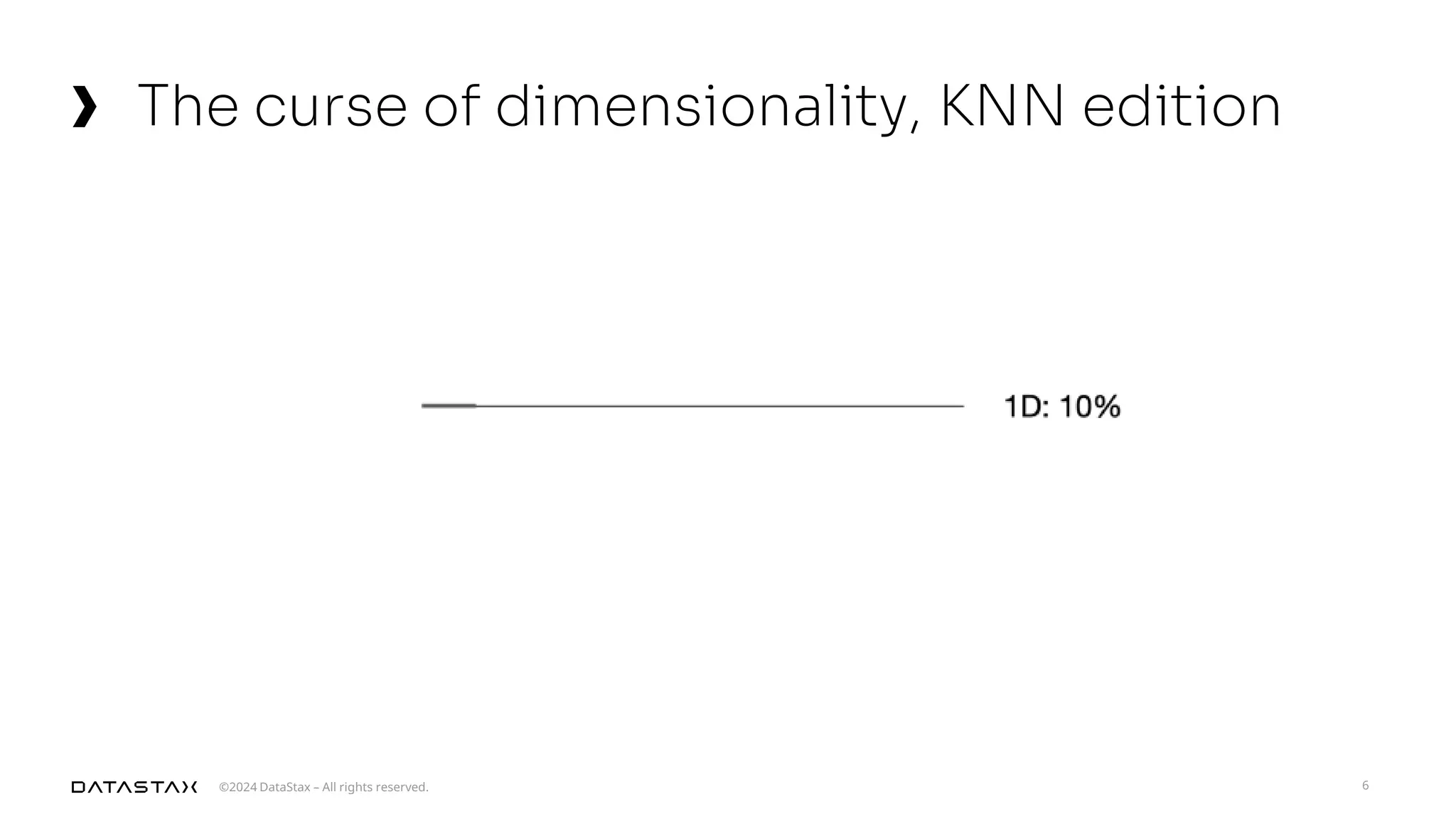

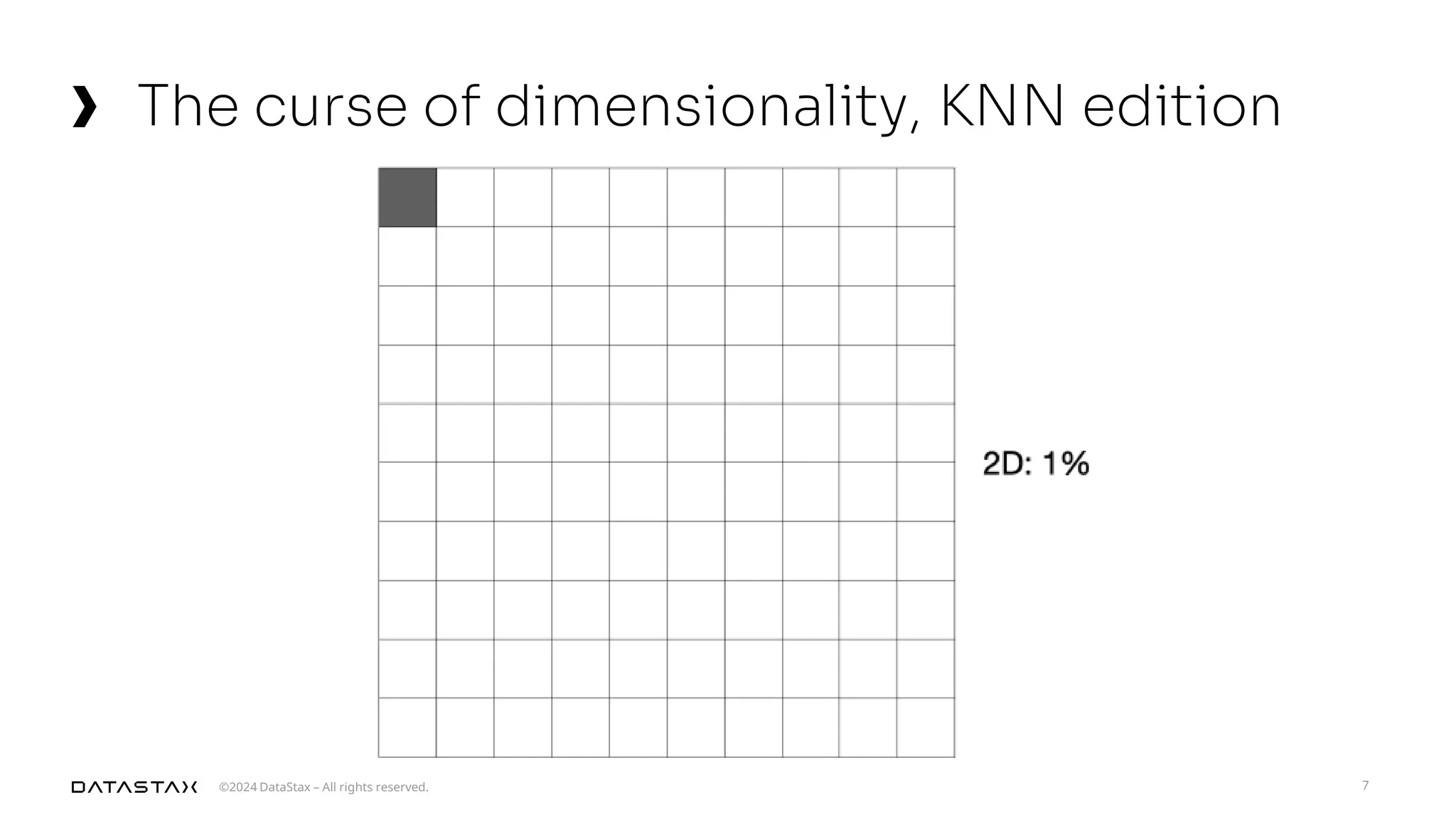

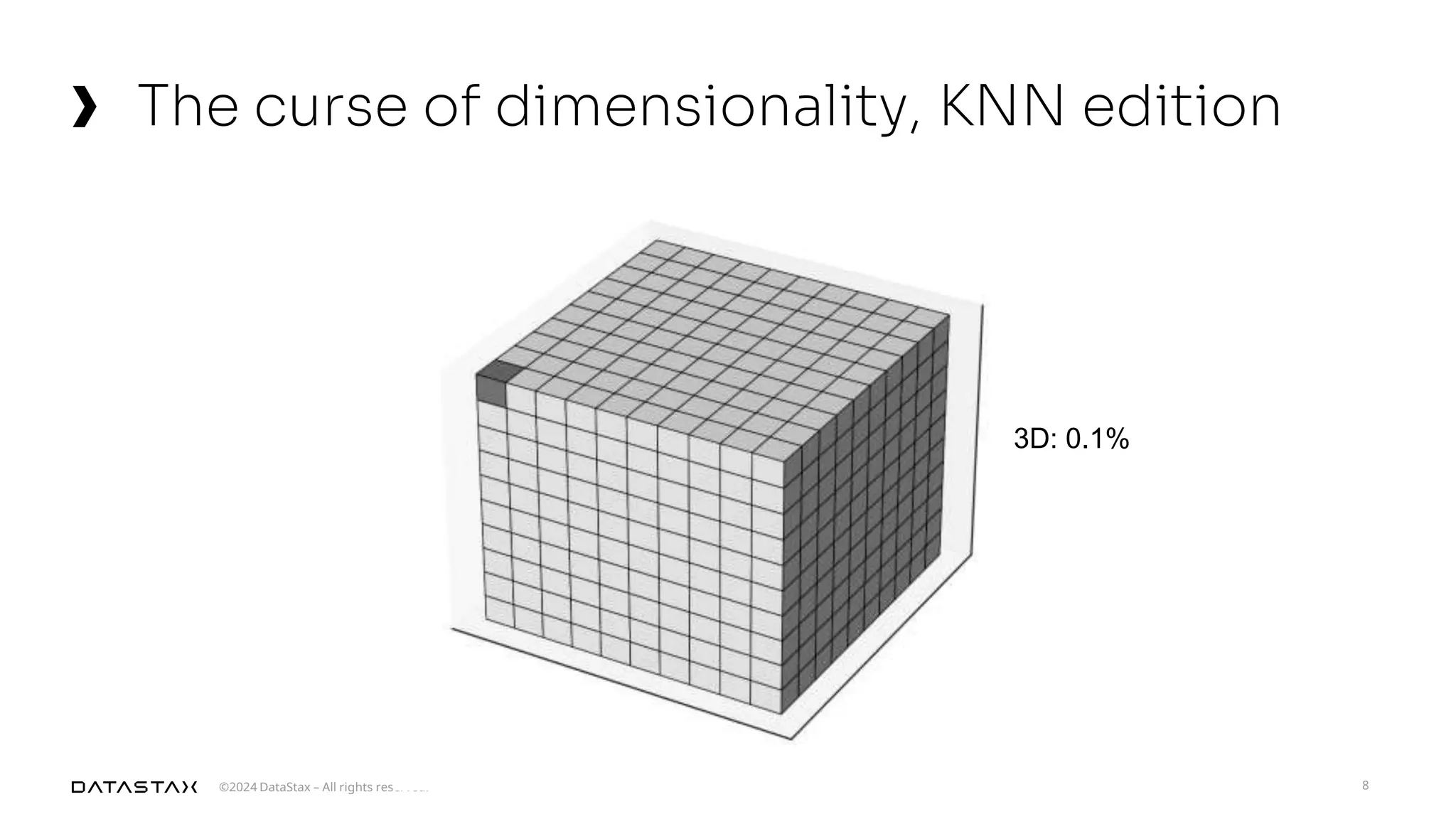

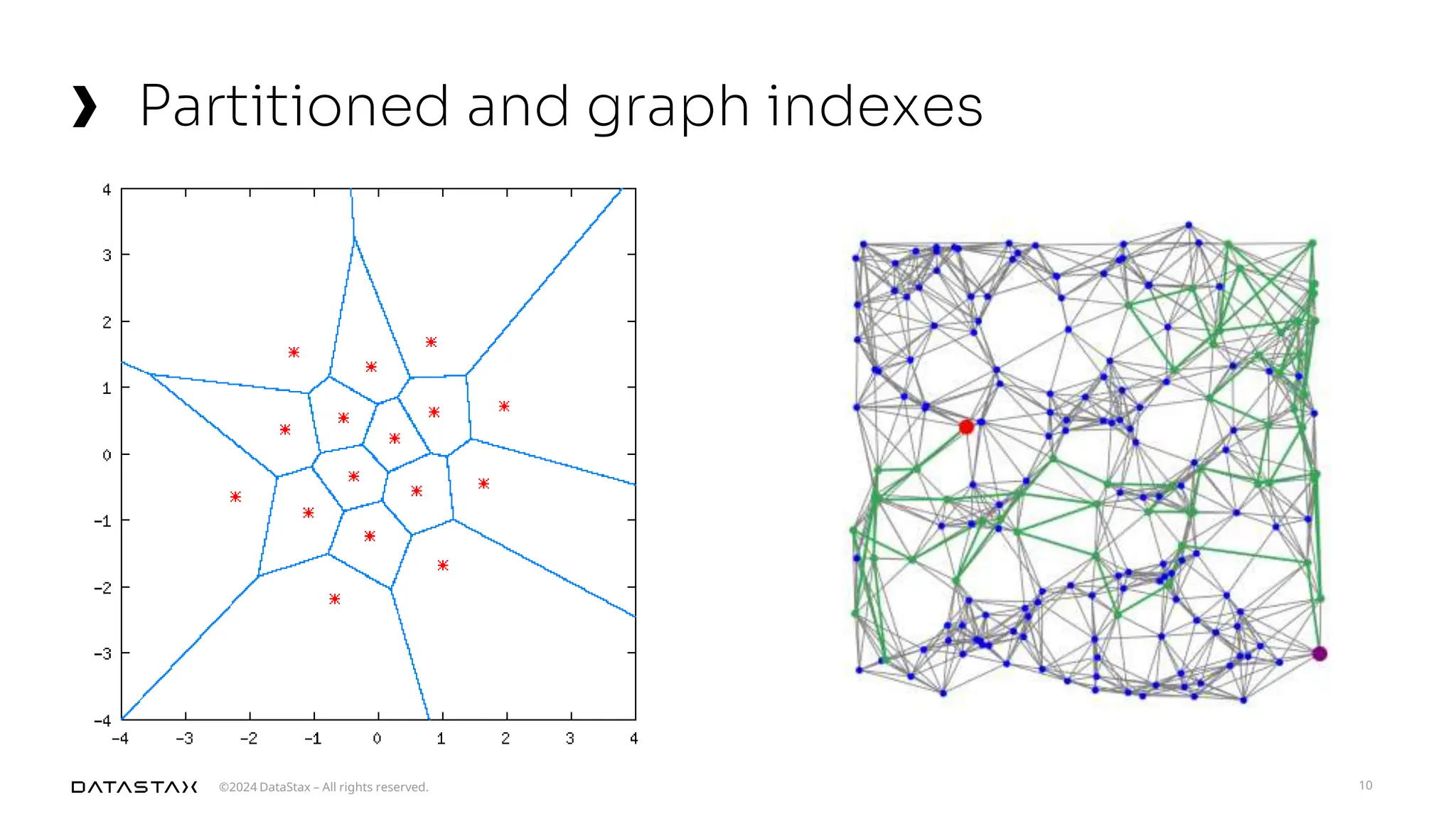

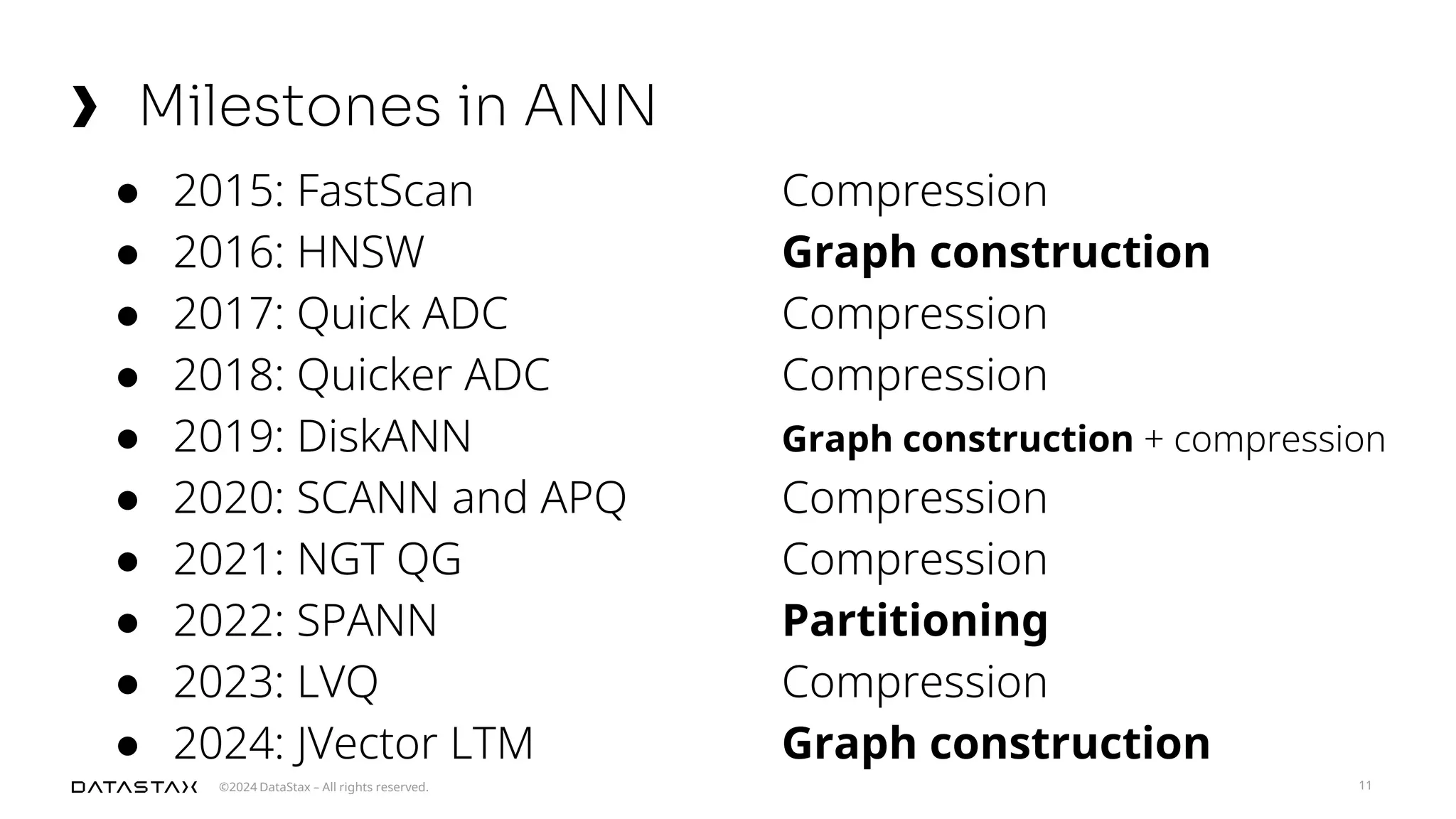

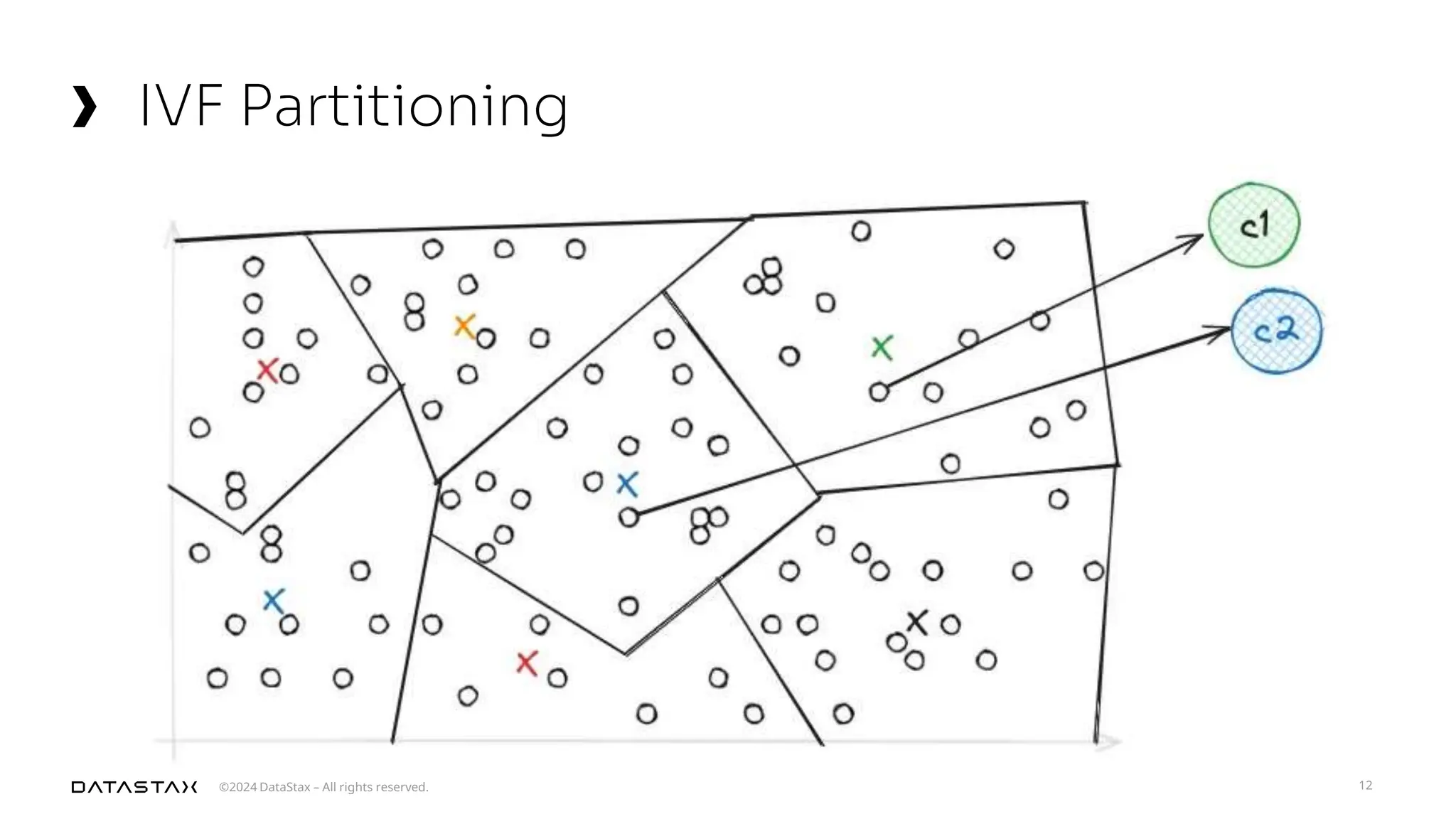

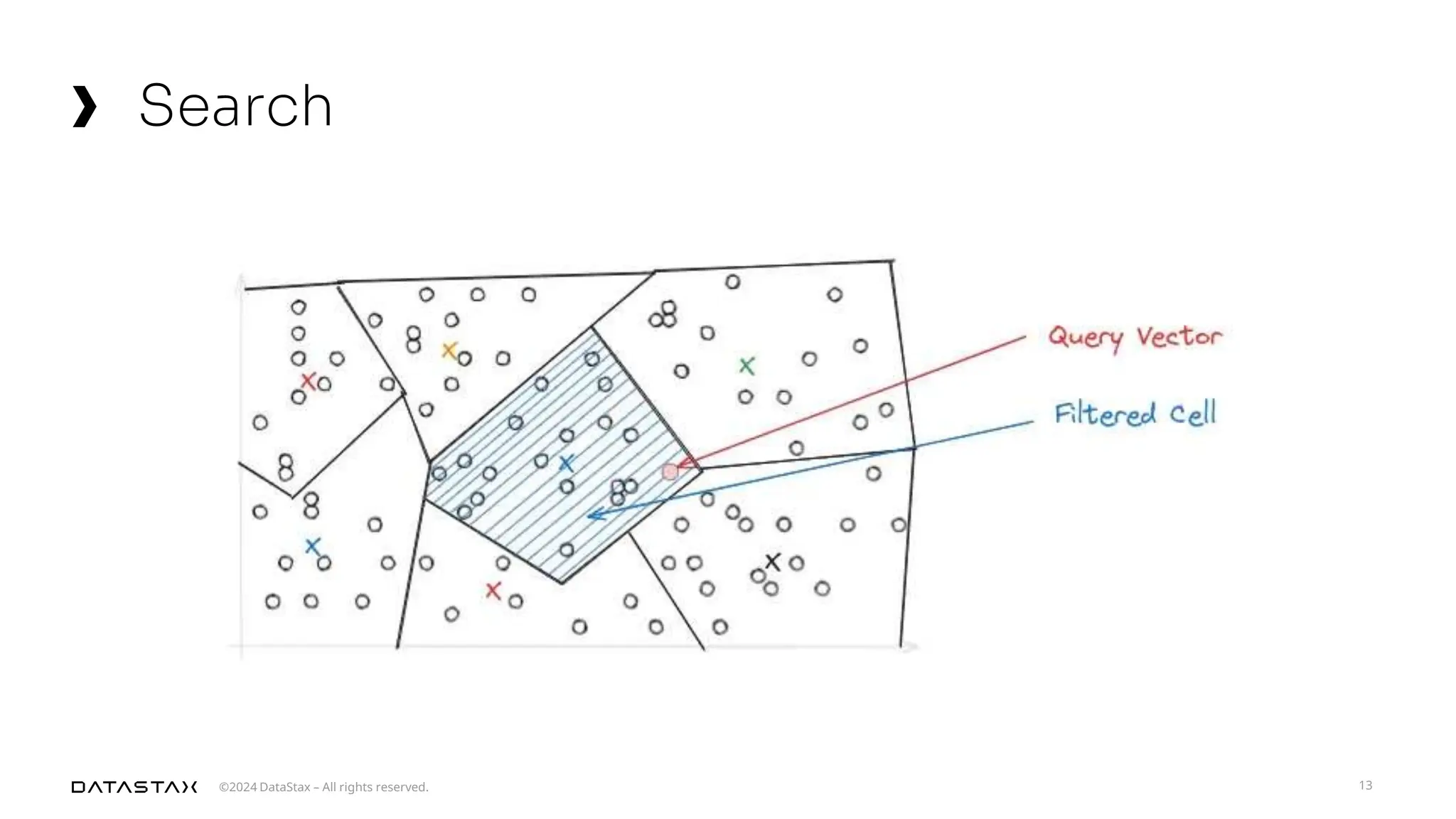

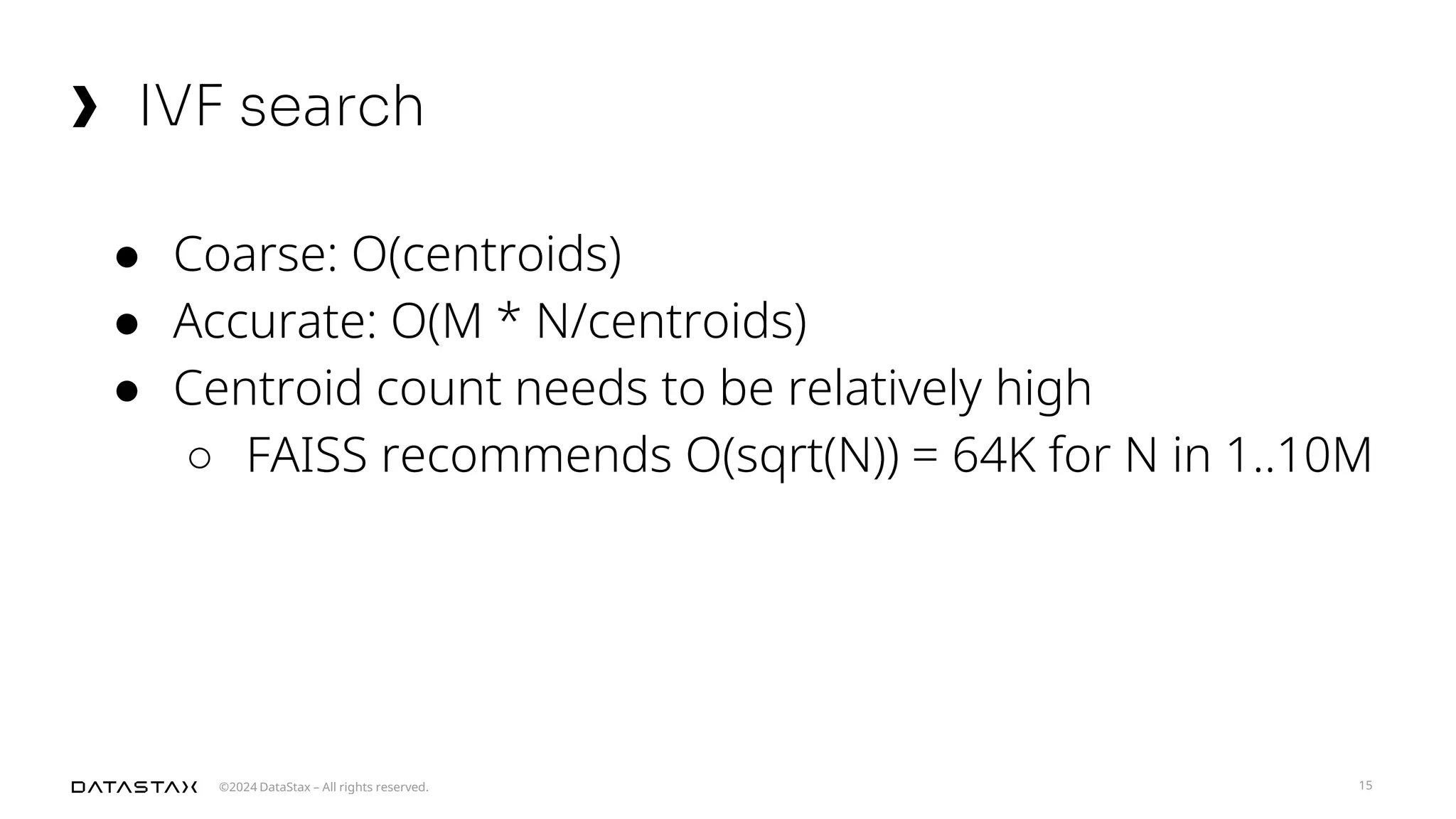

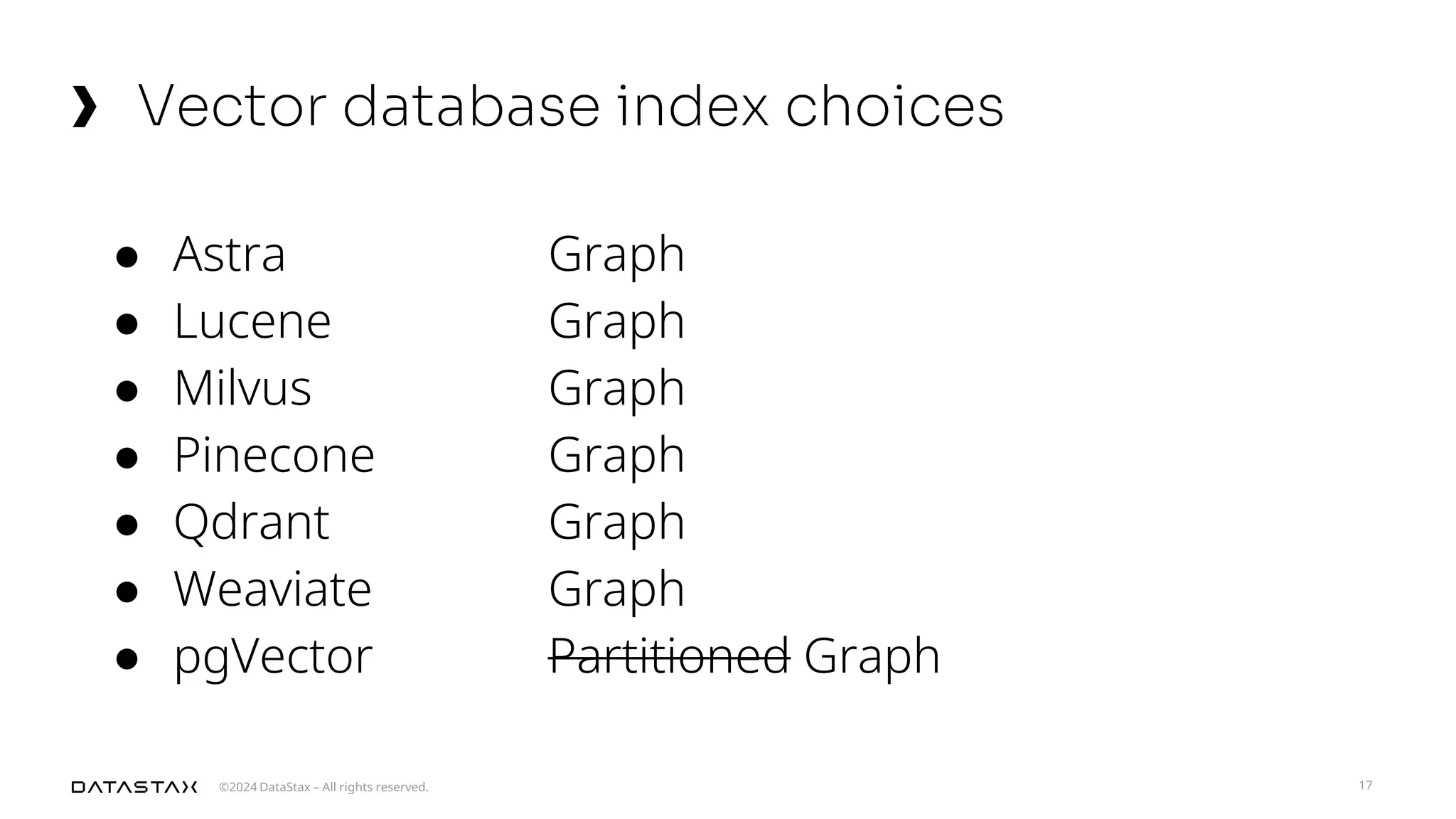

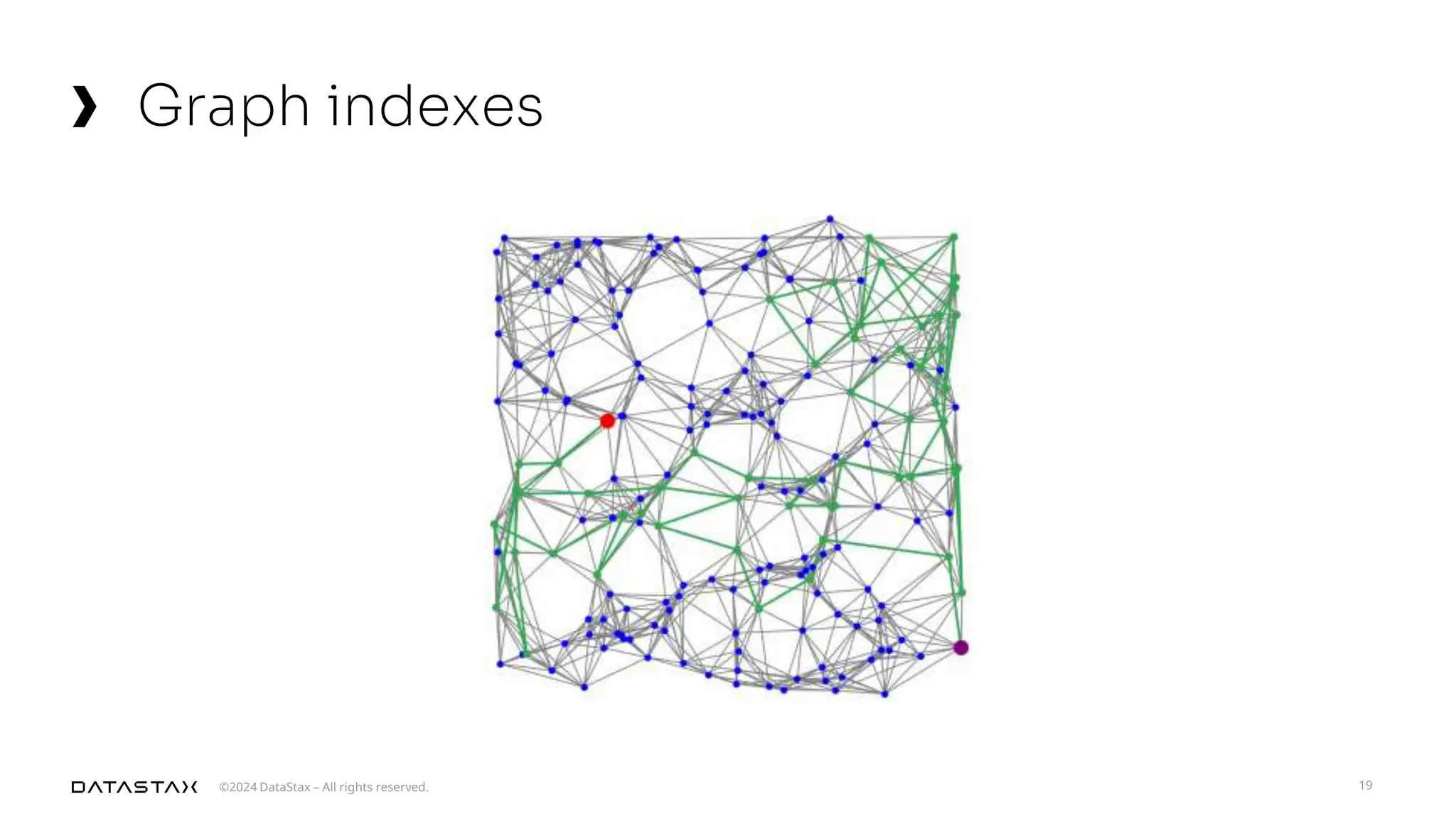

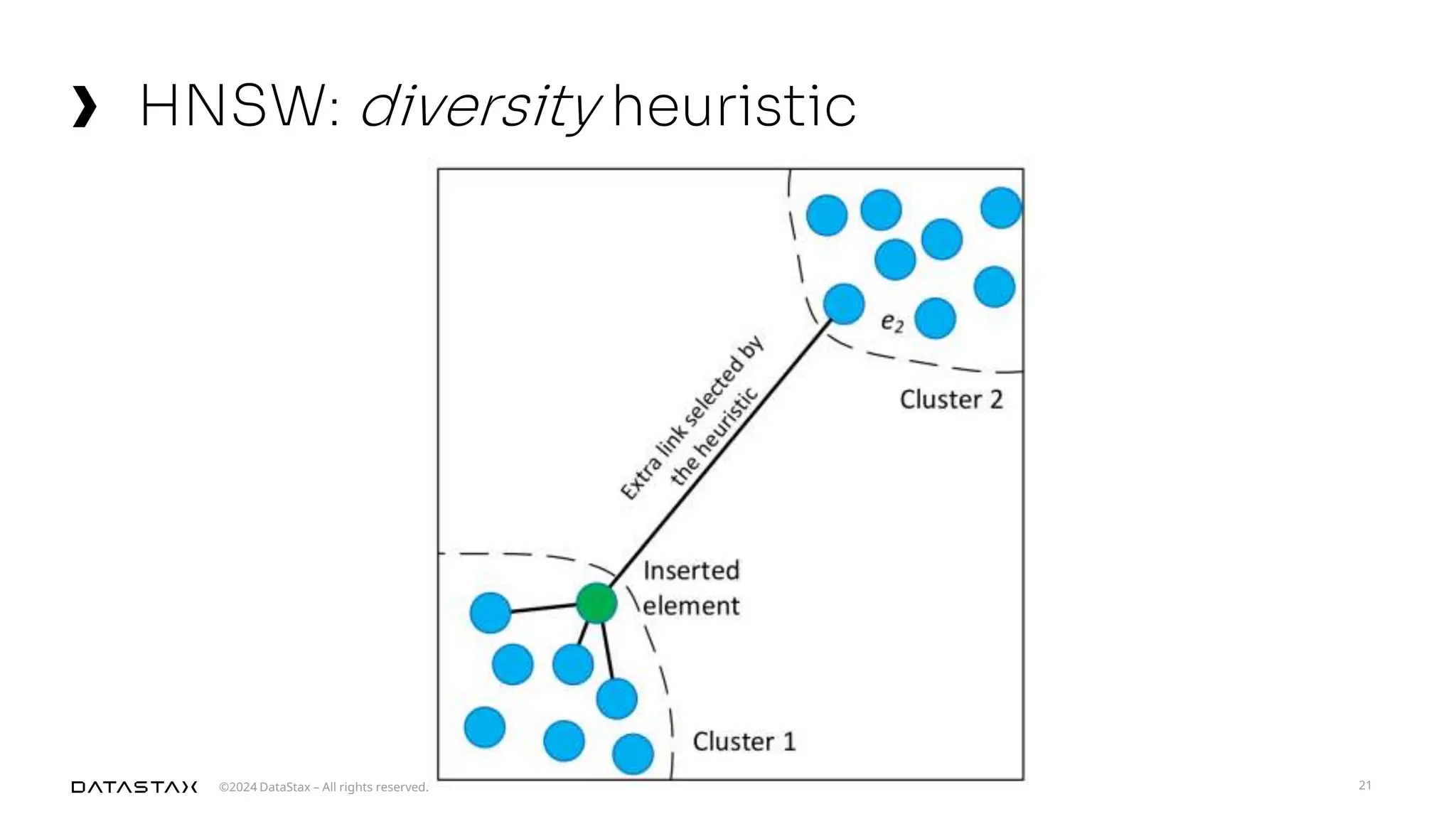

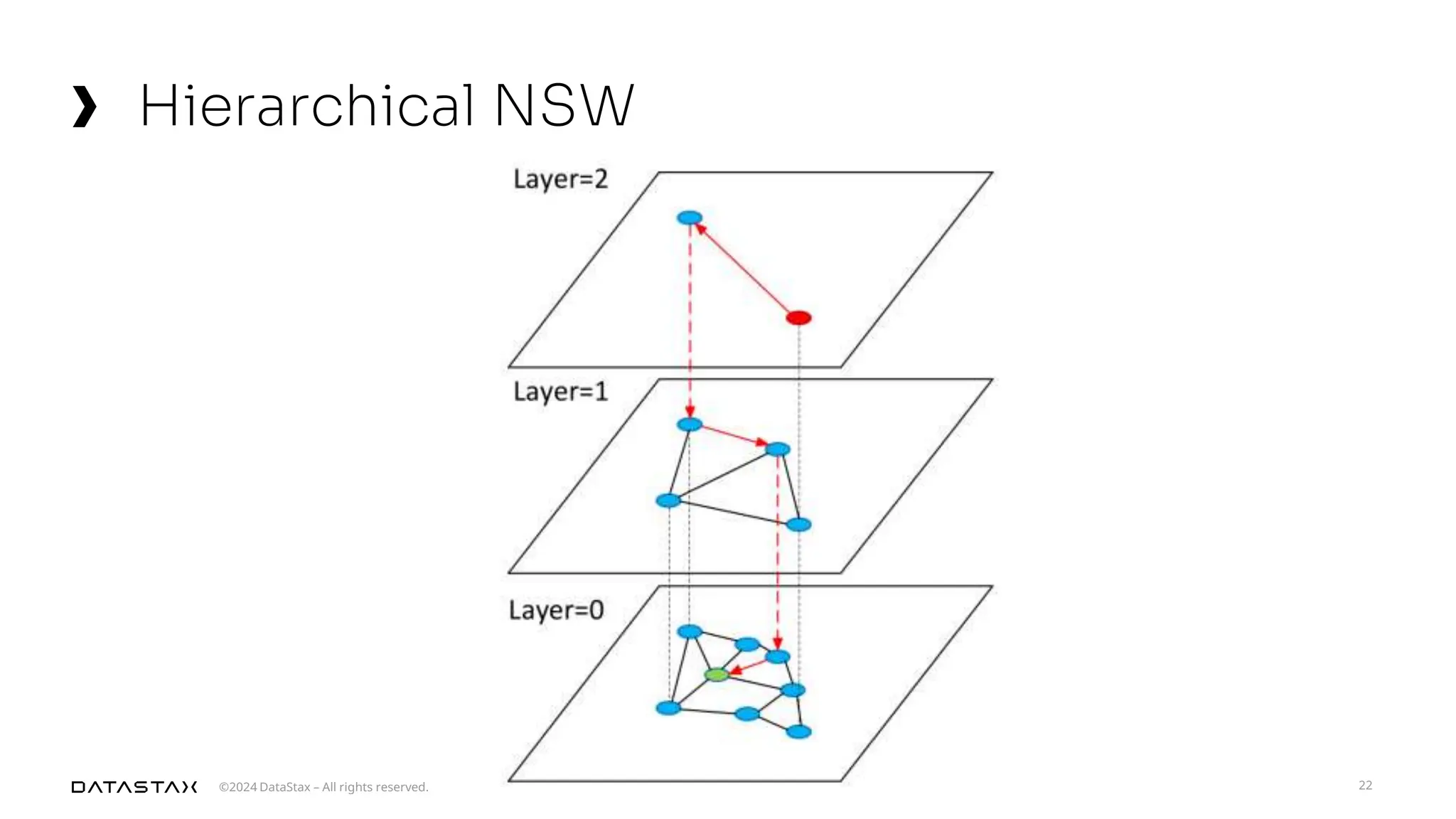

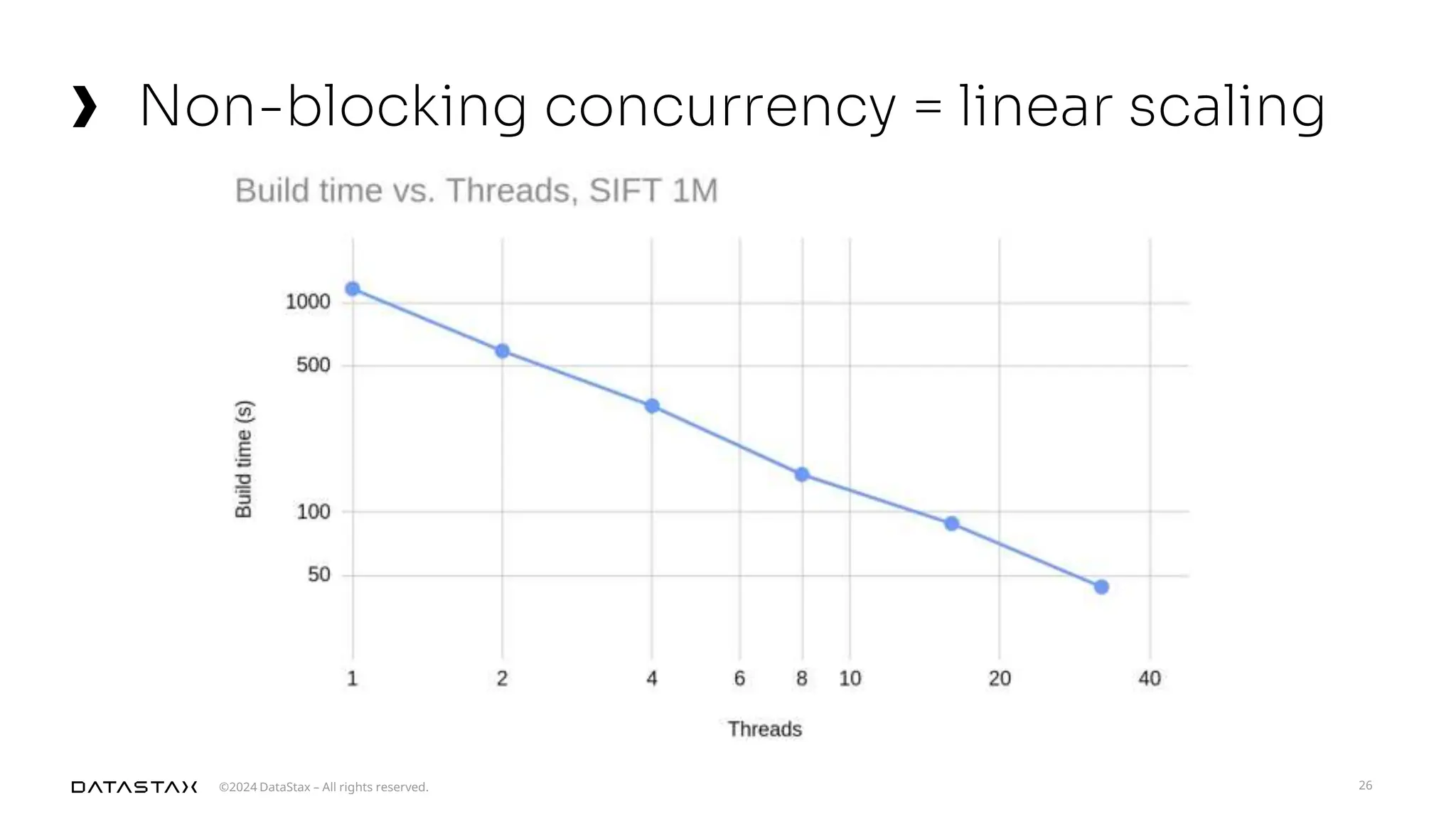

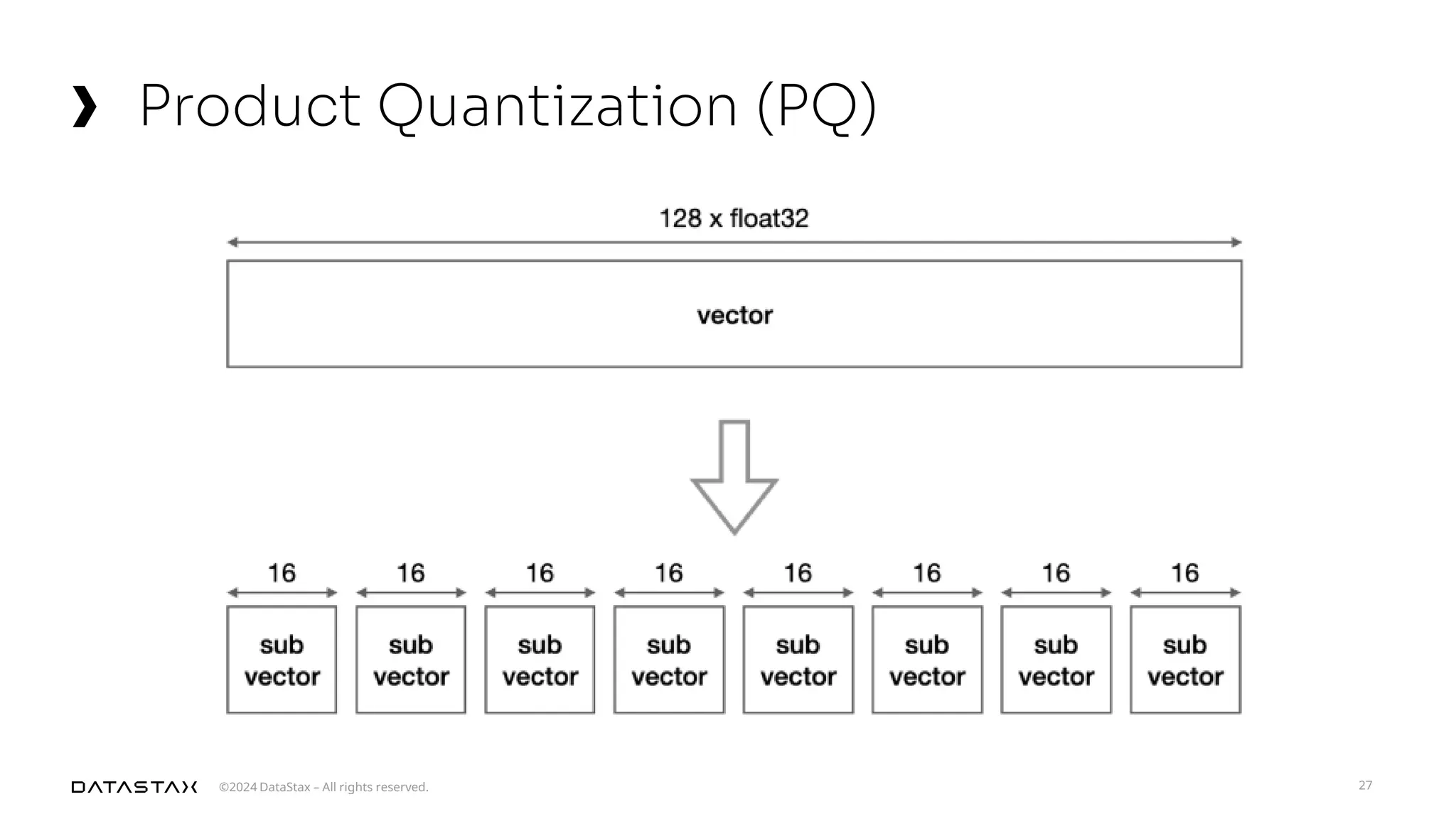

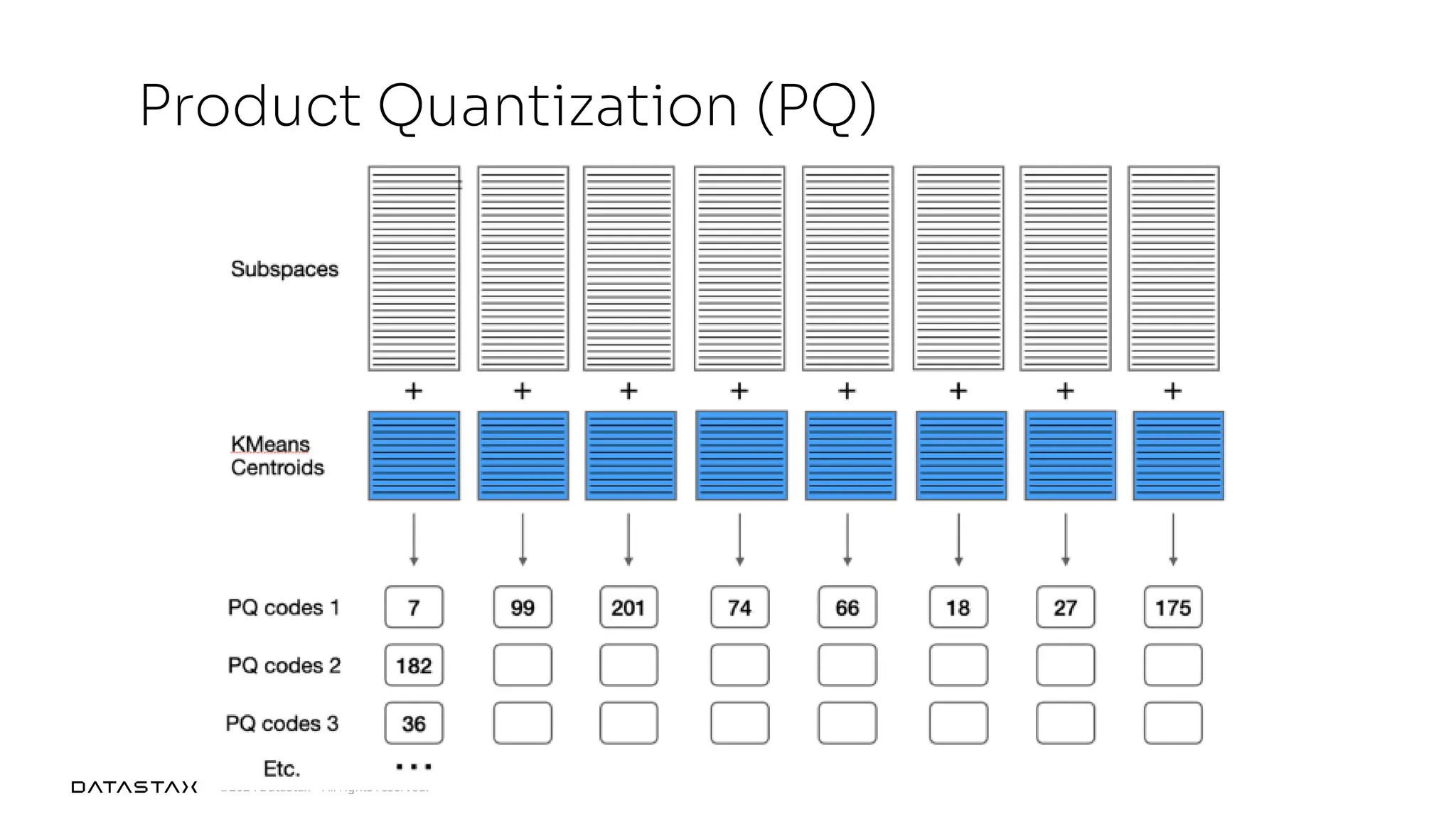

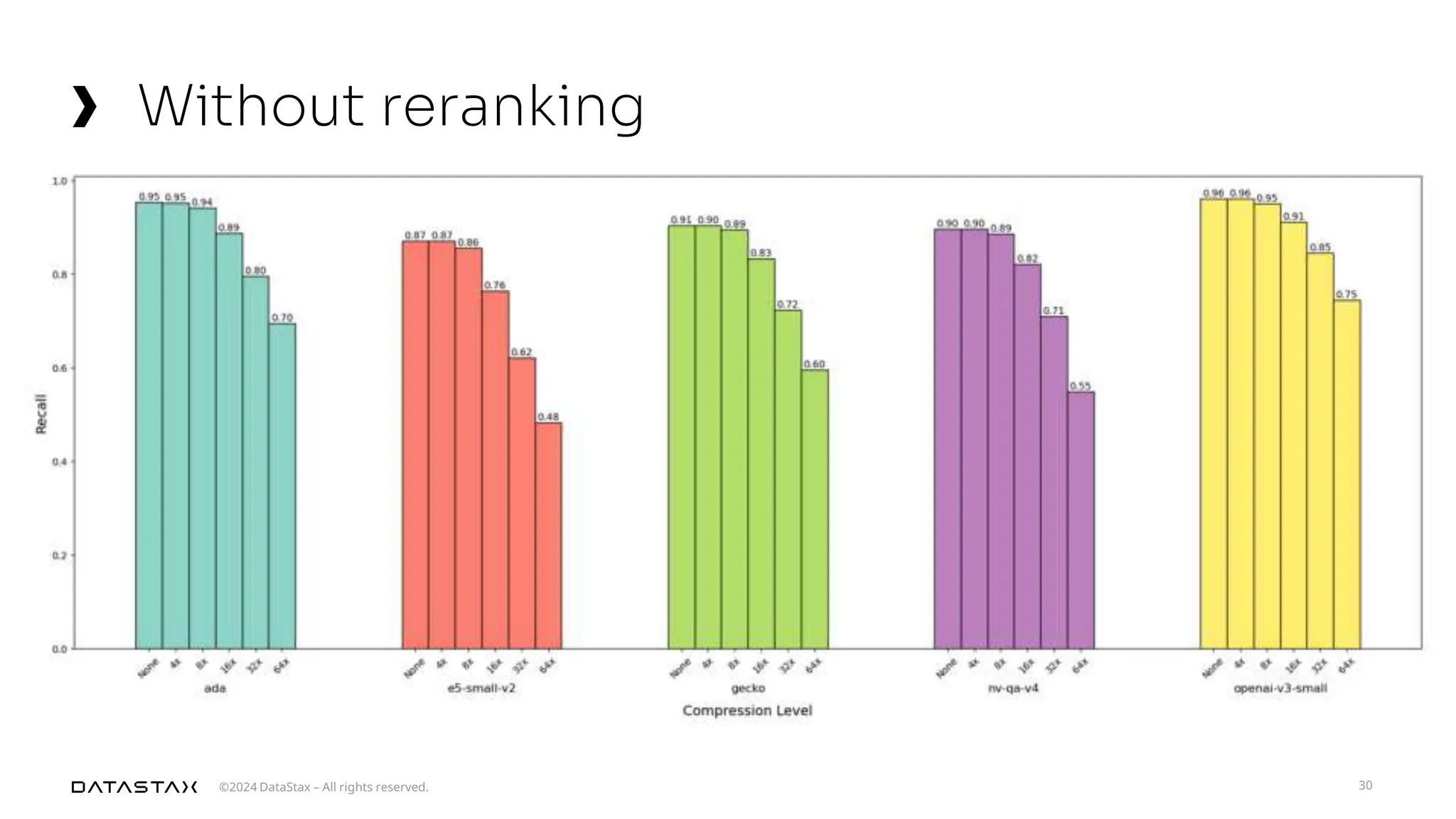

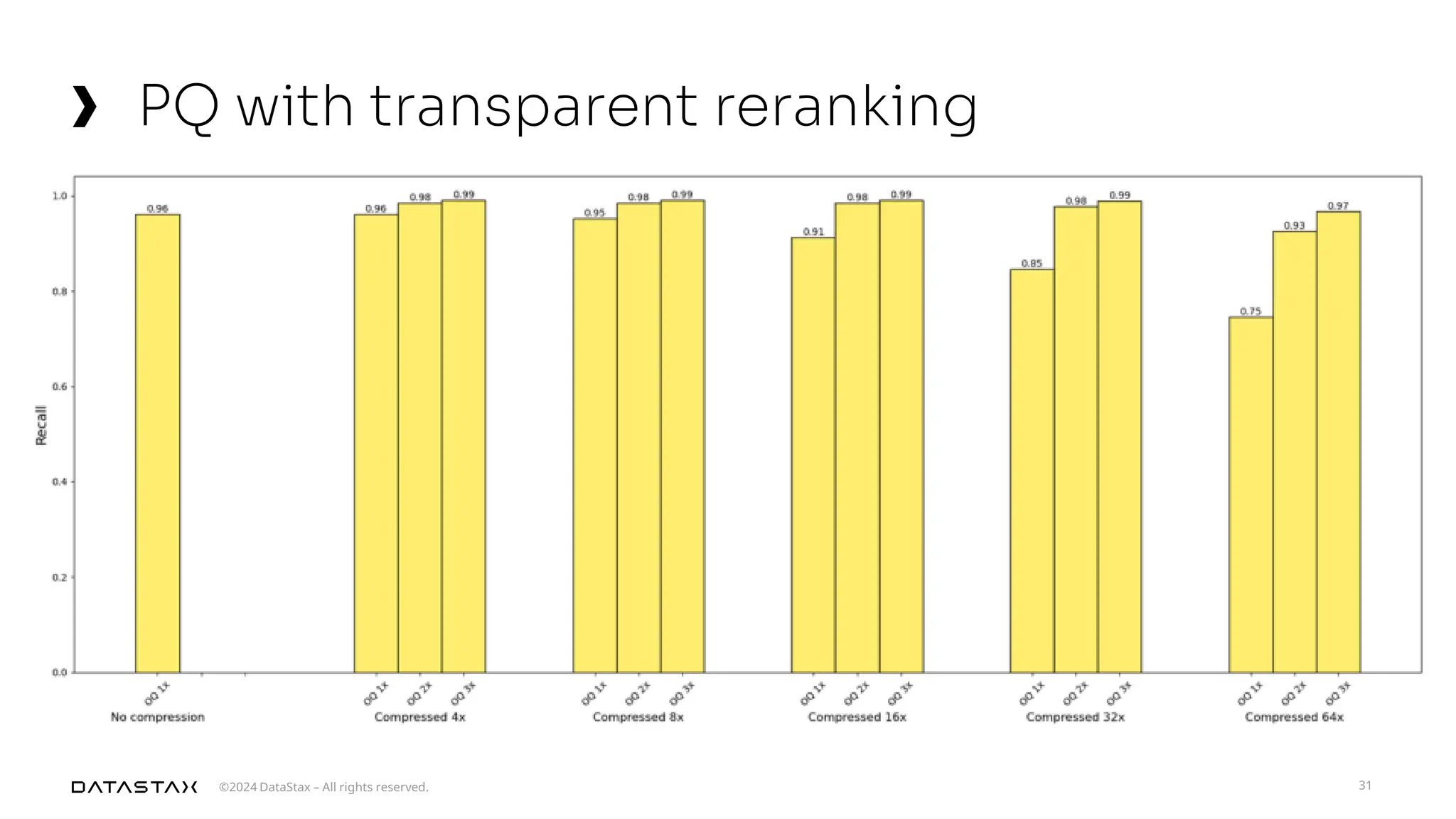

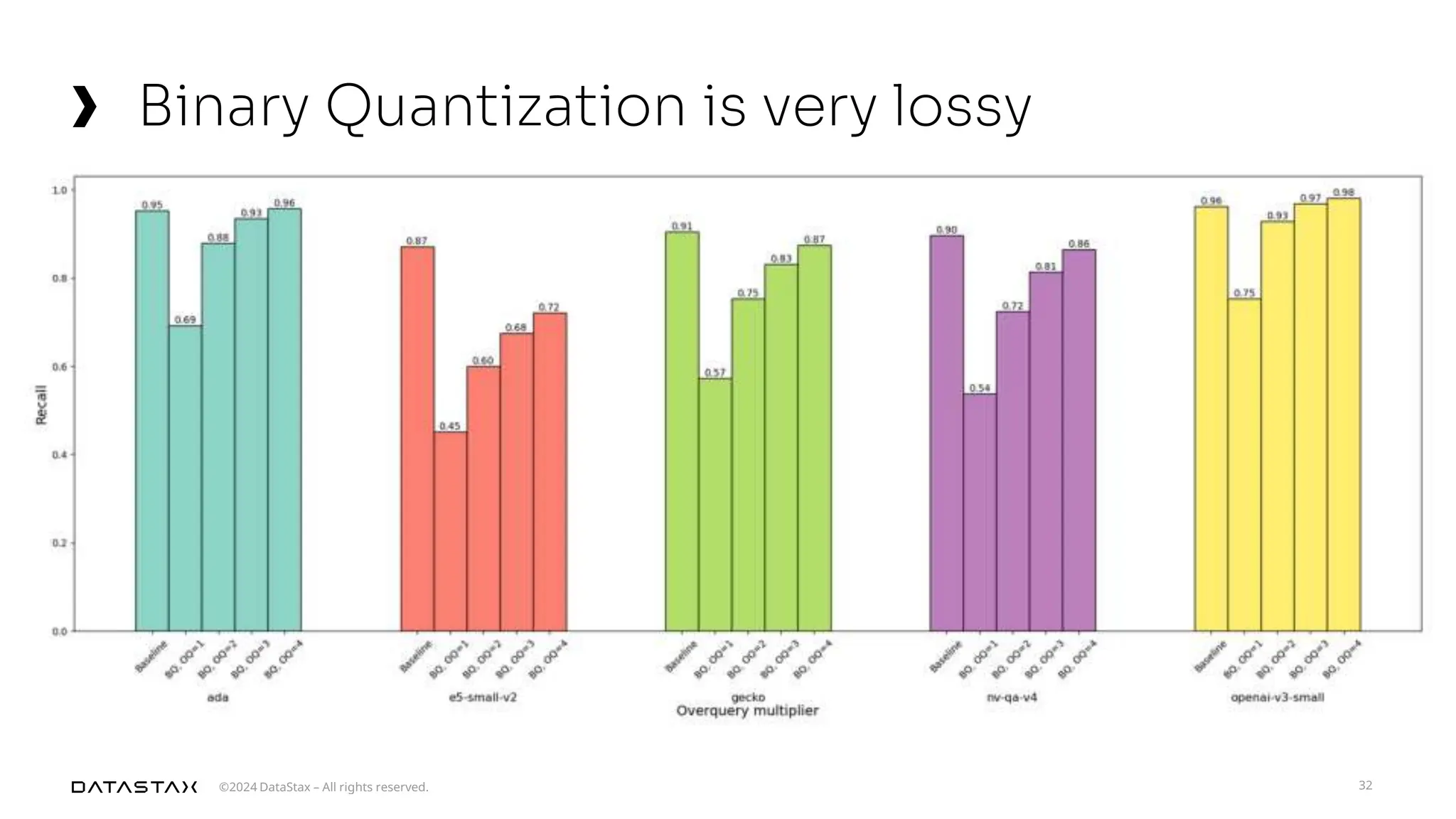

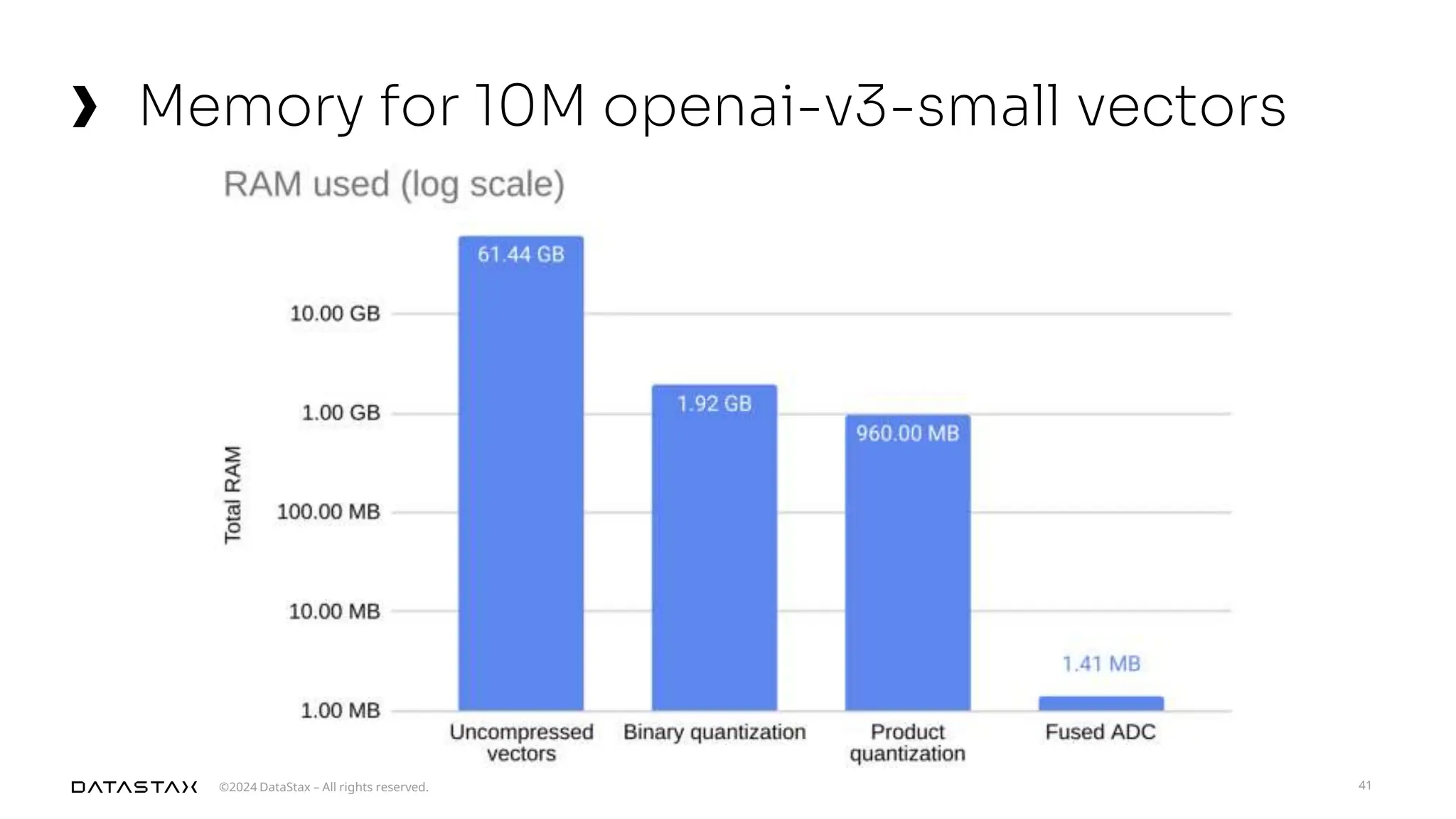

The document outlines advances in vector search and approximate nearest neighbor (ANN) algorithms, tracing milestones from 2015 to 2024. It discusses indexing techniques, partitioning, and the challenges of datasets that are larger than memory. It also covers performance metrics and how vector databases have evolved to manage massive datasets efficiently.