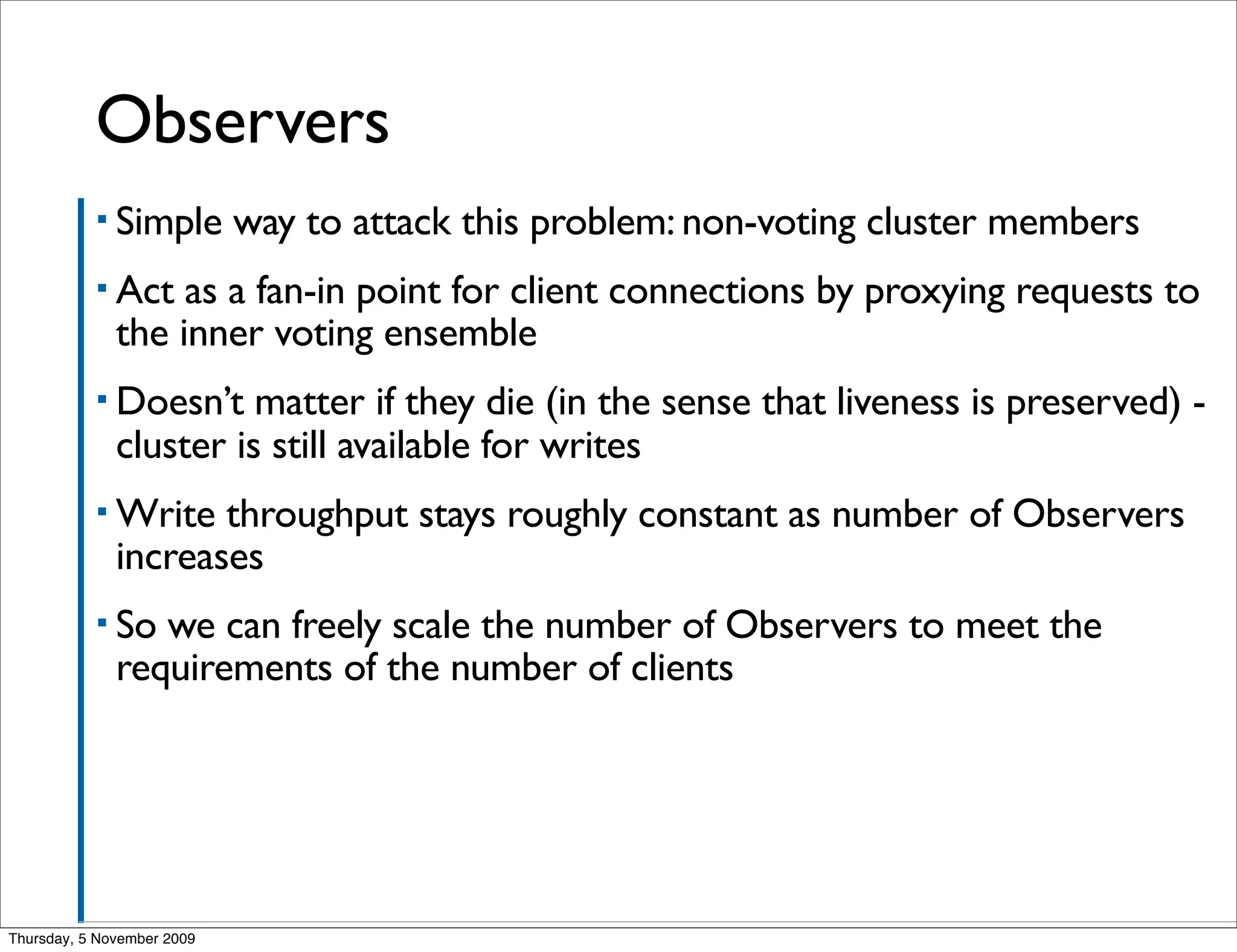

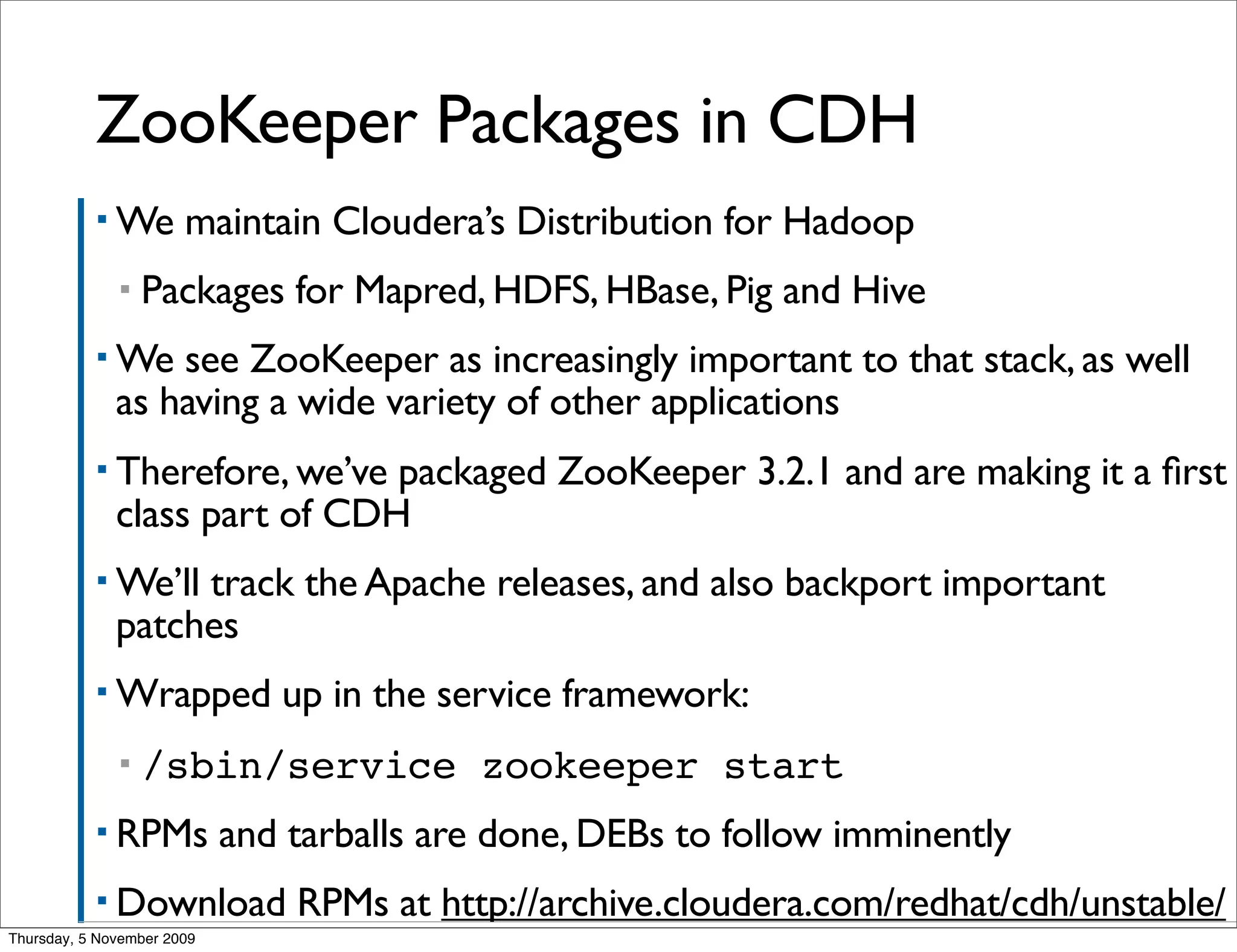

Henry Robinson gave a presentation on upcoming ZooKeeper features. He discussed observers: non-voting servers that let an ensemble handle more client connections without slowing down the voting protocol. He also covered dynamic ensembles, which would allow ZooKeeper cluster membership to be changed without downtime. Finally, he announced that Cloudera's Distribution for Hadoop will include ZooKeeper packages, so that ZooKeeper integrates more fully with the rest of the distribution.
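
To illustrate why observers help with scaling, here is a minimal sketch of a simplified majority-quorum model. It is not ZooKeeper code and not taken from the talk: the function names `quorum_size` and `describe` are hypothetical, and the model assumes a write needs acknowledgement from a simple majority of voting servers only.

```python
def quorum_size(voters: int) -> int:
    """Smallest majority of the voting servers (simplified model)."""
    return voters // 2 + 1


def describe(voters: int, observers: int) -> dict:
    """Summarise an ensemble; observers serve clients but never vote."""
    return {
        # Every server, voting or not, can accept client connections.
        "servers_serving_clients": voters + observers,
        # Assumption: only voters count toward the write quorum.
        "acks_needed_per_write": quorum_size(voters),
    }


plain = describe(voters=5, observers=0)
scaled = describe(voters=5, observers=10)
all_voting = describe(voters=15, observers=0)

print(plain)
print(scaled)
print(all_voting)
```

Under this model, adding ten observers triples the number of servers that can accept clients while the number of acknowledgements per write stays the same. Making all fifteen servers voters would instead raise the quorum each write has to reach.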