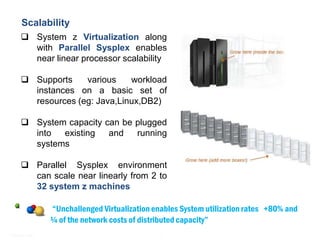

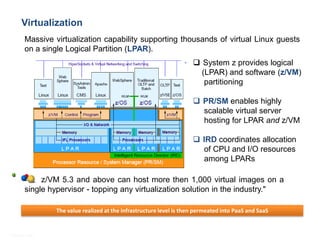

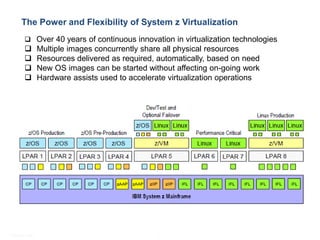

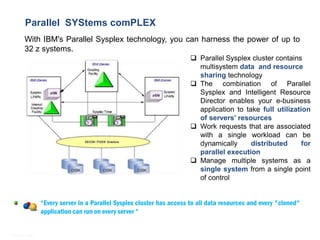

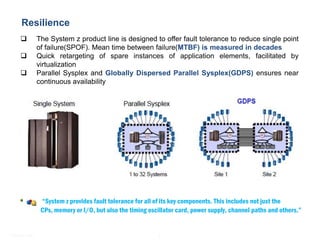

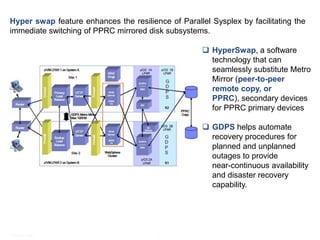

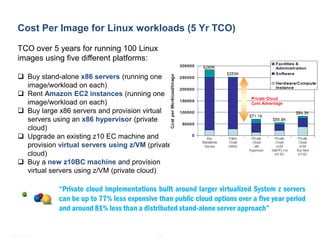

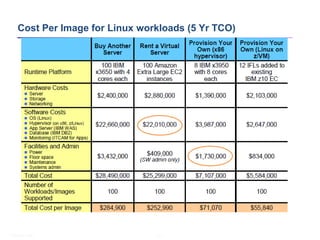

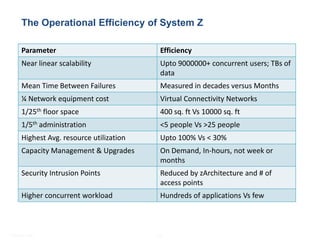

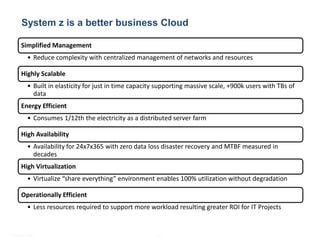

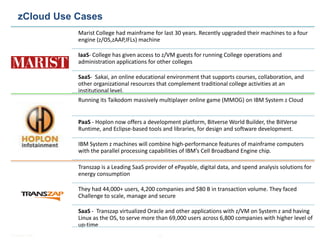

This document argues that System z mainframes provide a better platform for business cloud computing than the alternatives. It highlights key cloud requirements, including scalability, resilience, elasticity, and security, and explains how System z addresses them through virtualization, Parallel Sysplex clustering, and other features. It also gives examples of organizations that have used System z in infrastructure (IaaS), platform (PaaS), and software (SaaS) cloud models to gain benefits such as simplified management, high availability, energy efficiency, and operational efficiency.