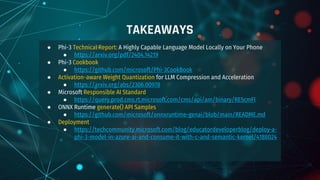

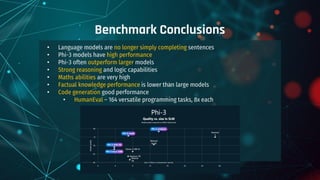

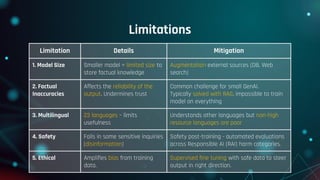

The document discusses the Phi-3 family of small language models (SLMs) developed by Microsoft, which are designed for deployment on local devices, ensuring cost-effectiveness, security, and performance. Key highlights include their competitive performance against larger models, multi-modal capabilities, and advanced training techniques such as activation-aware weight quantization. It also outlines deployment options, pricing, and future enhancements planned for 2025.

![● Models run in the cloud and on the edge

● Runs locally on mobile devices (1.8GB RAM, 12 tkns/sec on iPhone 14)

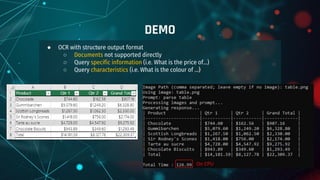

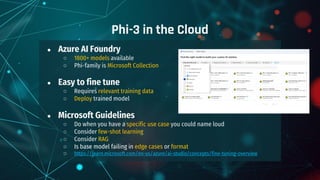

AZ Serverless Deployment Pricing

Phi-3 Deployment Options

Model Context Input (1M Tokens) Output (1M tokens)

Phi-3-mini -4k-instruct / -128k-instruct 4K / 128K €0.13 €0.50

Phi-3.5-mini-instruct, Phi-3.5-vision-instruct 128K €0.13 €0.50

Phi-3-small -8k-instruct / -128k-instruct 8K / 128K €0.15 €0.58

Phi-3-medium -4k-instruct / -128k-instruct 4K / 128K €0.17 €0.65

Phi-3.5-MoE-instruct 128K €0.16 €0.62

GPT-4o mini 128K €0.16 €0.62

GPT-4o-0513 128K €4.63 €13.89

GPT-4o-2024-08-06 [Newer, More Censored] 128K €2.32 €9.26](https://image.slidesharecdn.com/whatarephismalllanguagemodelscapableof-241210165302-a453952e/85/What-are-Phi-Small-Language-Models-Capable-of-14-320.jpg)

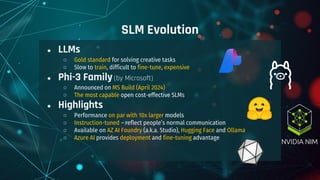

![Ollama

● Download and Install Ollama

https://ollama.com/download

● Install Phi-3

Hugging Face

● Install Hugging Face CLI

● Install the generate() API for CPU

● Download Phi3-Vision files

● Download phi3V example by MSFT

https://github.com/microsoft/onnxruntime-genai/blob/main/examples/python/phi3v.py

Phi-3 on the Edge

PS > ollama run phi3:mini [2.2GB]

PS > ollama run phi3:medium

PS > ollama run phi3.5 [2.2GB]

> pip install -U "huggingface_hub[cli]“

> pip install onnxruntime-genai

> huggingface-cli download microsoft/Phi-3.5-vision-instruct-onnx --

include cpu_and_mobile/cpu-int4-rtn-block-32-acc-level-4/* --local-

dir .](https://image.slidesharecdn.com/whatarephismalllanguagemodelscapableof-241210165302-a453952e/85/What-are-Phi-Small-Language-Models-Capable-of-26-320.jpg)