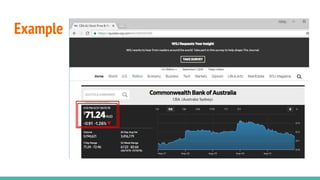

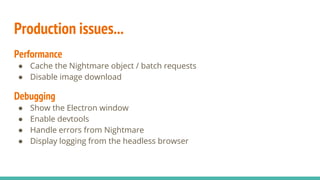

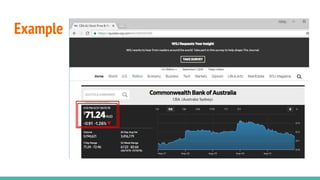

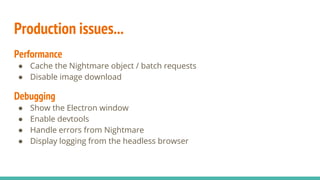

The presentation discusses the challenges and pitfalls of web scraping. It presents scraping as generally poor practice: scrapers are fragile, they can raise legal issues, and they risk getting the scraper blocked by the target website. It acknowledges, however, that scraping may be necessary when the data is not accessible any other way, and it outlines both simple and advanced scraping techniques. It also provides resources and contact information for further reference.