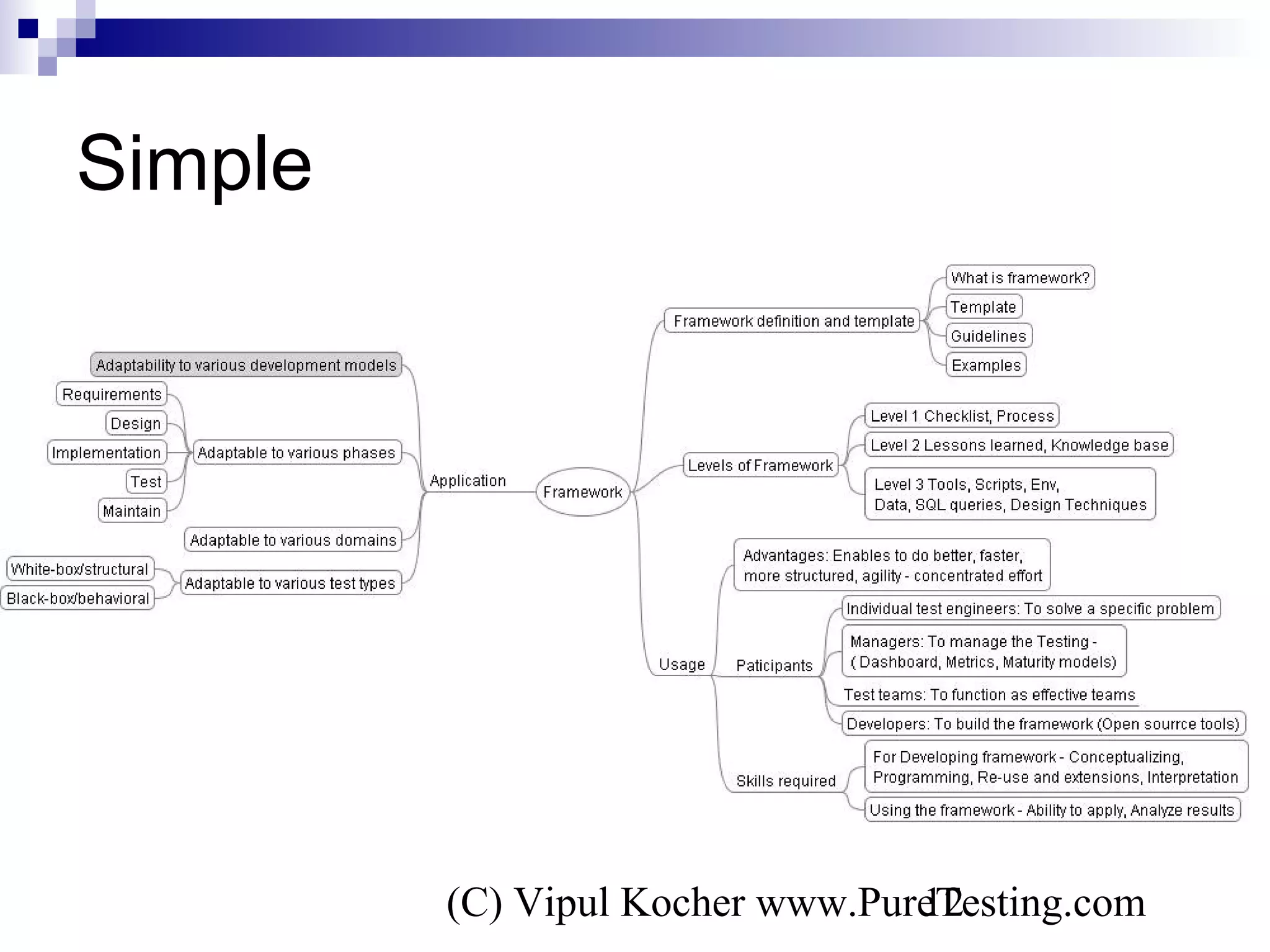

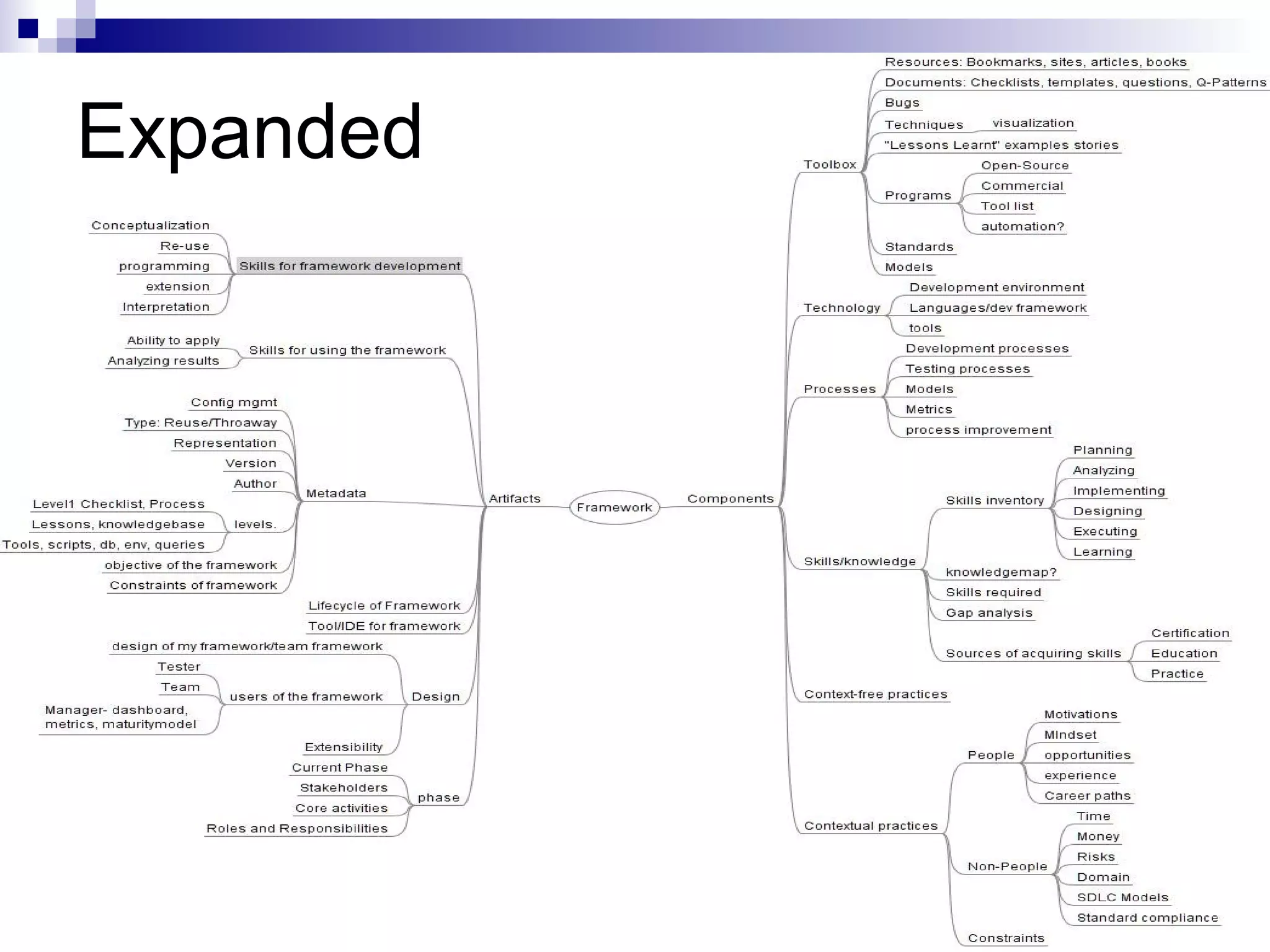

The document discusses a framework-based approach to software testing, emphasizing the importance of leveraging past experiences for improved effectiveness in testing processes. It highlights the role of frameworks in guiding testing activities, managing knowledge, and enhancing collaboration among team members. Importantly, it also addresses potential challenges and caveats associated with implementing such frameworks in practice.

![(C) Vipul Kocher www.PureTesting.com

Introduction to Framework

The free dictionary[1] defines a framework as:

1. A structure for supporting or enclosing something else, especially a skeletal support used as the basis for something being constructed.

2. An external work platform; a scaffold.

3. A fundamental structure, as for a written work.

4. A set of assumptions, concepts, values, and practices that constitutes a way of viewing reality.

Wikipedia[2] defines a framework as:

“a real or conceptual structure intended to serve as a support or guide for the building of something that expands the structure into something useful.”

Thus a framework is

an “external” structure that supports some activity. It consists of assumptions, practices, concepts, tools, and other elements that can be used to create models and are thus useful for the activity to which they are applied.

[1] http://www.thefreedictionary.com/framework [2] http://en.wikipedia.org/wiki/Framework](https://image.slidesharecdn.com/vipulkocher-softwaretestingaframeworkbasedapproach-150325111647-conversion-gate01/75/Vipul-Kocher-Software-Testing-A-Framework-Based-Approach-6-2048.jpg)