VA3DR Poster

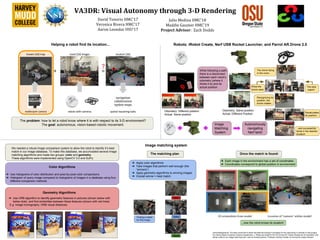

This document describes the VA3DR project, which aims to give robots visual autonomy through 3-D rendering. The team developed an image matching system that lets a robot identify its location by comparing its camera images against a database of images tagged with coordinates. The system applies both color and geometry algorithms to find the best-matching database image, then takes the robot's position from the coordinates of that match. This allows robots such as a drone and a Nerf tank to locate themselves and navigate autonomously within their environment.
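The two-stage lookup described above can be sketched roughly as follows. This is a minimal illustration, not the team's code: the `TaggedImage` record, the `localize` function, the `keep` cutoff, and the toy scoring functions are all hypothetical names introduced here, and keeping a fixed number of color "winners" is an assumption (the poster only says images must perform "well enough").

```python
from typing import Any, Callable, NamedTuple, Sequence, Tuple


class TaggedImage(NamedTuple):
    """A database image plus the position it was captured from (hypothetical record)."""
    name: str
    image: Any                          # whatever the scoring functions accept
    coords: Tuple[float, float, float]  # global position in the environment


def localize(
    query: Any,
    database: Sequence[TaggedImage],
    color_score: Callable[[Any, Any], float],
    geometry_score: Callable[[Any, Any], float],
    keep: int = 3,
) -> TaggedImage:
    """Return the best-matching database entry; higher scores mean more similar."""
    if not database:
        raise ValueError("image database is empty")
    # Stage 1: rank every image by color similarity and keep the top `keep` winners.
    winners = sorted(
        database, key=lambda e: color_score(query, e.image), reverse=True
    )[:keep]
    # Stage 2: run the geometry comparison only on the color winners.
    return max(winners, key=lambda e: geometry_score(query, e.image))


# Toy stand-ins: an "image" is (mean_brightness, shape_id). Assumed for illustration only.
def toy_color_score(q, img):
    return -abs(q[0] - img[0])


def toy_geometry_score(q, img):
    return 1.0 if q[1] == img[1] else 0.0


database = [
    TaggedImage("hallway_a", (10, 1), (0.0, 0.0, 0.0)),
    TaggedImage("hallway_b", (12, 2), (1.0, 0.0, 0.0)),
    TaggedImage("lab_c", (200, 2), (5.0, 5.0, 0.0)),
]
match = localize((11, 2), database, toy_color_score, toy_geometry_score, keep=2)
print(match.name, match.coords)
```

In the toy data, `lab_c` has the right shape but is rejected by the color stage, so the geometry stage only has to choose between the two hallway images. The matched entry's `coords` then serve as the robot's position estimate.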

VA3DR: Visual Autonomy through 3-D Rendering

David Tenorio HMC’17, Veronica Rivera HMC’17, Aaron Leondar OSU’17, Julio Medina HMC’18, Maddie Gaumer HMC’19

Project Advisor: Zach Dodds

Robots: iRobot Create, Nerf USB Rocket Launcher, and Parrot AR.Drone 2.0

Helping a robot find its location...

The problem: how to let a robot know where it is with respect to its 3-D environment?

The goal: autonomous, vision-based robotic movement.

While following a path, there is a disconnect between each robot’s odometry (where it thinks it is) and its actual position.

Odometry: Same position. Actual: Different position.

Odometry: Different position. Actual: Same position.

Image Matching System

We needed a robust image comparison system to allow the robot to identify its best match in our image database. To build this system, we accumulated several image matching algorithms and made two groups: color and geometry. These algorithms were implemented using OpenCV 3.0 and SciPy.

Color Algorithms

➔ Use histograms of color distribution and pixel-by-pixel color comparisons

➔ Histogram of query image compared to histograms of images in a database using four different comparison methods

Geometry Algorithms

➔ Use ORB algorithm to identify geometric features in pictures (shown with dots) and find similarities between these features (shown with red lines)

E.g. Image homography, ORB visual distances

The matching plan

➔ Apply color algorithms

➔ Take images that perform well enough (the “winners”)

➔ Apply geometry algorithms to winning images

➔ Overall winner = best match

Finding a match for this image... Bad, Better, Good

Once the match is found:

➔ Each image in the environment has a set of coordinates

➔ Coordinates correspond to global position in environment

2D screenshots from model. Location of “camera” within model!

...now the robot knows its location!

The drone flying in the room... The best match! What the drone sees. Recognizing its position, the drone rotates... Recalculates its position... ...and successfully lands in the desired location!

Autonomously navigating Nerf tank!

Acknowledgements: The team would like to thank the National Science Foundation for the opportunity to embark on this project, the Harvey Mudd Computer Science Department, J. Philipp de Graaff for the PS-Drone API, Adrian Rosebrock for inspiration and starter code for our image matching work, and our tireless advisor, Professor Zachary Dodds, for driving the project forward.
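The color stage on the poster compares a query image's histogram against database histograms with four comparison methods, which the poster does not name. The sketch below assumes four widely used ones (correlation, chi-square, intersection, and Bhattacharyya distance) and writes them out in plain Python so the arithmetic is visible. The function names, the 4-bins-per-channel choice, and the pixel-list image format are assumptions for illustration, not the team's implementation.

```python
import math


def color_histogram(pixels, bins=4):
    """Normalized 3-D RGB histogram, flattened to bins**3 entries that sum to 1."""
    hist = [0.0] * (bins ** 3)
    for r, g, b in pixels:  # each channel is an int in 0..255
        idx = ((r * bins // 256) * bins + (g * bins // 256)) * bins + (b * bins // 256)
        hist[idx] += 1.0
    total = sum(hist)
    return [h / total for h in hist] if total else hist


def correlation(a, b):
    """Pearson correlation of bin values: 1.0 for identical histograms."""
    ma, mb = sum(a) / len(a), sum(b) / len(b)
    num = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    den = math.sqrt(sum((x - ma) ** 2 for x in a) * sum((y - mb) ** 2 for y in b))
    return num / den if den else 0.0


def chi_square(a, b):
    """Sum of (a - b)^2 / a over non-empty bins of a: 0.0 for identical histograms."""
    return sum((x - y) ** 2 / x for x, y in zip(a, b) if x > 0)


def intersection(a, b):
    """Overlap between histograms: 1.0 for identical normalized histograms."""
    return sum(min(x, y) for x, y in zip(a, b))


def bhattacharyya(a, b):
    """Distance form for normalized histograms: 0.0 identical, 1.0 disjoint."""
    coefficient = sum(math.sqrt(x * y) for x, y in zip(a, b))
    return math.sqrt(max(0.0, 1.0 - coefficient))


# Toy images as pixel lists: a red query, a mostly red candidate, a blue candidate.
query = color_histogram([(250, 10, 10)] * 100)
candidates = {
    "mostly_red": color_histogram([(240, 20, 5)] * 90 + [(5, 5, 240)] * 10),
    "blue": color_histogram([(5, 5, 240)] * 100),
}
# Higher intersection means more similar, so the best candidate has the largest score.
best = max(candidates, key=lambda name: intersection(query, candidates[name]))
print(best)
```

Correlation and intersection grow with similarity, while chi-square and Bhattacharyya are distances that shrink, so the scores need a consistent sign before they are combined into a single ranking.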