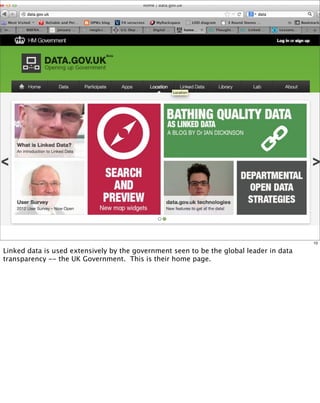

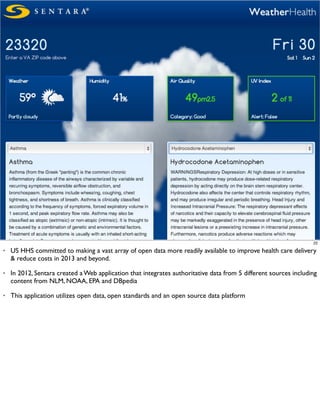

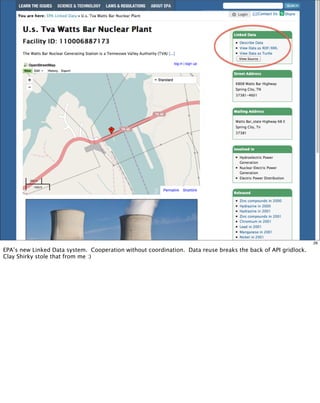

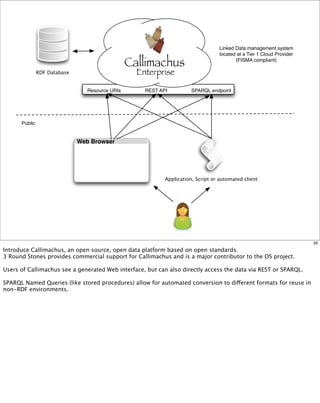

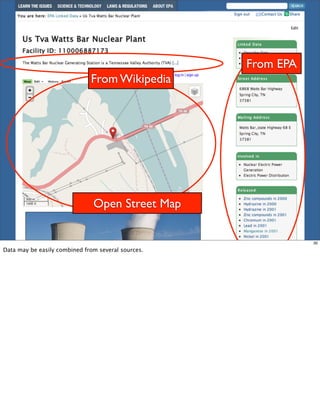

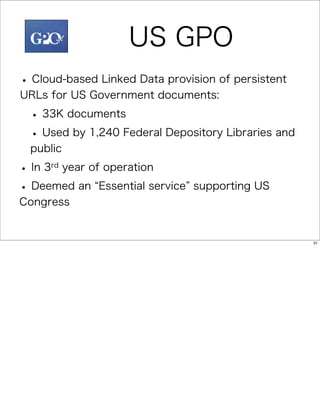

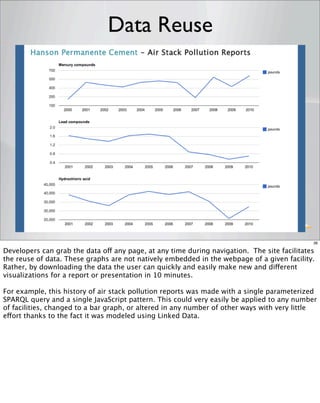

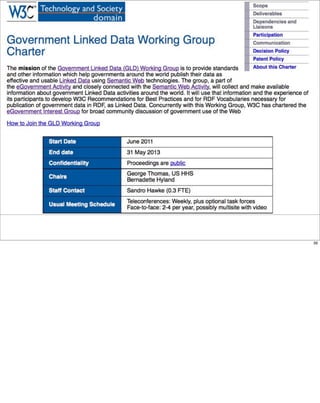

This presentation discusses how linked data supports government transparency and innovation through open data initiatives. It highlights the importance of semantic technologies for improving data access, interoperability, and reuse across sectors including healthcare and environmental management. It also details how platforms like Callimachus enable the efficient management and publication of structured linked data, facilitating collaboration and reducing barriers to information sharing.