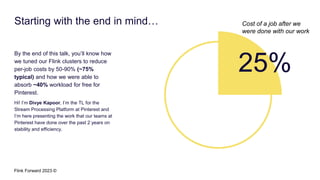

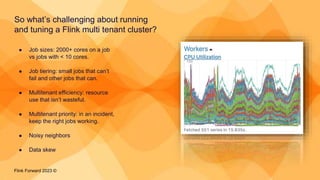

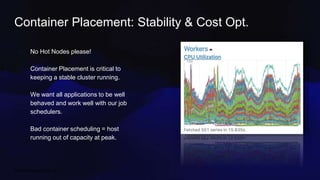

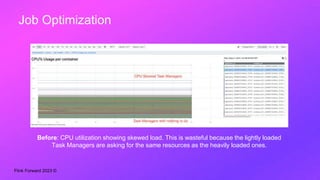

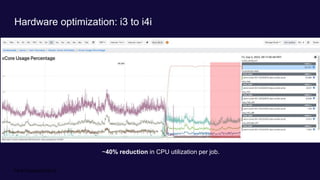

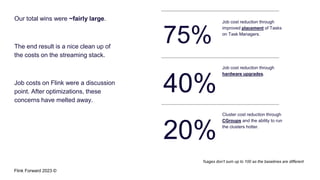

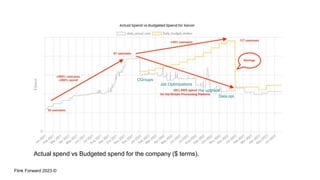

Divye Kapoor discusses how Pinterest tuned its Flink clusters to cut per-job costs by 50 to 90%, with a typical reduction of around 75%. Key strategies included using cgroups for resource management, optimizing job configurations, improving container placement, and upgrading hardware. The results were significant cost savings and improved cluster stability, which allowed Pinterest to absorb increased workloads efficiently.
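The following sketch shows why per-job savings translate into headroom for more work. Suppose per-job cost falls by r percent and the total budget stays fixed. The same spend then covers 100 / (100 - r) times as many jobs. This assumes total cost scales linearly with the number of jobs, which is a simplification. The helper name `capacity_multiplier` is hypothetical and is not taken from the talk.

```python
def capacity_multiplier(reduction_pct: float) -> float:
    """Return how many times more jobs fit in the same budget after a
    per-job cost reduction of `reduction_pct` percent.

    Hypothetical helper; assumes total cost scales linearly with job count.
    """
    if not 0 <= reduction_pct < 100:
        raise ValueError("reduction_pct must be in [0, 100)")
    remaining = 100 - reduction_pct  # percent of the original per-job cost still paid
    return 100 / remaining


# The 50%, 75% (typical), and 90% figures quoted in the summary above.
for pct in (50, 75, 90):
    print(f"{pct}% cheaper per job -> {capacity_multiplier(pct):g}x jobs per budget")
```

Under these assumptions, the typical 75% reduction fits roughly four times as many jobs into the same spend, and the 90% end of the range fits ten times as many.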