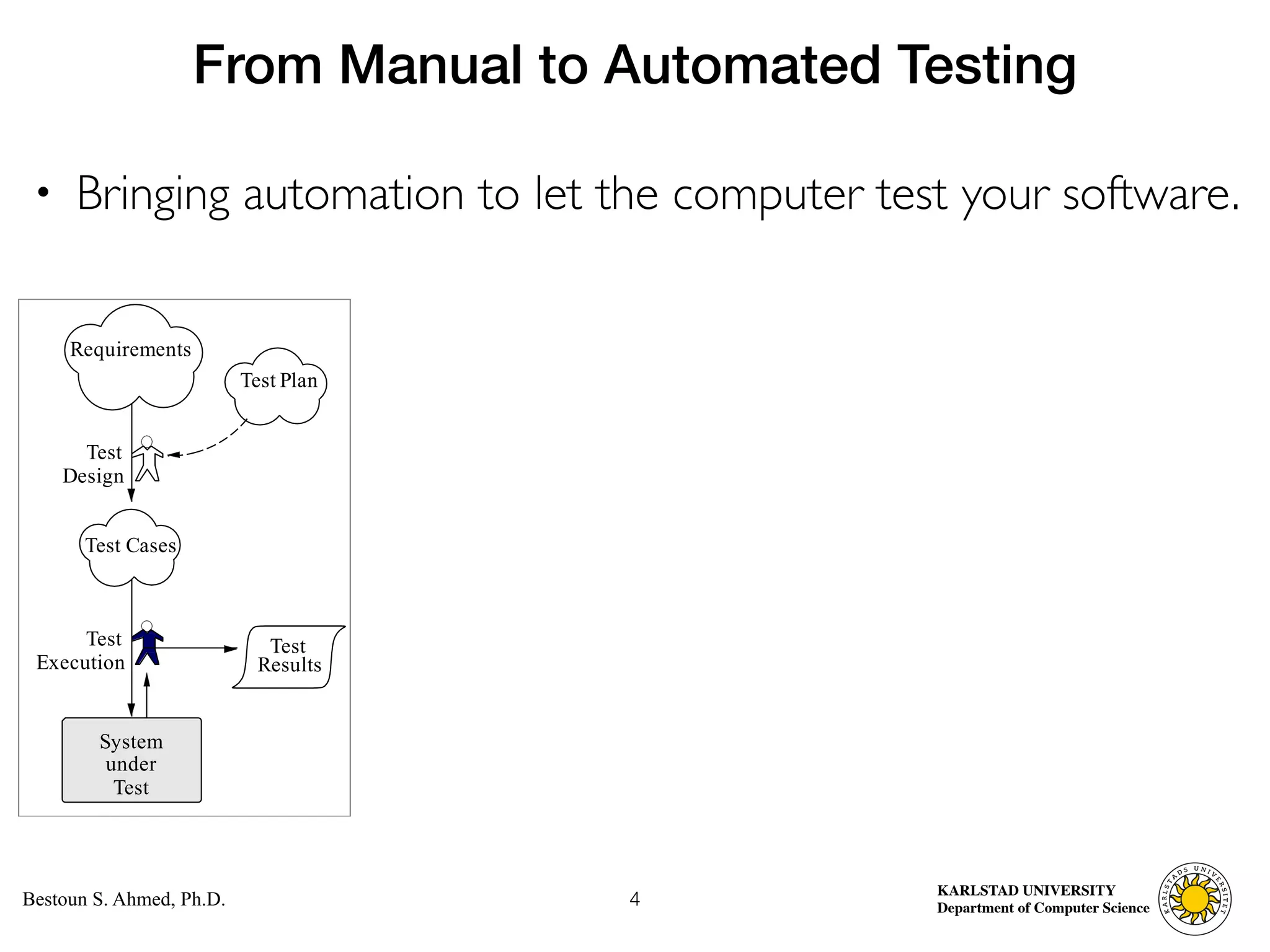

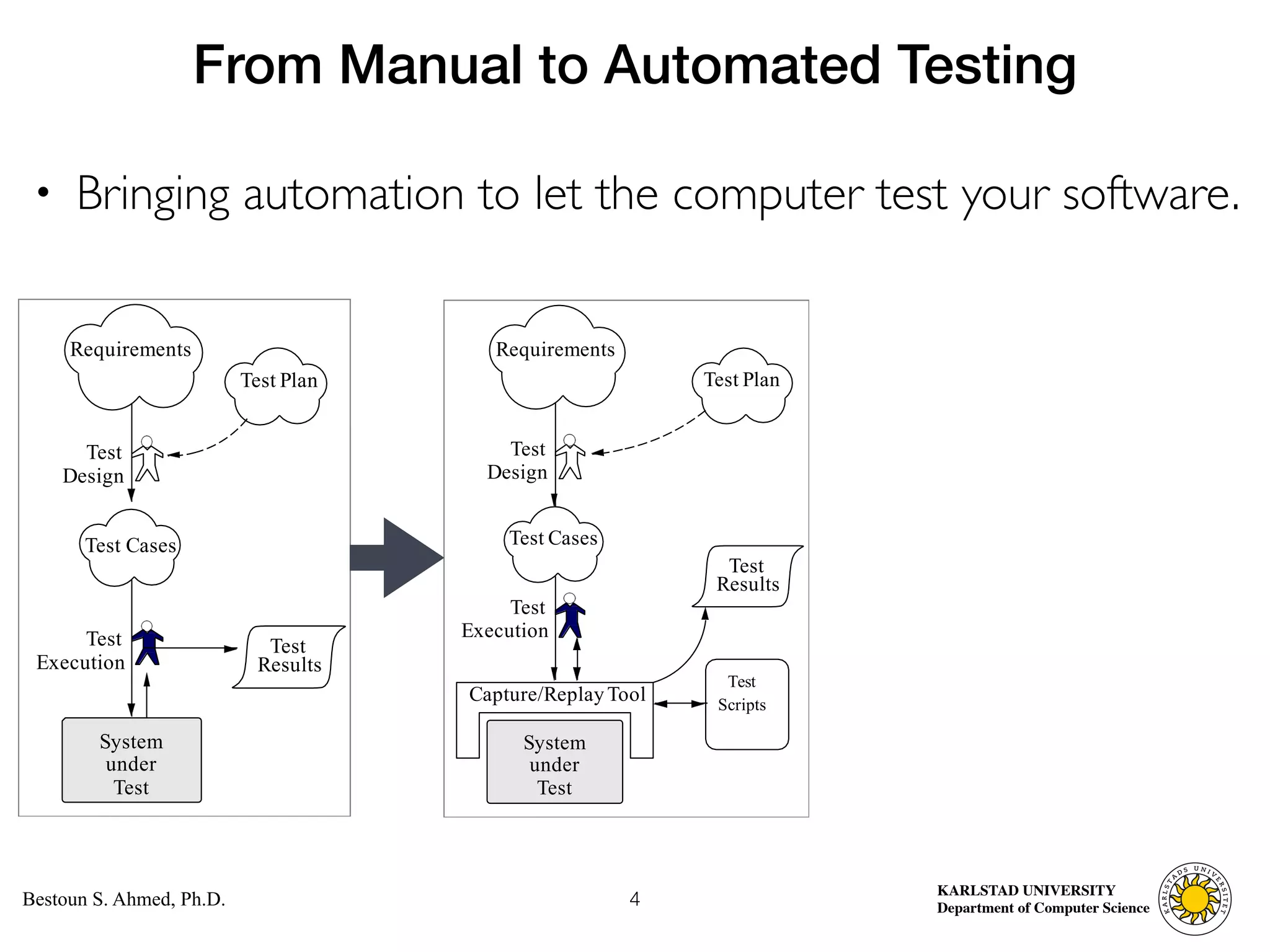

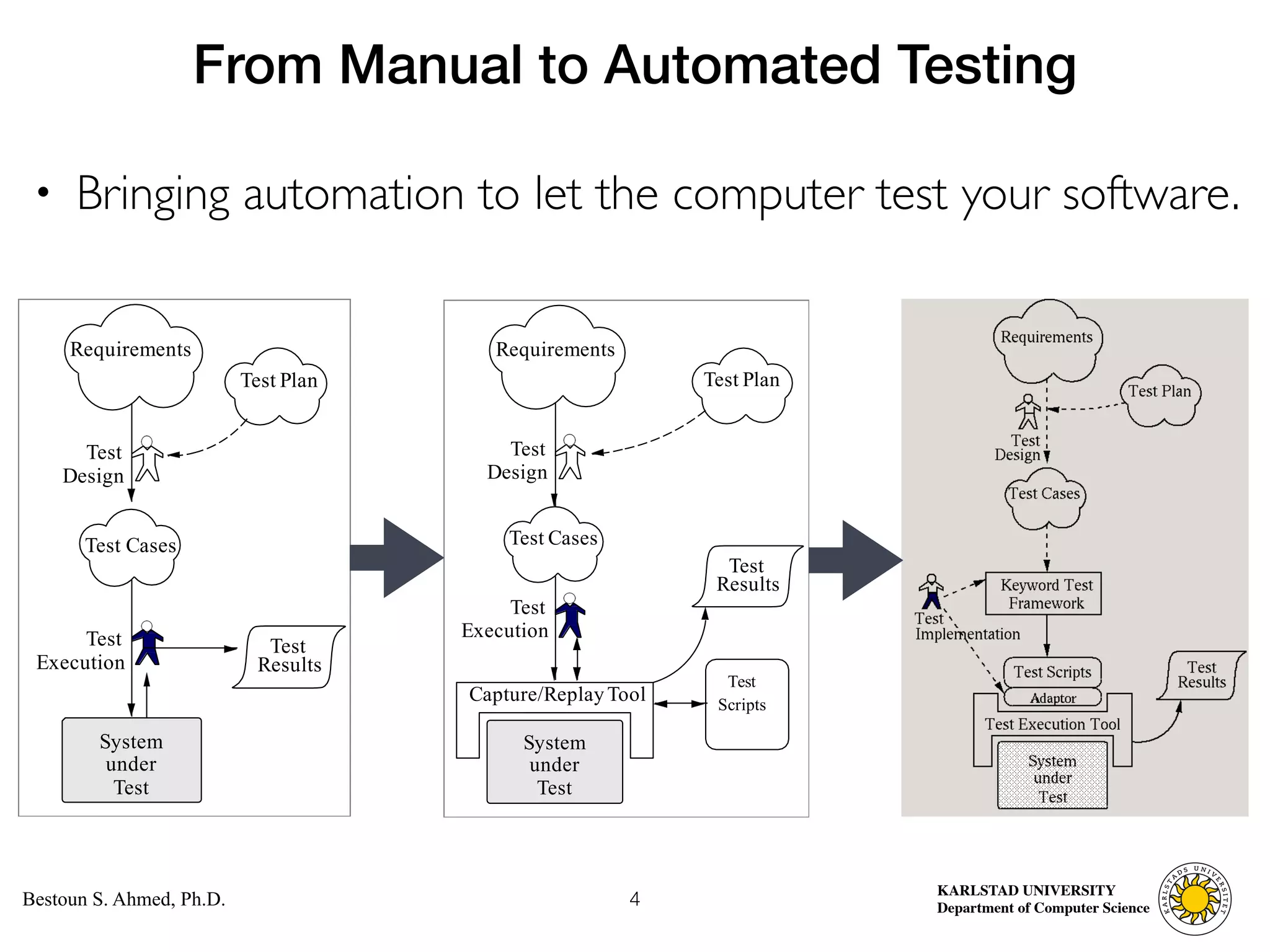

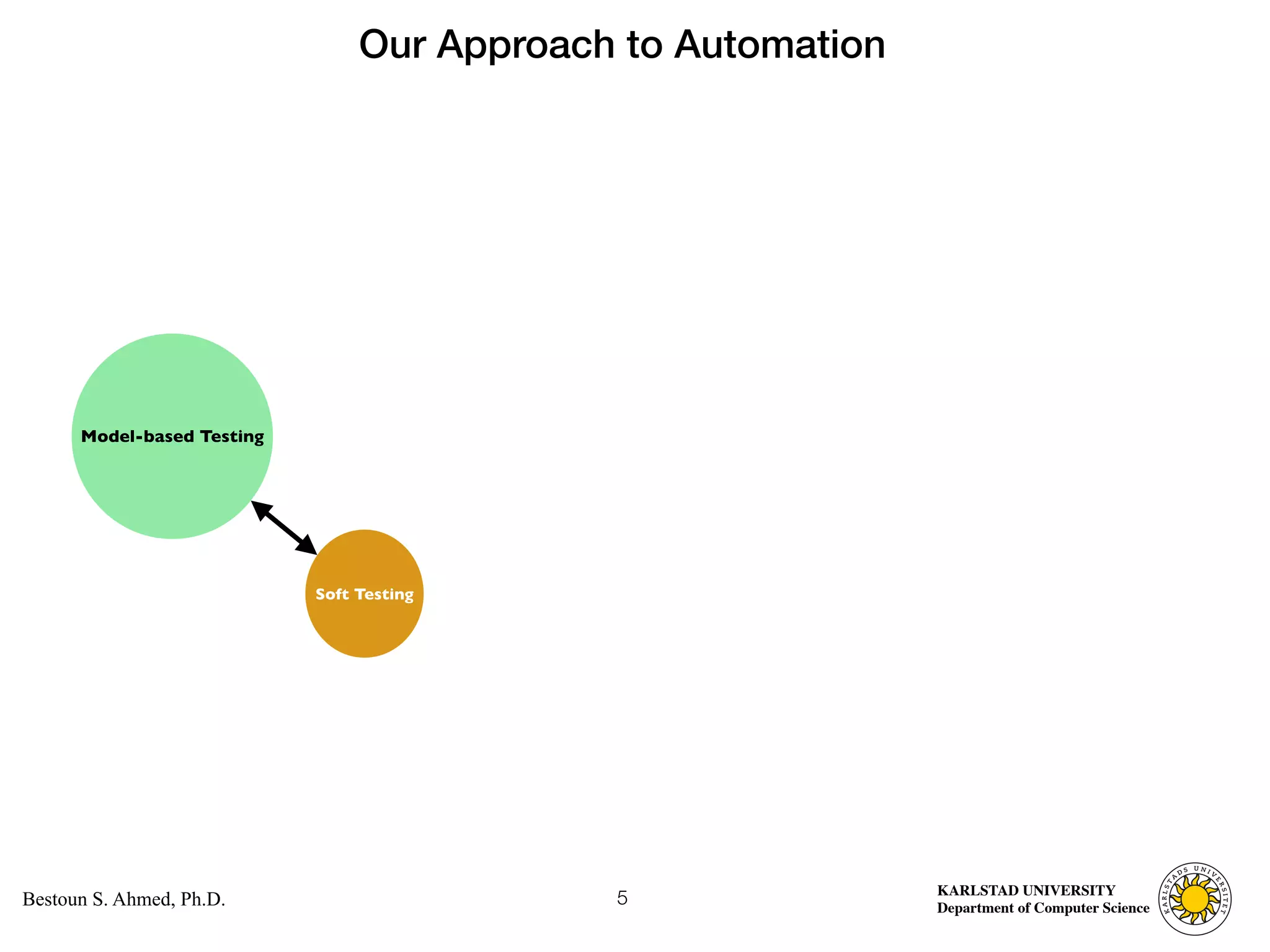

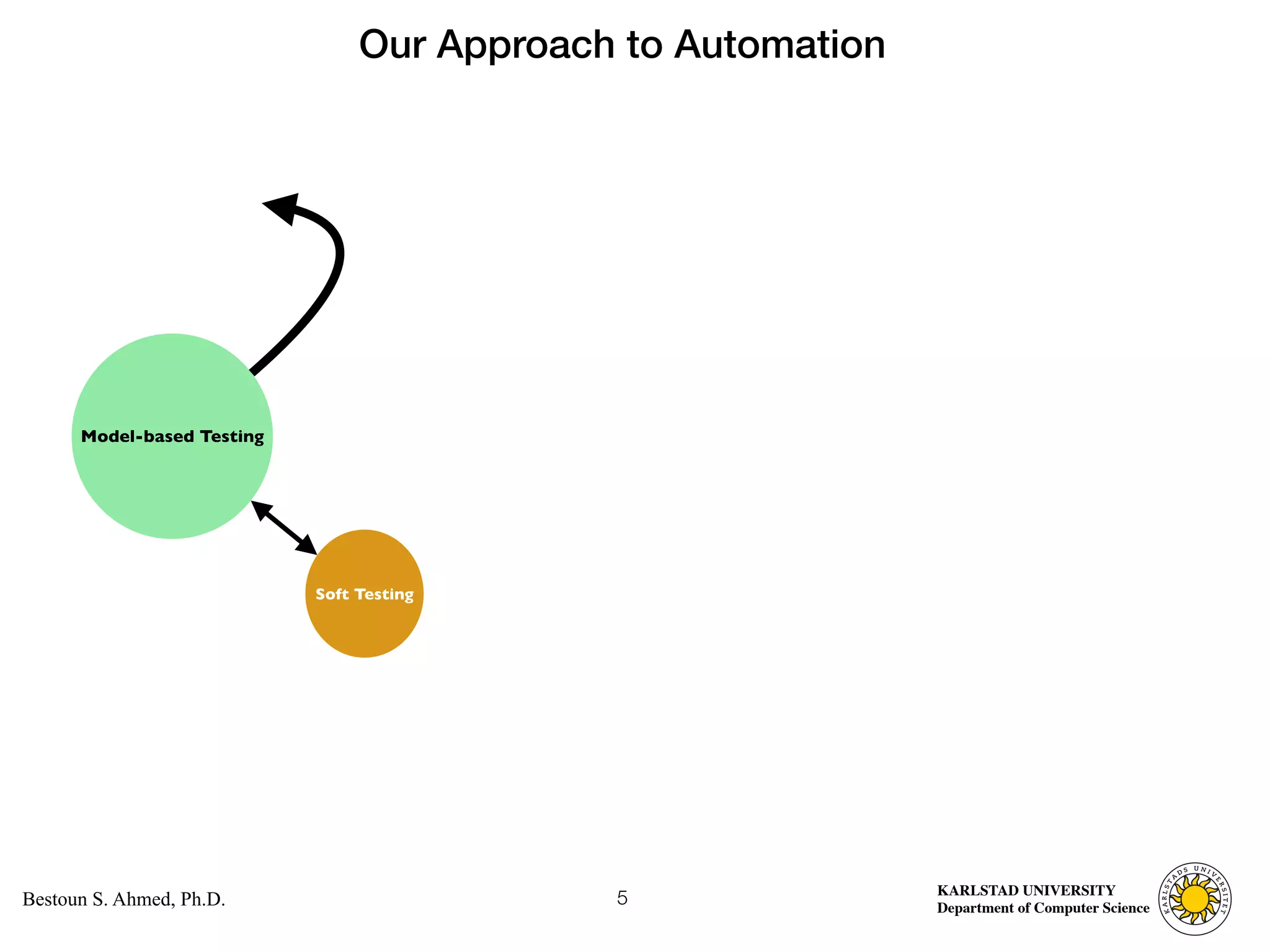

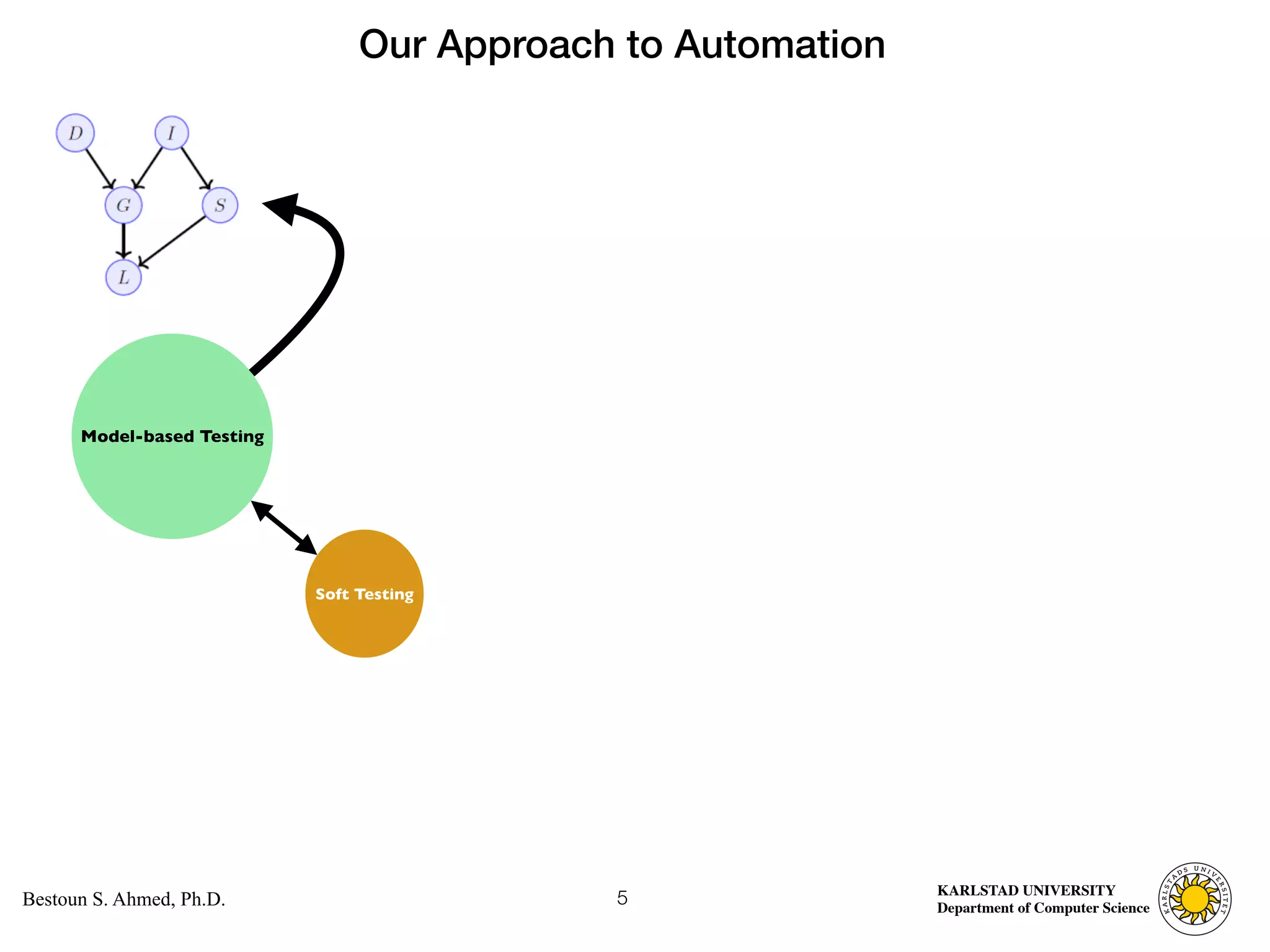

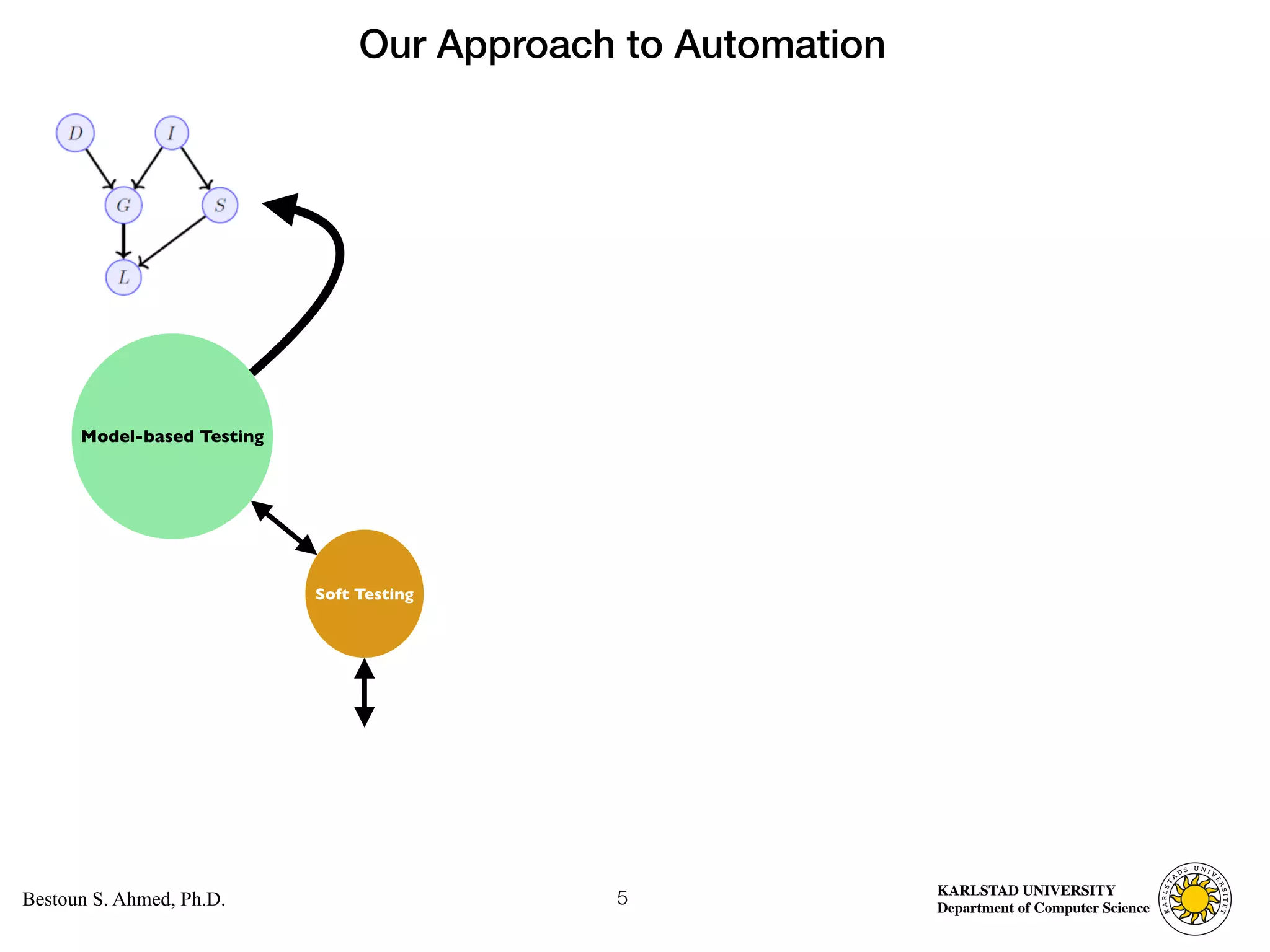

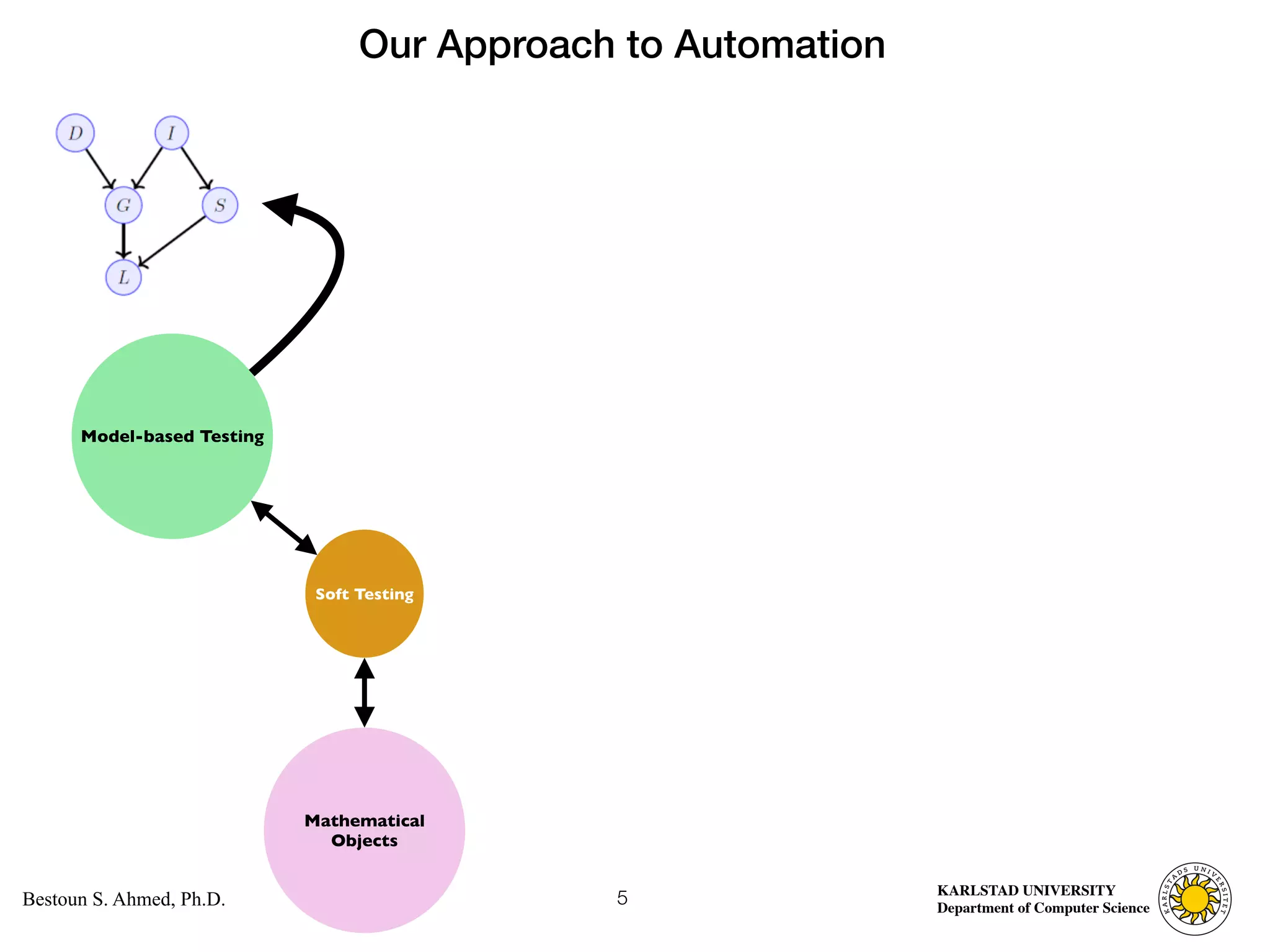

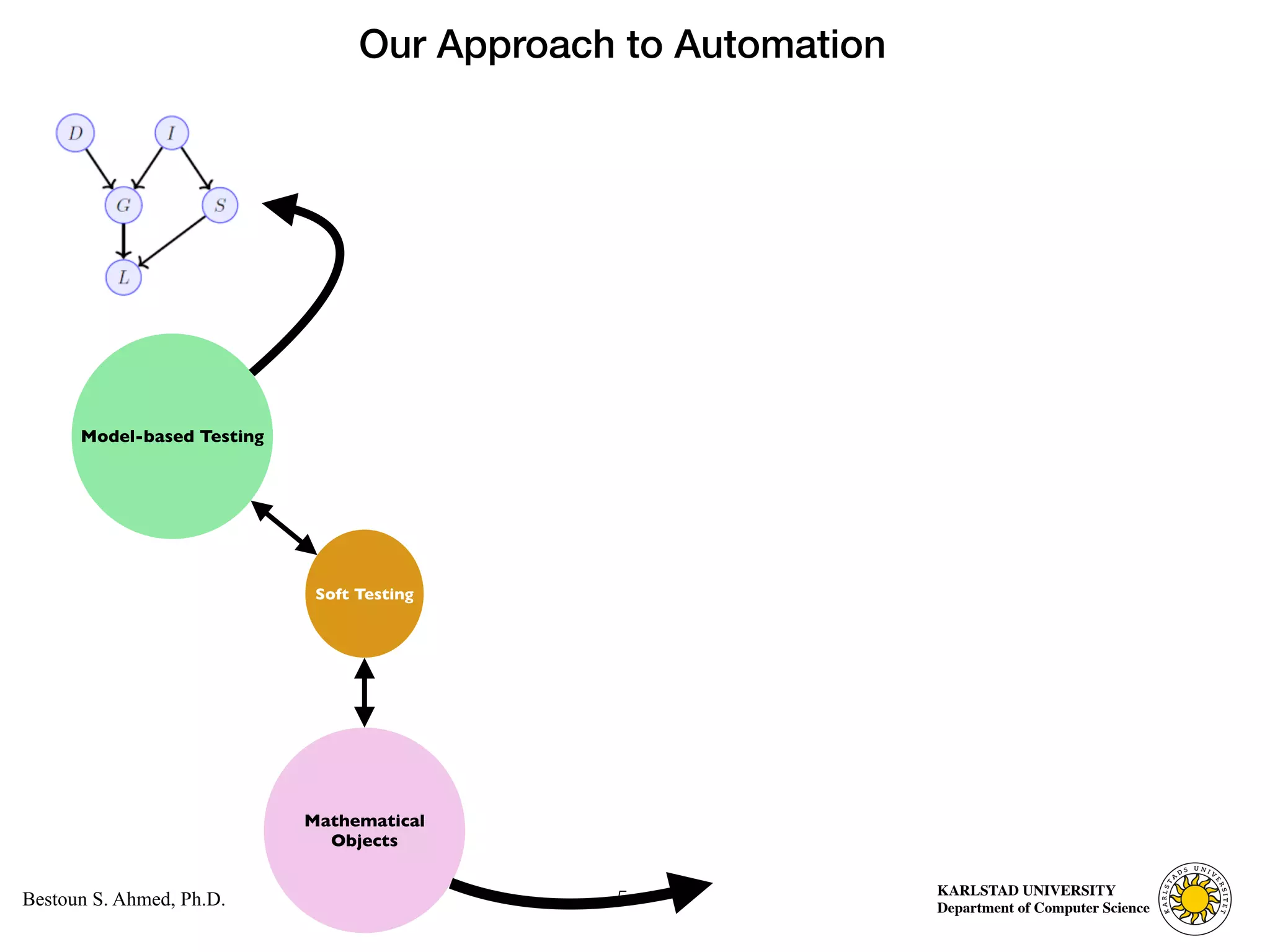

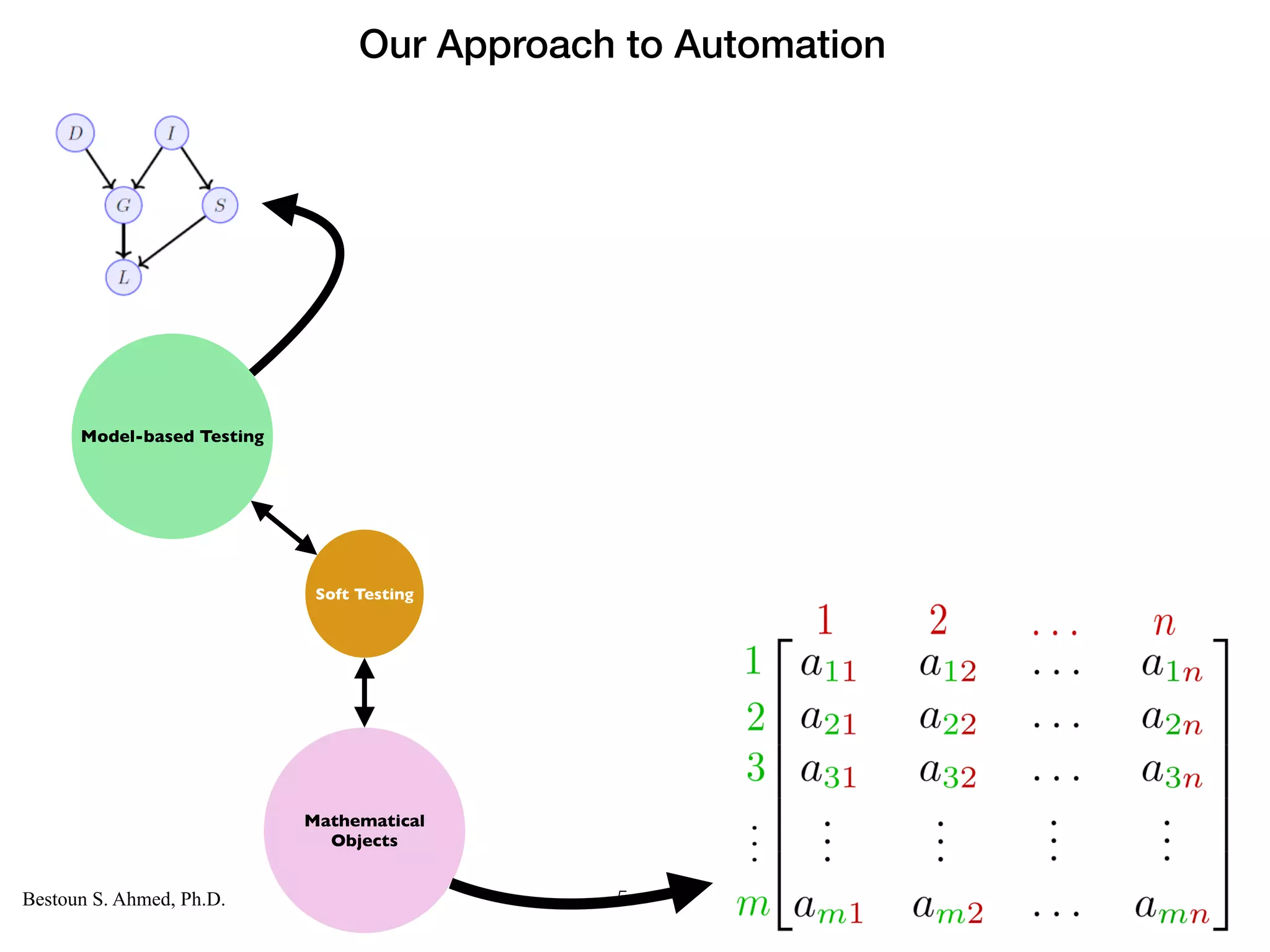

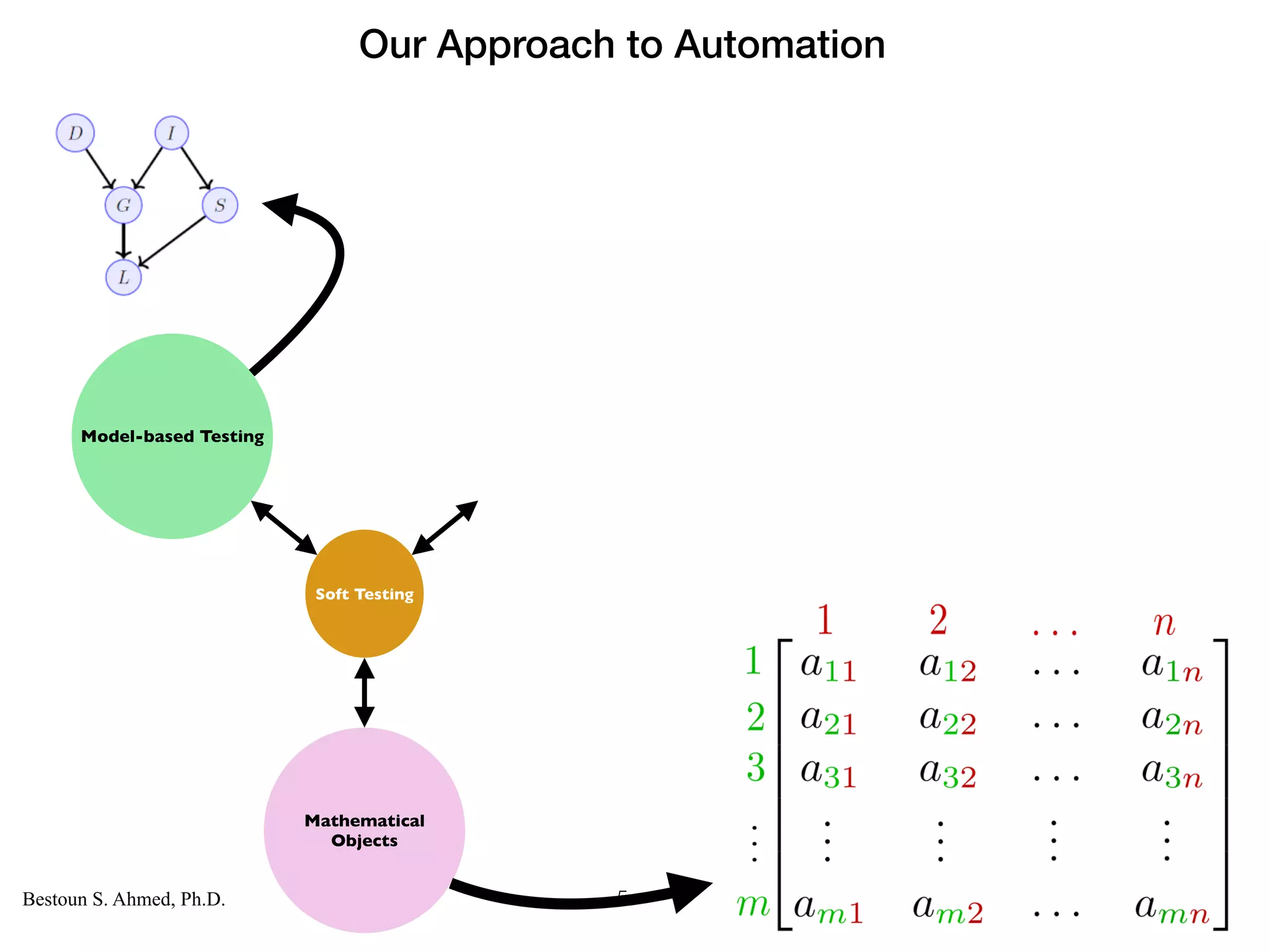

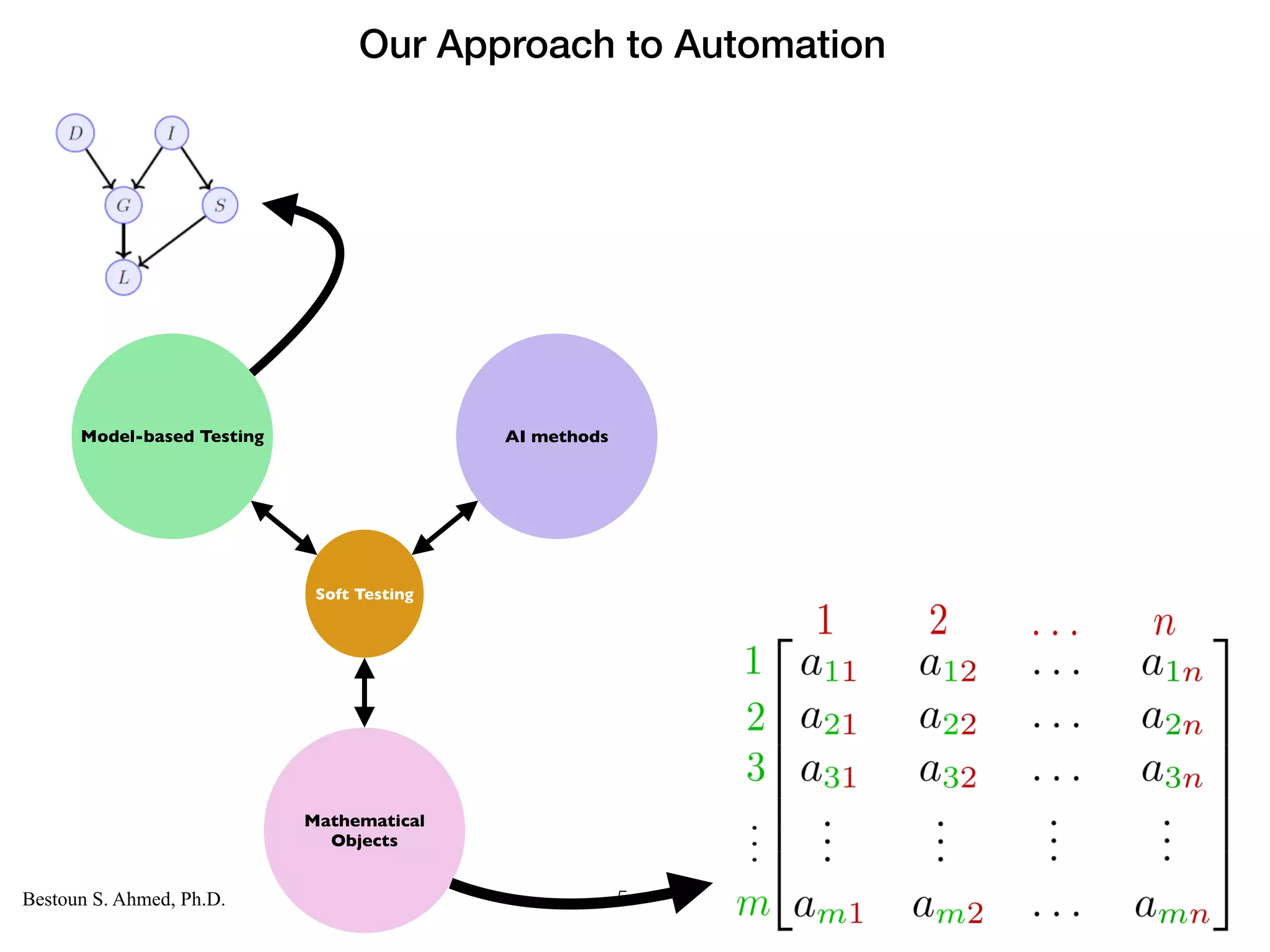

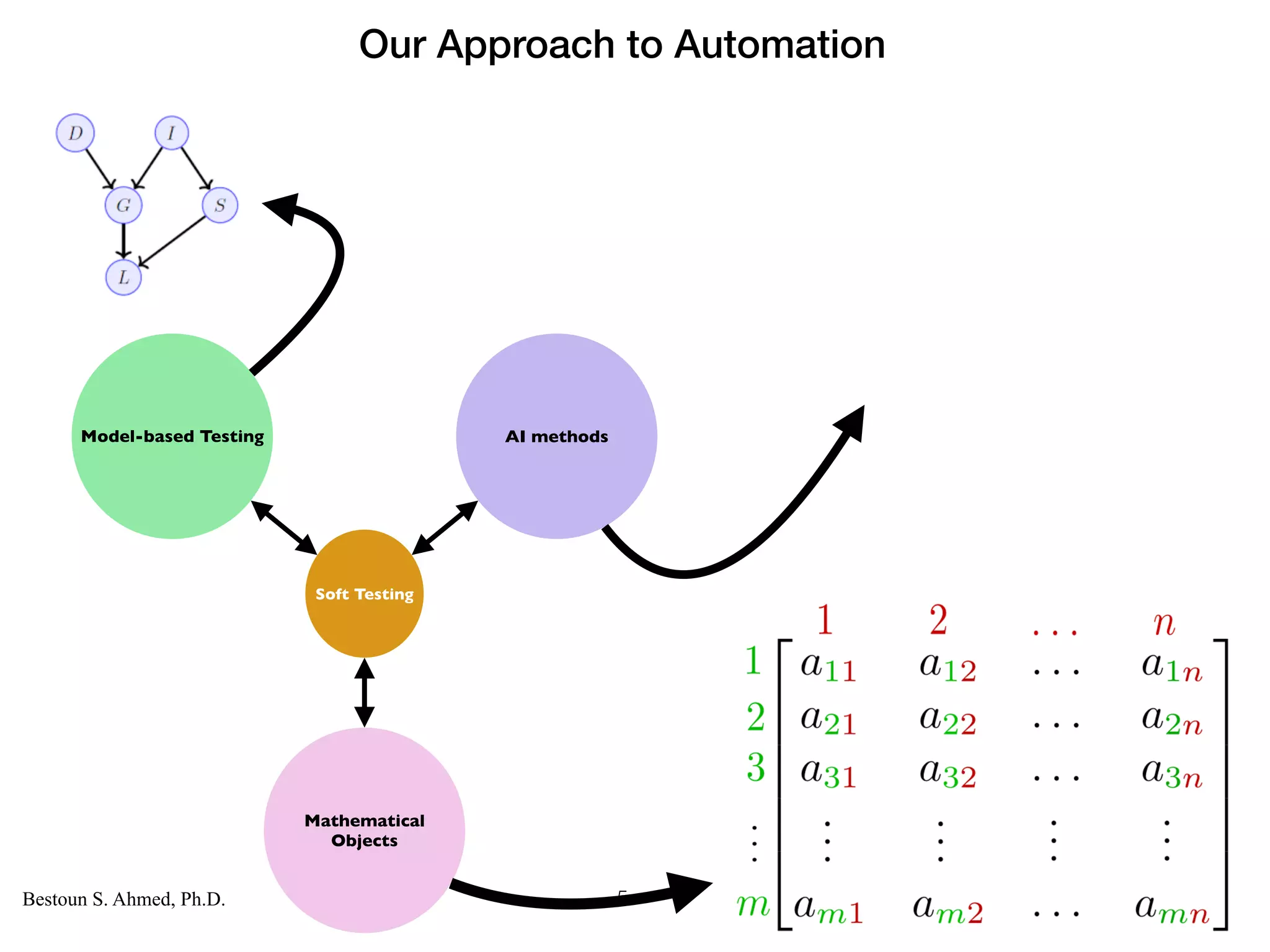

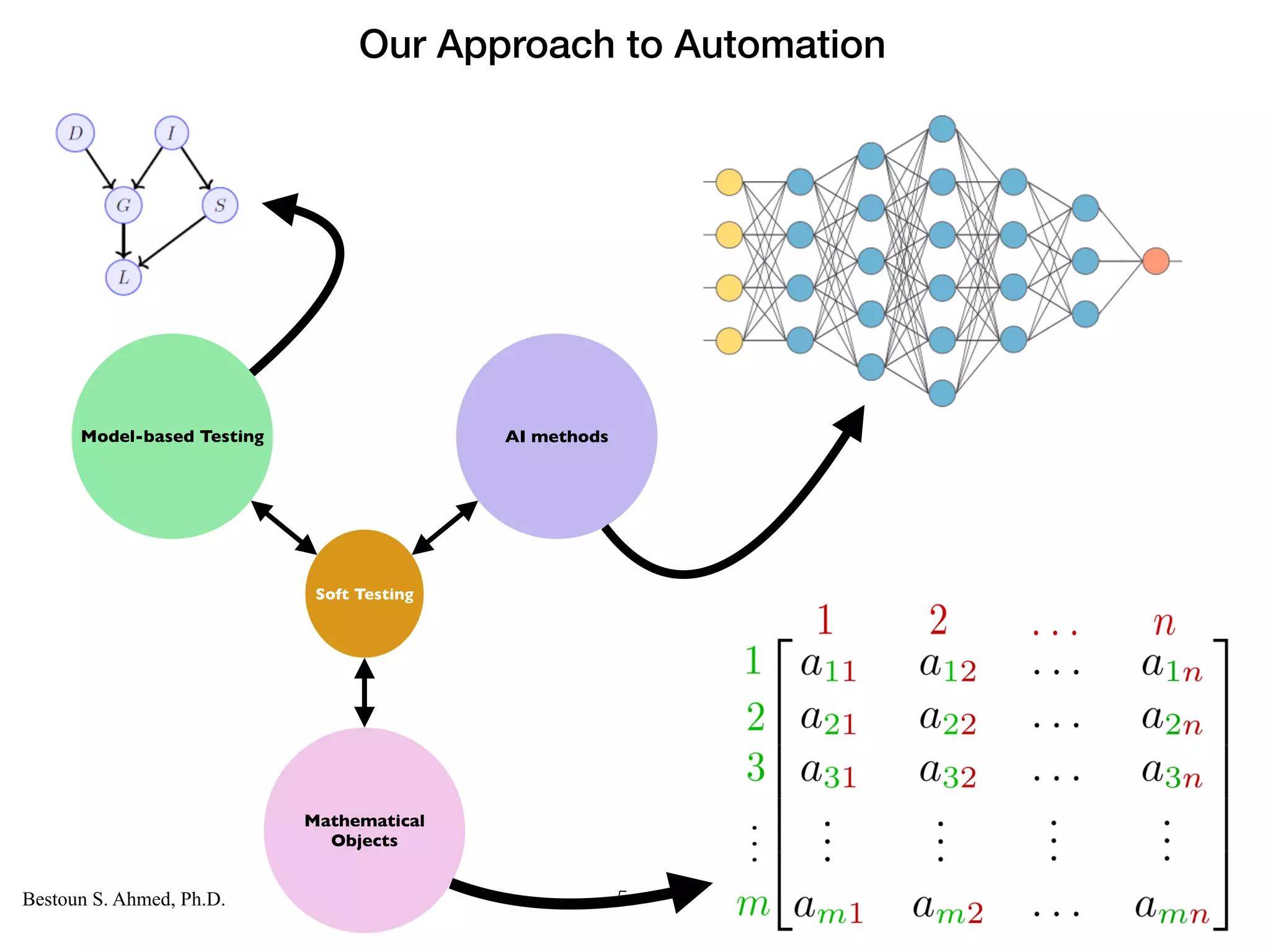

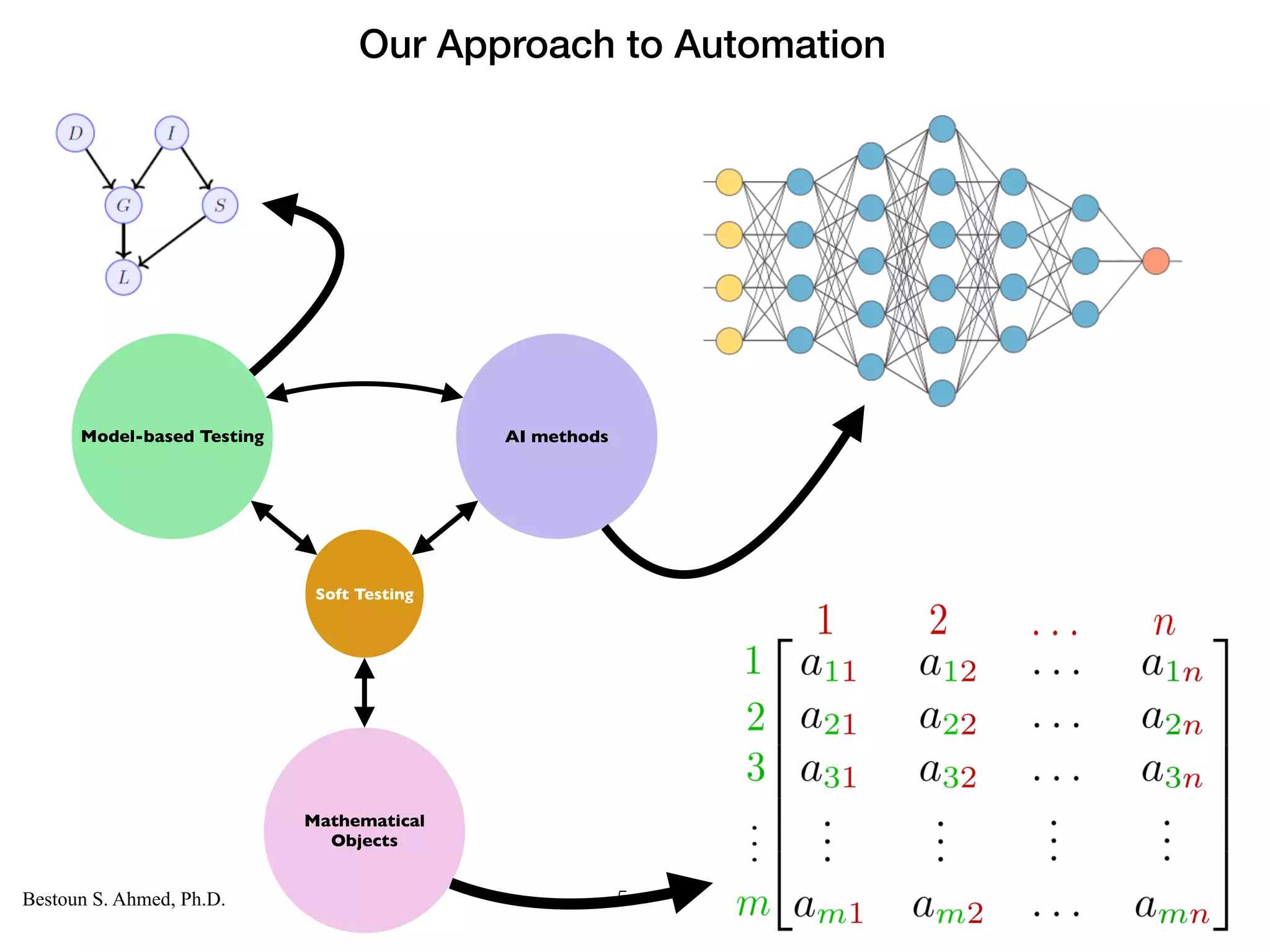

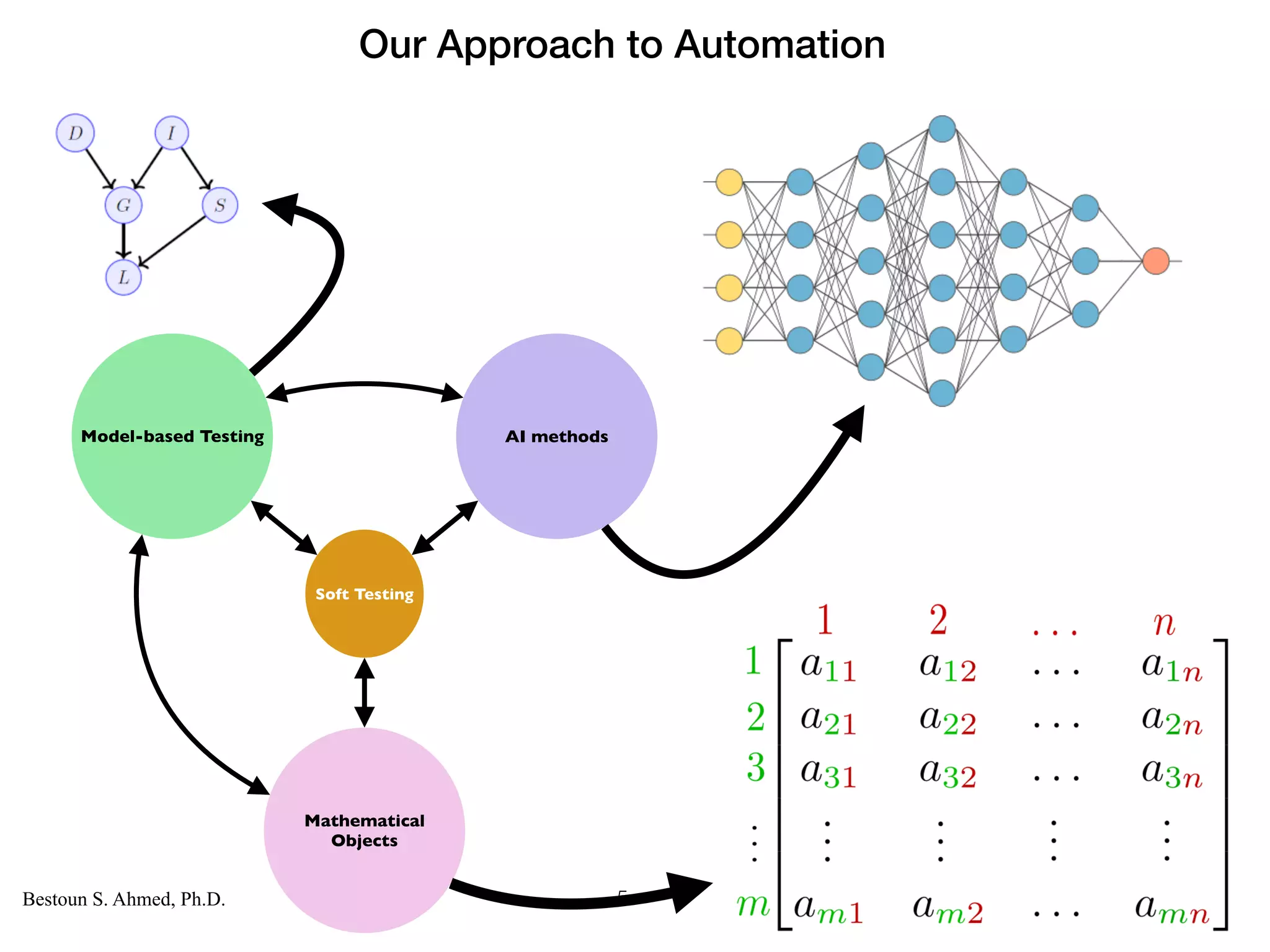

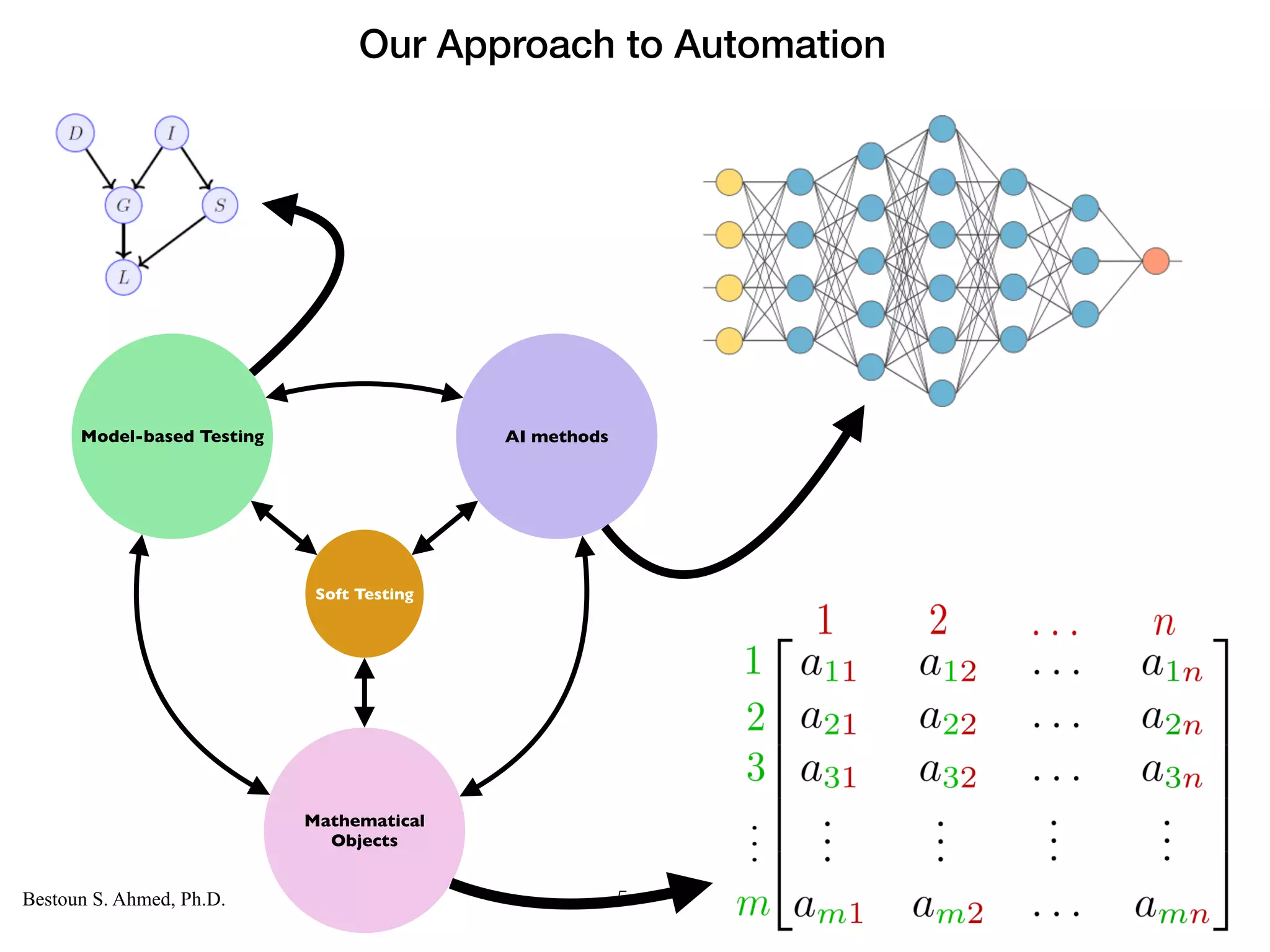

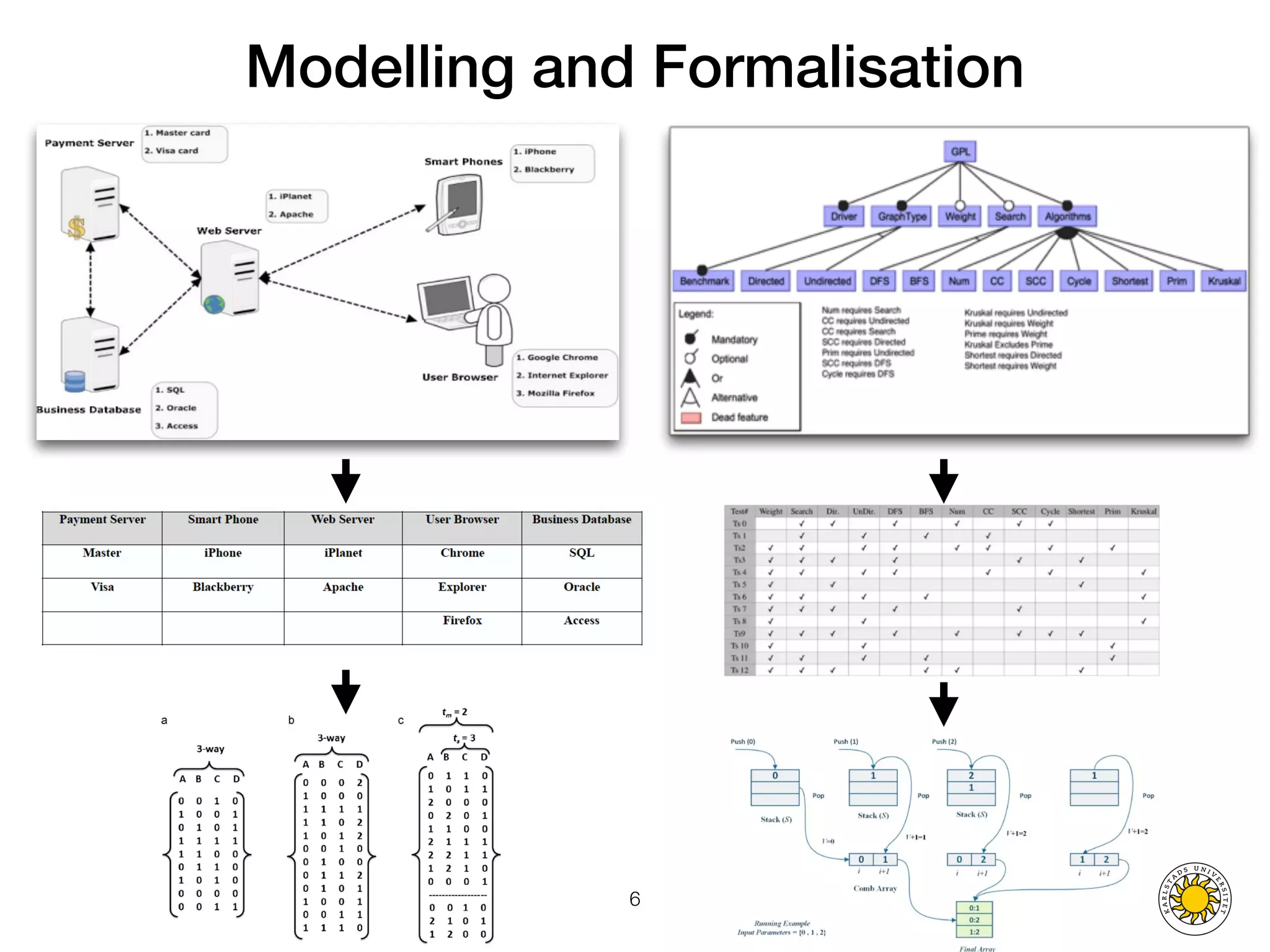

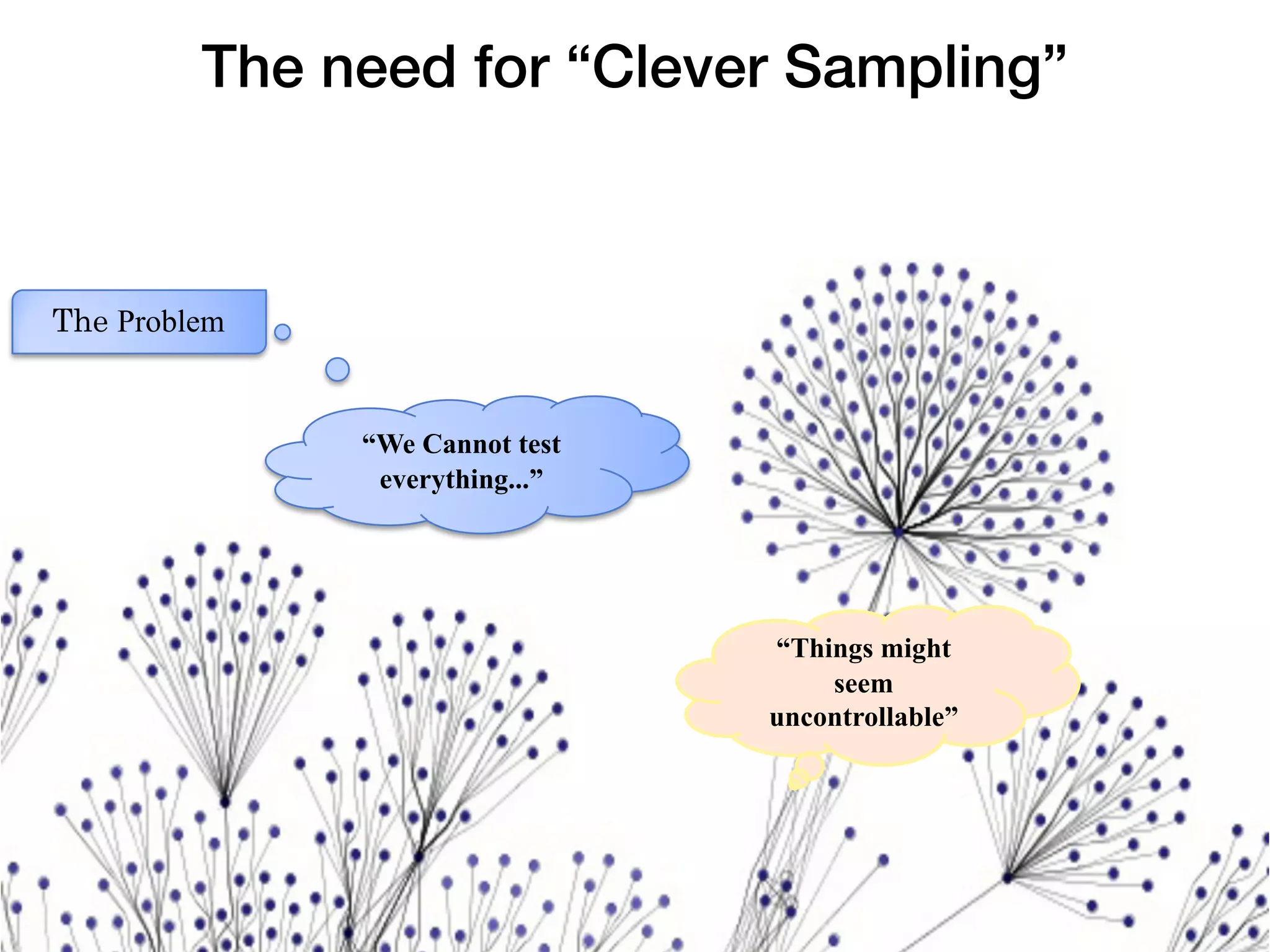

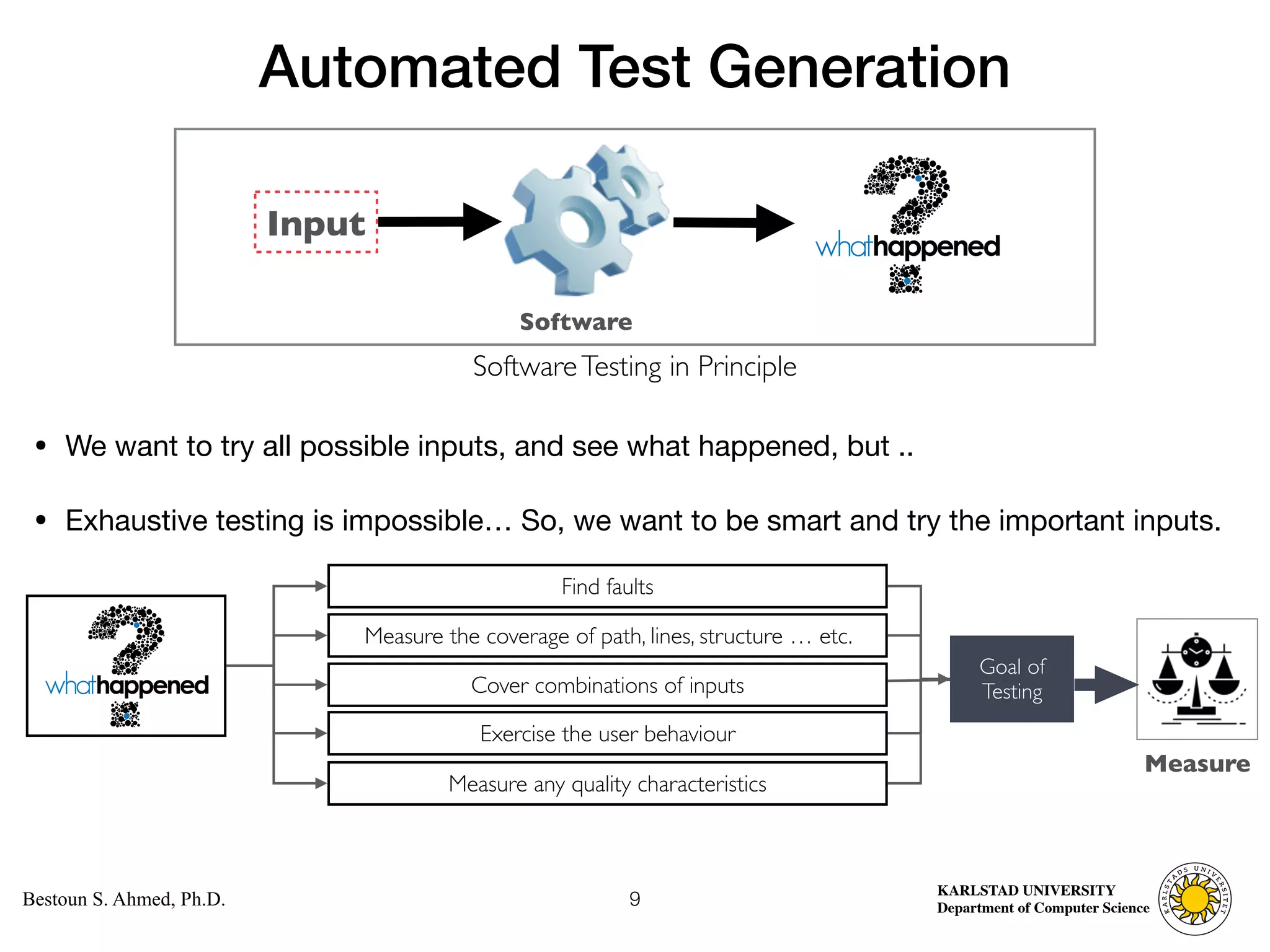

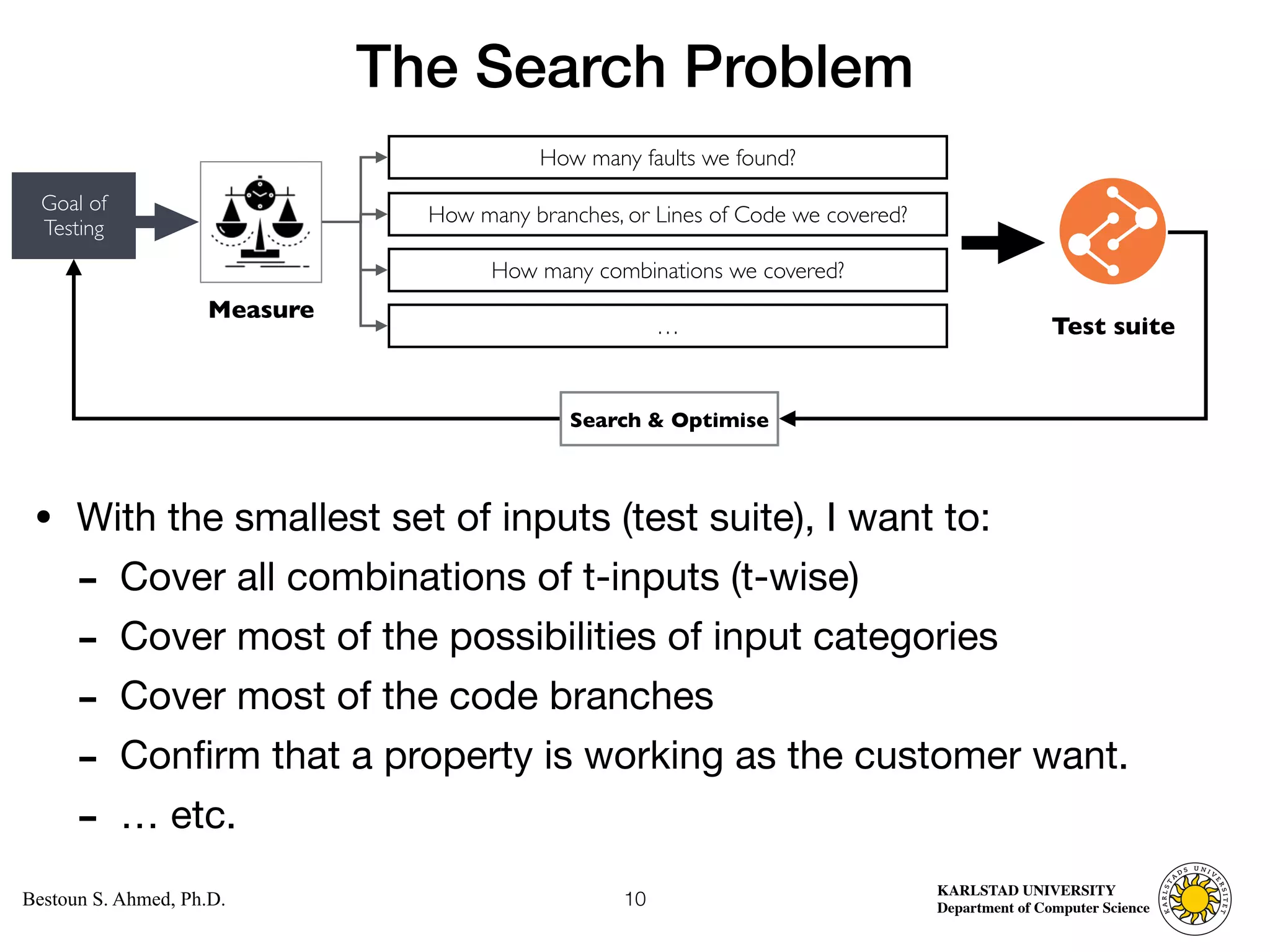

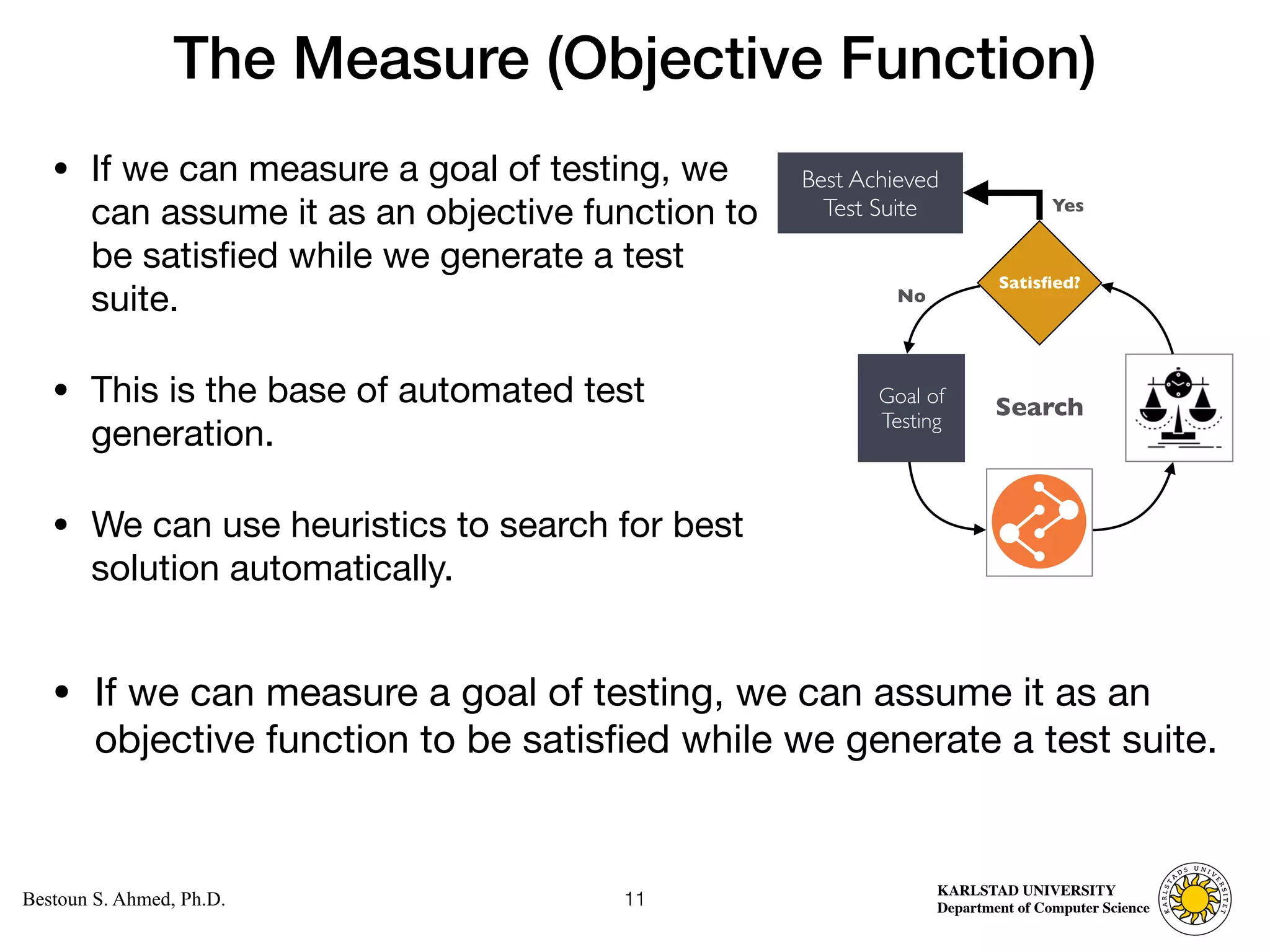

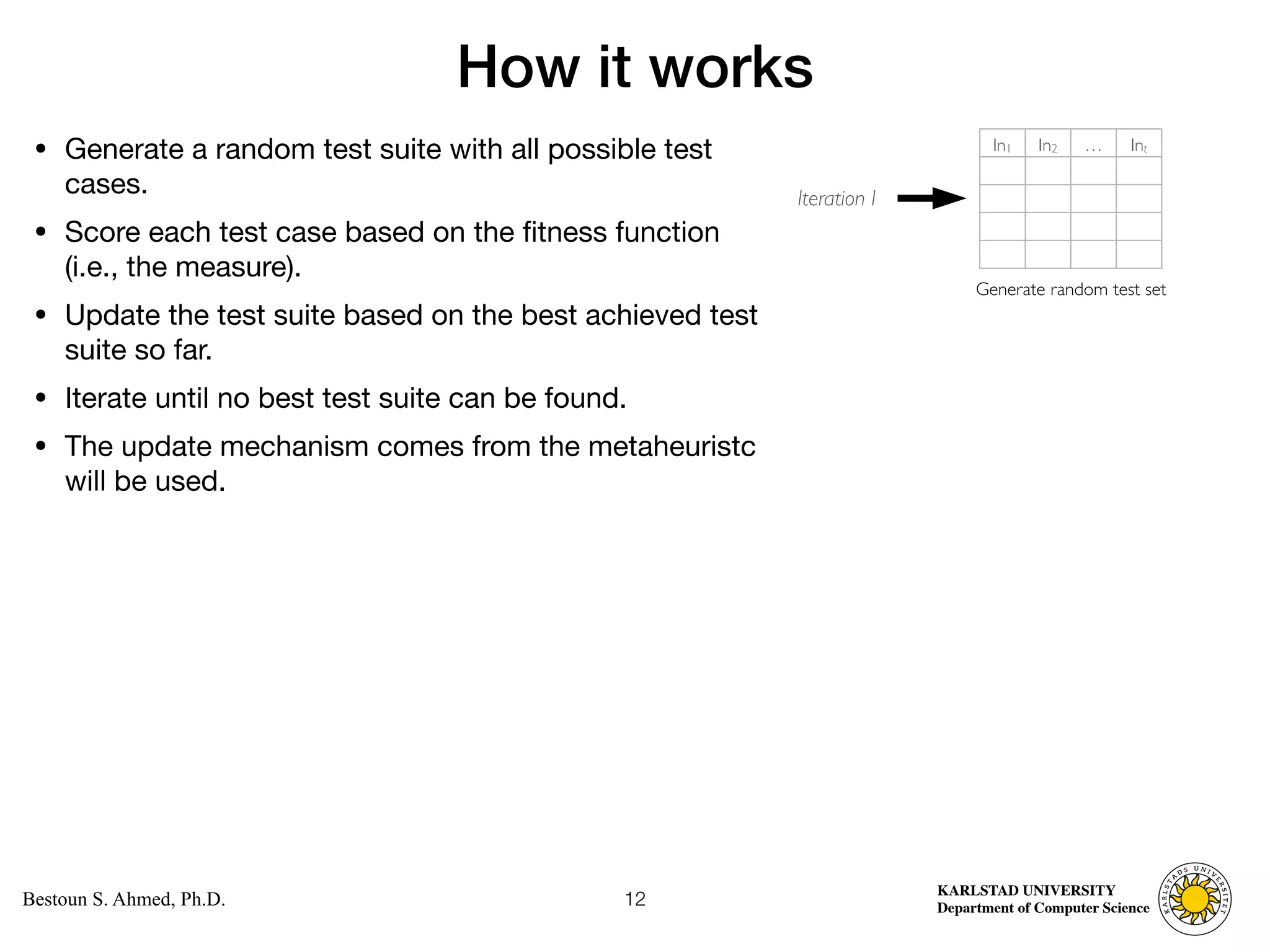

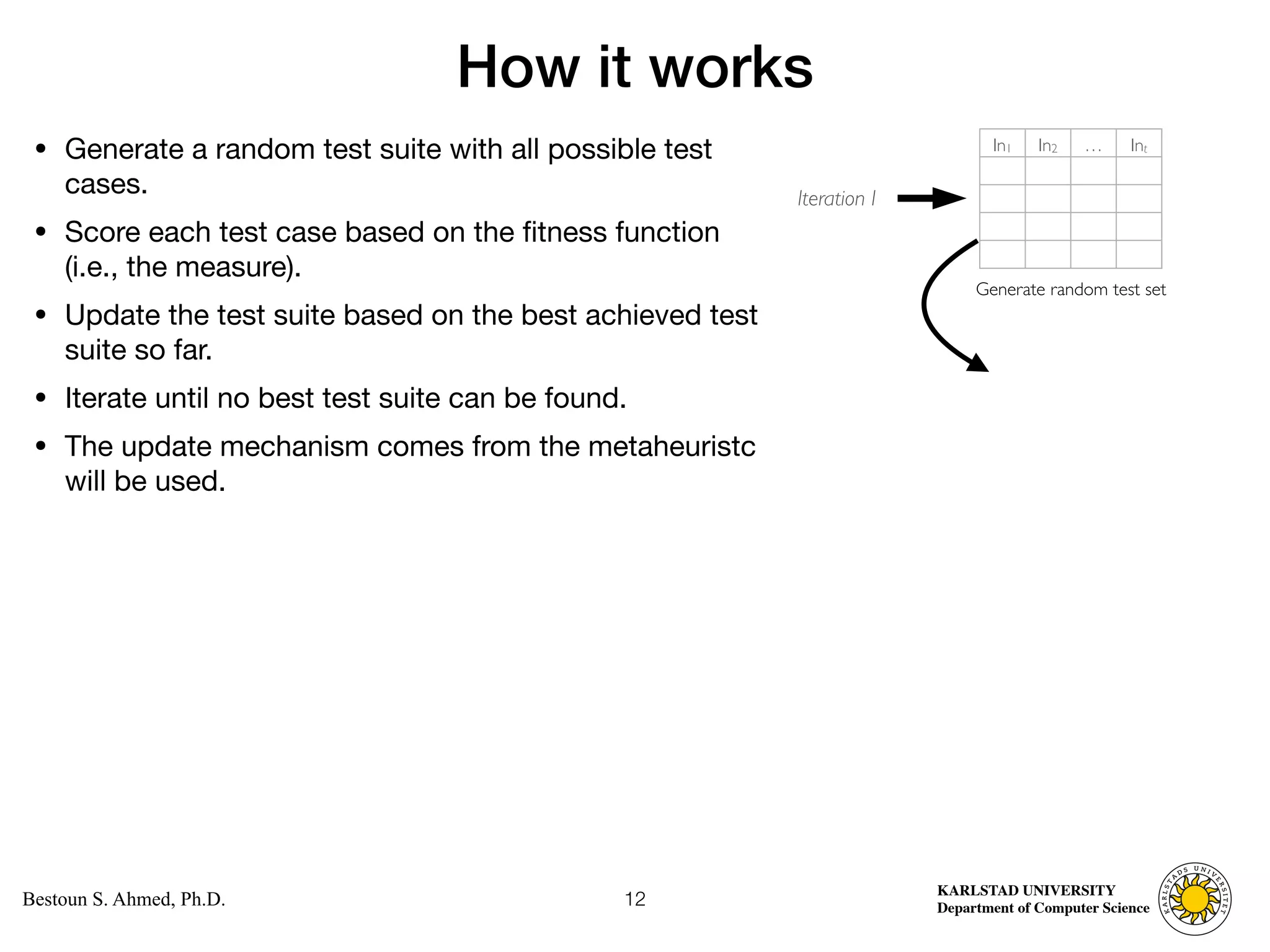

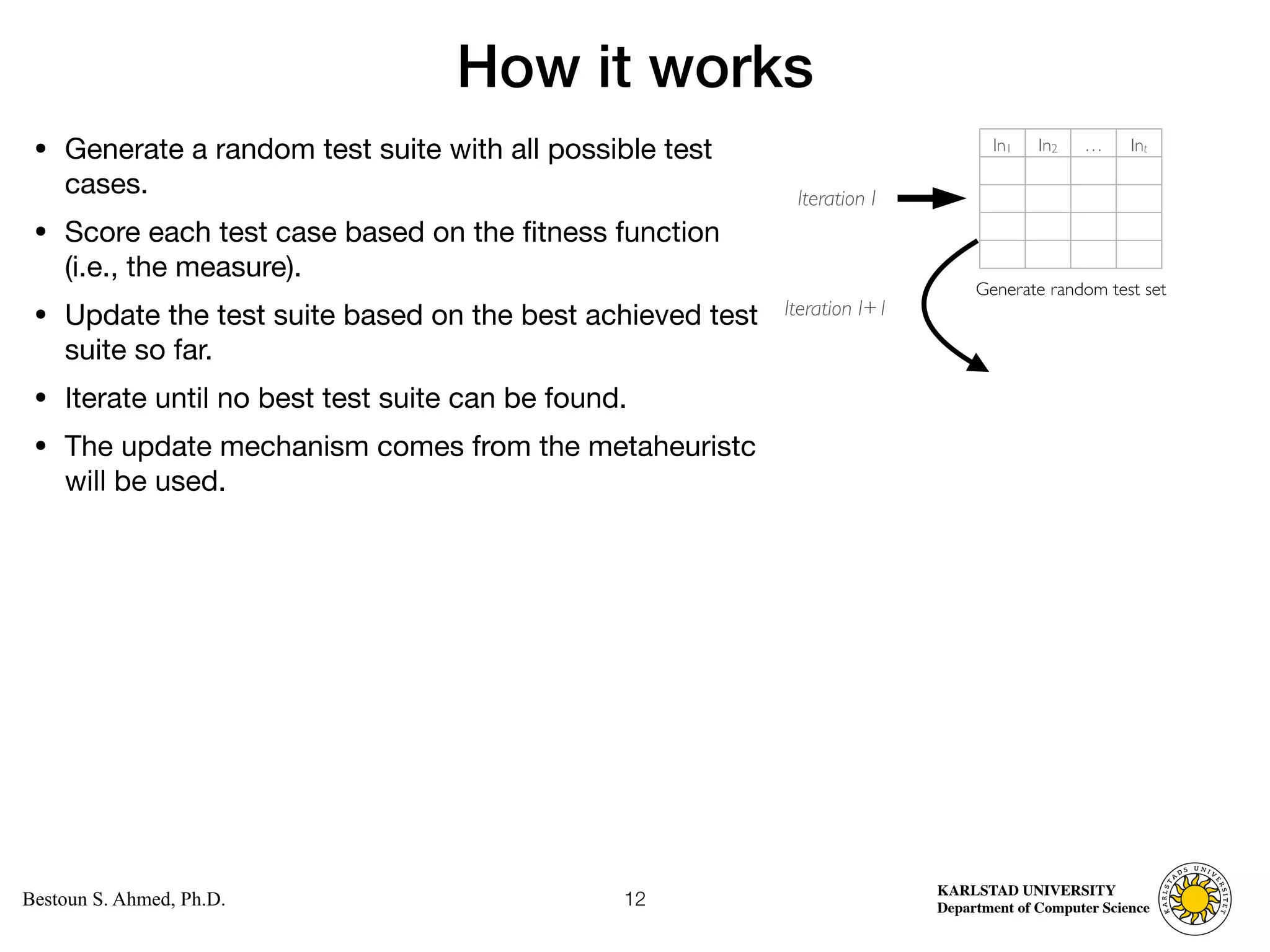

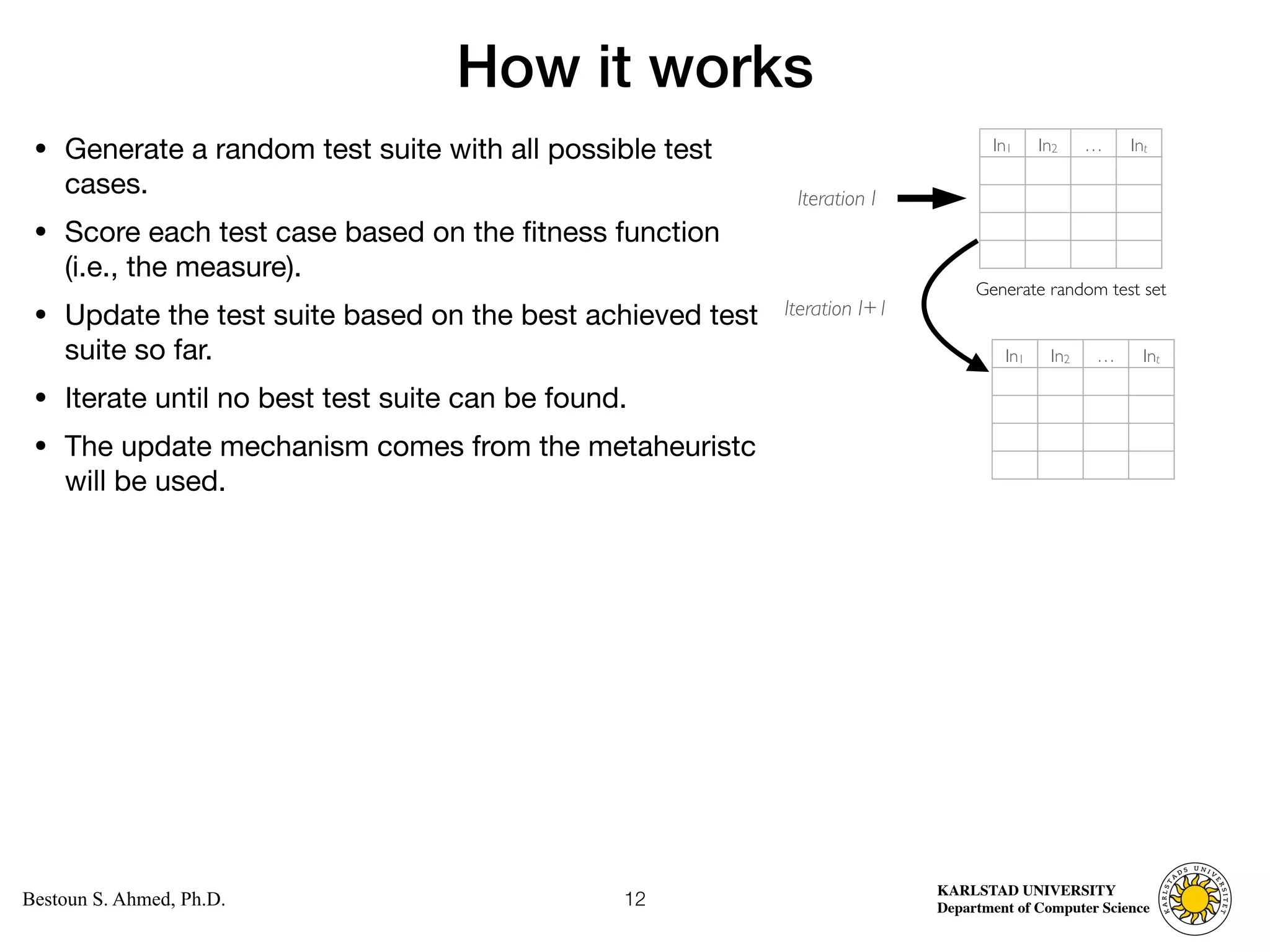

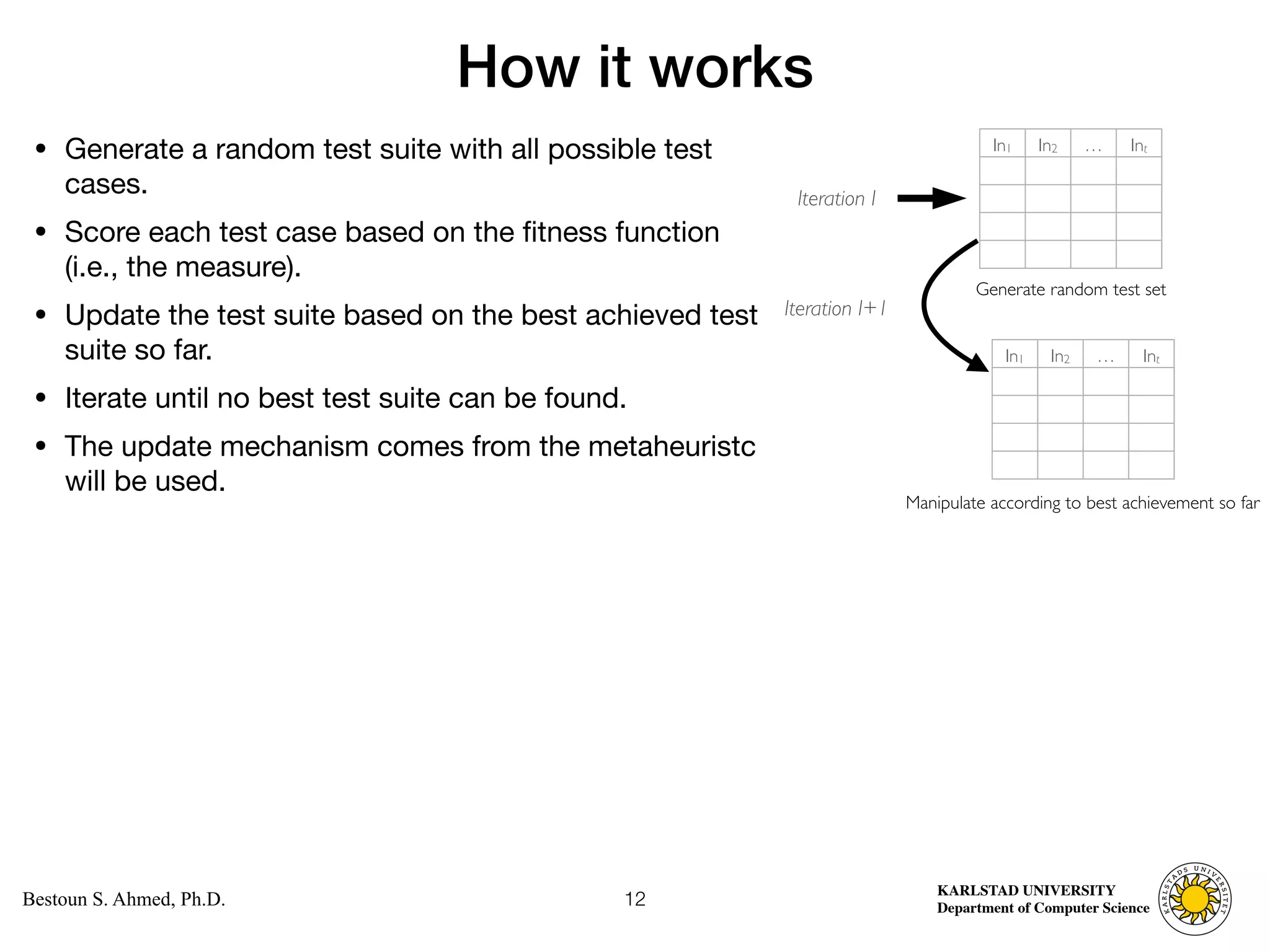

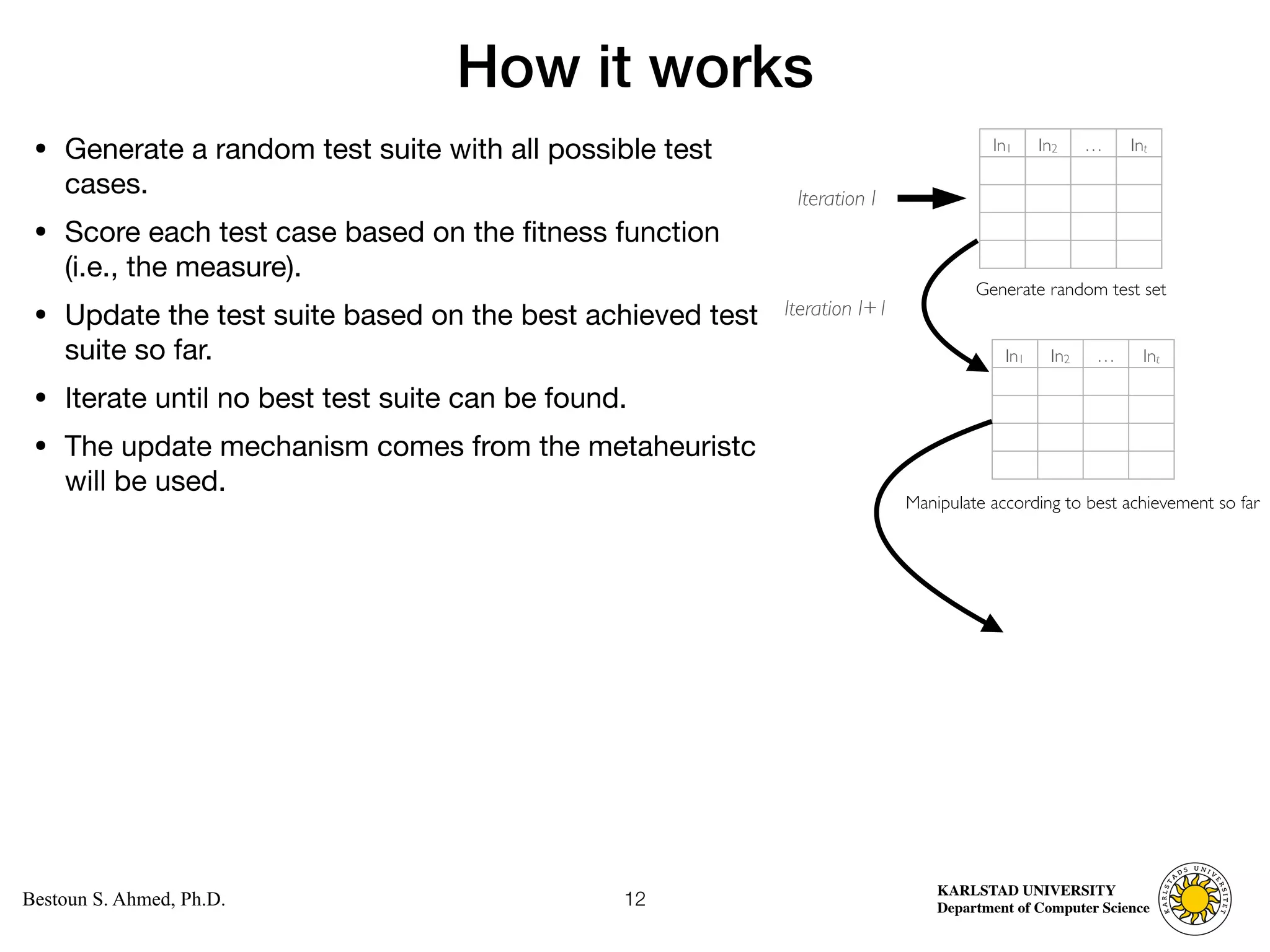

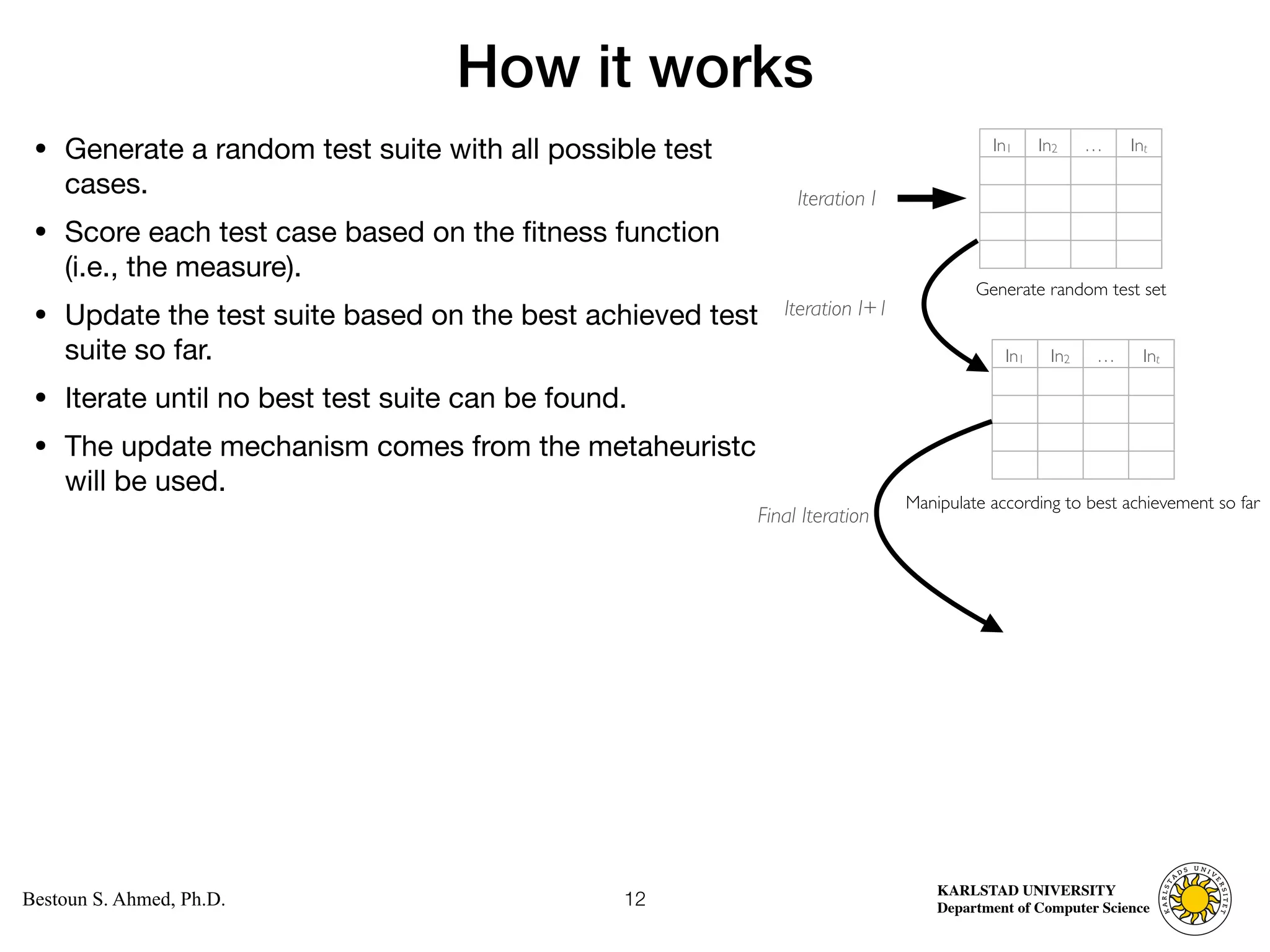

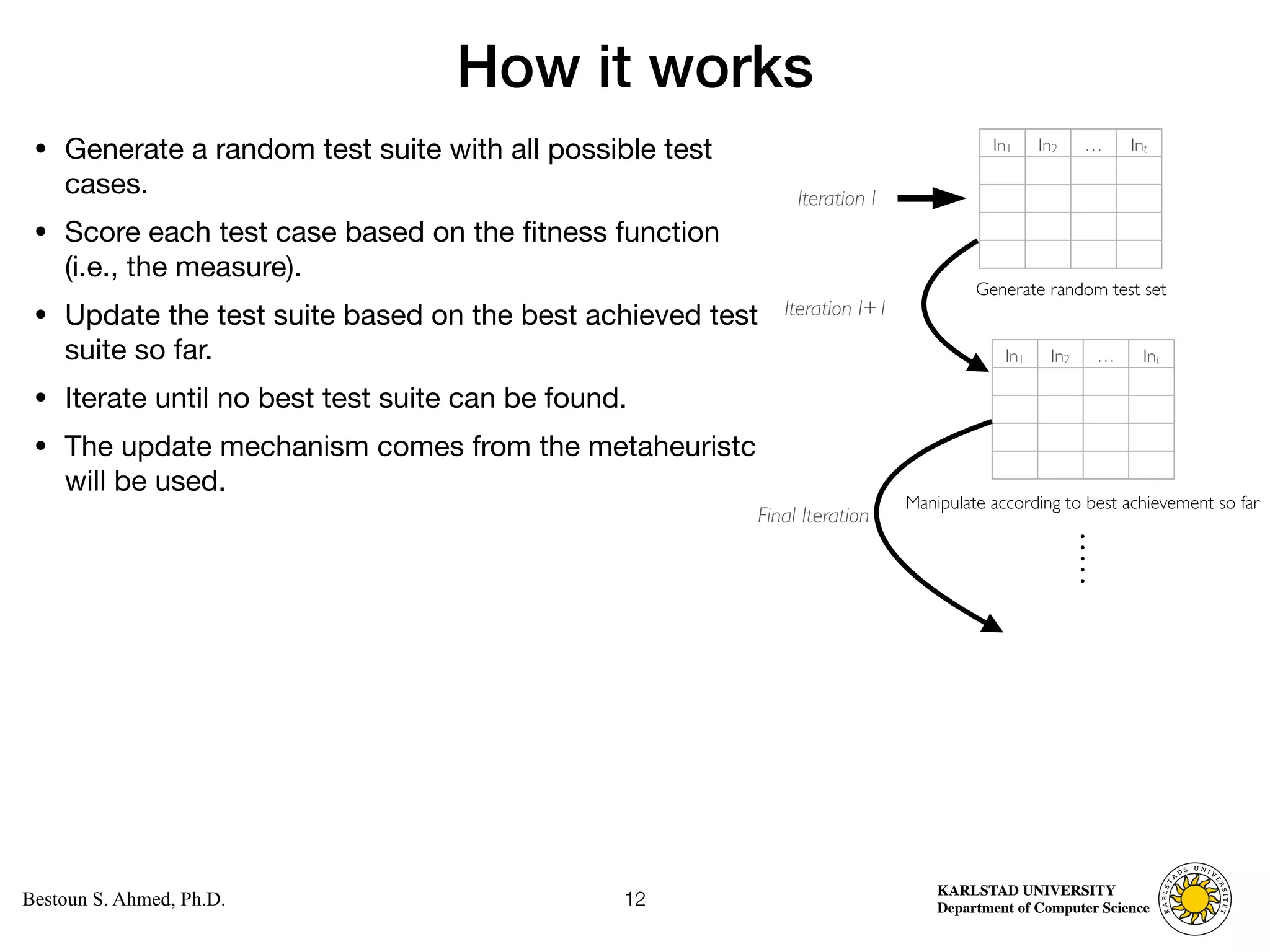

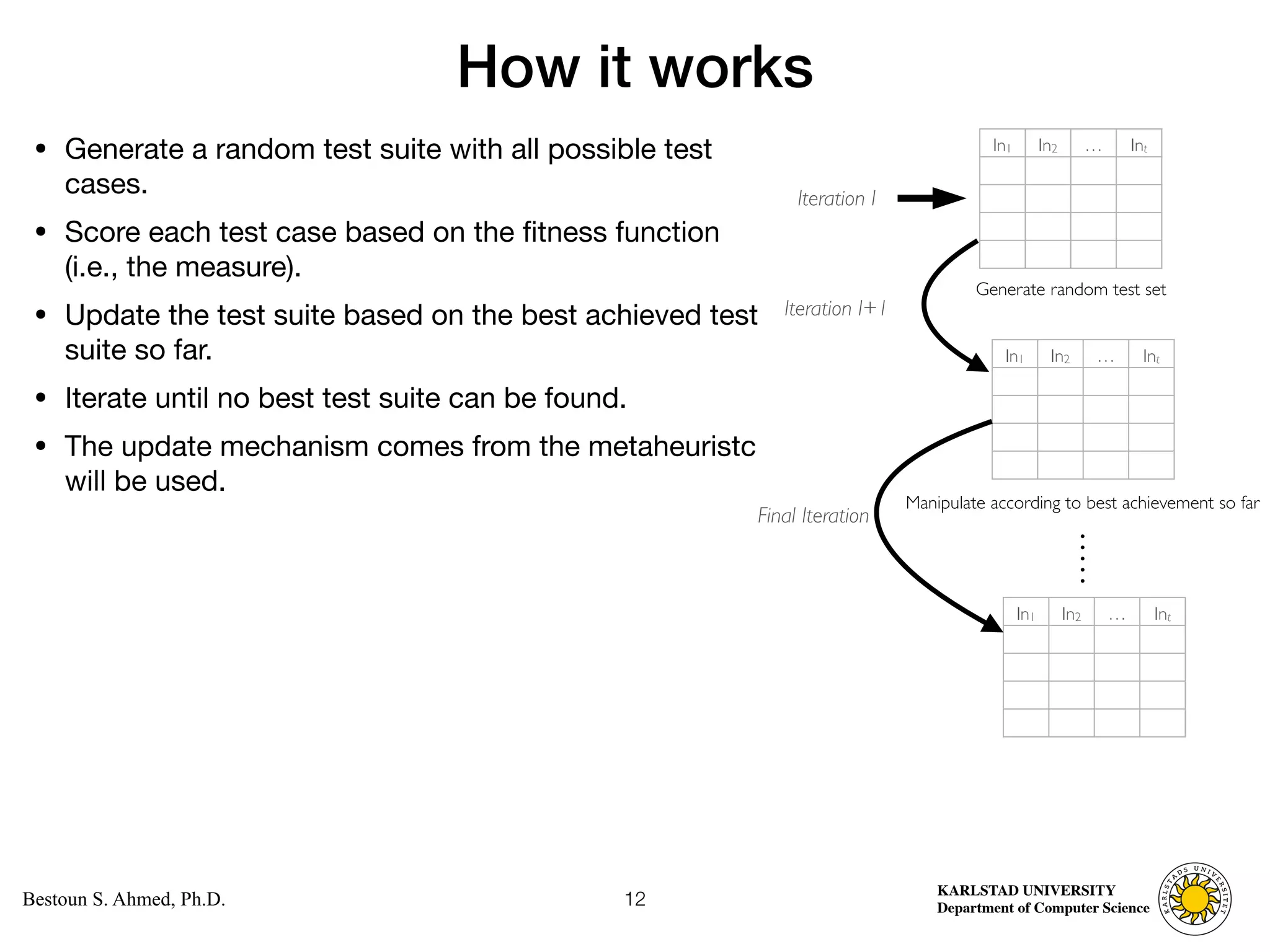

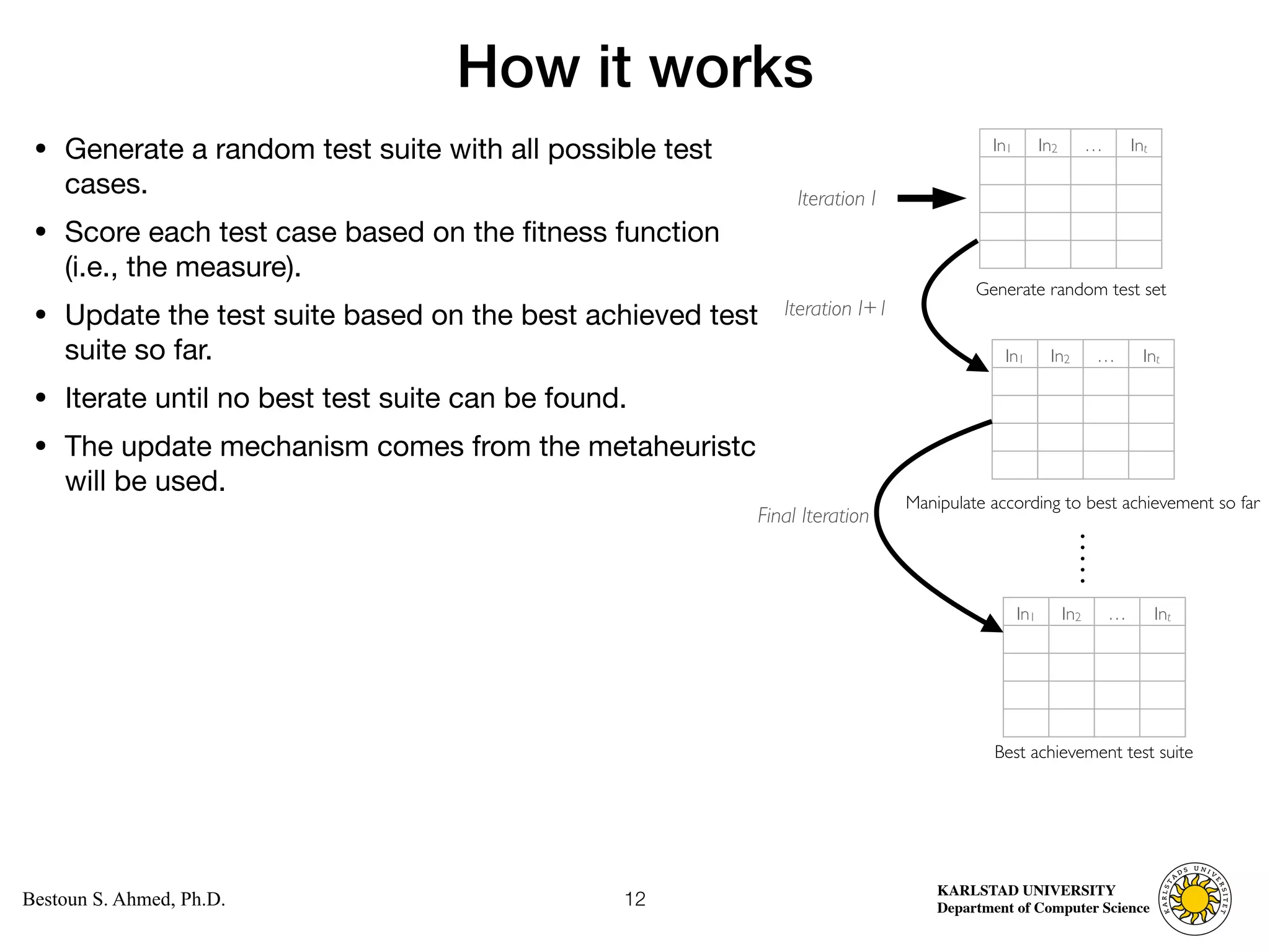

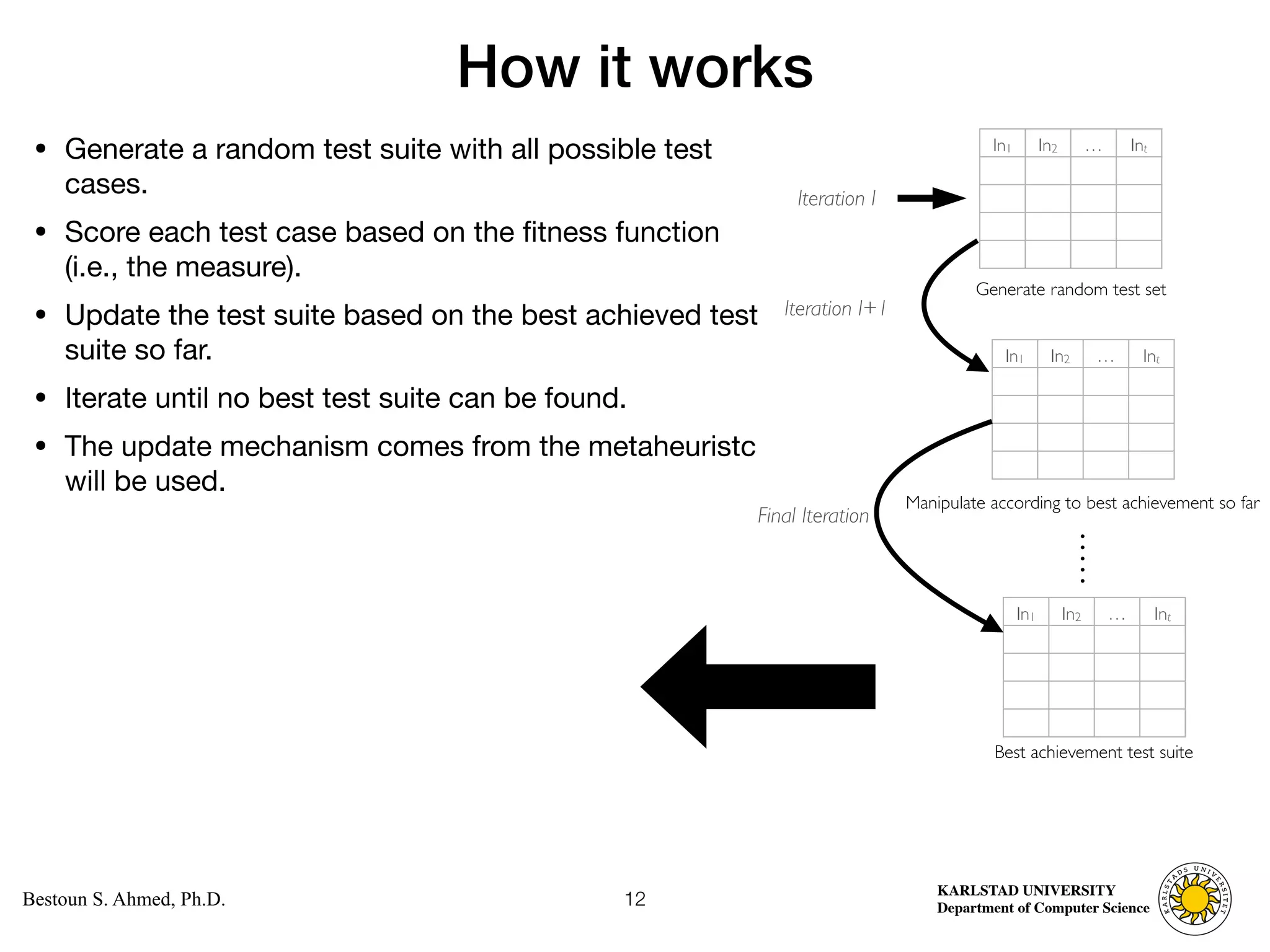

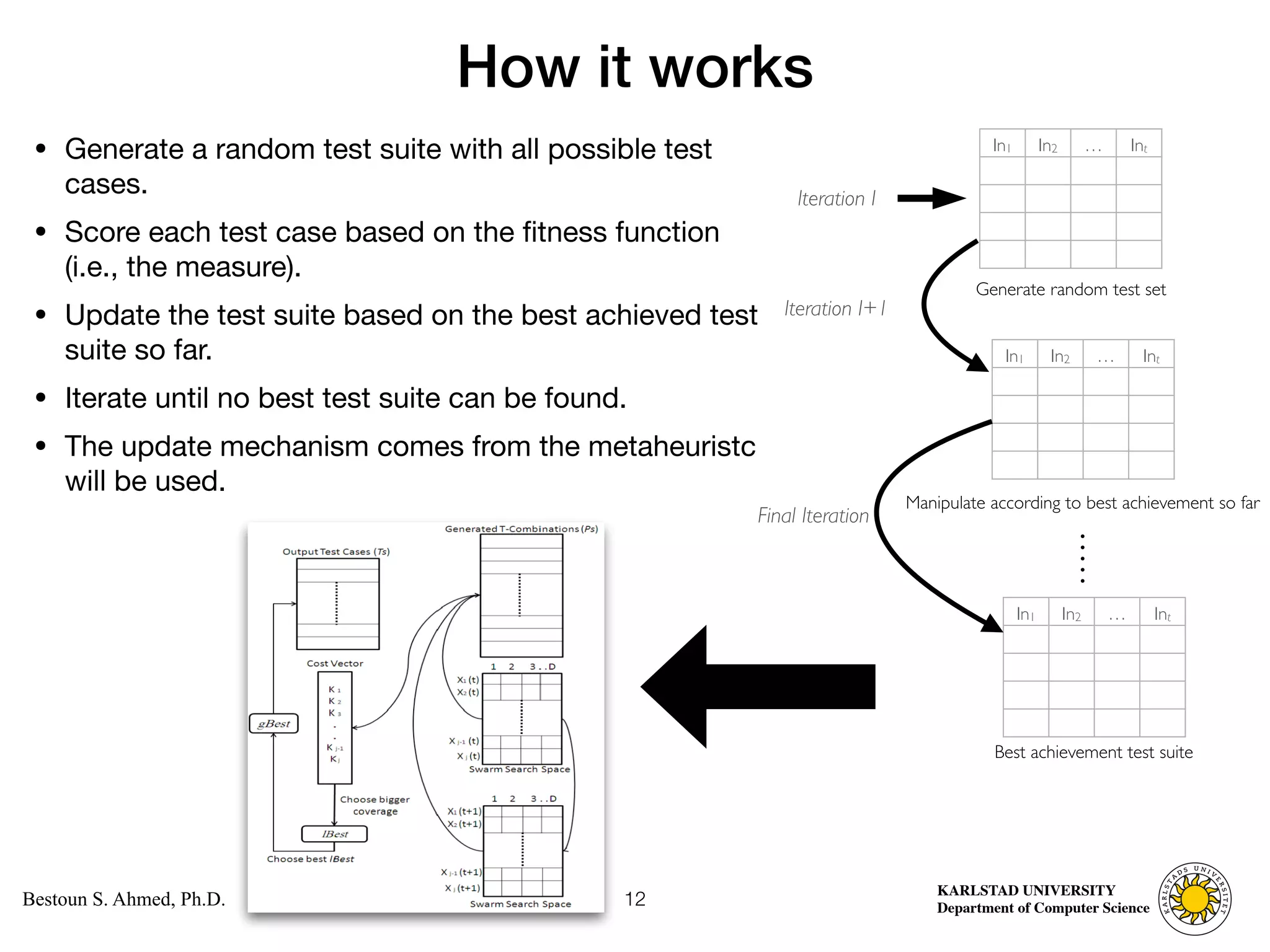

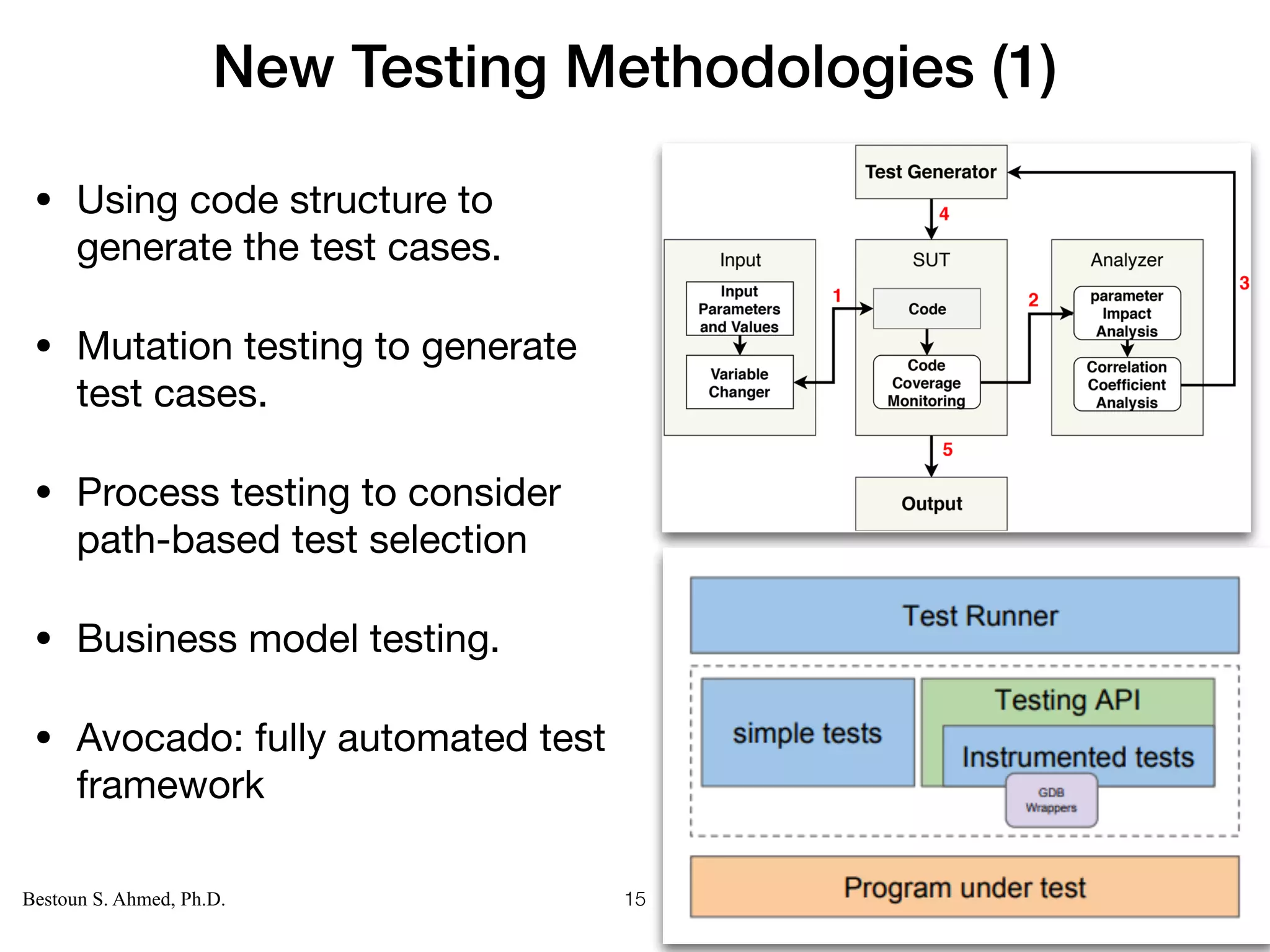

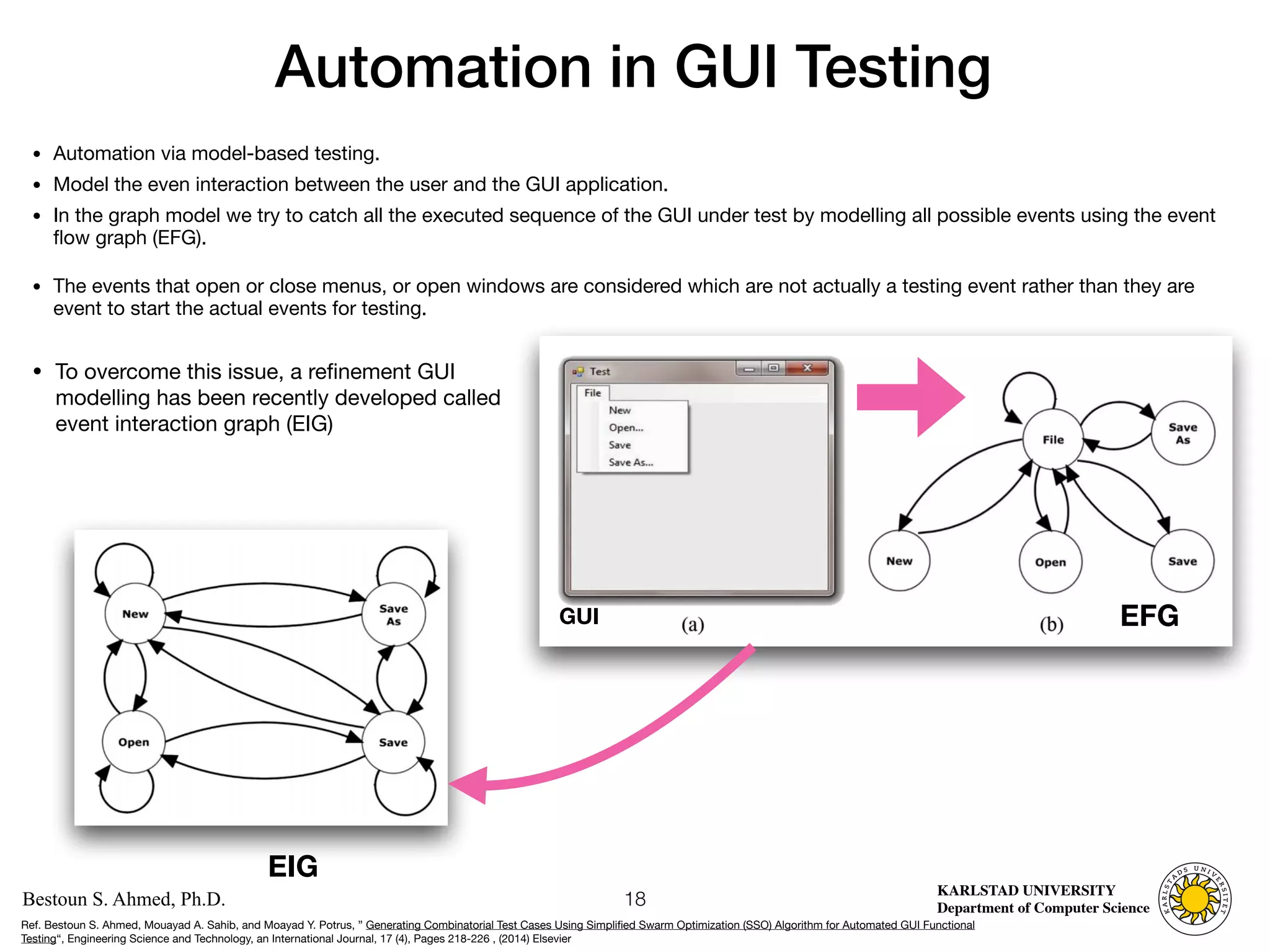

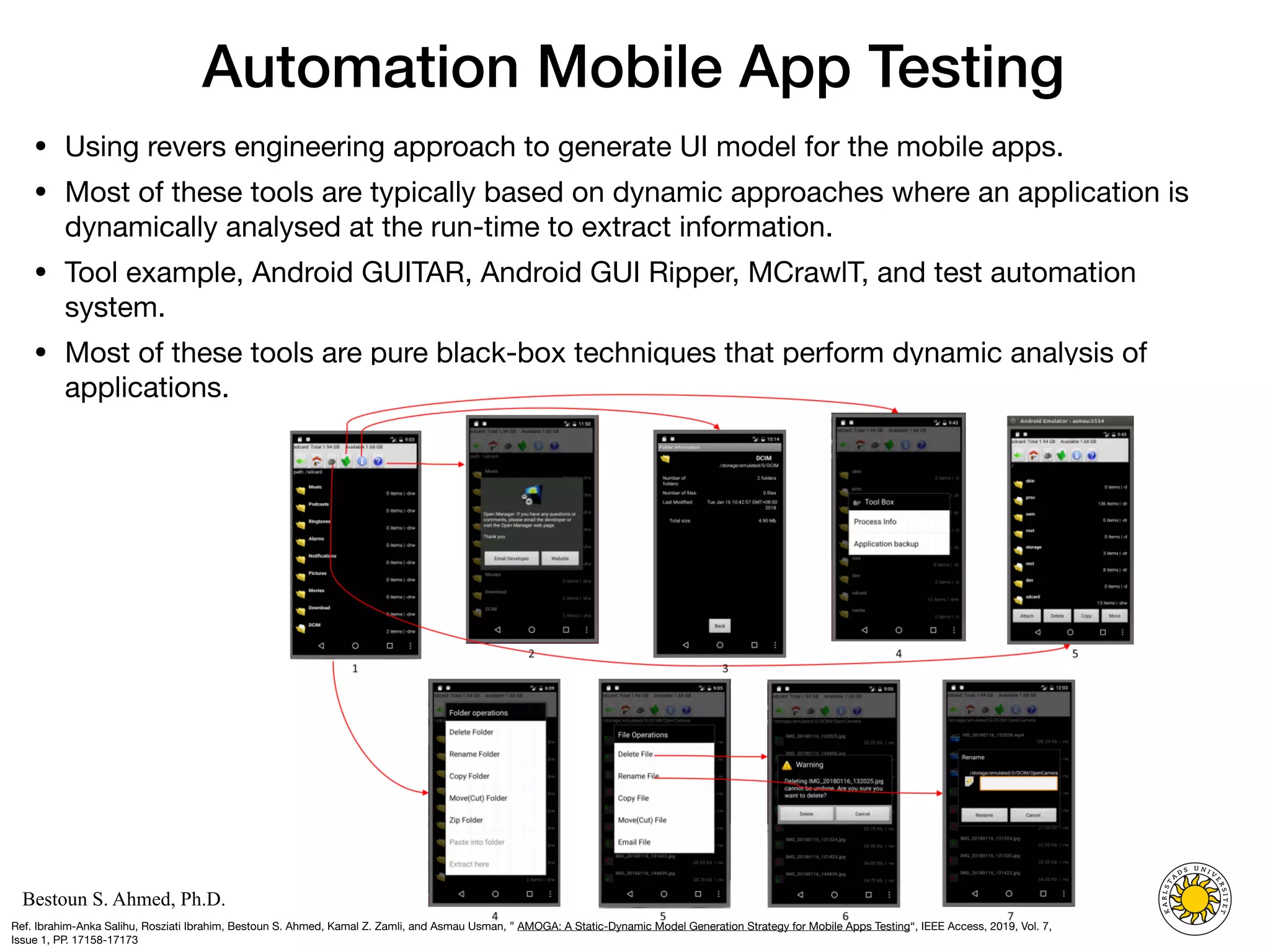

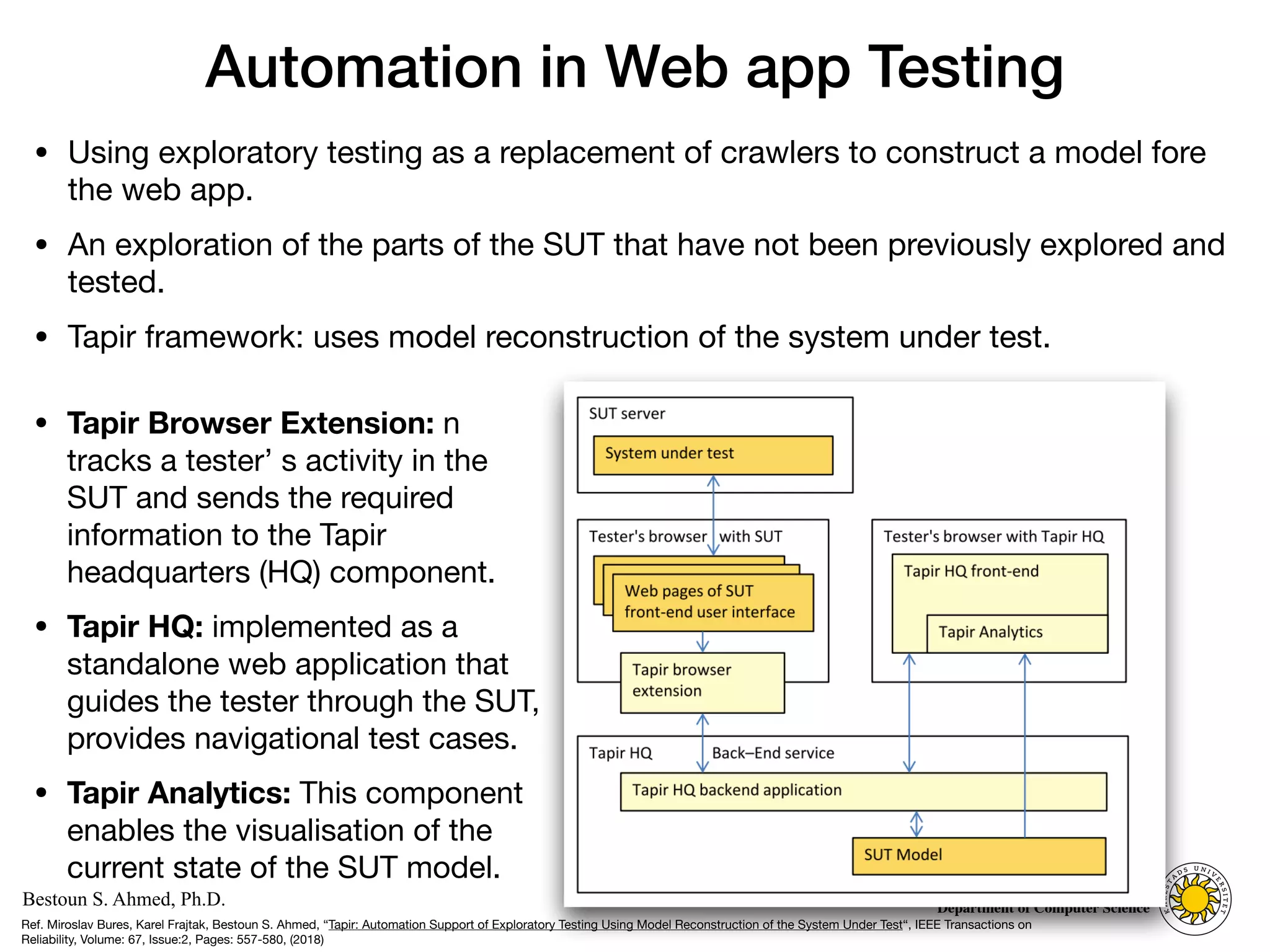

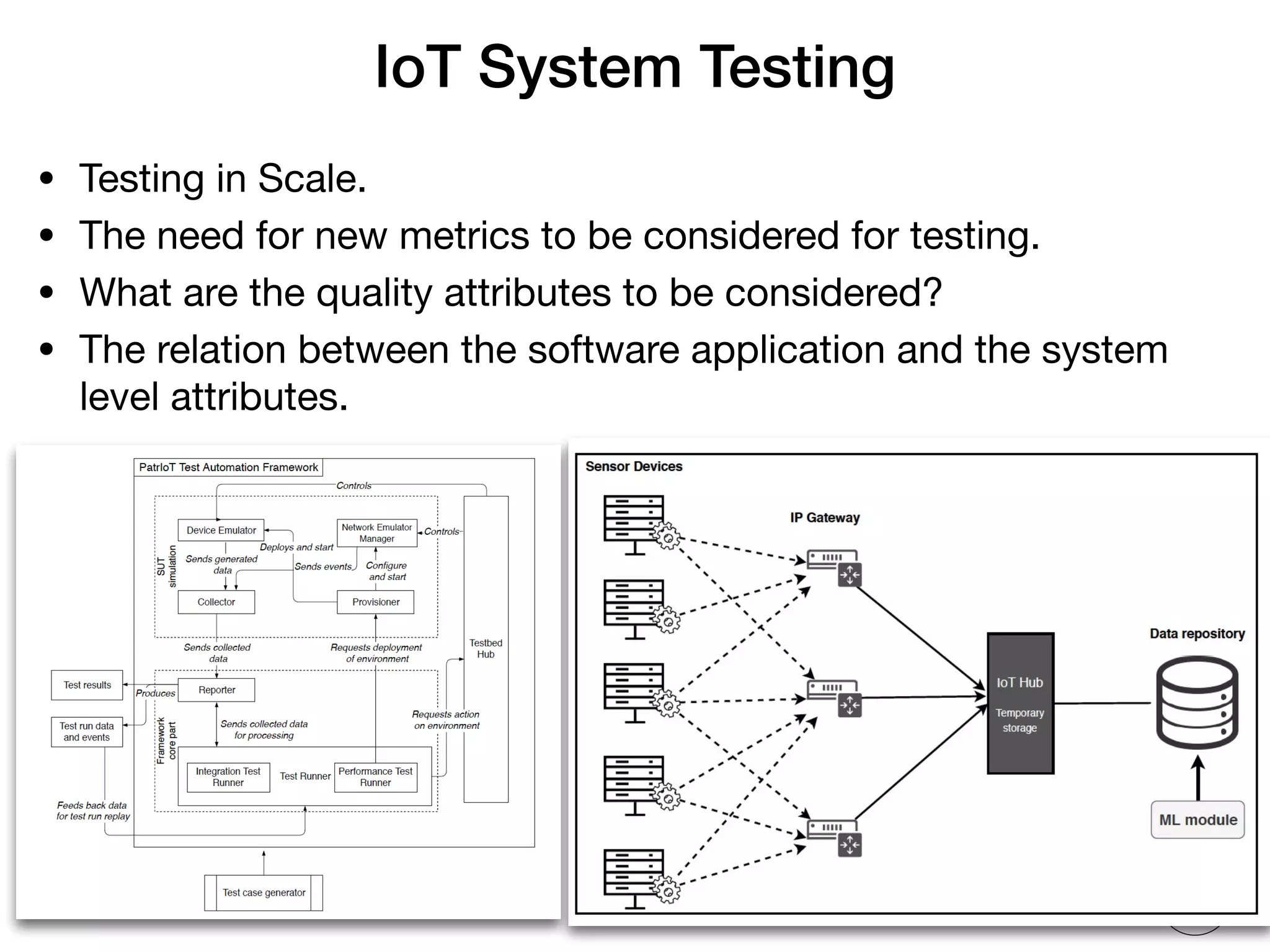

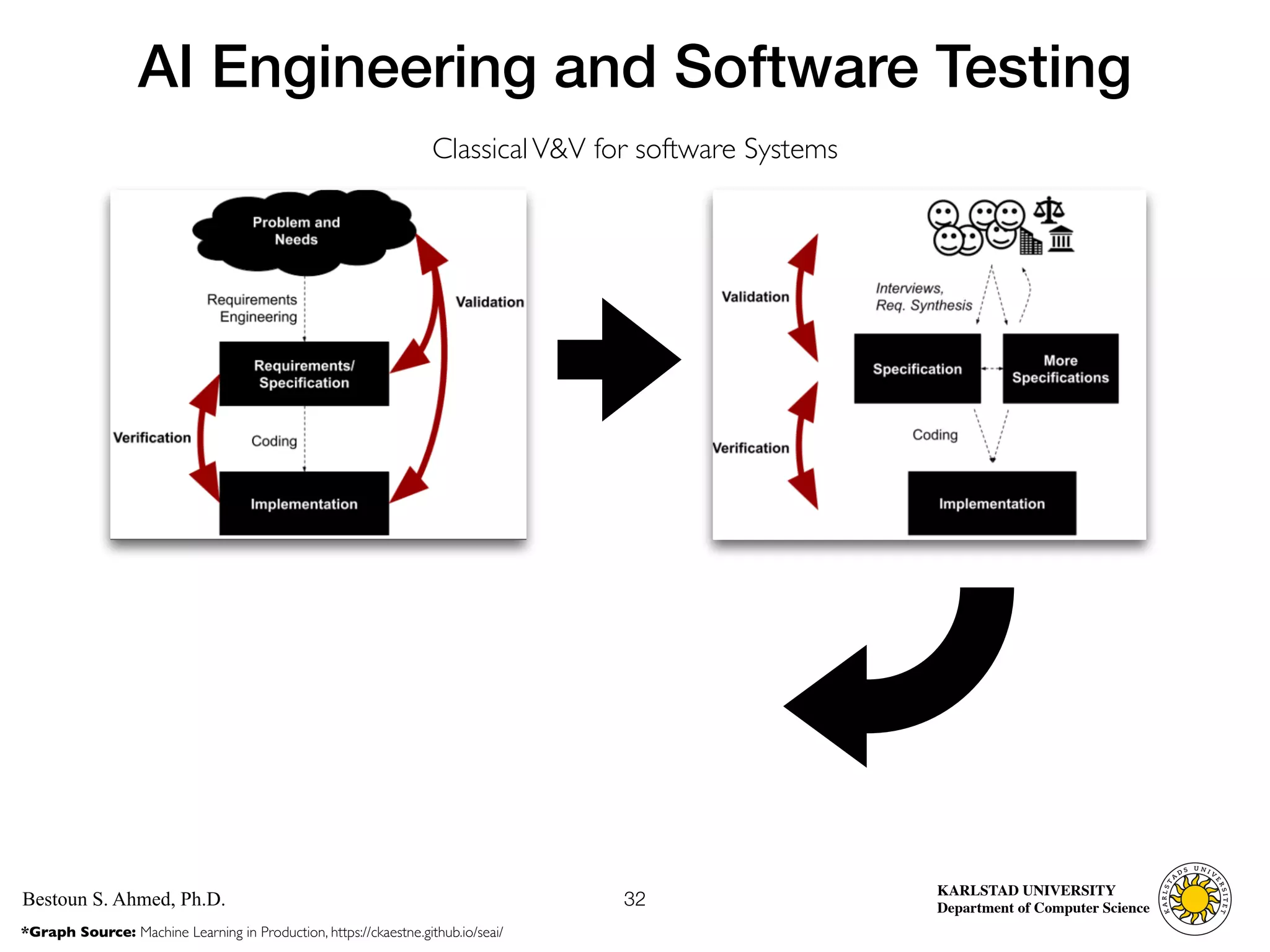

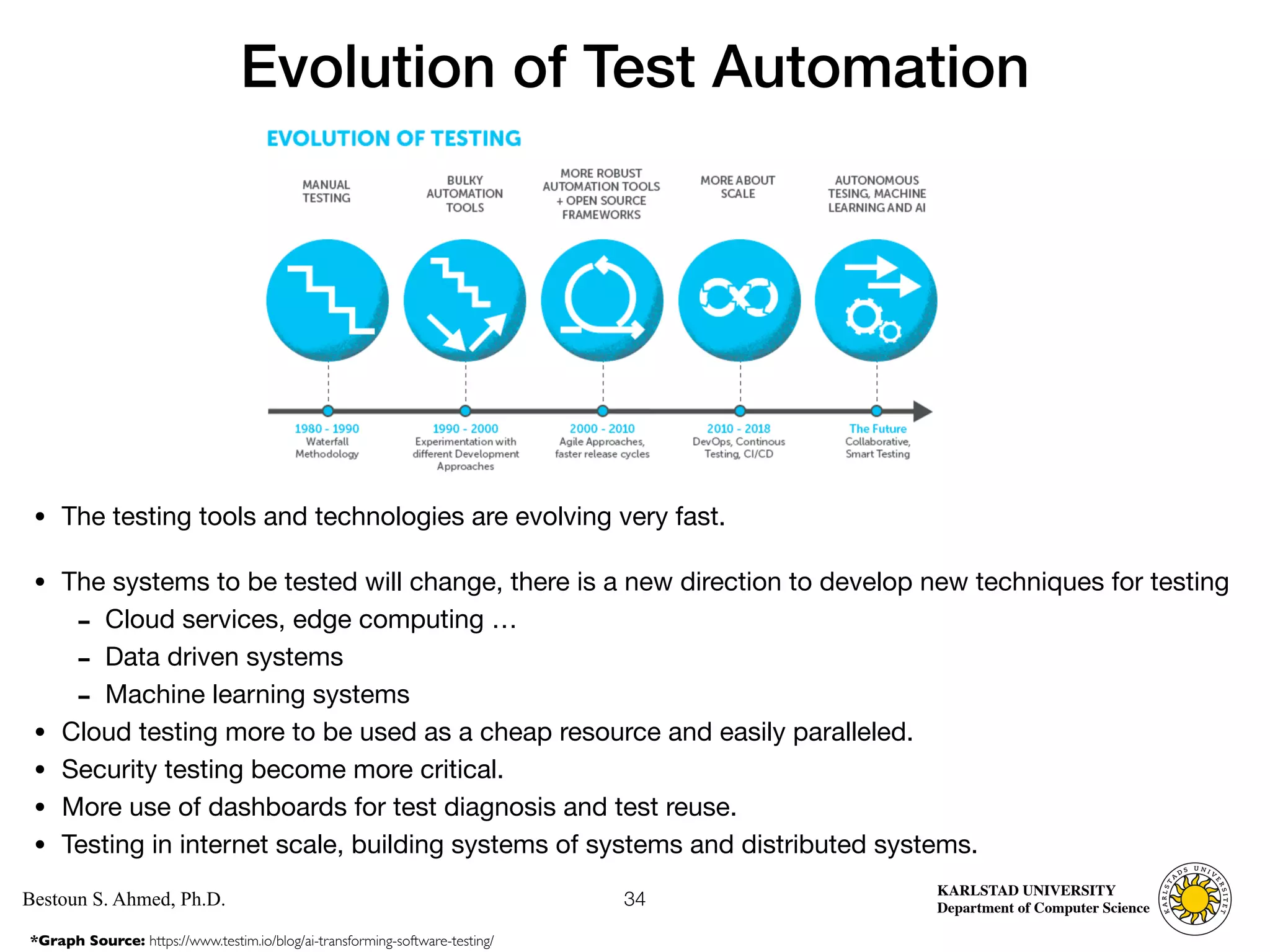

The document traces the evolution of software testing, from manual to automated testing and on to model-based testing and AI methods. It emphasizes optimizing test suites with objective functions that measure how well testing goals are met, and using heuristics to generate tests automatically. It also highlights newer testing methodologies that exploit code structure and mutation testing to improve the quality of software applications.
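The summary mentions objective functions, heuristics, and mutation testing without giving details. The sketch below shows one way these ideas can fit together. It is a minimal illustration under our own assumptions, not the method from the slides. It uses the mutation score (the fraction of mutants killed) as the objective function and a greedy heuristic to select tests that maximize it. The function `classify`, its three hand-written mutants, and the helpers `killed_by`, `mutation_score`, and `greedy_select` are all hypothetical.

```python
# Hypothetical function under test (illustrative only, not from the source).
def classify(x: int) -> str:
    if x < 0:
        return "negative"
    if x == 0:
        return "zero"
    return "positive"


# Hand-written mutants: each applies one small syntactic change to classify.
def mutant_lt_to_le(x: int) -> str:
    if x <= 0:  # "<" mutated to "<="
        return "negative"
    if x == 0:
        return "zero"
    return "positive"


def mutant_eq_to_ne(x: int) -> str:
    if x < 0:
        return "negative"
    if x != 0:  # "==" mutated to "!="
        return "zero"
    return "positive"


def mutant_drop_zero(x: int) -> str:
    if x < 0:
        return "negative"
    # the "zero" branch has been deleted
    return "positive"


MUTANTS = [mutant_lt_to_le, mutant_eq_to_ne, mutant_drop_zero]


def killed_by(test_input: int) -> set[int]:
    """Indices of mutants whose output differs from the original on this input."""
    expected = classify(test_input)
    return {i for i, m in enumerate(MUTANTS) if m(test_input) != expected}


def mutation_score(suite: list[int]) -> float:
    """Objective function: fraction of mutants killed by at least one test."""
    killed: set[int] = set()
    for t in suite:
        killed |= killed_by(t)
    return len(killed) / len(MUTANTS)


def greedy_select(candidates: list[int]) -> list[int]:
    """Greedy heuristic: repeatedly add the test that kills the most
    not-yet-killed mutants; stop when no remaining test adds a kill."""
    selected: list[int] = []
    killed: set[int] = set()
    remaining = list(candidates)
    while remaining:
        best = max(remaining, key=lambda t: len(killed_by(t) - killed))
        gain = killed_by(best) - killed
        if not gain:
            break
        selected.append(best)
        killed |= gain
        remaining.remove(best)
    return selected


if __name__ == "__main__":
    candidates = [-1, 5, 0]
    suite = greedy_select(candidates)
    print(suite, mutation_score(suite))
```

For the candidate inputs `[-1, 5, 0]`, the greedy step picks `0` first because that input alone kills all three mutants. It then stops, since neither remaining input kills anything new. Note that the suite `[-1, 5]` exercises two of the three branches yet reaches a mutation score of only 1/3, which is why a mutation-based objective can be stricter than branch coverage alone. Real mutation tools generate mutants automatically, and greedy selection is only one of several possible heuristics.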