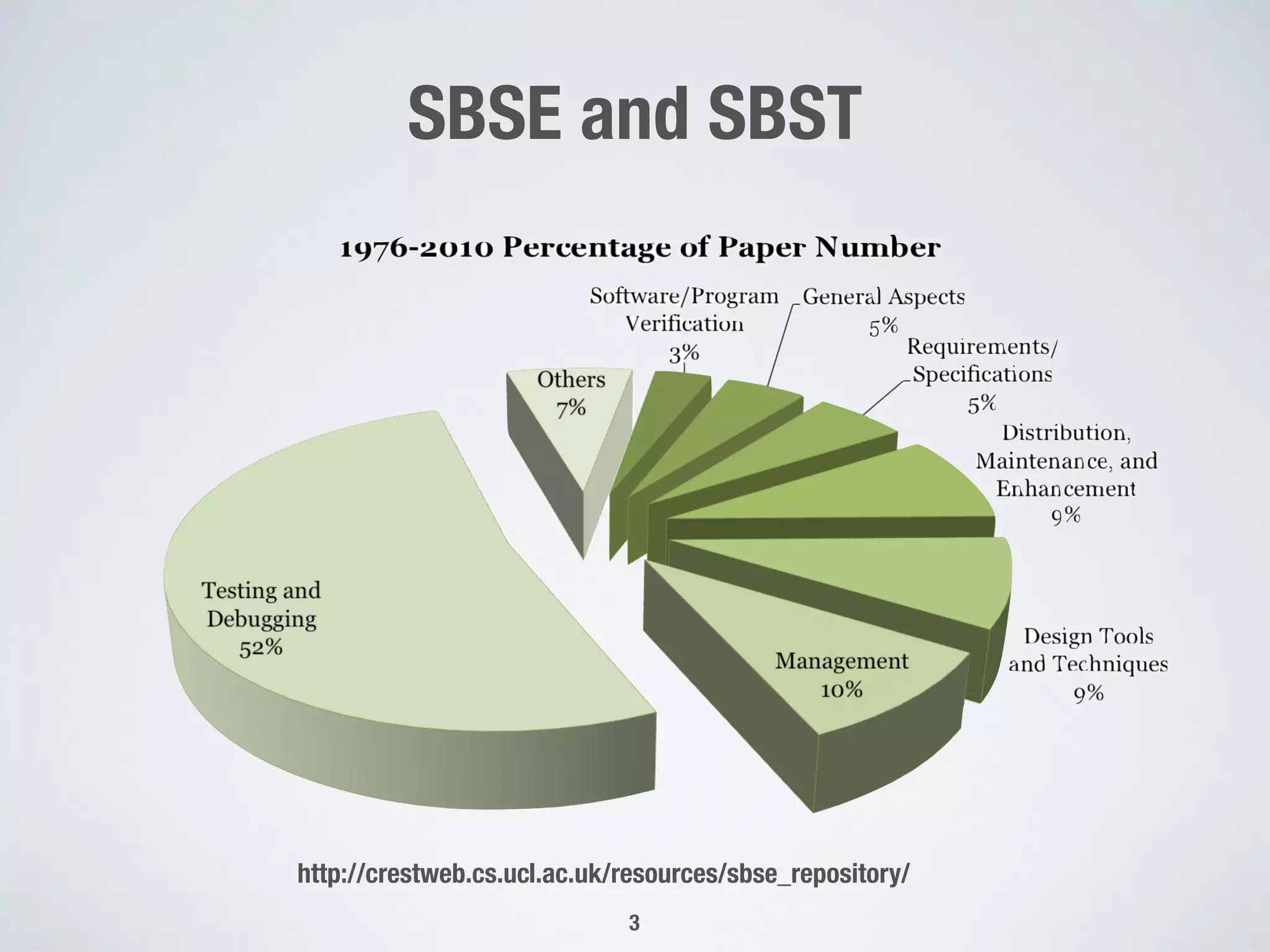

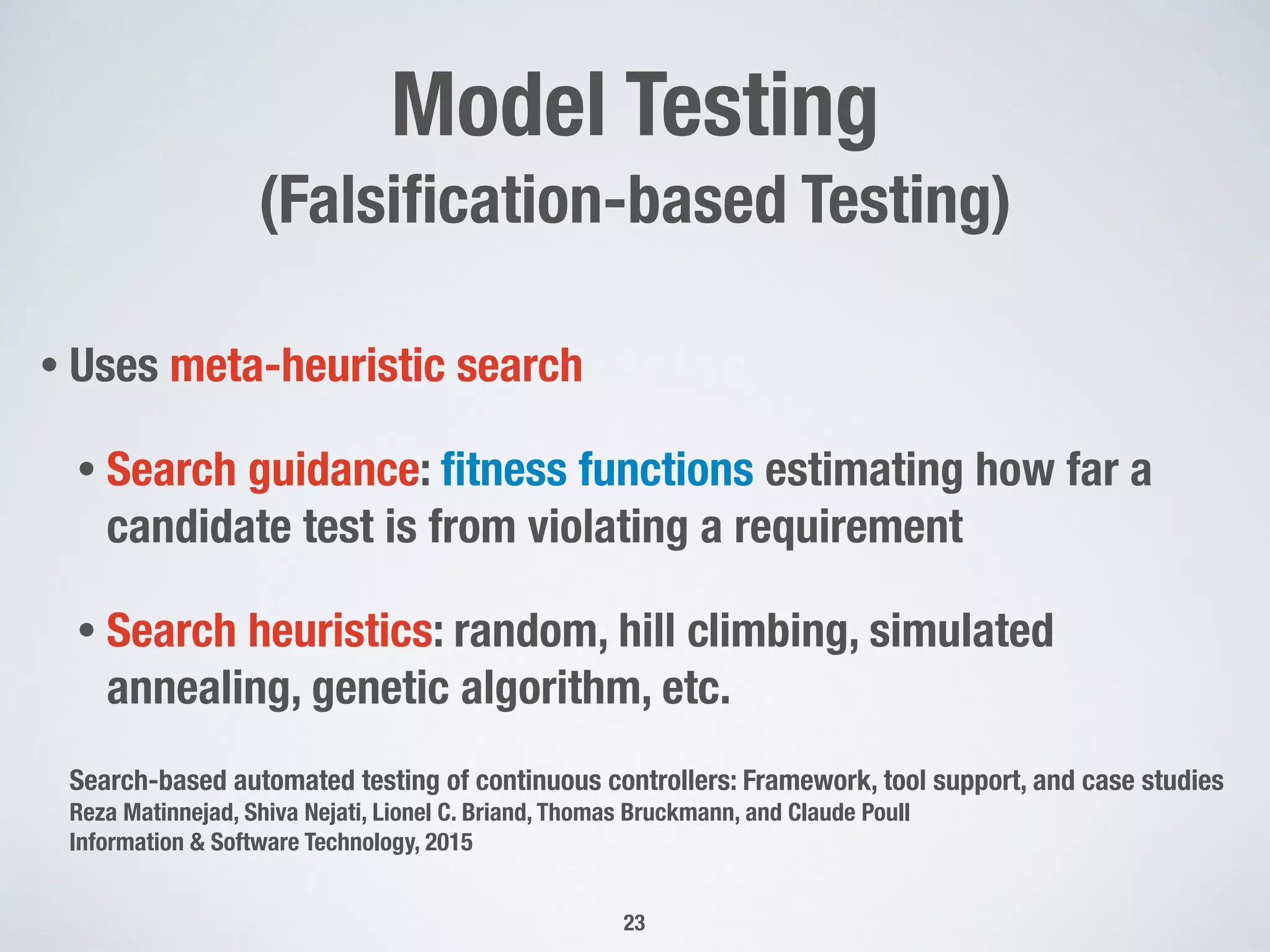

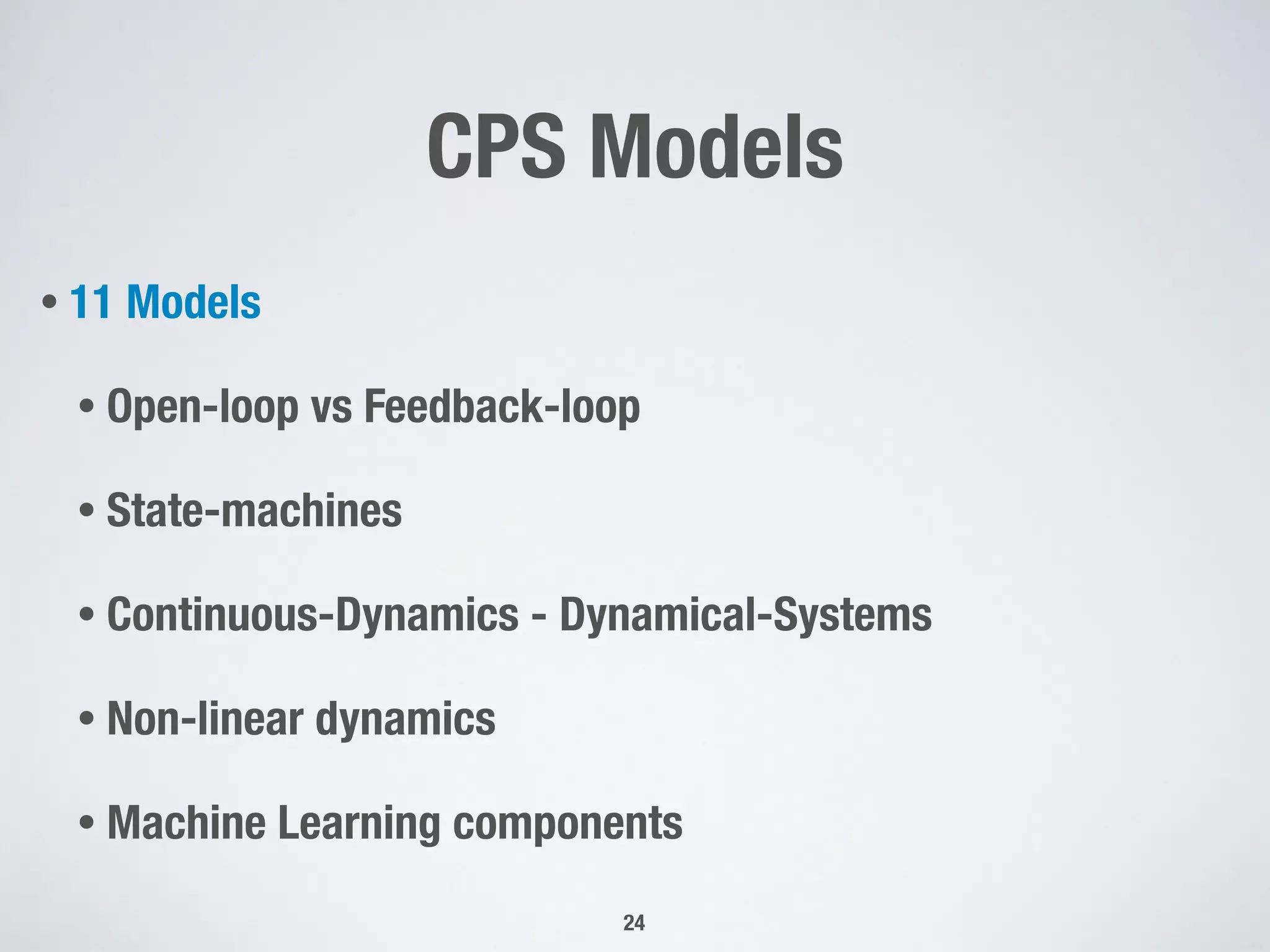

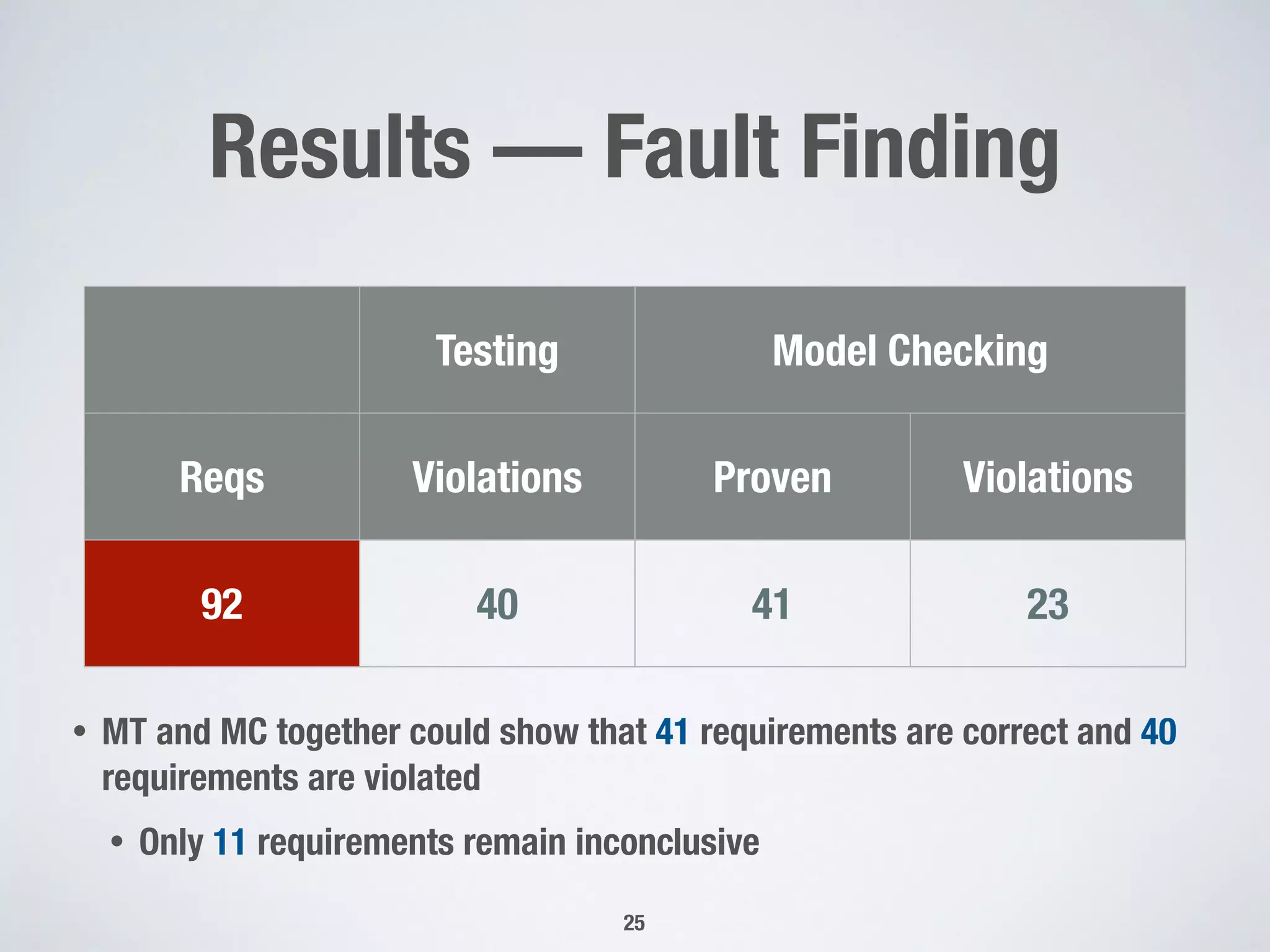

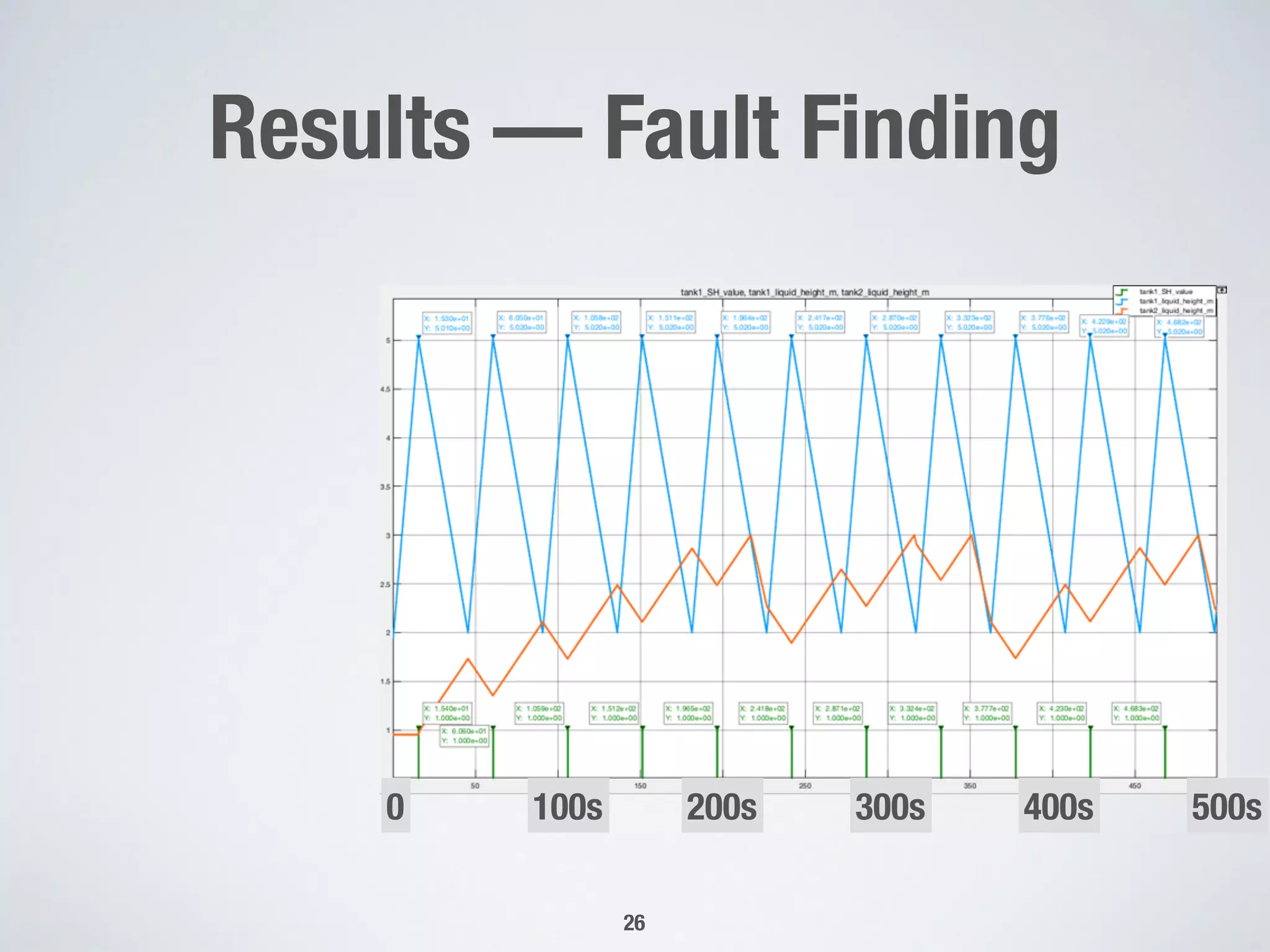

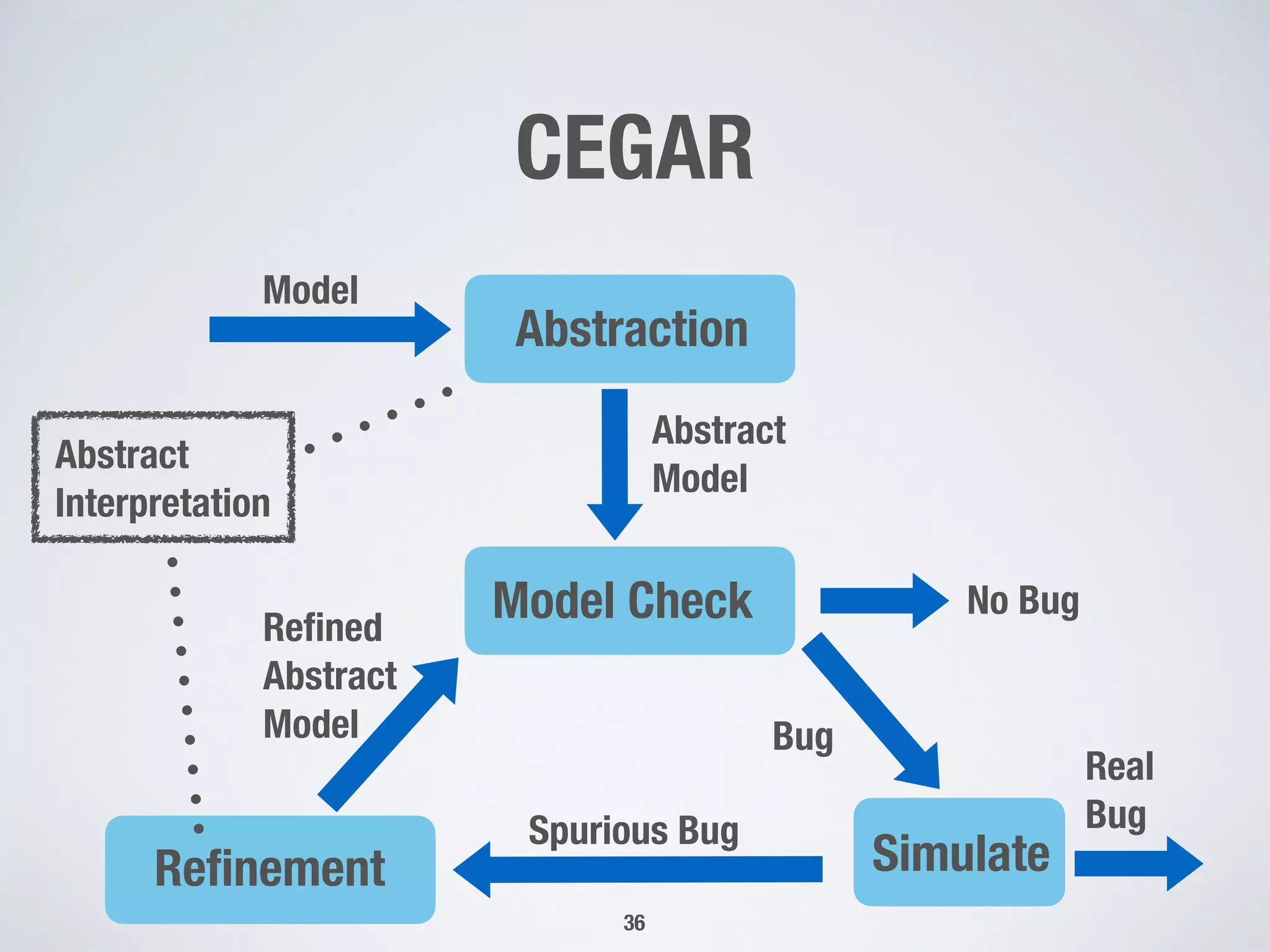

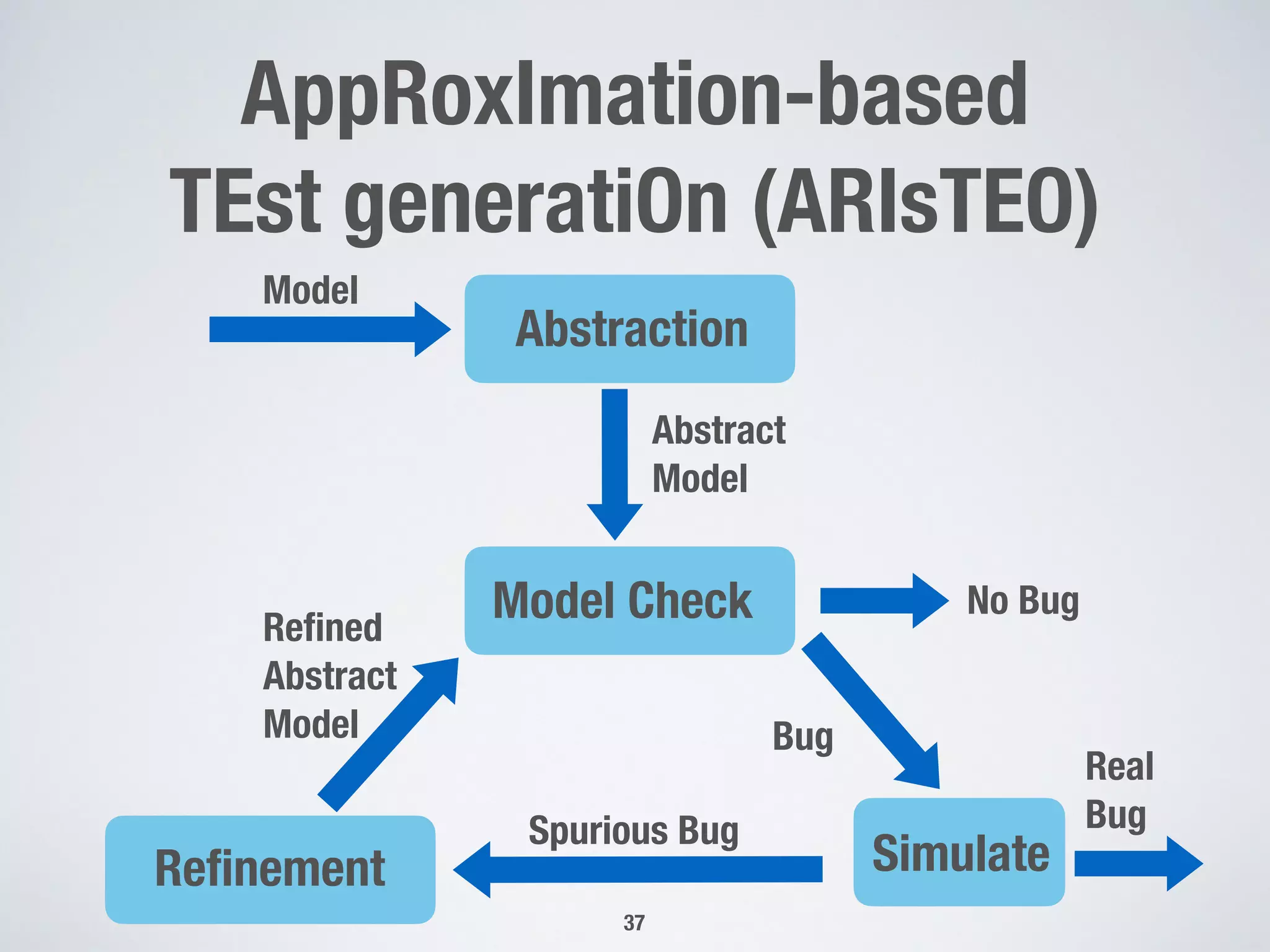

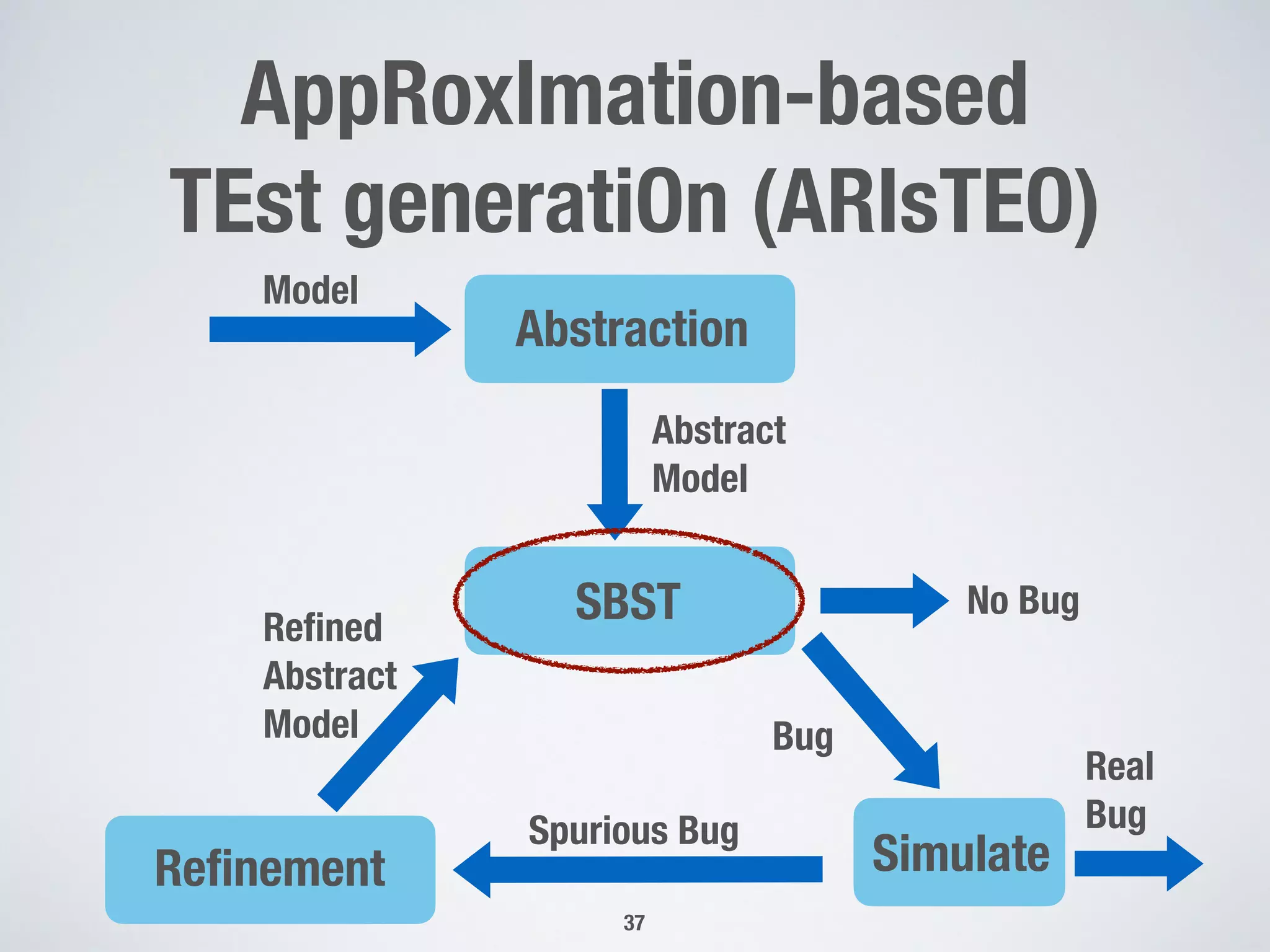

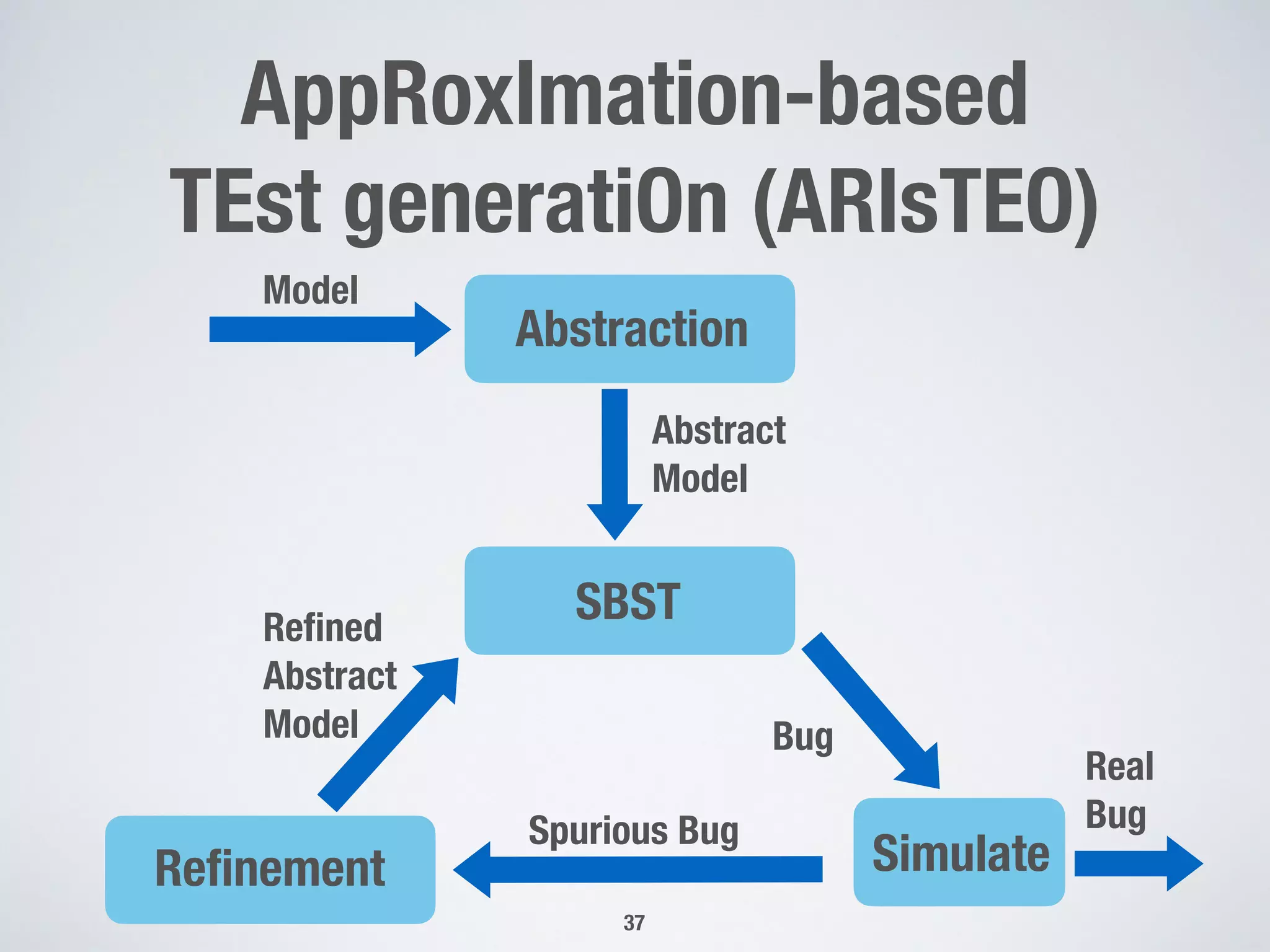

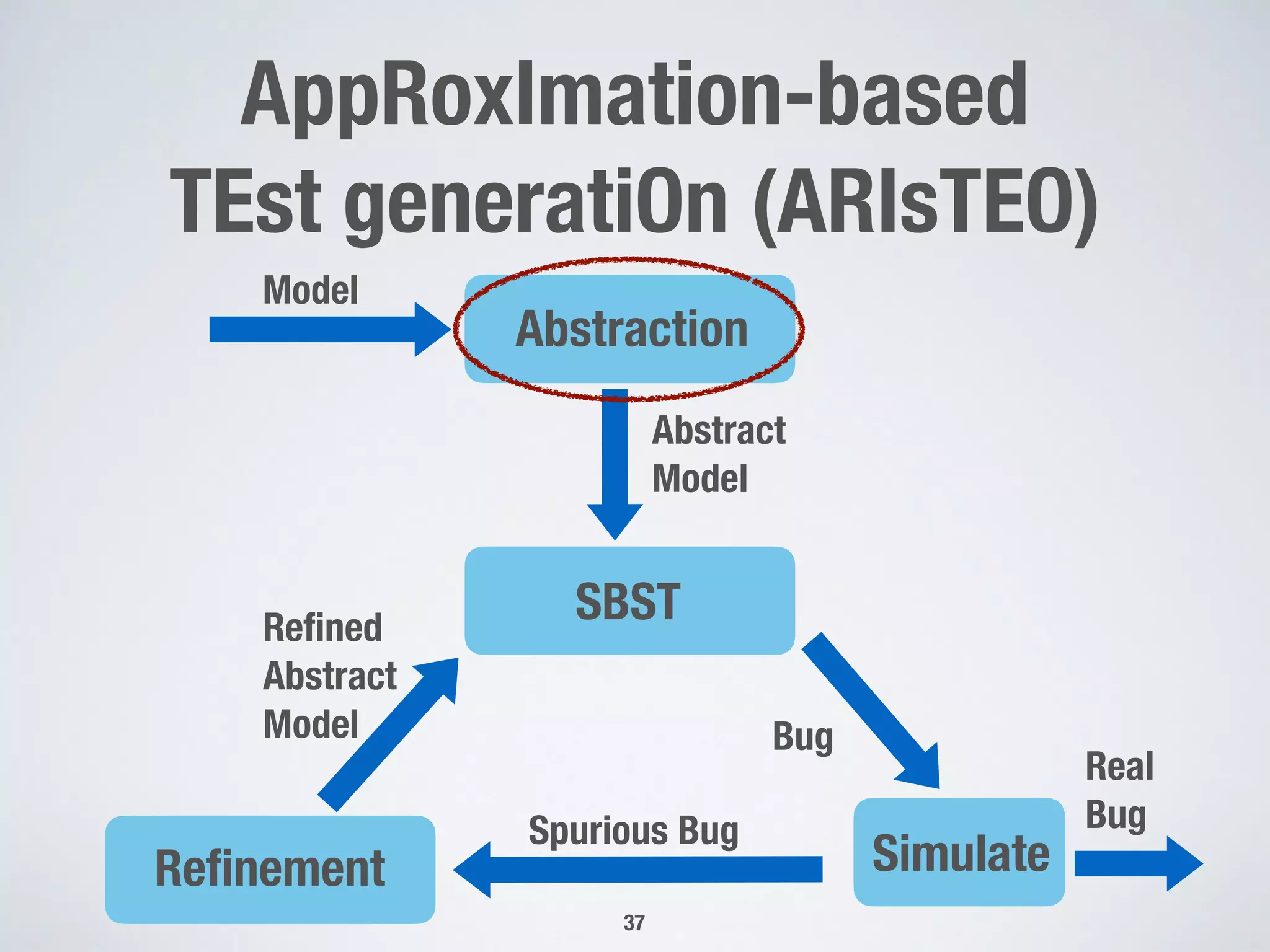

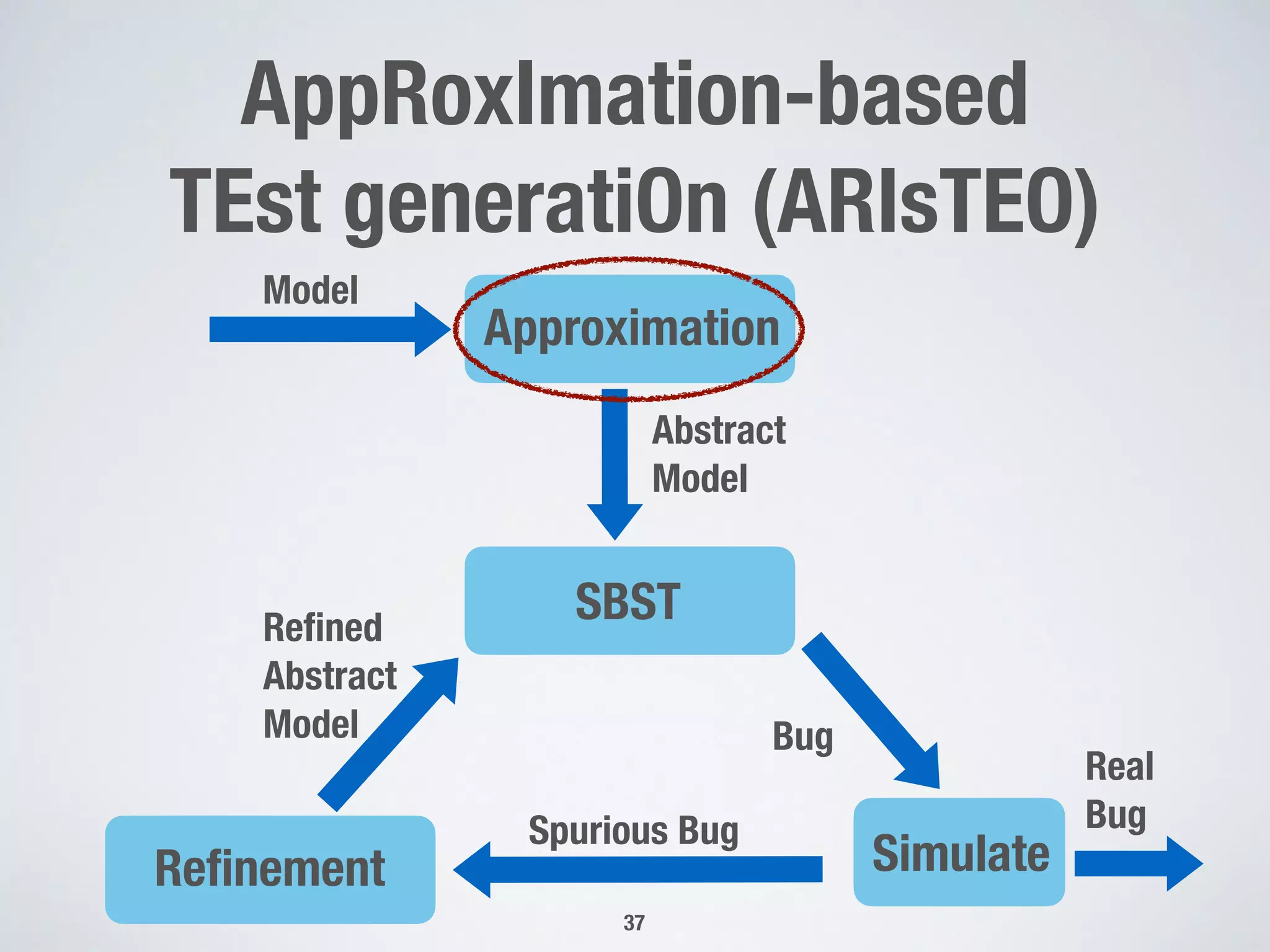

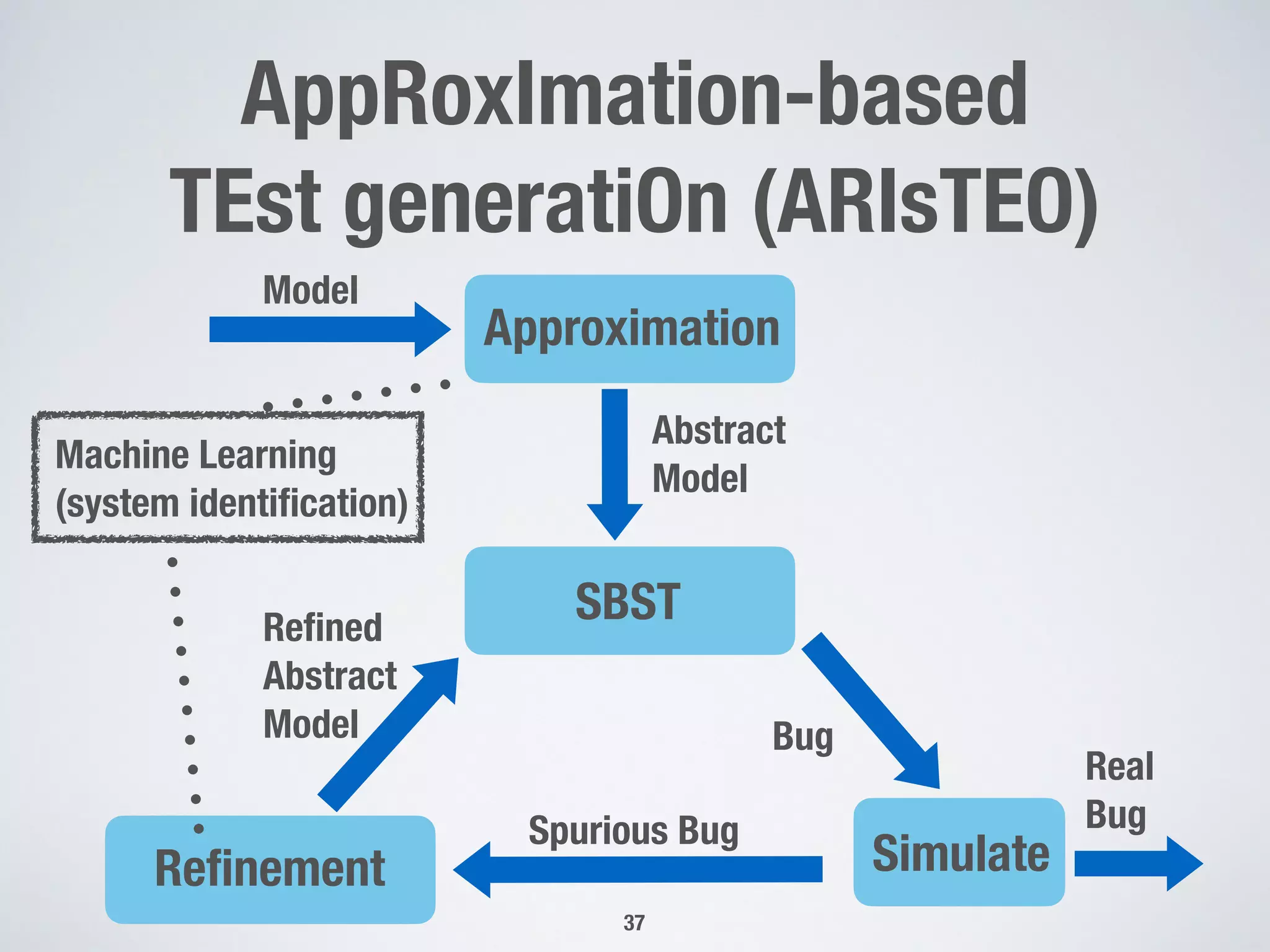

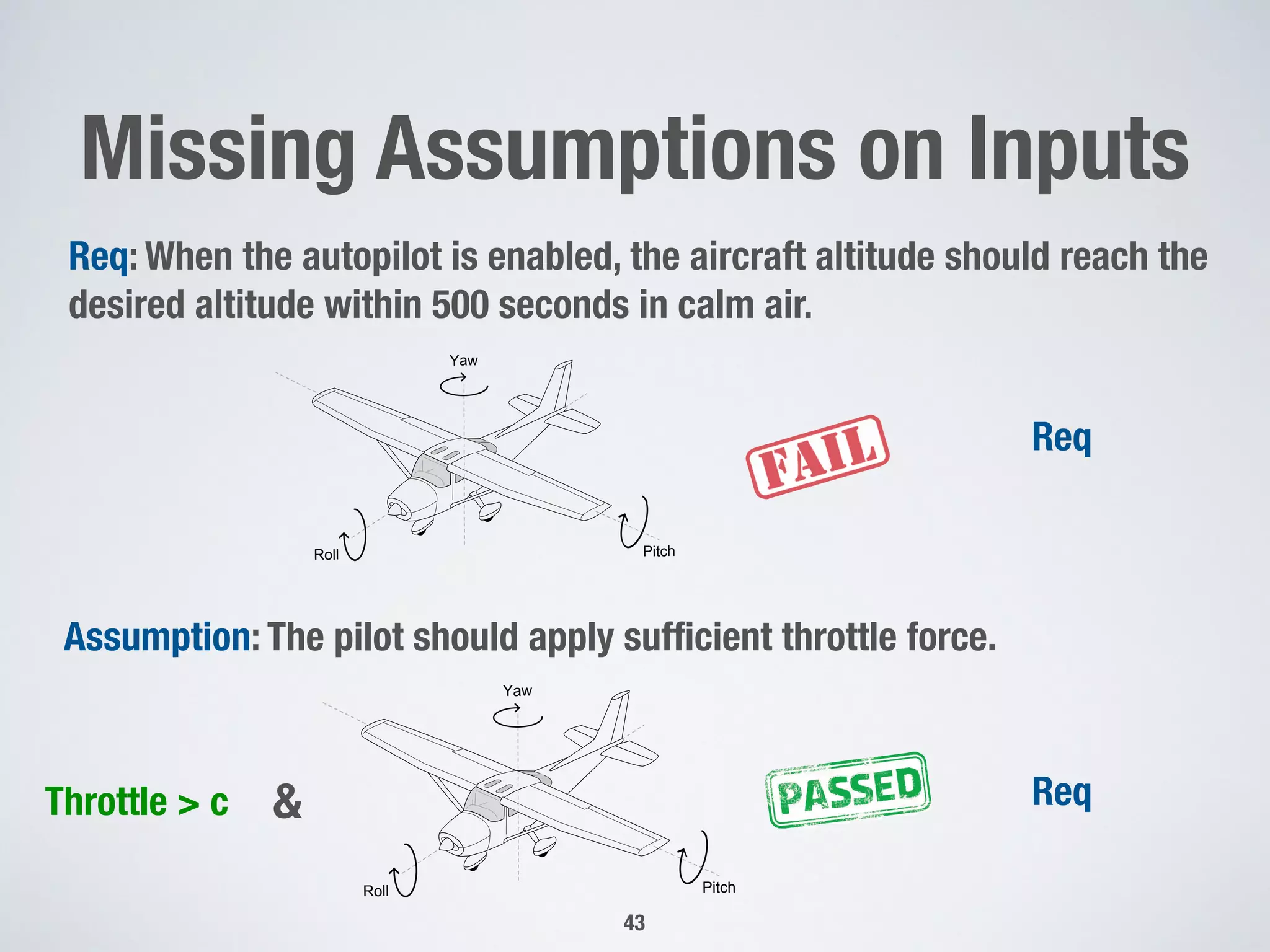

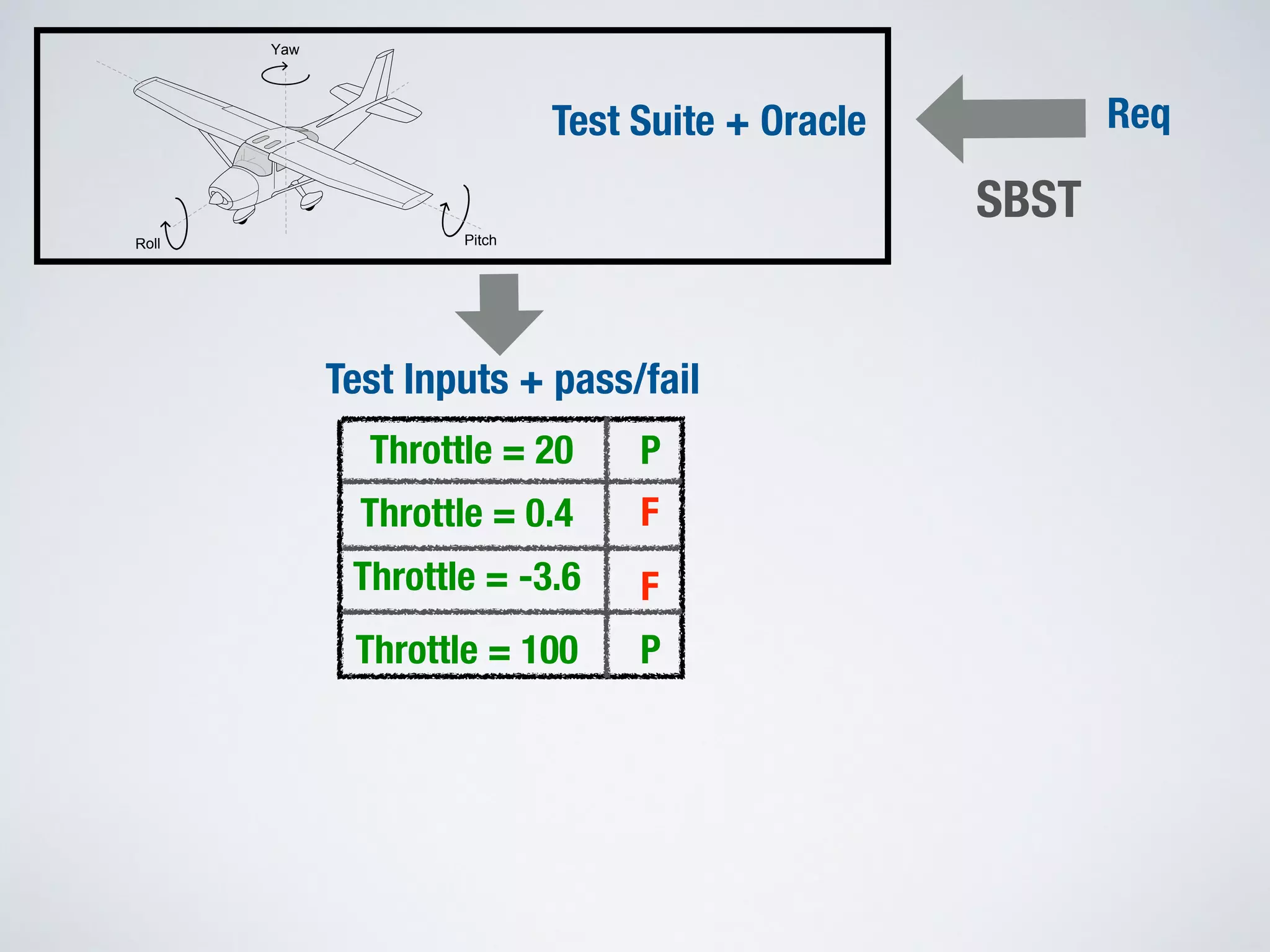

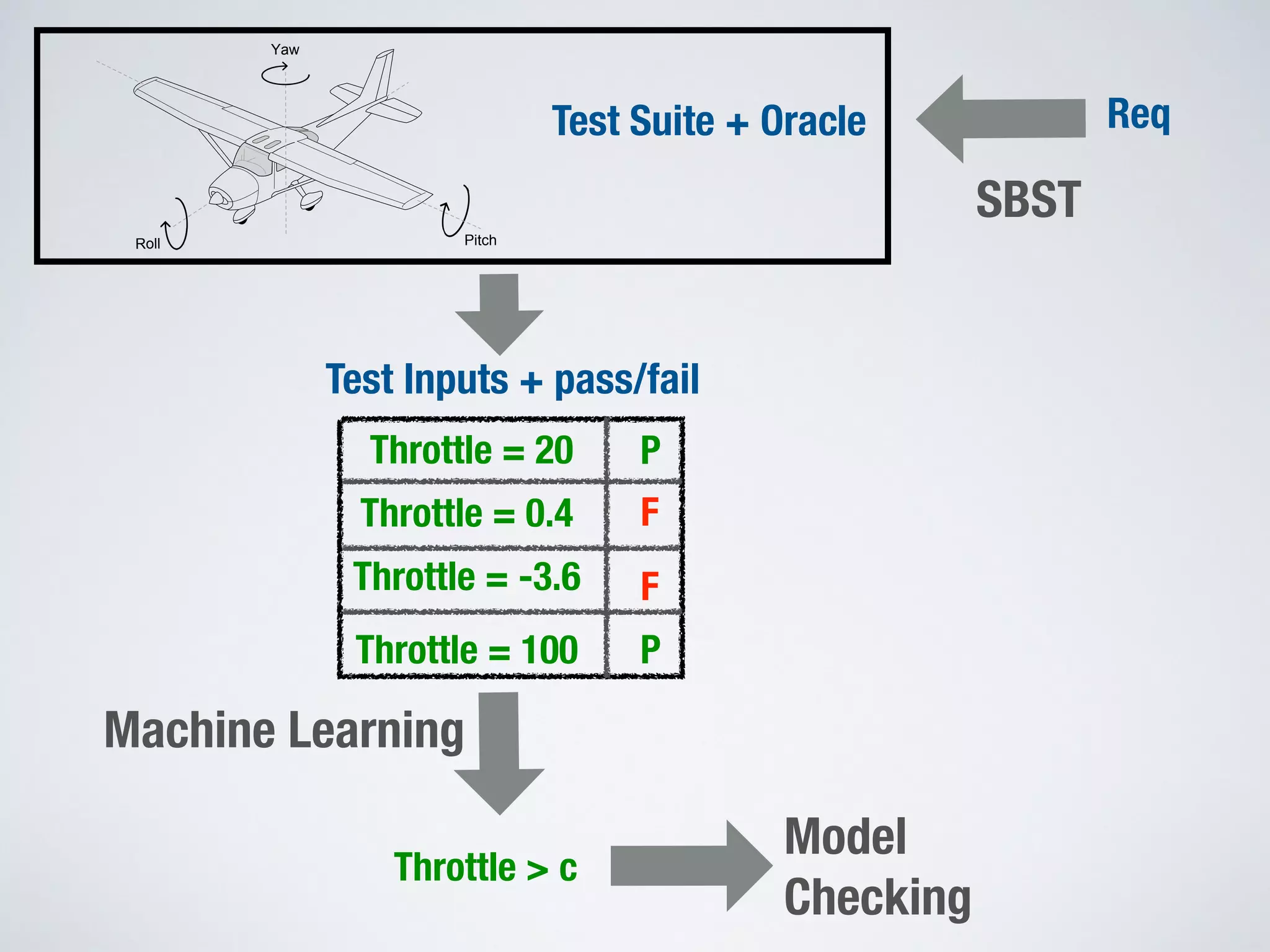

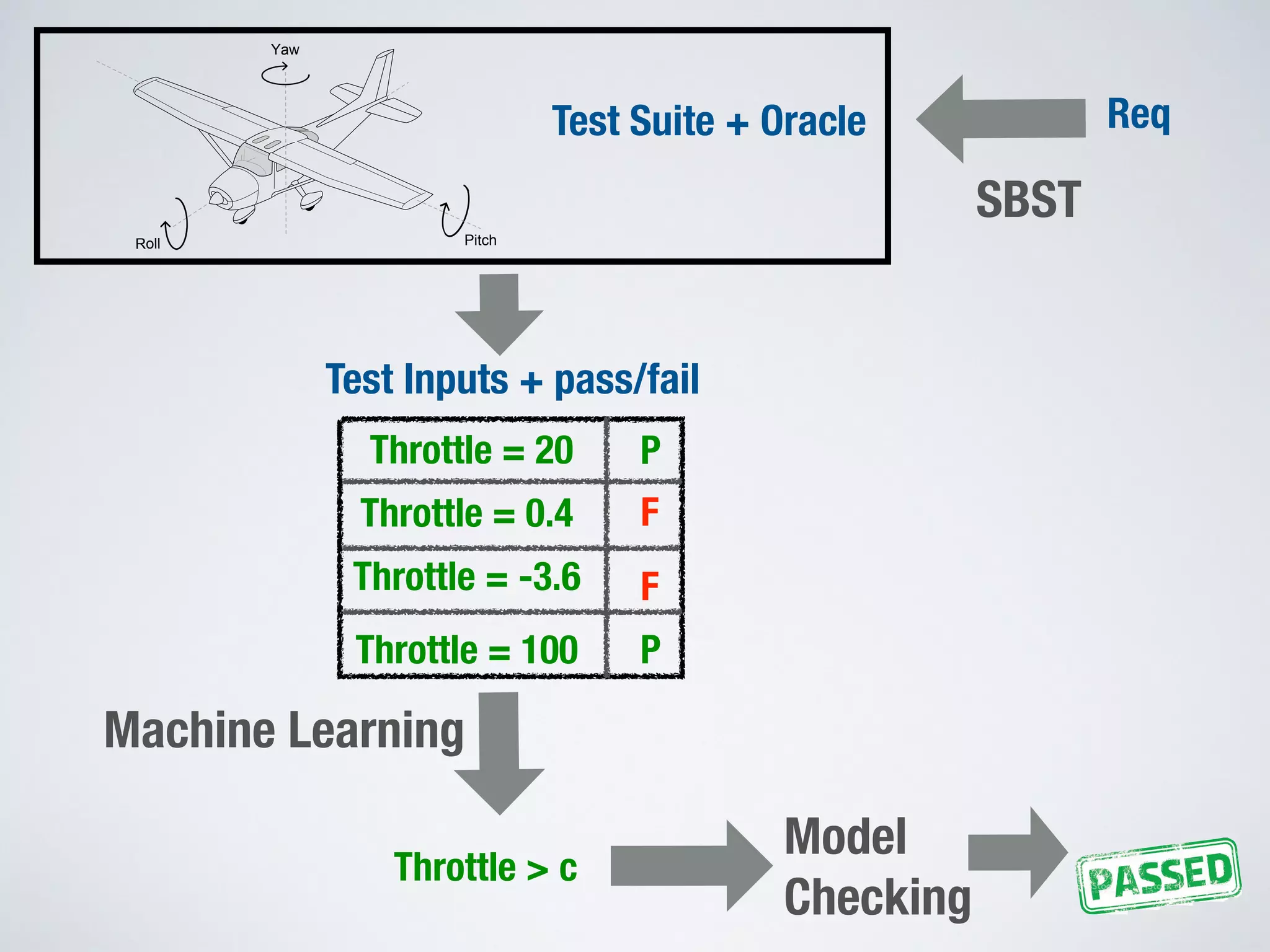

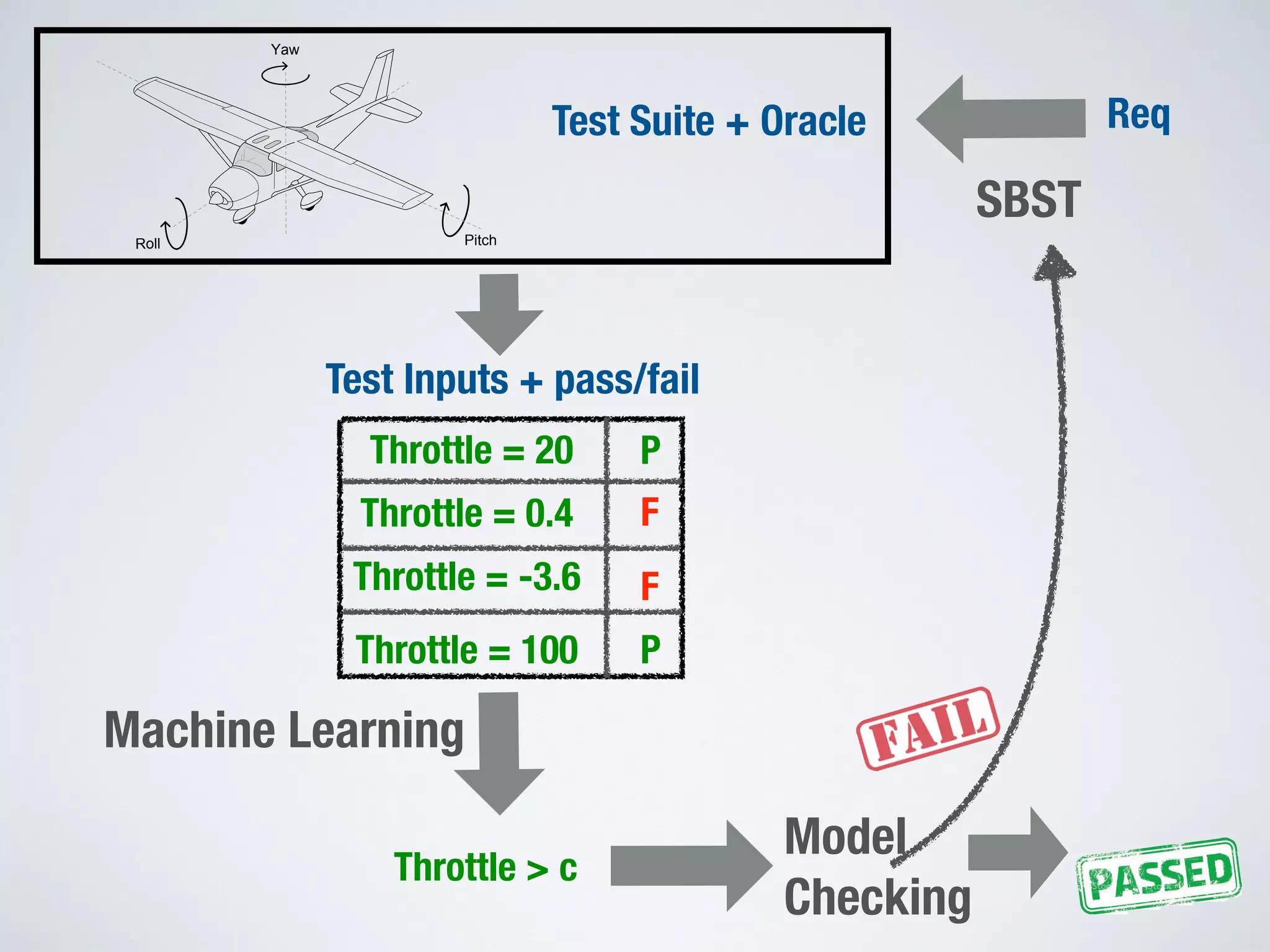

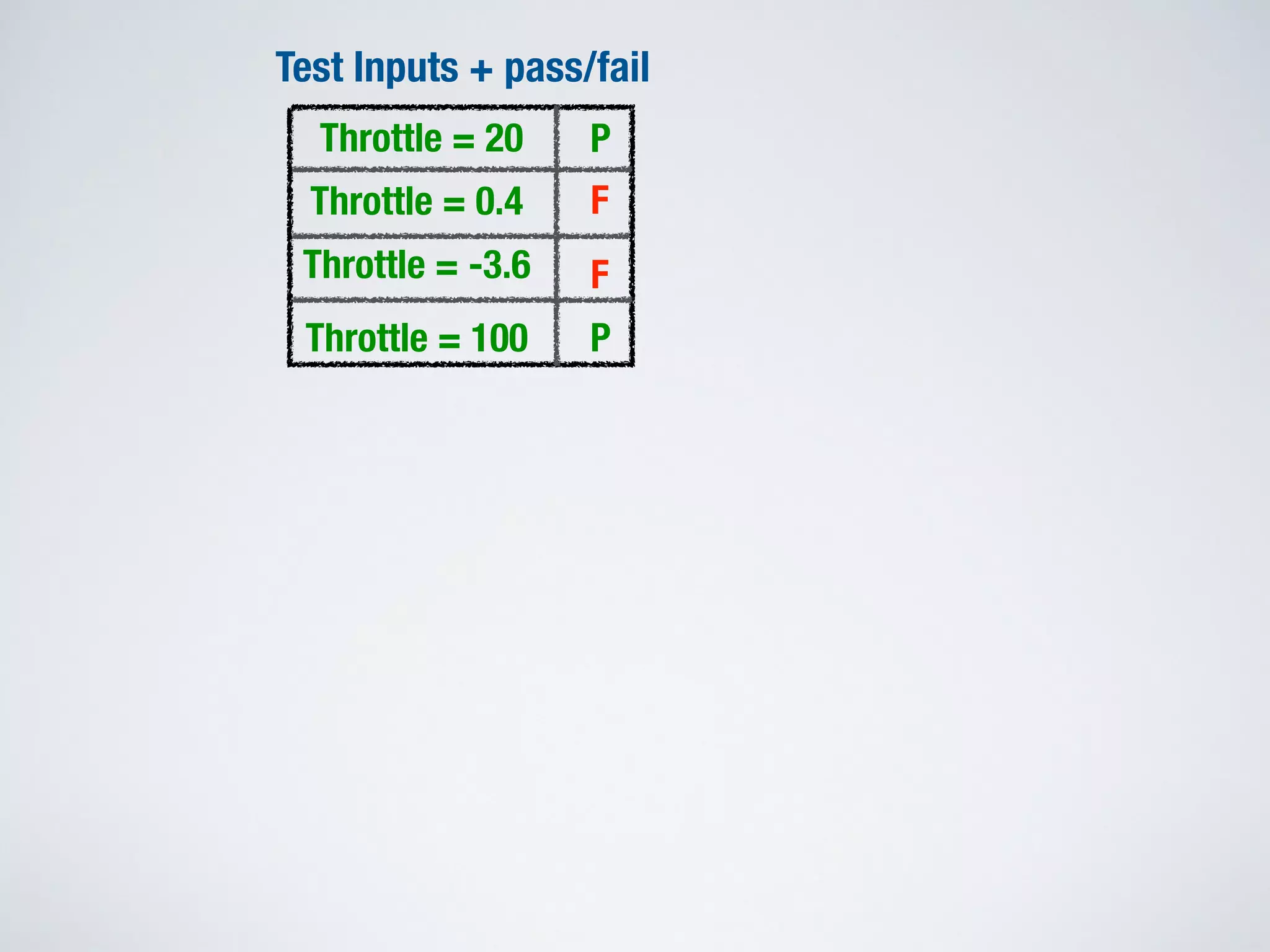

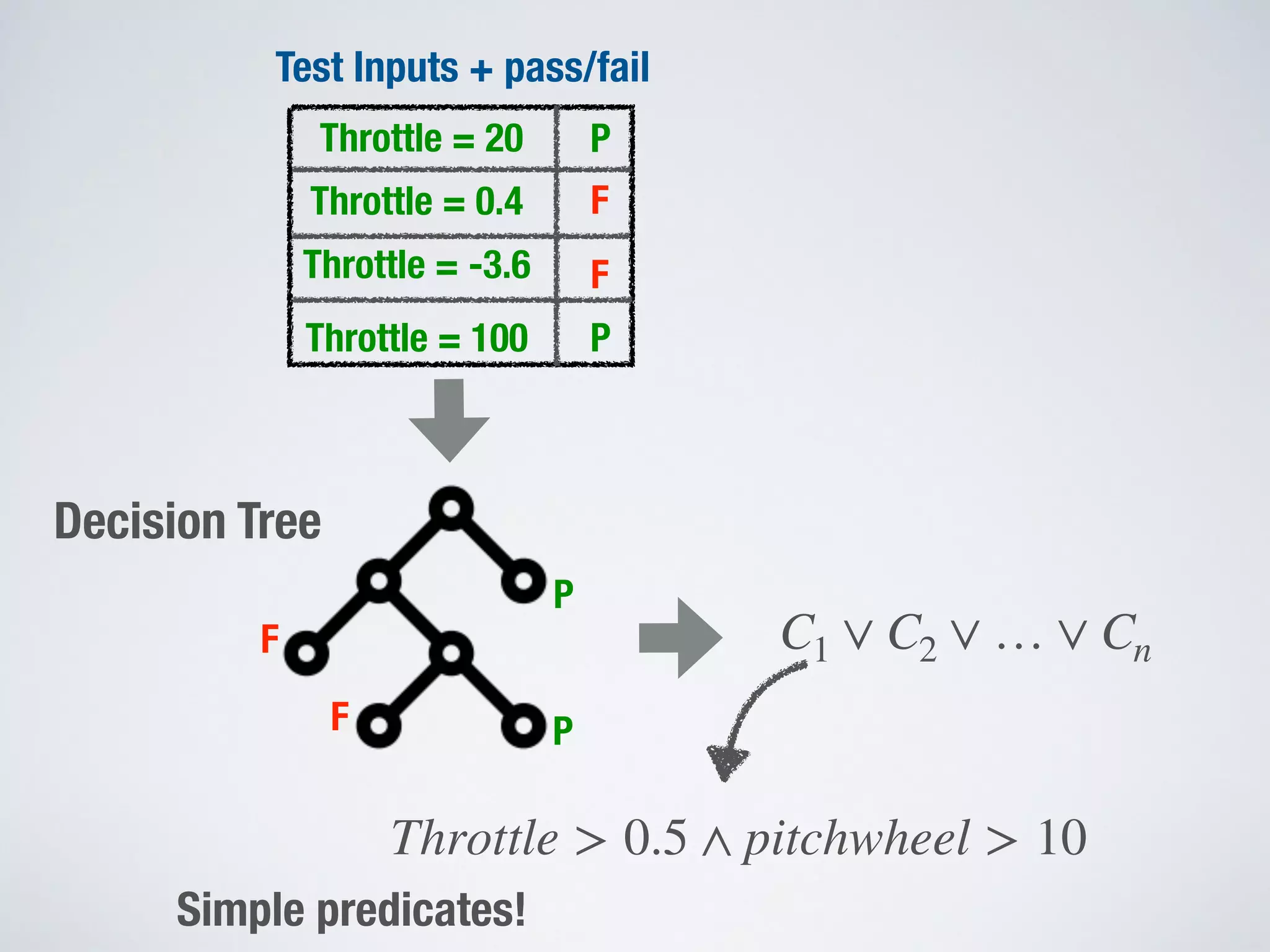

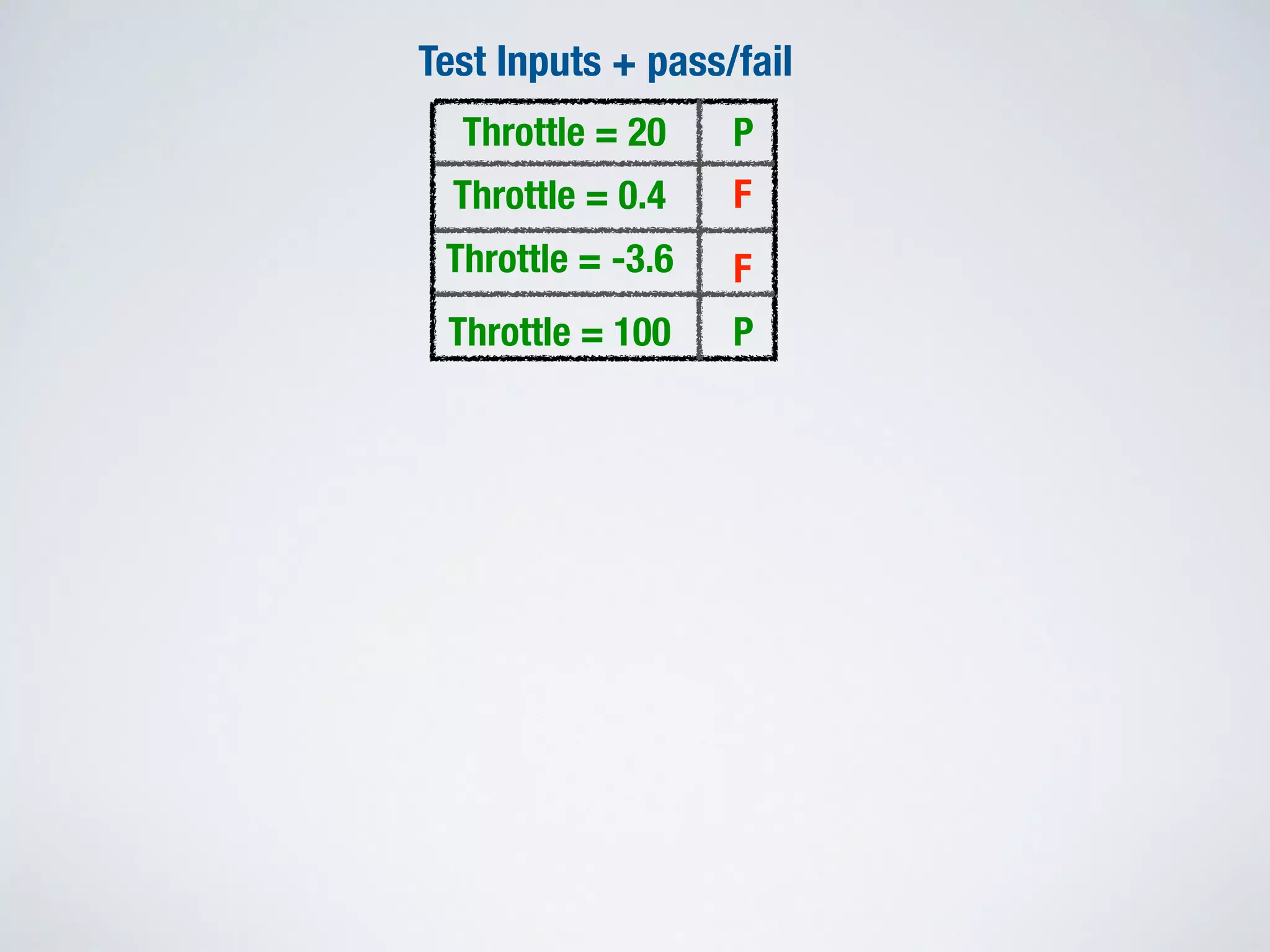

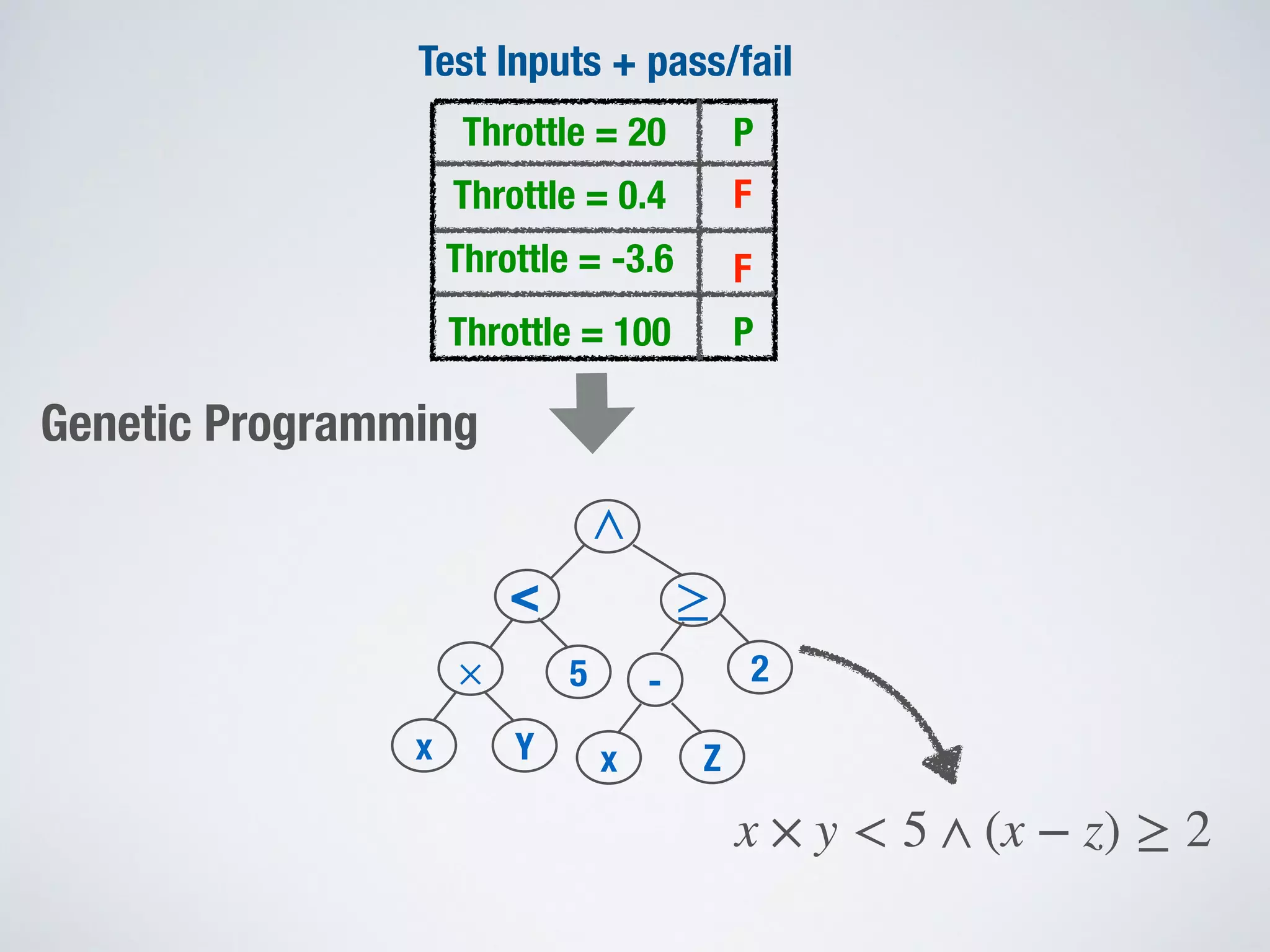

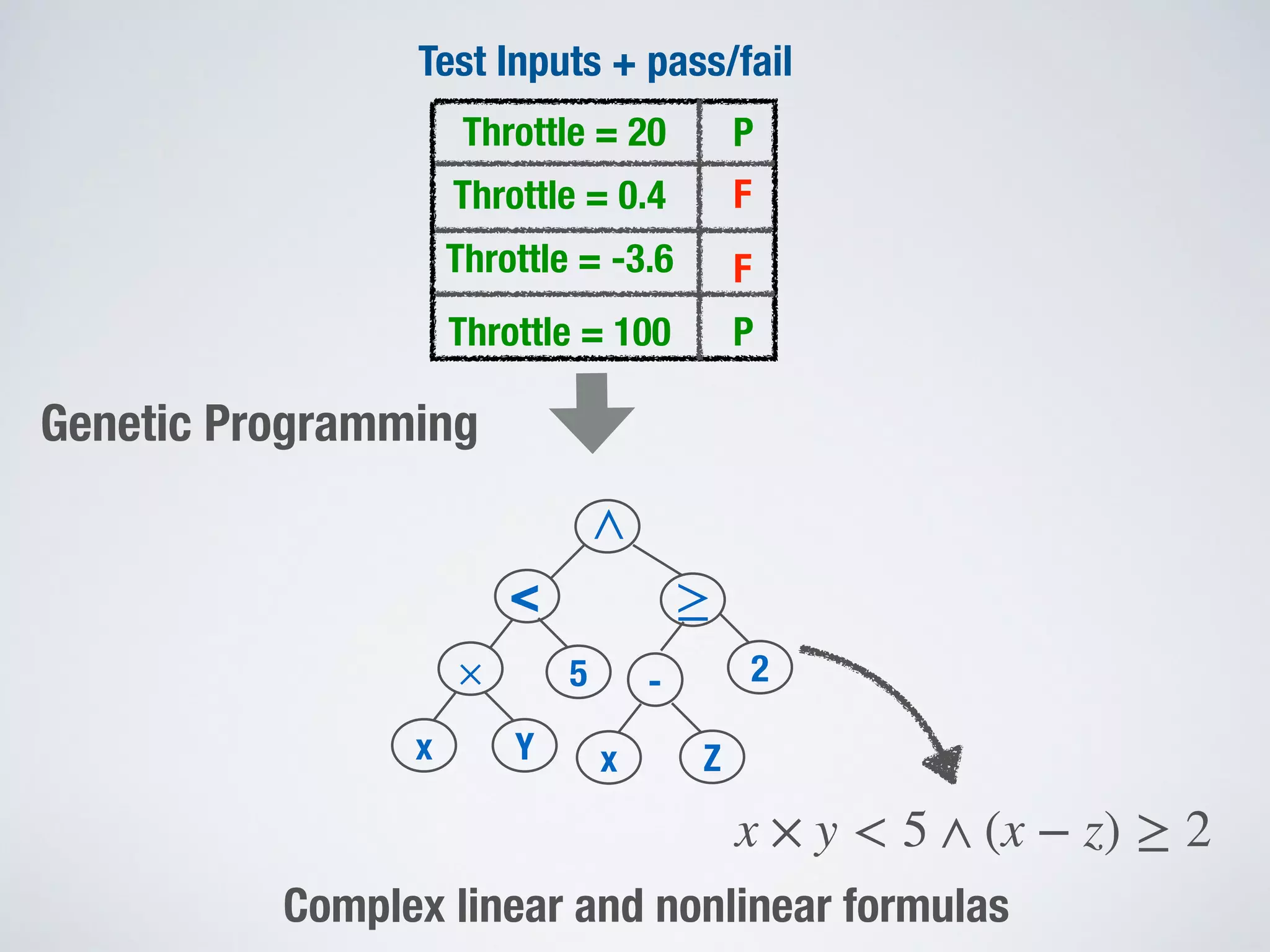

This document discusses search-based software testing (SBST) and its applications. It outlines key strengths of SBST: it is scalable, parallelizable, versatile, and flexible. It also notes a limitation: search-based approaches are not suited to problems that require formal verification, where a property must be established for all possible usages. The document contrasts classical optimization approaches, which build solutions incrementally, with the stochastic optimization approaches used in SBST, which sample candidate solutions in a randomized way. It notes that testing can find bugs but cannot prove their absence. Finally, it discusses how SBST can be combined with other techniques such as constraint solving and machine learning.
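To make the idea of randomized sampling guided by a fitness function concrete, here is a minimal sketch of one common SBST setup: random-restart hill climbing over integer inputs, guided by a branch-distance fitness. The function `under_test`, the fitness `branch_distance`, the search bounds, and the evaluation budget are all hypothetical choices made for illustration; they are not taken from the slides.

```python
import random
from typing import Optional, Tuple


def under_test(x: int, y: int) -> bool:
    """Hypothetical function under test; the goal is to reach the True branch."""
    return x == 2 * y + 7


def branch_distance(x: int, y: int) -> int:
    """Fitness: how far the inputs are from satisfying x == 2*y + 7 (0 = covered)."""
    return abs(x - (2 * y + 7))


def search_test_input(
    seed: int = 0, max_evals: int = 100_000, lo: int = -1000, hi: int = 1000
) -> Optional[Tuple[int, int]]:
    """Random-restart hill climbing; returns covering inputs or None if the budget runs out."""
    rng = random.Random(seed)
    evals = 0
    while evals < max_evals:
        # Sample a random starting point (the stochastic part of the search).
        x, y = rng.randint(lo, hi), rng.randint(lo, hi)
        best = branch_distance(x, y)
        evals += 1
        improved = True
        # Climb: accept the first neighbour that strictly reduces the distance.
        while best > 0 and improved and evals < max_evals:
            improved = False
            for dx, dy in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                nx, ny = x + dx, y + dy
                d = branch_distance(nx, ny)
                evals += 1
                if d < best:
                    x, y, best = nx, ny, d
                    improved = True
                    break
        if best == 0:
            return x, y
        # Otherwise the climb is stuck or out of budget; restart if budget remains.
    return None


if __name__ == "__main__":
    found = search_test_input()
    print("covering input:", found)
```

A returned pair is a concrete test input that reaches the target branch. A `None` result only means the budget ran out; it says nothing about whether the branch is reachable, which mirrors the point that testing can find bugs but cannot prove their absence.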