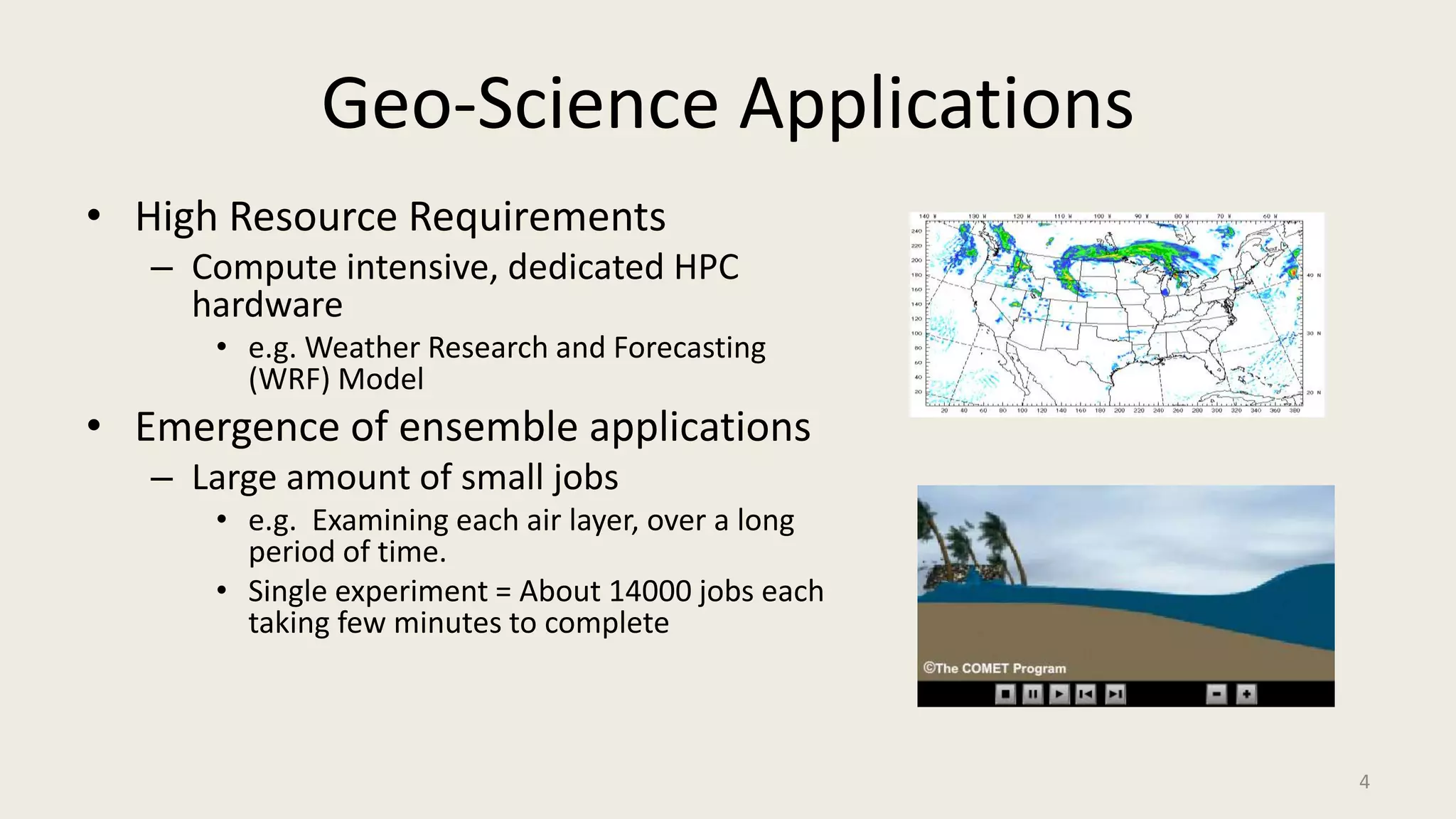

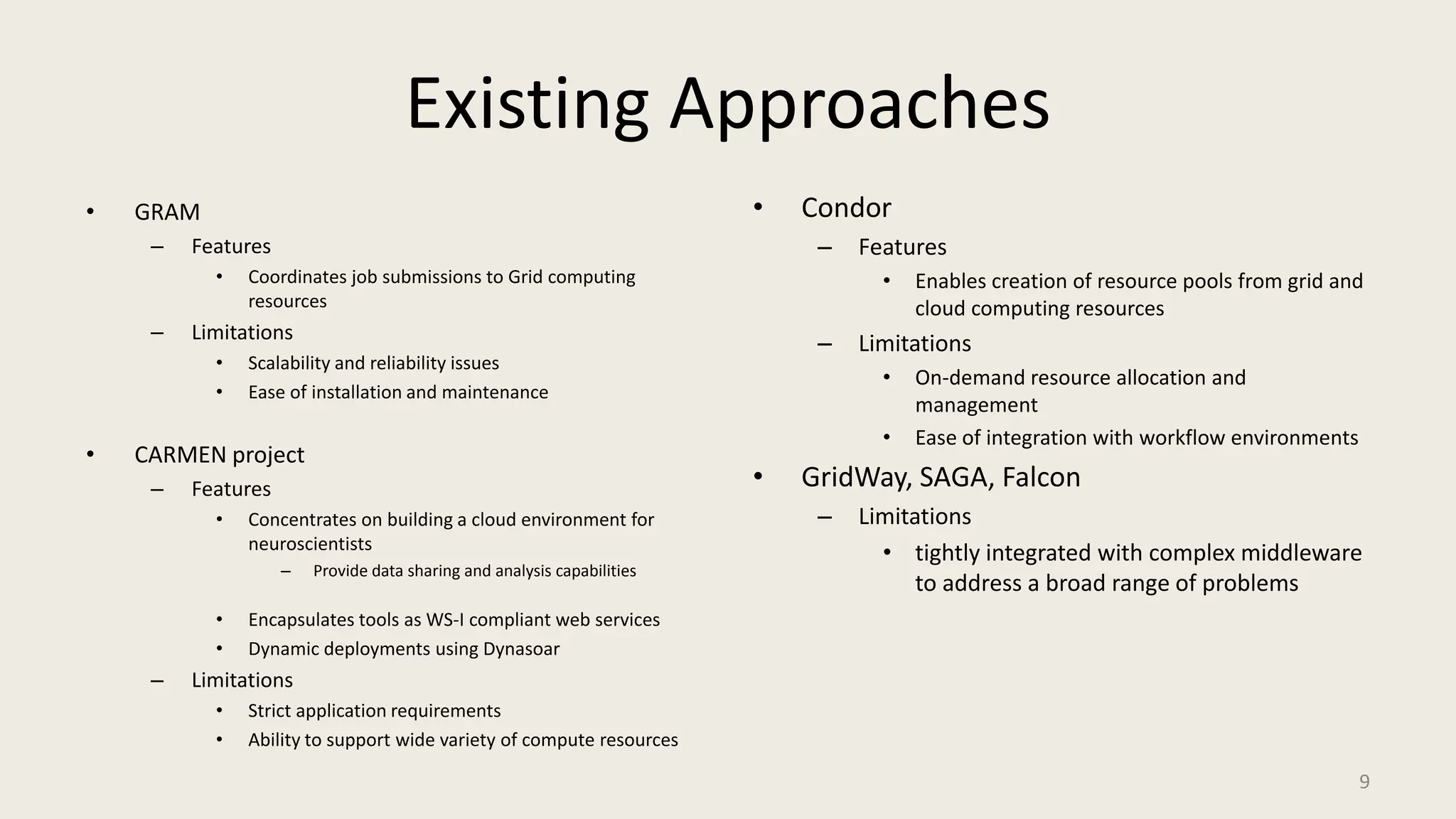

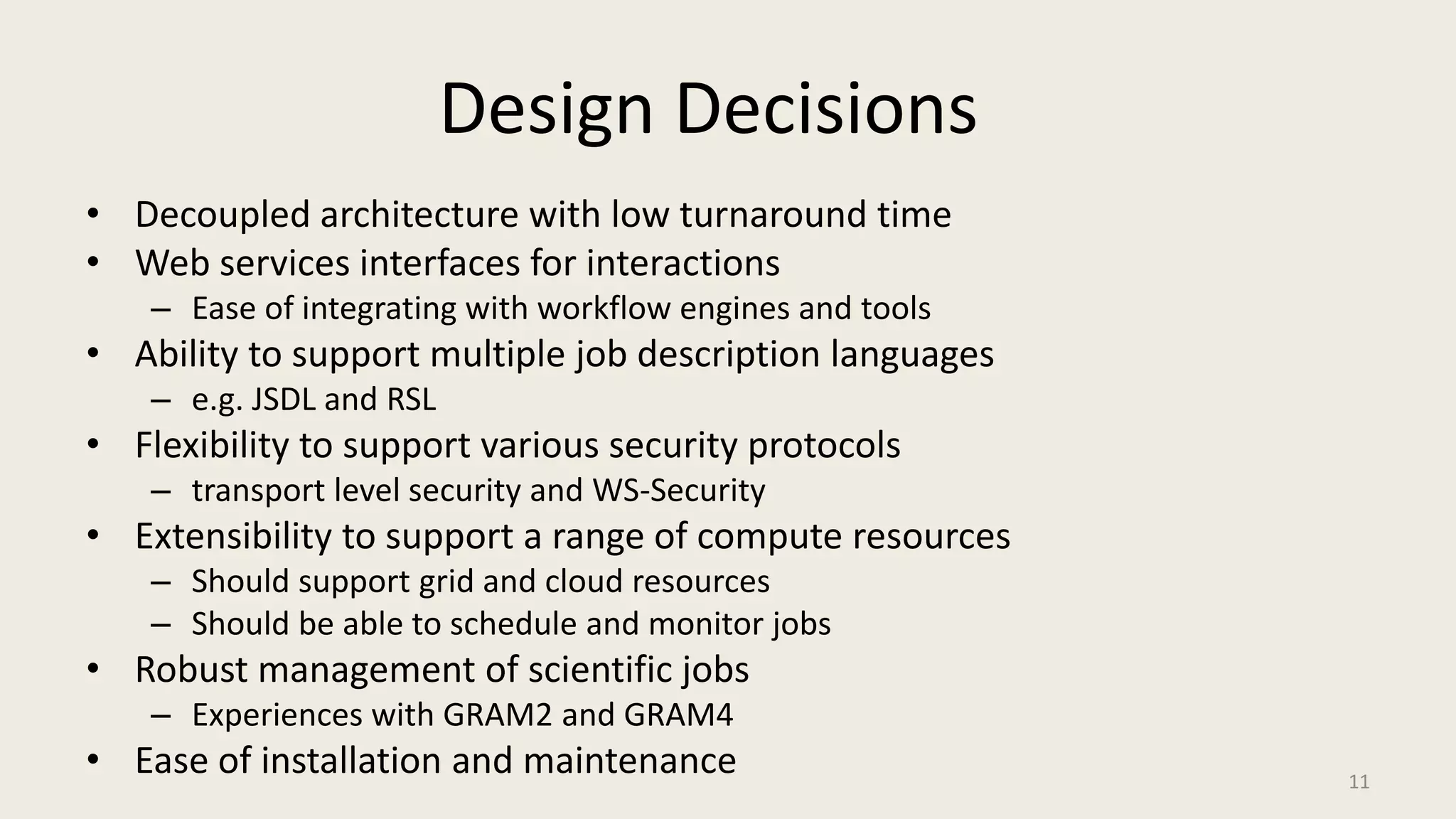

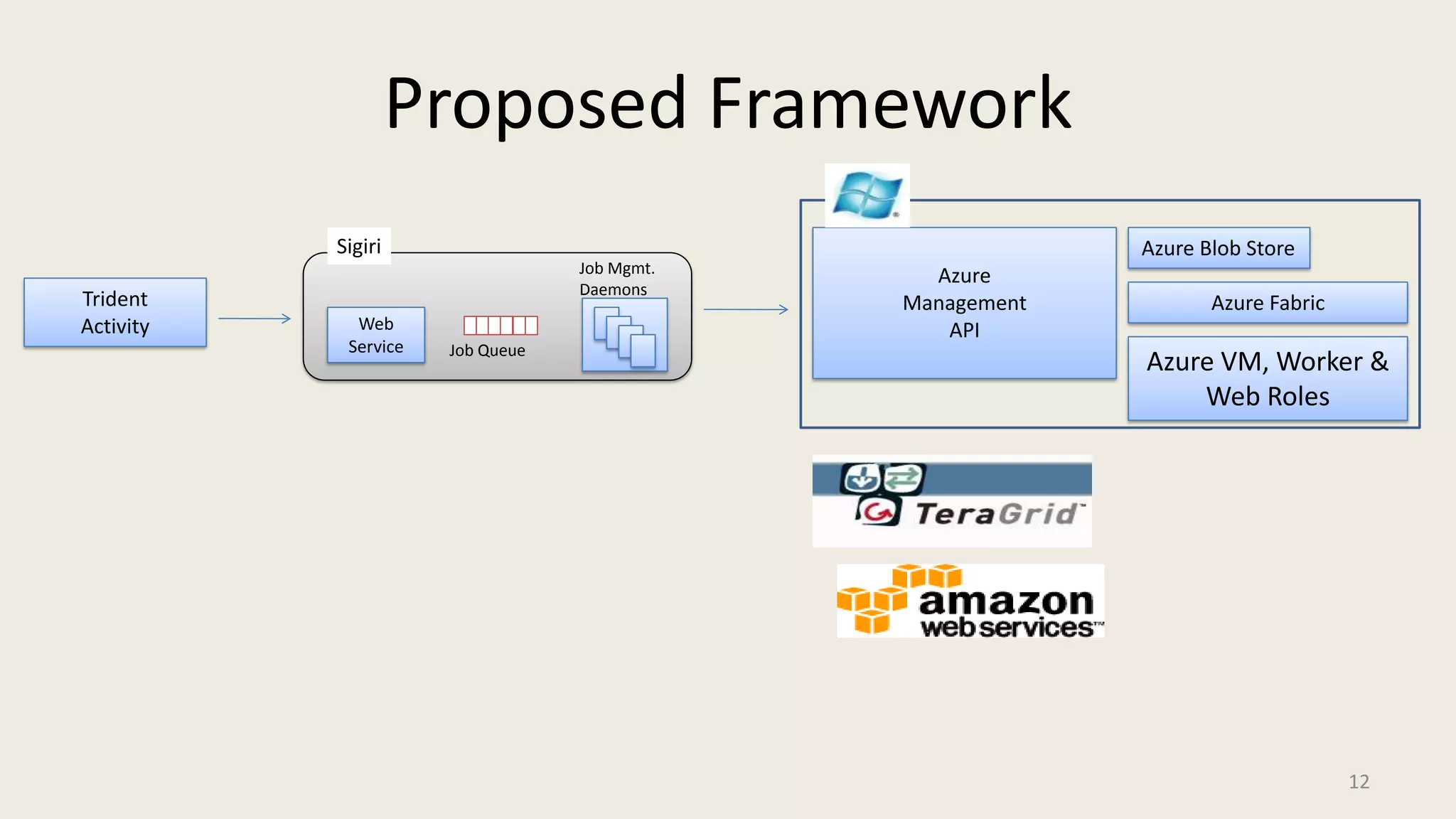

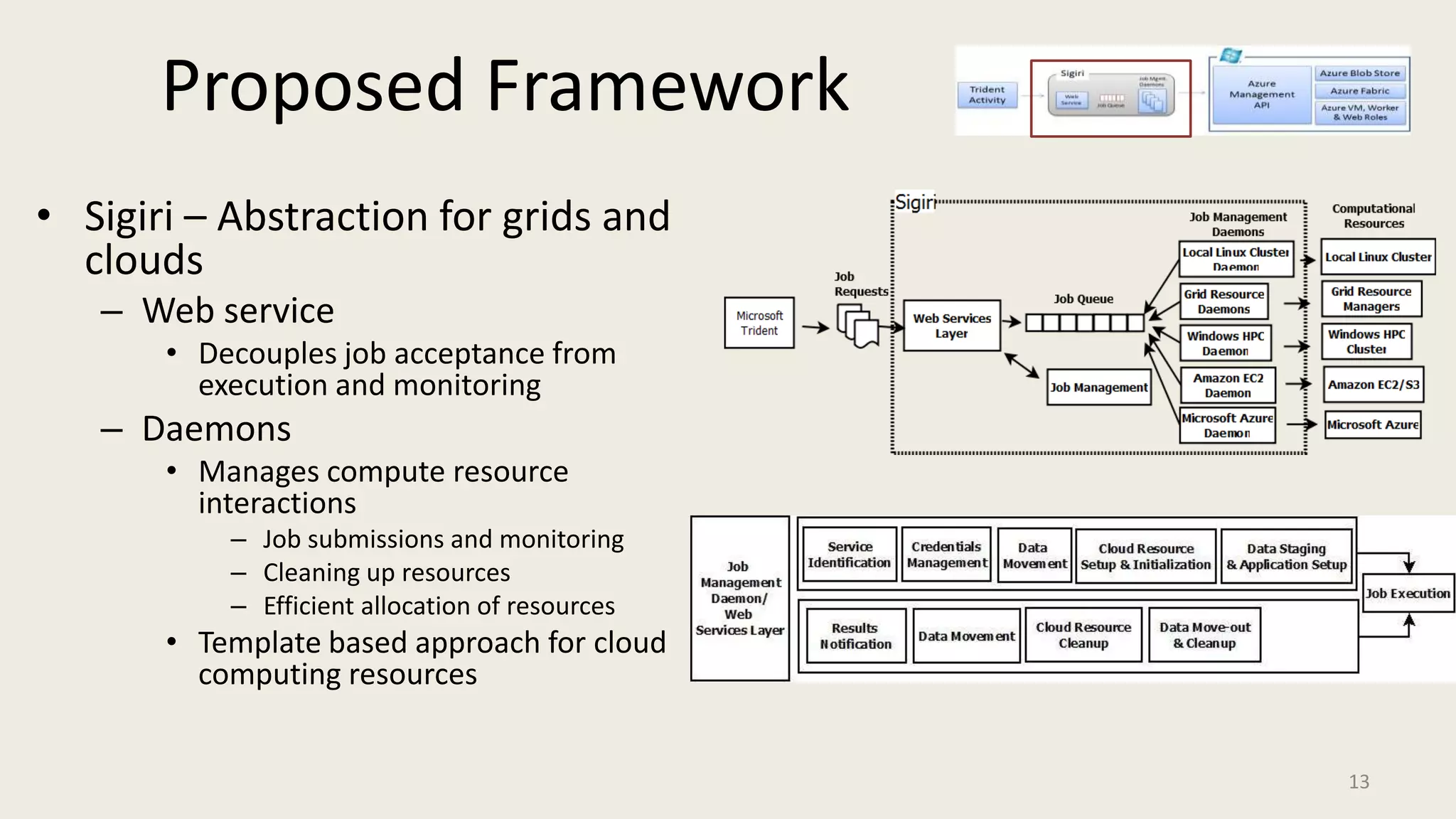

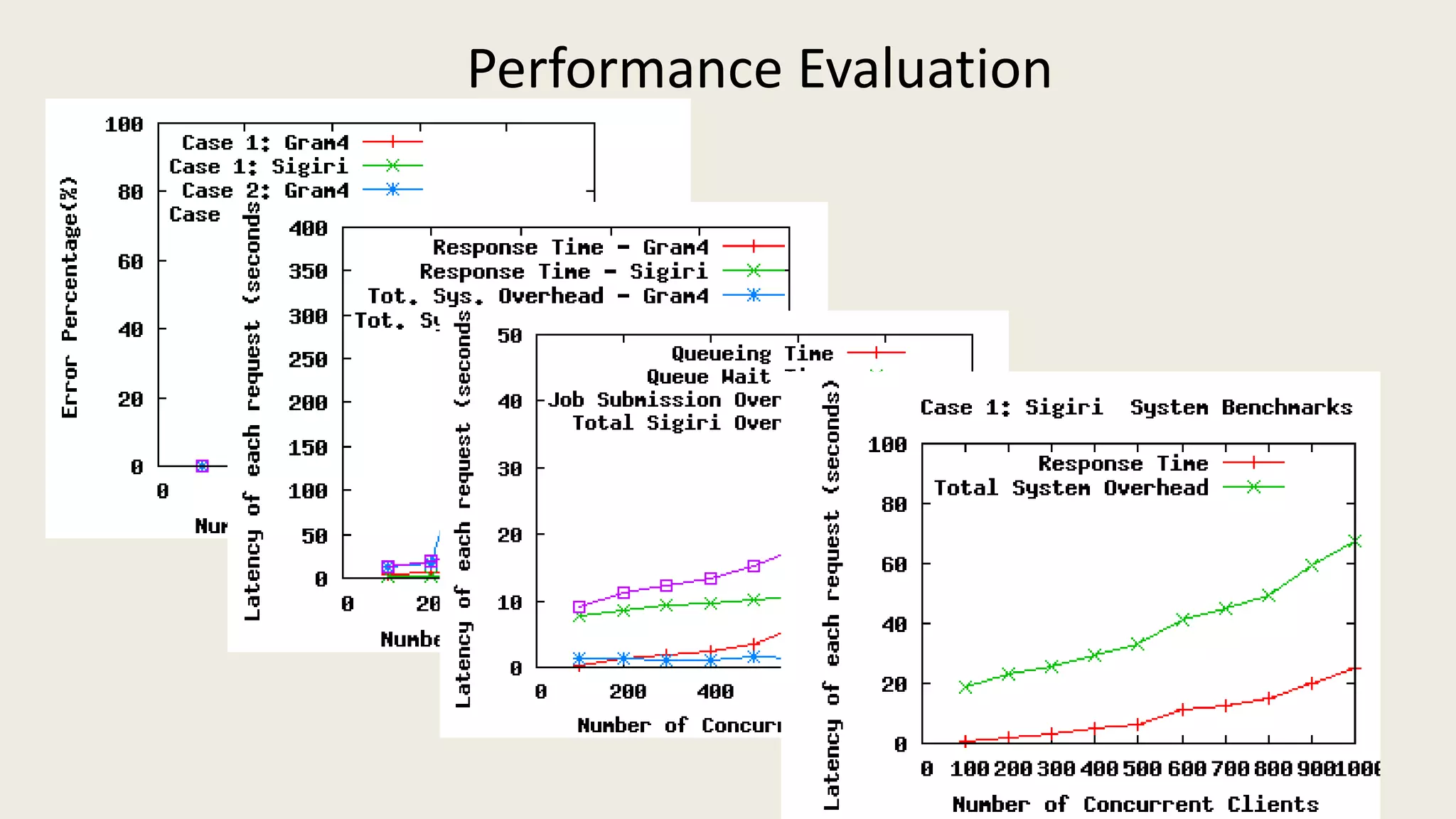

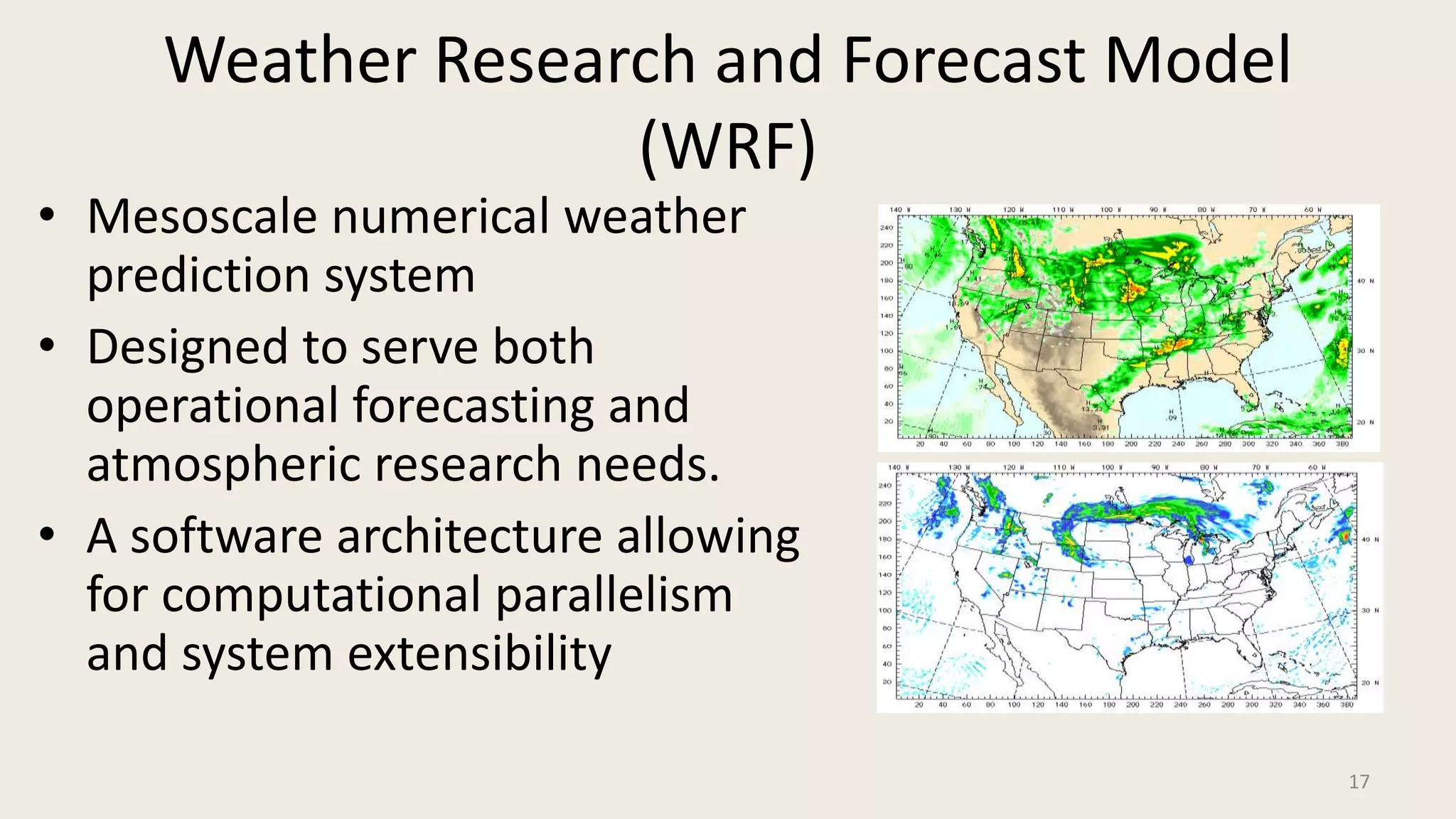

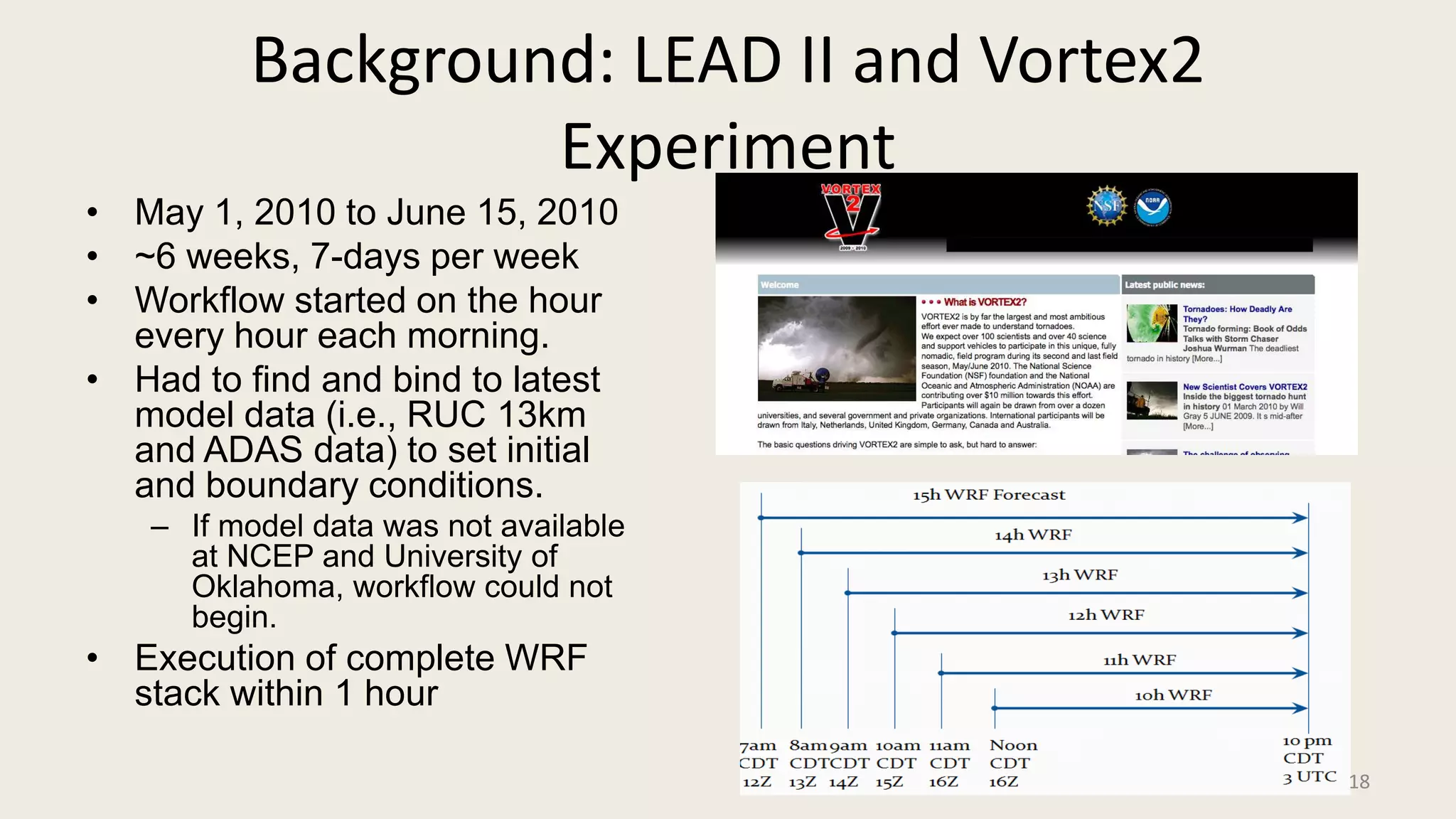

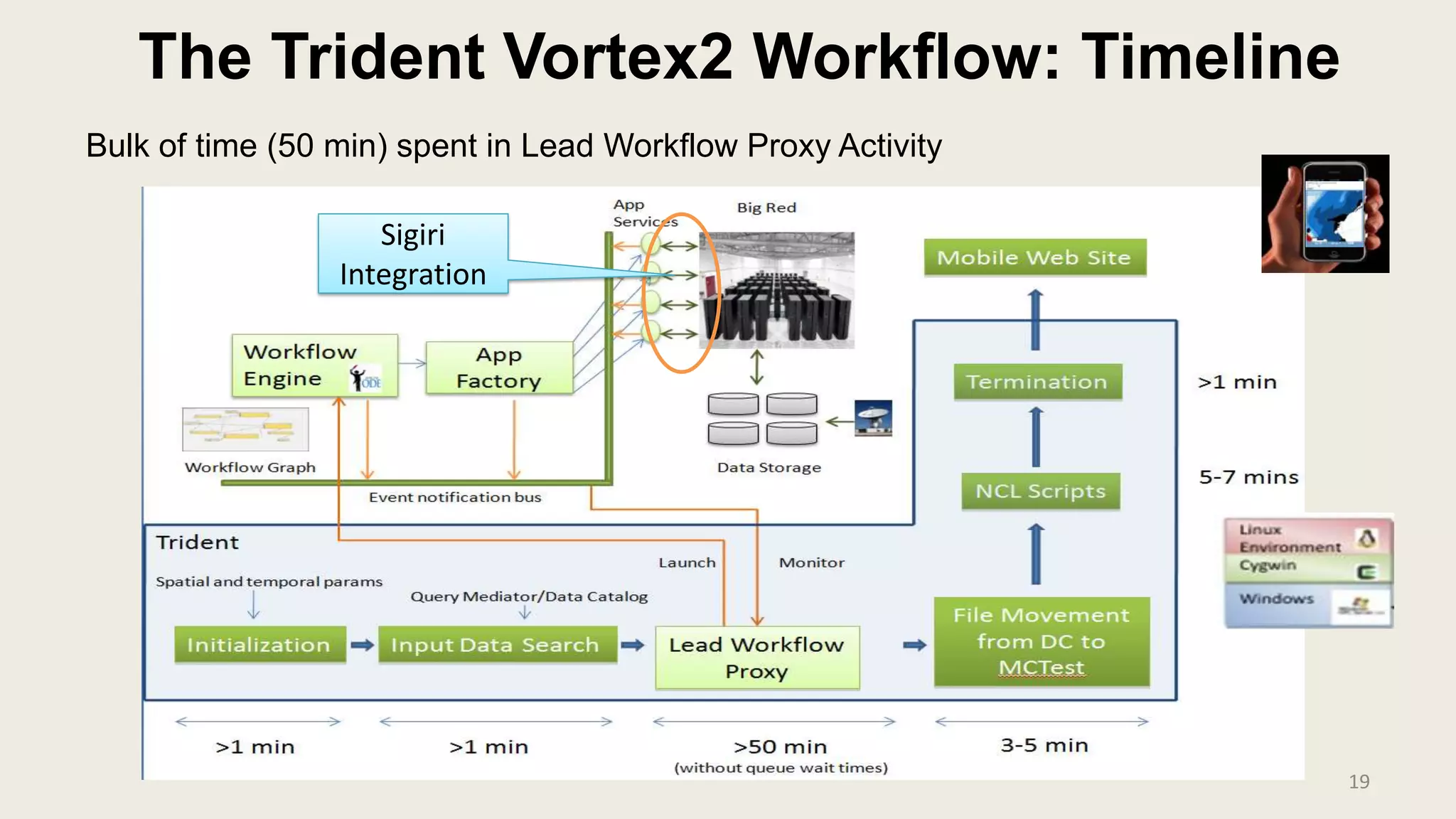

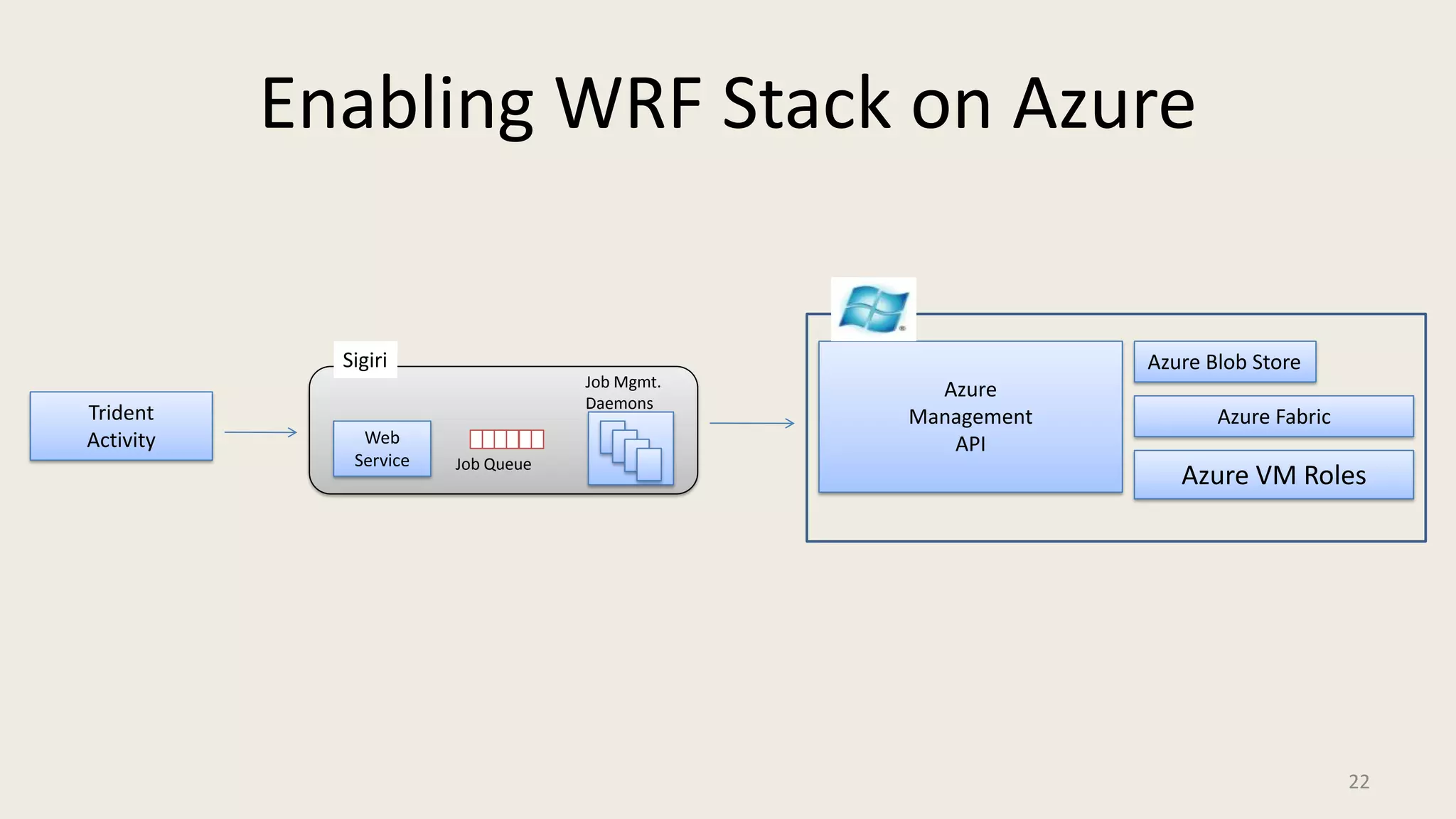

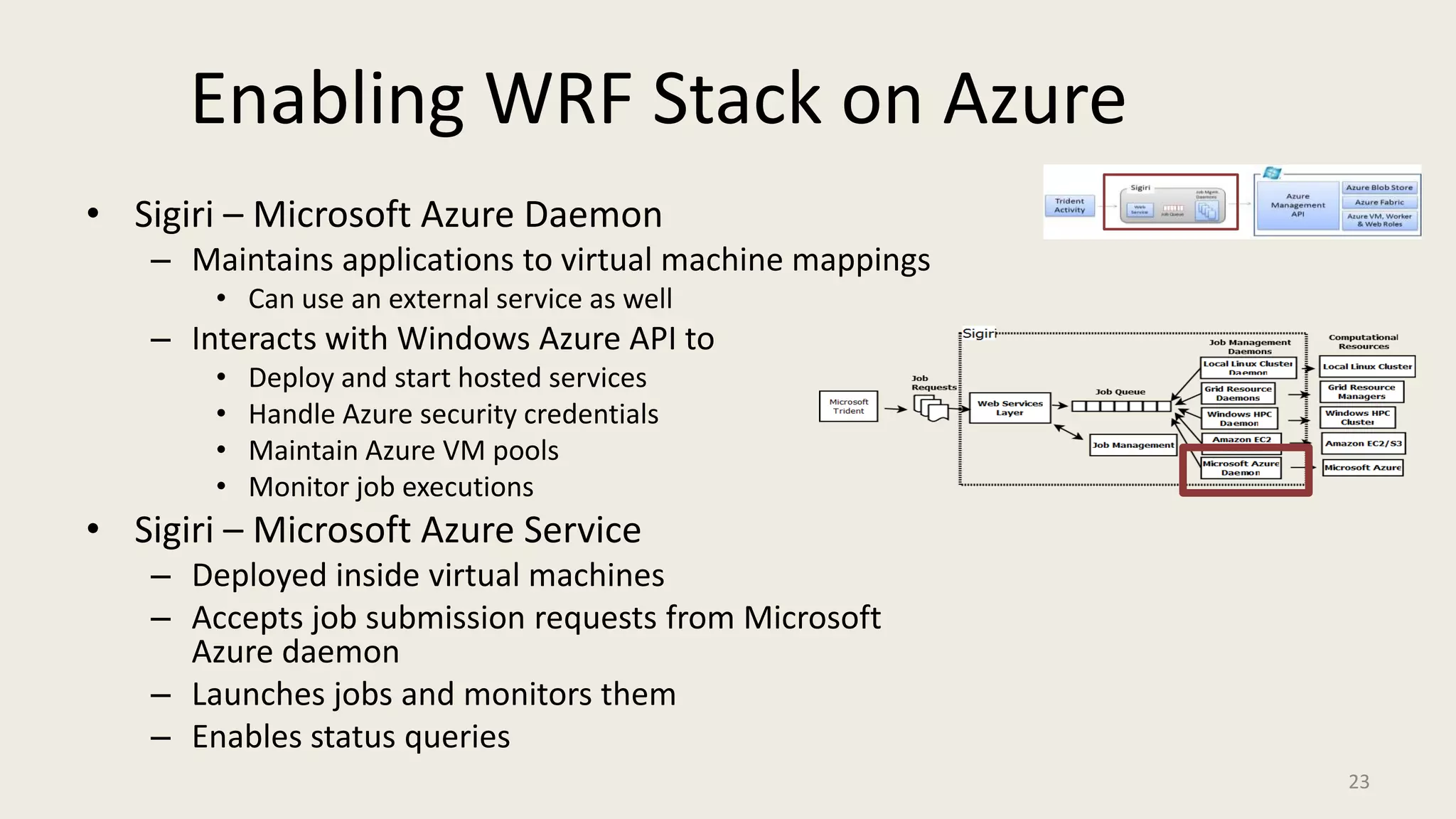

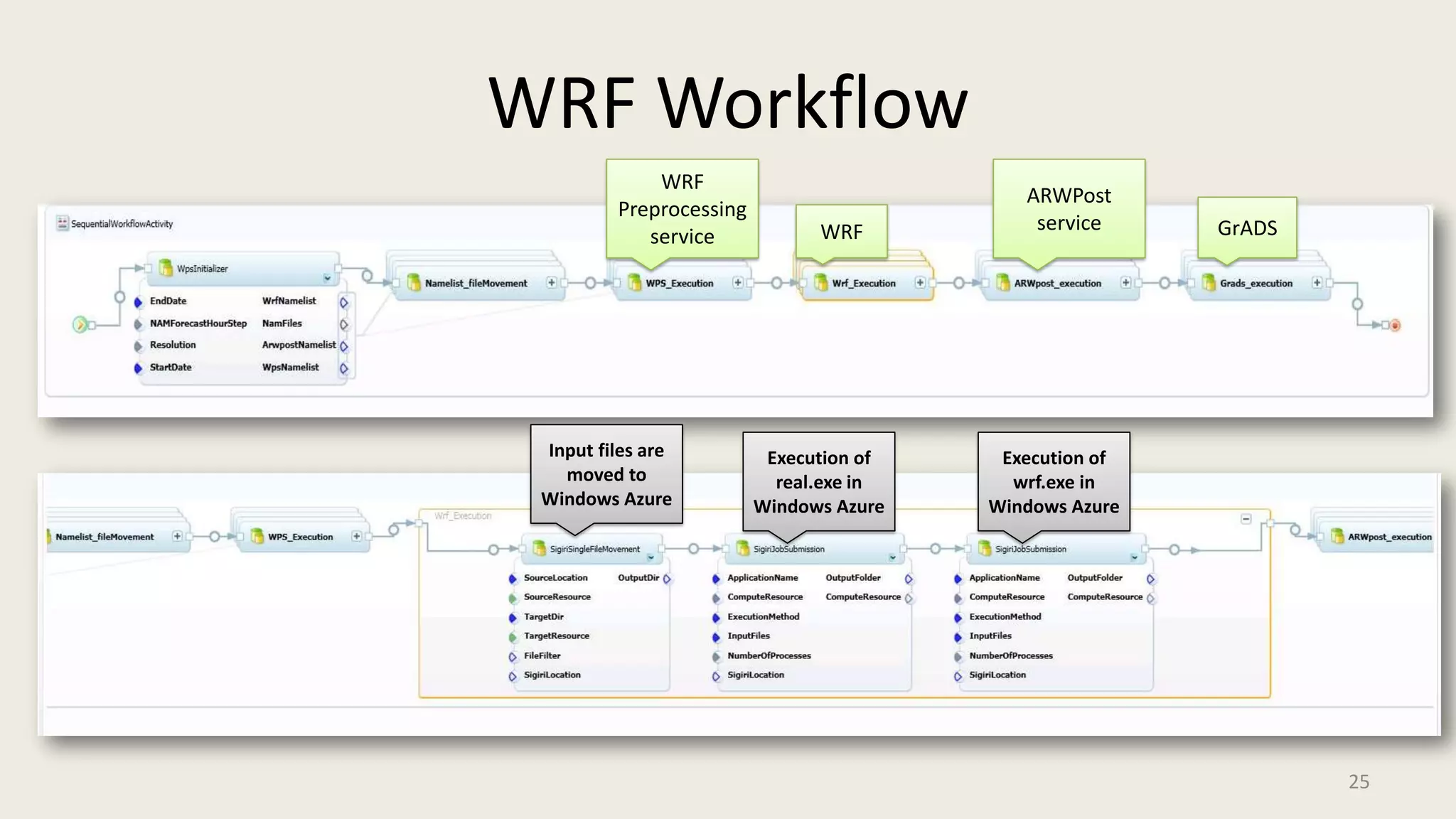

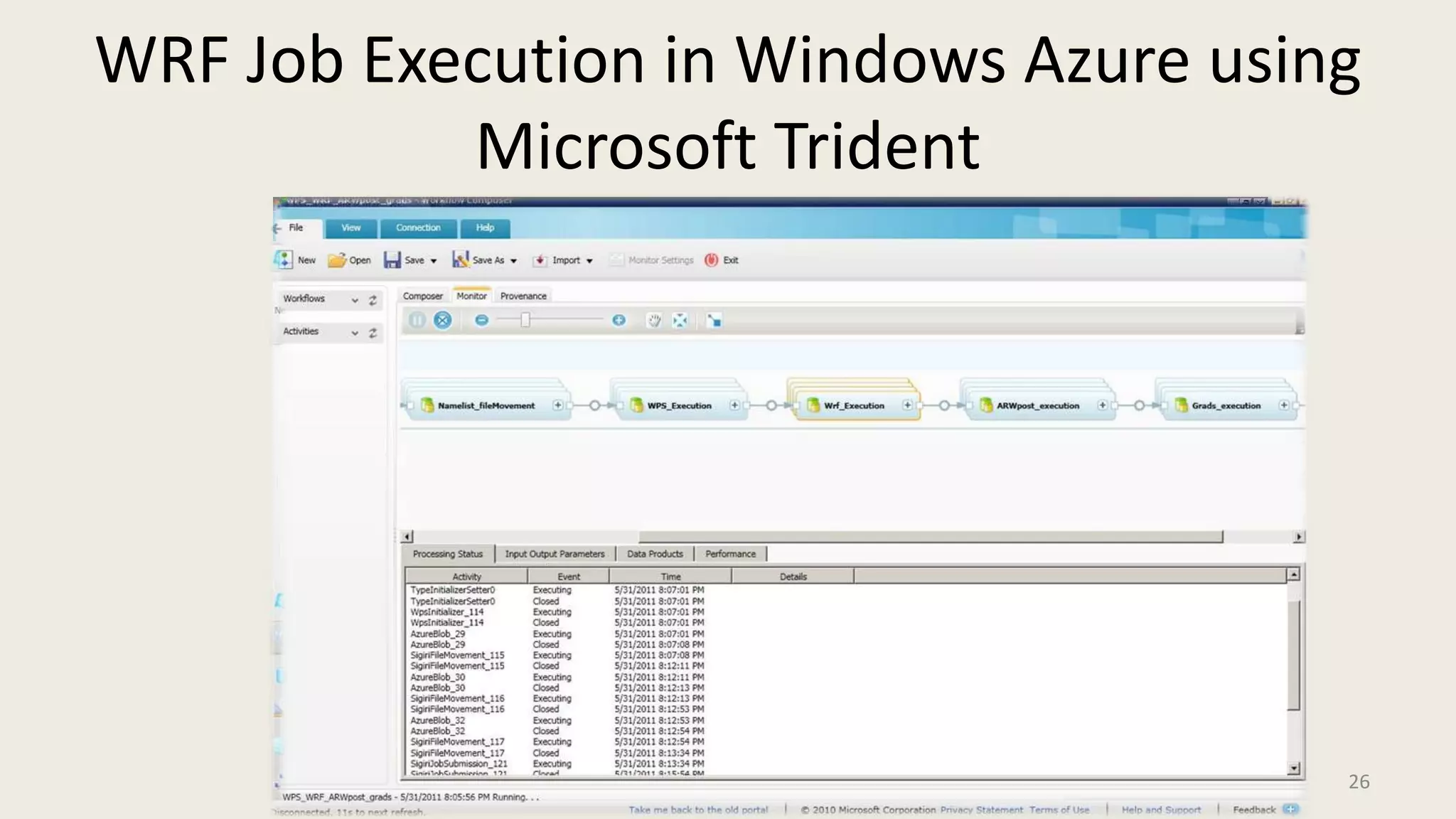

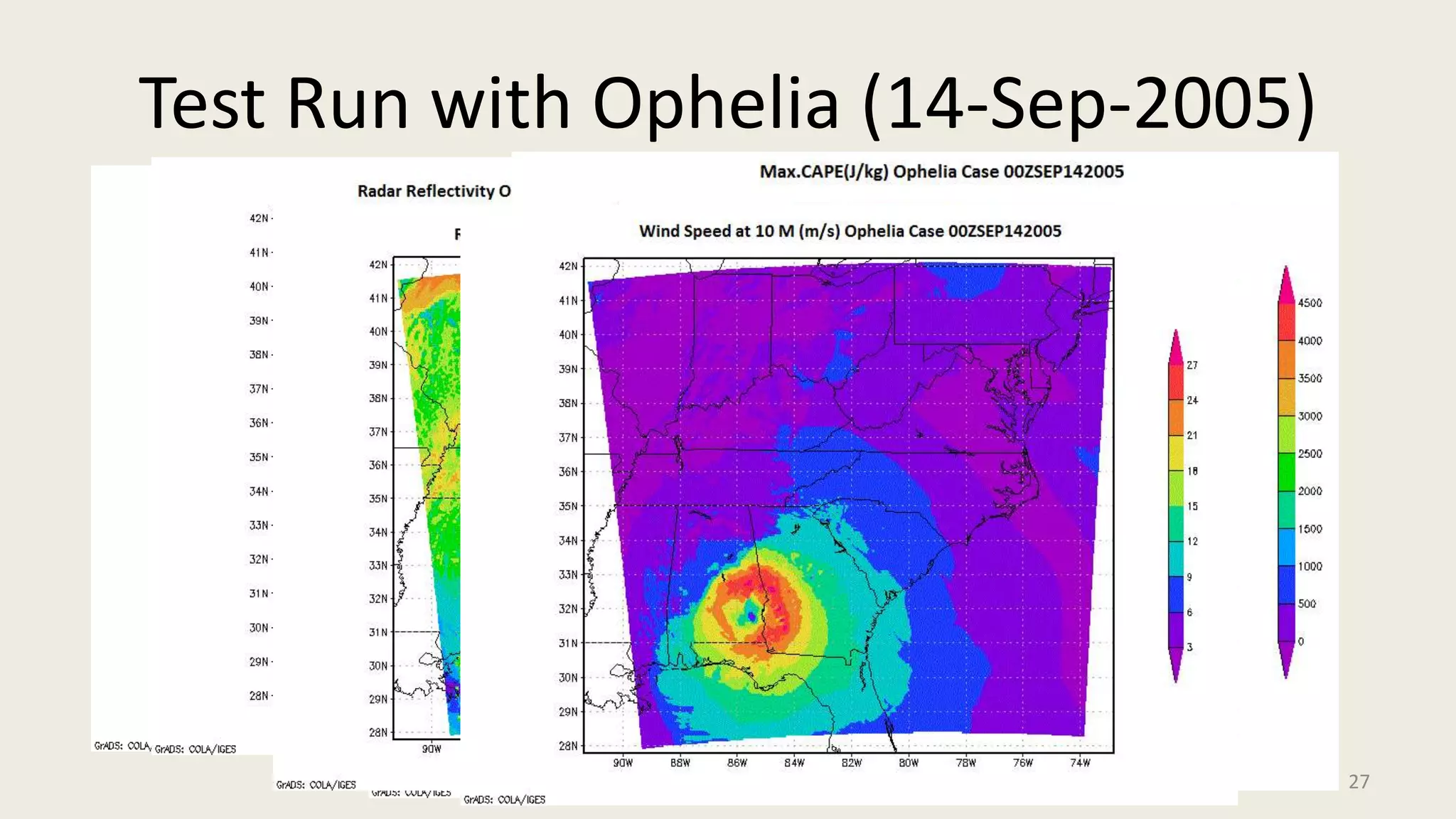

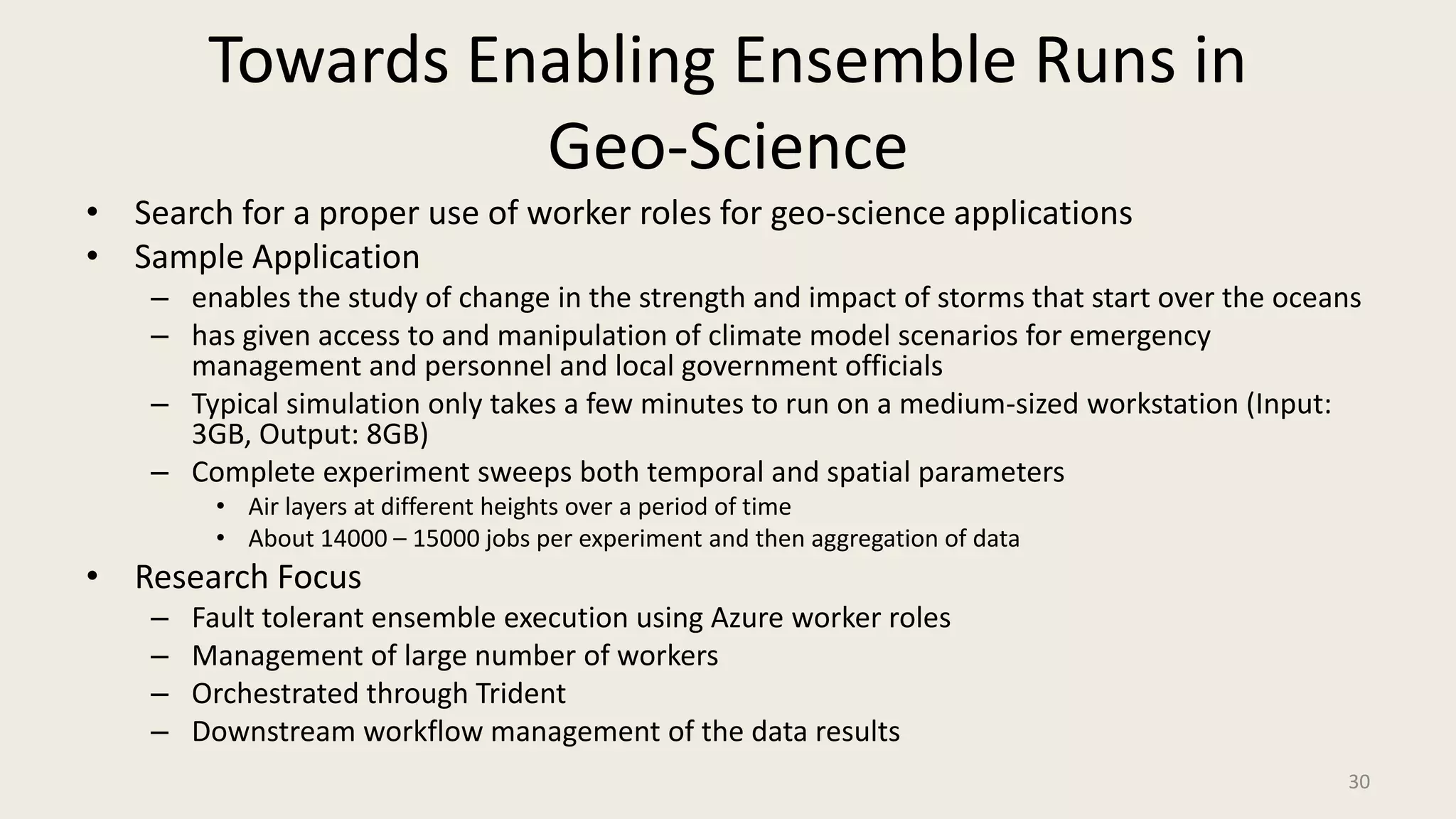

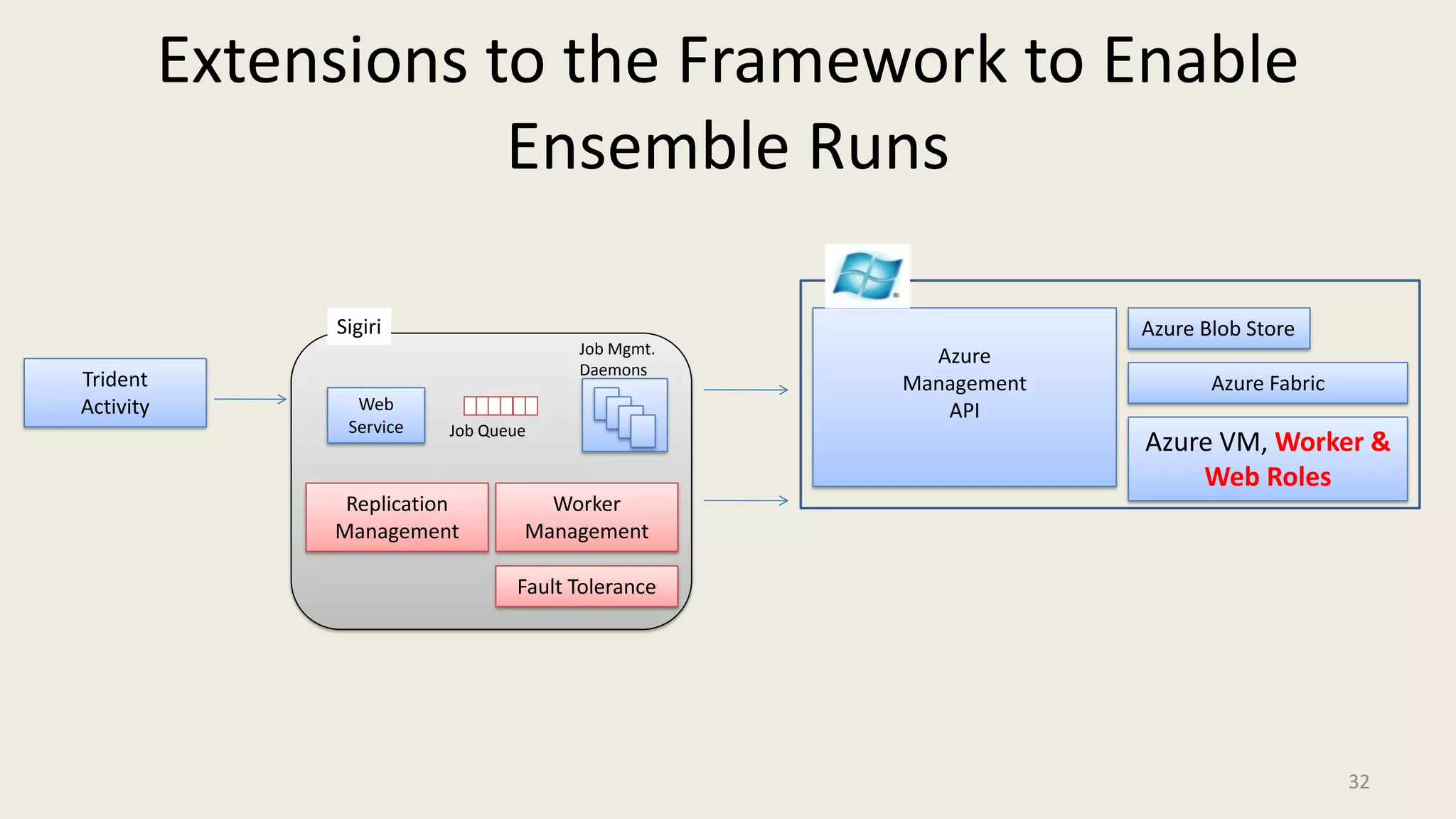

This document proposes a framework for running mid-scale geoscience experiments with Microsoft Trident and Windows Azure. It discusses the challenges geoscience applications face in using computational resources and the opportunities that cloud computing offers. The proposed framework uses Sigiri to schedule jobs on grid and cloud resources, and Trident activities to compose scientific workflows. It aims to support applications such as the Weather Research and Forecasting (WRF) model and ensemble runs through optimized use of Azure worker roles, fault tolerance, and data management capabilities.