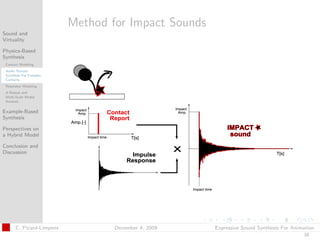

This document presents a doctoral thesis on expressive sound synthesis for animation, covering methods such as physics-based sound synthesis, contact modeling, and example-based synthesis. It discusses the challenges and prospects of a hybrid model for generating interactive sound in virtual environments. Contributions include an automatic analysis of pre-existing recordings and approaches to flexible sound synthesis suited to physics-driven animation.

![t

Sound from Contacts

Outline: Sound and Virtuality; Physics-Based Synthesis (Contact Modeling: Audio Texture Synthesis for Complex Contacts; Resonator Modeling: A Robust and Multi-Scale Modal Analysis); Example-Based Synthesis; Perspectives on a Hybrid Model; Conclusion and Discussion

Dichotomy: impacts, continuous contacts

Two Schemes for Contact Force Modelling

Feed-forward scheme [van den Doel et al. 01]: additive synthesis

Direct computation of contact forces [Avanzini et al. 02]: bristle model

C. Picard-Limpens December 4, 2009 Expressive Sound Synthesis For Animation

8](https://image.slidesharecdn.com/thesispres-091217074508-phpapp02/85/Ph-D-Defense-Expressive-Sound-Synthesis-for-Animation-12-320.jpg)

![t

Contact Modeling

Sound and

Virtuality

Physics-Based

Synthesis

Contact Modeling

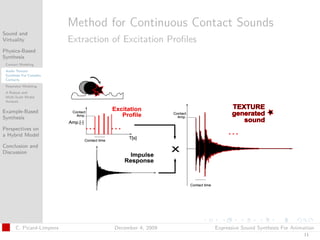

What Are The Current Limitations

Audio Texture

Synthesis For Complex

for Continuous Contacts?

Contacts

Resonator Modeling

Rate for physics engine report

A Robust and

Multi-Scale Modal

Analysis No geometric details when using visual textures

Example-Based

Synthesis

Authoring and control are challenging

Perspectives on

a Hybrid Model

HOW Can We Solve Them?

Conclusion and

Discussion By extracting

Excitation profiles from visual textures

with

Adaptive resolution

[Picard et al., VRIPHYS 08]

C. Picard-Limpens December 4, 2009 Expressive Sound Synthesis For Animation

9](https://image.slidesharecdn.com/thesispres-091217074508-phpapp02/85/Ph-D-Defense-Expressive-Sound-Synthesis-for-Animation-14-320.jpg)
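As a rough illustration of reading an excitation profile out of a visual texture, the sketch below samples a grayscale image along a straight contact path at audio rate. It is not the method of [Picard et al., VRIPHYS 08]: the function names, the reading of pixel intensity as surface height, the tiling of the texture, and the power-of-two rule for choosing a coarser resolution at high speed are all assumptions made for the example.

```python
import numpy as np

def resolution_level(speed_px_per_s, sample_rate=44100):
    """Hypothetical rule: pick a power-of-two downsampling level so that
    the contact crosses at most one (coarse) pixel per audio sample."""
    if speed_px_per_s <= sample_rate:
        return 0
    return int(np.ceil(np.log2(speed_px_per_s / sample_rate)))

def excitation_profile(texture, start, velocity, duration, sample_rate=44100):
    """Sample a grayscale texture along a straight path at audio rate.

    texture  : 2D array of intensities, read here as surface heights
    start    : (x, y) start position in pixels
    velocity : (vx, vy) contact velocity in pixels per second
    """
    texture = np.asarray(texture, dtype=float)
    height, width = texture.shape
    n = int(round(duration * sample_rate))
    t = np.arange(n) / sample_rate

    # Positions along the path, wrapped so that the texture tiles.
    x = (start[0] + velocity[0] * t) % width
    y = (start[1] + velocity[1] * t) % height
    x0 = np.floor(x).astype(int) % width
    y0 = np.floor(y).astype(int) % height
    x1 = (x0 + 1) % width
    y1 = (y0 + 1) % height
    fx = x - np.floor(x)
    fy = y - np.floor(y)

    # Bilinear interpolation between the four neighbouring pixels.
    top = (1 - fx) * texture[y0, x0] + fx * texture[y0, x1]
    bottom = (1 - fx) * texture[y1, x0] + fx * texture[y1, x1]
    heights = (1 - fy) * top + fy * bottom

    # Remove the constant offset so only the variations excite the object.
    return heights - heights.mean()
```

With this reading, a texture with stripes every 8 pixels, scanned at 3520 pixels per second, gives an excitation whose fundamental is 3520 / 8 = 440 Hz, so the pitch of the scraping sound follows the contact speed.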

![t

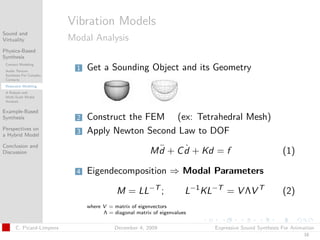

Vibration Models: Modal Analysis

Generating Sounds Based on Physics Simulation

In computer music [Iovino et al. 97, Cook 02]

In computer graphics [van den Doel 01, O'Brien et al. 02]

Improvements for Interactive Sound Rendering

Modal parameter tracking [Maxwell et al. 07]

Frequency content sparsity [Bonneel et al. 08]

17](https://image.slidesharecdn.com/thesispres-091217074508-phpapp02/85/Ph-D-Defense-Expressive-Sound-Synthesis-for-Animation-22-320.jpg)
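For readers unfamiliar with modal sound models, the sketch below shows the usual signal form: the response to an impact is a sum of exponentially damped sinusoids, one per vibration mode. The function name and parameter layout are assumptions for illustration, and the mode parameters in the usage note are arbitrary values, not data from the thesis.

```python
import numpy as np

def modal_impulse_response(freqs_hz, dampings, gains, duration, sample_rate=44100):
    """Sum of damped sinusoids: sum_k a_k * exp(-d_k * t) * sin(2*pi*f_k*t).

    freqs_hz : modal frequencies in Hz
    dampings : decay rates in 1/s (larger means the mode dies out faster)
    gains    : modal amplitudes, which depend on where the object is struck
    """
    n = int(round(duration * sample_rate))
    t = np.arange(n) / sample_rate
    f = np.asarray(freqs_hz, dtype=float)[:, None]
    d = np.asarray(dampings, dtype=float)[:, None]
    a = np.asarray(gains, dtype=float)[:, None]
    # One row per mode, then mix the rows into a single signal.
    modes = a * np.exp(-d * t) * np.sin(2 * np.pi * f * t)
    return modes.sum(axis=0)
```

A call such as `modal_impulse_response([440, 1130, 2210], [6, 9, 15], [1.0, 0.5, 0.25], 1.0)` returns one second of a bell-like tone. Because each mode is independent, dropping the quietest modes is a natural way to trade quality for speed.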

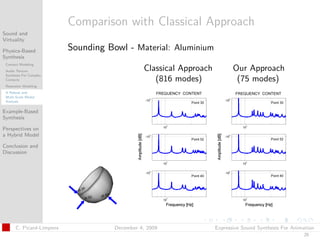

![t

Vibration Models

Sound and

Virtuality Modal Analysis

Physics-Based

Synthesis

Contact Modeling

Audio Texture

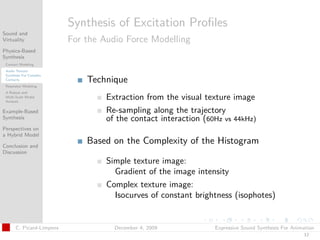

What Are

Synthesis For Complex

Contacts The Current Limitations?

Resonator Modeling

A Robust and

Multi-Scale Modal Meshing is difficult

Analysis

Example-Based

No real control on the FEM resolution

Synthesis

No clear interface between physics and audio

Perspectives on

a Hybrid Model

Conclusion and

Discussion

HOW Can We Solve Them?

By proposing

A robust and multi-scale modal analysis

which is

Coherent with the physics simulation

[Picard et al., DAFx 09]

C. Picard-Limpens December 4, 2009 Expressive Sound Synthesis For Animation

20](https://image.slidesharecdn.com/thesispres-091217074508-phpapp02/85/Ph-D-Defense-Expressive-Sound-Synthesis-for-Animation-26-320.jpg)

![t

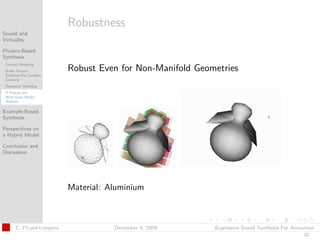

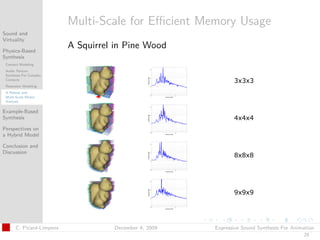

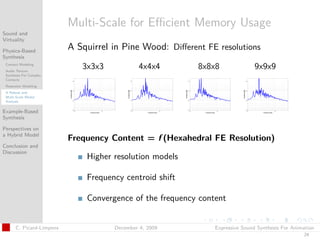

Our Deformation Model

Inspired by the work of Nesme et al. [Nesme et al. 06]

Technique: merged voxels used as hexahedral finite elements

Implementation with the SOFA framework

Validation of the Model

Tests on a metal cube

21](https://image.slidesharecdn.com/thesispres-091217074508-phpapp02/85/Ph-D-Defense-Expressive-Sound-Synthesis-for-Animation-27-320.jpg)
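Whatever the finite-element discretization, modal analysis comes down to the generalized eigenproblem K φ = ω² M φ, where K is the stiffness matrix and M the mass matrix. The sketch below solves it for a toy chain of masses and springs, which stands in for the hexahedral model here; the function names and the lumped (diagonal) mass matrix are assumptions for the example, and no SOFA API is used.

```python
import numpy as np

def chain_stiffness(n, k):
    """Stiffness matrix of n masses joined by springs of stiffness k,
    with both ends of the chain attached to fixed walls."""
    return 2 * k * np.eye(n) - k * np.eye(n, k=1) - k * np.eye(n, k=-1)

def modal_frequencies(stiffness, masses):
    """Solve K phi = omega^2 M phi for a diagonal mass matrix M.

    Returns the modal frequencies in Hz (ascending) and the mode shapes
    as columns of a matrix.
    """
    K = np.asarray(stiffness, dtype=float)
    m = np.asarray(masses, dtype=float)
    inv_sqrt_m = 1.0 / np.sqrt(m)
    # Substituting phi = M^(-1/2) y gives a symmetric standard problem:
    # (M^(-1/2) K M^(-1/2)) y = omega^2 y
    A = K * inv_sqrt_m[:, None] * inv_sqrt_m[None, :]
    omega_sq, y = np.linalg.eigh(A)
    # Guard against tiny negative values caused by round-off.
    omega_sq = np.clip(omega_sq, 0.0, None)
    shapes = y * inv_sqrt_m[:, None]
    return np.sqrt(omega_sq) / (2 * np.pi), shapes
```

For two unit masses and unit springs, `modal_frequencies(chain_stiffness(2, 1.0), [1.0, 1.0])` gives angular frequencies 1 and √3 rad/s: the in-phase mode and the stiffer out-of-phase mode. A coarser or finer discretization changes the size of K and M, and therefore how many modes are available and how accurate they are.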

![t

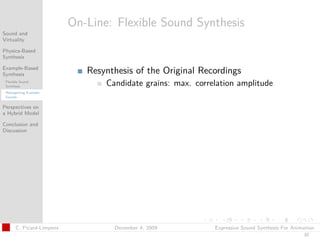

Implementation of Signal-Based Models

Sound and

Virtuality

Physics-Based

Synthesis

Example-Based

Synthesis

Flexible Sound

Concatenative Synthesis

Synthesis

Retargetting Example

[Roads 91, Schwarz 06]

Sounds

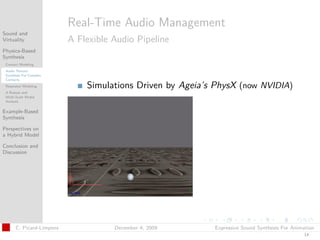

Perspectives on Sound Textures Based on Physics

a Hybrid Model

[Cook 99]

Conclusion and

Discussion [Dobashi et al. 03, Zheng et al. 09] Dobashi et al. 03

Authoring and Interactive Control

[Cook 02]

Cook 99

C. Picard-Limpens December 4, 2009 Expressive Sound Synthesis For Animation

28](https://image.slidesharecdn.com/thesispres-091217074508-phpapp02/85/Ph-D-Defense-Expressive-Sound-Synthesis-for-Animation-34-320.jpg)
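Concatenative synthesis assembles an output signal from short recorded units. A minimal sketch of the assembly step follows, assuming the grains and their onset positions have already been chosen; the function name and the Hann window used to avoid clicks are choices made for this example, not details taken from the cited work.

```python
import numpy as np

def concatenate_grains(grains, onsets, total_length):
    """Overlap-add windowed grains into an output buffer.

    grains       : list of 1D arrays (audio snippets)
    onsets       : non-negative start index of each grain, in samples
    total_length : length of the output buffer, in samples
    """
    out = np.zeros(total_length)
    for grain, onset in zip(grains, onsets):
        grain = np.asarray(grain, dtype=float)
        # Taper both ends of the grain so that it starts and stops smoothly.
        windowed = grain * np.hanning(len(grain))
        end = min(onset + len(grain), total_length)
        if end > onset:
            # Grains that run past the end of the buffer are truncated.
            out[onset:end] += windowed[: end - onset]
    return out
```

Grains whose spans overlap simply add, so dense onsets produce a continuous texture while sparse onsets produce separate events.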

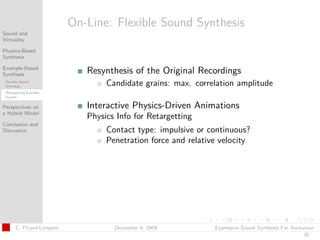

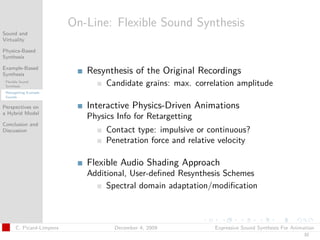

![t

Implementation of Signal-Based Models

What Are the Current Limitations?

Processing is not generic

Parametrizing is difficult

How Can We Solve Them?

By retargetting example sounds to physics-driven animation [Picard et al., AES 09]

29](https://image.slidesharecdn.com/thesispres-091217074508-phpapp02/85/Ph-D-Defense-Expressive-Sound-Synthesis-for-Animation-36-320.jpg)

![t

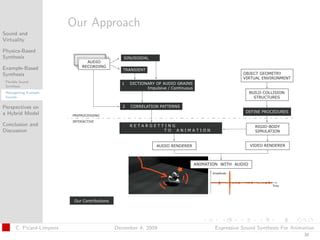

Preprocess: A Generic Analysis

Impulsive and Continuous Contacts

Spectral Modeling Synthesis (SMS) [Serra 97]

Automatic Extraction of Audio Grains

Dictionary: Impulsive/Continuous

Generation of Correlation Patterns between original recordings and audio grains

31](https://image.slidesharecdn.com/thesispres-091217074508-phpapp02/85/Ph-D-Defense-Expressive-Sound-Synthesis-for-Animation-38-320.jpg)
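One plausible way to relate a grain to the recording it came from is a normalized cross-correlation, which scores every position in the recording by how closely it matches the grain. The sketch below is an assumption about what such a correlation pattern could look like, not the analysis described in the thesis; the function name and the normalization are illustrative.

```python
import numpy as np

def correlation_pattern(recording, grain):
    """Normalized cross-correlation of a grain against a recording.

    Returns one value in [-1, 1] per alignment of the grain inside the
    recording; 1 means the recording matches the grain up to a gain.
    """
    recording = np.asarray(recording, dtype=float)
    grain = np.asarray(grain, dtype=float)
    # Raw correlation for every position where the grain fits entirely.
    raw = np.correlate(recording, grain, mode="valid")
    # Energy of the recording under the grain at each position.
    window_energy = np.convolve(recording ** 2, np.ones(len(grain)), mode="valid")
    norm = np.sqrt(window_energy * np.sum(grain ** 2))
    # Silent stretches have zero energy; report 0 there instead of dividing.
    return np.divide(raw, norm, out=np.zeros_like(raw), where=norm > 1e-12)
```

Peaks in the returned pattern mark the times at which the grain could be triggered to re-create the recording, which is the kind of timing information a physics-driven animation can later replace with its own contact events.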

![t

Sound Modeling When Nonlinearity Occurs

Outline, Perspectives on a Hybrid Model: Motivation; A Hybrid Model for Fracture Events

Problems of Single Models

Vibration models assume linearity

Example-based sounds are hard to parametrize

Previous Work

Modeling nonlinearities [O'Brien et al. 01, Chadwick et al. 09] [Cook 02]

38](https://image.slidesharecdn.com/thesispres-091217074508-phpapp02/85/Ph-D-Defense-Expressive-Sound-Synthesis-for-Animation-48-320.jpg)

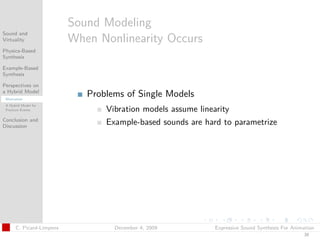


![t

Fracture Events

Background

Frequently occur in virtual environments

Visual rendering [O'Brien et al. 99, 02] [Parker and O'Brien 09]

Sound rendering: little research [Warren et al. 84] [Rath et al. 03]

Challenges

Event depends on the material involved

Different phases emerge from a fracture event

39](https://image.slidesharecdn.com/thesispres-091217074508-phpapp02/85/Ph-D-Defense-Expressive-Sound-Synthesis-for-Animation-50-320.jpg)

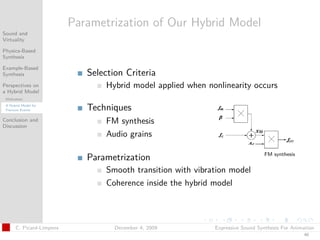


![t

Promising Directions for Future Work

Outline, Conclusion and Discussion: Contributions; Extensions and Applications

Complex Contact Modeling

Two interacting textures

Surface-based interactions

Adequate perceptual experiments

Improved Modal Analysis for Resonator Modeling

Recent work from [Nesme et al. SIGGRAPH 09]

Investigations with GPU for in-line computation

Complete integration in a virtual scene

Example-Based Technique

Clustering of similar grains

Statistical analysis of correlation patterns

Physics engine design

47](https://image.slidesharecdn.com/thesispres-091217074508-phpapp02/85/Ph-D-Defense-Expressive-Sound-Synthesis-for-Animation-62-320.jpg)