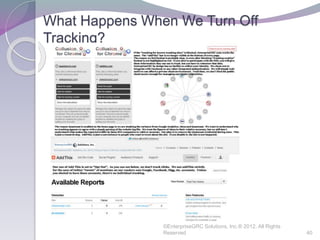

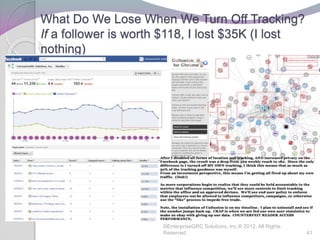

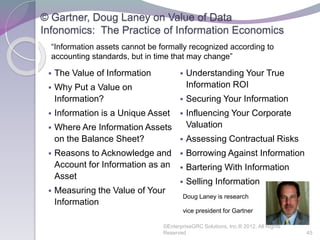

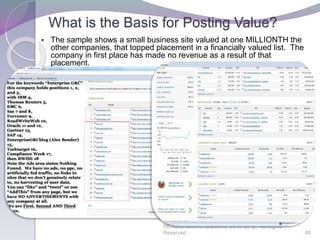

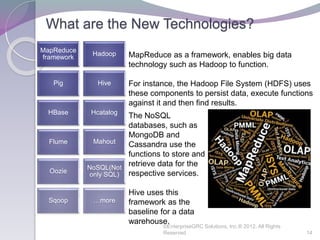

This document discusses the value and risks of big data. It begins by defining big data as large, complex data sets that require new technologies to manage and analyze, then describes how big data is used for marketing, recommendations, analytics, and other purposes. It notes the benefits as well as the risks that come with poor data quality and limited governance of big data projects. The document also gives overviews of supporting technologies such as Hadoop, MapReduce, Pig, Hive, and NoSQL. It questions whether social data should be considered a corporate asset and discusses the complexity of understanding big data risks. Overall, the document aims to highlight both the opportunities and the governance challenges presented by big data.

![Facebook Resolves User Right of Publicity Claims Concerning Sponsored Stories Advertising. Facebook recently settled a class-action lawsuit stemming from Facebook’s alleged unauthorized use of users’ photographs in ‘sponsored stories’ advertisements on its site. The class action plaintiffs alleged that Facebook’s use of their images in “Sponsored Stories” advertisements violated the plaintiffs’ rights under the California right of publicity statute, which reserves to the individual the right to control their image for commercial purposes. Under the terms of the settlement, Facebook agreed to pay a total of $20 million, with half of the settlement funds donated to charities and law schools, and the other half going to plaintiffs’ attorneys. Other than the three class representatives, no Facebook users will receive any funds from the settlement. Facebook users had been serving as unwitting brand promoters on the site, appearing without their permission or knowledge in promotional ‘stories’ featuring advertised products and services. Merely ‘liking’ a company or brand functioned as an effective opt-in that allowed Facebook to use the user’s image in that company or brand’s advertising on the site. The only means of withdrawing from promotional use of one’s image was to ‘unlike’ the brand, which, prior to this settlement, was not an easy feat. […] Published In: Civil Remedies Updates, Communications & Media Law Updates, Personal Injury Updates, Privacy Updates © Kilpatrick Townsend 2012 | Attorney Advertising. ©EnterpriseGRC Solutions, Inc.® 2012, All Rights Reserved 32. Kilpatrick Townsend on 7/11/2012 authors: Barry M. Benjamin; Andrew I. Gerber](https://image.slidesharecdn.com/thevalueofourdata-151210080327/85/The-value-of-our-data-32-320.jpg)