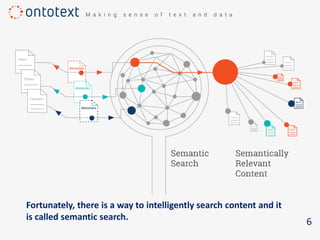

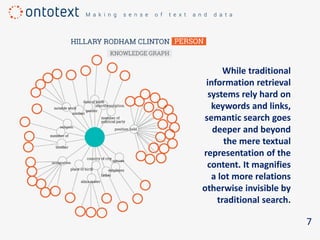

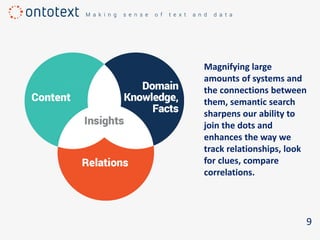

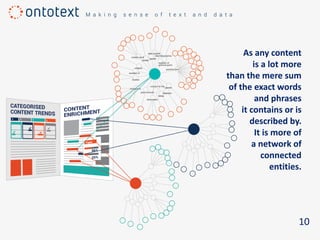

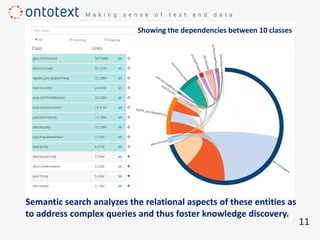

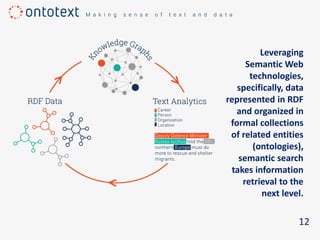

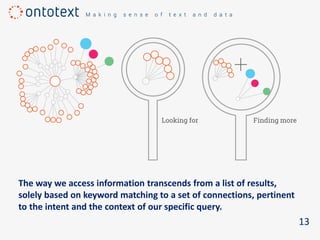

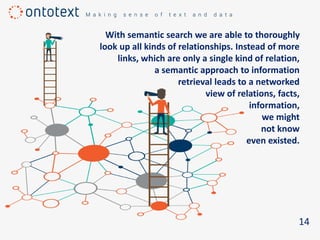

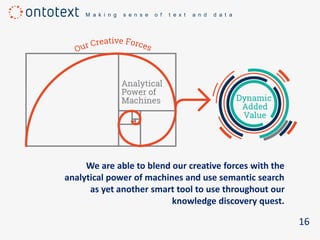

The document argues that semantic search addresses inefficiencies in content management and information retrieval, noting that traditional keyword-based approaches limit knowledge discovery. Semantic search lets users explore the relationships between data entities and their meanings, which gives a more intuitive, context-aware search experience. It uses semantic web technologies to turn retrieval output from a flat list of results into a network of related connections, making it easier to turn data into insights.
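
To make the contrast between a keyword result list and a network of connections concrete, here is a minimal sketch in Python. It is not taken from the document: the triples, document titles, and function names (`keyword_search`, `related_documents`) are hypothetical, and a real system would more likely use an RDF store and a query language rather than in-memory lists.

```python
# Hypothetical toy data: (subject, predicate, object) triples, in the spirit
# of semantic web data models. None of these identifiers come from the source.
TRIPLES = [
    ("doc:1", "mentions", "entity:Aspirin"),
    ("doc:2", "mentions", "entity:Ibuprofen"),
    ("entity:Aspirin", "is_a", "entity:NSAID"),
    ("entity:Ibuprofen", "is_a", "entity:NSAID"),
]

# Hypothetical document titles used for the keyword baseline.
TITLES = {
    "doc:1": "Aspirin dosage guide",
    "doc:2": "Ibuprofen safety notes",
}


def keyword_search(query):
    """Keyword baseline: return documents whose title contains the query string."""
    q = query.lower()
    return sorted(doc for doc, title in TITLES.items() if q in title.lower())


def related_documents(entity):
    """Relationship-aware lookup: return documents that mention the entity
    or any other entity that shares a class with it."""
    # Classes the entity belongs to, e.g. entity:NSAID.
    classes = {o for s, p, o in TRIPLES if s == entity and p == "is_a"}
    # All entities in those classes, plus the entity itself.
    siblings = {s for s, p, o in TRIPLES if p == "is_a" and o in classes}
    siblings.add(entity)
    # Documents that mention any of those entities.
    return sorted(s for s, p, o in TRIPLES if p == "mentions" and o in siblings)


keyword_hits = keyword_search("aspirin")
semantic_hits = related_documents("entity:Aspirin")
print(keyword_hits)
print(semantic_hits)
```

In this toy setup the keyword lookup only finds the document whose title contains the query, while the relationship-aware lookup also surfaces the document about a related entity, because both entities are linked to the same class.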