This document discusses software coding standards and testing. It includes four lessons:

Lesson One discusses coding standards, which define a programming style through rules for formatting source code. Coding standards make code more readable and maintainable, which in turn reduces maintenance costs. Common aspects of coding standards include naming conventions and formatting.
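To make naming conventions and formatting concrete, here is a minimal sketch that assumes PEP 8 (Python's widely used style guide) as the coding standard. The lesson does not name a specific standard. The class, function and constant names are hypothetical and exist only to illustrate each convention.

```python
# Illustration of one common coding standard (PEP 8); all names are hypothetical.

MAX_RETRIES = 3  # constants: UPPER_CASE_WITH_UNDERSCORES


class OrderItem:  # classes: CapWords
    def __init__(self, unit_price: float, quantity: int) -> None:
        self.unit_price = unit_price  # attributes: lower_case_with_underscores
        self.quantity = quantity


def calculate_order_total(items: list[OrderItem]) -> float:  # functions: lower_case_with_underscores
    """Return the sum of unit_price * quantity over all items."""
    total = 0.0
    for item in items:  # 4-space indentation, one statement per line
        total += item.unit_price * item.quantity
    return total


# The same logic written without a standard, for contrast:
# cryptic names, 2-space indentation, and several statements crammed onto one line.
# def CalcTot(l):
#   t=0
#   for i in l: t+=i.unit_price*i.quantity
#   return t
```

For example, `calculate_order_total([OrderItem(2.5, 4), OrderItem(1.0, 3)])` returns `13.0`. Both versions behave identically. The standard changes only how easily another developer can read and maintain the code.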

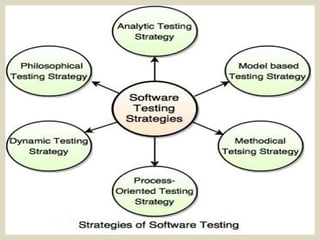

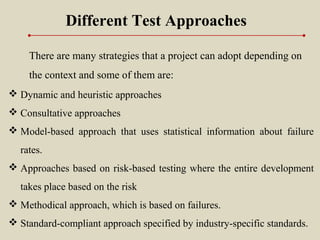

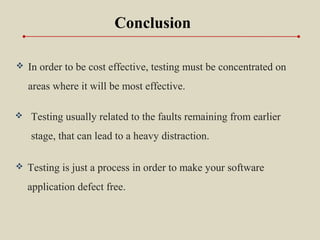

Lesson Two discusses software testing strategies and principles. A testing strategy is a plan that defines the testing approach. Common strategies include analytic, model-based, and methodical testing. Key principles of testing include the following: testing shows the presence of defects, not their absence; testing should start early; and exhaustive testing is impossible.
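A quick calculation shows why exhaustive testing is impossible. The sketch below makes two hypothetical assumptions: the function under test takes two 32-bit integer inputs, and the test harness can run one billion test cases per second. Neither figure comes from the lesson.

```python
# Back-of-the-envelope estimate of the cost of exhaustive testing.
# Assumptions (hypothetical): two 32-bit integer inputs, 1e9 tests per second.

BITS_PER_INPUT = 32
NUM_INPUTS = 2
TESTS_PER_SECOND = 1_000_000_000
SECONDS_PER_YEAR = 365 * 24 * 60 * 60  # ignores leap years


def input_combinations(bits_per_input: int, num_inputs: int) -> int:
    """Number of distinct input combinations: (2 ** bits) ** num_inputs."""
    return (2 ** bits_per_input) ** num_inputs


def years_to_test_all(combinations: int, tests_per_second: int) -> float:
    """Wall-clock years needed to run every combination exactly once."""
    return combinations / tests_per_second / SECONDS_PER_YEAR


combos = input_combinations(BITS_PER_INPUT, NUM_INPUTS)
years = years_to_test_all(combos, TESTS_PER_SECOND)
print(f"{combos} combinations, about {years:.0f} years")
```

Even for this tiny interface there are 2^64 input combinations, which would take roughly 585 years to run. That estimate ignores call sequences, internal state, and environment. This is why testers select test cases by risk and priority rather than trying every input.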

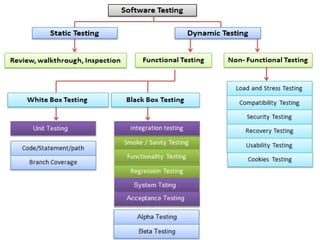

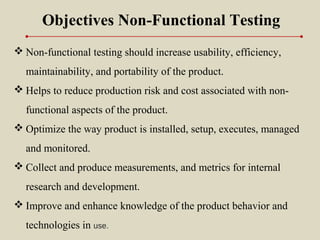

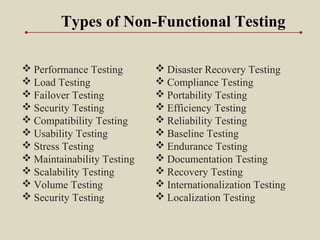

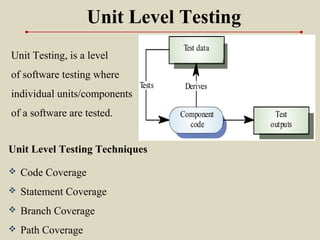

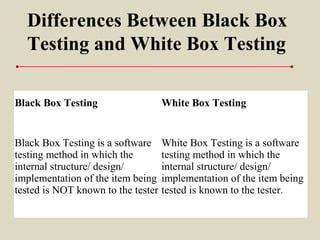

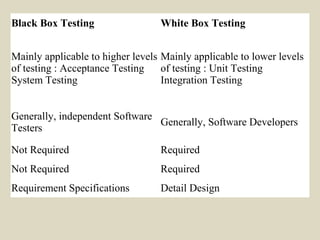

Lesson Three discusses software testing approaches and types but does not provide details.

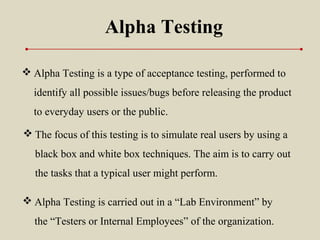

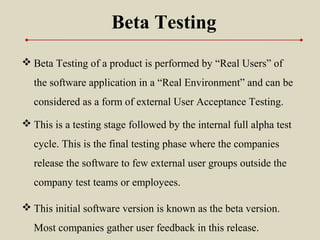

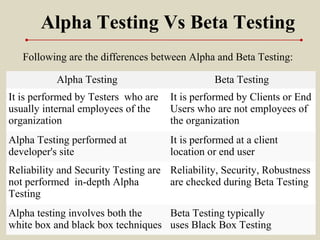

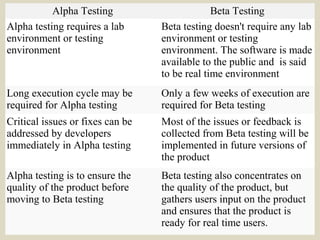

Lesson Four discusses alpha and beta testing as