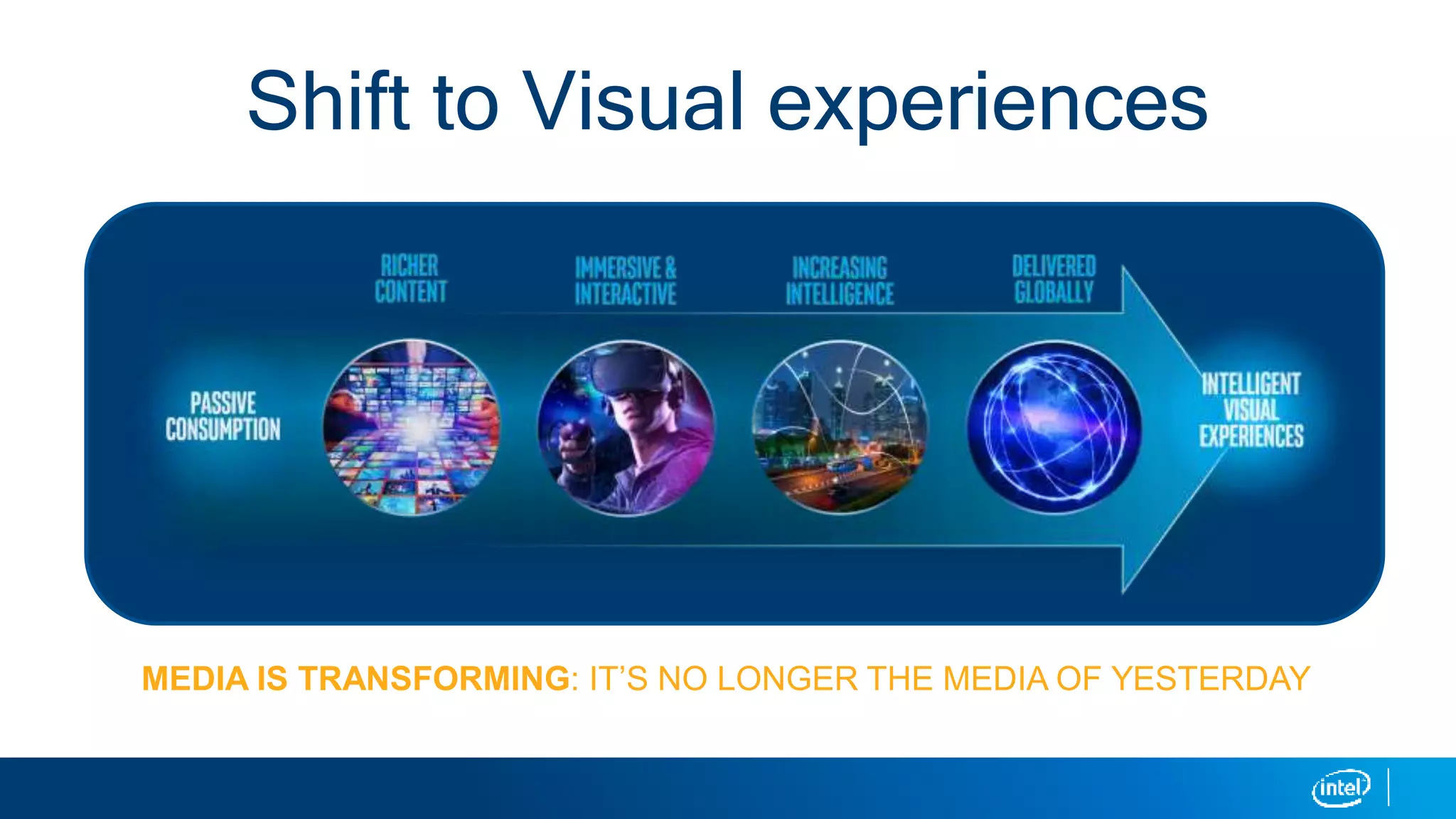

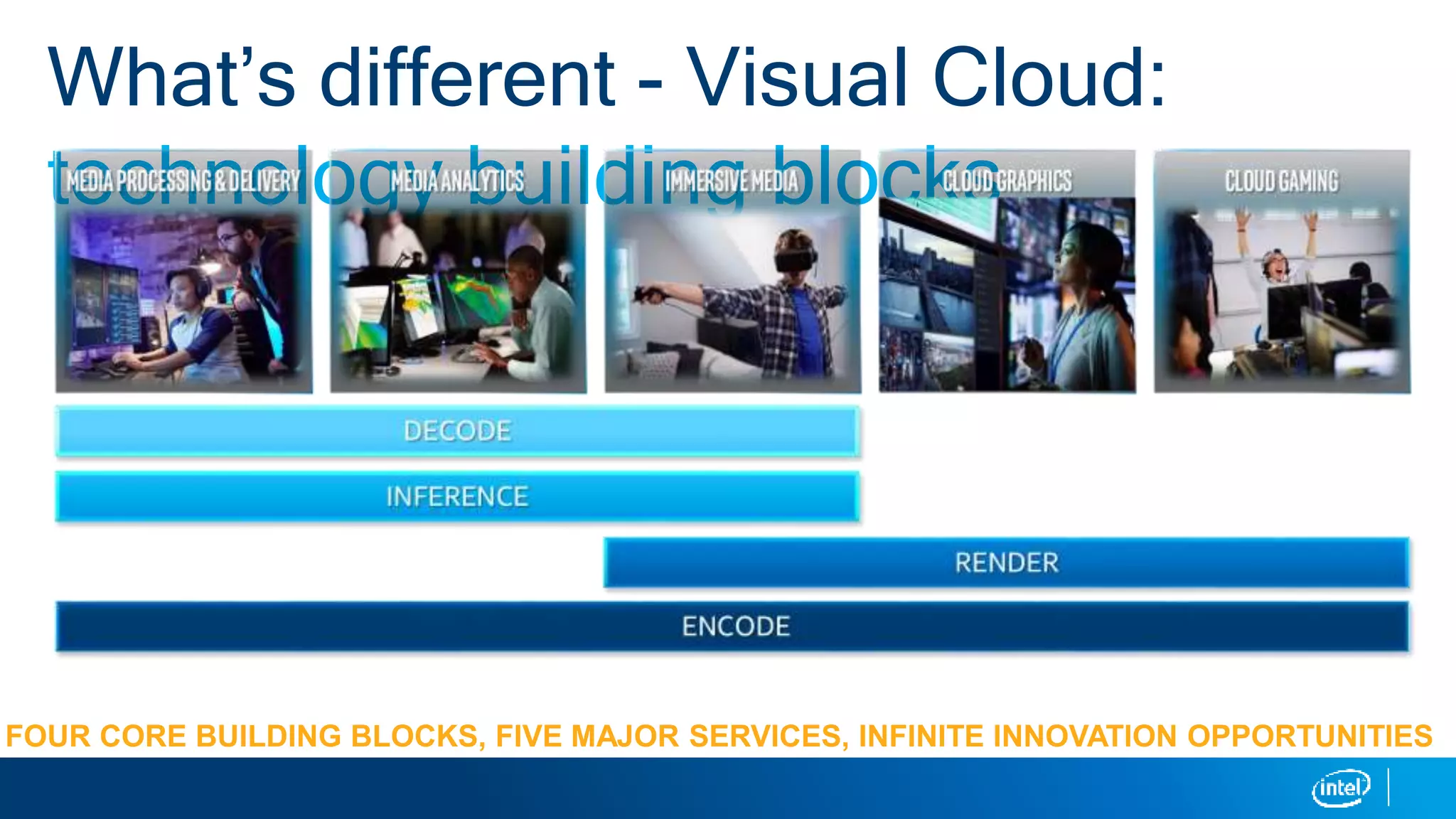

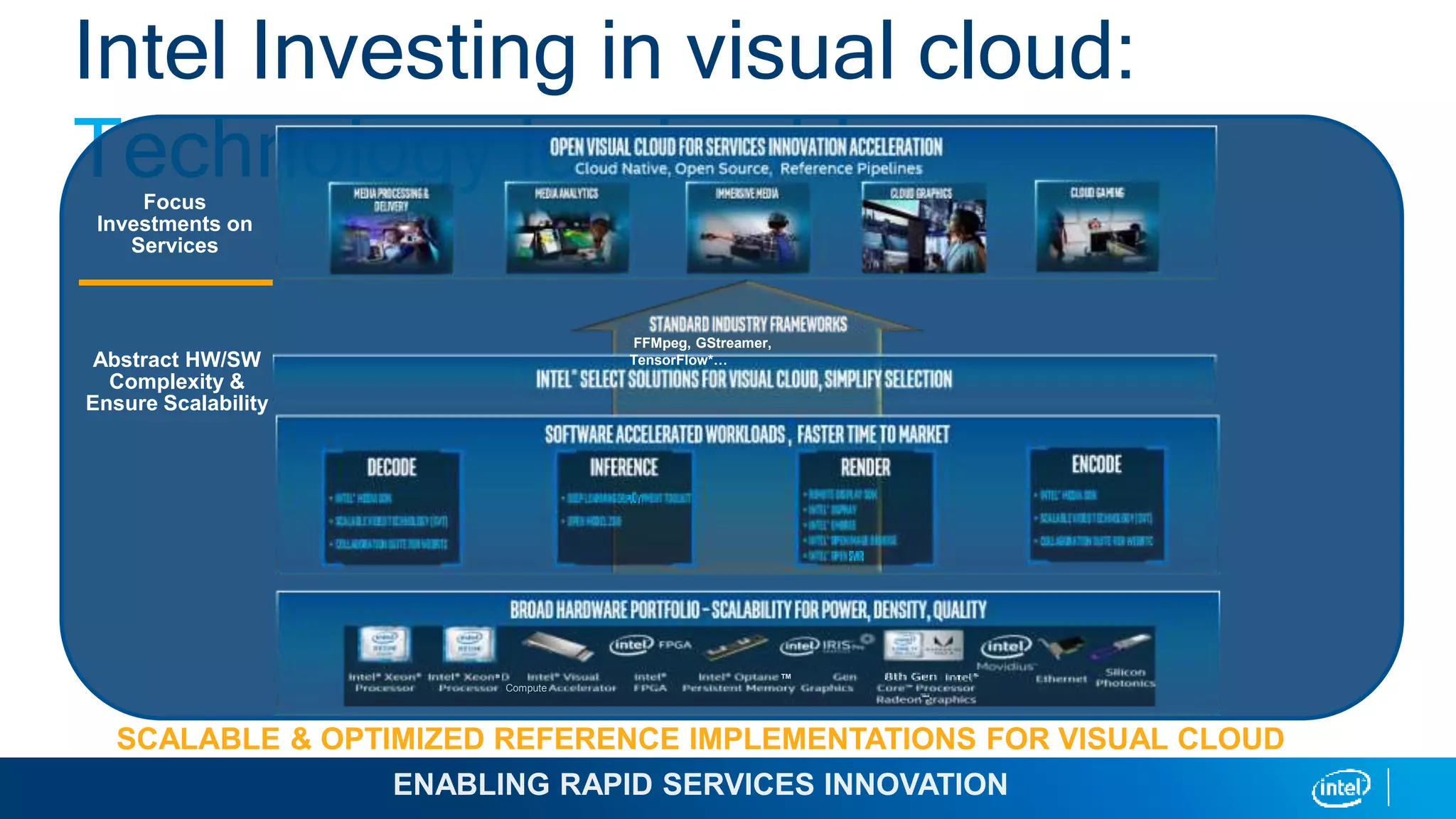

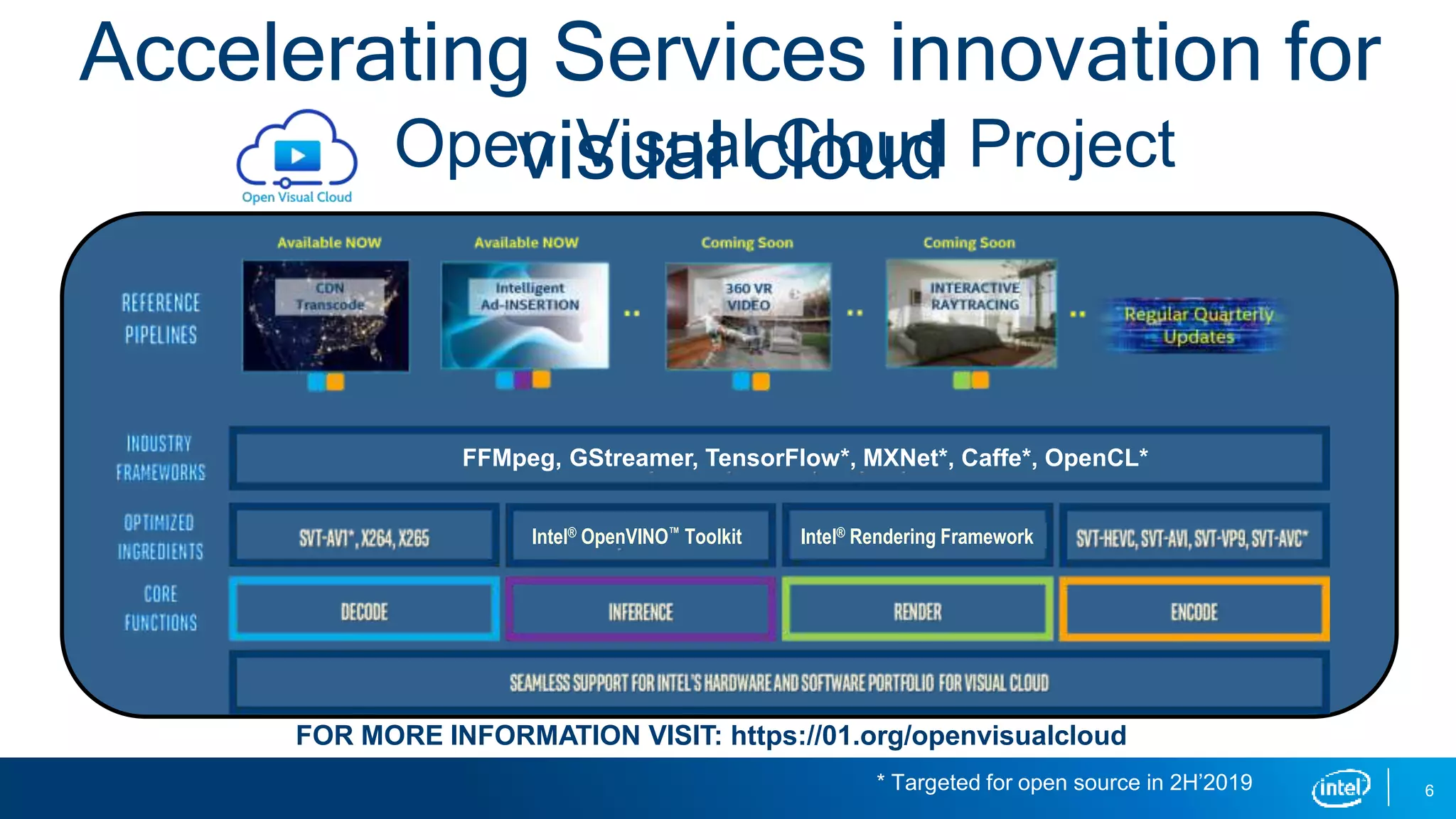

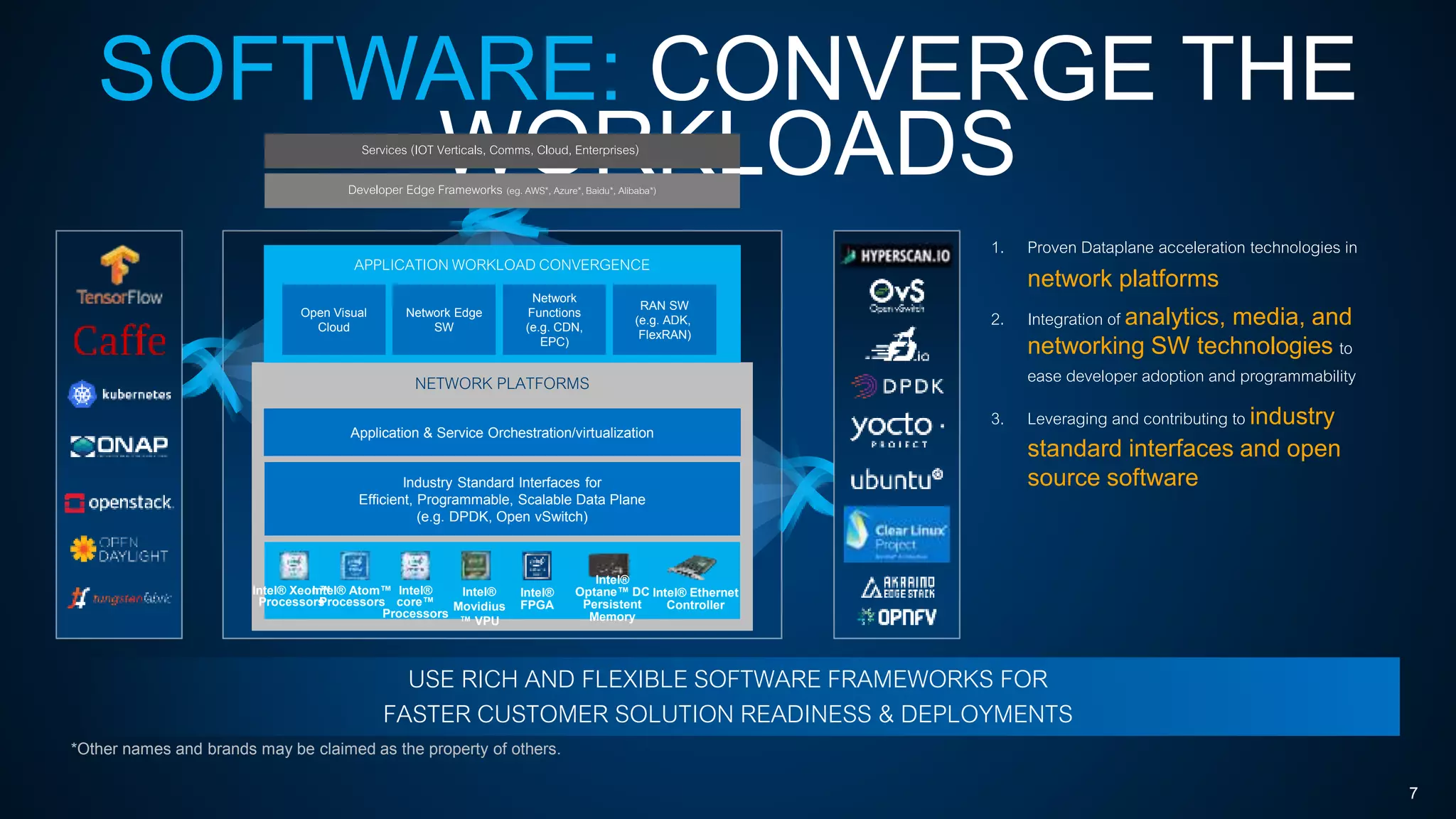

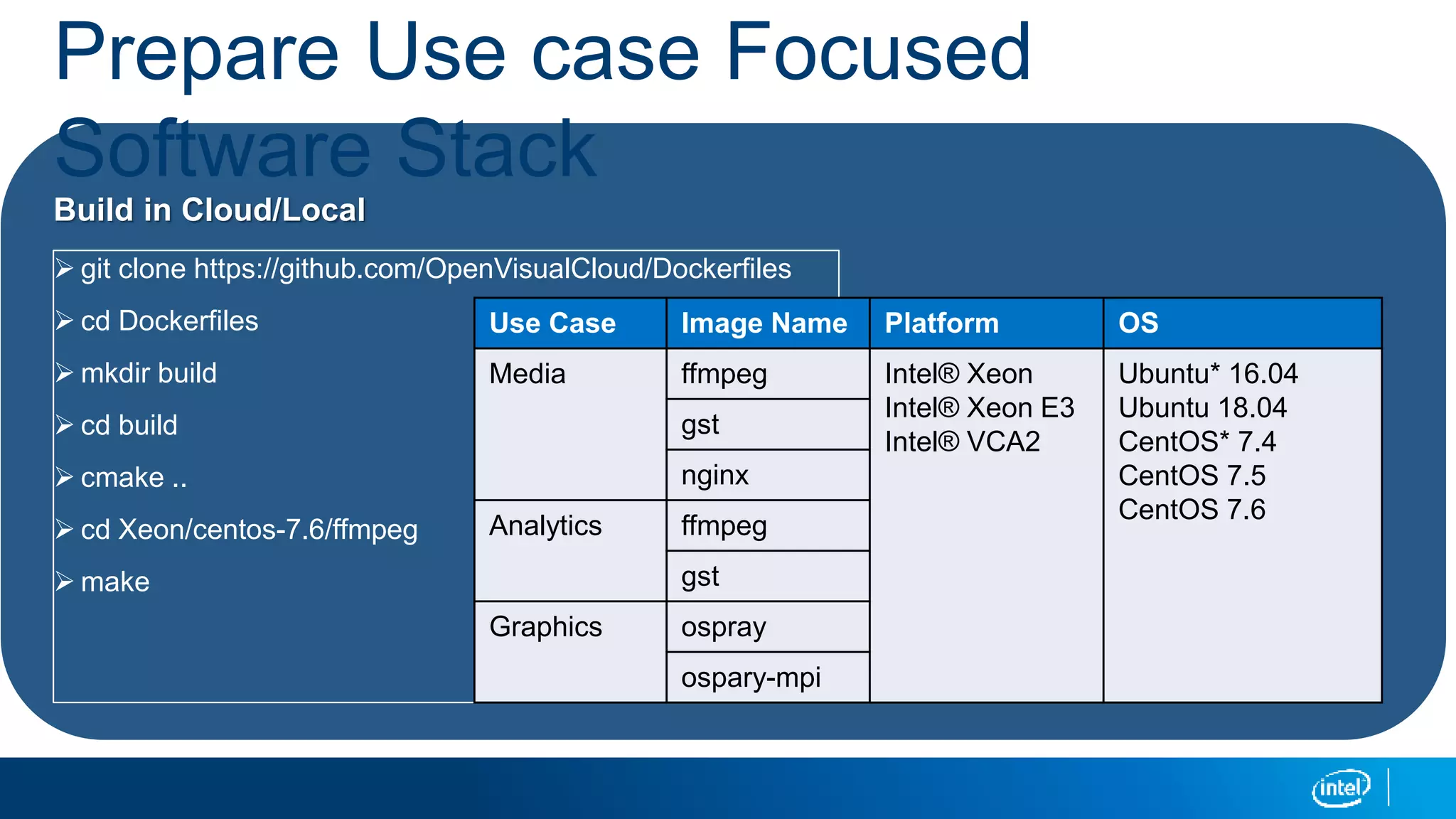

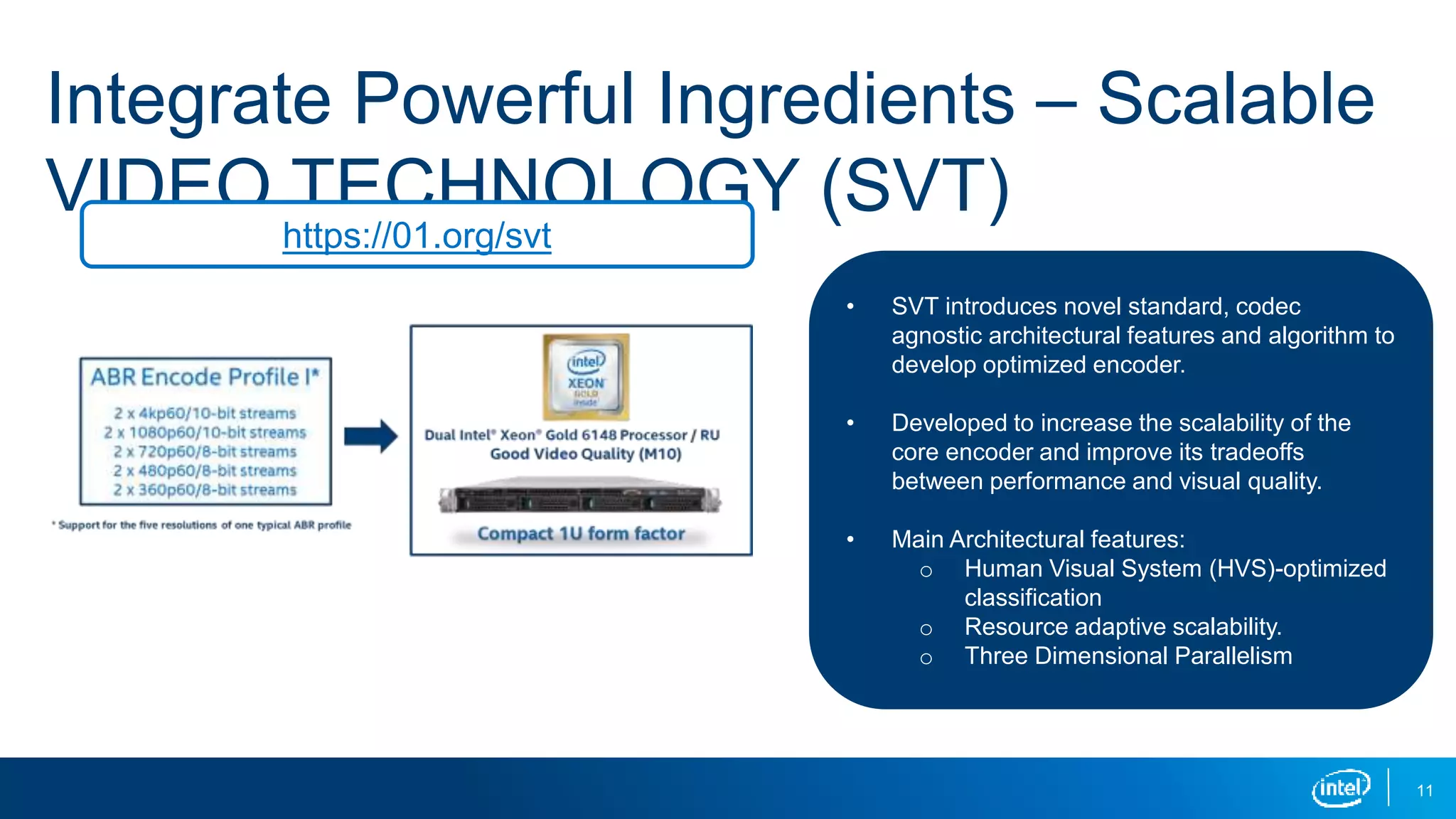

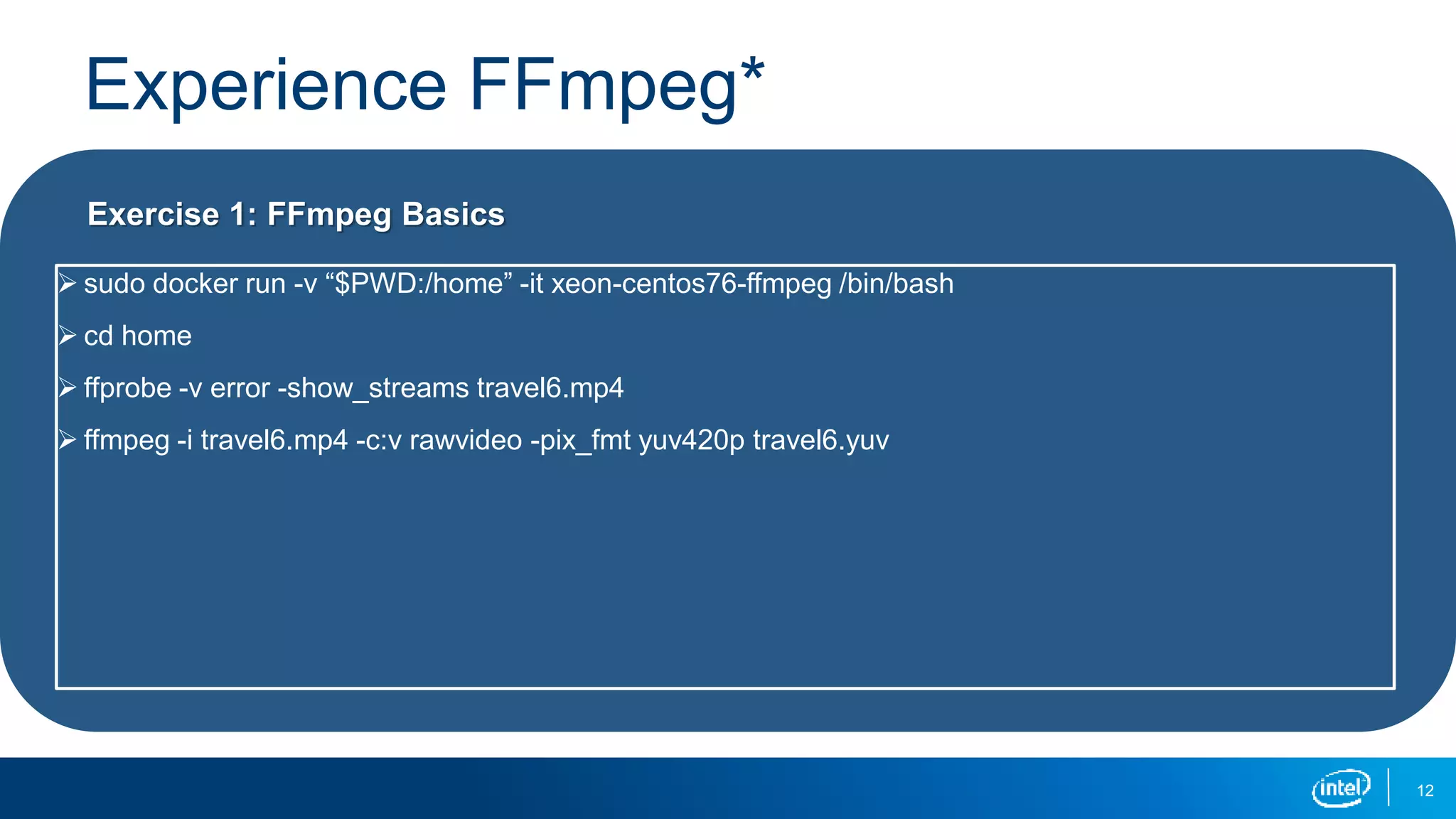

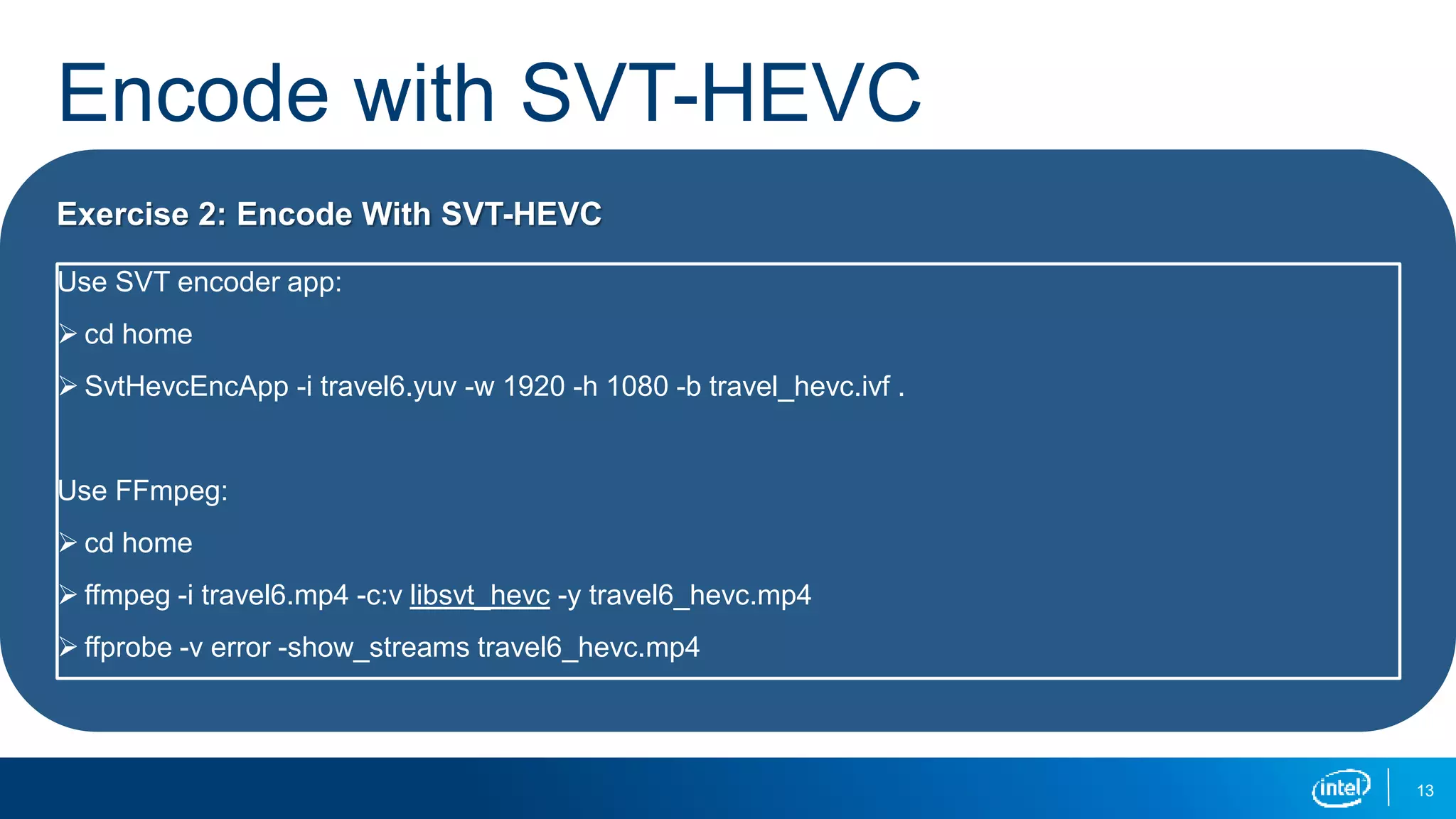

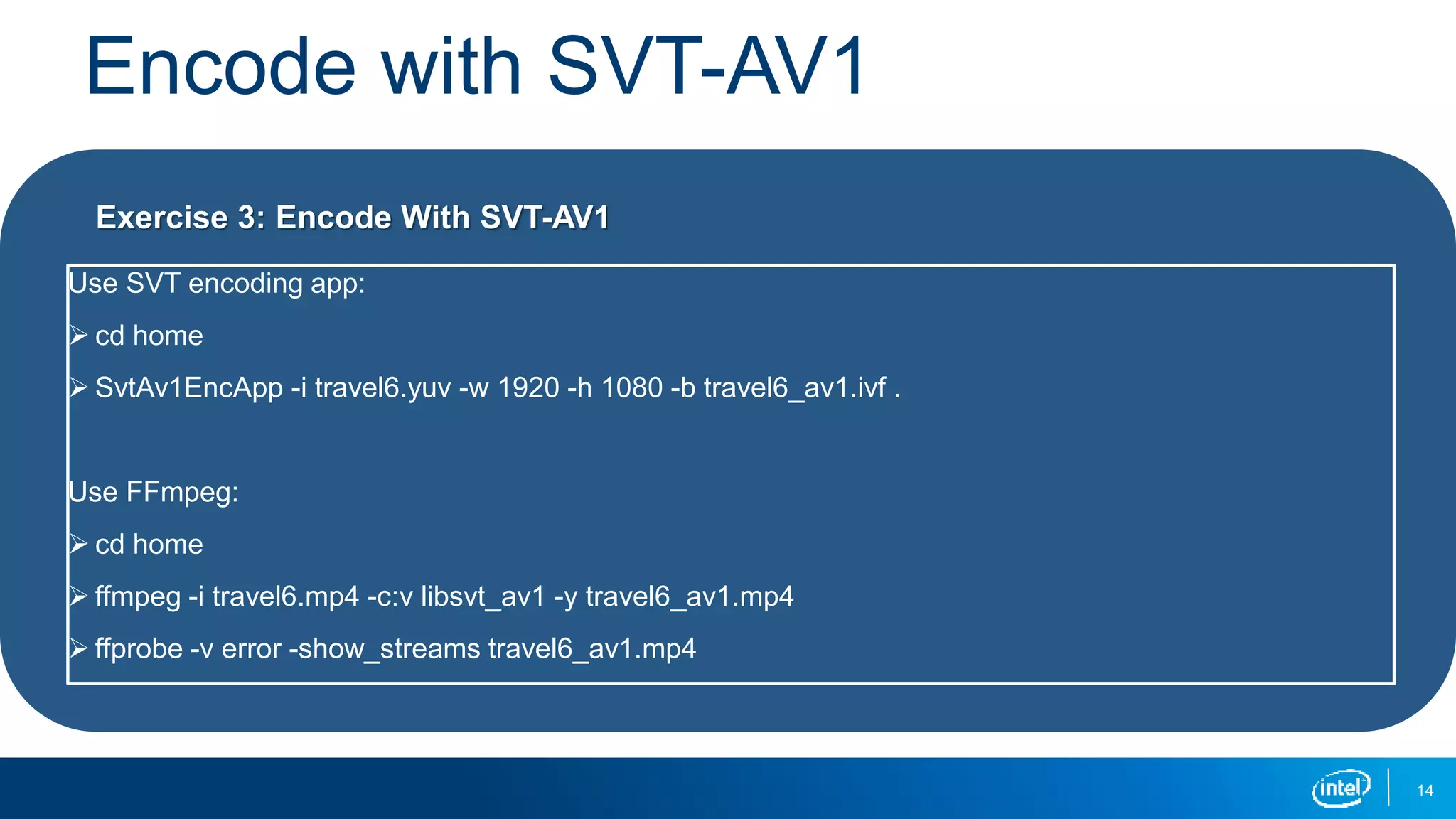

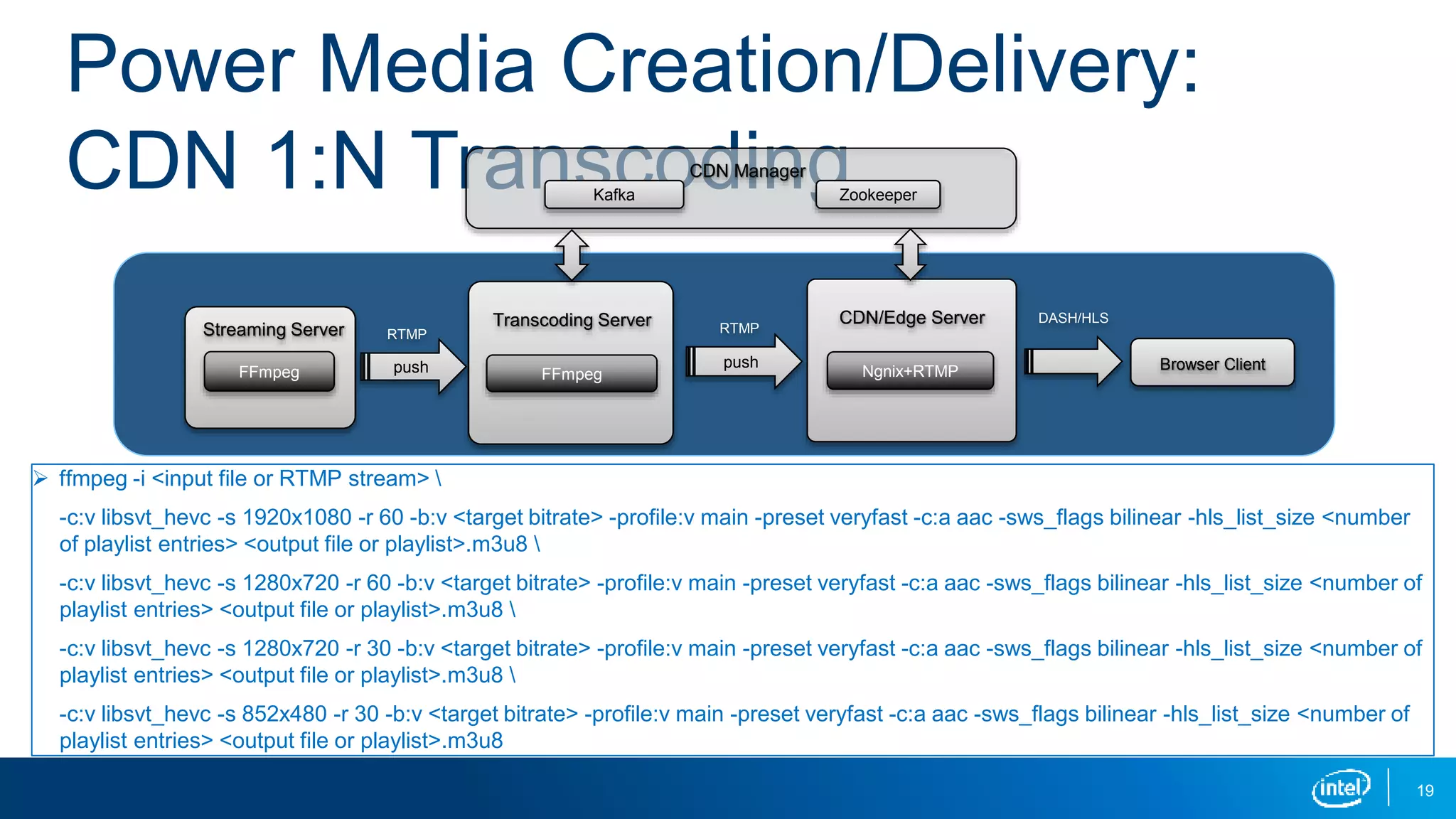

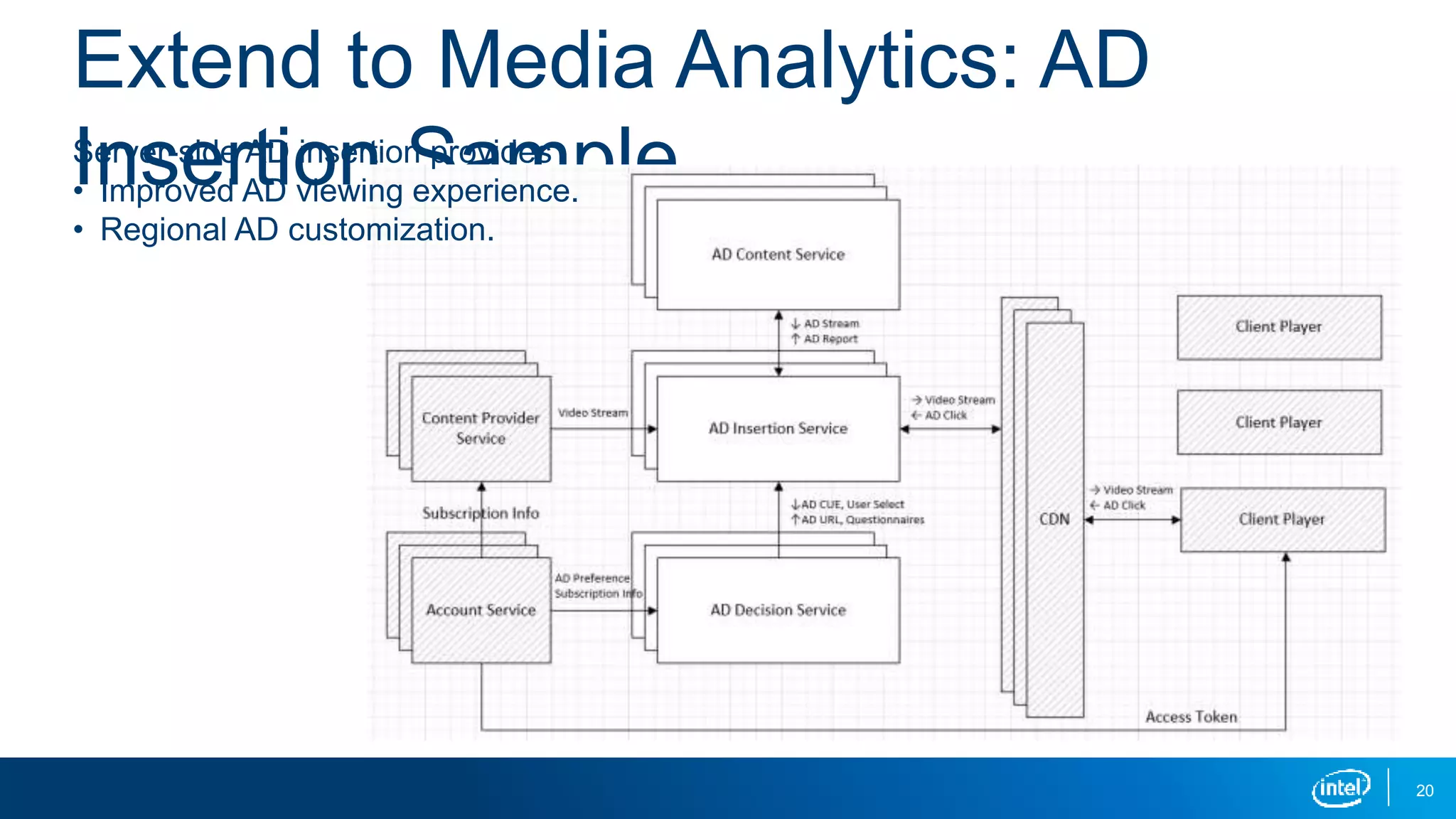

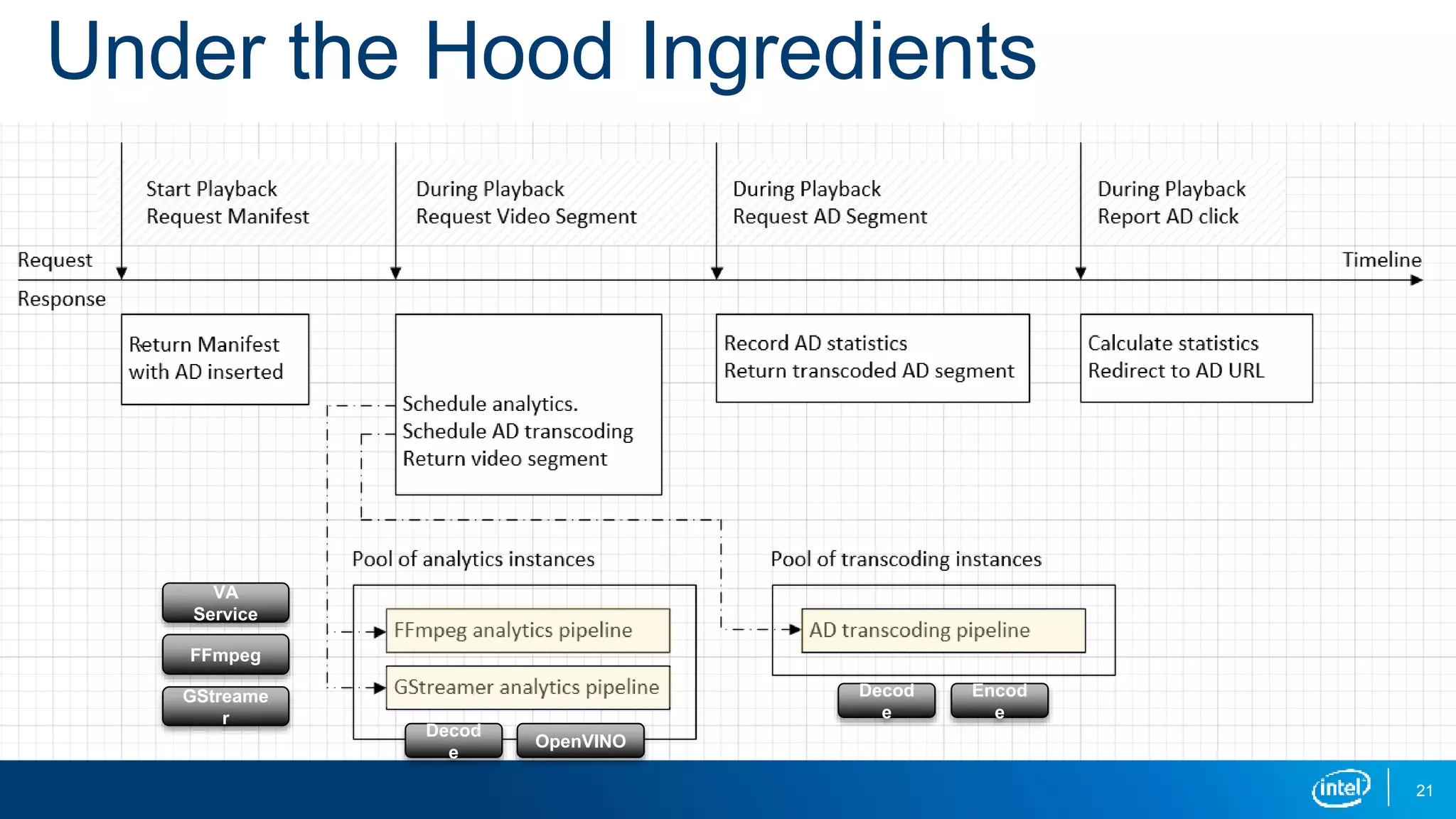

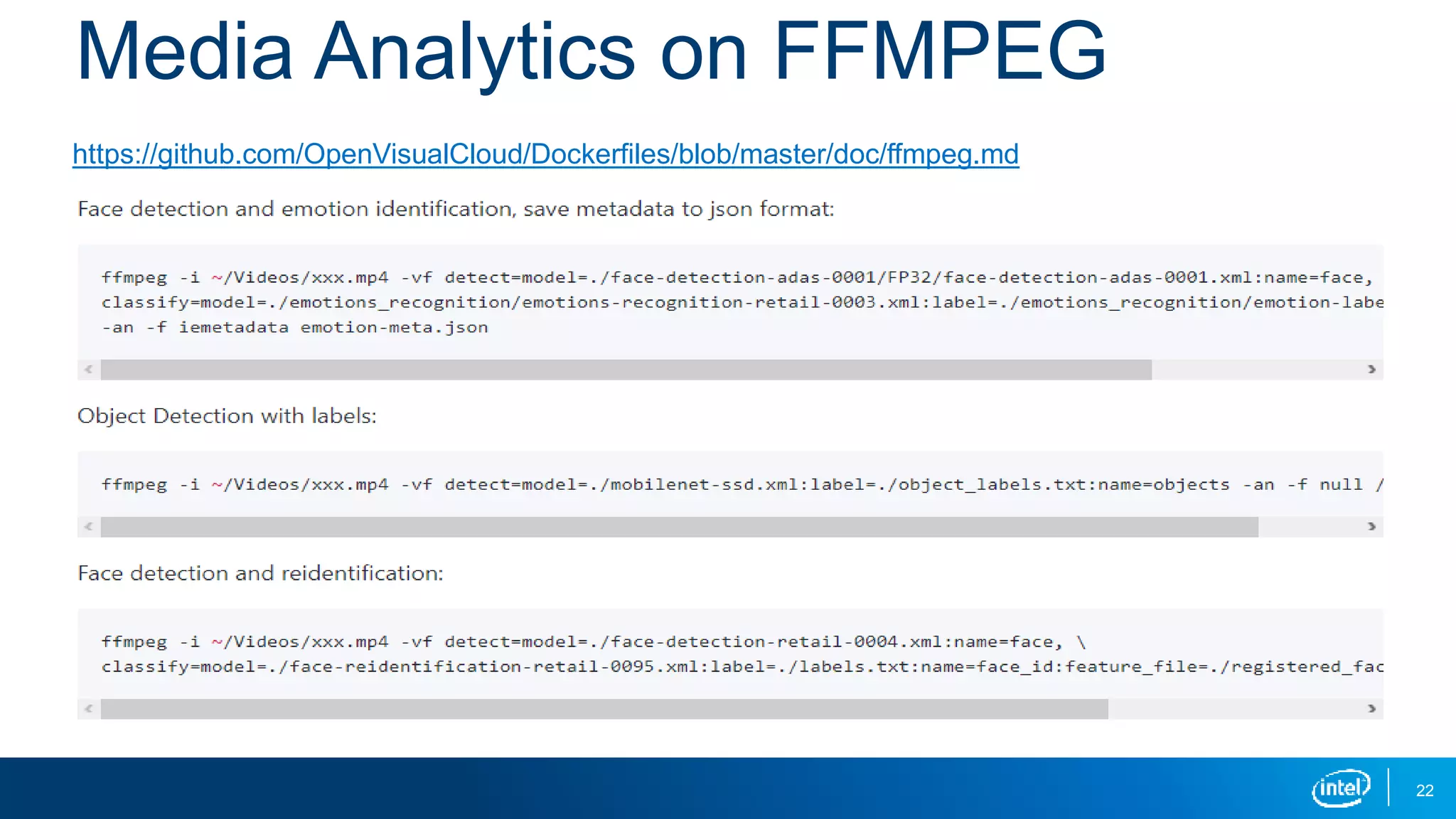

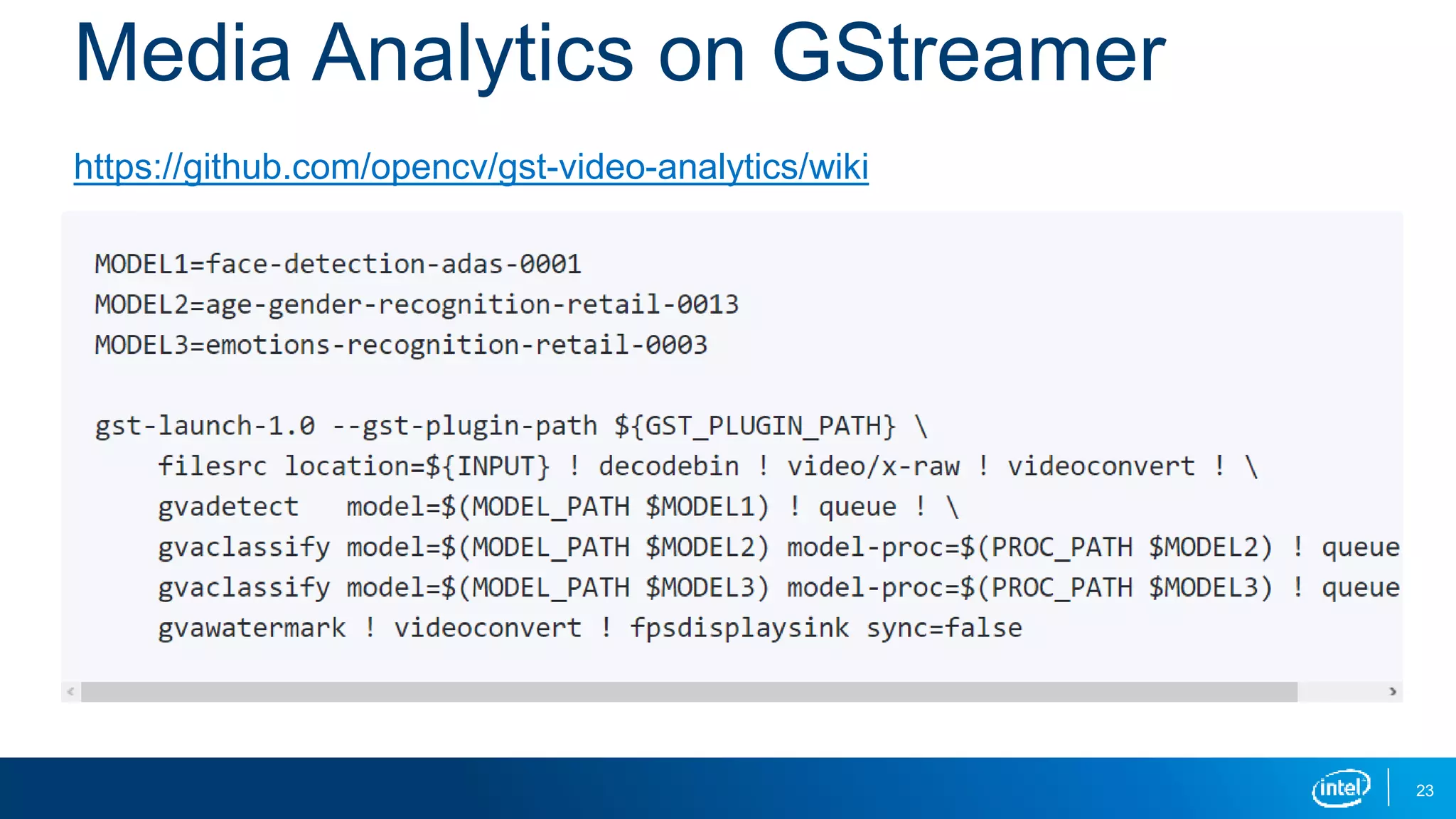

The document provides an overview of the Open Visual Cloud project, covering its agenda, technology stack, and key services such as media creation, analytics, and immersive media. It highlights Intel's investment in technologies like FFmpeg, GStreamer, and TensorFlow, along with the Scalable Video Technology (SVT) architecture for video encoding. It closes with steps developers can take to contribute to and participate in the project through its open-source repositories.